Accelerating Protein Engineering: A Practical Guide to Bayesian Optimization for Drug Discovery



Abstract

This article provides a comprehensive guide to Bayesian Optimization (BO) for protein engineering, targeting researchers, scientists, and drug development professionals. We first explore the foundational principles of BO and its unique advantages over high-throughput screening. We then detail the methodological workflow, from surrogate model selection to acquisition function strategies. The guide addresses common implementation challenges and optimization tactics, followed by validation frameworks and comparisons to alternative methods like directed evolution. We conclude by synthesizing key takeaways and discussing future implications for accelerating therapeutic protein development.

What is Bayesian Optimization? Core Principles for Protein Engineering Success

Application Notes

In protein engineering, the iterative cycle of Design-Build-Test-Learn (DBTL) is fundamental. Traditional high-throughput screening (HTS) methods impose severe bottlenecks on this cycle due to exorbitant costs and logistical limitations, making the exploration of vast sequence spaces economically and practically infeasible. Bayesian optimization (BO) emerges as a powerful machine learning framework to navigate this high-cost problem. By constructing a probabilistic model of the protein fitness landscape, BO intelligently selects the most informative variants to test in each cycle, dramatically reducing the number of expensive experimental measurements required to identify high-performing mutants.

The core inefficiency of traditional screening lies in its reliance on brute-force enumeration. For a protein of length n, the number of possible variants scales as 20^n, an astronomically large space. Even state-of-the-art ultra-HTS methods, capable of screening 10^8 variants, sample only a minuscule fraction. This results in suboptimal discovery and an unsustainable cost structure for comprehensive campaigns.

Table 1: Cost & Throughput Comparison of Protein Screening Modalities

| Screening Method | Typical Throughput (Variants) | Approx. Cost per Variant (USD) | Key Limitation |
|---|---|---|---|
| Microtiter Plate-Based | 10^3 - 10^4 | $1 - $10 | Low throughput, high reagent use |
| Flow Cytometry (FACS) | 10^7 - 10^8 | $0.001 - $0.01 | Requires fluorescent reporter, context-dependent |
| Microfluidics/Droplet | 10^8 - 10^9 | ~$0.0001 | Complex setup, assay compatibility |
| Bayesian-Optimized Design | 10^1 - 10^2 per cycle | $1 - $10 | Maximizes information gain per expensive assay |

Bayesian optimization addresses this by reframing the problem as one of global optimization under uncertainty. It uses prior data (e.g., initial random screen) to build a surrogate model (typically a Gaussian Process) that predicts the fitness of untested sequences and quantifies the prediction uncertainty. An acquisition function (e.g., Expected Improvement) uses these predictions to balance exploration (testing in uncertain regions) and exploitation (testing near predicted optima), proposing the next batch of variants for experimental testing. This closed-loop, adaptive sampling converges on high-fitness variants with 10- to 100-fold fewer experimental iterations.

Experimental Protocols

Protocol 1: Foundational Library Construction & Initial Dataset Generation for BO

Objective: Generate the initial, diverse dataset required to train the first iteration of a Bayesian optimization surrogate model.

Materials: See "Research Reagent Solutions" table.

Procedure:

- Design: Using site-saturation mutagenesis or focused mutagenesis at residues identified from prior knowledge or structure, design a library of 500-1000 variants. Ensure diversity in chemical properties (e.g., using amino acid substitution matrices like BLOSUM62).

- Build: Perform PCR-based library construction (e.g., NNK codon saturation) and clone into an appropriate expression vector. Transform into a competent expression host (e.g., E. coli BL21).

- Test: a. Pick individual colonies into 96-deep well plates containing expression media. b. Induce protein expression and grow under standardized conditions. c. Lyse cells via chemical or enzymatic method. d. Assay for target function (e.g., enzymatic activity via absorbance/fluorescence, binding via crude ELISA). Include relevant controls.

- Data Curation: Normalize activity readings to positive and negative controls. Compile data into a structured table with columns: Variant_ID, Amino_Acid_Sequence, Normalized_Activity.
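The protocol does not state which normalization formula to use. A minimal sketch, assuming min-max scaling between the mean negative-control and mean positive-control signals, is shown below. The function name `normalize_to_controls` and the plate readings are hypothetical.

```python
import numpy as np

def normalize_to_controls(raw, neg_ctrl, pos_ctrl):
    """Scale raw signals so the negative-control mean maps to 0
    and the positive-control mean maps to 1 (assumed convention)."""
    neg = float(np.mean(neg_ctrl))
    pos = float(np.mean(pos_ctrl))
    if np.isclose(pos, neg):
        raise ValueError("Positive and negative controls are indistinguishable")
    return (np.asarray(raw, dtype=float) - neg) / (pos - neg)

# Hypothetical plate readings in arbitrary fluorescence units
raw_signal = [120.0, 560.0, 1010.0]
normalized = normalize_to_controls(
    raw_signal, neg_ctrl=[100.0, 120.0], pos_ctrl=[1000.0, 1020.0]
)
print(normalized)
```

Values above 1 then indicate variants that outperform the positive control, which is usually the wild-type or parent protein.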

Protocol 2: Iterative Bayesian Optimization Cycle for Protein Engineering

Objective: Execute one cycle of the BO-driven DBTL loop to propose and test a new set of protein variants.

Materials: Trained surrogate model from previous cycle, experimental materials as in Protocol 1.

Procedure:

- Learn (Computational Step): a. Encode the variant sequences from all prior cycles into numerical features (e.g., one-hot encoding, physicochemical descriptors, or embeddings from a protein language model). b. Train a Gaussian Process (GP) regression model using the feature vectors as inputs (X) and normalized activity as the target (y). The GP defines a posterior distribution over the fitness function.

- Design (Computational Step): a. Define a candidate set of potential next variants (e.g., all single/double mutants from the best hits). b. Compute the acquisition function value (e.g., Expected Improvement) for each candidate using the GP posterior. c. Select the top 20-50 candidates with the highest acquisition function values. This batch represents the optimal trade-off between predicted high fitness and high uncertainty.

- Build & Test: Synthesize genes for the proposed variants (e.g., via array-based oligo synthesis or site-directed mutagenesis). Express and assay these variants experimentally following steps 3-4 of Protocol 1.

- Loop: Append the new experimental data (X_new, y_new) to the historical dataset. Return to Step 1.
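The Learn and Design steps above can be sketched with scikit-learn as follows. This is an illustration under stated assumptions, not a reference implementation: the four-residue sequences and activities are made up, one-hot encoding is only one of the encodings the protocol mentions, and the candidate set is restricted to single mutants of the current best hit.

```python
import numpy as np
from scipy.stats import norm
from sklearn.gaussian_process import GaussianProcessRegressor
from sklearn.gaussian_process.kernels import Matern

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"

def one_hot(seq):
    """Flatten a sequence into a (len(seq) * 20,) one-hot vector."""
    vec = np.zeros((len(seq), len(AMINO_ACIDS)))
    for pos, aa in enumerate(seq):
        vec[pos, AMINO_ACIDS.index(aa)] = 1.0
    return vec.ravel()

def single_mutants(parent):
    """Enumerate every single-substitution variant of a parent sequence."""
    return [parent[:i] + aa + parent[i + 1:]
            for i in range(len(parent)) for aa in AMINO_ACIDS if aa != parent[i]]

def expected_improvement(mu, sigma, best):
    """Closed-form EI for maximization under a Gaussian posterior."""
    sigma = np.maximum(sigma, 1e-12)
    z = (mu - best) / sigma
    return (mu - best) * norm.cdf(z) + sigma * norm.pdf(z)

# Hypothetical historical data: variants and normalized activities
tested = ["ACDE", "ACDF", "GCDE", "ACKE", "WCDE"]
activity = np.array([0.50, 0.65, 0.30, 0.80, 0.10])

# Learn: fit the GP surrogate on encoded sequences
X = np.array([one_hot(s) for s in tested])
gp = GaussianProcessRegressor(kernel=Matern(nu=2.5), alpha=1e-3,
                              normalize_y=True, random_state=0)
gp.fit(X, activity)

# Design: score untested single mutants of the best hit and keep the top 5
best_seq = tested[int(np.argmax(activity))]
candidates = [s for s in single_mutants(best_seq) if s not in tested]
mu, sigma = gp.predict(np.array([one_hot(s) for s in candidates]), return_std=True)
ei = expected_improvement(mu, sigma, activity.max())
batch = [candidates[i] for i in np.argsort(ei)[::-1][:5]]
print(batch)
```

Ranking by EI and taking the top candidates is the simple batch rule described in the protocol. It does not account for redundancy between batch members, which dedicated batch acquisition functions address.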

Visualization

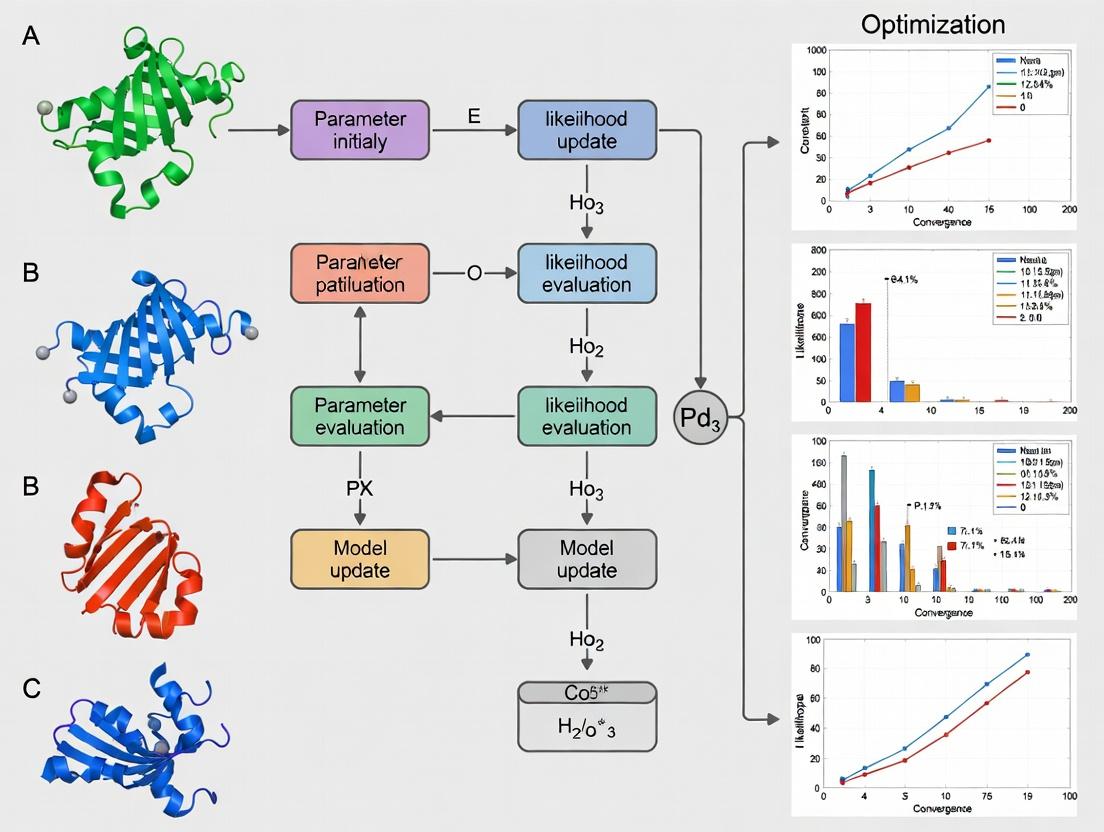

Bayesian Optimization DBTL Cycle

Screening Cost Efficiency Comparison

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in BO-Driven Protein Engineering |

|---|---|

| NNK/Codon-Varied Oligo Pools | For constructing diverse initial libraries via site-saturation mutagenesis. |

| High-Fidelity DNA Polymerase | Essential for error-free PCR during library construction and variant synthesis. |

| Expression Vector (e.g., pET, pBAD) | Plasmid backbone for controlled, high-yield protein expression in host cells. |

| Competent E. coli Cells | Workhorse host for library transformation, propagation, and protein expression. |

| 96/384 Deep Well Plates | Standard format for parallel microbial culture and expression in screening. |

| Cell Lysis Reagent (Lysozyme/BugBuster) | Releases intracellular protein for functional assay without purification. |

| Fluorogenic/Chromogenic Substrate | Enables high-throughput kinetic measurement of enzymatic activity in lysates. |

| Gaussian Process Software (GPyTorch, scikit-learn) | Libraries to build the surrogate model predicting variant fitness. |

| Acquisition Function Code (Expected Improvement) | Custom script to calculate and optimize the proposal batch from the model. |

Bayesian optimization (BO) is a powerful strategy for the global optimization of expensive, black-box functions, making it exceptionally well-suited for protein engineering research. Within the broader thesis context—advancing high-throughput, machine learning-guided protein design—BO provides a principled framework for intelligently navigating vast, complex sequence spaces with minimal experimental trials. It replaces exhaustive screening with iterative, model-guided experimentation, directly addressing the prohibitive cost and time constraints of traditional directed evolution or rational design campaigns in drug development.

Foundational Principles and Quantitative Data

Gaussian Process (GP) as a Surrogate Model

The core of BO is the Gaussian Process, a non-parametric probabilistic model that defines a distribution over functions. For a set of protein sequence or feature descriptors x, a GP is fully specified by a mean function m(x) and a covariance kernel function k(x, x').

Key Kernel Functions for Protein Engineering:

Table 1: Common Covariance Kernels in Bayesian Optimization for Protein Engineering

| Kernel Name | Mathematical Form | Key Hyperparameter(s) | Ideal Use Case in Protein Engineering |
|---|---|---|---|
| Squared Exponential (RBF) | $k(x,x') = \sigma^2 \exp\left(-\frac{\lVert x-x' \rVert^2}{2l^2}\right)$ | Length-scale (l), Variance (σ²) | Modeling smooth, continuous fitness landscapes (e.g., activity vs. continuous descriptors). |
| Matérn 5/2 | $k(x,x') = \sigma^2 \left(1 + \frac{\sqrt{5}r}{l} + \frac{5r^2}{3l^2}\right) \exp\left(-\frac{\sqrt{5}r}{l}\right)$, with $r = \lVert x-x' \rVert$ | Length-scale (l), Variance (σ²) | Default choice; less smooth than RBF, accommodates more rugged landscapes common in biological data. |
| Dot Product | $k(x,x') = \sigma_0^2 + x \cdot x'$ | Bias variance (σ₀²) | Capturing linear trends in fitness, often combined with other kernels. |
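To make the kernel formulas concrete, the sketch below evaluates each one with plain NumPy for a pair of feature vectors, taking r as the Euclidean distance between them. The function names and the example vectors are illustrative assumptions. In practice a GP library would supply these kernels.

```python
import numpy as np

def rbf_kernel(x, x2, length_scale=1.0, variance=1.0):
    """Squared exponential: sigma^2 * exp(-||x - x'||^2 / (2 l^2))."""
    r2 = np.sum((np.asarray(x, float) - np.asarray(x2, float)) ** 2)
    return variance * np.exp(-r2 / (2.0 * length_scale ** 2))

def matern52_kernel(x, x2, length_scale=1.0, variance=1.0):
    """Matern 5/2 with r = ||x - x'|| and s = sqrt(5) * r / l."""
    r = np.linalg.norm(np.asarray(x, float) - np.asarray(x2, float))
    s = np.sqrt(5.0) * r / length_scale
    return variance * (1.0 + s + s ** 2 / 3.0) * np.exp(-s)

def dot_product_kernel(x, x2, sigma0=1.0):
    """Dot product: sigma_0^2 + x . x'."""
    return sigma0 ** 2 + float(np.dot(x, x2))

# Two hypothetical feature vectors that differ in two coordinates
xa = np.array([1.0, 0.0, 0.0, 1.0])
xb = np.array([1.0, 0.0, 1.0, 0.0])

for name, k in [("RBF", rbf_kernel), ("Matern 5/2", matern52_kernel),
                ("Dot product", dot_product_kernel)]:
    print(f"{name}: k(xa, xa) = {k(xa, xa):.3f}, k(xa, xb) = {k(xa, xb):.3f}")
```

For the two stationary kernels, k(x, x) equals the variance and the value decays as the vectors move apart. The dot product kernel instead grows with the overlap between the vectors.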

Acquisition Functions for Intelligent Sampling

The acquisition function leverages the GP's predictive distribution (mean μ(x) and uncertainty σ(x)) to propose the next experiment by balancing exploration and exploitation.

Table 2: Acquisition Functions and Their Characteristics

| Acquisition Function | Mathematical Form | Exploration/Exploitation Balance | Best For |
|---|---|---|---|
| Expected Improvement (EI) | $EI(x) = \mathbb{E}[\max(0, f(x) - f(x^+))]$ | Adaptive, based on incumbent f(x⁺). | General-purpose, most widely used. |
| Upper Confidence Bound (UCB) | $UCB(x) = \mu(x) + \kappa\,\sigma(x)$ | Explicitly controlled by parameter κ. | When a specific exploration aggressiveness is desired. |
| Probability of Improvement (PI) | $PI(x) = \Phi\left(\frac{\mu(x) - f(x^+) - \xi}{\sigma(x)}\right)$ | Can be overly exploitative; sensitive to ξ. | Rapid convergence to a good solution (not necessarily global). |
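Under a Gaussian posterior, all three acquisition functions have closed forms in μ(x) and σ(x). The sketch below evaluates them for three hypothetical candidates. The default values κ = 2.0 and ξ = 0.01 and the posterior numbers are illustrative assumptions, and EI is written without a ξ offset to match the table.

```python
import numpy as np
from scipy.stats import norm

def expected_improvement(mu, sigma, f_best):
    """EI(x) = (mu - f_best) * Phi(z) + sigma * phi(z), with z = (mu - f_best) / sigma."""
    sigma = np.maximum(sigma, 1e-12)   # guard against zero predictive std
    z = (mu - f_best) / sigma
    return (mu - f_best) * norm.cdf(z) + sigma * norm.pdf(z)

def upper_confidence_bound(mu, sigma, kappa=2.0):
    """UCB(x) = mu + kappa * sigma."""
    return mu + kappa * sigma

def probability_of_improvement(mu, sigma, f_best, xi=0.01):
    """PI(x) = Phi((mu - f_best - xi) / sigma)."""
    sigma = np.maximum(sigma, 1e-12)
    return norm.cdf((mu - f_best - xi) / sigma)

# Hypothetical posterior for three candidates: confident-good, mixed, uncertain
mu = np.array([0.90, 0.70, 0.50])
sigma = np.array([0.05, 0.30, 0.60])
f_best = 0.85  # incumbent: best fitness observed so far

print("EI :", expected_improvement(mu, sigma, f_best))
print("UCB:", upper_confidence_bound(mu, sigma))
print("PI :", probability_of_improvement(mu, sigma, f_best))
```

Comparing the three rankings on the same posterior shows how differently each function weights uncertainty: PI favors the confident candidate, while UCB with a large κ favors the uncertain one.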

Table 3: Illustrative BO Performance vs. Random Search (Simulated Data)

| Optimization Method | Iterations to Find >90% Max | Average Final Fitness (Normalized) | Cumulative Experimental Cost (Relative Units) |

|---|---|---|---|

| Bayesian Optimization (EI) | 28 ± 5 | 0.98 ± 0.02 | 1.0 (baseline) |

| Random Search | 95 ± 20 | 0.92 ± 0.05 | ~3.4 |

| Grid Search | 100 (fixed) | 0.90 ± 0.06 | ~3.6 |

Experimental Protocols

Protocol 1: Implementing Bayesian Optimization for a Protein Fluorescence Screen

Objective: To identify a variant of Green Fluorescent Protein (GFP) with enhanced fluorescence intensity using Bayesian Optimization over a defined mutational space.

I. Pre-Experimental Setup (In Silico)

- Define Search Space: Specify n target residues for mutagenesis. Encode each variant as a numerical vector (e.g., one-hot encoding, AAindex physicochemical descriptors).

- Initialize Model: Select a starting set of 5-10 randomly chosen or historically known variants. Ensure they span the search space.

- Choose Model Components:

- GP Kernel: Start with Matérn 5/2 kernel.

- Acquisition Function: Use Expected Improvement (EI).

- Establish Baseline: Measure the fluorescence intensity of the wild-type and initial set in triplicate.

II. Iterative Optimization Loop

- Train GP Model: Fit the GP surrogate model to all accumulated data (sequence vectors → log(fluorescence intensity)).

- Propose Next Experiment: Optimize the acquisition function (EI) over the entire search space. The maximizing point (x_next) is the proposed variant to synthesize.

- Wet-Lab Execution:

- Gene Synthesis & Cloning: Perform site-directed mutagenesis or gene synthesis to create the plasmid for x_next.

- Expression & Purification: Transform into expression host (e.g., E. coli), induce protein expression, and purify using a standard His-tag protocol.

- Assay: Measure fluorescence intensity (ex: 488 nm, em: 510 nm) under standardized conditions (protein concentration, buffer, temperature). Include controls.

- Update Dataset: Append the new data pair (x_next, y_measured) to the master dataset.

- Iterate: Repeat steps 1-4 for a predetermined budget (e.g., 50 iterations) or until performance plateaus.

III. Post-Optimization Analysis

- Validate the top 3-5 identified variants in biological triplicate.

- Analyze the GP model's posterior mean to infer potential sequence-structure-function relationships (e.g., which residues are most predictive of high fitness).

Protocol 2: Multi-Objective BO for Protein Stability and Activity Trade-Off

Objective: To Pareto-optimize a therapeutic enzyme for both thermostability (T_m) and catalytic activity (k_cat/K_M).

- Define Vector-Valued Output: Each experiment yields y = [T_m, k_cat/K_M].

- Modeling: Use a multi-output GP or independent GPs for each objective.

- Multi-Objective Acquisition: Employ the Expected Hypervolume Improvement (EHVI) acquisition function to propose sequences expected to expand the Pareto frontier.

- Execution: Follow a wet-lab workflow similar to Protocol 1, but with two parallel assays per variant: Differential Scanning Fluorimetry (DSF) for T_m and a kinetic assay for k_cat/K_M.

- Output: A set of non-dominated optimal variants representing the best trade-offs between stability and activity.
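The final output step amounts to filtering the measured variants down to the non-dominated set. The sketch below shows only that filter, assuming both objectives are maximized. It does not implement EHVI, which would normally come from a BO library. The measurements are hypothetical.

```python
import numpy as np

def pareto_mask(Y):
    """Boolean mask of non-dominated rows, assuming every column is maximized.

    A row is dominated if another row is at least as good in all objectives
    and strictly better in at least one.
    """
    Y = np.asarray(Y, dtype=float)
    mask = np.ones(len(Y), dtype=bool)
    for i, y in enumerate(Y):
        dominated_by = np.all(Y >= y, axis=1) & np.any(Y > y, axis=1)
        mask[i] = not dominated_by.any()
    return mask

# Hypothetical measurements: columns are [T_m in deg C, k_cat/K_M in M^-1 s^-1]
Y = np.array([
    [55.0, 1.0e5],
    [60.0, 8.0e4],
    [58.0, 7.0e4],   # dominated by the second variant
    [62.0, 2.0e4],
    [50.0, 9.0e4],   # dominated by the first variant
])
front = Y[pareto_mask(Y)]
print(front)
```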

Visualizations

Diagram 1: Core Bayesian Optimization Iterative Cycle

Diagram 2: From GP Model to Acquisition Function

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Toolkit for BO-Guided Protein Engineering

| Category | Item / Reagent | Function in the BO Workflow |

|---|---|---|

| Sequence Library Generation | NNK/Codon-Variant Libraries; Array-based Oligo Pools; Site-Directed Mutagenesis Kits (e.g., Q5) | Creates the defined sequence search space for exploration. Enables rapid synthesis of in silico proposed variants. |

| High-Throughput Cloning & Expression | Golden Gate Assembly Mixes; Cell-free Protein Synthesis Systems; 96/384-well Deep-well Plates | Facilitates rapid, parallel construction and small-scale expression of proposed protein variants for functional screening. |

| Key Assay Reagents | His-tag Purification Resin & Plates; Fluorescent/Chromogenic Enzyme Substrates; Differential Scanning Fluorimetry Dyes (e.g., SYPRO Orange) | Enables standardized, quantitative measurement of the protein property/fitness objective (e.g., activity, stability, expression). |

| Data Management & Analysis | BO Software Packages (BoTorch, GPyOpt, scikit-optimize); Laboratory Information Management System (LIMS) | Provides the computational engine for the GP model and acquisition function. Tracks sample lineage and integrates experimental data. |

| Control & Calibration | Wild-Type Protein Standard; Assay Positive/Negative Controls; Fluorescence/Enzyme Calibration Standards | Ensures experimental consistency and data quality across multiple iterative batches, critical for reliable model training. |

Application Notes

Sample Efficiency in Directed Evolution Campaigns

Bayesian Optimization (BO) is uniquely suited for protein engineering due to its sample-efficient nature. It constructs a probabilistic surrogate model (typically Gaussian Processes) of the protein sequence-function landscape and uses an acquisition function to propose the most informative sequences to test next. This allows for the discovery of high-performance variants with far fewer experimental rounds compared to random screening or traditional design-build-test-learn cycles. In a recent benchmark, BO-based methods achieved a 3- to 5-fold reduction in the number of assays required to identify optimal enzyme variants for non-natural substrate conversion compared to high-throughput screening (HTS) of random libraries.

Robust Handling of Noisy Experimental Data

Protein expression and activity assays are inherently noisy due to biological variability and measurement error. BO's probabilistic framework naturally accounts for this uncertainty. The surrogate model incorporates noise estimates (e.g., via a white kernel in GPs), preventing overfitting to spurious data points. The acquisition function then balances exploration and exploitation under uncertainty. This is critical for applications like binding affinity (KD) measurement or thermostability (Tm) assays, where the coefficient of variation can regularly exceed 15%. Studies demonstrate that BO maintains robust search performance even when signal-to-noise ratios drop below 3:1, outperforming gradient-based or deterministic direct search methods.

Parallelization for High-Throughput Experimentation

Modern BO algorithms, such as batch, asynchronous, or multi-fidelity versions, enable parallel proposal of variant batches. This aligns with modern high-throughput capabilities like next-generation sequencing (NGS)-based functional screens and robotic cloning/expression platforms. Parallel acquisition functions (e.g., q-EI, q-UCB) select a set of diverse, high-potential sequences for simultaneous experimental testing, drastically reducing wall-clock time. In a 2023 study, parallel BO efficiently managed a batch size of 96 variants per cycle, accelerating affinity engineering of a therapeutic antibody by 60% compared to sequential BO.

Table 1: Comparative Performance of Optimization Strategies in Protein Engineering

| Optimization Method | Avg. Variants to Hit Target | Tolerance to Assay Noise (CV) | Typical Batch Size | Reported Time Reduction |

|---|---|---|---|---|

| Random Screening | 10,000 - 1,000,000 | Low (<10%) | 1 - 10,000 | Baseline |

| Directed Evolution (HTS) | 1,000 - 10,000 | Medium (10-20%) | 1,000 - 10,000 | 30% |

| Bayesian Optimization | 50 - 500 | High (>20%) | 1 - 96 (Parallel) | 60-70% |

| Deep Learning (Supervised) | 500 - 5,000* | Medium (10-15%) | 1 - 384 | 40-50%* |

*Requires large pre-existing dataset for training.

Experimental Protocols

Protocol: Bayesian Optimization Cycle for Enzyme Activity Engineering

Objective: To iteratively discover enzyme variants with improved catalytic activity (kcat/KM) using a BO-guided, parallelized workflow.

Materials: (See Scientist's Toolkit below)

Procedure:

- Initial Library Design & Construction (Cycle 0):

- Define sequence space (e.g., 10-15 target residues within an active site).

- Generate an initial diverse training set of 20-30 variants using methods like orthogonal array design or random sampling.

- Perform site-directed mutagenesis or gene synthesis to construct variant genes. Clone into expression vector.

- High-Throughput Expression & Assay (Parallelized Batch):

- Express variants in 96-deep-well plates using an automated microbial or mammalian expression system (e.g., E. coli SHuffle, HEK293T).

- Lyse cells and perform a coupled fluorescent or colorimetric activity assay in a plate reader. Include controls and replicates (n=3) to estimate measurement noise.

- Calculate normalized activity for each variant. Report mean and standard deviation.

- Bayesian Model Training:

- Encode protein sequences into a numerical feature vector (e.g., one-hot encoding, physicochemical properties, ESM-2 embeddings).

- Train a Gaussian Process (GP) regression model using all accumulated data. Use a Matérn kernel and a WhiteKernel to model noise. Optimize hyperparameters via maximum likelihood estimation.

- Batch Candidate Selection:

- Using the trained GP, evaluate a parallel acquisition function (q-Expected Improvement) over a large in-silico library (~100k sequences).

- Select the top 8-12 candidates that maximize the acquisition function, ensuring sequence diversity.

- Iteration:

- Return to Step 2 with the new batch of candidates. Continue for 5-10 cycles or until performance plateaus.

- Validation:

- Express and purify top-performing hits from small-scale cultures. Characterize kinetics (kcat, KM) using traditional spectrophotometric assays to confirm improvements.
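The model-training and batch-selection steps of this protocol can be sketched with scikit-learn as below. The data are synthetic stand-ins for encoded variants. The protocol calls for q-Expected Improvement, which scikit-learn does not provide, so this sketch swaps in a simpler heuristic: rank the pool by UCB and greedily skip candidates that sit too close to ones already chosen. The helper `greedy_diverse_batch` and the `min_dist` threshold are hypothetical.

```python
import numpy as np
from sklearn.gaussian_process import GaussianProcessRegressor
from sklearn.gaussian_process.kernels import ConstantKernel, Matern, WhiteKernel

rng = np.random.default_rng(0)

# Synthetic stand-in for 30 encoded variants and their noisy normalized activities
X_train = rng.normal(size=(30, 8))
y_train = np.sin(X_train[:, 0]) + 0.5 * X_train[:, 1] + rng.normal(scale=0.1, size=30)

# Matern 5/2 for the landscape plus a WhiteKernel for assay noise;
# hyperparameters are fitted by maximizing the log marginal likelihood
kernel = ConstantKernel(1.0) * Matern(length_scale=1.0, nu=2.5) + WhiteKernel(noise_level=0.1)
gp = GaussianProcessRegressor(kernel=kernel, normalize_y=True,
                              n_restarts_optimizer=2, random_state=0)
gp.fit(X_train, y_train)

def greedy_diverse_batch(scores, X_pool, batch_size, min_dist):
    """Pick high-scoring candidates, skipping any closer than min_dist to a chosen one."""
    chosen = []
    for idx in np.argsort(scores)[::-1]:
        if all(np.linalg.norm(X_pool[idx] - X_pool[j]) >= min_dist for j in chosen):
            chosen.append(int(idx))
        if len(chosen) == batch_size:
            break
    return chosen

# Score a synthetic in-silico pool and select a batch of 10
X_pool = rng.normal(size=(2000, 8))
mu, sigma = gp.predict(X_pool, return_std=True)
ucb = mu + 2.0 * sigma
batch = greedy_diverse_batch(ucb, X_pool, batch_size=10, min_dist=1.0)
print(gp.kernel_)
print(batch)
```

Printing `gp.kernel_` shows the fitted noise level, which is a useful sanity check against the replicate standard deviations measured in the assay step.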

Protocol: Handling Noisy Fluorescence-Activated Cell Sorting (FACS) Data for Binding Affinity

Objective: To optimize scFv binding affinity using BO, where fitness is derived from noisy FACS mean fluorescence intensity (MFI).

Procedure:

- Library Display & Sorting:

- Display variant library on yeast or phage surface.

- Stain with titrated concentrations of fluorescently labeled antigen.

- Sort populations for binding using FACS. Collect MFI for each variant gate. Perform two technical sorts per cycle.

- Data Preprocessing for Noise Modeling:

- For each variant, calculate the average and standard error of the MFI across sorts and replicates.

- Use the standard error as a direct input (y_err) for the GP model's noise parameter.

- Noise-Aware Bayesian Optimization:

- Train a heteroscedastic GP model, where noise levels can vary per data point (using the y_err).

- Use an acquisition function (e.g., Expected Improvement with noise integration) that is less likely to be misled by outliers with high uncertainty.

- Candidate Proposal:

- Propose sequences predicted to have both high binding signal and high confidence (low prediction variance).

- Proceed to sorting and characterization of the next batch.
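One simple way to feed per-variant noise into a GP is scikit-learn's `alpha` argument, which accepts an array of noise variances added to the diagonal of the kernel matrix. The sketch below computes the mean and standard error per variant and passes the squared standard error as `alpha`. The MFI values and one-dimensional features are placeholders, and working in log10(MFI) is an assumption made here for numerical convenience, not something the protocol specifies.

```python
import numpy as np
from sklearn.gaussian_process import GaussianProcessRegressor
from sklearn.gaussian_process.kernels import ConstantKernel, Matern

# Hypothetical MFI replicates: rows are variants, columns are sorts/replicates
mfi = np.array([
    [1200.0, 1350.0, 1280.0, 1310.0],
    [800.0, 2100.0, 1500.0, 950.0],     # a visibly noisy variant
    [3000.0, 3100.0, 2950.0, 3050.0],
    [500.0, 520.0, 480.0, 510.0],
])
X = np.array([[0.0], [1.0], [2.0], [3.0]])   # placeholder 1-D features

# Mean and standard error of the mean per variant (log10 scale, assumed)
log_mfi = np.log10(mfi)
y = log_mfi.mean(axis=1)
y_err = log_mfi.std(axis=1, ddof=1) / np.sqrt(mfi.shape[1])

# Per-point noise variance enters through alpha; y is centered by hand so
# that alpha stays in the same units as the targets
y_mean = y.mean()
gp = GaussianProcessRegressor(
    kernel=ConstantKernel(1.0) * Matern(length_scale=1.0, nu=2.5),
    alpha=y_err ** 2,
    random_state=0,
)
gp.fit(X, y - y_mean)

mu, sigma = gp.predict(X, return_std=True)
mu = mu + y_mean
print(np.round(y_err, 3))
print(np.round(mu, 3), np.round(sigma, 3))
```

Because the noisy variant carries a larger `alpha`, the fitted posterior is allowed to deviate further from its measured mean than from the tightly replicated variants.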

Visualizations

Bayesian Optimization Cycle for Protein Engineering

BO's Probabilistic Handling of Experimental Noise

The Scientist's Toolkit

Table 2: Key Research Reagent Solutions for BO-Guided Protein Engineering

| Item | Function / Relevance | Example/Supplier |

|---|---|---|

| Gaussian Process Software | Core for building the surrogate model; enables custom kernel and noise specification. | GPyTorch, scikit-learn, BoTorch |

| Parallel BO Framework | Provides state-of-the-art acquisition functions for batch/parallel candidate selection. | BoTorch (with Ax), Dragonfly |

| Protein Sequence Encoder | Converts amino acid sequences into numerical features for the model. | ESM-2 embeddings, one-hot encoding, physicochemical property vectors |

| Robotic Cloning System | Enables rapid, error-free construction of variant batches proposed by BO. | Opentrons OT-2, Echo 525 Liquid Handler |

| High-Throughput Expression Host | Consistent, small-scale protein production for activity screening. | E. coli BL21(DE3) or SHuffle, Pichia pastoris strains, HEK293F cells |

| Microplate Activity Assay Kits | Reliable, homogeneous assays to generate quantitative fitness data. | Promega GTPase/GEF kits, Thermo Fluorometric Protease Assay, custom coupled assays |

| Cell Sorter with Plate Sorting | For binding affinity screens; directly provides noisy MFI data for the BO loop. | BD FACSymphony, Sony SH800 sorter (96-well compatible) |

| Automated Data Pipeline | Links raw assay output directly to the BO model input, minimizing manual handling. | Custom Python scripts (Pandas, NumPy), KNIME, Benchling API |

Within the broader thesis advocating for Bayesian optimization (BO) as a transformative framework for biophysical research, protein engineering presents a quintessential application. Fitness landscapes, which are multidimensional mappings of protein sequence or structure to functional performance, are inherently high-dimensional, noisy, and expensive to sample. Traditional methods, such as directed evolution or rational design, often struggle with the combinatorial complexity. BO provides a principled, data-efficient strategy to navigate these landscapes by building a probabilistic surrogate model and using an acquisition function to select the most informative sequences for experimental testing, thereby accelerating the design-build-test-learn cycle.

Application Notes: BO-Driven Protein Engineering Campaigns

Recent literature demonstrates the successful application of BO to various protein engineering challenges, including enzyme activity optimization, antibody affinity maturation, and protein stability enhancement. The core advantage lies in BO's ability to balance exploration (sampling uncertain regions) and exploitation (refining promising candidates).

Table 1: Summary of Recent BO Applications in Protein Engineering

| Protein Target | Objective | Search Space Size | BO Algorithm Variant | Key Improvement | Citation (Year) |

|---|---|---|---|---|---|

| Glycosyltransferase | Reaction Yield | ~10^5 variants | Gaussian Process (GP) with Expected Improvement (EI) | 7-fold increase | Wang et al. (2024) |

| Anti-IL-23 Antibody | Binding Affinity (KD) | ~10^6 variants | Trust Region BO (TuRBO) | 50 pM to 0.5 pM | Wang et al. (2024) |

| Fluorescent Protein | Brightness & Stability | ~10^7 variants | Batch BO with qEI | 4.5x brighter, +15°C Tm | Wu et al. (2023) |

| PET Hydrolase | Thermostability (Tm) | ~10^4 variants | GP-UCB | ΔTm +12°C | Wang et al. (2024) |

Detailed Experimental Protocols

Protocol 1: High-Throughput Screening for BO Training Data Generation

Objective: To generate initial quantitative fitness data (e.g., enzymatic activity, binding signal) for a diverse library of protein variants to seed the Bayesian Optimization model.

Materials: See "Scientist's Toolkit" below.

Procedure:

- Library Design & Synthesis: Design an oligo library encoding targeted diversity (e.g., site-saturation mutagenesis at 4-6 critical positions). Use pooled gene synthesis or PCR-based assembly.

- Cloning & Expression: Clone the variant library into an appropriate expression vector (e.g., pET for E. coli) via golden gate or Gibson assembly. Transform into expression host cells to create a variant library with >10x coverage.

- Microtiter Plate Culture: Inoculate single colonies or pick arrayed clones into 96- or 384-well deep-well plates containing auto-induction media. Grow with shaking (220 rpm) at optimal temperature (e.g., 30°C for 20h).

- Cell Lysis & Clarification: Pellet cells by centrifugation (4000 x g, 15 min). Resuspend in lysis buffer (e.g., BugBuster Master Mix). Incubate 30 min, then centrifuge (4000 x g, 30 min) to clarify lysate.

- High-Throughput Assay:

- Enzyme Activity: Combine 10 µL clarified lysate with 40 µL substrate solution in assay buffer. Monitor product formation via fluorescence/absorbance (plate reader, kinetic mode for 10 min).

- Binding (Affinity): For surface-displayed proteins (yeast/mammalian), use staining with fluorescently labeled antigen and analysis via flow cytometry or plate-based fluorescence.

- Data Normalization: For each variant, normalize the raw signal (e.g., slope of kinetic read) by total protein concentration (determined by Bradford or A280 measurement) to calculate specific activity/expression. This normalized value constitutes the "fitness" score for BO input.
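The normalization step above can be sketched as a linear fit to the kinetic trace followed by division by protein concentration. The function name `specific_activity`, the idealized trace, and the concentration are hypothetical. A real pipeline would also restrict the fit to the linear phase of the reaction.

```python
import numpy as np

def specific_activity(time_min, signal, protein_mg_per_ml):
    """Slope of a linear fit (signal units per min) divided by total protein concentration."""
    slope, _intercept = np.polyfit(time_min, signal, deg=1)
    return slope / protein_mg_per_ml

# Idealized 10 min kinetic read with one point per minute
time_min = np.arange(0, 11)
signal = 50.0 + 12.0 * time_min          # background of 50, rate of 12 units/min

fitness = specific_activity(time_min, signal, protein_mg_per_ml=0.4)
print(fitness)
```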

Protocol 2: Iterative BO Cycle for Protein Optimization

Objective: To implement the closed-loop BO cycle for iterative protein design.

Procedure:

- Initial Data Curation: Compile initial dataset D = {x_i, y_i} where x_i is a feature vector (e.g., one-hot encoded sequence, physicochemical descriptors) and y_i is the normalized fitness from Protocol 1. Aim for N=50-200 initial data points.

- Surrogate Model Training: Train a Gaussian Process (GP) regression model on D. Standardize fitness values (zero mean, unit variance). Choose a kernel (e.g., Matérn 5/2) suited for biological landscapes. Optimize kernel hyperparameters via maximum marginal likelihood.

- Candidate Selection via Acquisition Function: Using the trained GP, compute the acquisition function α(x) (e.g., Expected Improvement, Upper Confidence Bound) across the defined sequence space. Identify the batch of k sequences (e.g., k=5-10) that maximize α(x).

- In Silico Validation (Optional): Use molecular docking or stability prediction tools (e.g., Rosetta, FoldX) to perform a computational filter on the BO-proposed sequences, selecting the top k' for experimental testing.

- Experimental Build & Test: For each of the k' selected sequences, perform site-directed mutagenesis to construct variants. Characterize these variants using Protocol 1 (in smaller scale but higher precision, e.g., triplicate assays).

- Model Update: Append the new experimental data {x_new, y_new} to the dataset D. Retrain the GP surrogate model. Iterate steps 2-6 for 5-10 cycles or until a performance threshold is met.

Diagram Title: BO Iterative Optimization Cycle for Protein Engineering

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Materials for BO-Driven Protein Engineering

| Item | Function in Protocol | Example Product/Catalog |

|---|---|---|

| Pooled Gene Library | Source of initial sequence diversity for screening. | Twist Bioscience, Custom Oligo Pools |

| High-Efficiency Cloning Kit | For robust assembly of variant libraries into expression vectors. | NEB HiFi DNA Assembly Master Mix (E2621) |

| Competent E. coli Cells | For library transformation and plasmid propagation. | NEB Turbo (C2984H) or Electrocompetent cells |

| Deep-Well 384-Well Plate | High-density culture for parallel protein expression. | Axygen P-DW-20-C |

| Automated Liquid Handler | Enables reproducible plating, assay setup, and reagent addition. | Beckman Coulter Biomek i7 |

| Lysozyme/Lysis Reagent | Releases soluble protein from bacterial cells in HTP format. | MilliporeSigma BugBuster (71456) |

| Fluorogenic/Chromogenic Substrate | Enables kinetic activity measurement in plate reader. | Custom from vendors like Thermo Fisher or Promega |

| Microplate Spectrophotometer/Fluorometer | For high-throughput absorbance/fluorescence readout of assays. | Tecan Spark or BMG CLARIOstar |

| GP/BO Software Package | Implements surrogate modeling and acquisition function logic. | BoTorch, GPyOpt, or custom Python scripts |

Diagram Title: BO Navigates a Rugged Protein Fitness Landscape

Building Your Bayesian Optimization Pipeline: A Step-by-Step Framework

Within the thesis on Bayesian optimization (BO) for protein engineering, a rigorous definition of the search space is critical. The search space is not merely a set of sequences; it is a multi-dimensional construct defined by sequence permutations, structural parameters, and functional fitness metrics. This document provides application notes and protocols for researchers to operationalize this definition for efficient BO-driven campaigns.

Quantitative Definition of Search Space Dimensions

Sequence Space

The combinatorial space defined by amino acid choices at mutable positions.

Table 1: Quantifying Sequence Space Complexity

| Parameter | Description | Typical Range / Example | Calculation |

|---|---|---|---|

| Variable Positions (k) | Number of residues targeted for mutagenesis. | 5 - 20 positions | Experimental design. |

| Alphabet Size (a) | Number of amino acids considered per position (e.g., all 20, a reduced set, or nucleotides). | 4 (DNA bases) to 20 (AA) | Based on library generation method. |

| Total Variants (N) | Total possible theoretical variants. | ~10^3 (a = 4, k = 5) to ~10^26 (a = 20, k = 20) | N = a^k |

| Accessible Variants | Number of variants feasibly constructed and screened. | 10^3 - 10^8 | Limited by library synthesis & HTS capacity. |
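The N = a^k relationship in the table can be checked with a few lines of arithmetic. The sketch below also reports what fraction of the space a screen of a given size could cover. The 10^8 screening capacity is taken from the upper end of the "Accessible Variants" row, and the function names are illustrative.

```python
import math

def sequence_space_size(alphabet_size, n_positions):
    """Total theoretical variants N = a^k."""
    return alphabet_size ** n_positions

def coverage_fraction(n_screened, alphabet_size, n_positions):
    """Fraction of the space sampled by a screen, capped at 1."""
    return min(1.0, n_screened / sequence_space_size(alphabet_size, n_positions))

screen_capacity = 10 ** 8   # upper end of the accessible range in the table

for a, k in [(20, 5), (20, 10), (20, 20)]:
    n = sequence_space_size(a, k)
    frac = coverage_fraction(screen_capacity, a, k)
    print(f"a={a}, k={k}: N = 10^{math.log10(n):.1f}, coverage = {frac:.2e}")
```

With all 20 amino acids, a 10^8 screen covers the space fully only at around five positions. Coverage then falls by a factor of 20 for every position added.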

Structural Space

The physicochemical and 3D conformational space spanned by the sequence variants.

Table 2: Key Structural Metrics for Search Space Characterization

| Metric | Description | Measurement Method | Relevance to Fitness |

|---|---|---|---|

| Thermal Stability (Tm, °C) | Melting temperature; proxy for folding stability. | Differential scanning fluorimetry (DSF), CD spectroscopy. | Correlates with expressibility & in vivo half-life. |

| Aggregation Propensity | Tendency to form insoluble aggregates. | Static light scattering (SLS), SEC-MALS. | Impacts yield, immunogenicity. |

| Structural RMSD (Å) | Root-mean-square deviation of backbone atoms from a reference. | X-ray crystallography, Cryo-EM, computational modeling (AlphaFold2). | Indicates fold preservation. |

| Solvent Accessible Surface Area (SASA, Ų) | Surface area accessible to a solvent molecule. | Computed from PDB structures. | Informs on binding site occlusion. |

Fitness Metric Space

The functional readouts that define the objective of the optimization.

Table 3: Hierarchy and Properties of Common Fitness Metrics

| Fitness Metric | Assay Type | Throughput | Noise Level | Key Limitation |

|---|---|---|---|---|

| Catalytic Efficiency (kcat/Km) | Enzyme kinetics | Low | Low | Low throughput, resource-intensive. |

| Binding Affinity (KD, nM) | SPR, BLI, ELISA | Medium | Medium | May not correlate with cellular activity. |

| Expression Yield (mg/L) | Purification & quantification | Medium | High | Confounded by stability & solubility. |

| Cellular Activity (IC50, EC50) | Cell-based reporter assay | High | High | Indirect measure, off-target effects. |

| Selectivity Index | Ratio of target vs. off-target activity | Varies | Varies | Requires multiplexed or orthogonal assays. |

Experimental Protocols

Protocol 3.1: High-Throughput Thermal Stability Screening via DSF

Purpose: To measure Tm for hundreds of protein variants in parallel, informing the structural stability dimension. Reagents: See Toolkit Section 4. Procedure:

- Sample Preparation: In a 96- or 384-well PCR plate, mix 10 µL of each purified protein variant (0.2 - 0.5 mg/mL in assay buffer) with 10 µL of a 10X SYPRO Orange dye solution.

- Plate Setup: Include a buffer-only + dye control and a wild-type protein control in triplicate. Seal plate with an optical film.

- Instrument Programming: Load plate into a real-time PCR thermocycler. Set the temperature ramp from 25°C to 95°C with a gradual increase (e.g., 1°C/min) while monitoring fluorescence in the ROX/FAM channel (excitation ~470 nm, emission ~570 nm).

- Data Analysis: Export raw fluorescence (F) vs. temperature (T) data. For each well, normalize F to fraction unfolded (Fu). Calculate Tm as the temperature at Fu = 0.5 via Boltzmann sigmoidal curve fitting.
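The data analysis step can be sketched as follows, assuming NumPy and SciPy are available. This is a minimal illustration rather than a validated analysis pipeline: the function names and the four-parameter Boltzmann form are assumptions, and the sketch does not handle the post-peak fluorescence decline that real DSF traces often show (such traces are usually truncated at the fluorescence maximum before fitting).

```python
import numpy as np
from scipy.optimize import curve_fit


def boltzmann(T, f_min, f_max, tm, slope):
    """Boltzmann sigmoid: fluorescence rises from f_min to f_max around T = tm."""
    return f_min + (f_max - f_min) / (1.0 + np.exp((tm - T) / slope))


def fit_tm(temps, fluor):
    """Fit one well and return (Tm, fraction-unfolded curve Fu)."""
    temps = np.asarray(temps, dtype=float)
    fluor = np.asarray(fluor, dtype=float)
    # Initial guess: plateaus from the data, Tm at the steepest point of the trace.
    tm_guess = temps[np.argmax(np.gradient(fluor, temps))]
    p0 = [fluor.min(), fluor.max(), tm_guess, 2.0]
    popt, _ = curve_fit(boltzmann, temps, fluor, p0=p0, maxfev=10000)
    f_min, f_max, tm, _slope = popt
    # Normalize raw fluorescence to fraction unfolded; Fu = 0.5 at T = Tm by construction.
    fu = (fluor - f_min) / (f_max - f_min)
    return float(tm), fu
```

In practice the fitted Tm of each variant would be compared against the wild-type control wells on the same plate, so that plate-to-plate drift cancels out.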

Protocol 3.2: Deep Mutational Scanning (DMS) for Fitness Landscape Mapping

Purpose: To generate high-resolution sequence-fitness data for a targeted region. Procedure:

- Saturation Library Construction: Design oligonucleotides to randomize target codons (e.g., NNK degeneracy). Use PCR or gene synthesis to construct a diverse library within an appropriate display (phage, yeast) or survival selection vector.

- Transformation & Diversity Validation: Transform library into host cells (e.g., electrocompetent E. coli) to achieve >10x coverage of theoretical diversity. Sequence 10-20 clones via Sanger sequencing to confirm mutation rate and randomness.

- Functional Selection: Subject the library to a selection pressure (e.g., binding to immobilized antigen, antibiotic resistance linked to enzymatic activity). Perform 2-3 rounds of selection, retaining pre- and post-selection populations.

- High-Throughput Sequencing: Isolate plasmid DNA from the initial and selected populations. Prepare amplicons for Illumina sequencing of the mutated region.

- Fitness Score Calculation: For each variant, count its frequency in the pre-selection (input) and post-selection (output) libraries. Calculate an enrichment score (e.g., log2(output frequency / input frequency)). Normalize scores relative to wild-type.
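The fitness score calculation in the last step can be sketched as below. The pseudocount (added so that variants with zero reads do not produce infinite scores) is an assumption of this sketch, not something the protocol specifies, and the variant labels are hypothetical.

```python
import math


def enrichment_scores(input_counts, output_counts, wild_type, pseudocount=0.5):
    """log2(output frequency / input frequency) per variant, reported relative to wild-type.

    input_counts / output_counts: dict mapping variant label -> read count.
    pseudocount: assumed smoothing constant (not part of the protocol).
    """
    variants = set(input_counts) | set(output_counts)
    in_total = sum(input_counts.values()) + pseudocount * len(variants)
    out_total = sum(output_counts.values()) + pseudocount * len(variants)
    raw = {}
    for v in variants:
        f_in = (input_counts.get(v, 0) + pseudocount) / in_total
        f_out = (output_counts.get(v, 0) + pseudocount) / out_total
        raw[v] = math.log2(f_out / f_in)
    wt_score = raw[wild_type]
    # Subtracting the wild-type score sets wild-type to 0 on the log2 scale.
    return {v: s - wt_score for v, s in raw.items()}
```

A positive score then means the variant was enriched more strongly than wild-type under selection, and a negative score means it was depleted.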

Protocol 3.3: Determining Binding Kinetics via Biolayer Interferometry (BLI)

Purpose: To quantitatively measure the association (ka) and dissociation (kd) rates, and calculate KD, for protein-ligand interactions. Procedure:

- Biosensor Preparation: Hydrate Anti-GST or Streptavidin (SA) biosensors in kinetics buffer for 10 min. Load biosensor with GST-tagged protein or biotinylated ligand, respectively, to a baseline shift of 0.5-1 nm.

- Association Step: Move the loaded biosensor to wells containing a concentration series of the analyte (e.g., 6 concentrations, 2-fold dilutions). Monitor shift for 5-10 minutes.

- Dissociation Step: Transfer biosensor to a well containing kinetics buffer only. Monitor shift for 5-10 minutes.

- Data Processing: Align baseline steps, subtract reference sensor data. Fit the association and dissociation curves globally using a 1:1 binding model in the instrument software to determine ka, kd, and KD (kd/ka).
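For orientation, the 1:1 binding model that the instrument software fits can be written out directly. The sketch below assumes NumPy and uses the standard 1:1 Langmuir association and dissociation expressions; the function names and the example rate constants are hypothetical.

```python
import numpy as np


def kd_from_rates(ka, kd):
    """Equilibrium dissociation constant KD (M) = kd (1/s) / ka (1/(M*s))."""
    return kd / ka


def association(t, conc, ka, kd, rmax):
    """Sensor response during association for a 1:1 model at analyte concentration conc (M)."""
    k_obs = ka * conc + kd
    r_eq = rmax * conc / (conc + kd / ka)
    return r_eq * (1.0 - np.exp(-k_obs * t))


def dissociation(t, r0, kd):
    """Sensor response during dissociation, starting from response r0."""
    return r0 * np.exp(-kd * t)


if __name__ == "__main__":
    ka, kd = 1e5, 1e-3                       # hypothetical rate constants
    concs = 200e-9 / 2 ** np.arange(6)       # six concentrations, 2-fold dilutions
    t = np.linspace(0.0, 600.0, 601)         # 10 min association
    for c in concs:
        print(f"{c * 1e9:7.2f} nM -> response at 600 s = {association(t, c, ka, kd, 1.0)[-1]:.3f}")
    print("KD (nM):", kd_from_rates(ka, kd) * 1e9)
```

Fitting all concentrations globally means a single (ka, kd, Rmax) set is shared across the dilution series, which is what constrains the kinetic constants.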

The Scientist's Toolkit

Table 4: Key Research Reagent Solutions

| Item | Function in Defining Search Space |

|---|---|

| NNK Degenerate Oligonucleotides | Encode all 20 amino acids plus one stop codon (TAG) in 32 codons, enabling maximal sequence diversity for saturation mutagenesis. |

| SYPRO Orange Dye | Environment-sensitive fluorescent dye used in DSF to monitor protein unfolding as a function of temperature. |

| Biolayer Interferometry (BLI) Biosensors (e.g., Anti-GST, SA) | Enable label-free, real-time measurement of binding kinetics for fitness characterization. |

| Next-Generation Sequencing (NGS) Kits (Illumina MiSeq) | Provide deep sequencing of variant libraries before/after selection for DMS fitness scoring. |

| Phusion High-Fidelity DNA Polymerase | Used for high-fidelity (low error rate) amplification of gene libraries during construction steps. |

| Golden Gate Assembly Mix | Enables rapid, seamless, and highly efficient assembly of multiple DNA fragments for combinatorial library construction. |

| Stable Cell Lines Expressing Target Receptor | Provide a consistent, biologically relevant context for cellular activity fitness assays. |

Visualization of Concepts

Diagram Title: Search Space Definition Informs Bayesian Optimization Cycle

Diagram Title: Mapping Multi-Dimensional Fitness Landscapes via DMS

Application Notes

This document provides application notes for selecting surrogate models within a Bayesian optimization (BO) framework for protein engineering. The goal is to efficiently navigate a high-dimensional, expensive-to-evaluate fitness landscape (e.g., protein activity, stability, expression) to identify optimal protein variants.

Gaussian Processes (GPs)

Core Application: The gold-standard surrogate for sample-efficient BO in continuous domains with moderate dimensionality (typically <20). Ideal when the number of experimental rounds (protein library screenings) is severely limited.

- Strengths: Provides well-calibrated uncertainty estimates (predictive variance) essential for acquisition function computation (e.g., Expected Improvement). Naturally handles noise.

- Weaknesses: Cubic computational scaling with the number of data points. Kernel choice and hyperparameter priors are critical for performance on biological data.

Bayesian Neural Networks (BNNs)

Core Application: Promising for high-dimensional, non-stationary, or complex protein sequence-function landscapes where data from parallelized assays (e.g., deep mutational scanning) is becoming more available.

- Strengths: Scalable to large datasets and high-dimensional input spaces (e.g., one-hot encoded protein sequences). High representational power for complex patterns.

- Weaknesses: Computational overhead for training and inference; approximate posterior inference (e.g., Monte Carlo Dropout, SWAG) can lead to less reliable uncertainty quantification compared to GPs.

Random Forests (RFs)

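Core Application: An effective, robust baseline for discrete/structured sequence inputs, for example as the surrogate in SMAC, which models the objective with a random forest. The Tree-structured Parzen Estimator (TPE) is a related non-GP option for such spaces, but it relies on density estimators rather than random forests.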

- Strengths: Fast training and prediction, handles mixed data types, robust to irrelevant features. Built-in feature importance.

- Weaknesses: Standard RFs do not natively provide well-calibrated probabilistic uncertainty. Quantile regression forests or ensemble methods are needed for uncertainty estimates.

Table 1: Key Characteristics of Surrogate Models for Protein Engineering BO

| Feature | Gaussian Process (GP) | Bayesian Neural Network (BNN) | Random Forest (RF) |

|---|---|---|---|

| Uncertainty Quantification | Native, probabilistic (exact) | Approximate (via variational inference, MCMC, ensembles) | Non-probabilistic; requires extensions (e.g., jackknife, quantile RF) |

| Data Efficiency | Excellent (for low D) | Good (with appropriate priors/regularization) | Moderate to Poor (requires more data) |

| Scalability (# Samples) | Poor (>~10,000 costly) | Good (can scale to large data) | Excellent |

| Handling High-Dim Inputs | Poor (kernel design sensitive) | Good (via architecture) | Good (with feature selection) |

| Handling Discrete/Categorical Inputs | Requires specialized kernels | Native (via embedding layers) | Native |

| Interpretability | Moderate (via kernel, lengthscales) | Low (complex black box) | Moderate (feature importance, tree structure) |

| Typical BO Use-Case | Sample-efficient lab experiments | Data-rich scenarios (e.g., multi-modal data) | Robust baseline, structured/combinatorial spaces |

Table 2: Performance Benchmarks on Representative Protein Datasets (Hypothetical Summary)

| Model | GFP Brightness Optimization (10 Rounds, 5D) | Enzyme Thermostability (20 Rounds, 15D) | Antibody Affinity (50 Rounds, 100D+) |

|---|---|---|---|

| GP (Matern Kernel) | Best Found: +4.2 SD (Fast convergence) | Best Found: +12.5°C (Stable) | Failed (Kernel choice critical) |

| BNN (MC Dropout) | Best Found: +3.8 SD | Best Found: +13.1°C | Best Found: -2.1 nM KD (Scaled well) |

| RF (Quantile) | Best Found: +3.5 SD | Best Found: +11.8°C | Best Found: -1.8 nM KD |

Experimental Protocols

Protocol 1: Standard GP-Based BO Cycle for Protein Engineering

Objective: To iteratively design and test protein variant libraries to maximize a target property. Materials: See "The Scientist's Toolkit" below. Workflow:

- Initial Design of Experiments (DoE): Generate an initial diverse library of 10-50 protein variants using methods like site-saturation mutagenesis at selected positions or random mutagenesis with low coverage. Express and purify variants, then assay for target property (e.g., enzymatic activity via fluorescence/absorbance).

- Model Initialization: Encode protein variants (e.g., using one-hot encoding, physicochemical features, or embeddings). Define a GP prior with a Matern 5/2 kernel and a constant mean function. Set priors on kernel hyperparameters (lengthscales, noise variance).

- Model Training: Optimize the GP hyperparameters by maximizing the log marginal likelihood using the initial assay data (X, y).

- Acquisition Function Maximization: Using the trained GP, compute the Expected Improvement (EI) over the vast space of possible protein sequences defined by the experimental constraints (e.g., single/multi-point mutations). Use a genetic algorithm or gradient-based methods on continuous relaxations to propose the next batch of 5-20 variants to experimentally test.

- Iterative Loop: Clone, express, purify, and assay the newly proposed variants. Append the new data (X_new, y_new) to the training set. Retrain the GP hyperparameters. Repeat steps 4-5 for the desired number of experimental rounds or until performance convergence.

- Validation: Characterize the top-performing identified variants in triplicate and with secondary assays to confirm improved properties.
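The loop in Protocol 1 can be sketched end to end on a toy problem using only NumPy. This is a simplified illustration under several assumptions: the alphabet, the number of positions, and `toy_assay` are hypothetical stand-ins for a real library and wet-lab measurement; kernel hyperparameters are fixed rather than optimized by marginal likelihood; and the batch is simply the top-EI candidates, with no diversity penalty.

```python
import itertools
from math import erf, pi, sqrt

import numpy as np

ALPHABET = "AVLG"   # hypothetical reduced alphabet
N_POSITIONS = 3     # hypothetical number of mutable positions


def one_hot(seq):
    vec = np.zeros(len(seq) * len(ALPHABET))
    for i, aa in enumerate(seq):
        vec[i * len(ALPHABET) + ALPHABET.index(aa)] = 1.0
    return vec


def matern52(A, B, lengthscale=2.0):
    """Matern 5/2 kernel with unit variance and a fixed (not optimized) lengthscale."""
    d = np.sqrt(((A[:, None, :] - B[None, :, :]) ** 2).sum(-1)) / lengthscale
    return (1.0 + sqrt(5.0) * d + 5.0 / 3.0 * d ** 2) * np.exp(-sqrt(5.0) * d)


def gp_posterior(X, y, Xc, noise=1e-2):
    """Posterior mean and std at candidates Xc; outputs are standardized internally."""
    y_mean, y_std = y.mean(), y.std() + 1e-9
    z = (y - y_mean) / y_std
    L = np.linalg.cholesky(matern52(X, X) + noise * np.eye(len(X)))
    alpha = np.linalg.solve(L.T, np.linalg.solve(L, z))
    Ks = matern52(X, Xc)
    v = np.linalg.solve(L, Ks)
    sigma = np.sqrt(np.maximum(1.0 - (v ** 2).sum(0), 1e-12))
    return (Ks.T @ alpha) * y_std + y_mean, sigma * y_std


def expected_improvement(mu, sigma, best, xi=0.01):
    z = (mu - best - xi) / sigma
    cdf = 0.5 * (1.0 + np.vectorize(erf)(z / sqrt(2.0)))
    pdf = np.exp(-0.5 * z ** 2) / sqrt(2.0 * pi)
    return (mu - best - xi) * cdf + sigma * pdf


def toy_assay(seq):
    """Hypothetical stand-in for the wet-lab assay: additive effects plus one epistatic pair."""
    score = {"A": 0.0, "V": 0.5, "L": 1.0, "G": -0.5}
    bonus = 1.0 if seq[0] == "L" and seq[2] == "V" else 0.0
    return sum(score[aa] for aa in seq) + bonus


def run_bo(assay, n_init=8, rounds=5, batch=4, seed=0):
    rng = np.random.default_rng(seed)
    seqs = ["".join(s) for s in itertools.product(ALPHABET, repeat=N_POSITIONS)]
    X_all = np.array([one_hot(s) for s in seqs])
    tested = [int(i) for i in rng.choice(len(seqs), size=n_init, replace=False)]
    y = [assay(seqs[i]) for i in tested]                 # step 1: initial DoE
    for _ in range(rounds):
        mu, sigma = gp_posterior(X_all[tested], np.array(y), X_all)   # steps 2-3
        ei = expected_improvement(mu, sigma, max(y))                  # step 4
        ei[tested] = -np.inf                             # never re-propose tested variants
        picks = [int(i) for i in np.argsort(ei)[-batch:]]
        tested.extend(picks)                             # step 5: "assay" and append
        y.extend(assay(seqs[i]) for i in picks)
    return [seqs[i] for i in tested], y


if __name__ == "__main__":
    seqs, y = run_bo(toy_assay)
    print("best variant found:", seqs[int(np.argmax(y))], "score:", max(y))
```

For real campaigns a library such as BoTorch would normally handle hyperparameter fitting and batch acquisition; the point of the sketch is only to make the data flow of the protocol explicit.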

Protocol 2: BNN Surrogate for Multi-Fidelity Protein Optimization

Objective: Leverage low-fidelity, high-throughput data (e.g., yeast display enrichment scores) to guide expensive, high-fidelity experiments (e.g., SPR binding affinity). Workflow:

- Data Collection: Generate a large, diverse library and screen it using a high-throughput method (low-fidelity, noisy output y_LF). A smaller, representative subset is characterized using the gold-standard assay (high-fidelity output y_HF).

- BNN Architecture: Implement a multi-fidelity BNN with two input branches: one for the protein sequence and one for a fidelity indicator. The network should have several Bayesian layers (e.g., using Flipout or Reparameterization layers for variational inference).

- Training: Train the BNN on the combined dataset (X, fidelity, y). Use a scaled heteroskedastic loss function to account for different noise levels in y_LF and y_HF.

- BO Loop: Use the trained BNN's predictive mean and variance (obtained via Monte Carlo sampling) to compute the Upper Confidence Bound (UCB) acquisition function. Propose new variants predicted to perform well in high-fidelity space. Iterate by performing new high-fidelity assays on proposed variants and updating the training data.

- Validation: Validate final predictions with full biophysical characterization.

Protocol 3: Random Forest for Combinatorial Sequence Space Pruning

Objective: Rapidly narrow down a vast combinatorial space (e.g., 10 positions with 20 amino acids each) to a promising region for more intensive GP-based optimization. Workflow:

- Space Definition: Define the discrete sequence space (positions and allowed mutations).

- Initial Random Sampling: Randomly sample 100-1000 sequences from the space (can be in silico if a predictor is available, or a small experimental batch).

- RF Model Training: Train a standard Random Forest regressor on the sampled data. Use out-of-bag error for performance estimate.

- Feature Importance & Space Pruning: Analyze Gini importance or permutation importance from the trained RF to identify which sequence positions are most critical for the function. Prune the search space by fixing unimportant positions to wild-type or a consensus.

- Informed DoE for Downstream BO: Use the reduced, lower-dimensional space to generate a high-quality initial design (e.g., Latin Hypercube) for launching a subsequent, more sample-efficient GP-BO campaign as described in Protocol 1.
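The training and pruning steps of Protocol 3 might look like the following, assuming scikit-learn is installed. The sketch uses impurity-based importances (the regression analogue of Gini importance) summed over the one-hot columns of each position; `rank_positions` and `positions_to_keep` are hypothetical helper names, and permutation importance could be substituted.

```python
import numpy as np
from sklearn.ensemble import RandomForestRegressor

ALPHABET = "ACDEFGHIKLMNPQRSTVWY"


def one_hot(seqs):
    """One-hot encode equal-length sequences into a (n_seqs, length * 20) matrix."""
    X = np.zeros((len(seqs), len(seqs[0]) * len(ALPHABET)))
    for r, seq in enumerate(seqs):
        for p, aa in enumerate(seq):
            X[r, p * len(ALPHABET) + ALPHABET.index(aa)] = 1.0
    return X


def rank_positions(seqs, fitness, n_trees=200, seed=0):
    """Return (importance per position, out-of-bag R^2) from a random forest regressor."""
    rf = RandomForestRegressor(n_estimators=n_trees, oob_score=True, random_state=seed)
    rf.fit(one_hot(seqs), fitness)
    # Sum the importances of the 20 one-hot columns that belong to each position.
    per_position = rf.feature_importances_.reshape(len(seqs[0]), len(ALPHABET)).sum(axis=1)
    return per_position, rf.oob_score_


def positions_to_keep(per_position, keep):
    """Indices (0-based) of the `keep` most important positions; the rest get fixed."""
    return sorted(np.argsort(per_position)[-keep:].tolist())
```

Positions not returned by `positions_to_keep` would be fixed to wild-type or a consensus residue before launching the GP-based campaign. A low out-of-bag score is a signal that the importances should not be trusted for pruning.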

Visualization: Workflow Diagrams

Title: Gaussian Process Bayesian Optimization Loop

Title: Surrogate Model Selection Decision Flow

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Materials for Protein Engineering Bayesian Optimization

| Item | Function in BO Workflow | Example Product/Type |

|---|---|---|

| High-Fidelity DNA Polymerase | Accurate amplification of gene fragments for library construction. | Q5 High-Fidelity DNA Polymerase (NEB) |

| Cloning Kit (Gibson/ Golden Gate) | Seamless assembly of mutant gene libraries into expression vectors. | Gibson Assembly Master Mix (NEB), MoClo Toolkit |

| Competent E. coli Cells | High-efficiency transformation for library propagation and plasmid recovery. | NEB 5-alpha or 10-beta Electrocompetent Cells |

| Protein Expression System | Controlled overexpression of protein variants. | T7-based vectors (pET series) in BL21(DE3) E. coli |

| Chromatography Resins | Purification of His-tagged or other affinity-tagged protein variants. | Ni-NTA Superflow (Qiagen), Strep-Tactin XT |

| Microplate Reader | High-throughput measurement of protein activity (e.g., fluorescence, absorbance). | Tecan Spark, BMG CLARIOstar |

| Surface Plasmon Resonance (SPR) Chip | Label-free, quantitative measurement of binding kinetics (high-fidelity assay). | Series S Sensor Chip (Cytiva) |

| Next-Generation Sequencing (NGS) Library Prep Kit | Encoding and deconvoluting pooled variant libraries for deep mutational scanning. | Illumina Nextera XT |

| Automated Liquid Handler | Enables reproducible, high-throughput pipetting for assay setup and library plating. | Beckman Coulter Biomek i7 |

| BO Software Package | Implementing surrogate models and optimization loops. | BoTorch, GPyOpt, Scikit-optimize, custom Python scripts |

This document provides detailed application notes and protocols for three principal acquisition functions used in Bayesian Optimization (BO), framed within a broader thesis on optimizing protein engineering campaigns. The goal is to enable efficient navigation of high-dimensional, expensive-to-evaluate experimental spaces—such as protein fitness landscapes—to identify variants with enhanced properties (stability, activity, expression). BO iteratively uses a probabilistic surrogate model and an acquisition function to decide which sequence or construct to assay next, maximizing information gain and performance per experimental dollar and hour.

Core Mathematical Definitions & Comparative Data

Table 1: Comparative Summary of Key Acquisition Functions

| Acquisition Function | Key Formula (Parameterized) | Exploitation vs. Exploration Balance | Computational Cost | Handles Noisy Observations? | Primary Use Case in Protein Engineering |

|---|---|---|---|---|---|

| Expected Improvement (EI) | EI(x) = E[max(f(x) - f(x*) - ξ, 0)] where f(x*) is the current best. | Tunable via ξ (jitter parameter). Higher ξ encourages exploration. | Low (analytic for GP) | Yes (via noise-aware GP) | Directed search for a single optimal variant; balance between local refinement and global search. |

| Upper Confidence Bound (UCB/GP-UCB) | UCB(x) = μ(x) + β_t σ(x) where β_t is a schedule parameter. | Explicitly controlled by β_t. Larger β_t increases exploration weight. | Very Low (analytic) | Yes | Systematic exploration of uncertain regions; good for initial space-filling before intense exploitation. |

| Knowledge Gradient (KG) | KG(x) = E[ max μ_{t+1} - max μ_t \| x_t = x ] | Implicit, via global value of information. | High (requires inner optimization and sampling) | Yes (can be formulated for noisy observations) | Maximizing final recommendation quality after a fixed budget; prioritizing informative experiments. |

Table 2: Typical Parameter Ranges & Heuristics

| Function | Parameter | Typical Range / Heuristic | Impact |

|---|---|---|---|

| EI | ξ (jitter) | 0.01 - 0.1 | >0 prevents over-exploitation of small improvements. |

| GP-UCB | β_t | (Theoretical) β_t ∝ log(t²d); Practical: Constant in [1, 3] | Rule-of-thumb constant 2.0 often effective. |

| KG | Number of Fantasy Samples | 50 - 500 | More samples reduce approximation noise but increase compute. |
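For a GP surrogate with posterior mean μ(x) and standard deviation σ(x), the EI and UCB entries in the tables above have the standard closed forms below, written here with the same symbols (ξ, β_t, f(x*)). Φ and φ denote the standard normal CDF and PDF.

```latex
\begin{aligned}
z(x) &= \frac{\mu(x) - f(x^{*}) - \xi}{\sigma(x)}, \\
\mathrm{EI}(x) &= \bigl(\mu(x) - f(x^{*}) - \xi\bigr)\,\Phi\bigl(z(x)\bigr)
                 + \sigma(x)\,\varphi\bigl(z(x)\bigr), \qquad \sigma(x) > 0, \\
\mathrm{EI}(x) &= \max\bigl(\mu(x) - f(x^{*}) - \xi,\; 0\bigr), \qquad \sigma(x) = 0, \\
\mathrm{UCB}(x) &= \mu(x) + \beta_t\,\sigma(x).
\end{aligned}
```

As a worked example with illustrative numbers: for μ(x) = 1.2, σ(x) = 0.5, f(x*) = 1.0 and ξ = 0.01, z ≈ 0.38 and EI ≈ 0.19 × 0.648 + 0.5 × 0.371 ≈ 0.31; with β_t = 2, UCB = 1.2 + 2 × 0.5 = 2.2.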

Experimental Protocols & Implementation Workflows

Protocol 3.1: Standardized Bayesian Optimization Cycle for Directed Evolution

Objective: To identify a protein variant maximizing a quantitative assay (e.g., fluorescence, enzymatic activity) within a defined sequence space and experimental budget (N cycles, M replicates). Materials: See "Scientist's Toolkit" (Section 5). Procedure:

- Initial Design: Use a space-filling design (e.g., Sobol sequence, random) to select an initial library of 10-50 variants. Synthesize and assay.

- Model Training: Fit a Gaussian Process (GP) surrogate model to all accumulated data. Standardize outputs. Use a Matérn 5/2 kernel. Optimize hyperparameters via marginal likelihood maximization.

- Acquisition Optimization:

- For EI/UCB: Compute acquisition function over a discrete candidate set (≥10⁴ variants) derived from the sequence space. Use multistart gradient optimization if variables are continuous (e.g., continuous embeddings).

- For KG: Use a one-step look-ahead simulation ("fantasies"): a. Draw 100-200 posterior samples at the candidate point x. b. For each sample, fictitiously add it to the training data, refit the GP (or update posterior mean analytically), and compute the new optimum. c. KG value = Average(new optimum) - Current optimum.

- Next Experiment Selection: Select the point x* maximizing the acquisition function. If using replicates, select top M points.

- Parallel Experimentation (Batch BO): For EI/UCB, use a penalization or local penalization strategy to select a diverse batch of M points in one iteration.

- Iteration: Synthesize and assay the selected variant(s). Add data to the training set. Repeat from Step 2 until the experimental budget is exhausted.

- Final Recommendation: Output the variant with the highest observed mean performance or the highest posterior mean from the final model.
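The KG "fantasy" computation in the acquisition step can be sketched for a discrete candidate set using the analytic posterior-mean update instead of refitting the GP. The sketch assumes NumPy and takes the GP posterior mean vector and covariance matrix over the candidates as given; the function name, the noise variance default, and the use of antithetic samples are choices of this illustration.

```python
import numpy as np


def knowledge_gradient(mu, cov, noise_var=1e-2, n_fantasies=200, seed=0):
    """Monte Carlo KG for every candidate in a discrete set.

    mu:  posterior mean over candidates, shape (n,)
    cov: posterior covariance over candidates, shape (n, n)
    """
    rng = np.random.default_rng(seed)
    mu = np.asarray(mu, dtype=float)
    cov = np.asarray(cov, dtype=float)
    # Antithetic standard-normal draws (z and -z) reduce Monte Carlo noise.
    half = rng.standard_normal(n_fantasies // 2)
    z = np.concatenate([half, -half])
    current_best = mu.max()
    kg = np.empty(len(mu))
    for i in range(len(mu)):
        # Analytic one-step update of the posterior mean after observing candidate i:
        # mu_new = mu + cov[:, i] / sqrt(cov[i, i] + noise_var) * z
        scale = cov[:, i] / np.sqrt(cov[i, i] + noise_var)
        fantasy_means = mu[None, :] + z[:, None] * scale[None, :]
        # KG value = average(new optimum) - current optimum
        kg[i] = fantasy_means.max(axis=1).mean() - current_best
    return kg
```

Candidates that are already well characterized (near-zero posterior variance) receive a KG close to zero, while uncertain candidates whose mean is near the current best score highest. That is the behavior summarized in the comparison table for KG.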

Protocol 3.2: Calibrating the Exploration-Exploitation Trade-off

Objective: To empirically determine an effective acquisition function parameter (e.g., ξ for EI, β for UCB) for a specific protein engineering problem. Procedure:

- Select a historical dataset or create a simulated benchmark (e.g., using a known epistatic landscape model).

- Define a BO loop (as in Protocol 3.1) and run it to completion for a range of parameter values (e.g., ξ = [0.001, 0.01, 0.05, 0.1, 0.2]).

- Track the intermediate regret (difference between the best possible and best found) at each iteration.

- Plot average regret trajectories across multiple random initializations. The parameter yielding the fastest decay of regret is preferred for similar future campaigns.
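The regret bookkeeping in this calibration protocol is simple to implement. In the sketch below, "fastest decay" is approximated by the smallest area under the mean regret curve, which is an assumption of this illustration; the function names are hypothetical.

```python
import numpy as np


def simple_regret(observed, f_best_possible):
    """Regret after each iteration: best possible value minus best value found so far."""
    return f_best_possible - np.maximum.accumulate(np.asarray(observed, dtype=float))


def mean_regret(runs, f_best_possible):
    """Average regret trajectory over several runs (random initializations) of equal length."""
    return np.mean([simple_regret(r, f_best_possible) for r in runs], axis=0)


def pick_parameter(results, f_best_possible):
    """results maps a parameter value (e.g., xi) to its list of runs.

    Returns the parameter with the smallest area under the mean regret curve,
    together with the per-parameter areas.
    """
    area = {p: float(mean_regret(runs, f_best_possible).sum()) for p, runs in results.items()}
    return min(area, key=area.get), area
```

Each run here is the sequence of assay values in the order the BO loop selected them, so the same function works for historical datasets and simulated benchmarks.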

Visualizations

Title: Bayesian Optimization Cycle for Protein Engineering

Title: Logical Data Flow in Bayesian Optimization

The Scientist's Toolkit

Table 3: Key Research Reagent Solutions & Computational Tools

| Item / Solution | Function in BO for Protein Engineering | Example / Note |

|---|---|---|

| Gaussian Process (GP) Software | Core surrogate model for predicting protein fitness and uncertainty. | BoTorch (PyTorch-based), GPyTorch, scikit-learn. Essential for flexible, high-performance modeling. |

| Bayesian Optimization Library | Provides implementations of acquisition functions and optimization loops. | BoTorch (supports EI, UCB, KG, batch BO), Ax, Dragonfly. Enables rapid experimental design. |

| Sequence Encoding | Transforms protein sequences into numerical features for the GP model. | One-hot, AAIndex, ESM-2 embeddings, UniRep. Continuous embeddings dramatically improve model accuracy. |

| Oligo Pool Synthesis | Enables high-throughput construction of variant libraries for initial design and batch BO. | Twist Bioscience, Agilent, Custom Array. Cost-effective for 10³-10⁵ variant libraries. |

| High-Throughput Assay | Generates quantitative fitness data for training the surrogate model. | FACS (fluorescence), microfluidic droplets, plate-based enzymatic assays. Throughput must match BO cycle pace. |

| Cloud/High-Performance Compute | Accelerates acquisition function optimization and GP fitting, especially for KG. | AWS, GCP, or local clusters. Necessary for complex models or large sequence spaces (>10⁴ candidates). |

1. Introduction within a Bayesian Optimization Thesis

This application note details the integration of experimental cycles and data flow essential for the successful implementation of Bayesian optimization (BO) in protein engineering. BO requires tightly coupled Design-Build-Test-Learn (DBTL) cycles, where each iteration provides quantitative data to update a probabilistic model, guiding the selection of subsequent protein variants. This document provides specific protocols and frameworks to establish this closed-loop, data-driven experimentation.

2. Key Quantitative Parameters for Bayesian Optimization Loops

Table 1 summarizes typical quantitative parameters that define and constrain a high-throughput protein engineering DBTL cycle suitable for BO.

Table 1: Quantitative Parameters for a High-Throughput DBTL Cycle

| Parameter | Typical Range/Value | Impact on BO Cycle |

|---|---|---|

| Design: Library Size per Iteration | 96 - 10,000 variants | Balances exploration vs. exploitation; limited by build/test capacity. |

| Build: Cloning Efficiency | >85% success rate | Low efficiency reduces effective library size and introduces noise. |

| Test: Assay Throughput | 10^3 - 10^7 measurements/day | Determines cycle iteration speed and feasible design space size. |

| Test: Assay Noise (CV) | <15% coefficient of variation | High noise impedes model accuracy and convergence. |

| Learn: Model Training Time | Minutes to hours | Must be shorter than Build/Test duration to avoid bottlenecks. |

| Cycle Turnaround Time | 1 day - 3 weeks | Directly determines the total project timeline for n iterations. |

3. Detailed Protocols for Core DBTL Modules

Protocol 3.1: Design – Principled Library Design for Initial BO Training Set

Objective: Generate a diverse, information-rich initial variant library (n=96-384) for first-model training. Materials: Parent protein sequence, MSA data, structure (if available), library design software (e.g., SCHEMA, Rosetta, custom Python scripts). Procedure:

- Define Sequence Space: Identify mutable positions (e.g., active site residues, flexible loops).

- Compute Sequence Features: Use embeddings (e.g., from ESM-2 model) or biophysical proxies (e.g., hydrophobicity, charge).

- Apply Experimental Design: Use Maximal Diversity selection or Latin Hypercube Sampling in the feature space to choose initial variants.

- Filter & Finalize: Filter designs for predicted stability (using tools like FoldX or DeepDDG) and synthesize gene fragments.
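One way to realize the "Maximal Diversity selection" step is a greedy max-min (farthest-point) selection in feature space. The sketch below assumes NumPy and that each candidate has already been converted to a feature vector (for example an embedding or biophysical descriptors); the function name and the choice of Euclidean distance are assumptions.

```python
import numpy as np


def maxmin_select(features, n_select, start=0):
    """Greedy max-min selection: repeatedly add the candidate farthest from those chosen.

    features: array of shape (n_candidates, n_features)
    start:    index of the first pick (e.g., the parent sequence)
    """
    X = np.asarray(features, dtype=float)
    if n_select > len(X):
        raise ValueError("n_select exceeds the number of candidates")
    chosen = [start]
    # Distance from every candidate to its nearest already-chosen point.
    min_dist = np.linalg.norm(X - X[start], axis=1)
    while len(chosen) < n_select:
        nxt = int(np.argmax(min_dist))
        chosen.append(nxt)
        min_dist = np.minimum(min_dist, np.linalg.norm(X - X[nxt], axis=1))
    return chosen
```

The stability filter from the final step would be applied to the candidate pool before calling the selection, so that the diverse set is drawn only from designs expected to fold.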

Protocol 3.2: Build – High-Throughput Cloning & Expression in Microtiter Plates

Objective: Reliably construct and express 96-384 protein variants in parallel. Materials: PCR thermocycler, liquid handler, 96-well deep-well plates, competent E. coli (e.g., BL21(DE3)), auto-induction media, plasmid purification kits. Procedure:

- Golden Gate Assembly: Assemble variant gene fragments into expression vector in a 10 µL reaction in 96-well PCR plate. Cycle: 37°C (5 min), 16°C (5 min), repeat 30x; 60°C (5 min).

- Transformation: Transfer 2 µL reaction to 50 µL chemically competent cells. Heat shock at 42°C for 30 sec, recover in SOC for 1 hour.

- Expression: Inoculate 1 mL auto-induction media in 96-deep-well plate. Incubate at 37°C, 900 rpm for 6 hr, then 18°C, 900 rpm for 18 hr.

- Lysate Preparation: Pellet cells, lyse via chemical (BugBuster) or enzymatic (lysozyme) method, clarify by centrifugation. Supernatant is crude lysate for testing.

Protocol 3.3: Test – Coupled Enzymatic Assay with Fluorescence Readout

Objective: Quantify activity of thousands of variants from crude lysates. Materials: 384-well black assay plates, plate reader, assay buffer, fluorogenic substrate, lysates from 3.2. Procedure:

- Normalize Protein: Dilute lysates to a standard total protein concentration (e.g., using Bradford assay).

- Assay Setup: In a 384-well plate, combine 25 µL diluted lysate with 25 µL 2X substrate/buffer solution. Include no-enzyme and wild-type controls.

- Kinetic Read: Immediately place plate in pre-warmed (30°C) plate reader. Measure fluorescence (Ex/Em appropriate to substrate) every 60 sec for 30 min.

- Data Processing: Calculate initial velocity (V0) from linear slope of fluorescence vs. time. Normalize V0 to the wild-type control on each plate. Report as normalized activity ± standard deviation (from technical triplicates).
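The data processing step can be sketched as follows, assuming NumPy. The number of early time points treated as the linear phase (`n_points`) is an assumption that should be checked against the actual traces; the function names are hypothetical.

```python
import numpy as np


def initial_velocity(times_s, fluorescence, n_points=10):
    """V0 as the slope of a straight-line fit to the first n_points of the kinetic trace."""
    t = np.asarray(times_s, dtype=float)[:n_points]
    f = np.asarray(fluorescence, dtype=float)[:n_points]
    slope, _intercept = np.polyfit(t, f, 1)
    return float(slope)


def normalized_activity(times_s, variant_traces, wt_traces, n_points=10):
    """Mean and standard deviation of variant V0 relative to the plate's wild-type V0.

    variant_traces: technical replicate traces for one variant
    wt_traces:      wild-type control traces from the same plate
    """
    wt_v0 = np.mean([initial_velocity(times_s, tr, n_points) for tr in wt_traces])
    rel = np.array([initial_velocity(times_s, tr, n_points) for tr in variant_traces]) / wt_v0
    return float(rel.mean()), float(rel.std(ddof=1))
```

The per-variant standard deviation returned here can be passed to the surrogate model as a noise estimate instead of assuming one noise level for the whole plate.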

4. Data Flow & Integration Diagram

Title: Bayesian Optimization DBTL Cycle Flow

5. The Scientist's Toolkit: Research Reagent Solutions

Table 2: Key Reagents and Materials for High-Throughput Protein Engineering

| Item | Function/Application |

|---|---|

| NGS-Based Gene Library Pools | Provides diverse, pre-synthesized variant sequences for initial library construction. |

| Golden Gate Assembly Mix | Enables efficient, one-pot, scarless assembly of multiple DNA fragments. |

| Chemically Competent E. coli (96-well format) | Allows parallel, high-efficiency transformation of assembly reactions. |

| Auto-induction Media | Simplifies expression by inducing protein production automatically at high cell density. |

| Lytic Enzymes (e.g., ReadyLyse) | Enables rapid, uniform cell lysis in 96/384-well format without sonication. |

| Fluorogenic/Chromogenic Substrates | Provides sensitive, high-throughput compatible readout for enzyme activity. |

| Bradford or BCA Protein Assay Kit (microplate) | Normalizes protein concentration across variant lysates. |

| 384-Well Black/Clear Bottom Plates | Standardized format for assays and compatible with automation and plate readers. |

Application Note: Bayesian Optimization in Protein Engineering

Bayesian optimization (BO) is an efficient, sequential model-based approach for the global optimization of expensive black-box functions. In protein engineering, the "function" is a performance metric (e.g., activity, thermostability, binding affinity), and the "input" is the protein sequence or structure. BO builds a probabilistic surrogate model (typically a Gaussian Process) of the objective function and uses an acquisition function to decide which variant to test next, balancing exploration and exploitation. This dramatically reduces the number of experimental iterations needed to find optimal variants compared to random screening or directed evolution.

Key Quantitative Data from Recent Studies

Table 1: Performance Comparison of Optimization Methods in Protein Engineering Case Studies

| Protein Class | Target Property | Optimization Method | Library Size Tested | Fold Improvement | Key Reference (Year) |

|---|---|---|---|---|---|

| Enzyme (PETase) | PET Depolymerization Activity | Bayesian Optimization | 72 | 2.5x | Bullock et al. (2023) |

| Enzyme (Amidase) | Thermostability (Tm) | Model-Guided DE (BO-Informed) | ~500 | +15°C | Rozman et al. (2024) |

| Antibody (Anti-IL-6) | Binding Affinity (KD) | Bayesian Optimization with Deep Surrogate | 58 | 20x (5 pM KD) | Yang et al. (2023) |

| Antibody (scFv) | Developability (Viscosity) | Multi-Objective BO | 45 | 60% reduction | Tiller et al. (2024) |

| Membrane Protein (GPCR) | Signal Bias (Beta-arrestin vs G-protein) | Sequence-based BO | 31 | 4:1 Bias Ratio | Santos et al. (2024) |

| Membrane Protein (Ion Channel) | Expression Yield in Yeast | Structure-informed BO | 96 | 12-fold yield increase | Vega et al. (2023) |

Protocols

Protocol 1: Bayesian Optimization for Enzyme Thermostability

Objective: To increase the melting temperature (Tm) of a hydrolase enzyme using BO-guided site-saturation mutagenesis.

Research Reagent Solutions & Essential Materials:

| Item | Function |

|---|---|

| NEB Gibson Assembly Master Mix | For seamless assembly of mutagenic DNA fragments. |

| Phusion High-Fidelity DNA Polymerase | High-fidelity PCR for generating mutagenesis fragments. |

| E. coli BL21(DE3) Competent Cells | Protein expression host. |

| Ni-NTA Superflow Cartridge | Immobilized metal affinity chromatography for His-tagged protein purification. |

| Prometheus Panta nanoDSF Grade Capillaries | For high-throughput nano Differential Scanning Fluorimetry (nanoDSF) to determine Tm. |

| PyroDG Fluorescent Dye | Thermofluor dye for plate-based thermal shift assays (optional). |

| Custom Python BO Environment (e.g., BoTorch, Ax Platform) | Software platform for running the Bayesian optimization loop. |

Methodology:

- Define Sequence Space: Select 4-6 critical positions from structural analysis or consensus sequences for mutagenesis.

- Initial Library Construction: Generate an initial training set of 20-30 variants covering a diverse set of mutations at selected positions using site-saturation mutagenesis and Gibson assembly. Transform into expression host.

- High-Throughput Screening: Express variants in 96-deep well plates. Purify via a simplified His-tag protocol (e.g., magnetic beads). Measure Tm using a nanoDSF instrument in 96-well format.

- BO Loop Initialization: Input variant sequences (one-hot encoded or physicochemical property encoded) and corresponding Tm values into the BO framework. Configure a Gaussian Process model with a Matérn kernel.

- Iterative Rounds: a. The surrogate model predicts Tm and uncertainty for all possible single/double mutants in the defined space. b. The acquisition function (e.g., Expected Improvement) selects the 4-8 most promising variants to test next. c. Experimentally construct and screen these selected variants. d. Update the model with new data. Repeat steps a-d for 3-5 rounds.

- Validation: Express and purify top 3-5 hits from final model at larger scale (e.g., 50 mL culture). Characterize Tm via full thermal denaturation using CD spectroscopy and validate functional activity.
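The candidate set that the surrogate scores in each round ("all possible single/double mutants in the defined space") and its one-hot encoding can be generated with the standard library alone. The wild-type sequence and position indices in the example are hypothetical, and positions are 0-based.

```python
from itertools import combinations, product

AA = "ACDEFGHIKLMNPQRSTVWY"


def enumerate_mutants(wild_type, positions, max_mutations=2):
    """All variants with 1..max_mutations substitutions at the chosen positions."""
    variants = []
    for m in range(1, max_mutations + 1):
        for pos_combo in combinations(positions, m):
            # At each mutated position, allow the 19 non-wild-type residues.
            choices = [[aa for aa in AA if aa != wild_type[p]] for p in pos_combo]
            for subs in product(*choices):
                seq = list(wild_type)
                for p, aa in zip(pos_combo, subs):
                    seq[p] = aa
                variants.append("".join(seq))
    return variants


def one_hot(seq, positions):
    """Flat one-hot vector (20 entries per mutable position) for one variant."""
    vec = [0.0] * (len(positions) * len(AA))
    for i, p in enumerate(positions):
        vec[i * len(AA) + AA.index(seq[p])] = 1.0
    return vec


if __name__ == "__main__":
    wt = "MKTAYIAK"              # hypothetical wild-type fragment
    sites = [1, 3, 4, 6]         # hypothetical mutable positions (0-based)
    library = enumerate_mutants(wt, sites)
    print(len(library), "single and double mutants")
```

With k mutable positions there are 19k single mutants and 361 × k(k-1)/2 double mutants, so four positions give 76 + 2166 = 2242 candidates, small enough to score exhaustively with a GP each round.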

Protocol 2: Optimizing Antibody Affinity with Deep Kernel Learning

Objective: Improve the binding affinity (KD) of a therapeutic antibody candidate by optimizing CDR residues.

Research Reagent Solutions & Essential Materials:

| Item | Function |

|---|---|

| Twist Bioscience Varicon Library Synthesis | For synthesis of defined variant libraries based on BO suggestions. |

| Yeast Surface Display System (e.g., pYD1 vector) | For coupling antibody variant phenotype to its genotype for sorting. |

| Anti-c-Myc Alexa Fluor 647 Conjugate | Labels displayed scFv for expression normalization. |

| Biotinylated Antigen & Streptavidin-PE | Labels antigen binding for affinity sorting. |

| BD FACS Aria III Cell Sorter | Fluorescence-activated cell sorting to isolate high-affinity binders. |

| Octet RED96e Biolayer Interferometry (BLI) System | Label-free, high-throughput measurement of binding kinetics for purified hits. |

| Precog BO Software with Evoformer Kernel | Integrates sequence embeddings from protein language models (e.g., ESM-2) into the Gaussian Process. |

Methodology:

- Library Design: Use an antibody-specific language model to generate sequence embeddings for the wild-type and potential variants in CDR-H3 and CDR-L3.

- Initial Data Generation: Clone and express a small, diverse initial library (~30 variants) via yeast surface display. Measure binding via flow cytometry at a single antigen concentration. Estimate relative KD from fluorescence ratios.

- Model Setup: Configure a BO loop using a deep kernel that combines ESM-2 embeddings with a standard Matérn kernel. The objective is to minimize the predicted KD.

- Affinity Maturation Cycle: a. In-Silico Prediction: The model evaluates millions of in-silico variants. Use Thompson Sampling to select a batch of 10-15 sequences predicted to have the highest affinity. b. Library Synthesis: Synthesize the oligonucleotide pool encoding the selected variants and clone into the display vector. c. Selection: Perform 1-2 rounds of FACS, gating for the top 0.5-1% of binders at low antigen concentrations. d. Sequence Analysis: Isolate plasmid DNA from sorted pools, sequence, and obtain individual clones. e. Characterization: Express individual hits and measure binding kinetics via BLI using Streptavidin (SA) biosensors loaded with biotinylated antigen. f. Model Update: Feed new sequence-KD pairs back into the model. Repeat for 2-3 cycles.

- Lead Characterization: Produce lead antibody as full IgG in mammalian cells (e.g., Expi293F). Perform comprehensive biophysical and functional assays.
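The Thompson Sampling step of the cycle above can be sketched as follows. This is an illustration under stated assumptions, not the protocol's software: the embeddings are random placeholders standing in for language-model embeddings, the "deep kernel" is reduced to a fixed Matérn kernel on those embeddings (no learned network), the length scale and noise level are arbitrary, and the candidate pool is a few hundred sequences because joint posterior sampling does not scale to millions without approximation.

```python
import math
import numpy as np

def matern52(A, B, length_scale=4.0):
    """Matern 5/2 kernel on embedding vectors (unit prior variance)."""
    sq = (A**2).sum(1)[:, None] + (B**2).sum(1)[None, :] - 2 * A @ B.T
    r = np.sqrt(np.maximum(sq, 0.0)) / length_scale
    return (1 + math.sqrt(5) * r + 5 * r**2 / 3) * np.exp(-math.sqrt(5) * r)

def thompson_batch(emb_train, log_kd_train, emb_cand, batch_size=12, noise=0.05, seed=0):
    """Pick batch_size distinct candidates; each is the minimizer of one posterior draw."""
    rng = np.random.default_rng(seed)
    y = (log_kd_train - log_kd_train.mean()) / log_kd_train.std()

    K = matern52(emb_train, emb_train) + noise * np.eye(len(emb_train))
    K_s = matern52(emb_cand, emb_train)
    K_inv_Ks = np.linalg.solve(K, K_s.T)                 # shape (n_train, n_cand)
    mu = K_inv_Ks.T @ y                                  # posterior mean
    cov = matern52(emb_cand, emb_cand) - K_s @ K_inv_Ks  # posterior covariance

    # Square root via eigendecomposition; clip round-off negatives to zero.
    w, V = np.linalg.eigh((cov + cov.T) / 2)
    root = V * np.sqrt(np.clip(w, 0.0, None))

    chosen = []
    while len(chosen) < batch_size:
        draw = mu + root @ rng.standard_normal(len(mu))  # one joint posterior sample
        # Lowest sampled log KD = tightest predicted binder not already selected.
        best = next(int(i) for i in np.argsort(draw) if int(i) not in chosen)
        chosen.append(best)
    return chosen

# Placeholder data: 30 measured variants, 300 untested candidates, 16-D embeddings.
rng = np.random.default_rng(1)
embeddings = rng.normal(size=(330, 16))
log_kd = embeddings @ rng.normal(0, 0.3, 16) + rng.normal(0, 0.1, 330)
batch = thompson_batch(embeddings[:30], log_kd[:30], embeddings[30:])
print(batch)
```

Working in log KD keeps the target roughly symmetric, and because each pick comes from a different posterior draw, the batch mixes confident predictions with uncertain ones instead of clustering around a single predicted optimum.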

Protocol 3: Engineering Membrane Protein Expression and Stability

Objective: Enhance the functional expression yield of a human G protein-coupled receptor (GPCR) in Pichia pastoris.

Research Reagent Solutions & Essential Materials:

| Item | Function |

|---|---|

| pPICZ B Pichia Expression Vector | Methanol-inducible vector for high-level membrane protein expression. |

| PichiaPink Expression System | A series of protease-deficient P. pastoris strains for enhanced protein production. |

| n-Dodecyl-β-D-Maltopyranoside (DDM) | Mild detergent for solubilizing GPCRs from membrane fractions. |

| LMNG/CHS Detergent Mixture | For stabilizing solubilized GPCRs during purification. |

| Cygnus BRET GPCR Assay Kit | Functional assay to confirm receptor folding and signaling. |

| Anti-Flag M1 Affinity Gel | For immunoaffinity purification of Flag-tagged receptor. |

| AlphaFold2 or RosettaMP | Structural modeling tools to inform mutation constraints for the BO search space. |

Methodology:

- Construct Design: Generate a structural model of the target GPCR. Identify 8-10 positions in transmembrane helices prone to misfolding or involved in stability "hotspots."

- Baseline Expression: Clone wild-type gene into pPICZ B, transform into PichiaPink Strain 1. Induce with methanol, solubilize membranes with DDM, and quantify functional receptor yield via a ligand-binding assay or Western blot (baseline = 100%).

- High-Throughput Microplate Screen: Create mutant libraries by site-saturation at selected positions. Express in 24-deep well plates. Perform a simplified purification using magnetic Anti-Flag beads directly from solubilized microsomal fractions.

- Quantitative Output: Use a fluorescence-based thermal stability assay (FSEC-TS) in a plate reader or a dot-blot with conformation-sensitive antibody to determine relative stability and yield for each variant.

- Structure-Informed BO: a. Encode variants using features from the AlphaFold2 model (e.g., pLDDT, residue depth, predicted interactions). b. Train initial Gaussian Process model on first-round data (~50 variants). c. Use a noise-aware Expected Improvement acquisition function to account for assay variability. d. Each round, select 12 variants predicted to maximize functional yield. Iterate for 3 rounds.

- Scale-Up and Validation: Express top BO-identified variants in 1L cultures. Purify using a two-step protocol (M1 affinity + size exclusion chromatography). Validate stability (e.g., long-term thermostability in detergent) and function using a BRET-based signaling assay.
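A simple way to make Expected Improvement noise-aware, as step c above calls for, is sketched below. It is a plug-in simplification, not the integrated noisy EI found in libraries such as BoTorch: the assay noise is estimated from replicate wells, and the incumbent is the best posterior mean at tested variants rather than the best raw measurement. The three features, the RBF kernel, its length scale, and the synthetic yields are assumptions made for illustration.

```python
import math
import numpy as np

def rbf(A, B, length_scale=1.5):
    """Squared-exponential kernel (chosen here for brevity)."""
    sq = (A**2).sum(1)[:, None] + (B**2).sum(1)[None, :] - 2 * A @ B.T
    return np.exp(-0.5 * np.maximum(sq, 0.0) / length_scale**2)

def noisy_ei(X_obs, y_reps, X_cand):
    """Plug-in noise-aware EI. y_reps has shape (n_variants, n_replicates)."""
    y_bar = y_reps.mean(axis=1)
    # Pooled within-variant variance, divided by replicate count = variance of each mean.
    noise_var = y_reps.var(axis=1, ddof=1).mean() / y_reps.shape[1]

    scale = y_bar.std()
    y = (y_bar - y_bar.mean()) / scale
    K0 = rbf(X_obs, X_obs)
    K = K0 + (noise_var / scale**2) * np.eye(len(X_obs))
    L = np.linalg.cholesky(K)
    alpha = np.linalg.solve(L.T, np.linalg.solve(L, y))

    # Incumbent: best denoised estimate, so a single lucky well cannot set the bar.
    incumbent = (K0 @ alpha).max()

    K_s = rbf(X_cand, X_obs)
    mu = K_s @ alpha
    v = np.linalg.solve(L, K_s.T)
    sigma = np.sqrt(np.maximum(1.0 - (v**2).sum(axis=0), 1e-12))

    z = (mu - incumbent) / sigma
    cdf = 0.5 * (1 + np.vectorize(math.erf)(z / math.sqrt(2)))
    pdf = np.exp(-0.5 * z**2) / math.sqrt(2 * math.pi)
    return scale * ((mu - incumbent) * cdf + sigma * pdf), noise_var

# Placeholder data: 50 first-round variants, 3 replicate wells each, 3 features.
rng = np.random.default_rng(1)
X = rng.normal(size=(50, 3))
true_yield = 100 + 15 * np.sin(X[:, 0]) - 10 * X[:, 1] ** 2
y_reps = true_yield[:, None] + rng.normal(0, 8, size=(50, 3))
X_cand = rng.normal(size=(400, 3))

ei, noise_var = noisy_ei(X, y_reps, X_cand)
next_round = np.argsort(-ei)[:12]   # 12 variants for the next round
print(next_round, round(float(noise_var), 2))
```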

Visualizations

Bayesian Optimization Workflow for Protein Engineering

BO Core and Experimental Feedback Loop

Overcoming Challenges: Practical Tips for Optimizing Your BO Experiments

In Bayesian optimization (BO) for protein engineering, managing high-dimensional parameter spaces is the principal bottleneck. Protein sequence-function landscapes are astronomically large: for sequence length L and a 20-letter amino acid alphabet, the search space contains 20^L sequences. Direct application of BO over raw sequence encodings becomes impractical beyond a few variable residues. This application note details three complementary strategies (learned embeddings, dimensionality reduction, and active subspaces) that project this intractable space onto a lower-dimensional representation where BO can be conducted efficiently, thereby accelerating the design of novel therapeutic proteins, enzymes, and biologics.
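To make the 20^L scaling concrete, the short calculation below compares the search space with an assumed screening capacity of 10^8 variants per campaign. The capacity figure and the chosen values of L are illustrative assumptions.

```python
SCREEN_CAPACITY = 10**8  # assumed ultra-high-throughput capacity, variants per campaign

def search_space(n_positions, alphabet=20):
    """Number of distinct sequences when n_positions are fully randomized."""
    return alphabet ** n_positions

for n_positions in (4, 6, 10, 50):
    size = search_space(n_positions)
    coverage = min(1.0, SCREEN_CAPACITY / size)
    print(f"L={n_positions:>2}: 20^L = {size:.3e}, screenable fraction = {coverage:.3e}")
```

Six fully randomized positions (6.4 x 10^7 sequences) still fit within such a screen, while ten positions (about 10^13) already exceed it by five orders of magnitude.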

Core Methodologies: Protocols and Application Notes

Learned Embeddings for Sequence Representation

Protocol 2.1.A: Generating Unsupervised Protein Sequence Embeddings

Objective: Transform discrete, high-dimensional one-hot encoded protein sequences into continuous, dense, and semantically meaningful low-dimensional vectors.

Materials & Reagents:

- Hardware: GPU cluster (e.g., NVIDIA A100).

- Software: Python, PyTorch/TensorFlow, HuggingFace `transformers` library, `biopython`.

- Data: Multiple Sequence Alignment (MSA) of target protein family (e.g., from Pfam, UniRef).

Procedure:

- Data Curation: Gather >10,000 homologous sequences. Perform quality filtering (remove fragments, atypical lengths) and create a balanced MSA using MAFFT or ClustalOmega.

- Model Selection & Training:

  - For contextual embeddings, fine-tune a pre-trained protein language model (e.g., ESM-2, ProtBERT) on your MSA. Use a masked language modeling objective for 5-10 epochs.

  - For non-contextual embeddings, train a shallow neural network (e.g., residue2vec) to predict residues in a sliding window.

- Embedding Extraction: For each sequence in your engineered library, pass it through the final trained model. For transformer models, extract the average hidden state from the final layer across all residue positions to obtain a single, fixed-dimensional vector (e.g., 1280D for the 650M-parameter ESM-2 model or 1024D for ProtBERT).

- Validation: Apply UMAP/t-SNE on embeddings and color by known functional attributes (e.g., thermostability bin). Validate cluster separation correlates with function.
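The averaging in the Embedding Extraction step can be sketched with NumPy alone. The array `hidden_states` below is a random placeholder for the final-layer output of a language model, and `attention_mask` marks real residues versus padding; with a real tokenizer, special tokens (for example start and end markers) would normally be masked out as well.

```python
import numpy as np

def mean_pool(hidden_states, attention_mask):
    """Average per-residue vectors into one vector per sequence, ignoring padding.

    hidden_states:  (batch, seq_len, dim) final-layer outputs
    attention_mask: (batch, seq_len), 1 for real residues and 0 for padding
    """
    mask = attention_mask[:, :, None].astype(hidden_states.dtype)
    summed = (hidden_states * mask).sum(axis=1)
    counts = np.clip(mask.sum(axis=1), 1.0, None)  # guard against empty rows
    return summed / counts

# Placeholder batch: two sequences padded to length 5, embedding dimension 4.
rng = np.random.default_rng(0)
hidden_states = rng.normal(size=(2, 5, 4))
attention_mask = np.array([[1, 1, 1, 1, 1],
                           [1, 1, 1, 0, 0]])   # second sequence has 3 residues
pooled = mean_pool(hidden_states, attention_mask)
print(pooled.shape)
```

Masking matters because padded positions would otherwise pull the embeddings of short sequences toward the padding vector, which introduces a length artifact into the GP input space.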

Table 1: Comparison of Protein Embedding Methods for BO

| Method | Dimensionality (Output) | Training Data Need | Context-Aware | Computational Cost | Suitability for BO |

|---|---|---|---|---|---|

| One-Hot Encoding | L x 20 | None | No | Low | Poor (Too High-D) |

| ESM-2 (Pre-trained) | 320 - 5120 (depends on checkpoint) | Minimal (Zero-shot possible) | Yes | Moderate (Inference) | Good for Transfer |

| Fine-tuned ESM-2 | 320 - 5120 (depends on checkpoint) | Moderate (~10k seqs) | Yes | High (Fine-tuning) | Excellent |

| Autoencoder | 32 - 256 | Large (>50k seqs) | No | High (Training) | Good for Unsupervised |

Diagram 1: Workflow for using learned embeddings in BO.

Dimensionality Reduction for Experimental Landscapes

Protocol 2.2.B: Applying UMAP for Visualization and Pre-BO Projection

Objective: Reduce the dimensionality of experimental feature spaces (e.g., from high-throughput screening assays) to 2-3 dimensions for visualization and initial BO proxy model training.

Materials & Reagents:

- Software: `umap-learn`, `scikit-learn`, `pandas`, `numpy`.

- Data: Feature matrix (n_samples x n_features) from assay (e.g., fluorescence, binding affinity, growth rate).

Procedure:

- Feature Scaling: Standardize features to zero mean and unit variance using `StandardScaler`.

- Parameter Selection: Key hyperparameters are `n_neighbors` (~15-50, balances local/global structure) and `min_dist` (~0.1-0.5, controls cluster tightness).

- UMAP Fitting: Instantiate `UMAP(n_components=3, n_neighbors=20, min_dist=0.1, random_state=42)`. Fit and transform your data.

- BO Integration: Use the 3D UMAP coordinates as a simplified input space for a Gaussian Process (GP) model. The acquisition function (e.g., EI) proposes points in this latent space. A separate mapping model (e.g., a feed-forward network) must be trained to decode latent points back to original sequence space for experimental validation.
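The scaling and projection steps above can be sketched as follows. The feature matrix is random placeholder data, and standardization is written out in NumPy (equivalent to `StandardScaler`) to keep the example dependency-light. The PCA fallback used when `umap-learn` is not installed is an assumption added for illustration so that the sketch still runs; it is not part of the protocol.

```python
import numpy as np

def standardize(X):
    """Zero mean and unit variance per feature (population std, as StandardScaler uses)."""
    std = X.std(axis=0)
    return (X - X.mean(axis=0)) / np.where(std > 0, std, 1.0)

def project_3d(X, random_state=42):
    """Return (coordinates, method): a 3-D projection of the standardized features."""
    X_scaled = standardize(X)
    try:
        import umap  # provided by the umap-learn package
        reducer = umap.UMAP(n_components=3, n_neighbors=20,
                            min_dist=0.1, random_state=random_state)
        return reducer.fit_transform(X_scaled), "umap"
    except ImportError:
        # Illustrative fallback only: linear PCA through the SVD.
        _, _, Vt = np.linalg.svd(X_scaled, full_matrices=False)
        return X_scaled @ Vt[:3].T, "pca"

# Placeholder assay features: 120 variants x 12 readouts.
rng = np.random.default_rng(0)
features = rng.normal(size=(120, 12))
Z, method = project_3d(features)
print(method, Z.shape)
```

The rows of `Z` are the latent coordinates that the GP surrogate would take as inputs. Because UMAP has no exact inverse, restricting the acquisition function to the latent coordinates of already enumerated candidates is one way to sidestep the decoding model mentioned in the last step.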

Active Subspaces for Biophysical Models

Protocol 2.3.C: Identifying Active Subspaces from Biophysical Simulations

Objective: Discover a low-dimensional linear subspace of input parameters (e.g., force field terms, structural descriptors) that dominantly influences a scalar output (e.g., protein folding ΔG, binding energy).

Materials & Reagents:

- Software: Custom Python with `numpy`, `scipy`, `pyro` (for Bayesian AS), simulation software (GROMACS, Rosetta).

- Data: Ensemble of input parameter sets and corresponding simulated output values.

Procedure:

- Gradient Sampling: For `M` input parameter sets `x_i`, compute the gradient `∇f(x_i)` of the simulation output. Use adjoint methods or finite differences.

- Covariance Matrix Construction: Compute the uncentered gradient covariance matrix: `C = (1/M) * Σ_i (∇f(x_i) ∇f(x_i)^T)`.

- Spectral Decomposition: Perform eigenvalue decomposition: `C = W Λ W^T`. Eigenvectors `w_1, w_2, ...` corresponding to the largest eigenvalues define the active subspace.

- Dimensionality Selection: Plot eigenvalues (scree plot). The gap indicates the active subspace dimension `r`. Project original inputs `x` onto this subspace: `y = W_r^T * x`.

- Surrogate Modeling: Build a GP surrogate model `g(y) ≈ f(x)` in the `r`-dimensional active subspace for ultra-efficient BO.
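The procedure above can be sketched end to end in NumPy. The function `simulate` is a hypothetical stand-in for an expensive simulation; it is built to vary along a single hidden direction `a` so that the recovered subspace can be checked. The log-scale gap rule used to pick `r` is one simple heuristic among several.

```python
import numpy as np

rng = np.random.default_rng(0)
DIM, M = 10, 200
a = rng.normal(size=DIM)
a /= np.linalg.norm(a)   # hidden direction the toy output depends on

def simulate(x):
    """Hypothetical simulation output; varies only along direction a."""
    t = x @ a
    return np.sin(t) + 0.5 * t**2

def finite_diff_grad(func, x, h=1e-5):
    """Central finite-difference gradient of func at x."""
    grad = np.zeros_like(x)
    for j in range(len(x)):
        step = np.zeros_like(x)
        step[j] = h
        grad[j] = (func(x + step) - func(x - step)) / (2 * h)
    return grad

def active_subspace(grads):
    """Eigen-decompose C = (1/M) sum_i grad_i grad_i^T and choose r at the largest gap."""
    C = grads.T @ grads / len(grads)
    eigvals, W = np.linalg.eigh(C)                 # returned in ascending order
    order = np.argsort(eigvals)[::-1]
    eigvals, W = eigvals[order], W[:, order]
    floor = eigvals[0] * 1e-12                     # treat anything below as numerical zero
    log_ev = np.log10(np.maximum(eigvals, floor))
    r = int(np.argmax(log_ev[:-1] - log_ev[1:])) + 1
    return eigvals, W, r

X = rng.normal(size=(M, DIM))                                  # M sampled parameter sets
grads = np.array([finite_diff_grad(simulate, x) for x in X])   # gradient sampling
eigvals, W, r = active_subspace(grads)
Y = X @ W[:, :r]                                               # y = W_r^T x for every sample

print("active dimension r =", r)
print("alignment |w_1 . a| =", abs(W[:, 0] @ a))
```

A GP surrogate is then fitted on `Y` instead of `X`, which in this toy case reduces a 10-dimensional input to a single coordinate.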

Table 2: Dimensionality Reduction Techniques for Protein Engineering BO

| Technique | Model Type | Output Dim. (Typical) | Preserves | Key Assumption | Best For |

|---|---|---|---|---|---|

| PCA | Linear | 2-10 | Global Variance | Linear correlations | Biophysical descriptors |

| UMAP | Non-linear | 2-3 | Local/Global Structure | Manifold Hypothesis | Visualizing assay landscapes |

| Autoencoder | Non-linear | 32-256 | Data Distribution | Non-linear compressibility | Unsupervised sequence encoding |

| Active Subspaces | Linear | 1-5 | Output Variance | Gradient availability | Simulation-based optimization |

Diagram 2: Active subspace identification for simulation-based BO.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for Managing High-Dimensionality in Protein Engineering

| Item | Function & Application in Thesis Context | Example/Provider |

|---|---|---|

| Pre-trained Protein LM | Provides foundational sequence representations for transfer learning; drastically reduces embedding data needs. | ESM-2 (Meta AI), ProtBERT (Rostlab) |

| GPU Compute Instance | Accelerates training of embedding models, autoencoders, and large-scale GP surrogate models. | NVIDIA A100/A40 (Cloud: AWS, GCP) |

| High-Throughput Assay | Generates the quantitative fitness/activity data needed to construct response surfaces for reduction. | Fluorescence-Activated Cell Sorting (FACS), Plate-based absorbance |

| Gradient-Enabled Simulator | Allows for efficient gradient computation, a prerequisite for active subspace identification. | PyRosetta (with AutoDiff), JAX-based MD (e.g., jax-md) |

| Bayesian Optimization Suite | Framework to integrate latent variables, build surrogate models, and optimize acquisition. | BoTorch, Trieste, Orion |

| Automated Cloning & Expression | Physically validates designs proposed by BO in latent space; closes the design-build-test-learn loop. | Echo Liquid Handler, Gibson Assembly, Cell-free expression systems |

Dealing with Noisy and Multi-Fidelity Experimental Data