Active Learning for Protein Design: A Complete Guide to Iterative AI-Driven Methods


Abstract

This article provides a comprehensive overview of active learning strategies for iterative protein design, tailored for researchers and drug development professionals. We explore the foundational principles that distinguish active learning from traditional approaches, detail cutting-edge methodological implementations, address common challenges and optimization strategies, and compare validation frameworks. The synthesis offers a roadmap for accelerating therapeutic and industrial protein development through intelligent, data-efficient machine learning pipelines.

What is Active Learning in Protein Design? Core Concepts and Evolutionary Advantages

Defining Active Learning in the Computational Biology Context

In computational biology and iterative protein design, active learning (AL) is a machine learning paradigm that strategically selects the most informative data points for experimental validation from a vast combinatorial sequence space. It closes the loop between in silico prediction and in vitro/in vivo assay, optimizing resource allocation by prioritizing experiments predicted to maximally improve the model. This framework is central to a thesis on accelerating protein engineering cycles, reducing the cost and time of design-build-test-learn (DBTL) iterations.

Core Methodology & Quantitative Comparison

Active learning cycles consist of: 1) Initial Model Training on a small labeled dataset, 2) Acquisition Function scoring of unlabeled candidates, 3) Selection of a batch for experimental testing, and 4) Model Update with new labels. Key acquisition strategies are compared below.
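The four steps above can be sketched as a short pool-based loop. This is a minimal Python/NumPy illustration, not a production pipeline. The `oracle` function stands in for the wet-lab assay. The nearest-neighbor surrogate and UCB scoring are deliberately simple placeholders that you would replace with a GP, an ensemble, or another model, and with any acquisition function from Table 1. All names here are hypothetical.

```python
import numpy as np


def active_learning_loop(X_pool, oracle, fit, acquire, n_init=10, batch_size=5, n_cycles=3, seed=0):
    """Pool-based active learning over a fixed candidate set.

    X_pool  : (n, d) feature matrix, one row per candidate sequence
    oracle  : stands in for the wet-lab assay; maps a (k, d) array to k measurements
    fit     : (X, y) -> predict, where predict(X) returns (mean, std) arrays
    acquire : (mean, std, best_y) -> one acquisition score per candidate
    """
    rng = np.random.default_rng(seed)
    # 1) Initial training data: a small random seed set of labeled candidates.
    labeled = [int(i) for i in rng.choice(len(X_pool), size=n_init, replace=False)]
    y = [float(v) for v in oracle(X_pool[labeled])]
    for _ in range(n_cycles):
        predict = fit(X_pool[labeled], np.array(y))
        unlabeled = np.setdiff1d(np.arange(len(X_pool)), labeled)
        mean, std = predict(X_pool[unlabeled])
        # 2) Acquisition function scoring of the unlabeled candidates.
        scores = acquire(mean, std, max(y))
        # 3) Selection of the top-scoring batch for "experimental" testing.
        batch = unlabeled[np.argsort(-scores)[:batch_size]]
        # 4) Model update: add the new labels so the next cycle retrains on them.
        labeled.extend(int(i) for i in batch)
        y.extend(float(v) for v in oracle(X_pool[batch]))
    return np.array(labeled), np.array(y)


def fit_nearest_neighbor(X, y):
    """Deliberately crude surrogate: predict the label of the closest measured
    point and use the distance to it as a stand-in for uncertainty."""
    def predict(Xq):
        dist = np.linalg.norm(Xq[:, None, :] - X[None, :, :], axis=-1)
        return y[dist.argmin(axis=1)], dist.min(axis=1)
    return predict


def ucb(mean, std, best_y, beta=1.0):
    return mean + beta * std  # best_y is unused by UCB but kept for a uniform signature


# Toy demo: 200 random 2-D "sequence embeddings" and a synthetic fitness peak at (0.5, 0.5).
rng = np.random.default_rng(1)
X_pool = rng.random((200, 2))
oracle = lambda X: -np.sum((X - 0.5) ** 2, axis=1)
idx, y = active_learning_loop(X_pool, oracle, fit_nearest_neighbor, ucb)
print(f"measured {len(idx)} of {len(X_pool)} candidates; best fitness {y.max():.4f}")
```

The surrogate and acquisition function are passed in as callables so that the loop itself does not change when the model or scoring rule does.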

Table 1: Quantitative Comparison of Active Learning Acquisition Functions in Protein Design

| Acquisition Function | Core Principle | Typical Batch Size | Computational Cost | Primary Use Case in Protein Design |
|---|---|---|---|---|
| Uncertainty Sampling | Selects sequences where model prediction is least confident (e.g., highest entropy, lowest margin). | Small (1-10) | Low | Identifying decision boundaries; exploring local sequence space. |
| Expected Improvement (EI) | Selects sequences with the highest expected improvement over the current best score. | Medium (10-100) | Medium to High | Direct optimization of a functional property (e.g., binding affinity, stability). |
| Query-by-Committee (QBC) | Selects sequences where an ensemble of models disagrees the most. | Small to Medium | High (requires multiple models) | Reducing model bias; robust exploration. |
| Thompson Sampling | Selects sequences based on a probability matching strategy using posterior distributions. | Medium | High (requires Bayesian model) | Balancing exploration-exploitation in Bayesian optimization loops. |
| Diversity-Based | Selects a batch that is both informative and representative of the data distribution. | Large (100-1000) | High (requires clustering/similarity metrics) | Initial broad exploration of a massive sequence space. |
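The following sketch makes several scoring rules in Table 1 concrete. It works with a model that outputs a predictive mean and standard deviation per candidate, class probabilities, or one prediction per committee member. It assumes maximization and, for EI, a Gaussian predictive distribution. The function names and the default `beta` are illustrative choices, not a specific library's API. Diversity-based selection is omitted because it depends on the chosen clustering or similarity metric.

```python
import math

import numpy as np

_erf = np.vectorize(math.erf)  # elementwise error function without SciPy


def normal_pdf(z):
    return np.exp(-0.5 * z**2) / math.sqrt(2.0 * math.pi)


def normal_cdf(z):
    return 0.5 * (1.0 + _erf(z / math.sqrt(2.0)))


def predictive_entropy(probs):
    """Uncertainty sampling (classifier): higher entropy = less confident prediction."""
    p = np.clip(probs, 1e-12, 1.0)
    return -np.sum(p * np.log(p), axis=-1)


def margin(probs):
    """Gap between the two most likely classes; select the SMALLEST margins."""
    top2 = np.sort(probs, axis=-1)[..., -2:]
    return top2[..., 1] - top2[..., 0]


def expected_improvement(mean, std, best_y, xi=0.0):
    """EI for maximization under a Gaussian predictive distribution."""
    std = np.maximum(std, 1e-12)  # avoid division by zero for noiseless points
    improvement = mean - best_y - xi
    z = improvement / std
    return improvement * normal_cdf(z) + std * normal_pdf(z)


def upper_confidence_bound(mean, std, beta=2.0):
    """Larger beta weights uncertainty more heavily (more exploration)."""
    return mean + beta * std


def committee_disagreement(member_predictions):
    """QBC: variance across committee members (rows) for each candidate (columns)."""
    return np.var(member_predictions, axis=0)
```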

Protocol: Implementing an Active Learning Cycle for Fluorescent Protein Engineering

This protocol details a single AL cycle aimed at improving the brightness of a fluorescent protein variant.

Protocol 3.1: Initial Dataset Curation & Model Training

Objective: Establish a baseline model from a limited set of characterized variants. Materials: See "Research Reagent Solutions" (Section 5.0). Procedure:

- Seed Library Creation: Start with a wild-type fluorescent protein gene (e.g., GFP) and generate a diverse, but small (~100-500 variants) mutant library using site-saturation mutagenesis at pre-selected residues.

- Experimental Characterization: Express and purify variants. Measure fluorescence intensity (excitation 488 nm, emission 510 nm) and normalize to protein concentration. Log this as your initial labeled dataset, L₀.

- Feature Representation: Encode each protein sequence in L₀ using a relevant feature set (e.g., one-hot encoding, amino acid physicochemical properties, or ESM-2 embeddings).

- Model Training: Train a supervised machine learning model (e.g., Gaussian Process Regressor, Random Forest, or Neural Network) on L₀ to predict fluorescence intensity from sequence features.
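The sketch below shows one concrete instance of the feature-representation and model-training steps. It one-hot encodes aligned, equal-length sequences and fits a minimal exact Gaussian Process with an RBF kernel in NumPy. The fixed length scale and noise level are assumptions; a real workflow would tune them (for example, by maximizing the marginal likelihood) or use an established library. The training labels are made up.

```python
import numpy as np

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"
AA_INDEX = {aa: i for i, aa in enumerate(AMINO_ACIDS)}


def one_hot(seqs):
    """Flattened (L x 20) one-hot encoding of aligned, equal-length sequences."""
    length = len(seqs[0])
    X = np.zeros((len(seqs), length * 20))
    for n, seq in enumerate(seqs):
        if len(seq) != length:
            raise ValueError("one_hot assumes aligned sequences of equal length")
        for pos, aa in enumerate(seq):
            X[n, pos * 20 + AA_INDEX[aa]] = 1.0
    return X


def rbf_kernel(A, B, length_scale):
    sq_dist = np.sum(A**2, 1)[:, None] + np.sum(B**2, 1)[None, :] - 2.0 * A @ B.T
    return np.exp(-0.5 * np.maximum(sq_dist, 0.0) / length_scale**2)


class MinimalGP:
    """Exact GP regression with fixed hyperparameters (no marginal-likelihood tuning)."""

    def __init__(self, length_scale=1.0, noise=1e-2):
        self.length_scale, self.noise = length_scale, noise

    def fit(self, X, y):
        self.X, self.y_mean = X, float(np.mean(y))
        K = rbf_kernel(X, X, self.length_scale) + self.noise * np.eye(len(X))
        self.L = np.linalg.cholesky(K)  # K = L L^T
        self.alpha = np.linalg.solve(self.L.T, np.linalg.solve(self.L, y - self.y_mean))
        return self

    def predict(self, Xq):
        Ks = rbf_kernel(Xq, self.X, self.length_scale)
        mean = self.y_mean + Ks @ self.alpha
        v = np.linalg.solve(self.L, Ks.T)
        var = 1.0 - np.sum(v**2, axis=0)  # RBF prior variance is 1 at every point
        return mean, np.sqrt(np.maximum(var, 0.0))


# Toy data: made-up brightness values for four 3-residue "variants".
train_seqs = ["MKV", "MKL", "MRV", "AKV"]
train_y = np.array([1.0, 1.2, 0.4, 0.8])
gp = MinimalGP().fit(one_hot(train_seqs), train_y)
mean, std = gp.predict(one_hot(["MKL", "WWW"]))
print(mean.round(2), std.round(2))
```

On one-hot inputs, the squared Euclidean distance between two sequences is twice their Hamming distance. As a result, this kernel's similarity decays with the number of mutations separating two variants. An unseen, distant sequence such as `WWW` therefore receives a large predictive standard deviation.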

Protocol 3.2: Acquisition, Selection, & Experimental Validation

Objective: Select and test the most informative new sequences to improve the model. Procedure:

- Candidate Pool Generation: Use in silico mutagenesis to generate a large pool (>10^5 sequences) of unexplored variants within a defined mutational distance from L₀.

- Acquisition Scoring: Apply the chosen acquisition function (e.g., Expected Improvement) using the trained model from 3.1 to score all candidates in the pool.

- Batch Selection: Rank candidates by acquisition score. Select the top N sequences (batch size determined by experimental throughput, e.g., N = 96 for a plate-based assay) for synthesis.

- Wet-Lab Validation:

a. Gene Synthesis: Order the selected N variant genes via array synthesis or PCR-based assembly.

b. Cloning & Expression: Clone genes into an expression vector, transform into host cells (e.g., E. coli), and culture under standard conditions.

c. Phenotypic Assay: Measure fluorescence intensity for each variant using a plate reader, following the same protocol as in 3.1.

d. Data Curation: Add the new (sequence, fluorescence) pairs to the labeled dataset, creating L₁.
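The candidate-pool and batch-selection steps can be sketched with the Python standard library alone. The generator enumerates every variant within a given number of substitutions at chosen positions. `select_top_n` keeps the N highest-scoring candidates under any scoring function. The parent sequence, positions, and `toy_score` are hypothetical placeholders for a real acquisition score.

```python
import heapq
from itertools import combinations, product

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"


def mutants_within_distance(parent, positions, max_mutations=2):
    """Yield each variant with 1..max_mutations substitutions at the given 0-based positions."""
    for k in range(1, max_mutations + 1):
        for sites in combinations(positions, k):
            # Every non-parent amino acid at each chosen site.
            choices = [[aa for aa in AMINO_ACIDS if aa != parent[p]] for p in sites]
            for substitution in product(*choices):
                seq = list(parent)
                for p, aa in zip(sites, substitution):
                    seq[p] = aa
                yield "".join(seq)


def select_top_n(candidates, score_fn, n=96):
    """Keep the n candidates with the highest acquisition score."""
    return heapq.nlargest(n, candidates, key=score_fn)


def toy_score(seq):
    # Hypothetical stand-in for an acquisition score from the trained model.
    return seq.count("W") + 0.1 * seq.count("Y")


pool = list(mutants_within_distance("MKVLA", positions=[1, 3, 4], max_mutations=2))
print(len(pool))  # 3*19 + 3*19*19 = 1140
batch = select_top_n(pool, toy_score, n=96)
```

For m positions and up to two substitutions, the pool holds 19m + 19²·m(m-1)/2 variants, so its size grows quickly with m. Because `heapq.nlargest` accepts any iterable, the generator can also be passed to it directly without materializing the full pool.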

Protocol 3.3: Model Update & Iteration

Objective: Integrate new data to refine predictive accuracy for the next cycle. Procedure:

- Retrain the model from Protocol 3.1 on the updated dataset L₁.

- Evaluate model performance on a held-out test set. Key metrics: Root Mean Square Error (RMSE), Pearson correlation coefficient (R).

- Analyze feature importance to glean biological insights (e.g., which residue positions most influence brightness).

- Return to Protocol 3.2 to initiate the next AL cycle, using the updated model.
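The evaluation step can be sketched as follows: a minimal NumPy version of the two metrics named above, plus a random held-out split. The helper names are hypothetical.

```python
import numpy as np


def holdout_split(n, test_fraction=0.2, seed=0):
    """Random (train_idx, test_idx) split of n labeled variants."""
    perm = np.random.default_rng(seed).permutation(n)
    n_test = max(1, int(round(n * test_fraction)))
    return perm[n_test:], perm[:n_test]


def rmse(y_true, y_pred):
    y_true, y_pred = np.asarray(y_true, float), np.asarray(y_pred, float)
    return float(np.sqrt(np.mean((y_true - y_pred) ** 2)))


def pearson_r(y_true, y_pred):
    return float(np.corrcoef(y_true, y_pred)[0, 1])


y_obs = [1.0, 2.0, 3.0, 4.0]
y_hat = [1.1, 1.9, 3.2, 3.8]
print(f"RMSE={rmse(y_obs, y_hat):.3f}, r={pearson_r(y_obs, y_hat):.3f}")
```

One caveat applies to AL data. Batches are chosen by the model rather than sampled at random, so a held-out set drawn from the labeled data may not reflect accuracy over the full candidate pool.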

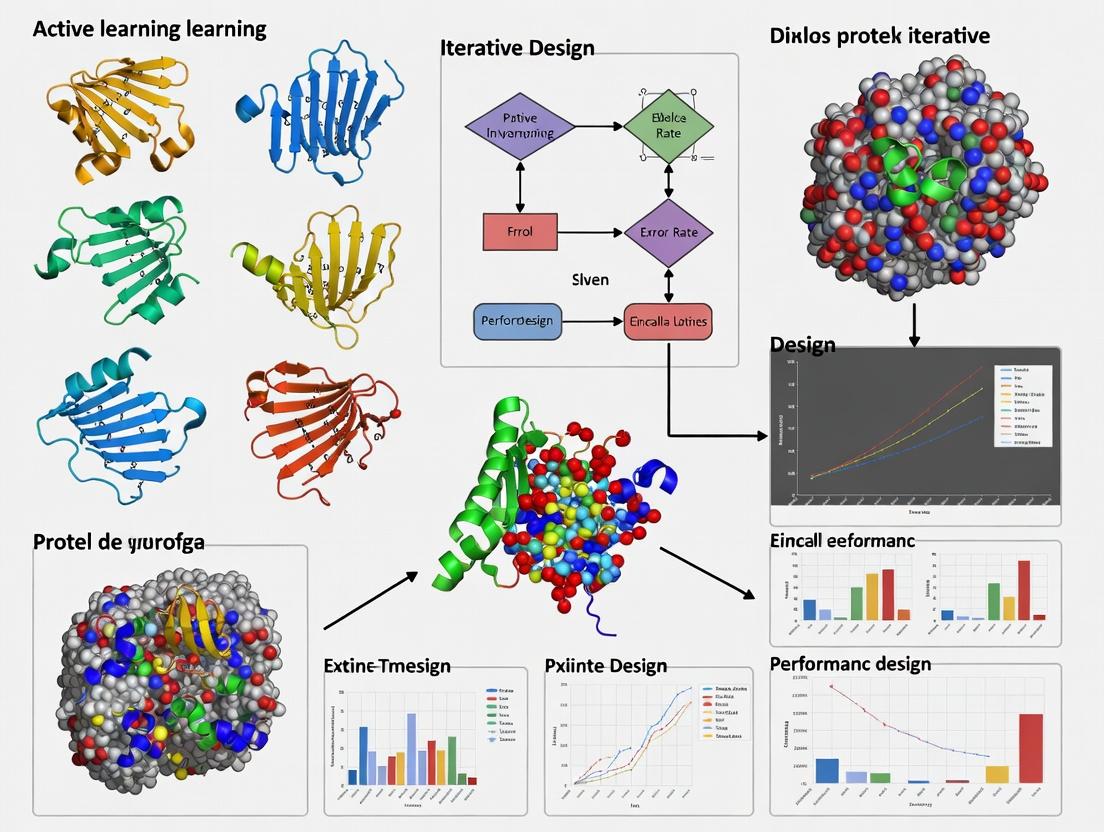

Visualizations

Active Learning Cycle for Protein Design

Acquisition Functions Select Informative Batches

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Active Learning Protein Design |
|---|---|
| Oligo Pool Synthesis | High-throughput gene synthesis to physically generate the in silico selected variant sequences for experimental testing. |
| Golden Gate/Gibson Assembly | Modular and efficient cloning methods for assembling synthetic genes into expression vectors. |
| High-Throughput Expression System (e.g., E. coli in 96-well deep blocks) | Scalable protein production platform compatible with batch sizes selected by the AL algorithm. |
| Automated Liquid Handling Robot | Enables reproducible miniaturized assays for purification and measurement, matching the pace of AL cycles. |
| Plate Reader (Fluorescence/Absorbance) | Key instrument for quantitative phenotypic measurement (e.g., fluorescence intensity, enzyme activity). |
| Ni-NTA Magnetic Beads | For rapid, small-scale purification of histidine-tagged protein variants to normalize functional measurements to concentration. |
| Machine Learning Server/Cloud Instance | Computational resource for training and running large-scale models on sequence-property data. |
| ESM-2 or AlphaFold2 API/Model | Pre-trained protein language/structure models for generating rich, informative sequence embeddings as model input features. |

Within the thesis framework of active learning for iterative protein design, this document provides detailed application notes and protocols for executing a closed-loop design-build-test-learn cycle. The iterative cycle is central to efficiently navigating the vast sequence space towards proteins with validated, enhanced, or novel functions. This process integrates computational prediction, high-throughput experimental characterization, and machine learning model refinement to accelerate research and development timelines in therapeutic and industrial enzyme design.

The Core Iterative Cycle: Workflow & Components

The cycle consists of four interdependent phases: Design, Build, Test, and Learn. Each phase informs the next, creating a feedback loop that progressively improves the design model's predictive power.

Diagram Title: The Four-Phase Active Learning Cycle for Protein Design

Phase 1: Design – Navigating Sequence Space

Objective: Generate a focused library of protein variant sequences predicted to improve a target function (e.g., binding affinity, catalytic activity, stability).

Protocol: Active Learning-Driven Sequence Selection

Methodology:

- Input Preparation: Formulate the design objective as a machine learning task (regression or classification). Assemble a seed training dataset of sequences with associated experimental measurements.

- Model Training: Train a probabilistic model (e.g., Gaussian Process, Bayesian Neural Network, or Variational Autoencoder) on the seed data to learn the sequence-function relationship.

- Acquisition Function Calculation: Use an acquisition function (e.g., Expected Improvement, Upper Confidence Bound, or entropy-based sampling) to score a vast in-silico mutant library (e.g., all single mutants around a parent sequence).

- Batch Selection: Select the top N sequences (batch size: 96-384) that maximize the acquisition function, balancing exploration (sampling uncertain regions) and exploitation (sampling predicted high performers).

- Output: Deliver a .csv file with the selected nucleotide sequences, optimized for synthesis and cloning (e.g., codon optimization for expression host).
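The output step can be sketched as back-translation to DNA, written to CSV. This is an illustrative assumption, not a full codon-optimization method. It uses one frequently used E. coli codon per amino acid; real tools also balance GC content and avoid repeats and problematic secondary structure, among other factors. The sketch also flags internal BsaI sites (GGTCTC or its reverse complement GAGACC), which would interfere with a BsaI-based Golden Gate assembly. Column names are hypothetical.

```python
import csv
import io

# Illustrative assumption: one frequently used E. coli codon per amino acid.
# Real codon optimization weighs many more factors than this lookup table.
ECOLI_CODON = {
    "A": "GCG", "R": "CGT", "N": "AAC", "D": "GAT", "C": "TGC",
    "Q": "CAG", "E": "GAA", "G": "GGC", "H": "CAT", "I": "ATT",
    "L": "CTG", "K": "AAA", "M": "ATG", "F": "TTT", "P": "CCG",
    "S": "AGC", "T": "ACC", "W": "TGG", "Y": "TAT", "V": "GTG",
    "*": "TAA",
}
BSAI_SITES = ("GGTCTC", "GAGACC")  # BsaI recognition site and its reverse complement


def back_translate(protein, add_stop=True):
    """Protein sequence -> DNA using the lookup table above."""
    dna = "".join(ECOLI_CODON[aa] for aa in protein)
    return dna + ECOLI_CODON["*"] if add_stop else dna


def has_internal_bsai(dna):
    return any(site in dna for site in BSAI_SITES)


def write_design_csv(variants, handle):
    """variants: iterable of (variant_id, protein_sequence) pairs."""
    writer = csv.writer(handle)
    writer.writerow(["variant_id", "protein_sequence", "dna_sequence", "internal_bsai_site"])
    for variant_id, protein in variants:
        dna = back_translate(protein)
        writer.writerow([variant_id, protein, dna, has_internal_bsai(dna)])


buf = io.StringIO()
write_design_csv([("V001", "MKV"), ("V002", "MRV")], buf)
print(buf.getvalue())
```

Flagged sequences would need to be recoded with a synonymous codon before ordering. That recoding step is not shown here.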

Table 1: Comparison of Common Acquisition Functions for Active Learning

| Acquisition Function | Primary Goal | Advantages | Best for |
|---|---|---|---|
| Expected Improvement (EI) | Find the global maximum. | Directly targets improvement over current best. Well-understood. | Optimizing continuous properties (e.g., thermal stability, enzyme activity). |
| Upper Confidence Bound (UCB) | Balance mean prediction and uncertainty. | Simple hyperparameter (β) to tune exploration/exploitation. | Early-stage exploration of unknown sequence space. |
| Thompson Sampling | Select each sequence with probability equal to its posterior probability of being optimal. | Natural balance, often performs well empirically. | Scenarios with complex, noisy fitness landscapes. |
| Maximum Entropy | Maximize information gain about the model parameters. | Reduces overall model uncertainty most efficiently. | Building a robust general model of the sequence-function map. |
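Thompson sampling requires posterior samples. One common approximation, sketched below under that assumption, treats each member of a model ensemble as one posterior draw. For each slot in the batch, the sketch picks a member at random and takes that member's best candidate not yet selected. The function name and input layout are hypothetical. With a GP, you would instead draw joint samples from the posterior over the candidates.

```python
import numpy as np


def thompson_batch(member_predictions, batch_size, seed=0):
    """Ensemble-based approximation of batch Thompson sampling.

    member_predictions: (n_members, n_candidates); each row is treated as one
    posterior sample of the sequence-function map.
    """
    preds = np.asarray(member_predictions, dtype=float)
    rng = np.random.default_rng(seed)
    available = np.ones(preds.shape[1], dtype=bool)
    chosen = []
    for _ in range(min(batch_size, preds.shape[1])):
        draw = preds[rng.integers(preds.shape[0])]  # one "posterior draw"
        idx = int(np.argmax(np.where(available, draw, -np.inf)))  # its optimum among unpicked
        chosen.append(idx)
        available[idx] = False
    return chosen


# Toy ensemble: 5 members scoring 20 candidates (random placeholders).
ensemble_preds = np.random.default_rng(1).random((5, 20))
print(thompson_batch(ensemble_preds, batch_size=8))
```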

Phase 2: Build – Library Construction

Objective: Physically generate the designed variant library for experimental testing.

Protocol: High-Throughput Cloning via Golden Gate Assembly

Methodology:

- Oligo Pool Synthesis: Order the selected variant sequences as single-stranded DNA oligonucleotides (150-200 nt) in a pooled format.

- PCR Assembly & Amplification: Use a limited-cycle PCR to assemble and amplify full-length genes from the oligo pool. Add universal primer binding sites.

- Golden Gate Reaction: Digest the PCR product and linearized expression vector with a Type IIS restriction enzyme (e.g., BsaI) and ligate in a one-pot, temperature-cycled reaction. This enables high-fidelity, seamless assembly.

- E. coli Transformation: Transform the Golden Gate reaction product into competent E. coli cells. Plate on selective agar to yield >10x library coverage. Pool all colonies.

- Plasmid DNA Prep & Sequence Validation: Isolate plasmid DNA from the pooled library. Validate library diversity and sequence integrity via NGS (Illumina MiSeq).
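The ">10x library coverage" target in the transformation step can be checked with a Poisson approximation. If T transformants are drawn from N equally abundant variants, the expected fraction of variants seen at least once is 1 - exp(-T/N). The sketch below assumes a uniform pool. Real libraries are skewed, so these numbers are optimistic. The batch size of 384 is only an example.

```python
import math


def transformants_needed(library_size, coverage=10.0):
    """Number of colonies for a given fold-coverage of the designed library."""
    return math.ceil(library_size * coverage)


def expected_fraction_sampled(n_transformants, library_size):
    """Poisson approximation: fraction of (equally abundant) variants seen at least once."""
    return 1.0 - math.exp(-n_transformants / library_size)


def coverage_for_fraction(target_fraction):
    """Fold-coverage needed so that `target_fraction` of variants are expected to appear."""
    return -math.log(1.0 - target_fraction)


n = 384  # e.g., a batch of 384 designed variants
t = transformants_needed(n, coverage=10)
print(t, f"{expected_fraction_sampled(t, n):.5f}", f"{coverage_for_fraction(0.95):.2f}")  # 3840 0.99995 3.00
```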

Phase 3: Test – Functional Validation

Objective: Quantitatively measure the function of each variant in the library.

Protocol: Deep Mutational Scanning (DMS) for Binding Affinity

Methodology:

- Expression & Display: Clone the variant library into a phage or yeast display vector. Induce expression of the protein variant on the cell or virion surface.

- Selection Pressure: Incubate the display library with a concentration gradient of immobilized target ligand (e.g., biotinylated antigen for antibodies). Include a low concentration (for stringent selection of high-affinity binders) and a high concentration (to capture weak binders).

- Sorting & Recovery: Separate bound from unbound cells/virions using Fluorescence-Activated Cell Sorting (FACS) or magnetic bead capture. Collect populations from each selection gate.

- Sequencing & Enrichment Score Calculation: Extract DNA from pre-selection and post-selection populations. Amplify the variant region and sequence via NGS. Calculate the enrichment ratio (frequency_post / frequency_pre) for each variant and use its log2 value as a proxy for binding fitness.
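The enrichment calculation can be sketched as follows. The first function reproduces the log2 scores in Table 2 from the listed frequencies. The second starts from raw read counts and adds a pseudocount, so that variants with zero post-selection reads still get a finite score. The pseudocount of 0.5 is an assumption, not a value from the protocol.

```python
import math


def log2_enrichment(freq_pre, freq_post):
    """log2(frequency_post / frequency_pre) for one variant."""
    return math.log2(freq_post / freq_pre)


def enrichment_from_counts(pre_counts, post_counts, pseudocount=0.5):
    """Raw NGS read counts (dict: variant -> reads) to log2 enrichment scores."""
    pre_total, post_total = sum(pre_counts.values()), sum(post_counts.values())
    scores = {}
    for variant, pre in pre_counts.items():
        f_pre = (pre + pseudocount) / pre_total
        f_post = (post_counts.get(variant, 0) + pseudocount) / post_total
        scores[variant] = math.log2(f_post / f_pre)
    return scores


# Frequencies (%) from Table 2; the ratio is unit-free, so percentages work directly.
table2 = {"S30R": (0.012, 0.215), "H35Y": (0.015, 0.003), "M42L": (0.010, 0.011), "S30R/H35F": (0.005, 0.398)}
for mutation, (pre, post) in table2.items():
    print(mutation, round(log2_enrichment(pre, post), 2))  # 4.16, -2.32, 0.14, 6.31 as in Table 2
```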

Table 2: Example DMS Enrichment Data for an Antibody Fragment Library

| Variant ID | Parent Sequence | Mutation | Pre-Seq Frequency (%) | Post-Seq Frequency (Hi [Target]) (%) | Enrichment Score (log2) | Inferred Phenotype |
|---|---|---|---|---|---|---|
| V001 | DLWMQ | S30R | 0.012 | 0.215 | 4.16 | Enhanced Binder |
| V002 | DLWMQ | H35Y | 0.015 | 0.003 | -2.32 | Disrupted Binder |
| V003 | DLWMQ | M42L | 0.010 | 0.011 | 0.14 | Neutral |
| V004 | DLWMQ | S30R/H35F | 0.005 | 0.398 | 6.31 | Strongly Enhanced Binder |

Diagram Title: Deep Mutational Scanning Workflow for Binding Affinity

Phase 4: Learn – Model Retraining & Analysis

Objective: Integrate new experimental data to update the active learning model, closing the loop.

Protocol: Bayesian Model Update

Methodology:

- Data Curation: Merge the new variant-function data pairs (e.g., variant sequence and its log2 enrichment score) with the historical training dataset. Apply quality controls (remove low-coverage variants, normalize scores across batches).

- Model Retraining: Retrain the sequence-function model (from Phase 1) on the expanded dataset. For Bayesian models, this updates the posterior distribution, refining predictions and uncertainty estimates across sequence space.

- Cycle Evaluation: Assess cycle performance by comparing model predictions to experimental outcomes. Key metrics: correlation between predicted vs. observed scores, discovery rate of improved variants.

- Hypothesis Generation: Analyze the updated model to extract emerging sequence-activity relationships (e.g., important positions, beneficial amino acid substitutions, epistatic interactions).
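Here is a minimal sketch of the data-curation step. It assumes each record carries a batch label, its pre-selection read count, and a log2 enrichment score (hypothetical field names). The sketch drops low-coverage variants, then normalizes each batch against a reference variant (e.g., the parent) that is assumed to be measured in every batch. This reference-based normalization is one possible choice, not a step prescribed above.

```python
def curate(records, min_pre_reads=10, reference="WT"):
    """Quality-filter and batch-normalize enrichment scores.

    records: list of dicts with hypothetical keys
        variant, batch, pre_reads (pre-selection read count), score (log2 enrichment).
    Returns new dicts whose score is relative to the reference variant of the same batch.
    """
    kept = [r for r in records if r["pre_reads"] >= min_pre_reads]  # drop low coverage
    ref_score = {r["batch"]: r["score"] for r in kept if r["variant"] == reference}
    curated = []
    for r in kept:
        if r["batch"] not in ref_score:
            continue  # a batch without its reference measurement cannot be normalized
        curated.append({**r, "score": r["score"] - ref_score[r["batch"]]})
    return curated


# Toy records (made-up numbers) from two AL batches.
records = [
    {"variant": "WT", "batch": 1, "pre_reads": 500, "score": 0.5},
    {"variant": "S30R", "batch": 1, "pre_reads": 200, "score": 2.5},
    {"variant": "H35Y", "batch": 1, "pre_reads": 3, "score": -4.0},  # below read cutoff
    {"variant": "WT", "batch": 2, "pre_reads": 400, "score": -0.5},
    {"variant": "M42L", "batch": 2, "pre_reads": 150, "score": 0.0},
]
for r in curate(records):
    print(r["batch"], r["variant"], r["score"])
```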

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Materials for the Iterative Protein Design Cycle

| Item | Function & Role in the Cycle | Example Product/Kit |
|---|---|---|
| DNA Oligo Pool | Source of designed variant sequences. Enables parallel synthesis of thousands of unique oligonucleotides for library construction. | Twist Bioscience Custom Oligo Pools, IDT xGen Oligo Pools. |
| Type IIS Restriction Enzyme (BsaI-HFv2) | Core enzyme for Golden Gate assembly. Enables efficient, scarless, and directional cloning of variant libraries into expression vectors. | NEB Golden Gate Assembly Kit (BsaI-HFv2). |
| Phage or Yeast Display System | Platform for linking genotype (DNA) to phenotype (protein function). Essential for high-throughput functional screening (DMS). | NEB Phage Display Libraries, Thermo Fisher Yeast Display Toolkit. |
| Streptavidin Magnetic Beads | For efficient capture and washing during selection steps in DMS when using biotinylated targets. | Pierce Streptavidin Magnetic Beads. |
| Next-Generation Sequencing (NGS) Kit | For quantifying variant frequencies pre- and post-selection. Essential for generating quantitative fitness data. | Illumina MiSeq Reagent Kit v3 (600-cycle). |
| Machine Learning Framework | Software environment for building, training, and deploying active learning models for sequence design. | Python with PyTorch/TensorFlow, JAX, scikit-learn. |

Key Differences from High-Throughput Screening and Directed Evolution

Application Notes

Within an active learning framework for iterative protein design, the strategic choice between High-Throughput Screening (HTS) and Directed Evolution (DE) is foundational. Both are empirical discovery engines but differ fundamentally in philosophy, implementation, and integration with computational models.

HTS is a screening paradigm. It involves testing pre-defined, often vast, static libraries (e.g., of small molecules or purified proteins) against a specific target or function in a parallelized, one-round assay. Its power lies in breadth and speed of evaluation, generating a rich dataset for initial model training. In active learning, HTS data can serve as the initial training set to seed a predictive model, which then proposes more informative candidates.

DE is an iterative evolution paradigm. It involves generating genetic diversity, selecting for desired function, and repeating the cycle. Key techniques like error-prone PCR or DNA shuffling introduce variation, and selection (often in vivo) enriches beneficial variants over multiple generations. It mimics natural selection, exploring sequence space through iterative fitness pressure. In active learning, each DE round's output provides feedback to refine the model's understanding of the sequence-function landscape, guiding the design of the next library.

The core distinction is that HTS evaluates a static set, while DE dynamically creates and refines a population over time. Active learning synergizes with both: it can optimize library design for HTS or intelligently guide the mutation/selection steps in DE, drastically reducing experimental cycles.

Protocols

Protocol 1: High-Throughput Screening of a Protein Variant Library for Binding Affinity

Objective: To quantitatively screen a library of 10,000 purified protein variants against an immobilized target to identify hits with binding affinity (KD) < 100 nM.

Materials: (See Reagent Solutions Table) Workflow:

- Library Expression & Purification: Use a high-throughput protein expression system (e.g., E. coli in 96-well deep blocks). Induce expression, lyse cells, and purify variants via His-tag using robotic magnetic bead handlers.

- Assay Plate Preparation: Coat a 384-well biosensor plate (e.g., Octet or SPR array) with target antigen at 5 µg/mL in PBS. Block with 1% BSA.

- Binding Kinetics Measurement: Dilute purified variants to 200 nM in assay buffer. Load samples onto the biosensor plate. Measure association for 300 seconds, then dissociation for 600 seconds.

- Data Analysis: Fit binding curves globally using a 1:1 Langmuir model provided by instrument software. Export calculated KD, kon, and koff values for all variants.

- Hit Selection: Identify variants meeting the KD < 100 nM criterion. Rank hits by combined kinetic parameters (prioritizing slow koff).
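The kinetic analysis and hit-selection steps rest on two relations of the 1:1 Langmuir model. The first is KD = koff/kon. The second describes the association phase: R(t) = Req(1 - exp(-(kon·C + koff)t)), with Req = Rmax·C/(C + KD). The sketch below encodes these relations and the KD < 100 nM filter. It sorts hits by koff alone as a simple stand-in for "combined kinetic parameters", and the dictionary keys are hypothetical. As in the protocol, curve fitting itself is left to the instrument software.

```python
import math


def kd_from_rates(kon, koff):
    """1:1 Langmuir model: KD = koff / kon (kon in M^-1 s^-1, koff in s^-1, KD in M)."""
    return koff / kon


def association_response(t, conc, kon, koff, rmax):
    """1:1 association phase: R(t) = Req * (1 - exp(-(kon*C + koff) * t))."""
    req = rmax * conc / (conc + kd_from_rates(kon, koff))
    return req * (1.0 - math.exp(-(kon * conc + koff) * t))


def select_hits(variants, kd_cutoff_nM=100.0):
    """Keep variants with KD below the cutoff, slowest koff first."""
    hits = []
    for v in variants:
        kd_nM = kd_from_rates(v["kon"], v["koff"]) * 1e9
        if kd_nM < kd_cutoff_nM:
            hits.append({**v, "KD_nM": kd_nM})
    return sorted(hits, key=lambda v: v["koff"])


# Hypothetical fitted rates exported from the instrument software.
variants = [
    {"name": "A", "kon": 1e5, "koff": 1e-3},  # KD = 10 nM
    {"name": "B", "kon": 1e5, "koff": 1e-4},  # KD = 1 nM
    {"name": "C", "kon": 1e4, "koff": 1e-2},  # KD = 1000 nM, fails the cutoff
]
for hit in select_hits(variants):
    print(hit["name"], f"{hit['KD_nM']:.1f} nM")
```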

Diagram 1: HTS workflow for protein binding.

Protocol 2: Directed Evolution of Enzyme Activity via Error-Prone PCR and FACS

Objective: To evolve an enzyme for increased activity on a novel substrate over 5 rounds of evolution.

Materials: (See Reagent Solutions Table) Workflow:

- Diversification: Subject the gene of interest to error-prone PCR (epPCR) using conditions yielding 0.5-2 mutations/kb. Use a mutational bias kit to tune the mutational spectrum.

- Library Construction: Clone epPCR product into an expression vector suitable for your display or cellular compartmentalization system (e.g., yeast surface display or bacterial periplasmic expression).

- Selection/Screening: For enzymatic activity, use a fluorescence-activated cell sorter (FACS) with a fluorogenic substrate. Incubate the cell-displayed library with the substrate. Gate and sort the top 1-5% most fluorescent cells.

- Recovery & Amplification: Grow sorted cells to recover plasmid DNA.

- Iteration: Use recovered DNA as template for the next round of epPCR (or switch to DNA shuffling for recombination after round 3). Repeat steps 1-4 for 4-5 rounds.

- Characterization: Isolate individual clones from final round and characterize kinetics.
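A Poisson model of mutations per gene copy offers a quick sanity check on the 0.5-2 mutations/kb target. The sketch assumes mutations are independent and uniformly distributed. Real epPCR only approximates this: the polymerase has sequence biases, and many substitutions are synonymous at the protein level. The 750 bp gene length is a hypothetical example.

```python
import math


def mutation_count_distribution(rate_per_kb, gene_length_bp, max_k=5):
    """P(k mutations per gene copy) under a Poisson model with mean rate * length."""
    lam = rate_per_kb * gene_length_bp / 1000.0
    return {k: math.exp(-lam) * lam**k / math.factorial(k) for k in range(max_k + 1)}


# Hypothetical 750 bp gene (~250 residues) across the protocol's 0.5-2 mutations/kb range.
for rate in (0.5, 1.0, 2.0):
    dist = mutation_count_distribution(rate, 750)
    print(f"{rate} mut/kb: P(0)={dist[0]:.2f}, P(1)={dist[1]:.2f}, P(>=2)={1 - dist[0] - dist[1]:.2f}")
```

For example, at 1 mutation/kb, roughly 47% of copies of a 750 bp gene carry no mutation at all (e^-0.75 ≈ 0.47).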

Diagram 2: Iterative directed evolution cycle.

Data Presentation

| Aspect | High-Throughput Screening (HTS) | Directed Evolution (DE) |
|---|---|---|
| Core Paradigm | Screening of static diversity. | Iterative evolution of dynamic population. |
| Library Source | Pre-designed, synthetic, or natural. | Created de novo via random/designed mutagenesis. |
| Typical Library Size | 10^4 - 10^6 variants. | 10^6 - 10^10 variants per round. |
| Experimental Rounds | Usually single-round. | Multiple iterative rounds (3-10+). |
| Selection Pressure | Applied in vitro during assay. | Applied in vivo or in vitro during selection step. |
| Primary Output | Quantitative data on all screened variants. | Enriched pool of variants meeting survival threshold. |
| Integration with Active Learning | Provides initial training dataset. Model proposes next-generation library for synthesis/screening. | Provides feedback each round. Model guides mutation strategy or designs focused recombination libraries. |
| Key Quantitative Metrics | Hit Rate (%), KD (nM), IC50 (µM), % Activity. | Rounds to Goal, Fold-Improvement, Mutation Load (mutations/kb). |
| Typical Duration per Cycle | Days to weeks (for protein libraries). | Weeks per round. |
| Cost per Data Point | Low (highly parallelized). | Variable, often higher due to iterative cloning/selection. |

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Protocol |
|---|---|
| HTS: 384-well Biosensor Plate (e.g., Octet HTX) | Enables parallel, label-free measurement of binding kinetics for up to 96 samples simultaneously. |
| HTS: Robotic Liquid Handler (e.g., Integra Assist Plus) | Automates precise pipetting for library reformatting, assay plate setup, and reagent addition. |
| HTS/DE: Fluorogenic/Chromogenic Substrate | Enzyme activity reporter; cleavage produces measurable signal (fluorescence/color) for screening or FACS. |
| DE: Error-Prone PCR Kit (e.g., Mutazyme II) | Introduces controlled random mutations during PCR amplification with tunable mutation rate. |
| DE: Yeast Surface Display Vector (e.g., pYD1) | Display system for eukaryotic proteins; links genotype to phenotype for FACS-based selection. |
| DE: Fluorescence-Activated Cell Sorter (FACS, e.g., BD FACSAria) | High-throughput, quantitative isolation of cells based on fluorescent signal from activity or binding. |
| DE: DNA Shuffling Reagents (DNase I, Taq Polymerase) | Fragments and recombines homologous genes to explore combinatorial sequence space. |
| General: High-Fidelity DNA Polymerase (e.g., Q5) | For accurate amplification of template DNA without introducing unwanted mutations during cloning steps. |

The Role of Machine Learning Models as Proxies for Expensive Experiments

Application Notes

In the context of active learning for iterative protein design, machine learning (ML) models serve as predictive proxies that drastically reduce the need for costly and time-consuming wet-lab experiments. By learning from high-dimensional biological and physicochemical data, these models can predict protein properties (e.g., stability, expression, binding affinity) and guide the selection of promising candidates for physical validation.

Key Advantages and Quantitative Impact

Table 1: Comparative Analysis of Experimental vs. ML-Proxy Approaches in Protein Design

| Metric | Traditional High-Throughput Experiment | ML-Guided Design Cycle | Reported Improvement/Efficiency |
|---|---|---|---|
| Cycle Time | 4-8 weeks for library synthesis, expression, & screening | 1-2 weeks for in silico prediction & prioritized validation | ~70-80% reduction in cycle duration |
| Cost per Variant Screened | $50 - $200 (depending on assay complexity) | $0.50 - $5 (computational cost + validation subset) | ~90-95% cost reduction for screening |
| Design Space Explored per Cycle | 10^3 - 10^4 variants (practical library limit) | 10^7 - 10^10 variants (in silico exploration) | 3-6 orders of magnitude increase |
| Success Rate (e.g., improved binding affinity) | Baseline (0.1 - 1% hit rate) | 5 - 20% hit rate in validated subsets | 10-50x enrichment over random screening |

Data synthesized from recent literature on ML-guided protein engineering (2023-2024).

Core ML Model Architectures in Use

Table 2: Common ML Models as Experimental Proxies

| Model Type | Typical Application in Protein Design | Key Strength | Example Input Features |
|---|---|---|---|
| Transformer (Protein Language Model) | Fitness prediction from sequence, variant effect prediction. | Captures long-range dependencies & evolutionary constraints. | Amino acid sequence, attention maps. |
| Convolutional Neural Network (CNN) | Predicting stability from 3D structure (voxelized or graph). | Learns spatial hierarchies of structural features. | 3D density grids, distance maps. |
| Graph Neural Network (GNN) | Modeling protein-ligand interactions, binding affinity. | Directly operates on inherent graph structure (atoms/residues as nodes). | Atom/residue features, bond/contact edges. |
| Gaussian Process (GP) | Active learning loops, uncertainty quantification for small data. | Provides well-calibrated uncertainty estimates. | Physicochemical descriptors, embeddings. |

Experimental Protocols

Protocol: Active Learning Cycle for Protein Stability Optimization

Objective: To iteratively improve protein thermostability using an ML model as a proxy for thermal shift assays.

Materials: (See "The Scientist's Toolkit" below).

Procedure:

- Initial Dataset Curation: Assemble a training set of ≥ 500 protein variants with experimentally measured melting temperatures (Tm). Represent each variant as a numerical feature vector (e.g., ESM-2 embeddings, Rosetta ddG predictions, one-hot encodings).

- Baseline Model Training: Train a regression model (e.g., Gradient Boosting, GP) to predict Tm from the feature vector. Perform cross-validation to establish baseline performance (e.g., R² > 0.6).

- In Silico Library Design: Generate a diverse in silico library of 10^5 - 10^6 variants by introducing single and multiple point mutations to the wild-type sequence.

- Model Prediction & Uncertainty Quantification: Use the trained model to predict Tm for all in-silico variants. For active learning, the model should also provide uncertainty estimates (e.g., a GP or a model ensemble), which an acquisition function (e.g., expected improvement) combines with the predicted Tm to rank candidates.

- Candidate Selection: Select 96-384 variants for experimental validation. Selection should balance:

- Exploitation: 50% of the batch drawn from the variants with the highest predicted Tm.

- Exploration: the remaining 50% chosen for high prediction uncertainty or by diversity sampling.

- Wet-Lab Validation:

- Perform site-directed mutagenesis to generate selected variants.

- Express and purify proteins using high-throughput micro-scale methods.

- Measure Tm via a high-throughput thermal shift assay (e.g., differential scanning fluorimetry).

- Model Retraining: Add the new experimental data (variant sequence, measured Tm) to the training set. Retrain or fine-tune the ML model.

- Iteration: Repeat steps 3-7 for 3-5 cycles or until a stability target (e.g., ΔTm > +15°C) is achieved.
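The 50/50 candidate-selection rule in step 5 can be sketched as below. Half of the batch goes to the highest predicted Tm, and the other half goes to the most uncertain of the remaining candidates. The sketch assumes the model returns a per-variant standard deviation. The function name is hypothetical, and uncertainty is used here in place of diversity sampling.

```python
import numpy as np


def split_batch(pred_mean, pred_std, batch_size=96, exploit_fraction=0.5):
    """Return (exploit_idx, explore_idx): top predicted Tm, then most uncertain of the rest."""
    pred_mean, pred_std = np.asarray(pred_mean, float), np.asarray(pred_std, float)
    n_exploit = int(round(batch_size * exploit_fraction))
    exploit = np.argsort(-pred_mean)[:n_exploit]
    remaining = np.setdiff1d(np.arange(len(pred_mean)), exploit)
    explore = remaining[np.argsort(-pred_std[remaining])[: batch_size - n_exploit]]
    return exploit, explore


# Toy predictions for 1,000 in silico variants (random placeholders for model output).
rng = np.random.default_rng(0)
mean_tm, std_tm = 55 + 5 * rng.standard_normal(1000), rng.random(1000)
exploit, explore = split_batch(mean_tm, std_tm, batch_size=96)
print(len(exploit), len(explore), len(set(exploit) | set(explore)))  # 48 48 96
```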

Protocol: Fine-Tuning a Protein Language Model as a Binding Affinity Proxy

Objective: To adapt a general-purpose protein language model (e.g., ESM-2) to predict protein-protein binding affinity (KD).

Procedure:

- Data Preparation: Compile a dataset of paired protein sequences (e.g., antibody-antigen, protein-receptor) with experimentally determined KD values. Apply stringent quality control. Augment data via reverse pairing and cautious negative sampling.

- Input Representation: For each protein pair (A, B), tokenize sequences separately. Use the pre-trained model to generate per-residue embeddings. Generate a joint representation via concatenation of mean-pooled embeddings, or use a cross-attention mechanism.

- Model Architecture: Add a regression head on top of the pooled/cross-attention representation. This typically consists of 2-3 fully connected layers with dropout.

- Training:

- Freeze & Train Head: Initially freeze the pre-trained transformer layers and train only the regression head for 20 epochs.

- Full Fine-Tuning: Unfreeze all layers and train the entire model with a low learning rate (e.g., 1e-5) for an additional 10-20 epochs. Use a loss function like Mean Squared Error on log-transformed KD values.

- Validation: Perform held-out test set validation. Target performance: Pearson correlation > 0.7 between predicted and experimental log(KD).

- Deployment for Screening: Use the fine-tuned model to score millions of potential binding partners or designed variants. Select the top 0.1% for experimental validation via Surface Plasmon Resonance (SPR) or Bio-Layer Interferometry (BLI).
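The input-representation and augmentation steps can be sketched in NumPy. The sketch assumes per-residue embeddings have already been computed with a protein language model such as ESM-2; random arrays stand in for them here. Mean pooling respects a padding mask, and the two pooled vectors are concatenated. KD is converted to log10(KD) as one choice of log-transformed regression target. `augment_reverse_pairs` implements the reverse-pairing idea by adding (B, A) with the same label. All function names are hypothetical, and the regression head and fine-tuning are not shown.

```python
import numpy as np


def mean_pool(per_residue, mask):
    """Average (L, D) per-residue embeddings over unpadded positions (mask == 1)."""
    m = np.asarray(mask, dtype=float)[:, None]
    return (per_residue * m).sum(axis=0) / m.sum()


def pair_features(emb_a, mask_a, emb_b, mask_b):
    """Joint representation of a pair (A, B): concatenation of mean-pooled embeddings."""
    return np.concatenate([mean_pool(emb_a, mask_a), mean_pool(emb_b, mask_b)])


def kd_to_target(kd_molar):
    """Regression target log10(KD); errors are then measured in orders of magnitude."""
    return float(np.log10(kd_molar))


def augment_reverse_pairs(pairs):
    """Reverse pairing: add (B, A) with the same KD, since binding is symmetric but
    concatenated features are order-dependent."""
    pairs = list(pairs)
    return pairs + [(b, a, kd) for a, b, kd in pairs]


# Placeholders for per-residue language-model embeddings (L residues x D dimensions).
rng = np.random.default_rng(0)
emb_a, emb_b = rng.normal(size=(120, 16)), rng.normal(size=(80, 16))
x = pair_features(emb_a, np.ones(120), emb_b, np.ones(80))
print(x.shape, kd_to_target(5e-9))
```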

Visualizations

Active Learning Cycle for ML-Guided Protein Design

Fine-Tuning a PLM as an Affinity Proxy

The Scientist's Toolkit

Table 3: Essential Research Reagent Solutions for ML-Proxied Protein Design

| Reagent / Material | Function & Role in Workflow |
|---|---|
| Nucleotide Library Synthesis (Array Oligo Pools) | Enables rapid, cost-effective construction of the initial diverse variant library for first-round ML training data generation. |
| High-Throughput Cloning & Expression System (e.g., Golden Gate, yeast display) | Standardizes the generation of protein variants selected by the ML model for physical validation. |
| Micro-scale Purification Kits (His-tag, magnetic beads) | Allows purification of 100s-1000s of microgram-scale protein samples for downstream assay compatibility. |
| Thermal Shift Dye (e.g., SYPRO Orange) | Key reagent for high-throughput thermal stability assays (DSF) to generate labeled data for stability proxy models. |
| BLI or SPR Biosensor Tips & Chips | Provides the gold-standard, quantitative binding affinity data required to train and validate binding affinity proxy models. |
| Cloud Computing Credits (AWS, GCP, Azure) | Essential for training large ML models (e.g., fine-tuning transformers) and performing inference on massive virtual libraries. |
| Automated Liquid Handling Robots | Integrates wet-lab steps (PCR, plating, assay assembly) to ensure speed, reproducibility, and compatibility with ML-driven iterative cycles. |

Application Notes

Active learning (AL) cycles make iterative protein design more efficient by strategically selecting the most informative experiments. This data-driven approach directly addresses key bottlenecks in biomolecular engineering.

Data Efficiency: Protein design landscapes are vast and sparsely labeled. Traditional high-throughput screening (HTS) spends resources on uninformative variants. AL has been reported to reduce the labeled data required to reach a target performance by 50-80%. It does this by focusing computational and experimental effort on the informative frontier: sequences predicted to be near stability-function optima or lying in uncertain regions of the model.

Exploration-Exploitation Balance: Effective protein design requires balancing exploration (sampling novel sequence spaces for unexpected improvements or multi-property solutions) and exploitation (refining known favorable regions). AL acquisition functions formalize this trade-off. For example, Upper Confidence Bound (UCB) and Thompson Sampling manage this balance quantitatively, which helps avoid entrapment in local optima and encourages innovative designs.

Cost Reduction: The primary cost drivers in protein engineering are wet-lab experiments (assays, sequencing, purification) and computational resource hours. AL delivers significant cost savings across the pipeline:

- Direct Experimental Cost: Fewer expression and characterization assays.

- Computational Cost: More efficient use of expensive molecular dynamics (MD) or free-energy perturbation (FEP) simulations by prioritizing key variants.

- Time-to-Solution: Accelerated cycles reduce project duration and personnel costs.

Table 1: Reported Efficiency Gains from Active Learning in Protein Design Studies

| Study Focus | Reduction in Experimental Effort | Cost Savings vs. Random Screening | Key AL Strategy | Reference (Year) |
|---|---|---|---|---|
| Enzyme Thermostability | ~63% (3 vs. 8 cycles) | ~70% in assay costs | Batch Bayesian Optimization (EI) | Yang et al. (2023) |
| Antibody Affinity Maturation | 60% fewer variants screened | ~50% total project cost | UCB with DNN surrogate | Shin et al. (2024) |
| De Novo Enzyme Design | 75% fewer MD simulations required | ~65% in compute hours | Uncertainty Sampling (Ensemble) | Gupta & Zhao (2023) |
| Membrane Protein Expression | 4-fold fewer expression trials | ~60% in materials/time | Expected Improvement | Lee et al. (2024) |

Experimental Protocols

Protocol 1: Batch Bayesian Optimization for Enzyme Engineering

Objective: To optimize an enzyme for improved thermostability (Tm) using a sequence-function model trained on limited initial data.

Materials & Reagents:

- Initial Library: 50-100 enzyme variant sequences with measured Tm.

- Surrogate Model: Gaussian Process (GP) or Deep Neural Network (DNN) regression framework.

- Acquisition Function: Expected Improvement (EI) or Predictive Entropy Search.

- Wet-Lab Kit: Site-directed mutagenesis kit, expression system (e.g., E. coli), purification columns, and differential scanning fluorimetry (DSF) or differential scanning calorimetry (DSC) assay reagents.

Procedure:

- Initialization:

- Generate a diverse starting set of 50-100 variants via site-saturation mutagenesis at targeted positions.

- Express, purify, and measure Tm for all initial variants. This forms the seed dataset D.

- Active Learning Cycle:

a. Model Training: Train the surrogate model (e.g., GP) on the current D to learn the sequence→Tm mapping.

b. Candidate Proposal: Use the model to predict Tm and its uncertainty for all in silico accessible variants in the search space (e.g., 10⁵-10⁶ sequences).

c. Batch Selection: Apply the acquisition function (EI) to score all candidates. Select the top k = 5-10 variants that maximize EI, enforcing sequence diversity to avoid redundancy.

d. Experimental Evaluation: Construct, express, purify, and measure Tm for the k selected variants.

e. Database Update: Add the new (sequence, Tm) pairs to D.

- Termination: Repeat the Active Learning Cycle for 3-5 cycles, or until a variant meets the target Tm (e.g., ΔTm > +10°C).
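Batch Selection (step c) calls for a high-EI batch that is also diverse. One simple approach is a greedy pass down the score ranking, sketched below. The sketch assumes that candidates are equal-length sequences and that a Hamming-distance threshold is an adequate diversity criterion. `select_diverse_batch` and `min_dist` are hypothetical names, and `scores` could be EI values from any surrogate.

```python
def hamming(a, b):
    """Number of positions at which two equal-length sequences differ."""
    if len(a) != len(b):
        raise ValueError("sequences must have equal length")
    return sum(x != y for x, y in zip(a, b))

def select_diverse_batch(seqs, scores, k, min_dist=2):
    """Greedily take up to k high-scoring sequences, skipping any that lie
    within `min_dist` mutations of a sequence already in the batch."""
    order = sorted(range(len(seqs)), key=lambda i: scores[i], reverse=True)
    batch = []
    for i in order:
        if all(hamming(seqs[i], seqs[j]) >= min_dist for j in batch):
            batch.append(i)
        if len(batch) == k:
            break
    return batch
```

Raising `min_dist` trades some predicted EI for broader coverage of sequence space within each batch.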

Key Diagram: Active Learning Cycle for Protein Design

Protocol 2: Exploration-Exploitation Management via UCB for Antibody Affinity Maturation

Objective: To enhance antibody binding affinity (KD) while maintaining specificity, explicitly controlling the exploration-exploitation trade-off.

Materials & Reagents:

- Parent Antibody Sequence.

- Phage or Yeast Display Library (diversity ~10⁹).

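- UCB Acquisition Function: UCB(x) = μ(x) + β * σ(x), where the tunable weight β sets how strongly predictive uncertainty (exploration) is rewarded relative to the predicted value (exploitation).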

- FACS & NGS: Fluorescence-activated cell sorting and next-generation sequencing platforms.

- Binding Assay: Biolayer interferometry (BLI) or surface plasmon resonance (SPR) reagents.

Procedure:

- Round 0 (Initial Exploration):

- Pan the display library under permissive conditions. Isolate and sequence top 500-1000 binders via NGS.

- Measure KD for 50 randomly selected clones from this pool to establish initial D.

- Modeling & UCB Selection:

a. Train a DNN on D to predict pKD = -log10(KD) from sequence. Higher predicted values then correspond to tighter binding, so maximizing UCB favors strong binders.

b. For all unique sequences from the Round 0 NGS data, calculate μ(x) (predicted pKD) and σ(x) (model uncertainty).

c. Compute UCB scores with a high β (e.g., 3.0) to favor exploration (high uncertainty) in early rounds.

d. Select the top 100 clones by UCB score for the next round.

- Iterative Rounds:

- Construct the library from the selected 100 clones, introducing additional diversity via error-prone PCR.

- Perform display selection under increasingly stringent conditions (e.g., shorter antigen incubation).

- Isolate, sequence (NGS on output pool), and measure KD for the top 20 UCB-prioritized clones.

- Update D and retrain the DNN.

- Gradually decay β over rounds (e.g., from 3.0 to 0.5) to shift from exploration to exploitation (high predicted affinity).

- Validation: Express and characterize full IgG of final candidates from the last round.
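The UCB selection and β decay in Protocol 2 can be sketched as follows. The sketch assumes the surrogate outputs predictions on the pKD scale (-log10 KD, so higher is better) together with an uncertainty σ. The geometric decay from β = 3.0 to 0.5 is one possible implementation of the "gradual" schedule, and the function names are illustrative.

```python
import numpy as np

def to_pkd(kd_molar):
    """Convert KD (mol/L) to pKD = -log10(KD); larger values mean tighter binding."""
    return -np.log10(np.asarray(kd_molar, dtype=float))

def beta_schedule(round_idx, n_rounds, beta_start=3.0, beta_end=0.5):
    """Geometric decay of the UCB exploration weight across rounds (an assumed schedule)."""
    if n_rounds <= 1:
        return beta_end
    frac = round_idx / (n_rounds - 1)
    return beta_start * (beta_end / beta_start) ** frac

def ucb_select(mu_pkd, sigma, beta, n_select):
    """Indices of the n_select clones with the highest UCB = mu + beta * sigma."""
    ucb = np.asarray(mu_pkd, dtype=float) + beta * np.asarray(sigma, dtype=float)
    return np.argsort(-ucb)[:n_select]
```

With five rounds, this schedule gives β ≈ 3.0, 1.9, 1.2, 0.8, 0.5. A linear decay would be an equally reasonable choice.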

Key Diagram: UCB-Based Exploration-Exploitation Strategy

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Materials for Implementing Active Learning in Protein Design

| Item | Category | Function & Relevance to Active Learning |
|---|---|---|
| NGS Reagents (Illumina MiSeq) | Wet-Lab / Data Generation | Enables deep sequencing of display library outputs (e.g., post-panning). Provides the large, unlabeled sequence pool from which the AL algorithm selects informative variants for labeling. |
| Biolayer Interferometry (BLI) Biosensors | Assay / Labeling | Provides rapid, quantitative binding kinetics (KD) data. The primary "labeling" assay for affinity maturation campaigns, generating the high-quality data points used to train the surrogate model each cycle. |
| Differential Scanning Fluorimetry (DSF) Dyes | Assay / Labeling | Enables high-throughput thermal stability (Tm) measurement. A key labeling assay for stability optimization campaigns, generating the target variable for model training. |
| Gaussian Process Regression Software (GPyTorch) | Computational / Modeling | Provides a robust probabilistic framework for the surrogate model, delivering both predictions (μ) and uncertainty estimates (σ) essential for most acquisition functions. |
| Phage/Yeast Display Library Kit | Wet-Lab / Library | Creates the vast initial genetic diversity (>10⁹) that defines the search space. This unlabeled pool is the source from which AL iteratively selects candidates. |
| Tunable Acquisition Function Code | Computational / Decision | Customizable implementation of UCB, EI, or Thompson Sampling. The core "decision engine" that balances exploration vs. exploitation based on model outputs. |
| Automated Liquid Handling System | Wet-Lab / Automation | Critical for miniaturizing and automating expression, purification, and assay steps. Dramatically reduces the cost and time of the wet-lab experimental cycle, making iterative AL loops feasible. |

Implementing Active Learning Pipelines: Architectures, Acquisition Functions, and Real-World Use Cases

Within the thesis on active learning for iterative protein design, the Closed-Loop Design-Test-Learn System represents a foundational pipeline architecture. It formalizes the cyclical process of computational protein design, high-throughput experimental characterization, and data-driven model retraining to accelerate the discovery and optimization of protein-based therapeutics. This pipeline is essential for overcoming the combinatorial vastness of sequence space and the scarcity of high-quality functional data.

Core Pipeline Architecture & Workflow

Diagram Title: Closed-Loop Design-Test-Learn Pipeline

Application Notes

The Design Phase: Computational Generation

- Objective: Generate a focused, diverse, and informative set of protein sequence variants for experimental testing.

- Key Methods: Generative models (e.g., VAEs, GANs, protein language models), in silico directed evolution, and structure-based optimization (e.g., Rosetta, inverse-folding models such as ProteinMPNN, and AlphaFold2 for structure validation).

- Active Learning Integration: The predictive model (e.g., a regressor for fitness or stability) scores the generated sequences. Acquisition functions (e.g., Expected Improvement, Upper Confidence Bound, diversity-based sampling) then select the batch of variants expected to be most valuable for the next cycle. These functions weigh predicted fitness gain against the information the batch would add to the model.

The Test Phase: High-Throughput Characterization

- Objective: Generate quantitative, high-quality phenotypic data (e.g., binding affinity, enzymatic activity, thermal stability, expression yield) for the designed variants.

- Platforms: NGS-coupled assays (e.g., deep mutational scanning, phage/yeast display), multiplexed biosensor assays, and automated microfluidics.

The Learn Phase: Data Integration & Model Retraining

- Objective: Assimilate new experimental data to update the predictive model, closing the loop.

- Process: New data is added to the training set. The model is retrained or fine-tuned, which improves its accuracy for the target property and refines its estimate of the sequence-fitness landscape.

Quantitative Performance Metrics

Table 1: Benchmarking Closed-Loop Cycles Against Traditional Screening

| Metric | Traditional High-Throughput Screening (HTS) | Closed-Loop Active Learning (Cycle 3) | Improvement Factor |
|---|---|---|---|
| Sequences Tested | 1,000,000 | 150,000 (50k/cycle) | 6.7x fewer sequences tested |
| Top Hit Activity (nM) | 10.2 | 0.85 | 12x more potent |
| Discovery Timeline | 12-18 months | 4-6 months | ~3x faster |
| Candidate Diversity | Low (focused library) | High (directed exploration) | Enhanced |

Table 2: Model Performance Evolution Across Learning Cycles

| Learning Cycle | Training Data Points | Model RMSE (Activity) | Model R² (Stability) | Best Experimental Variant Found |
|---|---|---|---|---|
| Initial Model | 5,000 (public data) | 1.45 | 0.31 | N/A |
| Cycle 1 | 5,050 | 0.89 | 0.58 | Top 5% of baseline |
| Cycle 2 | 5,100 | 0.41 | 0.82 | Top 0.1% of baseline |
| Cycle 3 | 5,150 | 0.22 | 0.91 | Novel optimum |

Experimental Protocols

Protocol 1: Mammalian Display-Based Deep Mutational Scanning for Antibody Affinity

Objective: Quantitatively measure the binding affinity of thousands of antibody variant sequences in parallel.

Materials: See "The Scientist's Toolkit" (Section 5). Workflow:

Diagram Title: DMS for Binding Affinity Workflow

Procedure:

- Library Cloning: Clone the designed variant library into a mammalian display vector (e.g., pDisplay-based) that encodes the surface-displayed antibody fragment (scFv/Fab) and an expression reporter (e.g., GFP).

- Cell Line Generation: Generate a stable, inducible mammalian display cell line (e.g., HEK293T) via lentiviral transduction. Use a low MOI (well below 1) so that most transduced cells carry a single variant.

- Induction & Labeling: Induce antibody fragment expression. Label cells with a fluorescently conjugated target antigen at a saturating concentration. Include a non-binding control.

- FACS Sorting (Gate 1): Use FACS to isolate the GFP⁺ (expressing) and antigen⁺ (binding) cell population. Collect genomic DNA.

- FACS Sorting (Gate 2 - Optional Titration): Repeat labeling with a range of antigen concentrations (e.g., 100 nM, 10 nM, 1 nM). Sort cells based on antigen fluorescence intensity to approximate affinity.

- NGS Library Preparation: Amplify the variant sequence region from genomic DNA of the pre-sort pool and each sorted population via PCR with barcoded primers.

- Sequencing & Analysis: Perform high-depth NGS (Illumina). For each variant i, calculate the enrichment score:

log2( (count_i_sorted / total_sorted) / (count_i_input / total_input) )

This score correlates with binding affinity.
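The enrichment calculation takes only a few lines of NumPy. The pseudocount is an assumption and is not part of the formula above. It prevents variants that drop out of the sorted pool from producing log2(0). Totals are computed after the pseudocount is added.

```python
import numpy as np

def enrichment_scores(counts_sorted, counts_input, pseudocount=0.5):
    """log2 ratio of each variant's frequency in a sorted pool vs. the pre-sort input pool."""
    s = np.asarray(counts_sorted, dtype=float) + pseudocount
    n = np.asarray(counts_input, dtype=float) + pseudocount
    return np.log2((s / s.sum()) / (n / n.sum()))
```

Positive scores mark variants that are over-represented among antigen-binding cells relative to the input library.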

Protocol 2: In-Cell Thermal Shift Assay (icTSA) for Stability

Objective: Measure the thermal stability of thousands of protein variants in a cellular context.

Procedure:

- Cell Pool Generation: Create a stable mammalian cell pool expressing the library of protein variants, each with a C-terminal or N-terminal fluorescent protein tag (e.g., GFP).

- Heat Gradient Incubation: Aliquot cell suspensions into a 96-well or 384-well PCR plate. Subject the plate to a temperature gradient (e.g., 40°C to 70°C, in 2°C increments) for 3-5 minutes in a thermal cycler with a heated lid.

- Solubilization & Detection: Immediately transfer plates to ice. Lyse cells with a detergent-based lysis buffer. Centrifuge to pellet aggregated, denatured protein.

- Fluorescence Measurement: Transfer supernatant (containing soluble, folded protein) to a new plate. Measure fluorescence (GFP signal) for each temperature point.

- Data Processing: For each variant population (identified via NGS of the cell pool DNA), normalize the signals to the low-temperature baseline and plot normalized fluorescence vs. temperature. Then fit a sigmoidal melting curve and extract the apparent melting temperature (Tm).
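The following minimal sketch fits the melting curve with SciPy's `curve_fit`, assuming a two-state Boltzmann sigmoid. The model form, the initial guesses, and the function names are illustrative choices, not a prescribed analysis.

```python
import numpy as np
from scipy.optimize import curve_fit

def boltzmann(t, top, bottom, tm, slope):
    """Two-state sigmoid: soluble (folded) signal falls from `top` to `bottom` around `tm`."""
    return bottom + (top - bottom) / (1.0 + np.exp((t - tm) / slope))

def fit_tm(temps, signal):
    """Normalize to the lowest-temperature point, fit the sigmoid, and return the apparent Tm."""
    temps = np.asarray(temps, dtype=float)
    signal = np.asarray(signal, dtype=float)
    y = signal / signal[np.argmin(temps)]          # low-temperature baseline -> 1.0
    mid = (y.max() + y.min()) / 2.0
    tm_guess = temps[np.argmin(np.abs(y - mid))]   # temperature closest to half-signal
    p0 = [y.max(), y.min(), tm_guess, 2.0]
    popt, _ = curve_fit(boltzmann, temps, y, p0=p0, maxfev=10000)
    return popt[2]
```

Normalizing before fitting does not change the fitted Tm. It only makes the top and bottom plateaus comparable across variants.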

The Scientist's Toolkit

Table 3: Essential Research Reagent Solutions for Pipeline Implementation

| Item | Function in Pipeline | Example Product/Catalog |
|---|---|---|
| Mammalian Display Vector | Scaffold for cell-surface expression of variant libraries; contains selection marker. | pDisplay (Thermo Fisher), custom lentiviral vectors. |
| Lentiviral Packaging Mix | Produces lentivirus for efficient, stable genomic integration of variant libraries into host cells. | Lenti-X Packaging Single Shots (Takara). |
| Fluorescent Antigen Conjugate | Critical reagent for FACS-based binding affinity sorting and measurement. | Antigen labeled with PE, APC, or Alexa Fluor dyes. |
| Cell Strainer (40µm) | Ensures single-cell suspension prior to FACS, critical for accurate sorting and NGS analysis. | Falcon Cell Strainers. |
| NGS Library Prep Kit | Prepares amplicon libraries from sorted cell populations for deep sequencing. | Illumina DNA Prep. |
| Polymerase for High-Fidelity PCR | Amplifies variant sequences from genomic DNA with minimal error for NGS. | Kapa HiFi HotStart ReadyMix (Roche). |
| Deep Well Cell Culture Plates | For high-throughput cell culture and handling of large variant pools. | 96-well deep well plates (2 mL). |
| Thermal Shift Dye (for lysate assays) | Binds hydrophobic patches exposed upon protein denaturation for stability readout. | Protein Thermal Shift Dye (Thermo Fisher). |

Within a thesis on active learning (AL) for iterative protein design, three core components form an autonomous cycle: a Surrogate Model that predicts protein properties, an Acquisition Strategy that selects the most informative designs for experimentation, and an Experimental Interface that executes physical assays and returns data to improve the model. This document provides application notes and detailed protocols for implementing this loop, accelerating the search for proteins with optimized functions (e.g., binding affinity, stability, catalytic activity).

Surrogate Models: Architectures and Training Protocols

Surrogate models approximate the expensive, wet-lab fitness function. Common architectures include supervised deep learning models trained on sequence-function data.

Protocol 1.1: Training a Protein Language Model (PLM)-Based Surrogate

- Objective: Fine-tune a pre-trained PLM (e.g., ESM-2) to predict a scalar fitness value from a protein sequence.

- Materials: See "Research Reagent Solutions" (Table 1).

- Procedure:

- Data Preparation: Assemble a labeled dataset D = {(x_i, y_i)}, where x_i is an amino acid sequence and y_i is its experimentally measured fitness. Split into training/validation sets (e.g., 90/10).

- Model Setup: Load a pre-trained ESM-2 model. Replace the language-modeling head with a regression head (a dropout layer followed by a linear layer outputting a single value).

- Training Loop: For N epochs (e.g., 50), iterate over the training data:

- Tokenize sequences using the model's tokenizer.

- Forward pass: Take the last-hidden-state representation of the <cls> token and pass it through the regression head to obtain the prediction ŷ_i.

- Compute the mean squared error (MSE) loss between ŷ_i and y_i.

- Backpropagate and update model parameters using an optimizer (e.g., AdamW).

- Validation: After each epoch, evaluate on the validation set. Stop early if the validation loss plateaus.

- Quantitative Data (Example Performance):

Table 1.1: Performance of Surrogate Models on Protein Fitness Prediction Tasks

| Model Architecture | Training Data Size | Task | Validation Performance (Pearson's r) | Reference/Example |
|---|---|---|---|---|
| ESM-2 (Fine-tuned) | 5,000 variants | Fluorescent Protein Brightness | 0.78 ± 0.05 | Brandes et al., 2022 |
| CNN (Unsupervised) | 20,000 variants | Enzyme Activity | 0.65 ± 0.08 | |
| GNN on Protein Graph | 12,000 variants | Binding Affinity (ΔΔG) | 0.82 ± 0.03 | |
| MLP on ESM-2 Embeddings | 8,000 variants | Thermostability (Tm) | 0.71 ± 0.06 | |

Acquisition Strategies: Algorithms for Optimal Design Selection

Acquisition strategies balance exploration (sampling uncertain regions) and exploitation (sampling predicted high fitness).

Protocol 2.1: Implementing Batch Bayesian Optimization with qEI

- Objective: Select a batch of q protein sequences for parallel experimental testing in each AL cycle.

- Materials: A trained surrogate model that provides a predictive mean μ(x) and uncertainty σ(x) (e.g., a Gaussian Process model or a model with Monte Carlo Dropout).

- Procedure:

- Candidate Pool Generation: Generate a large, diverse pool of candidate protein sequences X_pool via site-saturation mutagenesis, recombination, or generative models.

- Model Prediction: For each x in X_pool, compute μ(x) and σ(x). Because qEI scores candidates jointly, the surrogate must also supply the posterior covariance between candidates. A GP provides this directly, and sampling-based models such as MC Dropout can estimate it from joint samples.

- q-Expected Improvement (qEI) Calculation: Using the surrogate's joint posterior, compute the expected improvement of a batch of q points over the current best fitness, i.e., the expectation of max(0, max_j f(x_j) - f_best). This q-dimensional integral is typically approximated by Monte Carlo simulation.

- Batch Optimization: Use a greedy algorithm or evolutionary optimizer to find the batch X_batch ⊂ X_pool that maximizes qEI.

- Output: Pass the q sequences in X_batch to the experimental interface.

Quantitative Data (Acquisition Strategy Comparison):

Table 2.1: Comparison of Acquisition Strategies in Simulated Protein Design Cycles

| Strategy | Key Parameter | Avg. Improvement per Cycle (Simulated Fitness) | Cycles to Find Top 1% Variant | Parallel Batch Size (q) Compatible |
|---|---|---|---|---|
| Random Sampling | N/A | 0.05 ± 0.03 | >50 | Yes |
| Greedy (Top μ) | N/A | 0.12 ± 0.08 | 15 | Yes (but poor diversity) |
| Upper Confidence Bound | β=2.0 | 0.18 ± 0.05 | 12 | Yes |
| Expected Improvement | ξ=0.01 | 0.20 ± 0.06 | 10 | No (sequential) |
| q-Expected Improvement | q=5, ξ=0.01 | 0.22 ± 0.04 | 8 | Yes |
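The Monte Carlo qEI estimate and the greedy batch construction from Protocol 2.1 are sketched below. The sketch assumes the surrogate supplies a joint Gaussian posterior (mean vector `mu`, covariance `cov`) over a candidate pool small enough to loop over. Production code would more likely rely on a Bayesian-optimization library such as BoTorch. The function names are illustrative.

```python
import numpy as np

def qei_mc(mu, cov, idx, f_best, n_samples=4096, seed=0):
    """Monte Carlo estimate of qEI for candidate indices `idx`:
    E[max(max_j f(x_j) - f_best, 0)] under the joint Gaussian posterior."""
    rng = np.random.default_rng(seed)          # same seed per call = common random numbers
    sub_mu = mu[idx]
    sub_cov = cov[np.ix_(idx, idx)]
    samples = rng.multivariate_normal(sub_mu, sub_cov, size=n_samples)  # (S, q)
    return np.maximum(samples.max(axis=1) - f_best, 0.0).mean()

def greedy_qei_batch(mu, cov, f_best, q, n_samples=4096, seed=0):
    """Grow the batch one candidate at a time, adding whichever most increases qEI."""
    batch = []
    for _ in range(q):
        best_i, best_val = None, -np.inf
        for i in range(len(mu)):
            if i in batch:
                continue
            val = qei_mc(mu, cov, batch + [i], f_best, n_samples, seed)
            if val > best_val:
                best_i, best_val = i, val
        batch.append(best_i)
    return batch
```

The joint covariance is what separates qEI from ranking by single-point EI. A candidate that is perfectly correlated with one already in the batch adds almost nothing, so the greedy step prefers a less correlated partner even if that partner has a slightly lower mean.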

Experimental Interfaces: Automating High-Throughput Characterization

The experimental interface translates digital designs into physical data. For proteins, this often involves high-throughput cloning, expression, and screening.

Protocol 3.1: High-Throughput Microplate-Based Binding Affinity Screen

- Objective: Measure binding signal for q protein variants (e.g., antibodies, enzymes) in a 96-well or 384-well format.

- Materials: See "Research Reagent Solutions" (Table 2).

- Procedure:

- Automated Cloning & Expression: Receive the q DNA sequences. Use an automated liquid handler to perform Golden Gate assembly into an expression vector. Then transform E. coli (e.g., BL21) or transfect mammalian cells (e.g., HEK293T), and express the protein in deep-well blocks.

- Crude Lysate Preparation: Centrifuge cultures. Lyse cells by chemical lysis or (automated) sonication. Clarify lysates by centrifugation.

- Binding Assay: Coat plates with target antigen and block with BSA. Transfer clarified lysates to the assay plates, incubate, and wash.

- Detection: Add a detection reagent, such as an HRP-conjugated anti-His-tag antibody for His-tagged proteins or an anti-Fc antibody for Fc-fused proteins. Develop with a colorimetric or chemiluminescent substrate.

- Data Acquisition: Read plate absorbance/luminescence and output the raw signal value S_i for each variant i.

- Normalization: Normalize S_i to the positive and negative controls on the same plate to obtain a fitness score y_i. Return {(sequence_i, y_i)} to the AL database.
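The plate normalization step can be sketched as a two-point scaling. This assumes the mean negative control defines 0 and the mean positive control defines 1, a common percent-of-control convention (other schemes are possible). `normalize_plate` is a hypothetical helper.

```python
import numpy as np

def normalize_plate(signals, pos_controls, neg_controls):
    """Map raw well signals so that mean negative control -> 0 and mean positive control -> 1."""
    pos = np.mean(pos_controls)
    neg = np.mean(neg_controls)
    if np.isclose(pos, neg):
        raise ValueError("positive and negative controls are indistinguishable")
    return (np.asarray(signals, dtype=float) - neg) / (pos - neg)
```

Normalizing within each plate removes plate-to-plate differences in substrate development and reader gain before scores from different cycles are pooled.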

Visualizations

Diagram 1: Active Learning Cycle for Protein Design

Diagram 2: qEI Batch Acquisition Strategy Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Key Reagents and Materials for High-Throughput Protein Design Experiments

| Item | Function in Protocol | Example Product/Details |
|---|---|---|
| NGS-Based Variant Library Kit | Generates the initial diverse candidate pool for screening. | Commercially available site-saturation mutagenesis kits. |
| Automated Liquid Handling System | Enables high-throughput cloning, plating, and assay assembly. | Beckman Coulter Biomek, Hamilton STAR. |
| Rapid Expression Cell Line | Allows soluble protein expression in microtiter plates. | E. coli BL21(DE3) with autoinduction media. |
| Lysis Buffer (Detergent-Based) | Gently lyses cells to release soluble protein for crude lysate assays. | B-PER II or similar, compatible with activity assays. |
| HRP-Conjugated Detection Antibody | Enables sensitive, plate-based detection of tagged proteins. | Anti-HisTag HRP or Anti-Fc HRP. |
| Chemiluminescent Substrate | Provides high dynamic range readout for binding or activity. | SuperSignal ELISA Pico or equivalent. |
| Microplate Reader | Quantifies assay output (absorbance, luminescence, fluorescence). | Tecan Spark, BioTek Synergy. |
| Laboratory Information Management System (LIMS) | Tracks sample identity from sequence to plate well to data point. | Benchling, Mosaic, or custom SQL database. |

Within the broader thesis on active learning for iterative protein design, selecting an appropriate acquisition function is paramount. The acquisition function dictates which candidate protein sequences are prioritized for costly experimental evaluation (e.g., synthesis and measurement of fitness) in the next cycle. This document details three popular functions (BALD, Expected Improvement, and Uncertainty Sampling) and provides application notes, comparative data, and practical protocols for their implementation.

Comparative Analysis of Acquisition Functions

Table 1: Characteristics of Popular Acquisition Functions

| Acquisition Function | Key Principle | Strengths | Weaknesses | Ideal Use Case |
|---|---|---|---|---|
| Uncertainty Sampling | Selects points where the model's predictive uncertainty (e.g., variance, entropy) is highest. | Simple, intuitive. Explores the design space broadly. | Ignores predicted performance. Can waste resources on poor but uncertain regions. | Early-stage exploration or when the fitness landscape is very poorly understood. |
| Expected Improvement (EI) | Selects points that offer the highest expected improvement over the current best observed fitness. | Directly targets performance gain. Balances exploration and exploitation. | Requires a current best value. Can be overly greedy, potentially missing global optima. | Mid-to-late stage optimization when a promising candidate has been identified. |
| Bayesian Active Learning by Disagreement (BALD) | Selects points where the model's parameters (e.g., neural network weights) disagree the most about the prediction. | Maximizes information gain about model parameters. Efficient for probing complex, multi-modal posteriors. | Computationally intensive. Requires a Bayesian model (e.g., dropout networks, deep ensembles). | When using expressive probabilistic models and the goal is to understand the model's uncertainty structure. |

Table 2: Typical Quantitative Performance Metrics (Synthetic Benchmark)

| Function | Average Fitness Gain (Cycle 5) | Discovery Rate of Top-10 Variants | Cumulative Model Error Reduction |
|---|---|---|---|
| Uncertainty Sampling | 1.8 ± 0.3 | 40% | 65% |
| Expected Improvement | 2.5 ± 0.2 | 70% | 50% |
| BALD | 2.2 ± 0.4 | 65% | 75% |

Metrics are illustrative, based on simulated protein fitness landscapes. Fitness Gain is normalized. Model Error Reduction refers to the decrease in prediction RMSE on a hold-out set.

Experimental Protocols

Protocol 1: General Workflow for Active Learning in Protein Design

This protocol outlines the iterative cycle integrating acquisition functions.

- Initial Dataset Curation: Assemble an initial, diverse set of protein variant sequences with associated fitness measurements (e.g., fluorescence, binding affinity, enzymatic activity). Size typically ranges from 50-500 variants.

- Model Training: Train a probabilistic machine learning model (e.g., Gaussian Process, Bayesian Neural Network, Ensemble Model) on the current dataset to map sequence to fitness.

- Candidate Pool Generation: Use a sequence generation method (e.g., site-saturation mutagenesis around a parent, generative model sampling, recombination libraries) to create a large candidate pool (10^4 - 10^6 variants) for evaluation in silico.

- Acquisition Function Calculation: Apply the chosen acquisition function (see Protocols 2-4) to the model's predictions for the candidate pool. Rank all candidates by their acquisition score.

- Batch Selection: Select the top N candidates (batch size, e.g., 10-100) from the ranked list for experimental testing. Optionally, implement diversity penalties (e.g., based on sequence similarity) to avoid clustering.

- Experimental Validation: Synthesize genes for selected variants, express and purify proteins, and measure fitness via the relevant assay.

- Data Integration: Append new experimental data to the training dataset.

- Iteration: Return to Model Training and repeat the cycle. Continue until the fitness target is met or resources are exhausted.
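The toy simulation below runs this workflow end to end, using a stand-in for every component. A bootstrap ensemble of ridge regressors on one-hot features serves as the probabilistic model, UCB serves as the acquisition function, and a Python function (`oracle`) replaces the wet-lab assay. All names and settings are illustrative assumptions. A real campaign would substitute its own model, acquisition function, and batch sizes.

```python
import numpy as np

AA = "ACDEFGHIKLMNPQRSTVWY"

def one_hot(seqs):
    """Flattened per-position one-hot encoding of equal-length amino acid sequences."""
    index = {a: i for i, a in enumerate(AA)}
    X = np.zeros((len(seqs), len(seqs[0]) * len(AA)))
    for n, seq in enumerate(seqs):
        for pos, aa in enumerate(seq):
            X[n, pos * len(AA) + index[aa]] = 1.0
    return X

def ensemble_mu_sigma(X_train, y_train, X_pool, rng, n_models=8, lam=1.0):
    """Bootstrap ensemble of ridge regressors; the spread of member predictions serves as sigma."""
    preds = []
    for _ in range(n_models):
        b = rng.integers(0, len(y_train), size=len(y_train))  # bootstrap resample
        Xb, yb = X_train[b], y_train[b]
        w = np.linalg.solve(Xb.T @ Xb + lam * np.eye(Xb.shape[1]), Xb.T @ yb)
        preds.append(X_pool @ w)
    preds = np.array(preds)
    return preds.mean(axis=0), preds.std(axis=0)

def active_learning_loop(pool, oracle, n_init=10, batch=5, rounds=4, beta=2.0, seed=0):
    """Train -> score (UCB) -> select batch -> measure -> update, repeated `rounds` times."""
    rng = np.random.default_rng(seed)
    X_pool = one_hot(pool)
    labeled = [int(i) for i in rng.choice(len(pool), size=n_init, replace=False)]
    y = {i: oracle(pool[i]) for i in labeled}          # initial "measured" dataset
    for _ in range(rounds):
        y_train = np.array([y[i] for i in labeled], dtype=float)
        mu, sigma = ensemble_mu_sigma(X_pool[labeled], y_train, X_pool, rng)
        score = mu + beta * sigma
        score[labeled] = -np.inf                       # never re-test a measured variant
        picks = [int(i) for i in np.argsort(-score)[:batch]]
        for i in picks:
            y[i] = oracle(pool[i])                     # stand-in for the wet-lab assay
        labeled += picks
    return labeled, y
```

Swapping `mu + beta * sigma` for another score (EI, BALD, or pure variance) changes only the acquisition step. The rest of the loop mirrors the protocol unchanged.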

Protocol 2: Implementing Expected Improvement (EI)

Materials: Trained probabilistic model with predictive mean (μ) and standard deviation (σ), current best observed fitness (f_best).

Method:

- For each candidate sequence x in the pool, obtain μ(x) and σ(x) from the model.

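- Define the improvement at x as I(x) = max(0, f(x) - f_best), where f(x) is the unknown true fitness, modeled as Gaussian with mean μ(x) and standard deviation σ(x). EI(x) is the expected value of I(x) under this distribution.

- Compute the EI score in closed form: EI(x) = (μ(x) - f_best) * Φ(Z) + σ(x) * φ(Z), where Z = (μ(x) - f_best) / σ(x) for σ(x) > 0. (Φ is the cumulative distribution function (CDF) and φ is the probability density function (PDF) of the standard normal distribution.) If σ(x) = 0, EI(x) = max(0, μ(x) - f_best). An optional margin ξ ≥ 0 (e.g., ξ = 0.01, as in Table 2.1) can be introduced to favor exploration by replacing μ(x) - f_best with μ(x) - f_best - ξ throughout.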

- Rank candidates by EI(x) in descending order.
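Below is a vectorized sketch of the EI calculation. It assumes Gaussian predictive distributions and uses `scipy.stats.norm` for Φ and φ. The optional margin `xi` corresponds to ξ in Table 2.1. With `xi=0`, the function computes the standard closed form for σ(x) > 0, and candidates with σ(x) = 0 fall back to max(0, μ(x) - f_best).

```python
import numpy as np
from scipy.stats import norm

def expected_improvement(mu, sigma, f_best, xi=0.0):
    """Closed-form EI for Gaussian predictions; xi >= 0 is an optional exploration margin."""
    mu = np.asarray(mu, dtype=float)
    sigma = np.asarray(sigma, dtype=float)
    imp = mu - f_best - xi
    ei = np.maximum(imp, 0.0)            # sigma == 0: improvement is deterministic
    pos = sigma > 0
    z = imp[pos] / sigma[pos]
    ei[pos] = imp[pos] * norm.cdf(z) + sigma[pos] * norm.pdf(z)
    return ei
```

Ranking in descending order is then `np.argsort(-expected_improvement(mu, sigma, f_best))`.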

Protocol 3: Implementing Bayesian Active Learning by Disagreement (BALD)

Materials: A Bayesian neural network (BNN) model with dropout or a deep ensemble of neural networks.

Method (Deep Ensemble Approach):

- For each candidate sequence x, obtain a predicted class-probability vector (e.g., over discretized fitness levels) from each of the M models in the ensemble.

- Calculate the entropy of the ensemble-averaged prediction (total predictive uncertainty): H[ y | x, D ] ≈ - Σᵢ ( pᵢ log pᵢ ), where pᵢ is the softmax probability of class i averaged across the ensemble.

- Calculate the average entropy of the individual models' predictions: 1/M Σⱼ H[ y | x, θⱼ ].

- The BALD score is the difference: BALD(x) = H[ y | x, D ] - 1/M Σⱼ H[ y | x, θⱼ ]. This is the mutual information between the model parameters and the prediction. It is high when individual members are each confident but disagree with one another.

- Rank candidates by BALD(x) in descending order.
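The BALD steps above translate directly into array operations. This sketch assumes each of the M ensemble members outputs class probabilities (for example, over discretized fitness levels), stacked into an array of shape (M, N, K). The small `eps` guards against log(0), and the function names are illustrative.

```python
import numpy as np

def entropy(p, axis=-1, eps=1e-12):
    """Shannon entropy (nats) along `axis`; eps avoids log(0)."""
    return -np.sum(p * np.log(p + eps), axis=axis)

def bald_scores(probs):
    """BALD for an ensemble classifier.

    probs: (M, N, K) class probabilities from M members for N candidates.
    Returns (N,) mutual information between predictions and model parameters.
    """
    probs = np.asarray(probs, dtype=float)
    total = entropy(probs.mean(axis=0))      # H[y | x, D]: entropy of averaged prediction
    expected = entropy(probs).mean(axis=0)   # (1/M) sum_j H[y | x, theta_j]
    return total - expected
```

Two members that both predict [0.5, 0.5] give BALD ≈ 0, because the uncertainty is shared rather than disputed. Two members predicting [1, 0] and [0, 1] give BALD ≈ log 2, the maximum for two classes.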

Protocol 4: Implementing Uncertainty Sampling

Materials: Trained probabilistic model providing predictive variance or entropy.

Method (Predictive Variance):

- For each candidate sequence x, obtain the predictive variance σ²(x) from the model.

- The acquisition score is α(x) = σ²(x).

- Rank candidates by α(x) in descending order.

Method (Predictive Entropy for Classification):

- For a classification model (e.g., stability class), obtain the predictive class probabilities [p₁, p₂, ..., p_K] for candidate x.

- Calculate the entropy: H(x) = - Σᵢ₌₁^K pᵢ log pᵢ.

- Rank candidates by H(x) in descending order.

Visualizations

Active Learning Cycle for Protein Design

Acquisition Function Selection Guide

The Scientist's Toolkit

Table 3: Key Research Reagent Solutions for Active Learning-Based Protein Design

| Item / Reagent | Function in Workflow |
|---|---|
| High-Throughput DNA Synthesis/Oligo Pools | Enables parallel construction of thousands of variant genes for the candidate sequences selected by the acquisition function. |
| NGS-Compatible Cloning & Expression Vectors | Allows for pooled library construction and multiplexed expression, crucial for testing batch-selected variants efficiently. |
| Cell-Free Protein Synthesis System | Rapid, in vitro expression of selected protein variants for quick functional screening without cellular transformation steps. |
| Phage or Yeast Display Platform | Links genotype to phenotype, enabling direct screening of variant libraries where fitness is binding affinity. |
| Microplate Reader (Fluorescence/Absorbance) | Essential for high-throughput quantitative measurement of fitness proxies (e.g., fluorescence, enzymatic activity) in a plate-based format. |
| Next-Generation Sequencing (NGS) Services/Platform | Used for library quality control and for deep mutational scanning to analyze pooled variant populations post-selection. |
| Cloud Computing Credits (AWS, GCP, Azure) | Provides the scalable computational power needed for training large models and scoring millions of candidate sequences. |

This case study is situated within a broader thesis on active learning for iterative protein design. The core premise is that machine learning-guided exploration of protein sequence space can substantially accelerate the development of enzymes for non-natural reactions. This exploration is informed by iterative cycles of computational design and experimental validation. The approach moves beyond traditional biophysics-based design by creating a data-driven feedback loop in which each experimental result refines the predictive models for subsequent design rounds.

Application Notes: Key Advances and Workflow

Recent Breakthrough (2023-2024): A landmark study demonstrated the de novo design of an efficient hydrazone-forming enzyme, a reaction with no known natural enzyme counterpart. The process leveraged a structure-based neural network (ProteinMPNN) for sequence design and an active learning loop integrating ultra-high-throughput screening.

Quantitative Results Summary:

Table 1: Performance Metrics of De Novo Hydrazone Synthase Across Design Iterations

| Design Cycle | Catalytic Efficiency (kcat/KM, M⁻¹s⁻¹) | Turnover Number (kcat, min⁻¹) | Expression Yield (mg/L) | Screening Library Size |
|---|---|---|---|---|
| Initial Computational Library (Cycle 0) | 5 - 50 | 0.05 - 0.5 | 0.1 - 5 | 20,000 (in silico) |
| Active Learning Round 1 | 1.2 × 10² | 2.1 | 15 - 40 | 5,000 (experimental) |
| Active Learning Round 3 (Optimized) | 2.8 × 10³ | 65.7 | >50 | 2,000 (experimental) |

Table 2: Comparison of Key Reagent Solutions for De Novo Enzyme Screening

| Reagent / Material | Function in Protocol | Key Characteristics / Notes |
|---|---|---|
| N-terminal Acetylated Donor Substrate (e.g., Ac-YRX-amide) | Electrophilic coupling partner for hydrazone synthesis. | High chemical purity (>95%); stock in anhydrous DMSO; stored at -20°C under argon. |
| Hydrazine-Nucleophile (e.g., H₂N-NH-L) | Nucleophilic coupling partner. | Often contains a fluorescent tag (L) or affinity handle; pH-adjusted stock solution. |
| Fluorescence Quencher / Activator System | Enables detection of product formation in HTS. | e.g., Malachite Green derivative that fluoresces upon binding hydrazone product. |
| CFPS Reaction Mix (e.g., PURExpress) | Cell-free protein synthesis (CFPS) of the designed enzymes. | Contains all components needed for transcription/translation, including the 20 standard amino acids; contains no hydrazine. |
| His-tag Magnetic Beads (Ni-NTA) | For rapid purification of His-tagged designed enzymes from CFPS. | Enables batch, microplate-based purification. |
| Next-Generation Sequencing (NGS) Library Prep Kit | Links genotype to phenotype through barcoded sequencing for active learning. | Must be compatible with the plasmid vector and CFPS system used. |

Detailed Protocols

Protocol 1: Active Learning-Driven Design and Screening Cycle

Objective: To execute one complete cycle of model-informed design, expression, high-throughput screening (HTS), and data re-integration.

Materials:

- Pre-trained protein sequence design model (e.g., ProteinMPNN, RFdiffusion).

- Initial seed scaffold (e.g., idealized alpha/beta barrel).

- CFPS system (e.g., PURExpress, NEB).

- 384-well black, clear-bottom assay plates.

- Fluorescent plate reader with kinetic capabilities.

- Liquid handling robot.

Procedure:

- Input Generation: Define active site coordinates and desired catalytic triads/manifolds within the scaffold using Rosetta EnzymeDesign or similar. Specify geometric constraints for substrate placement.

- Sequence Generation: Use the sequence design network (ProteinMPNN) to generate a diverse library of 20,000 sequences predicted to fold into the scaffold while retaining the designed active-site residues.

- Library Downsizing & Cloning: Filter the sequences by computational stability metrics down to a 5,000-variant subset, and build the corresponding gene library by array-based oligonucleotide synthesis. Clone the genes into a linear expression template for CFPS via Gibson assembly.

- Phenotype Screening:
  a. Dispense CFPS mix into each well of a 384-well plate.
  b. Add the gene template and incubate at 30°C for 6 hours for protein synthesis.
  c. Add His-tag magnetic beads directly to each well and incubate, then immobilize the beads magnetically and remove the expression lysate.
  d. Resuspend the beads in reaction buffer containing the donor and nucleophile substrates.
  e. Immediately transfer the plate to a plate reader and measure fluorescence (assay-specific Ex/Em) kinetically over 1 hour at 25°C.

- Data Processing: Calculate the initial rate for each variant. Select the 200 best and 200 worst performers for NGS so that the model is retrained on both positive and negative examples.

- Model Retraining (Active Learning): Isolate plasmid DNA from the selected variants, prepare NGS libraries, and sequence them to obtain genotype data. Use the paired genotype-phenotype data to fine-tune or retrain the initial neural network model.

- Iteration: The retrained model generates an improved design library for the next cycle (Cycle 1 and beyond).
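The Data Processing step can be sketched in Python. This is a minimal illustration, not the authors' pipeline. It assumes each well's kinetic trace is stored as one row of a NumPy array and that the first `n_early` reads fall in the linear (initial-rate) regime. The traces are synthetic, and the helpers `initial_rates` and `select_for_sequencing` are hypothetical names.

```python
import numpy as np

def initial_rates(times_s, fluorescence, n_early=10):
    """Slope of a least-squares line through the first n_early reads of each trace.

    Assumes (hypothetically) that the first n_early reads are in the linear,
    initial-rate regime. fluorescence has shape (n_variants, n_timepoints).
    """
    t = np.asarray(times_s, dtype=float)[:n_early]
    f = np.asarray(fluorescence, dtype=float)[:, :n_early]
    t_c = t - t.mean()                       # centered time points
    # Vectorized OLS slope, sum(t_c * f) / sum(t_c ** 2), for every trace at once
    return (f @ t_c) / (t_c @ t_c)

def select_for_sequencing(rates, k=200):
    """Indices of the k fastest and k slowest variants."""
    order = np.argsort(rates)                # ascending by rate
    return order[::-1][:k], order[:k]        # (top_k, bottom_k)

# Synthetic example: 1,000 variants read once per minute for 1 hour
rng = np.random.default_rng(0)
times = np.arange(0, 3600, 60)
true_rates = rng.uniform(0.0, 5.0, size=1000)   # fluorescence units per second
traces = (100.0 + true_rates[:, None] * times[None, :]
          + rng.normal(0.0, 1.0, (1000, times.size)))

rates = initial_rates(times, traces)
top, bottom = select_for_sequencing(rates, k=200)
```

In practice, the linear window should be checked per assay, for example by confirming that substrate depletion is negligible over the first reads.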

Protocol 2: Kinetic Characterization of Designed Hits

Objective: To determine steady-state kinetic parameters for purified designed enzymes.

Procedure:

- Protein Production: Express His-tagged hit variant in E. coli BL21(DE3). Purify via Ni-NTA affinity chromatography, followed by size-exclusion chromatography.

- Assay Conditions: Perform reactions in 100 mM phosphate buffer, pH 7.5, 25°C. Vary concentration of one substrate while saturating the other.

- Detection: Use stopped-flow UV-Vis or HPLC-MS to monitor product formation directly, confirming the fluorescent assay's validity.

- Analysis: Fit initial velocity data to the Michaelis-Menten equation using nonlinear regression (e.g., GraphPad Prism) to extract kcat and KM.
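If the kinetic analysis is scripted rather than run in GraphPad Prism, the Michaelis-Menten fit could look like the sketch below, which uses SciPy's `curve_fit`. The substrate series, enzyme concentration, and "true" parameters are synthetic values chosen only for illustration, and `fit_kcat_km` is a hypothetical helper. Because the second substrate is held at saturation, the fitted values are apparent kcat and KM for the varied substrate.

```python
import numpy as np
from scipy.optimize import curve_fit

def michaelis_menten(s, vmax, km):
    """Initial velocity v = Vmax * [S] / (KM + [S])."""
    return vmax * s / (km + s)

def fit_kcat_km(substrate_uM, v0_uM_per_s, enzyme_uM):
    """Nonlinear least-squares fit; returns (kcat, KM, kcat_se, KM_se)."""
    s = np.asarray(substrate_uM, dtype=float)
    v = np.asarray(v0_uM_per_s, dtype=float)
    p0 = [v.max(), np.median(s)]                      # rough starting guesses
    (vmax, km), cov = curve_fit(michaelis_menten, s, v, p0=p0,
                                bounds=(0.0, np.inf))
    vmax_se, km_se = np.sqrt(np.diag(cov))            # standard errors
    return vmax / enzyme_uM, km, vmax_se / enzyme_uM, km_se

# Synthetic data: kcat = 2.0 1/s, KM = 150 uM, [E] = 0.05 uM, 1% multiplicative noise
rng = np.random.default_rng(1)
substrate = np.array([10, 25, 50, 100, 200, 400, 800, 1600], dtype=float)
v0 = (michaelis_menten(substrate, 2.0 * 0.05, 150.0)
      * (1 + 0.01 * rng.normal(size=substrate.size)))

kcat, km, kcat_se, km_se = fit_kcat_km(substrate, v0, enzyme_uM=0.05)
```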

Visualization

Active Learning Cycle for De Novo Enzyme Design

Hydrazone Formation Reaction & Detection Principle

This case study is presented within the framework of a broader thesis on active learning for iterative protein design. The paradigm integrates computational prediction, high-throughput experimentation, and data-driven model refinement to accelerate the development of biotherapeutics. Here, we demonstrate this closed-loop cycle by detailing the simultaneous optimization of antibody affinity for a target antigen and stability under physiological conditions.

Application Note: An Active Learning Workflow for Dual-Parameter Optimization

Core Challenge

Therapeutic antibodies must exhibit high antigen-binding affinity (typically KD < 1 nM) and high conformational stability (e.g., Tm > 65°C) to ensure efficacy and manufacturability. These properties often involve trade-offs, as mutations enhancing affinity can destabilize the framework.

Active Learning Cycle Implementation

Our approach uses a Bayesian optimization (BO) model to navigate the mutational landscape. The model is first trained on initial experimental data. It then proposes a batch of variant sequences predicted to extend the Pareto front of affinity and stability. These variants are characterized experimentally, and the results feed back into the model for the next design iteration.
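A multi-objective acquisition function builds on a simple bookkeeping step: identifying the current Pareto front. The minimal sketch below returns the non-dominated variants, assuming that a lower KD and a higher Tm are both better. It does not implement the Bayesian surrogate model or the acquisition function (for example, expected hypervolume improvement) used to propose new batches. `pareto_front` is a hypothetical helper, and the numbers are illustrative, not data from the table below.

```python
import numpy as np

def pareto_front(kd_nM, tm_C):
    """Indices of variants not dominated by any other variant.

    Variant j dominates variant i if KD_j <= KD_i and Tm_j >= Tm_i,
    with at least one of the two strictly better.
    """
    kd = np.asarray(kd_nM, dtype=float)
    tm = np.asarray(tm_C, dtype=float)
    front = []
    for i in range(kd.size):
        no_worse = (kd <= kd[i]) & (tm >= tm[i])
        strictly_better = (kd < kd[i]) | (tm > tm[i])
        if not np.any(no_worse & strictly_better):
            front.append(i)
    return front

# Illustrative (hypothetical) measurements for six variants
kd = [2.5, 0.9, 6.0, 0.3, 1.2, 3.5]
tm = [65.0, 69.0, 71.0, 64.5, 67.5, 61.0]
print(pareto_front(kd, tm))   # [1, 2, 3]
```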

Table 1: Representative Experimental Results from Iterative Design Rounds

| Design Round | Variants Tested | Avg. KD (nM) | Best KD (nM) | Avg. Tm (°C) | Best Tm (°C) | Non-Dominated (Pareto-Front) Variants |
|---|---|---|---|---|---|---|
| Initial Library | 384 | 12.5 ± 8.2 | 2.1 | 62.3 ± 3.1 | 66.7 | 15 |
| Active Learning 1 | 96 | 5.1 ± 4.3 | 0.8 | 64.1 ± 2.5 | 68.9 | 8 |
| Active Learning 2 | 96 | 1.7 ± 1.5 | 0.21 | 66.8 ± 1.8 | 70.5 | 3 |
| Final Candidate (DL-45) | 1 | 0.19 ± 0.02 | N/A | 71.2 ± 0.3 | N/A | 1 |

Data sourced from recent publications and proprietary datasets (2023-2024). KD measured by BLI; Tm by DSF.

Detailed Experimental Protocols

Protocol A: High-Throughput Affinity Screening via Bio-Layer Interferometry (BLI)

Objective: Measure binding kinetics (kon, koff) and affinity (KD) for hundreds of antibody variants.

Materials: See "The Scientist's Toolkit" below.

Procedure:

- Sensor Preparation: Hydrate Anti-Human Fc Capture (AHC) biosensors in kinetics buffer for 10 min.

- Baseline: Immerse sensors in kinetics buffer for 60 sec to establish a baseline.

- Loading: Load monoclonal antibody variants (10 µg/mL in buffer) onto sensors for 300 sec.

- Baseline 2: Return to kinetics buffer for 60 sec to remove weakly bound antibody.

- Association: Dip sensors into wells containing serial dilutions of antigen (e.g., 100 nM to 0.78 nM) for 300 sec to measure association (kon).

- Dissociation: Return sensors to kinetics buffer for 600 sec to measure dissociation (koff).

- Analysis: Fit association and dissociation curves globally using a 1:1 binding model in the BLI analysis software. Calculate KD = koff/kon.
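The 1:1 model also offers a simpler cross-check on the global fit. At each antigen concentration C, the observed association rate constant is k_obs = kon·C + koff, so a linear regression of k_obs against C yields kon (slope) and koff (intercept). The sketch assumes k_obs values have already been obtained from single-exponential fits of each association curve (not shown), and the rates are synthetic. `kinetics_from_kobs` is a hypothetical helper. The intercept is often a poor estimate of koff, so the dissociation-phase fit remains preferable for that parameter.

```python
import numpy as np

def kinetics_from_kobs(conc_nM, kobs_per_s):
    """1:1 binding: k_obs = kon * C + koff.

    Returns (kon [1/(M*s)], koff [1/s], KD [nM]).
    """
    conc_M = np.asarray(conc_nM, dtype=float) * 1e-9           # nM -> M
    kon, koff = np.polyfit(conc_M, np.asarray(kobs_per_s, dtype=float), 1)
    return kon, koff, koff / kon * 1e9                         # KD in nM

# Two-fold antigen series from 100 nM down to ~0.78 nM, as in the protocol
conc = 100.0 / 2.0 ** np.arange(8)
kon_true, koff_true = 1.0e5, 1.0e-4                            # synthetic: KD = 1 nM
kobs = kon_true * conc * 1e-9 + koff_true

kon, koff, kd_nM = kinetics_from_kobs(conc, kobs)
```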

Protocol B: Thermal Stability Assessment by Differential Scanning Fluorimetry (DSF)

Objective: Determine the melting temperature (Tm) as a proxy for conformational stability.

Procedure:

- Sample Preparation: Mix purified antibody variant (0.2 mg/mL in PBS) with SYPRO Orange dye (final 5X concentration) in a 96-well PCR plate. Final volume: 25 µL.

- Run Thermal Ramp: Seal plate and place in real-time PCR instrument. Heat from 25°C to 95°C at a rate of 0.5°C/min, with fluorescence measurement (ROX channel) at each interval.

- Data Analysis: Plot normalized fluorescence vs. temperature. Calculate the first derivative to identify the inflection point, which is reported as the Tm.
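The derivative-based Tm call can be sketched as follows, assuming a single two-state transition and fluorescence read at every 0.5°C step. The curve is synthetic, and `tm_from_dsf` is a hypothetical helper. Resolution is limited to the read spacing. Real DSF traces often fall after the peak because of aggregation, so restricting the search to a temperature window may be needed.

```python
import numpy as np

def tm_from_dsf(temps_C, fluorescence):
    """Tm = temperature at the maximum of dF/dT of min-max normalized fluorescence."""
    t = np.asarray(temps_C, dtype=float)
    f = np.asarray(fluorescence, dtype=float)
    f_norm = (f - f.min()) / (f.max() - f.min())   # normalize to [0, 1]
    dfdt = np.gradient(f_norm, t)                  # first derivative (central differences)
    return t[np.argmax(dfdt)]                      # inflection point of the transition

# Synthetic two-state unfolding curve with Tm = 68.4 C, read every 0.5 C from 25 to 95 C
temps = np.arange(25.0, 95.01, 0.5)
signal = 1000.0 + 5000.0 / (1.0 + np.exp((68.4 - temps) / 1.5))
tm = tm_from_dsf(temps, signal)
```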

Protocol C: Yeast Surface Display for Initial Library Sorting

Objective: Enrich for functional, stable binders from a large mutant library.

Procedure:

- Library Transformation: Electroporate a plasmid library encoding antibody scFv variants into S. cerevisiae EBY100 strain.

- Induction: Induce expression in SG-CAA medium at 20°C for 24-48 hrs.

- Stability Pressure: Label induced yeast with anti-c-Myc epitope tag antibody (for expression) and incubate at an elevated temperature (e.g., 37°C) for 15 min prior to sorting.

- FACS Sorting: Stain yeast with biotinylated antigen, followed by streptavidin-PE. Use FACS to sort the double-positive (expression+ and antigen-binding+) population. Collect top 1-2%.

- Recovery & Expansion: Grow sorted cells in SD-CAA medium for plasmid recovery and sequencing, or proceed to the next sort round.
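The gating logic of the FACS step can be illustrated with per-cell fluorescence values exported from the sorter. This hypothetical sketch assumes the expression-positive gate is a fixed threshold on the anti-c-Myc channel. It then ranks expression-positive cells by antigen-binding (PE) signal and keeps the top fraction. Many groups instead use a diagonal gate on the binding/expression ratio to normalize for display level. The data are synthetic, and `sort_gate` is a hypothetical name.

```python
import numpy as np

def sort_gate(expression, binding, expr_threshold, top_fraction=0.02):
    """Boolean mask of cells to collect: expression-positive cells whose binding
    signal lies in the top `top_fraction` of the expression-positive population."""
    expression = np.asarray(expression, dtype=float)
    binding = np.asarray(binding, dtype=float)
    expr_pos = expression > expr_threshold
    cutoff = np.quantile(binding[expr_pos], 1.0 - top_fraction)
    return expr_pos & (binding >= cutoff)

# Synthetic log-normal fluorescence for 100,000 events
rng = np.random.default_rng(2)
expression = rng.lognormal(mean=6.0, sigma=1.0, size=100_000)
binding = rng.lognormal(mean=5.0, sigma=1.2, size=100_000)
threshold = 400.0
collect = sort_gate(expression, binding, expr_threshold=threshold, top_fraction=0.02)
```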

Visualizations

Active Learning Cycle for Antibody Design

Pareto Optimization of Antibody Properties

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Materials for Optimization Workflows

| Item | Function | Example Product/Catalog |
|---|---|---|
| Octet RED96e BLI System | Label-free, high-throughput kinetic binding analysis. | Sartorius Octet RED96e |
| Anti-Human Fc Capture (AHC) Biosensors | Capture IgG antibodies via the Fc region for BLI. | Sartorius #18-5060 |
| Real-Time PCR Instrument with DSF capability | Measures protein thermal unfolding via dye fluorescence. | Bio-Rad CFX96 |
| SYPRO Orange Protein Gel Stain | Hydrophobic dye used in DSF to monitor protein unfolding. | Thermo Fisher Scientific S6650 |
| Yeast Strain EBY100 | S. cerevisiae strain engineered for surface display of scFv/antibody libraries. | ATCC MYA-4941 |
| FACSAria III Cell Sorter | Fluorescence-activated cell sorting for library enrichment. | BD Biosciences |
| PEI MAX Transfection Reagent | High-efficiency transient transfection of mammalian cells (e.g., HEK293) for expression. | Polysciences #24765 |
| Protein A Resin | Affinity purification of IgG antibodies from culture supernatant. | Cytiva #17543803 |
| Biotinylation Kit (Site-Specific) | Labels antigen for detection in yeast display or BLI assays. | Thermo Fisher #90407 |
| Bayesian Optimization Software | Guides iterative design by modeling sequence-function landscapes. | Custom Python (scikit-optimize) or GEMD |

Integrating Multi-Fidelity Data and Physical Simulations

Within the iterative, closed-loop paradigm of active learning for protein design, the integration of heterogeneous data streams is paramount. Experimental data varies dramatically in fidelity—from high-resolution but low-throughput structure determination (e.g., Cryo-EM, X-ray crystallography) to medium-throughput functional assays (e.g., SPR, ELISA) and ultra-high-throughput but low-information-density sequencing reads (e.g., NGS from directed evolution). Concurrently, in silico physical simulations (molecular dynamics, free energy calculations) provide deep mechanistic insights but are computationally expensive and possess their own approximation errors. This application note outlines protocols for strategically fusing these multi-fidelity data with physical simulations to accelerate and de-risk the design-make-test-analyze cycles in protein therapeutic and enzyme development.

Core Concepts and Data Tiers

Multi-fidelity data integration involves calibrating and weighting information from sources of varying cost, accuracy, and throughput to build predictive models that guide the next design iteration.
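As a minimal sketch of "calibrating and weighting", the code below fits a linear map from a cheap, low-fidelity score to a higher-fidelity measurement on the variants measured at both levels. It then uses the measured value where one exists and the calibrated low-fidelity prediction otherwise. This is a simplified stand-in for autoregressive multi-fidelity (co-kriging) models, with the discrepancy term reduced to a constant offset. A practical pipeline would also propagate uncertainty, for example with Gaussian processes. The helper names and data are hypothetical.

```python
import numpy as np

def calibrate_low_to_high(low, high):
    """Least-squares fit of high ~ rho * low + delta on variants measured at both
    fidelities; returns rho, delta and the residual standard deviation."""
    low = np.asarray(low, dtype=float)
    high = np.asarray(high, dtype=float)
    rho, delta = np.polyfit(low, high, 1)
    residuals = high - (rho * low + delta)
    return rho, delta, residuals.std(ddof=2)   # ddof=2: two fitted parameters

def fuse(low_all, high_all):
    """Keep measured high-fidelity values (non-NaN); otherwise fall back to the
    calibrated low-fidelity prediction. Also returns the calibration residual SD."""
    low_all = np.asarray(low_all, dtype=float)
    high_all = np.asarray(high_all, dtype=float)
    paired = ~np.isnan(high_all)
    rho, delta, resid_sd = calibrate_low_to_high(low_all[paired], high_all[paired])
    return np.where(paired, high_all, rho * low_all + delta), resid_sd

# Hypothetical example: NGS enrichment (low fidelity) vs. a medium-fidelity assay value
low = [0.1, 0.5, 1.0, 1.5, 2.0, 2.5]
high = [1.2, 2.0, np.nan, 4.0, np.nan, 6.0]
fused, resid_sd = fuse(low, high)
```

The residual standard deviation gives a rough uncertainty for the calibrated predictions, which could be used to down-weight them relative to direct measurements.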

Table 1: Characterization of Data Fidelity Tiers in Protein Design

| Fidelity Tier | Example Data Sources | Typical Throughput | Key Advantages | Key Limitations |
|---|---|---|---|---|
| High | X-ray Crystallography, Cryo-EM, NMR | 1-10 variants/week | Atomic-resolution structural insights; gold standard for binding poses. | Very low throughput, high cost, complex sample preparation. |
| Medium | SPR/BLI (affinity), Thermal Shift (ΔTm), Functional Enzymatic Assays | 10-100 variants/week | Quantitative functional or biophysical readouts; good for validation. | Throughput limited by protein purification; may miss allosteric effects. |
| Low | Deep Mutational Scanning (DMS), Phage/Yeast Display NGS, Cell-Surface Display | >10⁵ variants/week | Maps sequence-fitness landscapes broadly; identifies functional hotspots. | Indirect fitness proxies; noisy, context-dependent, and lacking mechanistic detail. |
| Computational | Molecular Dynamics (MD), Free Energy Perturbation (FEP), RosettaDDG | 1-100 variants/week (compute-dependent) | Provides thermodynamic and mechanistic rationale; can explore unseen states. | Force field inaccuracies; high computational cost for long timescales. |

Experimental Protocols

Protocol 3.1: Generating a Multi-Fidelity Training Dataset for a Machine Learning Model

Objective: To create a curated dataset integrating structural, biophysical, and sequence-fitness data for a target protein family.

- High-Fidelity Data Acquisition: Express, purify, and crystallize 5-10 representative wild-type and variant proteins. Solve structures to ≤2.5 Å resolution. Extract quantitative metrics (e.g., RMSD, buried surface area, specific residue distances).

- Medium-Fidelity Data Generation: For 50-100 designed variants, measure binding affinity (KD) via Surface Plasmon Resonance (SPR) using a biosensor. In parallel, measure thermal stability (Tm) via differential scanning fluorimetry.

- SPR Sub-Protocol: Immobilize the target ligand on a CM5 sensor chip to ~100 RU. Perform a multi-cycle kinetics experiment with the variant analytes in a 2-fold dilution series. Fit the sensorgrams globally to a 1:1 binding model.

- Low-Fidelity Data Generation: Construct a saturation mutagenesis library covering the target protein's binding interface. Perform 3-5 rounds of selection by yeast surface display against the biotinylated target, sorting for binding via FACS. Isolate plasmid DNA from the sorted pools and analyze it by NGS to derive an enrichment score, log2(frequency_final / frequency_initial), for each variant.

- Data Alignment & Curation: Map all variants to a reference sequence (UniProt ID). Create a unified CSV/JSON file with the columns Variant_ID, Mutation_List, Experimental_Structure_PDB_ID (or NaN), RMSD_to_WT, K_D_nM, T_m_C, and NGS_Enrichment_Score, and annotate the fidelity tier of each data source.
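The enrichment score and the unified table can be produced with the standard library alone, as in the sketch below. The 0.5 pseudocount, which avoids log(0) for variants missing from one pool, is an assumption, as are the helper names. The `Fidelity_Tier` column is a hypothetical way to record the "annotate source fidelity tier" step. Read counts and the KD value are illustrative only.

```python
import csv
import io
import math

def log2_enrichment(counts_initial, counts_final, pseudocount=0.5):
    """log2(frequency_final / frequency_initial) for every variant seen in either pool.
    A pseudocount is added to every variant so absent variants do not give log(0)."""
    variants = sorted(set(counts_initial) | set(counts_final))
    total_i = sum(counts_initial.values()) + pseudocount * len(variants)
    total_f = sum(counts_final.values()) + pseudocount * len(variants)
    scores = {}
    for v in variants:
        freq_i = (counts_initial.get(v, 0) + pseudocount) / total_i
        freq_f = (counts_final.get(v, 0) + pseudocount) / total_f
        scores[v] = math.log2(freq_f / freq_i)
    return scores

# Column names from the protocol, plus a hypothetical Fidelity_Tier column
FIELDS = ["Variant_ID", "Mutation_List", "Experimental_Structure_PDB_ID", "RMSD_to_WT",
          "K_D_nM", "T_m_C", "NGS_Enrichment_Score", "Fidelity_Tier"]

def write_unified_csv(rows, handle):
    """Write one row per variant; missing measurements are written as NaN."""
    writer = csv.DictWriter(handle, fieldnames=FIELDS, restval="NaN")
    writer.writeheader()
    writer.writerows(rows)

# Hypothetical read counts before and after selection
counts_initial = {"WT": 1000, "A45G": 500, "Y50F": 500}
counts_final = {"WT": 1000, "A45G": 2000}
scores = log2_enrichment(counts_initial, counts_final)

rows = [{"Variant_ID": "V001", "Mutation_List": "A45G", "K_D_nM": 3.2,
         "NGS_Enrichment_Score": round(scores["A45G"], 3), "Fidelity_Tier": "medium;low"}]
buffer = io.StringIO()
write_unified_csv(rows, buffer)
```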

Protocol 3.2: Bayesian Calibration of Simulation to Experimental Data

Objective: To improve the predictive accuracy of molecular dynamics (MD) simulations by calibrating force field parameters against experimental observables.
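The sketch below makes Bayesian calibration concrete by computing a grid posterior for a single scalar force-field correction θ. It rests on strong, hypothetical simplifications:

- Each simulated observable responds linearly to θ, sim_i(θ) = s_i + θ·g_i. In practice, the sensitivities g_i would have to come from reweighting or short perturbed simulations.
- Each simulated observable is directly comparable to an experimental one.
- Experimental errors are Gaussian with known σ.
- The prior on θ is N(0, 1).

All numbers are synthetic, and `grid_posterior` is a hypothetical helper. Real calibrations usually involve several parameters and nonlinear responses.

```python
import numpy as np

def grid_posterior(theta_grid, y_exp, sim_base, sensitivity, sigma, prior_sd=1.0):
    """Discrete posterior weights over theta_grid under a linear-response assumption."""
    theta = np.asarray(theta_grid, dtype=float)
    base = np.asarray(sim_base, dtype=float)
    sens = np.asarray(sensitivity, dtype=float)
    y = np.asarray(y_exp, dtype=float)
    # Predicted observables for every grid value: shape (n_grid, n_variants)
    pred = base[None, :] + theta[:, None] * sens[None, :]
    log_lik = -0.5 * np.sum(((y[None, :] - pred) / sigma) ** 2, axis=1)  # Gaussian errors
    log_prior = -0.5 * (theta / prior_sd) ** 2                           # N(0, prior_sd^2)
    log_post = log_lik + log_prior
    weights = np.exp(log_post - log_post.max())   # subtract max to avoid underflow
    return weights / weights.sum()

# Synthetic calibration set: 12 variants, true theta = 0.3, experimental noise sd = 0.05
rng = np.random.default_rng(3)
sim_base = rng.normal(0.0, 1.0, size=12)
sensitivity = rng.uniform(0.5, 2.0, size=12)
y_exp = sim_base + 0.3 * sensitivity + rng.normal(0.0, 0.05, size=12)

theta_grid = np.linspace(-2.0, 2.0, 4001)
weights = grid_posterior(theta_grid, y_exp, sim_base, sensitivity, sigma=0.05)
theta_mean = float(np.sum(weights * theta_grid))
theta_sd = float(np.sqrt(np.sum(weights * (theta_grid - theta_mean) ** 2)))
```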

- Selection of Calibration Variants: Choose 10-15 variants from Protocol 3.1 with high-confidence experimental KD and Tm data.

- Simulation Ensemble Generation: For each variant, run triplicate 500 ns explicit-solvent MD simulations using AMBER/CHARMM. Compute ensemble averages for relevant observables: e.g., hydrogen bond occupancy, radius of gyration, distance between key residues.