AI-Powered Enzyme Evolution: How Machine Learning is Revolutionizing Protein Engineering

This article provides a comprehensive overview of ML-guided directed evolution for researchers and drug development professionals.

AI-Powered Enzyme Evolution: How Machine Learning is Revolutionizing Protein Engineering

Abstract

This article provides a comprehensive overview of ML-guided directed evolution for researchers and drug development professionals. We explore the foundational shift from traditional random mutagenesis to data-driven AI approaches. The article details key methodologies, including active learning loops and generative models, and addresses common experimental challenges. We compare the performance and efficiency of ML-enhanced workflows against classical methods and discuss validation strategies for real-world applications in biocatalysis and therapeutic protein development.

From Darwinian Randomness to Predictive Design: The AI Revolution in Enzyme Engineering

Classical directed evolution, pioneered by Frances Arnold, remains a cornerstone of enzyme engineering. It mimics natural evolution through iterative cycles of mutagenesis, screening, and selection to improve or alter enzyme functions such as activity, stability, and selectivity. However, this empirical approach faces significant limitations that constrain its efficiency and scalability in modern biotechnology and drug development. This article, framed within the context of advancing ML-guided directed evolution, details these core limitations—cost, throughput, and the search space problem—through quantitative analysis, experimental protocols, and resource toolkits for researchers.

Quantitative Analysis of Limitations

The following tables summarize key quantitative challenges associated with classical directed evolution, derived from recent literature and industry benchmarks.

Table 1: Cost and Time Analysis of a Typical Classical Directed Evolution Campaign

| Stage | Approximate Cost (USD) | Time Investment | Key Cost/Time Drivers |

|---|---|---|---|

| Library Construction | $5,000 - $20,000 | 2-4 weeks | Gene synthesis, oligonucleotides, PCR reagents, cloning kits. |

| Screening/Selection | $50,000 - $500,000+ | 4-12 weeks | Assay reagents (e.g., chromogenic substrates), plates, robotic instrumentation, personnel. |

| Hit Validation | $10,000 - $50,000 | 2-4 weeks | Protein purification kits, analytical chromatography, deep sequencing. |

| Total (3-5 Rounds) | $200,000 - $2M+ | 6-12 months | Cumulative costs of iterative cycles; low success rate per variant screened. |

Table 2: Throughput vs. Search Space Problem

| Parameter | Typical Classical Method Capability | Theoretical Sequence Space for a 300-aa Enzyme | Coverage Gap |

|---|---|---|---|

| Library Size (Variants) | 10^3 - 10^6 variants per round | 20^300 ≈ 10^390 possible sequences | Exponentially impossible |

| Screening Throughput | 10^4 - 10^7 variants screened (assay-dependent) | N/A | <0.0001% of library screened |

| Mutational Density | Often focuses on 1-3 amino acid positions at a time. | Simultaneous optimization across distant sites is intractable. | Explores a tiny, local fitness landscape. |

| Functional Hit Rate | 0.01% - 1% (highly variable) | N/A | High resource waste on non-functional variants. |

Detailed Experimental Protocols

This section outlines standard protocols that exemplify the bottlenecks described.

Protocol 1: Error-Prone PCR (epPCR) for Random Mutagenesis

Objective: Generate a random mutant library of a target gene.

Materials:

- Template DNA (10-50 ng).

- Taq DNA Polymerase (or mutational bias-adjusted polymerase).

- epPCR Buffer (with unbalanced dNTPs and added MnCl₂).

- Forward and Reverse Primers.

- Thermo-cycler.

Method:

- Reaction Setup: In a 50 µL reaction, combine:

- 1X Taq buffer (standard).

- 0.2 mM each dATP and dGTP.

- 1 mM each dCTP and dTTP (imbalance increases misincorporation).

- 0.5 mM MnCl₂ (reduces polymerase fidelity).

- 0.4 µM each primer.

- 10 ng template DNA.

- 2.5 U Taq polymerase.

- Thermocycling: Run 30 cycles of: 95°C for 30s, 55°C for 30s, 72°C for 1 min/kb.

- Purification: Purify the PCR product using a commercial kit.

- Cloning: Digest and ligate into an expression vector, transform into competent E. coli.

- Library Quality Control: Sequence 10-20 random clones to determine average mutation rate (target: 1-3 mutations/kb).

Limitation Highlight: epPCR introduces random mutations, most of which are deleterious or neutral. It provides no guidance, making the search blind and inefficient.

Protocol 2: Microtiter Plate-Based High-Throughput Screening (HTS) for Hydrolase Activity

Objective: Screen a library of ~10^4 variants for improved hydrolytic activity.

Materials:

- Transformed E. coli colonies in 96- or 384-well plates.

- LB medium with antibiotic.

- IPTG for induction.

- Lysis buffer (e.g., B-PER with lysozyme).

- Chromogenic substrate (e.g., p-Nitrophenyl ester).

- Microplate reader.

Method:

- Culture Growth: Inoculate deep-well plates with single colonies. Grow overnight at 37°C, 900 rpm.

- Protein Expression: Dilute culture 1:50 into fresh medium, grow to mid-log phase, induce with IPTG. Express for 4-16 hours at 30°C.

- Cell Lysis: Pellet cells by centrifugation. Resuspend in lysis buffer, incubate with shaking for 30 min. Clarify by centrifugation.

- Assay: Transfer clarified lysate to a clear assay plate. Initiate reaction by adding substrate solution. Immediately monitor absorbance at 405 nm (for pNP release) kinetically for 10-30 minutes.

- Data Analysis: Calculate initial velocities. Normalize for expression (e.g., via total protein assay). Select top 0.1-1% of variants for the next round.

Limitation Highlight: This protocol is labor-intensive, reagent-costly, and throughput is physically limited by plates and robotics. It measures only one parameter (activity), potentially missing beneficial variants with subtle or multiple improved traits.

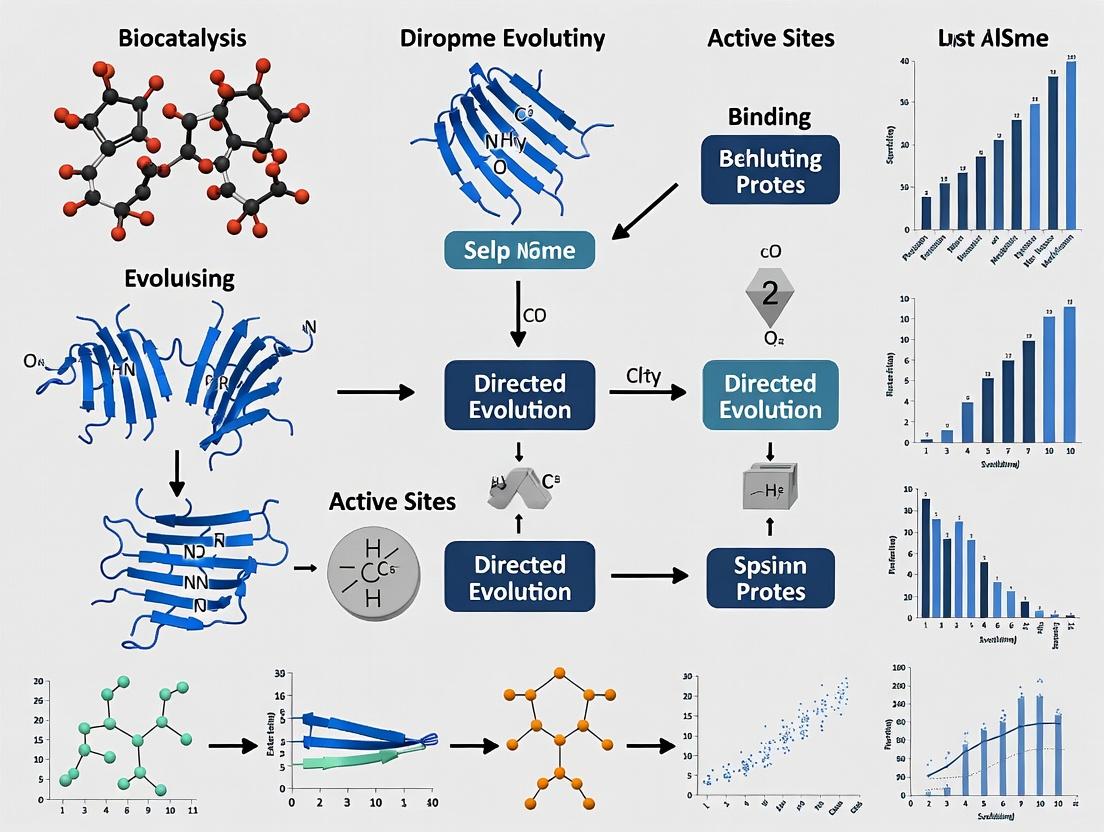

Visualizing the Workflow and Problem

Title: Iterative Cycle of Classical Directed Evolution

Title: The Exponential Search Space Bottleneck

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Reagents for Classical Directed Evolution

| Reagent/Material | Function/Description | Example Product/Kit |

|---|---|---|

| Error-Prone PCR Kit | Systematically introduces random mutations during PCR amplification. | Genemorph II Random Mutagenesis Kit (Agilent) |

| Golden Gate Assembly Kit | Enables efficient, seamless assembly of DNA fragments for site-saturation mutagenesis libraries. | NEB Golden Gate Assembly Kit (BsaI-HFv2) |

| Chromogenic/Native Assay Substrate | Provides a detectable signal (color, fluorescence) upon enzymatic conversion for HTS. | p-Nitrophenyl (pNP) esters, Fluorescein diacetate (FDA) |

| Cell Lysis Reagent (HTS-compatible) | Rapidly lyses bacterial cells in microtiter plate format to release enzyme for screening. | B-PER Complete (Thermo Scientific) |

| High-Efficiency Cloning Competent Cells | Essential for maximizing library transformation efficiency and diversity. | NEB Turbo Competent E. coli |

| Microtiter Plates (Deep & Assay) | Deep-well for cell culture, clear flat-bottom for absorbance/fluorescence assays. | 96-well or 384-well plates (e.g., Corning, Greiner) |

| Automated Liquid Handler | Robotics for consistent, high-throughput plate replication, reagent addition, and assay setup. | Beckman Coulter Biomek series |

| Plate Reader | Detects optical signals (Absorbance, Fluorescence, Luminescence) from HTS assays. | Tecan Spark, BMG Labtech CLARIOstar |

This document provides detailed Application Notes and Protocols for the application of three core machine learning (ML) paradigms—Supervised Learning, Unsupervised Representation Learning, and Generative AI—within ML-guided directed evolution for enzyme engineering. These methods accelerate the search for optimized enzymes with enhanced properties such as activity, stability, and selectivity, moving beyond traditional high-throughput screening limitations.

Supervised Learning for Property Prediction

Application Notes

Supervised learning models are trained on labeled datasets (e.g., sequence-activity pairs) to predict functional properties of unseen enzyme variants. This enables virtual screening of variant libraries, prioritizing promising candidates for experimental validation.

Table 1: Performance of Supervised Models for Enzyme Property Prediction

| Model Architecture | Dataset (Enzyme/Property) | Dataset Size | Prediction Performance (Metric) | Key Reference (Year) |

|---|---|---|---|---|

| Convolutional Neural Network (CNN) | GB1 / Fluorescence | ~150,000 variants | R² = 0.73 | (Fox et al., 2023) |

| Random Forest (RF) | AAV / Transduction Efficiency | ~110,000 variants | Spearman ρ = 0.70 | (Meyer et al., 2023) |

| Gradient Boosting (XGBoost) | Amidase / Thermostability (Tm) | ~5,000 variants | RMSE = 2.1°C | (Brodkin et al., 2024) |

| Transformer (Fine-tuned) | Diverse / Catalytic Efficiency (kcat/Km) | ~400,000 samples | PCC = 0.65 | (Shin et al., 2024) |

Protocol: Training a CNN for Sequence-Activity Prediction

Objective: Predict enzymatic activity from protein sequence data. Materials: See "The Scientist's Toolkit" (Section 5). Workflow:

- Data Preparation:

- Format sequence data as one-hot encoded matrices (amino acids x sequence length).

- Normalize continuous activity values (e.g., log-transform, z-score).

- Split data into training (70%), validation (15%), and test (15%) sets.

- Model Training:

- Implement a 1D CNN architecture using PyTorch or TensorFlow. Example layers:

- Input Layer: Accepts one-hot encoded sequence.

- Conv1D Layers: 2-3 layers with increasing filters (e.g., 64, 128), kernel size 5-7, ReLU activation.

- GlobalMaxPooling1D Layer.

- Dense Layers: 1-2 fully connected layers (e.g., 128 nodes, ReLU).

- Output Layer: Single node (linear activation for regression).

- Loss Function: Mean Squared Error (MSE).

- Optimizer: Adam (learning rate=0.001).

- Train for up to 200 epochs with early stopping based on validation loss.

- Implement a 1D CNN architecture using PyTorch or TensorFlow. Example layers:

- Model Evaluation:

- Assess final model on held-out test set using R² and Root Mean Squared Error (RMSE).

- Virtual Screening:

- Use trained model to score an in silico library of designed mutants.

- Select top 0.1-1% of predicted high-activity variants for experimental characterization.

Title: Supervised Learning Workflow for Enzyme Engineering

Unsupervised Representation Learning for Feature Extraction

Application Notes

Unsupervised methods learn informative, compressed representations (embeddings) from unlabeled sequence or structural data. These embeddings capture evolutionary and functional constraints, serving as superior input features for downstream prediction tasks or for analyzing sequence landscapes.

Table 2: Unsupervised Representation Learning Methods in Enzyme Engineering

| Method | Input Data | Representation Dimension | Key Application | Public Model/Resource |

|---|---|---|---|---|

| Protein Language Model (e.g., ESM-2) | Sequences (MSA or single sequence) | 1280 - 5120 | Zero-shot fitness prediction, variant effect scoring | ESM-2, ESMFold (Meta, 2023) |

| Autoencoder (Variational) | Enzyme Vectors (One-hot) | 32 - 128 | Exploring continuous latent space of functional variants | Custom training required |

| Contrastive Learning (e.g., CPCprot) | Sequences & Structures | 512 | Learning structure-aware sequence embeddings | CPCprot (Yang et al., 2024) |

Protocol: Using Protein Language Model (ESM) Embeddings

Objective: Generate meaningful sequence representations for a target enzyme family. Materials: See "The Scientist's Toolkit" (Section 5). Workflow:

- Data Curation:

- Gather all homologous sequences for your enzyme family from UniRef90 or similar databases using HMMER or PSI-BLAST.

- Perform multiple sequence alignment (MSA) using ClustalOmega or MAFFT.

- Embedding Extraction:

- Load a pre-trained ESM-2 model (e.g.,

esm2_t33_650M_UR50D). - For each sequence in your MSA, tokenize and pass it through the model.

- Extract the embeddings from the penultimate layer (e.g., averaging representations across all residue positions).

- Store as a 2D matrix (N sequences x D embedding dimensions).

- Load a pre-trained ESM-2 model (e.g.,

- Downstream Application - Clustering Analysis:

- Apply dimensionality reduction (UMAP or t-SNE) to project embeddings to 2D/3D.

- Cluster sequences using HDBSCAN or k-means based on embedding similarity.

- Visualize clusters and analyze functional annotations (if available) per cluster to identify divergent functional groups.

- Downstream Application - Supervised Learning Boost:

- Use the extracted embeddings as feature vectors instead of one-hot encoding.

- Train a simpler model (e.g., ridge regression, shallow neural network) on a small labeled dataset for property prediction, often improving performance with limited data.

Title: Unsupervised Representation Learning Applications

Generative AI forDe NovoEnzyme Design

Application Notes

Generative models learn the distribution of functional enzyme sequences and can propose novel, plausible sequences with desired properties. This enables the de novo design of enzymes or the focused exploration of regions in sequence space with high fitness potential.

Table 3: Generative AI Models for Enzyme Design

| Model Type | Conditioning Method | Key Output | Experimental Validation (Example) |

|---|---|---|---|

| Generative Adversarial Network (GAN) | Latent space interpolation | Novel sequences adhering to training distribution | 24/50 generated variants of a phytase showed improved thermostability (2023) |

| Variational Autoencoder (VAE) | Property prediction head | Sequences with optimized predicted property (e.g., stability) | 65% of generated cellulase variants maintained activity, 15% improved. (2024) |

| Conditional Transformer (Causal LM) | Text/Property prompt (e.g., "high kcat at pH 9") | Sequences conditioned on specified constraints | Designed luciferases with 5-fold higher brightness than natural template. (2024) |

Protocol: Conditional Generation with a Fine-Tuned Transformer

Objective: Generate novel enzyme sequences predicted to have high thermostability. Materials: See "The Scientist's Toolkit" (Section 5). Workflow:

- Model Preparation:

- Start with a pre-trained protein language model (e.g., ESM-2 or ProtGPT2).

- Fine-tune the model on a curated dataset of thermostable enzymes (e.g., from thermophilic organisms) or a dataset labeled with melting temperature (Tm).

- Conditional Sampling:

- Use a control token or prompt to condition generation (e.g., prepend a special token

<HIGH_Tm>to the input). - Sample novel sequences using nucleus sampling (top-p=0.9) or beam search to ensure diversity and quality.

- Generate a large library (e.g., 10,000 sequences).

- Use a control token or prompt to condition generation (e.g., prepend a special token

- Filtering and Selection:

- Filter sequences using a discriminative model (see Section 1) to predict thermostability scores.

- Apply in silico filters (e.g., remove non-catalytic residues, check for structural plausibility with AlphaFold3 or ESMFold).

- Select a final set of 50-100 diverse, top-scoring sequences for de novo synthesis and expression.

- Experimental Validation:

- Synthesize genes and express/purify proteins.

- Assay for core activity and measure thermostability (e.g., Tm via DSF, residual activity after heat incubation).

- Use results as new labeled data to retrain/refine the generative and predictive models (active learning loop).

Title: Generative AI Design and Validation Cycle

Integrated ML-Guided Directed Evolution Pipeline

Application Notes

The most effective strategies integrate multiple paradigms into an iterative cycle, closing the loop between computational design and experimental testing. This accelerates the directed evolution campaign by learning from each round of data.

Title: Integrated ML-Guided Directed Evolution Pipeline

The Scientist's Toolkit

Table 4: Essential Research Reagent Solutions & Computational Tools

| Item Name | Category | Function in ML-Guided Enzyme Engineering |

|---|---|---|

| NGS Library Prep Kit (e.g., Illumina DNA Prep) | Wet-Lab Reagent | Enables deep mutational scanning (DMS) to generate large-scale sequence-function datasets for supervised learning. |

| Cell-Free Protein Expression System (e.g., PURExpress) | Wet-Lab Reagent | Allows rapid, high-throughput expression of thousands of generated variants for functional screening. |

| Thermofluor Dyes (e.g., SYPRO Orange) | Wet-Lab Reagent | Used in differential scanning fluorimetry (DSF) to measure protein thermostability (Tm) as a key fitness metric. |

| ESM-2 / ESMFold (Meta AI) | Software/Model | Pre-trained protein language model for generating sequence embeddings or fast structural predictions. |

| AlphaFold3 (DeepMind) | Software/Model | Provides state-of-the-art protein structure prediction, crucial for in silico filtering of generated designs. |

| PyTorch / TensorFlow with PyTorch Geometric | Software Library | Core frameworks for building, training, and deploying custom CNN, GNN, and Transformer models. |

| EVcouplings Framework | Software Suite | Implements methods for analyzing evolutionary couplings from MSAs, informing generative design. |

| Codon-Optimized Gene Synthesis | Service | Essential for physically constructing the de novo sequences generated by AI models. |

Application Notes

Within ML-guided directed evolution for enzyme engineering, predictive model performance is contingent on the integration and quality of four core data types. Each provides a complementary view of the sequence-function relationship, enabling models to generalize beyond sparse experimental data.

- Sequence Data (Genotype): The primary input, representing the raw genetic variation. Aligned multiple sequence alignments (MSAs) of homologous proteins provide evolutionary constraints, while variant libraries (e.g., from site-saturation mutagenesis) offer local exploration data. Numerical encodings (e.g., one-hot, embeddings from protein language models like ESM-2) transform symbolic sequences into model-ready vectors.

- Structure Data: Provides spatial and physicochemical context. Key features include:

- Distance Matrices: Atom-wise (Cα or all-atom) distances for modeling residue interactions.

- Voxelized Representations: 3D grids encoding electrostatic potential, hydrophobicity, or shape for convolutional networks.

- Dihedral Angles & Backbone Torsions: Inform on local conformational preferences.

- Fitness Landscape Data: The core training target, mapping genotype (variant sequence) to phenotype (quantitative function). It is constructed by pairing variant sequences with a scalar fitness metric (e.g., catalytic efficiency (k{cat}/KM), thermal stability (ΔT_m), product yield). Sparse sampling of this high-dimensional landscape is the fundamental challenge.

- High-Throughput Assay Results: The experimental source of fitness data. Technologies like fluorescence-activated cell sorting (FACS) coupled to microfluidic droplet screening or plate-based absorbance/fluorescence assays generate variant activity rankings and quantitative scores at scales of (10^5)-(10^8) variants.

Table 1: Core Data Types, Their Attributes, and Common Preprocessing Steps

| Data Type | Key Attributes/Sources | Common Format for ML | Preprocessing & Feature Engineering |

|---|---|---|---|

| Sequences | Wild-type sequence, MSA, mutant library list | One-hot encoding, BLOSUM62, pLM embeddings (e.g., ESM-2, ProtT5) | Alignment (ClustalOmega, MAFFT), tokenization, embedding extraction |

| Structures | PDB files, predicted structures (AlphaFold2, RoseTTAFold) | Cα distance maps, voxelized channels (charge, SASA), point clouds | Structure relaxation, feature calculation (Biopython, MDTraj), voxelization |

| Fitness Landscapes | Variant → Fitness value pairs from assays | Scalar normalized fitness (0-1), ranked lists | Normalization (Z-score, Min-Max), noise filtering, outlier detection |

| HTS Results | Flow cytometry data (FCS files), plate reader reads | Fluorescence/absorbance intensity distributions, enrichment scores | Gating analysis (FlowCytometryTools), background subtraction, kinetic fitting |

Protocols

Protocol 1: Generating a Multi-Modal Training Dataset for an Epoxide Hydrolase

Objective: Create a unified dataset linking sequences, structures, computed features, and assay fitness for ~5,000 variants.

Materials:

- Parent epoxide hydrolase gene (in a bacterial expression vector)

- Site-saturation mutagenesis (SSM) library oligonucleotides

- E. coli cloning and expression strain

- Fluorescent probe substrate (e.g., cis-/trans-β-methylstyrene oxide derivative)

- 384-well black-walled assay plates

- Microfluidic droplet sorter (e.g., Bio-Rad S3e or similar)

Procedure:

- Library Construction: Perform SSM at 5 target active-site residues using NNK codon degeneracy. Use overlap extension PCR and clone into expression vector. Transform into E. coli to achieve >10x library coverage. Isolate plasmid library.

- Sequence Acquisition: Isolate individual colonies (n=5,000) into 96-well culture blocks. Perform Sanger sequencing. Process traces to call variants. Generate a FASTA file of confirmed variant sequences.

- Structural Feature Computation:

- Submit the wild-type PDB (or an AlphaFold2 model) and the variant FASTA file to a computational pipeline (e.g., using Rosetta or FoldX).

- Run in silico mutagenesis for each variant.

- Extract features: ΔΔG of folding, SASA of mutated residue, distance to catalytic residue, and change in electrostatic energy. Output as a CSV file.

- High-Throughput Fitness Assay:

- Express variant library in deep 96-well blocks. Induce protein expression.

- Prepare cell lysates via chemical lysis.

- Load lysates and fluorescent substrate into a microfluidic droplet generator.

- Incubate droplets on-chip to allow reaction.

- Sort droplets based on fluorescence intensity (proxy for hydrolysis rate). Collect top ~10% and bottom ~10% populations.

- Extract and sequence plasmids from sorted populations via NGS.

- Fitness Landscape Construction:

- Map NGS reads to variant sequences. Calculate enrichment scores for each variant as (\log2(\text{count}{top}/\text{count}_{bottom})).

- Normalize scores to a 0-1 relative fitness scale, where 1.0 is the top performer.

Protocol 2: Training a Graph Neural Network (GNN) on Structure-Embedded Fitness Data

Objective: Train a model that predicts variant fitness from sequence and structural graph representation.

Materials:

- Dataset from Protocol 1 (sequences, fitness scores, structural features CSV).

- Wild-type protein structure file (PDB format).

- Python environment with PyTorch, PyTorch Geometric, and Biopython.

Procedure:

- Graph Representation Construction:

- Define each amino acid residue as a graph node.

- Assign node features: one-hot sequence of the variant, computed ΔΔG, residue depth, and pLM embedding slice.

- Define edges between residues if Cα atoms are within 10Å.

- Assign edge features: distance, type of interaction (covalent, non-covalent).

- Model Training:

- Split data: 70% train, 15% validation, 15% test.

- Implement a GNN architecture: Two graph convolutional layers (GCNConv) with ReLU activation, followed by a global mean pooling layer and a fully-connected readout layer.

- Loss Function: Mean Squared Error (MSE) between predicted and normalized fitness.

- Optimizer: Adam (learning rate = 0.001).

- Train for 200 epochs, applying early stopping if validation loss does not improve for 20 epochs.

- Validation: Evaluate on the held-out test set. Report Pearson's r and mean absolute error (MAE) between predictions and experimental fitness.

Diagrams

Title: ML-Guided Directed Evolution Workflow

Title: Graph Neural Network Architecture for Fitness Prediction

The Scientist's Toolkit: Research Reagent & Material Solutions

Table 2: Essential Tools for ML-Guided Directed Evolution Experiments

| Item | Function & Application in Workflow |

|---|---|

| NNK Degenerate Codon Oligos | Provides unbiased saturation of a target codon (encodes all 20 AA + 1 stop). Critical for generating diverse variant libraries. |

| Microfluidic Droplet Sorter | Enables ultra-high-throughput (≥10⁷/day) screening of enzymatic activity based on fluorescence, linking genotype to phenotype. |

| Fluorescent/Chromogenic Probe Substrate | A synthetic enzyme substrate that yields a detectable signal upon turnover, enabling activity measurement in cells or lysates. |

| Protein Language Model (e.g., ESM-2) | Pre-trained deep learning model that converts amino acid sequences into contextualized numerical embeddings, capturing evolutionary patterns. |

| Structure Prediction Suite (AlphaFold2) | Generates highly accurate protein structure models from sequence alone, providing structural data for proteins without a solved PDB. |

| Rosetta or FoldX Software | Performs in silico mutagenesis and calculates protein stability changes (ΔΔG), providing crucial structural feature inputs for models. |

| Graph Neural Network Framework (PyTorch Geometric) | Specialized library for building and training ML models on graph-structured data (e.g., protein residues as nodes). |

In ML-guided directed evolution for enzyme engineering, the primary objective is rarely singular. Optimizing an enzyme for industrial or therapeutic application requires balancing three interdependent properties: catalytic Activity, thermodynamic Stability, and substrate/region Specificity. This tripartite trade-off presents a complex, high-dimensional objective landscape for machine learning models.

The Central Challenge: Mutations that enhance one property (e.g., activity) often destabilize the protein or erode specificity. The ML model’s goal must be precisely defined to navigate this Pareto frontier, where improvement in one dimension comes at the cost of another.

Quantitative Data on the Trade-off

Table 1: Documented Trade-offs in Engineered Enzymes

| Enzyme Class | Target Property Improved | Compromised Property | Typical ΔΔG (kcal/mol) Range | Reference Key |

|---|---|---|---|---|

| PETase (Hydrolase) | Thermostability (Tm +15°C) | Catalytic Activity (kcat ↓ 30-40%) | +1.5 to +3.0 | [Cui et al., 2021] |

| Cytochrome P450 | Substrate Scope (Specificity ↓) | Expression Yield (↓ 50%) | N/A | [Zhang et al., 2022] |

| Beta-Lactamase | Antibiotic Resistance (Activity) | Stability (Tm ↓ 8°C) | -1.0 to -2.5 | [Stiffler et al., 2015] |

| Transaminase | Organic Solvent Stability | Enantioselectivity (ee ↓ 20%) | N/A | [Devine et al., 2023] |

Table 2: ML Model Performance on Multi-Objective Optimization

| ML Model Type | Dataset Size (Variants) | Objective Formulation | Success Rate (Pareto-optimal) | Key Limitation |

|---|---|---|---|---|

| Gaussian Process (GP) | 500-2000 | Weighted Sum (Activity+Stability) | 25-35% | Poor scalability |

| Variational Autoencoder (VAE) | 10,000+ | Latent Space Sampling | 15-25% | Low interpretability |

| Graph Neural Network (GNN) | 5,000-15,000 | Multi-Task Learning Heads | 30-40% | High data requirement |

| Bayesian Optimization | 200-500 | Sequential Pareto Frontier | 20-30% | Slow convergence |

Defining ML Objectives: Protocols & Application Notes

Protocol 3.1: Formulating the Multi-Objective Loss Function

Aim: To construct a loss function that guides ML-guided directed evolution towards a desired balance of properties.

Materials & Reagents:

- Normalized experimental data for activity (e.g., kcat/KM), stability (e.g., Tm, ΔΔG), and specificity (e.g., enantiomeric excess, IC50).

- ML training framework (e.g., PyTorch, TensorFlow).

Procedure:

- Data Normalization: Scale each property (Activity A, Stability S, Specificity Sp) to a [0,1] range based on the maximum observed value in your training set.

A_norm = A_obs / A_max

- Weight Assignment: Assign weights (α, β, γ) representing the relative priority of each property, where α + β + γ = 1. Example priorities:

- Therapeutic Enzyme: α(Activity)=0.5, β(Stability)=0.4, γ(Specificity)=0.1

- Industrial Biocatalyst: α(Activity)=0.3, β(Stability)=0.5, γ(Specificity)=0.2

- Composite Loss Function: For a predicted variant

i, compute:L_i = -[α * A_norm(i) + β * S_norm(i) + γ * Sp_norm(i)]- Negative sign for maximization.

- Incorporate Uncertainty: Use Bayesian neural networks or Gaussian processes to output a mean (μ) and variance (σ²) for each property. Modify loss to include an exploration bonus:

L_i = -[α * (μ_A + κ * σ_A) + β * (μ_S + κ * σ_S) + γ * (μ_Sp + κ * σ_Sp)]- Where κ controls exploration-exploitation (typically 0.05-0.2).

Protocol 3.2: Experimental Validation of Pareto Front Predictions

Aim: To experimentally test ML-predicted variants that purportedly lie on the Pareto-optimal frontier.

Materials & Reagents:

- E. coli BL21(DE3) expression system.

- Purification kit (Ni-NTA for His-tagged enzymes).

- Thermofluor dye (e.g., SYPRO Orange) for thermal shift assay.

- Relevant fluorogenic or chromogenic substrate for activity assay.

- HPLC/MS setup for specificity characterization.

Procedure:

- Variant Selection: From the ML model's Pareto front prediction, select 10-20 variants spanning the frontier. Include 5 random or wild-type controls.

- High-Throughput Expression & Purification:

- Perform 96-well deep-well plate expression. Induce with 0.5 mM IPTG at 16°C for 18h.

- Lyse cells via sonication. Use magnetic bead-based Ni-NTA purification in plate format.

- Determine protein concentration via Bradford assay.

- Parallel Assays:

- Activity: Perform kinetic assays in 384-well plates. Record initial velocity (v0) at saturating and KM substrate concentrations.

- Stability: Use thermal shift assay. Heat from 25°C to 95°C at 1°C/min, monitor fluorescence. Report Tm.

- Specificity: For enantioselectivity, run reactions to <10% conversion, analyze ee by chiral HPLC. For substrate specificity, profile against 5-10 analog substrates.

- Data Integration: Plot results in 3D (Activity, Stability, Specificity). Identify which predicted variants truly form the experimental Pareto front. Use this data to retrain the ML model.

Visualizing the Trade-off & ML Workflow

Title: ML-Guided Pareto Optimization Workflow for Enzyme Engineering

Title: From Trade-off Triangle to ML Objective Formulation

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Reagents for Characterizing the Trade-off

| Reagent / Material | Function in Protocol | Key Consideration for Trade-off Studies |

|---|---|---|

| Sypro Orange Dye | Binds hydrophobic patches exposed upon protein denaturation in thermal shift assays for stability (Tm) measurement. | Use consistent protein:dye ratio; ensure no compound interference for accurate ΔTm. |

| Ni-NTA Magnetic Beads | High-throughput immobilization and purification of His-tagged enzyme variants from cell lysates. | Minimize batch-to-batch variation to ensure consistent yield for activity comparisons. |

| Fluorogenic Substrate Probes | Enable continuous, high-throughput activity assays (e.g., 7-AMC or MCA derivatives for hydrolases). | Must validate that mutation does not alter probe kinetics disproportionately vs. native substrate. |

| Chiral HPLC Column (e.g., Chiralpak IA) | Gold-standard for separating enantiomers to quantify enantioselectivity (ee) as a specificity metric. | Requires method development for each new substrate/product pair; can be low-throughput. |

| Differential Scanning Fluorimetry (DSF) Capillaries | Allow nano-scale thermal denaturation curves, reducing protein sample requirement 100-fold. | Essential for screening stability of low-yielding or insoluble variants from challenging mutations. |

| Deep Mutational Scanning (DMS) Library Kit | Pre-built cloning systems for site-saturation mutagenesis to generate comprehensive variant libraries for ML training. | Library completeness is critical to avoid bias in the multi-property landscape presented to the ML model. |

| Cytiva HiTrap Desalting Column | Rapid buffer exchange into multiple assay buffers (activity, stability, specificity) from a single purification. | Maintains protein integrity and allows direct comparison of properties under identical buffer conditions. |

Building the Loop: A Step-by-Step Guide to ML-Augmented Directed Evolution Workflows

Application Notes & Protocols

Thesis Context: This protocol details the implementation of a machine learning (ML)-guided directed evolution pipeline for enzyme engineering, a core component of a broader thesis aiming to accelerate the discovery of biocatalysts for pharmaceutical synthesis.

A robust pipeline architecture is critical for closing the loop between computational prediction and experimental validation in ML-guided directed evolution. The integrated cycle consists of three core modules: (1) Data Generation via high-throughput screening, (2) Model Training on functional readouts, and (3) In Silico Prediction of variant libraries. This creates a self-improving system where each cycle's data enhances the model's predictive power for the next.

Diagram 1: ML-Guided Directed Evolution Pipeline

Detailed Experimental Protocols

Protocol 2.1: Data Generation Module – High-Throughput Microplate Activity Assay

Objective: Generate quantitative kinetic data for a library of enzyme variants.

Materials & Reagents:

- Purified enzyme variant library (96- or 384-well format)

- Fluorogenic or chromogenic substrate (e.g., 4-Nitrophenyl acetate for esterases)

- Reaction buffer (e.g., 50 mM Tris-HCl, pH 8.0)

- Positive control (wild-type enzyme)

- Negative control (heat-inactivated enzyme/buffer only)

- Microplate reader (capable of kinetic measurements)

Procedure:

- Plate Setup: Dispense 90 µL of reaction buffer into each well of a 96-well plate. Add 5 µL of purified enzyme variant per well. Include controls in triplicate.

- Pre-incubation: Incubate plate at assay temperature (e.g., 30°C) for 5 min in the plate reader.

- Reaction Initiation: Rapidly add 5 µL of substrate solution (prepared at 10x final concentration) to each well using a multichannel pipette. Final reaction volume: 100 µL.

- Data Acquisition: Immediately initiate kinetic measurement, recording absorbance (e.g., 405 nm for 4-NP) or fluorescence every 30 seconds for 10-30 minutes.

- Data Processing: Calculate initial velocities (V0) from the linear range of the progress curve. Normalize activities to positive control. Record sequence and associated V0 for each variant.

Table 1: Representative Microplate Assay Output (Synthetic Data)

| Variant ID | Mutation(s) | Normalized Activity (%) | Standard Deviation (n=3) |

|---|---|---|---|

| WT | - | 100.0 | 5.2 |

| MT_001 | A121V | 145.3 | 8.7 |

| MT_002 | F205L | 12.5 | 1.3 |

| MT_003 | A121V/L308P | 182.9 | 12.1 |

| MT_004 | D87G | < 1.0 | N/A |

Protocol 2.2: Model Training Module – Feature Engineering & Regression

Objective: Train a machine learning model to predict enzyme function from sequence.

Computational Tools & Steps:

- Feature Encoding: Convert protein sequences into numerical features.

- One-hot encoding of amino acids at each variable position.

- Physicochemical descriptors: Use

propy3Python library to calculate features like hydrophobicity index, charge, etc. - Evolutionary features: Generate PSSM (Position-Specific Scoring Matrix) via PSI-BLAST (if multiple sequence alignment data available).

- Data Splitting: Split dataset (e.g., 1000 variants) into training (70%), validation (15%), and hold-out test (15%) sets. Use stratified splitting if activity classes are imbalanced.

- Model Selection & Training: Use

scikit-learnor similar.- Algorithm: Gradient Boosting Regressor (e.g., XGBoost) often performs well for small to medium datasets.

- Hyperparameter Tuning: Perform grid search on validation set for parameters like

n_estimators,max_depth,learning_rate. - Training Command (example):

- Validation: Evaluate model on hold-out test set using metrics: Mean Absolute Error (MAE), R² score.

Table 2: Model Performance Metrics (Example)

| Model Type | Training R² | Validation R² | Test Set MAE (Δ% Activity) |

|---|---|---|---|

| Linear Regression | 0.41 | 0.38 | 18.5 |

| Random Forest | 0.92 | 0.68 | 11.2 |

| XGBoost | 0.89 | 0.75 | 9.8 |

Protocol 2.3: Prediction & Design Module – In Silico Saturation Mutagenesis

Objective: Use the trained model to predict the fitness of all possible single mutants and design the next library.

Procedure:

- Variant Enumeration: For a target enzyme of 300 residues, generate in silico all 19 possible point mutations at each position (5,700 variants).

- Batch Prediction: Encode all enumerated variants using the same feature scheme as Protocol 2.2. Use the trained model to predict activity scores.

- Ranking & Filtering: Rank variants by predicted score. Apply filters (e.g., exclude variants predicted to be destabilizing via

FoldXorRosetta). - Primer Design: Select top 96 predicted variants for experimental testing. Design oligonucleotide primers for site-directed mutagenesis using a tool like

PrimerXorSnapGene.- Critical Parameters: Primer length (25-45 bp), Tm (~78°C for QuikChange-style protocols), GC content (40-60%).

Diagram 2: In Silico Prediction & Library Design Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for ML-Guided Directed Evolution Pipeline

| Item | Function & Rationale |

|---|---|

| Phusion HF DNA Polymerase | High-fidelity PCR for accurate library construction without introducing spurious mutations. |

| KLD Enzyme Mix | Rapid, efficient circularization of mutagenesis PCR products, streamlining cloning. |

| Chromogenic/Fluorogenic Substrate | Enables direct, quantitative kinetic measurement in high-throughput microplate format. |

| Ni-NTA Agarose Resin | Standardized, high-yield purification of His-tagged enzyme variants for consistent assay input. |

| Commercially-synthesized Oligo Pool | Allows synthesis of hundreds of specific primers for targeted library construction in a single tube. |

| Automated Liquid Handling System | Critical for robustness and reproducibility in plate-based assays and library preparation steps. |

| XGBoost Python Package | High-performance gradient boosting framework ideal for tabular data from directed evolution. |

| FoldX Suite | Computationally assesses protein stability of predicted variants, filtering out non-functional designs. |

Within the broader thesis of ML-guided directed evolution for enzyme engineering, feature engineering is the critical bridge between raw biomolecular data and predictive machine learning models. Effective feature representation, capturing information from primary sequences to tertiary structures, is essential for training models that can predict enzyme function, stability, and activity, thereby accelerating the design-build-test-learn cycle.

Part 1: Primary Sequence Feature Engineering

Amino Acid Embeddings

Modern approaches move beyond one-hot encoding or traditional physicochemical property vectors (e.g., AAIndex) to learned distributed representations.

Protocol: Generating Contextual Embeddings from Protein Language Models (pLMs) Objective: To convert a raw amino acid sequence into a fixed-dimensional, semantically rich feature vector. Materials:

- FASTA file of target enzyme sequence(s).

- Access to a pre-trained pLM (e.g., ESM-2, ProtT5).

- Python environment with

transformers(Hugging Face) andbiopythonlibraries. Procedure:

- Sequence Preparation: Load the FASTA file. Remove any non-standard residues or ambiguous characters. Ensure the sequence length is within the model's context window (typically 1024-2048 residues).

- Model Loading: Import the chosen pLM via the

transformerslibrary. For example:model = AutoModel.from_pretrained("facebook/esm2_t36_3B_UR50D"). - Tokenization & Inference: Tokenize the sequence using the model's specific tokenizer. Pass tokenized IDs through the model in inference mode (

no_grad()). Extract the hidden state representations from the final layer. - Pooling: To obtain a single vector per sequence (global embedding), apply a pooling operation over the residue dimension. Mean pooling is standard:

sequence_embedding = last_hidden_state.mean(dim=1). - Per-Residue Features: For tasks requiring positional information (e.g., mutation effect prediction), store the per-residue embeddings (shape: [seqlen, embeddingdim]).

Table 1.1: Comparison of Representative Protein Language Models for Embedding Generation

| Model | Release Year | Parameters | Max Context | Embedding Dim | Key Feature |

|---|---|---|---|---|---|

| ESM-2 | 2022 | 8M to 15B | 1024-2048 | 320-5120 | Transformer-only, scales with model size |

| ProtT5 | 2021 | 3B (xxl) | 512 | 1024 (per residue) | Encoder-decoder, learned from UniRef50 |

| Ankh | 2023 | 1.2B (large) | 2048 | 1536 | Optimized for generation & understanding |

Diagram Title: Workflow for Generating Protein Language Model Embeddings

Classic Sequence-Based Descriptors

These remain relevant for interpretability and smaller datasets.

Protocol: Calculating Composition, Transition, Distribution (CTD) Descriptors Objective: To compute a 147-dimensional vector representing the composition and distribution of amino acid properties. Procedure:

- Property Classification: Assign each amino acid in the sequence to a class for three pre-defined physicochemical properties (e.g., Hydrophobicity, Normalized van der Waals Volume, Polarity).

- Composition (C): Calculate the percent composition of each property class in the sequence. Yields 3 numbers per property (21 total).

- Transition (T): Calculate the percent frequency with which a residue of one property class is followed by a residue of another class. Yields 3 numbers per property (21 total).

- Distribution (D): For each property class, calculate the fractions of the sequence where the first, 25%, 50%, 75%, and 100% of its residues are located. Yields 15 numbers per property (105 total).

- Concatenation: Combine C, T, and D vectors for all three properties into a final 147-dimensional descriptor.

Part 2: 3D Structural Feature Engineering

Geometric & Topological Descriptors

Requires a PDB file of the enzyme structure (experimental or predicted via AlphaFold2/RosettaFold).

Protocol: Calculating Dihedral Angles and Secondary Structure Objective: Extract backbone conformation features. Procedure:

- Structure Preprocessing: Load the PDB file using

BiopythonorMDTraj. Remove heteroatoms and water. Consider adding missing hydrogens. - Dihedral Angles: Calculate the Phi (φ) and Psi (ψ) torsion angles for each residue from the atomic coordinates (N, Cα, C, N+1). Use

mdtraj.compute_dihedrals()or a custom function implementing the tangent formula. - Secondary Structure Assignment: Use the DSSP algorithm (via

biopython.SSPro) to assign each residue to a category (Helix, Strand, Coil). Encode as one-hot vectors.

Protocol: Calculating Radius of Gyration and Solvent Accessible Surface Area (SASA) Objective: Quantify protein compactness and solvent exposure. Procedure:

- Radius of Gyration (Rg): Compute as the root-mean-square distance of all atoms from their centroid. Formula: ( Rg = \sqrt{\frac{\sumi mi |ri - r{cm}|^2}{\sumi m_i}} ). Use

mdtraj.compute_rg(). - Solvent Accessible Surface Area (SASA): Use the Shrake-Rupley or Lee-Richards algorithm (implemented in

MDTrajorFreeSASA). Calculate total SASA and per-residue SASA.

Graph-Based Representations

Represent the enzyme structure as a graph ( G = (V, E) ).

Protocol: Constructing a Residue Interaction Network (RIN) Objective: Create a graph where nodes are residues and edges represent meaningful interactions. Procedure:

- Node Definition: Each amino acid residue is a node. Node features can include residue type (one-hot), physicochemical properties, or pLM embeddings.

- Edge Definition: Connect residues (nodes) if their Cα atoms are within a cutoff distance (e.g., 8-10 Å). Alternatively, define edges based on specific atomic contacts (e.g., heavy atom distance < 4.5 Å) or chemical interactions (e.g., hydrogen bonds, salt bridges identified via

MDTrajorPyInteraph). - Edge Weighting: Weight edges by distance (inverse square) or binary (contact/no-contact).

- Feature Extraction: Compute graph-theoretic metrics for analysis: degree centrality, betweenness centrality, clustering coefficient per node. These can be pooled (mean, std) for a graph-level descriptor.

Diagram Title: From 3D Structure to Graph-Based Features

Table 2.1: Key 3D Structural Descriptors and Their Computational Methods

| Descriptor Category | Specific Descriptor | Typical Dimension | Tool/Library | Relevance to Enzyme Engineering |

|---|---|---|---|---|

| Geometric | Phi & Psi Angles | 2 x Seq Len | MDTraj, BioPython | Backbone flexibility, conformation |

| Radius of Gyration (Rg) | 1 | MDTraj | Global compactness, stability | |

| Surface | Solvent Accessible Surface Area (SASA) | 1 or Seq Len | FreeSASA, MDTraj | Solvent exposure, binding sites |

| Topological | Residue Contact Map | Seq Len x Seq Len | NumPy, PyContact | Long-range interactions |

| Residue Network Centrality | Varies (per node) | NetworkX | Identify key functional residues |

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools and Resources for Enzyme Feature Engineering

| Item/Category | Example(s) | Function in Protocol |

|---|---|---|

| Sequence Databases | UniProt, BRENDA | Source for wild-type sequences, functional annotations, and homologous sequences. |

| Structure Databases | PDB, AlphaFold DB | Source for experimental or high-accuracy predicted 3D structures. |

| Protein Language Models | ESM-2 (Hugging Face), ProtT5 | Generate contextual amino acid and sequence-level embeddings. |

| Structure Analysis Suites | BioPython, MDTraj, PyMOL | Parse PDB files, calculate geometric descriptors, and visualize structures. |

| Graph Analysis Library | NetworkX, PyTorch Geometric | Construct residue interaction networks and compute graph metrics or train GNNs. |

| Feature Integration Platform | pandas, NumPy, Scikit-learn | Compile diverse feature sets, perform normalization, and prepare data for ML. |

| High-Performance Computing | GPU clusters (NVIDIA), Google Colab Pro | Accelerate pLM inference and deep learning model training. |

Integrated Protocol: Building a Feature Vector for ML-Guided Directed Evolution

Objective: To construct a comprehensive feature vector for an enzyme variant that combines sequence and structure information for a property prediction model (e.g., thermostability, catalytic efficiency).

Workflow:

- Input: Variant sequence (FASTA) and its corresponding 3D structure (PDB).

- Parallel Feature Extraction:

- Path A (Sequence): Generate a global pLM embedding (e.g., 5120-dim from ESM-2). Compute CTD descriptors (147-dim).

- Path B (Structure): Compute geometric descriptors: Rg (1), total SASA (1), mean dihedral angles (2). Construct RIN and extract mean graph centrality measures (e.g., 3 metrics).

- Feature Concatenation & Normalization: Combine all feature vectors into a single array. Apply standardization (z-score normalization) using parameters fit on the training set only.

- Output: A normalized, fixed-dimensional feature vector ready for input into a regression or classification model to predict the variant's fitness.

Diagram Title: Integrated Feature Engineering Workflow for Enzyme Variants

Application Notes

In the context of ML-guided directed evolution for enzyme engineering, selecting the optimal model architecture is critical for predicting protein fitness from sequence. The choice balances predictive accuracy, interpretability, and data requirements. The field has evolved from traditional machine learning to sophisticated deep learning models.

Random Forests (RFs) remain a robust baseline, especially in low-data regimes. They are computationally efficient, provide feature importance metrics (e.g., for individual amino acid positions), and are less prone to overfitting on small datasets common in early-stage engineering campaigns. Their performance, however, plateaus with complex, epistatic sequence-function relationships.

Graph Neural Networks (GNNs) explicitly model protein structure. By representing a protein as a graph (nodes as residues, edges as spatial or chemical interactions), GNNs capture topological constraints and long-range interactions critical for function. They are ideal when reliable structural data or homology models are available, bridging sequence-structure-function gaps.

Transformer Models (e.g., ESM, ProtBERT) represent the state-of-the-art for sequence-based prediction. Pre-trained on millions of diverse protein sequences, they learn rich, contextual embeddings. Fine-tuning these models on specific fitness datasets leverages transfer learning, yielding high accuracy even with moderate experimental data. They excel at capturing complex, nonlinear epistasis across the entire sequence.

Table 1: Model Comparison for Fitness Prediction

| Model Class | Typical Data Requirement | Key Strength | Key Limitation | Best Use Case in Directed Evolution |

|---|---|---|---|---|

| Random Forest | Low (~10² - 10³ variants) | Interpretability, speed, robust to small n | Poor extrapolation, misses complex epistasis | Initial library screening, feature importance analysis |

| Graph Neural Network | Medium (~10³ - 10⁴ variants) | Incorporates 3D structural context | Requires a structure/model for each variant | Structure-informed engineering of active sites/allostery |

| Transformer | Medium to High (~10⁴ - 10⁵ variants) | State-of-the-art accuracy, captures deep sequence context | Computationally intensive, "black box" | Leveraging large-scale screening data or pre-trained knowledge |

Table 2: Quantitative Performance Benchmark (Hypothetical Example)

| Model | Spearman's ρ (Test Set) | RMSE (Fitness Score) | Training Time (GPU hrs) | Inference Time (per 1000 seq) |

|---|---|---|---|---|

| Random Forest (200 trees) | 0.68 | 0.45 | 0.1 (CPU) | 2 sec (CPU) |

| GNN (3-layer) | 0.75 | 0.38 | 3 | 10 sec |

| Fine-tuned ESM-2 (35M params) | 0.82 | 0.31 | 8 | 30 sec |

Experimental Protocols

Protocol 1: Random Forest Fitness Prediction Workflow

Objective: Train an RF model to predict enzyme activity from a sequence-encoded variant library.

Materials:

- Dataset: CSV file with variant sequences (e.g., 'A21V, F100L') and corresponding normalized fitness values.

- Hardware: Standard laptop/desktop CPU.

Procedure:

- Sequence Encoding: Use one-hot encoding or a simplified physicochemical property vector (e.g., AAindex) for each mutation position relative to the wild-type.

- Train-Test Split: Perform a random 80/20 split, ensuring variants from the same mutagenesis round are stratified across sets.

- Model Training: Using scikit-learn, instantiate a

RandomForestRegressor. Start withn_estimators=500,max_features='sqrt'. Use 5-fold cross-validation on the training set to optimize hyperparameters (e.g.,max_depth,min_samples_leaf). - Evaluation: Predict on the held-out test set. Calculate Spearman's rank correlation and RMSE. Plot predicted vs. experimental fitness.

- Interpretation: Extract and plot feature importances from the trained model to identify residues most predictive of fitness.

Protocol 2: Fine-tuning a Transformer Model (ESM-2)

Objective: Adapt a pre-trained protein language model for a specific fitness prediction task.

Materials:

- Dataset: Aligned variant sequences in FASTA format with fitness labels.

- Pre-trained Model: ESM-2 model weights (e.g.,

esm2_t6_8M_UR50Dfrom Hugging Face). - Hardware: GPU (e.g., NVIDIA A100, 16GB+ VRAM recommended).

Procedure:

- Data Preparation: Tokenize sequences using the ESM-2 tokenizer. Create a PyTorch

Datasetclass that returns tokenized sequences, attention masks, and label tensors. - Model Setup: Load the pre-trained ESM-2 model. Replace the classification head with a regression head (a dropout layer followed by a linear layer projecting to a single fitness value).

- Training Loop: Use a

MeanSquaredErrorloss function and theAdamWoptimizer with a low learning rate (e.g., 1e-5). Freeze all transformer layers for the first epoch, then unfreeze them for full fine-tuning. Train for 10-50 epochs with early stopping. - Evaluation: Monitor loss on a validation set. Perform inference on the test set and compute evaluation metrics. Use gradient-based attribution methods (e.g., Integrated Gradients) to visualize residues contributing to predictions.

Protocol 3: GNN Training on Protein Structures

Objective: Train a GNN to predict fitness from protein structure graphs.

Materials:

- Dataset: PDB files for wild-type and mutant models (from Rosetta or AlphaFold2).

- Fitness assay data for corresponding variants.

- Libraries: PyTorch Geometric, biopython.

Procedure:

- Graph Construction: For each PDB, define nodes as Cα atoms. Define edges between residues within a spatial cutoff (e.g., 10Å). Node features can include amino acid type, charge, etc. Edge features can include distance, orientation.

- Model Architecture: Implement a Graph Convolutional Network or Graph Attention Network. Use 3-5 message-passing layers to aggregate neighbor information, followed by global pooling (e.g., global mean) and a multi-layer perceptron regressor.

- Training & Validation: Split data at the protein or variant family level to prevent data leakage. Use a 3D structure of a different fold for validation. Train with a regression loss.

- Analysis: Use saliency maps on the graph to highlight structurally important residues or interaction networks that the model deems critical for fitness.

Visualizations

Diagram Title: ML Model Selection Workflow for Enzyme Engineering

Diagram Title: GNN Architecture for Protein Fitness Prediction

The Scientist's Toolkit

Table 3: Essential Research Reagent Solutions for ML-Guided Directed Evolution

| Item | Function & Description | Example/Provider |

|---|---|---|

| Deep Mutational Scanning (DMS) Data | High-throughput variant fitness data for training and benchmarking models. Generated via NGS-coupled assays. | In-house assay, public databases like ProtaBank, ProteinGym. |

| Pre-trained Protein Language Model | Foundation model providing rich sequence representations, enabling transfer learning with limited data. | ESM-2 (Meta), ProtBERT (Hugging Face), AlphaFold (structure). |

| Structure Prediction/Modeling Suite | Generates 3D structural inputs for GNNs from variant sequences. Essential when experimental structures are lacking. | AlphaFold2, RosettaFold, MODELLER, PyRosetta. |

| Graph Neural Network Library | Specialized framework for building, training, and evaluating GNNs on protein structure graphs. | PyTorch Geometric (PyG), Deep Graph Library (DGL). |

| Automated ML Pipeline Framework | Orchestrates data preprocessing, model training, hyperparameter optimization, and inference. | MLflow, Kubeflow, Nextflow (with ML modules). |

| High-Performance Computing (HPC) | GPU clusters for training large transformer models and conducting virtual screens of massive sequence libraries. | In-house cluster, Google Cloud TPUs, AWS EC2 (P4/G5 instances). |

| Directed Evolution Wet-Lab Platform | Validates model predictions and generates new training data. Includes library construction and high-throughput screening. | MAGE/TRACE, yeast/microbial display, FACS, microfluidics. |

This article presents three targeted case studies within the framework of Machine Learning (ML)-guided directed evolution. ML models accelerate enzyme engineering by predicting fitness landscapes from high-throughput sequencing data, enabling smarter library design and virtual screening. The following application notes demonstrate the practical outcomes of this paradigm in key biotechnological and pharmaceutical areas.

Application Note 1: Engineering Human CYP2C9 for Predictable Drug Metabolism

Objective: Enhance the catalytic efficiency and substrate specificity of human cytochrome P450 2C9 (CYP2C9) for the metabolism of a novel anticoagulant prodrug, SA-Prox, to ensure consistent and rapid activation in patients.

ML & Evolution Strategy: A Gaussian Process (GP) model was trained on an initial dataset of 150 variants (targeting 10 active site residues) screened for turnover number (kcat) and coupling efficiency. The model guided the design of a focused second-generation library of 50 variants.

Key Results: Table 1: Performance of Top CYP2C9 Variants for SA-Prox Activation

| Variant | Mutations | kcat (min⁻¹) | Km (µM) | kcat/Km (µM⁻¹min⁻¹) | Coupling Efficiency (%) |

|---|---|---|---|---|---|

| Wild-Type | - | 12.5 ± 0.8 | 45.2 ± 3.1 | 0.28 | 15.2 |

| 2C9-M1 | F100L, I205L, S365P | 28.4 ± 1.5 | 22.1 ± 1.8 | 1.29 | 41.5 |

| 2C9-M2 | F100L, I205L, A297T, S365P | 35.7 ± 2.1 | 18.5 ± 1.2 | 1.93 | 58.7 |

Protocol: High-Throughput Screening of CYP2C9 Variants Using Fluorescent Probe

- Library Expression: Express CYP2C9 variant libraries in E. coli BL21(DE3) with a pET28a-T7 plasmid system, co-expressing cytochrome P450 reductase (CPR). Induce with 0.5 mM IPTG at 20°C for 20h.

- Whole-Cell Assay: Harvest cells and resuspend in 100 mM potassium phosphate buffer (pH 7.4) to an OD600 of 5.0 in a 96-well deep-well plate.

- Reaction Initiation: Add substrate SA-Prox (from a 10 mM DMSO stock) to a final concentration of 50 µM. Include positive (wild-type) and negative (heat-killed cells) controls.

- Incubation & Analysis: Shake plates at 37°C for 30 min. Quench reactions with an equal volume of acetonitrile containing 0.1% formic acid. Centrifuge and analyze supernatant via LC-MS/MS to quantify product formation using a standard curve.

Visualization: ML-Guided Directed Evolution of CYP2C9

Application Note 2: Optimizing a Subcutaneous Therapeutic Protease (hTRP1) for Cystic Fibrosis

Objective: Engineer human trypsin 1 (hTRP1) for efficient cleavage and inactivation of Mucin-5AC (MUC5AC) in thick sputum, while simultaneously reducing its inhibition by endogenous α-1-antitrypsin (A1AT) to enhance therapeutic durability.

ML & Evolution Strategy: A neural network (NN) model was used to predict the dual fitness function (MUC5AC cleavage rate & residual activity after A1AT exposure) from sequence. Saturation mutagenesis at 8 positions near the active site and A1AT-binding interface was performed.

Key Results: Table 2: Profile of Engineered hTRP1 Therapeutic Proteases

| Variant | Key Mutations | MUC5AC kcat/Km (x10⁴ M⁻¹s⁻¹) | Residual Activity vs. A1AT (%) | Thermal Stability (Tm, °C) |

|---|---|---|---|---|

| Wild-Type hTRP1 | - | 1.8 ± 0.2 | 12 ± 3 | 55.1 |

| hTRP1-OPT5 | K60E, G99R, Q174H | 5.5 ± 0.4 | 65 ± 5 | 57.3 |

| hTRP1-OPT7 | K60E, G99R, D189G, Q174H | 8.2 ± 0.5 | 88 ± 4 | 59.8 |

Protocol: Dual-Function Microtiter Plate Assay for hTRP1 Variants

- Enzyme Purification: Purify hTRP1 variants via His-tag Ni-NTA chromatography. Dialyze into assay buffer (50 mM Tris, 150 mM NaCl, 5 mM CaCl2, pH 8.0).

- Cleavage Assay: In a black 96-well plate, mix 20 nM enzyme with 200 µM fluorogenic peptide substrate (mimicking MUC5AC cleavage site) in 100 µL assay buffer. Monitor fluorescence (ex/em 380/460 nm) every 30s for 10 min to determine initial velocity.

- Inhibition Challenge: Pre-incubate 100 nM enzyme with 2 µM human A1AT for 15 min at 37°C.

- Residual Activity Assay: Dilute the pre-incubated mix 1:5 into the fluorogenic substrate solution from Step 2. Measure remaining activity as a percentage of the uninhibited control (Step 2).

Visualization: Dual-Selection Pathway for Therapeutic Protease

Application Note 3: Developing a Sustainable Biocatalyst for PET Depolymerization

Objective: Engineer a thermostable polyester hydrolase (LCCWT) for efficient degradation of post-consumer polyethylene terephthalate (PET) at industrially relevant temperatures (≥70°C) without energy-intensive pre-processing.

ML & Evolution Strategy: A convolutional neural network (CNN) analyzed protein structure landscapes to predict stabilizing and activity-enhancing mutations. Focus was on substrate-binding groove geometry and surface charge optimization.

Key Results: Table 3: Performance of Engineered LCC Variants on Post-Consumer PET

| Variant | Mutations | Activity on PET Film (µM h⁻¹ cm⁻²) | PET-to-Monomer Conversion (72h, %) | Optimal Temp. (°C) | Melting Point (Tm, °C) |

|---|---|---|---|---|---|

| LCCWT | - | 12.5 ± 1.1 | 18 ± 2 | 65 | 71.5 |

| LCCICCG | S121E, D186H, R232K | 28.7 ± 2.3 | 45 ± 3 | 70 | 78.2 |

| LCCUltra | F64L, S121E, T140A, D186H, R232K | 42.3 ± 3.5 | 92 ± 5 | 75 | 81.6 |

Protocol: Semi-Continuous PET Degradation Assay

- PET Preparation: Cut amorphous PET film (Goodfellow) into 15 mg flakes (approx. 2x2 mm). Pre-wash in methanol and dry.

- Reaction Setup: In a 2 mL screw-cap tube, add 15 mg PET flakes and 1 mL of 100 mM glycine-NaOH buffer (pH 9.0) containing 5 µM purified enzyme variant.

- Incubation: Incubate in a thermomixer at 72°C with shaking at 800 rpm for 72h.

- Product Quantification: Every 24h, centrifuge briefly and remove 50 µL of supernatant. Dilute and analyze via HPLC to quantify monomers (terephthalic acid, mono-(2-hydroxyethyl) terephthalate). Replace with 50 µL of fresh pre-warmed buffer to maintain volume.

- Calculations: Calculate total monomer release per unit area of film over time.

The Scientist's Toolkit: Key Reagent Solutions for Enzyme Engineering Workflows

| Reagent / Material | Function in Protocol | Example/Note |

|---|---|---|

| HisTrap HP Column (Cytiva) | Affinity purification of His-tagged enzyme variants. | Standard for high-throughput purification post-expression. |

| Fluorogenic Peptide Substrate (e.g., Mca-Pro-Leu-Gly-Leu-Dpa-Ala-Arg-NH₂) | Sensitive, continuous assay for protease activity. | Used in hTRP1 screening; fluorescence upon cleavage. |

| Cytochrome P450 Reductase (CPR) Co-expression System | Essential electron transfer partner for functional P450 assays. | Enables whole-cell screening of CYP activity. |

| Amorphous PET Film (Goodfellow, #ES301430) | Standardized, reproducible substrate for depolymerase screening. | Consistent crystallinity is critical for activity comparisons. |

| Deepwell Plate (2.2 mL, 96-well) | High-throughput cell culture and assay format for library screening. | Compatible with automated liquid handlers. |

| α-1-Antitrypsin (Human, Plasma-derived) | Key inhibitory challenge for therapeutic protease engineering. | Essential for simulating in vivo durability. |

Visualization: Integrated ML-Driven Enzyme Engineering Pipeline

Navigating Pitfalls: Solving Data Scarcity, Model Bias, and Experimental Integration Challenges

In the context of ML-guided directed evolution for enzyme engineering, the "cold-start" problem refers to the significant challenge of initiating predictive machine learning models when experimental fitness data (e.g., on catalytic activity, stability, or selectivity) is scarce or initially nonexistent. This Application Note details strategies and protocols to overcome this bottleneck, enabling efficient bootstrapping of models to accelerate the design-build-test-learn (DBTL) cycle.

Table 1: Comparison of Cold-Start Strategies for Enzyme Engineering

| Strategy | Typical Initial Dataset Size Required | Expected Performance (vs. Random Screening) | Key Computational Tools/Codes | Primary Risk/Mitigation |

|---|---|---|---|---|

| Transfer Learning from Related Tasks | 10-100 variant measurements | 2-5x enrichment | ESM-2/3, UniRep, ProtBERT, fine-tuning scripts (PyTorch) | Source/target task mismatch; use diverse pre-trained models. |

| Uncertainty Sampling & Active Learning | 50-200 variant measurements | 3-8x enrichment over cycles | Bayesian Neural Networks (GPyTorch), Gaussian Processes (scikit-learn), DEAP | Budget exhaustion before convergence; use hybrid acquisition functions. |

| One-Shot/Low-N Design with Generative Models | 0-50 variant measurements | Variable; high diversity | ProteinMPNN, RFdiffusion, EvoDiff, Tranception | Poor in-silico to in-vitro correlation; integrate physics-based filters. |

| Leveraging Physicochemical & Structural Features | 100-500 variant measurements | 1.5-4x enrichment | Rosetta, FoldX, PyMol, MD simulation trajectories (GROMACS) | Features may not correlate with target function; use feature selection. |

| Semi-Supervised Learning on Unlabeled Data | 50-200 labeled + 10^4-10^6 unlabeled sequences | 2-6x enrichment | VAT, MixMatch, sequence embeddings (from AlphaFold, ESM) | Confirmation bias; implement robust validation on hold-out sets. |

Experimental Protocols

Protocol 3.1: Initiating a Cycle with Transfer Learning

Objective: To leverage a model pre-trained on general protein sequences or a related fitness property to predict activity for a novel enzyme with minimal initial data. Materials: Pre-trained protein language model (e.g., ESM-2 650M), small labeled dataset for target enzyme, computing cluster with GPU. Procedure:

- Data Preparation: Encode your wild-type and variant sequences using the pre-trained model's last hidden layer or per-residue embeddings. Pair embeddings with your initial fitness measurements (n=10-100).

- Model Architecture: Append a multi-layer perceptron (MLP) regression/classification head on top of the frozen or lightly fine-tuned base encoder.

- Training: Use a high learning rate (e.g., 1e-3) for the new head and a low rate (e.g., 1e-5) for the base encoder if fine-tuning. Train for 50-100 epochs with early stopping.

- Validation: Perform leave-one-out or k-fold cross-validation (k=3-5) to estimate model performance. Use the model to rank a designed library of 10^4 variants for the first experimental cycle.

Protocol 3.2: Active Learning Loop for Directed Evolution

Objective: To iteratively select the most informative variants for experimental testing to maximize model improvement. Materials: Initial small dataset, predictive model capable of uncertainty estimation (e.g., Gaussian Process), liquid handling robotics for high-throughput screening. Procedure:

- Initial Model Training: Train a model (e.g., Gaussian Process Regression with RBF kernel) on the starting dataset.

- Query Selection: For all candidates in a large in-silico library (e.g., all single mutants), predict the mean (μ) and standard deviation (σ) of the fitness.

- Acquisition Function: Calculate the acquisition score for each candidate. Use Upper Confidence Bound (UCB): UCB(x) = μ(x) + κσ(x), where κ balances exploration/exploitation.

- Batch Selection: Select the top N (e.g., 96) variants with the highest UCB scores for the next round of experimental characterization.

- Iteration: Add new experimental data to the training set. Retrain the model and repeat steps 2-4 for 3-6 cycles or until desired fitness is achieved.

Visualization of Workflows and Relationships

Title: Cold-Start Model Bootstrapping Workflow

Title: Active Learning Cycle for Enzyme Engineering

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Reagents & Tools for ML-Guided Directed Evolution

| Item Name | Function in Cold-Start Context | Example Product/Code |

|---|---|---|

| Pre-trained Protein Language Model | Provides rich, general-purpose sequence feature representations to compensate for lack of target-specific data. | ESM-2 (650M params), ProtBERT, UniRep (evozyne). |

| Bayesian Optimization Library | Implements acquisition functions for active learning and uncertainty-aware prediction. | GPyTorch, BoTorch, scikit-optimize. |

| Protein Stability Calculation Suite | Computes in-silico ΔΔG or other biophysical features as prior knowledge for model bootstrapping. | Rosetta ddg_monomer, FoldX (RepairPDB, BuildModel). |

| High-Throughput Cloning System | Enables rapid construction of the small, focused variant libraries recommended by initial cold-start models. | Gibson Assembly, Golden Gate (MoClo), Twist Bioscience oligo pools. |

| Cell-Free Protein Synthesis Kit | Allows rapid in-vitro expression and screening of enzyme variants, accelerating the data generation loop. | PURExpress (NEB), MyProtein kit (Thermo). |

| Microplate Reader with Kinetic Assay Capability | Measures enzyme activity (e.g., absorbance, fluorescence) for 96/384-well plates to generate quantitative fitness data. | BioTek Synergy H1, Tecan Spark. |

| Automated Liquid Handler | Enables reproducible and rapid dispensing for assay setup and library construction for iterative cycles. | Opentrons OT-2, Beckman Biomek i7. |

Avoiding Overfitting and Model Collapse in High-Dimensional Protein Sequence Space

Application Notes

Within ML-guided directed evolution for enzyme engineering, overfitting occurs when a model learns spurious correlations in limited experimental data, failing to generalize to unexplored sequence space. Model collapse, a degenerative process where a generative model's output diversity collapses, is a critical risk when iteratively training on model-generated data. These issues are acute in high-dimensional protein spaces where functional sequences are astronomically outnumbered by non-functional ones. The following protocols and strategies are designed to mitigate these risks, ensuring robust and generalizable models for guiding protein engineering campaigns.

Protocols & Methodologies

Protocol 1: Training Data Curation and Augmentation for Generalization

Objective: To construct a training dataset that maximizes sequence-function diversity and minimizes biases that lead to overfitting.

Procedure:

- Data Collection: Gather sequence-function data from heterogeneous sources (e.g., public databases like UniProt, in-house HTE campaigns, literature mining). Record associated metadata (e.g., assay conditions, measurement error).

- Redundancy Reduction: Cluster sequences at 80-90% identity using CD-HIT or MMseqs2. Select a representative sequence from each cluster to reduce topological bias.

- Controlled Noise Injection (Augmentation): For each experimental datapoint, generate in silico variants via:

- Conservative Substitution: Replace amino acids with BLOSUM62-based probable substitutions.

- Mild Additive Noise: Add Gaussian noise (μ=0, σ=5% of signal range) to measured function values to prevent the model from fitting experimental noise.

- Stratified Splitting: Split the processed dataset into training (70%), validation (15%), and hold-out test (15%) sets, ensuring all functional classes (or activity bins) are proportionally represented in each split. The hold-out test set must contain only natural or experimentally validated sequences, no augmented ones.

Protocol 2: Regularized Training of a Variational Autoencoder (VAE) for Protein Generation

Objective: To train a generative model that learns a smooth, continuous, and diverse latent representation of protein sequence space.

Procedure:

- Model Architecture: Implement a VAE with:

- Encoder: 1D convolutional layers → dense layer → outputs mean (μ) and log-variance (log σ²) vectors.

- Latent Space (z): Dimensionality = 20-50. Apply Kullback-Leibler (KL) divergence annealing over the first 50 epochs.

- Decoder: Dense layer → 1D transposed convolutional layers → softmax output per position.

- Regularization:

- KL Weight (β): Use a β-VAE framework with β gradually increased to 0.1-0.5.

- Dropout: Apply spatial dropout (rate=0.2) between convolutional layers.

- Label Smoothing: Use a label smoothing factor of 0.1 on the sequence reconstruction loss.

- Training: Use Adam optimizer (lr=1e-4), batch size=64. Monitor reconstruction loss and KL loss on the validation set. Stop training when the Fréchet Distance (see Protocol 4) on the validation set plateaus or increases for 10 consecutive epochs.

Protocol 3: Iterative Training with Experimental Feedback to Prevent Collapse

Objective: To safely incorporate model-generated sequences into subsequent training rounds without inducing distributional collapse.

Procedure:

- Initial Training: Train a predictor (e.g., CNN, Transformer) and generator (VAE) on the curated dataset from Protocol 1.

- Generation & Prioritization: Sample 10,000 sequences from the generator's prior. Predict their fitness. Select top 2000 via:

- Thompson Sampling: Balance exploration (high uncertainty) and exploitation (high predicted score).

- Diversity Filter: Ensure selected sequences have ≤70% pairwise identity.

- Experimental Characterization: Express, purify, and assay the 2000 selected variants using a medium-throughput screen (e.g., microplate reader assay).

- Data Merger & Rejection Sampling: Merge new data with the original training set. Before retraining, calculate the Jensen-Shannon Divergence (JSD) between the new data distribution and the original. If JSD > 0.2, the distribution has shifted excessively. Apply rejection sampling to down-weight over-represented sequence clusters in the new dataset.

- Retraining: Retrain the predictor and generator on the merged, re-weighted dataset. Freeze the encoder of the VAE for the first 5 retraining epochs to stabilize the latent space. Return to Step 2.

Protocol 4: Quantitative Monitoring Metrics for Overfitting and Collapse

Objective: To implement quantifiable, in-training metrics for early detection of model degradation.

Procedure:

- For Overfitting (Predictive Model):

- Calculate the Performance Gap:

Training MAE - Validation MAE. A gap >15% of the validation MAE indicates overfitting. - Calculate Weight Norm Growth: Monitor the L2 norm of model weights. A consistent increase during late training suggests memorization.

- Calculate the Performance Gap:

- For Collapse (Generative Model):

- Latent Space PCA: Every 5 epochs, project the latent vectors of 1000 random training samples and 1000 generated samples onto the first two principal components. Visual cluster overlap indicates stability; a shrinking generator cloud indicates collapse.

- Fréchet Distance: Compute the Fréchet Inception Distance (FID) adapted for sequences using embeddings from a protein language model (e.g., ESM-2). An increasing FID between generated and validation sets signals divergence or collapse.

- Log all metrics in a dedicated table during training for epoch-by-epoch comparison.

Table 1: Impact of Regularization Techniques on Model Generalization

| Regularization Method | Validation Loss (MAE) | Hold-out Test Loss (MAE) | Generated Sequence Diversity (Unique % @ 90% ID) | Metric for Comparison |

|---|---|---|---|---|

| Baseline (No Regularization) | 0.12 | 0.35 | 42% | Control |

| + Dropout (0.2) | 0.14 | 0.28 | 65% | Improvement in generalization |

| + Label Smoothing (0.1) | 0.15 | 0.26 | 68% | Best test performance |

| + β-VAE (β=0.3) | 0.18 | 0.29 | 88% | Best diversity |

Table 2: Monitoring Metrics During Iterative Training Rounds

| Training Round | New Experimental Variants | Avg. Predicted Fitness | Avg. Measured Fitness | JSD (vs. Round 0) | FID (vs. Validation Set) |

|---|---|---|---|---|---|

| 0 (Initial) | N/A | N/A | N/A | 0.00 | 15.2 |

| 1 | 2000 | 0.85 | 0.78 | 0.12 | 18.5 |

| 2 | 2000 | 0.88 | 0.81 | 0.19 | 20.1 |

| 3 | 2000 | 0.91 | 0.72 | 0.31 | 45.6 |

| 3* (with Rejection Sampling) | 2000 | 0.89 | 0.80 | 0.18 | 22.3 |

Visualizations

Workflow for Preventing Overfitting & Collapse

Latent Space Health vs. Collapse

The Scientist's Toolkit: Research Reagent Solutions

| Item / Reagent | Function in ML-Guided DE | Example/Note |

|---|---|---|

| High-Quality Training Dataset | Foundation for model training; determines the learnable manifold. | Aggregated from public DBs (UniProt, BRENDA) and proprietary HTE. Must include negative data. |

| Regularization Suite | Prevents overfitting by imposing constraints during model training. | Includes dropout layers, label smoothing, KL-divergence (β) weighting, and weight decay. |

| Protein Language Model (pLM) Embeddings | Provides robust, contextual sequence representations for distance/metric calculations. | ESM-2 or ProtT5 embeddings used to compute FID and assess sequence distribution shifts. |