Automating Enzyme Discovery: A Comprehensive Snakemake Workflow for Targeted Metagenomic Screening

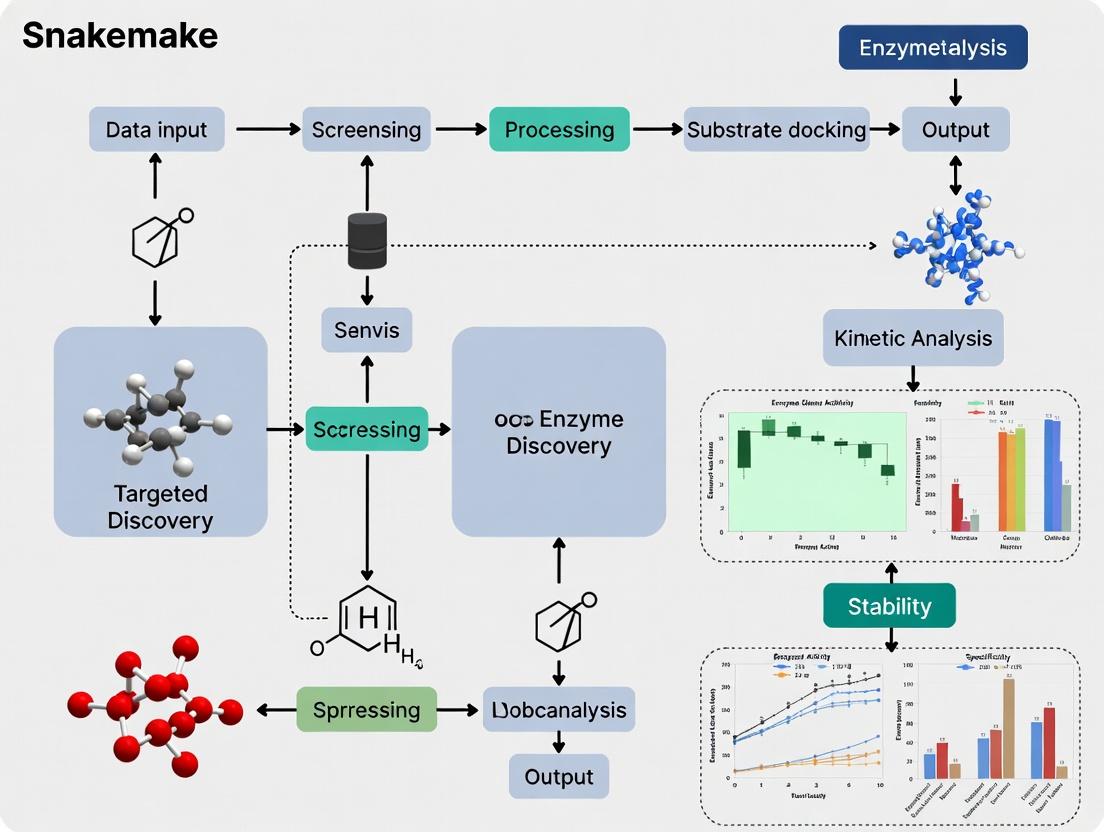

Abstract

This article provides a complete guide for researchers and drug discovery professionals on implementing a reproducible Snakemake workflow for targeted enzyme discovery. We begin by establishing the core bioinformatics principles, exploring key databases, and defining common targets like lipases, proteases, and polymer-degrading enzymes. The methodological section details the step-by-step construction of the pipeline, from raw metagenomic read processing and quality control to gene prediction, functional annotation, and candidate ranking. We then address common computational challenges, optimization strategies for performance and cost, and best practices for reproducibility. Finally, we cover methods for validating computational predictions through sequence analysis, structural modeling, and comparative benchmarking against existing tools. This guide empowers scientists to build robust, scalable, and transparent workflows to accelerate the identification of novel biocatalysts for industrial and therapeutic applications.

The Bioinformatics Bedrock: Core Concepts and Targets for Enzyme Discovery

Within a Snakemake-driven pipeline for targeted enzyme discovery, precise initial target definition is critical. This involves selecting enzyme classes based on desired catalytic function, relevance to a biological pathway, or industrial process suitability. This document outlines key enzyme classes, their applications, and core experimental protocols for initial characterization, forming the essential first module of a reproducible bioinformatics-to-bench workflow.

Table 1: High-Value Enzyme Classes for Targeted Discovery

| Enzyme Class (EC) | Primary Function | Key Biomedical Application | Key Industrial Application | Representative Market/Research Metric |
|---|---|---|---|---|
| Kinases (EC 2.7.11.-) | Transfer phosphate group (ATP → substrate) | Oncology (e.g., EGFR, BCR-ABL inhibitors), Inflammation | Rare; specialized biocatalysis | >50 FDA-approved drugs; ~538 human kinases mapped |
| Proteases (EC 3.4.-.-) | Hydrolyze peptide bonds | Antiviral (e.g., HIV-1 protease inhibitors), Hypertension (ACE inhibitors) | Detergent formulation, food processing (tenderization) | Global protease market ~$2.3B (2023); >60 human proteases as drug targets |
| Polymerases (EC 2.7.7.-) | Synthesize nucleic acid chains | Antiviral therapy (e.g., HCV NS5B inhibitors), Diagnostics (PCR) | Next-generation sequencing, Synthetic biology | PCR enzyme market ~$4.1B (2024); Fidelity rates: 10^-4 to 10^-6 errors/base |
| Oxidoreductases (EC 1.-.-.-) | Catalyze oxidation/reduction reactions | Antibacterial (targeting metabolic pathways), Antioxidant therapies | Bulk chemical synthesis, biosensors, biofuel cells | Global market ~$7.5B (2024); Industrial turnover numbers up to 10^6 min^-1 |
| Glycosyltransferases (EC 2.4.-.-) | Transfer sugar moieties to substrates | Antibiotic development (targeting cell wall synthesis), Glyco-engineering | Synthesis of oligosaccharides, glycoconjugates | ~200 human GT targets in disease; key for antibody-drug conjugate (ADC) development |
| Hydrolases (e.g., Lipases, EC 3.1.1.3) | Hydrolytic cleavage of esters, amides, glycosides | Digestive disorders, Lipid metabolism diseases | Bio-diesel production, enantioselective resolution, pulp/paper | Lipase market ~$735M (2024); Industrial process yields >99% enantiomeric excess |

Application Notes & Core Protocols

Application Note 1: Kinase Target Validation in a Cell Signaling Pathway

Kinases are pivotal in signal transduction. Targeting specific nodes can modulate disease pathways (e.g., MAPK/ERK in cancer). A Snakemake workflow can automate the analysis of phosphoproteomics data to identify active kinase nodes from mass spectrometry output.

Protocol 1.1: In Vitro Kinase Activity Assay (Adaptable for High-Throughput Screening)

Objective: Measure the phosphotransferase activity of a purified recombinant kinase candidate.

Reagents: Purified kinase, specific peptide/protein substrate, ATP, reaction buffer (50 mM HEPES pH 7.5, 10 mM MgCl₂, 1 mM DTT), [γ-³²P]ATP or ADP-Glo Kit.

Procedure:

- In a 25 µL reaction, combine 50 ng kinase, 10 µM substrate, and 10 µM ATP (with trace [γ-³²P]ATP for radiometric assay) in buffer.

- Incubate at 30°C for 30 minutes.

- Terminate the reaction:

  - Radiometric: Spot the reaction onto phosphocellulose paper and wash with 0.75% phosphoric acid to remove unincorporated ATP.

  - Luminescent (ADP-Glo): Add ADP-Glo Reagent to stop the reaction and deplete the remaining ATP, then add Kinase Detection Reagent, which converts ADP to ATP and drives a luciferase/luciferin reaction.

- Quantify phosphate incorporation via scintillation counting or luminescence (relative light units).

Data Integration: A Snakemake rule can process raw luminescence or scintillation counts from plate readers into normalized activity plots.
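
As a sketch of that normalization step, the plain-Python function below converts raw readings to percent activity using no-enzyme background wells (0%) and uninhibited full-activity wells (100%). The function name, the argument layout, and this two-control normalization scheme are illustrative assumptions, not part of the ADP-Glo protocol or of Snakemake itself; in a workflow it would typically live in a script called by the normalization rule.

```python
from statistics import mean


def percent_activity(signals, background, positive):
    """Convert raw luminescence (RLU) or scintillation (CPM) readings to % activity.

    signals:    readings from test wells
    background: readings from no-enzyme control wells (0% activity)
    positive:   readings from uninhibited full-activity wells (100% activity)
    """
    bg = mean(background)
    window = mean(positive) - bg  # usable assay signal window
    if window <= 0:
        raise ValueError("positive controls must exceed background")
    return [100.0 * (s - bg) / window for s in signals]


# Hypothetical readings from one plate
print(percent_activity([5500, 3000, 1000], background=[1000, 1000], positive=[10000, 10000]))
```

Averaging replicate control wells before normalizing keeps a single noisy control from shifting every value on the plate.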

Diagram Title: Kinase Activity Assay Workflow

Application Note 2: Hydrolase Characterization for Biocatalysis

Industrial hydrolases (lipases, cellulases) require characterization of activity under process-like conditions (e.g., organic solvents, high temperature). Snakemake can manage parallel enzyme variant screening against multiple substrate and condition sets.

Protocol 2.1: pH & Temperature Profiling of Esterase/Lipase Activity

Objective: Determine the optimal pH and temperature for a novel hydrolase.

Reagents and Equipment: Purified hydrolase, p-nitrophenyl ester substrate (e.g., pNP-acetate, pNP-palmitate), buffers (pH 4-10), thermostatically controlled spectrophotometer.

Procedure:

- Prepare 1 mM substrate in appropriate buffer (varying pH) with 1% (v/v) acetonitrile for solubility.

- Aliquot 190 µL substrate solution into a 96-well plate, pre-equilibrate at target temperature (e.g., 20°C to 70°C).

- Initiate reaction by adding 10 µL of diluted enzyme.

- Continuously monitor absorbance at 405 nm (release of p-nitrophenol) for 5 minutes.

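- Calculate the initial velocity (V₀) using the molar extinction coefficient of p-nitrophenol (ε₄₀₅ ≈ 16,200 M⁻¹cm⁻¹ at basic pH). Because p-nitrophenol (pKa ≈ 7.1) is only partly ionized near and below neutral pH, determine ε₄₀₅ from a p-nitrophenol standard curve at each assay pH.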

- Plot V₀ vs. pH and vs. temperature to determine the optima and the active range.

Workflow Integration: Snakemake rules can collate A405 time-course data from multiple plates, apply the extinction coefficient calculation, and generate comparative profile plots for hundreds of variants.
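
The V₀ calculation can be expressed as a least-squares slope over the early, linear part of the A405 trace, converted to concentration with the Beer-Lambert law. This is a minimal sketch: the function name is hypothetical, the 16,200 M⁻¹cm⁻¹ default is the value quoted above (substitute the pH-specific coefficient), and the well path length must be supplied by the user because it depends on the plate and fill volume.

```python
def initial_velocity(times_min, a405, path_length_cm, epsilon_m_cm=16200.0, n_points=None):
    """Estimate V0 in µM/min from an A405 time course.

    times_min:      time points in minutes
    a405:           absorbance readings at 405 nm
    path_length_cm: optical path length of the well (plate- and volume-dependent)
    epsilon_m_cm:   molar extinction coefficient of p-nitrophenol at the assay pH
    n_points:       number of early points treated as the linear initial phase
    """
    if n_points is not None:
        times_min, a405 = times_min[:n_points], a405[:n_points]
    n = len(times_min)
    if n < 2:
        raise ValueError("need at least two time points")
    t_mean = sum(times_min) / n
    a_mean = sum(a405) / n
    sxx = sum((t - t_mean) ** 2 for t in times_min)
    sxy = sum((t - t_mean) * (a - a_mean) for t, a in zip(times_min, a405))
    slope = sxy / sxx  # absorbance units per minute (ordinary least squares)
    # Beer-Lambert: c = A / (epsilon * l) in mol/L; multiply by 1e6 for µM
    return slope / (epsilon_m_cm * path_length_cm) * 1e6


v0 = initial_velocity([0, 1, 2, 3, 4], [0.100, 0.181, 0.262, 0.343, 0.424], path_length_cm=0.5)
print(f"V0 = {v0:.2f} µM/min")
```

With an assumed 0.5 cm path length and a slope of 0.081 A/min, this gives about 10 µM/min. Restricting the fit with n_points keeps substrate depletion late in the trace from flattening the slope.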

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Reagents for Enzyme Target Discovery & Characterization

| Reagent / Material | Primary Function in Target Research | Example Product/Kit |
|---|---|---|
| Recombinant Enzyme (Purified) | Core material for in vitro kinetic assays and structural studies. | His-tagged enzymes from expression systems (E. coli, insect cells). |
| Activity Assay Kits (Luminescent) | Enable high-throughput, homogeneous, non-radiometric activity measurement. | ADP-Glo Kinase Assay, Protease-Glo, CellTiter-Glo (viability). |
| Fluorogenic/Chromogenic Substrates | Provide sensitive, continuous readout of hydrolase (protease, lipase) activity. | p-Nitrophenyl (pNP) esters, AMC/Rho110-coupled peptides. |
| Phospho-Specific Antibodies | Detect and quantify phosphorylation state of kinase substrates in cell lysates. | Anti-phospho-Tyr/Ser/Thr antibodies for Western blot/ELISA. |
| Inhibitor Libraries (Small Molecule) | For target validation, selectivity screening, and drug discovery. | Published kinase inhibitor sets, protease inhibitor cocktails. |
| Thermostability Agents | Enhance enzyme stability for storage and industrial application screening. | Glycerol, trehalose, polyethylene glycols (PEGs). |
| Snakemake Workflow Manager | Automates and reproduces data analysis pipelines from raw data to figures. | Snakemake (v7.32+), with conda for environment management. |

Diagram Title: Target Enzyme Discovery Thesis Workflow

Application Notes

This guide details the integration of four essential bioinformatics databases into a Snakemake workflow for targeted enzyme discovery. The workflow standardizes data retrieval and processing, enabling reproducible in silico characterization of enzyme families for applications in biotechnology and drug development.

Table 1: Core Database Comparison for Enzyme Discovery

| Database | Primary Focus | Key Data Types (Quantitative) | Relevance to Enzyme Discovery |
|---|---|---|---|
| CAZy | Carbohydrate-Active Enzymes | ~400 enzyme families; ~3M classified proteins (2024) | Identifies glycoside hydrolases, glycosyltransferases, and related families for polysaccharide degradation/synthesis. |
| MEROPS | Proteolytic Enzymes | ~4,800 peptidases; ~82,000 inhibitors (Release 12.3) | Supports protease and inhibitor discovery for therapeutic intervention. |
| BRENDA | Comprehensive Enzyme Data | ~90k enzymes; ~200k kinetic parameters (KM, kcat); covers all classified EC numbers | Provides functional parameters (pH/temperature optima, kinetics) for enzyme characterization. |
| UniProt | Comprehensive Protein Data | ~220M protein entries; ~600k manually reviewed (Swiss-Prot) (2024_03) | Central source for sequence, functional annotation, and structural data cross-referencing. |

Table 2: Snakemake Workflow: Key Rule Outputs & Databases

| Rule Name | Input | Output | Primary Database Used |
|---|---|---|---|
| fetch_target_families | EC list / target | data/cazy_families.txt, data/merops_clans.txt | CAZy, MEROPS |
| retrieve_sequences | Family/Clan IDs | data/uniprot_sequences.fasta | UniProt (via API) |
| annotate_with_brenda | Sequence IDs | data/kinetic_parameters.tsv | BRENDA (SOAP web service) |
| generate_report | All outputs | report/enzyme_candidates.html | Integrated data |

Experimental Protocols

Protocol 1: Automated Retrieval of CAZy Family Members

Objective: To programmatically obtain all protein accessions for a target Glycosyl Hydrolase (GH) family.

Materials: Snakemake workflow, Python 3.10+, and the biopython and requests libraries.

- Input Preparation: Create a file (`config.yaml`) specifying the target CAZy family (e.g., `GH7`).

- Rule Execution: The Snakemake rule `fetch_cazy` executes a Python script.

- Data Fetching: The script sends an HTTP request to the CAZy API (`www.cazy.org/api`). For GH7, the query is `http://www.cazy.org/api/family/GH7/proteins?format=json`.

- Data Parsing: Parse the JSON response to extract UniProt accession numbers and protein names.

- Output: A tab-separated file (`results/cazy_GH7_members.tsv`) is created, containing the columns `UniProt_Acc`, `Protein_Name`, and `CAZy_Family`.
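
The parsing and output steps might look like the following. The structure of the JSON payload (a top-level "proteins" list whose entries carry "uniprot" and "name" keys) is an assumption made for illustration, not a documented CAZy response format; adjust the keys to whatever the request actually returns.

```python
import csv
import io


def cazy_json_to_tsv(payload, family, handle):
    """Write UniProt_Acc / Protein_Name / CAZy_Family rows from a parsed JSON payload.

    Assumed (hypothetical) payload layout:
        {"proteins": [{"uniprot": "<accession>", "name": "<protein name>"}, ...]}
    Entries without a UniProt accession are skipped. Returns the number of rows written.
    """
    writer = csv.writer(handle, delimiter="\t", lineterminator="\n")
    writer.writerow(["UniProt_Acc", "Protein_Name", "CAZy_Family"])
    written = 0
    for entry in payload.get("proteins", []):
        accession = entry.get("uniprot")
        if not accession:
            continue
        writer.writerow([accession, entry.get("name", ""), family])
        written += 1
    return written


# In a Snakemake script the handle would be open(snakemake.output[0], "w");
# an in-memory buffer and made-up entries are used here for illustration.
buffer = io.StringIO()
payload = {"proteins": [{"uniprot": "ACC0001", "name": "Example protein"}, {"name": "no accession"}]}
n_rows = cazy_json_to_tsv(payload, "GH7", buffer)
print(buffer.getvalue())
```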

Protocol 2: Querying BRENDA for Kinetic Parameters

Objective: To extract kinetic data (KM, kcat) for a specified Enzyme Commission (EC) number.

Materials: Snakemake, brenda-py Python package, BRENDA license key.

- Authentication: Store the BRENDA license key securely in the workflow configuration.

- Rule Definition: The `query_brenda` rule takes an EC number (e.g., `3.2.1.176`) as input.

- Parameter Extraction: Using `brenda-py`, query all `KM_VALUE` and `TURNOVER_NUMBER` parameters.

- Data Filtering: Filter results by organism (e.g., Trichoderma reesei) and substrate. Calculate mean ± SD for replicates.

- Output: A structured file (`results/brenda_kinetics_3.2.1.176.tsv`) with the columns `Substrate`, `KM_mM`, `kcat_per_s`, `Organism`, and `Reference`.
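
The filtering and summary step can be sketched as follows, assuming the BRENDA results have already been parsed into simple dictionaries. The record keys (organism, substrate, km_mM) and the function name are hypothetical; they are not the field names returned by brenda-py or by the BRENDA web service.

```python
from collections import defaultdict
from statistics import mean, stdev


def summarize_km(records, organism):
    """Summarize KM values (mM) per substrate for one organism.

    records: iterable of dicts with hypothetical keys "organism", "substrate", "km_mM".
    Returns {substrate: (mean, sd, n)}; sd is None when only one value exists.
    """
    grouped = defaultdict(list)
    for rec in records:
        if rec["organism"] == organism and rec.get("km_mM") is not None:
            grouped[rec["substrate"]].append(float(rec["km_mM"]))
    summary = {}
    for substrate, values in sorted(grouped.items()):
        sd = stdev(values) if len(values) > 1 else None
        summary[substrate] = (mean(values), sd, len(values))
    return summary


records = [  # made-up values for illustration
    {"organism": "Trichoderma reesei", "substrate": "substrate_A", "km_mM": 1.0},
    {"organism": "Trichoderma reesei", "substrate": "substrate_A", "km_mM": 3.0},
    {"organism": "Trichoderma reesei", "substrate": "substrate_B", "km_mM": 0.5},
    {"organism": "Aspergillus niger", "substrate": "substrate_A", "km_mM": 9.0},
]
for substrate, (m, sd, n) in summarize_km(records, "Trichoderma reesei").items():
    print(substrate, m, sd, n)
```

Reporting n alongside mean and SD makes single-measurement entries easy to spot before they are used for ranking.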

Protocol 3: Integrated Annotation Pipeline via Snakemake

Objective: To unify data from CAZy, MEROPS, and BRENDA for a target enzyme sequence via its UniProt ID.

Materials: Integrated Snakemake workflow, all listed databases.

- Start Point: Input a UniProt ID (e.g., `P00784`) into the workflow.

- Parallel Queries: The workflow triggers concurrent rules:

  - Rule A: Maps the ID to a CAZy family via the CAZy-UniProt cross-reference.

  - Rule B: Maps the ID to a MEROPS peptidase family via the MEROPS flat-file download.

  - Rule C: Fetches all kinetic data for the protein's EC number from BRENDA.

- Data Aggregation: A consolidation rule (`aggregate_annotations`) merges all results into a single JSON document.

- Validation: Check for conflicting annotations (e.g., CAZy family vs. MEROPS clan).

- Final Output: A comprehensive annotation file (`results/{uniprot_id}_full_annotation.json`) ready for candidate prioritization.
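
A consolidation rule could call a function like the one below. It is a sketch under stated assumptions: the function mirrors the aggregate_annotations rule name but is hypothetical, each source is assumed to deliver a list of family identifiers or kinetic records, and a "conflict" is interpreted as a protein receiving both a CAZy and a MEROPS assignment. Such proteins are flagged for manual review rather than discarded, because multi-domain enzymes can legitimately carry both.

```python
import json


def aggregate_annotations(uniprot_id, cazy=None, merops=None, brenda=None):
    """Merge per-source annotations into one JSON-serializable record.

    cazy, merops: lists of family identifiers (None or empty when there is no hit)
    brenda:       list of kinetic-parameter dicts for the protein's EC number
    """
    record = {
        "uniprot_id": uniprot_id,
        "cazy_families": sorted(set(cazy or [])),
        "merops_families": sorted(set(merops or [])),
        "brenda_kinetics": list(brenda or []),
        "warnings": [],
    }
    # Flag, rather than reject, proteins with both assignments: multi-domain
    # enzymes can legitimately carry a CAZy and a MEROPS family.
    if record["cazy_families"] and record["merops_families"]:
        record["warnings"].append("CAZy and MEROPS assignments both present; review domains")
    if not (record["cazy_families"] or record["merops_families"]):
        record["warnings"].append("no CAZy or MEROPS assignment")
    return record


# Illustrative inputs only
print(json.dumps(aggregate_annotations("P00784", merops=["C01.001"]), indent=2))
```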

Visualizations

Database Integration in Snakemake Workflow

Protocol: Kinetic Data from BRENDA

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Digital Tools & Resources for the Workflow

| Item / Resource | Function / Description | Source / Example |

|---|---|---|

| Snakemake | Workflow management system to create reproducible and scalable data analyses. | https://snakemake.github.io |

| Biopython | Python library for biological computation; used for parsing FASTA, GenBank, and API calls. | https://biopython.org |

| Brenda-py | Official Python client for programmatically querying the BRENDA database. | https://pypi.org/project/brenda-py |

| CAZy API | Programmatic interface (REST) to retrieve CAZy family and protein data in JSON format. | http://www.cazy.org/api |

| MEROPS Flatfiles | Regularly updated text files containing all peptidase and inhibitor data for local parsing. | FTP: ftp.ebi.ac.uk/pub/databases/merops |

| UniProt REST API | Web service to retrieve protein data in various formats (JSON, XML, FASTA) by accession. | https://www.uniprot.org/help/api |

| Conda/Mamba | Package and environment management to ensure dependency stability across analyses. | https://mamba.readthedocs.io |

| Graphviz (Dot) | Open-source graph visualization software used to render workflow diagrams. | https://graphviz.org |

Application Notes: Snakemake in Targeted Enzyme Discovery

Within a thesis focused on developing a Snakemake workflow for targeted enzyme discovery, the platform's core features directly address critical research bottlenecks. Enzyme discovery pipelines involve sequential, data-dependent steps: genome mining, sequence analysis, homology modeling, cloning, expression, and functional assays. Snakemake orchestrates the computational steps into a single, reproducible, and scalable workflow and can process the data returned from the wet-lab stages.

Table 1: Quantitative Impact of Adopting Snakemake for Bioinformatics Workflows

| Metric | Traditional Scripting | Snakemake Workflow | Typical Improvement (workload-dependent) |
|---|---|---|---|
| Reproducibility | Manual documentation; hard-coded paths | Declarative, version-controlled rule definitions | High (automated environment and data provenance) |
| Pipeline Execution Time | Linear, manual step triggering | Automatic parallelization of independent jobs | Up to 90% reduction on multi-core systems |
| Computational Resource Use | Often underutilized | Configurable per-rule resource management (cores, memory) | 30-70% more efficient |
| Error Recovery | Full restart or manual intervention | Completed outputs are kept; re-runs execute only missing or incomplete jobs | Saves >50% time after mid-pipeline failures |
| Scalability (HPC/Cloud) | Requires extensive manual modification | Native support for cluster and cloud execution | Same workflow runs from laptop to cluster |

Detailed Experimental Protocols

Protocol 1: Snakemake Workflow for In Silico Enzyme Candidate Identification

This protocol details the computational steps for identifying putative hydrolase enzymes from metagenomic data, a common first step in discovery pipelines.

- Input Preparation: Place raw paired-end metagenomic reads (e.g., `sample_R1.fastq`, `sample_R2.fastq`) in the `data/raw` directory. Create a sample sheet (`samples.tsv`) mapping sample IDs to file paths.

- Quality Control & Assembly:

  - Implement a Snakemake rule using FastQC (v0.12.1) for initial quality reports.

  - Implement a rule using Trimmomatic (v0.39) to remove adapters and low-quality bases (parameters: `ILLUMINACLIP:TruSeq3-PE.fa:2:30:10 LEADING:3 TRAILING:3 SLIDINGWINDOW:4:15 MINLEN:36`).

  - Implement a rule for de novo assembly using MEGAHIT (v1.2.9) with default parameters.

- Open Reading Frame (ORF) Prediction: Implement a rule using Prodigal (v2.6.3) in metagenome mode (`-p meta`) on the assembled contigs (`*.fa`), writing nucleotide (`*.fna`, via `-d`) and amino acid (`*.faa`, via `-a`) sequence files.

- Homology-Based Screening: Implement a rule that runs HMMER (v3.3.2) `hmmsearch` on the `*.faa` proteins with the Pfam profile HMM for the enzyme family of interest (e.g., `PF07859`, alpha/beta hydrolase fold). Retain hits with an E-value < 1e-10.

- Output Consolidation: A final rule collates all high-confidence hits into a single annotated FASTA file (`final_candidates.faa`) with associated metadata (sample origin, contig length, HMM score).
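
The E-value filter in the screening step can be applied to HMMER's `--tblout` table with a short parser. The column positions follow the HMMER3 per-target table (target name, target accession, query name, query accession, then the full-sequence E-value and bit score); the function name and the example lines are made up, and the same cutoff can alternatively be enforced inside hmmsearch with its `-E` reporting threshold.

```python
def parse_tblout(lines, max_evalue=1e-10):
    """Yield (target, query, evalue, bit_score) for hits below an E-value cutoff.

    Expects HMMER3 --tblout text: whitespace-separated columns in which field 1 is
    the target name, field 3 the query name, field 5 the full-sequence E-value and
    field 6 the full-sequence bit score. Lines starting with '#' are comments.
    """
    for line in lines:
        if not line.strip() or line.startswith("#"):
            continue
        fields = line.split(maxsplit=18)  # 19th field is a free-text description
        evalue = float(fields[4])
        if evalue < max_evalue:
            yield fields[0], fields[2], evalue, float(fields[5])


example = [  # made-up lines in tblout layout
    "# target name  accession  query name  accession  E-value  score ...",
    "contig_7_3 - Abhydrolase_3 - 2.1e-45 152.3 0.1 3e-45 151.8 0.1 1.2 1 0 0 1 1 1 1 putative esterase",
    "contig_9_1 - Abhydrolase_3 - 1e-05 25.0 0.2 2e-05 24.1 0.2 1.1 1 0 0 1 1 1 1 -",
]
for hit in parse_tblout(example):
    print(hit)
```

Because the function accepts any iterable of lines, an open file handle for the tblout output can be passed directly.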

Protocol 2: Snakemake-Driven Structural Characterization Pipeline

This protocol follows Protocol 1, taking candidate sequences for 3D structure prediction and analysis.

- Input: The `final_candidates.faa` file from Protocol 1.

- Multiple Sequence Alignment (MSA): Implement a rule using Clustal Omega (v1.2.4) or MAFFT (v7.505) to align the candidate sequences with sequences of known reference structures.

- Homology Modeling: Implement a rule using MODELLER (v10.4) or a wrapper for SWISS-MODEL. The rule downloads a suitable template (e.g., PDB ID: 1EXA) and generates 5 models per candidate.

- Model Evaluation: Implement a rule that selects the best model for each candidate using the DOPE score (computed by MODELLER) or the QMEAN score (computed by the SWISS-MODEL/QMEAN server).

- Active Site Analysis: Implement a rule using PyMOL or fpocket to analyze the active-site cavity of each predicted model, generating report files.
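
DOPE-based model selection reduces to picking the lowest score per candidate, since more negative DOPE values indicate better-scoring models. The sketch assumes the DOPE scores have already been collected into a dictionary and that model files follow a `<candidate>.B<n>.pdb` naming pattern with no dots in the candidate name; both are assumptions, and the function name is hypothetical.

```python
from collections import defaultdict


def best_models(dope_scores):
    """Return {candidate: best model file}, choosing the lowest (most negative) DOPE score.

    dope_scores: {model_file: dope_score}; file names are assumed to look like
    '<candidate>.B<n>.pdb' with no '.' inside <candidate>.
    """
    per_candidate = defaultdict(list)
    for model_file, score in dope_scores.items():
        candidate = model_file.split(".", 1)[0]
        per_candidate[candidate].append((score, model_file))
    # min() on (score, file) tuples picks the most negative score
    return {cand: min(entries)[1] for cand, entries in per_candidate.items()}


scores = {  # made-up scores for illustration
    "cand1.B99990001.pdb": -30000.5,
    "cand1.B99990002.pdb": -31000.2,
    "cand2.B99990001.pdb": -25000.0,
}
print(best_models(scores))
```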

Visualizations

Diagram 1: Snakemake Enzyme Discovery Workflow

Diagram 2: Snakemake Rule Dependency Logic

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Computational Tools & Resources for Snakemake-Driven Enzyme Discovery

| Item | Function in Workflow | Example/Note |
|---|---|---|
| Snakemake (v7.32+) | Core workflow management system. Enforces reproducible and scalable pipeline execution. | Installation via Conda/Mamba is recommended. |
| Conda/Mamba | Environment and package manager. Creates isolated software environments for each pipeline step. | Critical for reproducibility of tool versions. |
| HMMER Suite | Scans protein sequences against profile HMM databases (e.g., Pfam) to identify enzyme families. | hmmsearch is the key command. |
| Prodigal | Predicts protein-coding genes (ORFs) in microbial (meta)genomic sequences. | Run in -p meta mode for complex communities. |
| MEGAHIT | Efficient de novo assembler for large and complex metagenomic datasets. | Used for uncultivable microbial samples. |
| MODELLER | Generates 3D homology models of protein structures based on known templates. | Requires a PDB template of a related enzyme. |
| Conda Forge/Bioconda | Community repositories providing >10,000 bioinformatics packages as Conda recipes. | Primary source for installing tools within Snakemake. |
| Pfam Database | Curated collection of protein family HMM profiles. Used for functional annotation. | Profile for target enzyme family (e.g., hydrolase) is required. |
| Git | Version control for the Snakemake workflow script (Snakefile), configs, and analysis code. | Tracks all changes to the computational methods. |
| Singularity/Apptainer | Containerization platform. Used with Snakemake to encapsulate entire software environments. | Strengthens reproducibility on HPC clusters. |

Metagenomics has revolutionized our ability to access the genetic potential of uncultured microorganisms from any environment. This approach functions as a powerful discovery engine, bypassing the need for cultivation by extracting and sequencing DNA directly from complex samples like soil, ocean water, or the human gut. The resulting sequence data is assembled and binned to reconstruct genomes or gene fragments, culminating in extensive gene catalogs. These catalogs are treasure troves for identifying novel enzymes, biosynthetic pathways, and bioactive compounds with applications in drug discovery, industrial biocatalysis, and bioremediation.

Within the context of a targeted enzyme discovery thesis, a reproducible and scalable bioinformatics workflow is paramount. The Snakemake workflow management system provides an ideal framework for constructing a pipeline that standardizes the process from raw sequencing reads to a curated gene catalog, enabling the identification of target enzyme families (e.g., glycoside hydrolases, polyketide synthases) across multiple metagenomic datasets.

Key Protocols for Metagenomic Gene Catalog Construction

Protocol 2.1: Environmental DNA Extraction and Library Preparation

Objective: To obtain high-quality, high-molecular-weight DNA from a complex environmental sample suitable for shotgun metagenomic sequencing.

Materials: See The Scientist's Toolkit below (Table 3).

Method:

- Sample Collection & Stabilization: Collect the sample (e.g., 1 g soil, or 200 mL water filtered onto a 0.22 µm membrane). Immediately freeze in liquid nitrogen or preserve in a DNA/RNA stabilization buffer.

- Cell Lysis: Use a combination of mechanical (bead-beating), chemical (lysozyme, SDS), and thermal lysis. For soil, subject sample to bead-beating in lysis buffer for 45 seconds at 6.0 m/s.

- DNA Purification: Remove contaminants (humic acids, proteins) using CTAB or dedicated commercial kit columns. Perform precipitations with isopropanol.

- Quality Assessment: Verify DNA integrity via pulsed-field gel electrophoresis (>20 kb ideal) and quantify using Qubit fluorometry. Ensure A260/A280 ratio is ~1.8.

- Library Preparation: Fragment DNA to ~350 bp (e.g., using ultrasonication). Perform end-repair, A-tailing, and adapter ligation using a commercial kit (e.g., Illumina TruSeq). Amplify library with limited-cycle PCR.

- Sequencing: Pool libraries and sequence on an Illumina NovaSeq platform to a target depth of ≥50 million 150-bp paired-end reads per sample.

Protocol 2.2: Snakemake Workflow for Read Processing, Assembly, and Gene Prediction

Objective: To deploy a standardized, reproducible pipeline for converting raw reads into a predicted protein catalog.

Prerequisites: Install Snakemake, conda, and required bioinformatics tools (FastQC, Trimmomatic, MEGAHIT, metaSPAdes, MetaGeneMark, Prokka).

Workflow Configuration (config.yaml):

Core Snakemake Rulefile (Snakefile):

Execution: Run the workflow with snakemake --cores 16 --use-conda.
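
The config.yaml and Snakefile contents are not reproduced here. As a sketch of the pattern a Snakefile for this protocol typically starts with, the plain-Python code below reads the sample sheet and expands a per-sample output pattern into the list of final targets, which is the job Snakemake's expand() does in a rule all. The column names (sample, r1, r2), the output path pattern, and the function names are hypothetical.

```python
import csv
import io


def read_samples(handle):
    """Parse a tab-separated sample sheet into {sample_id: (r1_path, r2_path)}.

    Assumes hypothetical column names: sample, r1, r2.
    """
    reader = csv.DictReader(handle, delimiter="\t")
    return {row["sample"]: (row["r1"], row["r2"]) for row in reader}


def final_targets(samples, pattern="results/{sample}/proteins.faa"):
    """Expand an output pattern over all sample IDs (what expand() does in a Snakefile)."""
    return [pattern.format(sample=s) for s in sorted(samples)]


sheet = io.StringIO(
    "sample\tr1\tr2\n"
    "soil01\tdata/raw/soil01_R1.fastq.gz\tdata/raw/soil01_R2.fastq.gz\n"
    "soil02\tdata/raw/soil02_R1.fastq.gz\tdata/raw/soil02_R2.fastq.gz\n"
)
samples = read_samples(sheet)
print(final_targets(samples))
```

Keeping the sample sheet as the single source of truth means adding a sample only requires a new row; the target list and the downstream jobs follow automatically.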

Protocol 2.3: Targeted Enzyme Discovery from Gene Catalogs

Objective: To screen the assembled gene catalog for sequences homologous to a target enzyme family. Method:

- Catalog Compilation: Concatenate all per-sample `.faa` files and cluster them at 95% identity with cd-hit to build a non-redundant catalog (`cd-hit -i catalog.faa -o catalog_nr.faa -c 0.95`).

- Homology Search: Build a profile Hidden Markov Model (HMM) with `hmmbuild` from a multiple sequence alignment of known target enzymes (e.g., from Pfam). Search the catalog against this profile using `hmmsearch` (`hmmsearch --cpu 8 --tblout hits.txt pfam_profile.hmm catalog_nr.faa`).

- Functional Annotation: Annotate candidate hits with known domains using `hmmscan` against the Pfam database.

- Phylogenetic Analysis: Align candidate sequences with references using MAFFT, build a phylogenetic tree with FastTree, and visualize it to identify novel clades.

- Priority Ranking: Rank candidates based on sequence completeness, novelty (distance to known sequences), and domain architecture complexity.
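
The three ranking criteria can be combined into a single weighted score. This sketch is illustrative: the dictionary keys, the 0.4/0.4/0.2 weights, and the use of 100 minus the best-hit percent identity as the novelty measure are assumptions, not values prescribed by the protocol.

```python
def rank_candidates(candidates, weights=(0.4, 0.4, 0.2)):
    """Sort candidates by a weighted score, highest first.

    Each candidate is a dict with hypothetical keys:
      complete:     True if the ORF has both start and stop codons
      max_identity: percent identity (0-100) to the closest known reference
      n_domains:    number of annotated Pfam domains
    """
    w_complete, w_novel, w_domains = weights
    max_domains = max((c["n_domains"] for c in candidates), default=0) or 1

    def score(c):
        completeness = 1.0 if c["complete"] else 0.0
        novelty = (100.0 - c["max_identity"]) / 100.0   # more distant = more novel
        architecture = c["n_domains"] / max_domains      # scaled to 0-1
        return w_complete * completeness + w_novel * novelty + w_domains * architecture

    return sorted(candidates, key=score, reverse=True)


cands = [  # made-up candidates
    {"id": "a", "complete": True, "max_identity": 40.0, "n_domains": 2},
    {"id": "b", "complete": False, "max_identity": 30.0, "n_domains": 1},
    {"id": "c", "complete": True, "max_identity": 95.0, "n_domains": 1},
]
print([c["id"] for c in rank_candidates(cands)])
```

Scaling each term to the 0-1 range keeps the weights interpretable as relative priorities.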

Table 1: Representative Metagenomic Sequencing and Assembly Statistics

| Metric | Soil Sample | Marine Sample | Human Gut Sample | Unit |
|---|---|---|---|---|
| Sequencing Depth | 80 | 60 | 100 | Million read pairs (2 × 150 bp) |
| Raw Data | 24 | 18 | 30 | Gbp |
| Post-QC Reads | 76.5 | 78.2 | 82.1 | % retained |
| Number of Contigs | 1,200,000 | 850,000 | 450,000 | Count (>500 bp) |
| N50 Contig Length | 2,450 | 3,100 | 5,800 | Base pairs |
| Predicted Genes | 1.8 | 1.2 | 0.9 | Million |
| Non-Redundant Genes | 1.1 | 0.8 | 0.6 | Million (95% identity) |
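
N50, reported in the table above, is the contig length at which contigs of that length or longer contain at least half of all assembled bases. A minimal implementation is shown below (the function name is illustrative); apply the same minimum-length cutoff used for the contig count (here >500 bp) before calling it so the statistics are comparable.

```python
def n50(lengths):
    """Return the N50 of a collection of contig lengths.

    Contigs are sorted longest first and their lengths accumulated; N50 is the
    length at which the running total first reaches half of all bases.
    Returns 0 for an empty input.
    """
    ordered = sorted(lengths, reverse=True)
    half = sum(ordered) / 2
    running = 0
    for length in ordered:
        running += length
        if running >= half:
            return length
    return 0


print(n50([2, 3, 4, 5, 6, 7, 8, 9, 10]))  # total 54; 10 + 9 + 8 = 27 reaches half -> 8
```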

Table 2: Enzyme Discovery Yield from a 10-Sample Soil Metagenome Catalog

| Target Enzyme Class | Pfam Profile | Candidate Hits | Complete ORFs | Novel Clades |
|---|---|---|---|---|
| Glycoside Hydrolases | PF00150 (Cel5) | 1,250 | 890 | 3 |
| Proteases (Serine) | PF00089 (Trypsin) | 680 | 550 | 2 |
| Polyketide Synthases | PF00109 (KS domain) | 95 | 42 | 5 |
| Laccases | PF00394 (Cu-oxidase) | 310 | 180 | 1 |
| Esterases/Lipases | PF00151 (Lipase) | 720 | 610 | 4 |

Complete ORFs: open reading frames with both a start and a stop codon. Novel Clades: monophyletic branches containing only environmental sequences.

Visualizations

Metagenomics Discovery Pipeline Overview

Snakemake for Reproducible Gene Catalog

The Scientist's Toolkit

Table 3: Essential Research Reagent Solutions for Metagenomic Workflows

| Item | Function & Rationale | Example Product |
|---|---|---|
| DNA/RNA Shield | Immediate biological sample preservation at point of collection. Inactivates nucleases and stabilizes community profile. | Zymo Research DNA/RNA Shield |
| Magnetic Bead-Based Cleanup Kits | High-throughput, automatable purification of DNA from complex samples, removing PCR inhibitors (humics, polyphenols). | MagMAX Microbiome Ultra Kit |
| High-Fidelity DNA Polymerase | Accurate amplification of metagenomic libraries with minimal bias, essential for representing low-abundance species. | KAPA HiFi HotStart ReadyMix |
| Unique Dual Index (UDI) Kits | Multiplexing of hundreds of samples without index crosstalk, enabling large-scale comparative studies. | Illumina IDT for Illumina UDIs |
| Long-read Sequencing Chemistry | Resolving complex repeats and producing complete microbial genomes from metagenomes. | PacBio HiFi sequencing |
| HMMER Software Suite | Sensitive profile Hidden Markov Model searches for detecting distant homologs of target enzyme families in gene catalogs. | HMMER v3.4 |
| cd-hit Suite | Clustering of predicted protein sequences to generate non-redundant gene catalogs, reducing downstream analysis complexity. | cd-hit v4.8.1 |

This application note details the establishment of explicit criteria for enzyme candidate selection within a Snakemake workflow for targeted enzyme discovery. The reproducibility and scalability of the automated workflow depend on the precise, programmatic definition of success at each stage: Specificity (target engagement), Activity (catalytic function), and Expression (biochemical yield). These criteria form the decision gates within the workflow's directed acyclic graph (DAG).

Table 1: Specificity & Binding Criteria

| Criterion | Target Metric | Threshold Value | Experimental Method |
|---|---|---|---|
| Binding Affinity | Dissociation Constant (Kd) | ≤ 10 µM | Surface Plasmon Resonance (SPR) |
| Target Selectivity | ΔTm, target vs. off-targets | ≥ 2°C (target); < 1°C (off-targets) | Thermal Shift Assay |
| Theoretical Specificity | Predicted Docking Score | ≤ -7.0 kcal/mol | In silico Molecular Docking |

Table 2: Enzymatic Activity Criteria

| Criterion | Target Metric | Threshold Value | Notes |
|---|---|---|---|
| Catalytic Efficiency | kcat/KM | ≥ 10^3 M^-1 s^-1 | Initial screen |
| Thermostability | Tm (Melting Temp) | ≥ 55°C | For industrial relevance |
| pH Stability | % Activity Retention (pH 4-9) | ≥ 70% | Broad-range activity |

Table 3: Expression & Solubility Criteria

| Criterion | Target Metric | Threshold Value | Assessment Point |
|---|---|---|---|
| Soluble Yield | Purified soluble protein per culture volume | ≥ 0.5 mg/L culture | After affinity purification |
| Purity | Target band on SDS-PAGE (% of lane) | ≥ 90% | Post-purification analysis |
| Aggregation State | Monomeric Peak (% of total) | ≥ 85% | Size-Exclusion Chromatography |
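
Because these thresholds act as decision gates in the workflow, they can be encoded as data and checked programmatically. The sketch below transcribes the numeric thresholds from Tables 1-3, plus the ΔTm ≥ 2°C binding cutoff from Protocol 3.1. The measurement key names and the function name are hypothetical, treating a missing measurement as a failure is a design choice rather than something the criteria specify, and the off-target selectivity check is omitted for brevity.

```python
# Thresholds transcribed from Tables 1-3 and Protocol 3.1.
# "min": value must be >= threshold; "max": value must be <= threshold.
CRITERIA = {
    "kd_uM": ("max", 10.0),
    "delta_tm_target_C": ("min", 2.0),
    "docking_kcal_mol": ("max", -7.0),
    "kcat_over_km_per_M_s": ("min", 1e3),
    "tm_C": ("min", 55.0),
    "ph_retention_pct": ("min", 70.0),
    "yield_mg_per_L": ("min", 0.5),
    "purity_pct": ("min", 90.0),
    "monomer_pct": ("min", 85.0),
}


def failed_criteria(measurements, criteria=CRITERIA):
    """Return the names of criteria a candidate fails; a missing value counts as a failure."""
    failures = []
    for name, (direction, threshold) in criteria.items():
        value = measurements.get(name)
        if value is None:
            failures.append(name)
        elif direction == "min" and value < threshold:
            failures.append(name)
        elif direction == "max" and value > threshold:
            failures.append(name)
    return failures


candidate = {  # made-up measurements
    "kd_uM": 2.0, "delta_tm_target_C": 3.1, "docking_kcal_mol": -8.2,
    "kcat_over_km_per_M_s": 4.5e3, "tm_C": 52.0, "ph_retention_pct": 78.0,
    "yield_mg_per_L": 1.1, "purity_pct": 93.0, "monomer_pct": 88.0,
}
print(failed_criteria(candidate))
```

Returning the list of failed gates, rather than a single pass/fail flag, makes it easy to report why a candidate left the funnel.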

Experimental Protocols

Protocol 3.1: High-Throughput Thermal Shift Assay for Specificity

- Objective: Quantify target binding and selectivity via ligand-induced thermal stabilization.

- Reagents: Purified enzyme (2 µM), target ligand (10 µM), SYPRO Orange dye (5X), off-target ligands.

- Procedure:

- In a 96-well PCR plate, mix 18 µL of enzyme solution with 2 µL of ligand (or buffer control).

- Add 5 µL of 5X SYPRO Orange dye (1X final). Final reaction volume: 25 µL.

- Perform a temperature ramp from 25°C to 95°C at 1°C/min in a real-time PCR instrument.

- Monitor fluorescence intensity (excitation/emission ~470/570 nm).

- Calculate the melting temperature (Tm) as the temperature at the maximum of the first derivative of the fluorescence curve (dF/dT).

- Determine ΔTm (Tm,with ligand - Tm,buffer). A ΔTm ≥ 2°C indicates significant binding. Selectivity is confirmed if ΔTm for target is ≥ 2°C and for off-targets is < 1°C.
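
The Tm and selectivity calls can be scripted for plate-scale analysis. This is a minimal sketch: Tm is taken as the temperature with the largest central-difference slope, so its resolution is limited to the ramp step (1°C here), and the input is assumed to be trimmed to the single unfolding transition, since SYPRO Orange signal often falls again at high temperature. Function names and the curves are made up for illustration.

```python
def melting_temperature(temps, fluorescence):
    """Return the temperature at which dF/dT (central difference) is largest.

    Assumes temps are sorted ascending and the data cover one unfolding transition.
    """
    best_t, best_slope = None, float("-inf")
    for i in range(1, len(temps) - 1):
        slope = (fluorescence[i + 1] - fluorescence[i - 1]) / (temps[i + 1] - temps[i - 1])
        if slope > best_slope:
            best_t, best_slope = temps[i], slope
    return best_t


def is_selective(dtm_target, dtm_off_targets, bind_cutoff=2.0, off_cutoff=1.0):
    """Protocol 3.1 rule: target ΔTm >= 2 °C and every off-target ΔTm < 1 °C."""
    return dtm_target >= bind_cutoff and all(d < off_cutoff for d in dtm_off_targets)


temps = [40, 41, 42, 43, 44, 45, 46, 47, 48, 49]       # °C, 1 °C steps
buffer_curve = [0, 0, 1, 4, 6, 7, 7, 7, 7, 7]          # made-up fluorescence
ligand_curve = [0, 0, 0, 0, 0, 1, 4, 6, 7, 7]          # same transition, shifted
dtm = melting_temperature(temps, ligand_curve) - melting_temperature(temps, buffer_curve)
print(dtm, is_selective(dtm, [0.3, 0.6]))
```

For finer Tm estimates, a Boltzmann sigmoid fit to the transition region is a common alternative to the derivative maximum.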

Protocol 3.2: Microtiter Plate-Based Kinetic Assay (kcat/KM)

- Objective: Determine initial catalytic efficiency.

- Reagents: Purified enzyme, chromogenic/fluorogenic substrate, assay buffer.

- Procedure:

- Prepare substrate solutions across an 8-point concentration range (e.g., 0.1-5 × KM).

- In a 96-well plate, add 80 µL of substrate solution per well. Pre-incubate at assay temperature (e.g., 30°C).

- Initiate reaction by adding 20 µL of diluted enzyme. Final volume: 100 µL.

- Immediately monitor product formation by absorbance/fluorescence every 10-30 seconds for 5-10 minutes.

- Fit initial linear rates (v0) to the Michaelis-Menten equation (v0 = (Vmax * [S]) / (KM + [S])) using nonlinear regression software (e.g., GraphPad Prism).

- Calculate kcat = Vmax / [Enzyme]. Report kcat/KM.
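
For a scripted Snakemake rule, the nonlinear Michaelis-Menten fit can also be done without GraphPad Prism. The sketch below fits by least squares: for any fixed KM the best Vmax has a closed form, so only KM is searched (golden-section search on log10 KM). This is one reasonable approach rather than the document's prescribed method, and scipy.optimize.curve_fit is a common alternative. The function names and the µM/second unit convention are assumptions, and substrate concentrations must be positive.

```python
import math


def _best_vmax(substrate, rates, km):
    """Least-squares Vmax for a fixed KM (closed form: the model is linear in Vmax)."""
    x = [s / (km + s) for s in substrate]
    return sum(v * xi for v, xi in zip(rates, x)) / sum(xi * xi for xi in x)


def _sse(substrate, rates, km):
    """Residual sum of squares with Vmax profiled out."""
    vmax = _best_vmax(substrate, rates, km)
    return sum((v - vmax * s / (km + s)) ** 2 for s, v in zip(substrate, rates))


def fit_michaelis_menten(substrate, rates, tol=1e-9):
    """Fit v0 = Vmax*[S]/(KM + [S]); returns (Vmax, KM) in the input units.

    KM is searched on a log10 scale between [S]min/100 and [S]max*100 by
    golden-section search, assuming a single minimum in that range.
    """
    g = (math.sqrt(5) - 1) / 2
    a = math.log10(min(substrate) / 100)
    b = math.log10(max(substrate) * 100)
    c, d = b - g * (b - a), a + g * (b - a)
    fc, fd = _sse(substrate, rates, 10 ** c), _sse(substrate, rates, 10 ** d)
    while b - a > tol:
        if fc < fd:  # minimum lies in [a, d]
            b, d, fd = d, c, fc
            c = b - g * (b - a)
            fc = _sse(substrate, rates, 10 ** c)
        else:        # minimum lies in [c, b]
            a, c, fc = c, d, fd
            d = a + g * (b - a)
            fd = _sse(substrate, rates, 10 ** d)
    km = 10 ** ((a + b) / 2)
    return _best_vmax(substrate, rates, km), km


def catalytic_efficiency(vmax_uM_per_s, km_uM, enzyme_uM):
    """kcat = Vmax/[E] in s^-1, and kcat/KM converted to M^-1 s^-1."""
    kcat = vmax_uM_per_s / enzyme_uM
    return kcat, kcat / (km_uM * 1e-6)


s = [0.2, 0.5, 1, 2, 4, 6, 8, 10]            # µM, made-up design
v = [10 * x / (2 + x) for x in s]            # noiseless rates: Vmax = 10, KM = 2
vmax, km = fit_michaelis_menten(s, v)
print(vmax, km, catalytic_efficiency(vmax, km, enzyme_uM=0.01))
```

With real data, check the residuals and confirm that the fitted KM lies inside the tested substrate range before reporting kcat/KM.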

Visualizations

Diagram 1: Snakemake DAG for Enzyme Discovery

Diagram 2: Specificity-Activity-Expression Funnel

The Scientist's Toolkit: Research Reagent Solutions

| Reagent/Material | Supplier Examples | Function in Discovery Workflow |
|---|---|---|
| HisTrap HP Columns | Cytiva | Immobilized metal affinity chromatography (IMAC) for high-yield purification of His-tagged recombinant enzymes. |
| Chromogenic Substrates (pNP-esters) | Sigma-Aldrich, Tokyo Chemical Industry | Hydrolyzable substrates that release para-nitrophenol (yellow under alkaline conditions), enabling rapid, spectrophotometric activity screens. |
| SYPRO Orange Protein Gel Stain | Thermo Fisher Scientific | Environment-sensitive dye used in Thermal Shift Assays to monitor protein unfolding and ligand binding. |
| Biacore CM5 Sensor Chips | Cytiva | Carboxymethylated dextran on a gold surface for covalent immobilization of target molecules, enabling real-time, label-free binding kinetics via SPR. |
| HiLoad 16/600 Superdex 200 pg | Cytiva | High-resolution size-exclusion chromatography column for assessing protein purity, aggregation state, and oligomerization. |
| IPTG (Isopropyl β-D-1-thiogalactopyranoside) | GoldBio, Thermo Fisher | Chemical inducer for lac/tac promoter systems in E. coli expression, triggering recombinant protein production. |

Building the Pipeline: A Step-by-Step Snakemake Workflow from Reads to Candidates

1. Introduction and Application Notes

Within a thesis on developing a Snakemake workflow for targeted enzyme discovery, visualizing the computational pipeline as a Directed Acyclic Graph (DAG) is critical. The DAG provides a formal, reproducible representation of data provenance, rule dependencies, and execution order. This protocol details the creation and interpretation of such a DAG for a standard enzyme discovery pipeline, which integrates genomic data mining, homology modeling, and functional prediction.

2. Protocol: Generating and Interpreting the Snakemake DAG

- Objective: To generate a visual DAG of the enzyme discovery workflow and interpret key components.

- Software: Snakemake (v7+), Graphviz (system installation), Python.

- Procedure:

  - Workflow Design: Implement a Snakemake workflow (`Snakefile`) with rules for each discovery step (see Table 1).

  - DAG Generation: In the terminal, execute `snakemake --dag | dot -Tpng > workflow_dag.png`.

  - Interpretation: Open the generated PNG file. Each node is a job (one execution of a rule, labeled with the rule name and any wildcard values), and each directed edge is a dependency from the job that produces a file to the job that consumes it. For a condensed rule-level view, use `snakemake --rulegraph`; to include input and output file names, use `snakemake --filegraph`.

- Key Output: A DAG diagram visualizing the entire workflow's data flow and rule hierarchy.

3. Workflow DAG Visualization

The core enzyme discovery pipeline DAG, generated from the Snakemake rules, is depicted below.

Diagram Title: Snakemake DAG for Enzyme Discovery Pipeline

4. Core Snakemake Rules for Enzyme Discovery

Table 1: Summary of Key Workflow Rules and Data Flow

| Rule Name | Input(s) | Output(s) | Software/Tool | Core Function |
|---|---|---|---|---|
| BLAST_homologs | metagenome_db.fasta, query.faa | homologs.fasta | DIAMOND/BLAST | Identifies homologous sequences from metagenomic data. |
| build_msa | homologs.fasta | alignment.clustal | Clustal Omega/MAFFT | Generates a multiple sequence alignment of homologs. |
| build_hmm | alignment.clustal | profile.hmm | HMMER | Creates a profile Hidden Markov Model for sensitive searches. |
| comparative_modeling | profile.hmm, templates.pdb | models/ (PDB files) | MODELLER, SWISS-MODEL | Builds 3D protein structure models. |
| predict_active_sites | models/ (PDB files) | active_sites.tsv | CASTp, DeepSite | Predicts catalytic and binding pockets from 3D models. |
| generate_report | All major outputs (homologs.fasta, active_sites.tsv, etc.) | discovery_report.pdf | Pandas, Matplotlib, ReportLab | Compiles results into a comprehensive summary document. |
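Snakemake builds its DAG by matching each rule's declared inputs to other rules' outputs, and it then schedules jobs in dependency order. The sketch below reproduces that idea for the rules in Table 1 using Python's standard-library `graphlib` (Python 3.9+). It is a simplified model, not Snakemake's scheduler: it ignores wildcards and treats the `models/` directory as a single file.

```python
from graphlib import TopologicalSorter

# Rule -> (inputs, outputs), transcribed from Table 1; "models/" stands in for the PDB directory.
RULES = {
    "BLAST_homologs": (["metagenome_db.fasta", "query.faa"], ["homologs.fasta"]),
    "build_msa": (["homologs.fasta"], ["alignment.clustal"]),
    "build_hmm": (["alignment.clustal"], ["profile.hmm"]),
    "comparative_modeling": (["profile.hmm", "templates.pdb"], ["models/"]),
    "predict_active_sites": (["models/"], ["active_sites.tsv"]),
    "generate_report": (["homologs.fasta", "active_sites.tsv"], ["discovery_report.pdf"]),
}


def execution_order(rules):
    """Return rule names so that every rule follows the rules producing its inputs."""
    producer = {out: name for name, (_, outs) in rules.items() for out in outs}
    # Map each rule to its predecessor rules; inputs that no rule produces are raw data.
    graph = {
        name: {producer[f] for f in ins if f in producer}
        for name, (ins, _) in rules.items()
    }
    return list(TopologicalSorter(graph).static_order())


print(execution_order(RULES))
```

Snakemake performs the same kind of matching on concrete file paths once wildcards are resolved. This is why a misspelled output name shows up as a missing edge in the `--dag` image.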

5. Protocol: Active Site Prediction from Homology Models

- Objective: To identify and characterize putative active sites from computationally modeled enzyme structures.

- Reagents/Materials: Homology model in PDB format, computing cluster or workstation.

- Software: CASTp 3.0 (web server or local) or DeepSite (DL-based).

- Procedure (using CASTp):

- Input Preparation: Ensure your homology model PDB file is correctly formatted (ATOM records only).

- Submission: Upload the PDB file to the CASTp web server (http://sts.bioe.uic.edu/castp/).

- Parameter Setting: Set the probe radius to 1.4 Å (approximating a water molecule). Use default settings for other parameters.

- Execution: Run the analysis. The server computes pockets and reports their solvent-accessible (SA) and molecular-surface (MS) areas and volumes.

- Analysis: Review the ranked list of predicted pockets. Prioritize the pocket that is lined by conserved catalytic residues identified from the MSA and has chemically complementary features. This is often, but not necessarily, the largest pocket. Export its coordinates and volume data.

- Expected Output: A table of predicted binding pockets with metrics (volume, area, residues).
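The cross-referencing in the Analysis step can be scripted once pocket residue lists have been exported. In the minimal sketch below, pockets are assumed to have been transcribed into dicts with hypothetical keys (`id`, `volume`, `residues`); this is not CASTp's own export format. Catalytic residue numbers come from the MSA. Pockets are ranked by how many catalytic residues line them, with volume only breaking ties.

```python
def rank_pockets(pockets, catalytic_residues):
    """Rank pockets by overlap with conserved catalytic residues, then by volume.

    pockets: list of dicts with hypothetical keys "id", "volume" (cubic angstroms) and
    "residues" (residue numbers lining the pocket), e.g. transcribed from a CASTp export.
    catalytic_residues: residue numbers judged catalytic from the MSA.
    """
    catalytic = set(catalytic_residues)
    ranked = [
        {**pocket, "catalytic_hits": sorted(catalytic & set(pocket["residues"]))}
        for pocket in pockets
    ]
    # More catalytic residues first; larger volume breaks ties.
    ranked.sort(key=lambda p: (len(p["catalytic_hits"]), p["volume"]), reverse=True)
    return ranked


# Made-up example pockets and catalytic residue numbers.
pockets = [
    {"id": 1, "volume": 900.0, "residues": [10, 11, 12, 40]},
    {"id": 2, "volume": 350.0, "residues": [105, 143, 197, 198]},
    {"id": 3, "volume": 400.0, "residues": [50, 143]},
]
ranking = rank_pockets(pockets, catalytic_residues=[105, 143, 197])
print([(p["id"], p["catalytic_hits"]) for p in ranking])
```

In this made-up example the largest pocket (id 1) ranks last because it contains none of the catalytic residues. This illustrates why pocket size alone is a weak selection criterion.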

6. The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Digital and Computational Tools for the Workflow

| Item | Function/Benefit | Example/Provider |
|---|---|---|
| Snakemake | Workflow management system enabling reproducible, scalable, and modular data analysis pipelines. | https://snakemake.github.io/ |
| DIAMOND | Ultra-fast protein sequence aligner for BLAST-like searches against large metagenome databases. | https://github.com/bbuchfink/diamond |
| HMMER | Suite for profiling with Hidden Markov Models, essential for sensitive remote homology detection. | http://hmmer.org/ |
| SWISS-MODEL | Fully automated, web-based protein structure homology-modelling server. | https://swissmodel.expasy.org/ |
| CASTp | Computes topographic descriptors of protein structures, identifying and measuring binding pockets. | University of Illinois at Chicago |
| Conda/Bioconda | Package and environment manager crucial for installing and maintaining consistent bioinformatics tool versions. | https://conda.io/, https://bioconda.github.io/ |
| Jupyter Notebook | Interactive environment for exploratory data analysis, visualization, and documenting interim results. | https://jupyter.org/ |

Application Notes

Within the thesis project "A Scalable Snakemake Workflow for High-Throughput Targeted Enzyme Discovery," the initial preprocessing of raw sequencing reads is the critical first step that determines downstream analytical success. This stage ensures that low-quality data, adapter contamination, and sequencing artifacts do not bias subsequent assembly, annotation, and functional screening for novel biocatalysts. Implementing Rule 1 as a modular, reproducible Snakemake rule ensures consistent quality assessment and trimming across the hundreds of metagenomic or transcriptomic samples typical of discovery campaigns.

Recent benchmarking studies (2023-2024) indicate that rigorous preprocessing improves functional gene annotation accuracy by 15-25% and reduces false-positive variant calls in heterogeneous environmental samples. FastQC for diagnostic reporting, Trimmomatic for adapter and quality trimming, and MultiQC for aggregated visualization form a widely used combination for robust NGS pipelines in industrial enzyme discovery.

Table 1: Impact of Preprocessing Parameters on Enzyme Discovery Metrics

| Parameter | Setting | Post-Trim Mean Read Length (bp) | Gene Call Recovery Rate (%) | Effect on Downstream Assembly (N50) |
|---|---|---|---|---|
| No Trimming | N/A | 150 | 100 (Baseline) | 1.2 Mb |
| SLIDINGWINDOW (4:20) | Default | 132 | 98.5 | 1.5 Mb |
| SLIDINGWINDOW (4:30) | Aggressive | 118 | 96.8 | 1.7 Mb |
| MINLEN (36) | Standard | 130 | 98.2 | 1.55 Mb |
| MINLEN (75) | Stringent | 140 | 97.1 | 1.65 Mb |

Experimental Protocols

Protocol 1.1: Initial Quality Assessment with FastQC

Objective: Generate per-base sequence quality, adapter contamination, and GC content reports for raw FASTQ files.

- Input: Paired-end or single-end raw FASTQ files (`*.fastq.gz`).

- Software: FastQC v0.12.1.

- Command: `fastqc --threads 4 --outdir qc/fastqc_raw/ *.fastq.gz` (the output directory must already exist; paths are illustrative).

- Output Interpretation: Examine each sample's `*_fastqc.html` report. Key warnings requiring Trimmomatic intervention include:

  - "Per base sequence quality" falling below Phred score 20 in later cycles.

  - "Adapter Content" exceeding 5%.

  - "Overrepresented sequences" > 1% of total.
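FastQC also writes a tab-separated `summary.txt` (status, module, file name) inside each `*_fastqc.zip` archive, so the triage above can be automated. The sketch below flags the three modules listed above whenever FastQC marks them WARN or FAIL. FastQC's built-in thresholds are its own defaults and do not exactly match the cut-offs listed above. The sample name `soil01` is hypothetical.

```python
import zipfile

TRIM_TRIGGERS = {"Per base sequence quality", "Adapter Content", "Overrepresented sequences"}


def flagged_modules(summary_text):
    """Return (module, status) pairs from TRIM_TRIGGERS that FastQC marked WARN or FAIL."""
    flagged = []
    for line in summary_text.splitlines():
        if not line.strip():
            continue
        status, module, _filename = line.split("\t")
        if module in TRIM_TRIGGERS and status in ("WARN", "FAIL"):
            flagged.append((module, status))
    return flagged


def summary_from_zip(zip_path):
    """Read summary.txt from a FastQC archive such as soil01_R1_fastqc.zip."""
    with zipfile.ZipFile(zip_path) as archive:
        name = next(n for n in archive.namelist() if n.endswith("/summary.txt"))
        return archive.read(name).decode()


example = (
    "PASS\tBasic Statistics\tsoil01_R1.fastq.gz\n"
    "WARN\tPer base sequence quality\tsoil01_R1.fastq.gz\n"
    "FAIL\tAdapter Content\tsoil01_R1.fastq.gz\n"
    "PASS\tOverrepresented sequences\tsoil01_R1.fastq.gz\n"
)
print(flagged_modules(example))
```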

Protocol 1.2: Read Trimming and Filtering with Trimmomatic

Objective: Remove adapters, low-quality bases, and discard short reads.

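- Input: Raw FASTQ files.

- Software: Trimmomatic-0.39.

- Reagents/Materials:

  - Adapter FASTA file (e.g., `TruSeq3-PE-2.fa` for Illumina).

  - Java Runtime Environment (JRE).

- Command for Paired-End Reads: `trimmomatic PE -threads 4 -phred33 <R1.fastq.gz> <R2.fastq.gz> <R1_paired> <R1_unpaired> <R2_paired> <R2_unpaired> ILLUMINACLIP:TruSeq3-PE-2.fa:2:30:10 SLIDINGWINDOW:4:25 MINLEN:36`. When using the standalone jar, replace `trimmomatic` with `java -jar trimmomatic-0.39.jar`.

- Parameters Justification for Enzyme Discovery:

  - `ILLUMINACLIP:TruSeq3-PE-2.fa:2:30:10`: Removes adapters with high stringency (2 seed mismatches, palindrome clip threshold 30, simple clip threshold 10) to prevent false gene fusions caused by adapter read-through.

  - `SLIDINGWINDOW:4:25`: Clips a read once the mean quality in a 4-base window drops below 25, balancing quality retention with removal of error-prone regions.

  - `MINLEN:36`: Discards reads shorter than 36 bases after trimming, so that retained reads are long enough for open-reading-frame prediction.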
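The Protocol 1.2 call can be generated per sample instead of typed by hand. The sketch below assumes the Bioconda `trimmomatic` wrapper is on `PATH` and uses a hypothetical output naming scheme (`trimmed/<sample>_R1_paired.fastq.gz`, and so on). Trimmomatic applies its steps in the order given, so adapter clipping is placed before quality trimming.

```python
import shlex


def trimmomatic_pe_cmd(sample, r1, r2, adapters="TruSeq3-PE-2.fa", outdir="trimmed",
                       threads=4, window="4:25", minlen=36):
    """Build the Protocol 1.2 Trimmomatic PE call as an argument list."""

    def out(tag):
        # Hypothetical naming scheme: <outdir>/<sample>_<R1|R2>_<paired|unpaired>.fastq.gz
        return f"{outdir}/{sample}_{tag}.fastq.gz"

    return [
        "trimmomatic", "PE", "-threads", str(threads), "-phred33",
        r1, r2,
        out("R1_paired"), out("R1_unpaired"), out("R2_paired"), out("R2_unpaired"),
        # Steps run in the order given: clip adapters before quality trimming.
        f"ILLUMINACLIP:{adapters}:2:30:10",
        f"SLIDINGWINDOW:{window}",
        f"MINLEN:{minlen}",
    ]


cmd = trimmomatic_pe_cmd("soil01", "raw/soil01_R1.fastq.gz", "raw/soil01_R2.fastq.gz")
print(shlex.join(cmd))
```

The list can be passed to `subprocess.run(cmd, check=True)`. Alternatively, `shlex.join(cmd)` yields a quoted string suitable for a Snakemake `shell:` directive.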

Protocol 1.3: Aggregated Reporting with MultiQC

Objective: Compile all FastQC and Trimmomatic reports into a single interactive document.

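- Input: All `fastqc_data.txt` and `summary.txt` files, plus the Trimmomatic log files (the captured standard error of each run).

- Software: MultiQC v1.14.

- Command: `multiqc qc/ -o qc/multiqc/`. MultiQC recursively scans the given directories for recognized tool outputs; paths are illustrative.

- Output Use: The `multiqc_report.html` provides a cohort-level view, enabling the batch-effect detection that is critical for comparative metagenomics in enzyme discovery.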

Visualizations

Diagram Title: Snakemake Preprocessing & QC Workflow

The Scientist's Toolkit

Table 2: Essential Research Reagent Solutions for NGS Preprocessing

| Item | Function in Preprocessing | Example/Supplier |
|---|---|---|
| Illumina Adapter Sequences | Specifies oligonucleotide sequences for Trimmomatic to identify and remove. Required for accurate read trimming. | File: TruSeq3-PE-2.fa (bundled with Trimmomatic). |
| High-Quality Reference Genome (Optional) | For quality control alignment to assess read integrity in known systems. | e.g., E. coli K-12 spike-in control genome. |
| Phred Score Calibration Libraries | Validates base-calling accuracy of the sequencing platform used. | PhiX Control v3 (Illumina). |
| Computational Resources | Adequate RAM and CPU to run FastQC and multithreaded Trimmomatic jobs in parallel across samples. | Minimum 16GB RAM, 4+ cores per sample. |
| Sample Tracking Sheet | Critical metadata linking sample ID to sequencing lane and library prep. | CSV file with headers: SampleID, Lane, AdapterSet. |
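The sample tracking sheet can drive the workflow directly. In the sketch below, the CSV with the `SampleID`, `Lane`, `AdapterSet` headers from Table 2 is read into a dictionary at Snakefile parse time. Each sample can then be paired with its own adapter file for `ILLUMINACLIP`. The sample IDs shown are hypothetical. `NexteraPE-PE.fa` is one of the adapter files bundled with Trimmomatic.

```python
import csv
import io


def read_sample_sheet(handle):
    """Map SampleID -> {"lane", "adapters"} from a CSV with SampleID, Lane, AdapterSet headers."""
    samples = {}
    for row in csv.DictReader(handle):
        sample_id = row["SampleID"].strip()
        if sample_id in samples:
            raise ValueError(f"duplicate SampleID in sample sheet: {sample_id}")
        samples[sample_id] = {"lane": row["Lane"].strip(), "adapters": row["AdapterSet"].strip()}
    return samples


sheet = io.StringIO(
    "SampleID,Lane,AdapterSet\n"
    "soil01,L001,TruSeq3-PE-2.fa\n"
    "soil02,L002,NexteraPE-PE.fa\n"
)
SAMPLES = read_sample_sheet(sheet)
print(SAMPLES["soil02"]["adapters"])  # adapter file to pass to ILLUMINACLIP for soil02
```

In a Snakefile, `SAMPLES` (loaded with, e.g., `open("samples.csv")`, a hypothetical path) can feed `expand()` for the target list. Rejecting duplicate IDs early avoids two samples silently writing to the same output files.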

Application Notes

This section details the integration of metagenomic assembly and binning tools into a unified Snakemake workflow designed for targeted enzyme discovery. Efficient recovery of high-quality metagenome-assembled genomes (MAGs) is critical for identifying novel biosynthetic gene clusters and catalytic proteins from complex microbial communities.

Comparative Analysis of Assembly and Binning Tools: The performance of assemblers and binners varies with dataset characteristics such as read length, community complexity, and sequencing depth. The following table summarizes key quantitative metrics from recent benchmark studies, guiding tool selection within the workflow.

Table 1: Performance Metrics for Assembly and Binning Tools

| Tool Name | Primary Use | Key Metric (NGA50)* | Key Metric (Completeness)* | Key Metric (Contamination)* | Optimal Use Case |
|---|---|---|---|---|---|
| MEGAHIT | De novo assembly | High (for complex communities) | N/A | N/A | Large, complex communities; compute/memory efficient. |
| metaSPAdes | De novo assembly | Very High (for isolate-like samples) | N/A | N/A | Lower-complexity communities or high-depth datasets. |
| MaxBin 2.0 | Contig binning | N/A | 70-90% | 0-10% | Binning based on sequence composition and abundance. |

*Representative ranges from benchmark studies; actual values are dataset-dependent.

Experimental Protocols

Protocol 1: Metagenomic Co-Assembly with MEGAHIT and metaSPAdes

This protocol describes the assembly of quality-filtered, host-depleted paired-end reads into contigs.

Materials:

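- Input: Interleaved or paired forward/reverse FASTQ files from multiple samples (e.g., `sample1_qc_R1.fq.gz`, `sample1_qc_R2.fq.gz`).

- Hardware: Multi-core server with ≥64 GB RAM for moderate-sized datasets.

- Software: MEGAHIT (v1.2.9) or metaSPAdes (v3.15.5) installed via Conda.

Method:

- Tool Selection: For a typical multi-sample enzyme discovery project, MEGAHIT is recommended for its efficiency. metaSPAdes can be used for higher-quality, less complex samples.

- Co-assembly Execution:

  - MEGAHIT: pass comma-separated lists of the per-sample files, e.g. `megahit -1 sample1_qc_R1.fq.gz,sample2_qc_R1.fq.gz -2 sample1_qc_R2.fq.gz,sample2_qc_R2.fq.gz -o ./assembly_output -t 32`. MEGAHIT stops if the output directory already exists.

  - metaSPAdes: it accepts only a single paired-end library, so first pool the per-sample reads into one R1 file and one R2 file. Then run `metaspades.py -1 pooled_R1.fq.gz -2 pooled_R2.fq.gz -o ./assembly_output -t 32`.

- Output: The primary contig file is `./assembly_output/final.contigs.fa` (MEGAHIT) or `./assembly_output/contigs.fasta` (metaSPAdes). The protocols below refer to this file as `final_contigs.fasta`.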
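The two co-assembly calls differ mainly in how multiple samples are supplied, as the sketch below shows. The thread count and the 64 GB memory cap (`-m`) are illustrative defaults, and the pooled file names are hypothetical.

```python
import shlex


def megahit_cmd(r1_files, r2_files, outdir="assembly_output", threads=32):
    """MEGAHIT co-assembly: -1/-2 take comma-separated lists of per-sample read files."""
    if len(r1_files) != len(r2_files):
        raise ValueError("every forward file needs a matching reverse file")
    return ["megahit", "-1", ",".join(r1_files), "-2", ",".join(r2_files),
            "-o", outdir, "-t", str(threads)]


def metaspades_cmd(r1_pooled, r2_pooled, outdir="assembly_output", threads=32, mem_gb=64):
    """metaSPAdes takes one paired-end library, so per-sample reads are pooled first."""
    return ["metaspades.py", "-1", r1_pooled, "-2", r2_pooled,
            "-o", outdir, "-t", str(threads), "-m", str(mem_gb)]


samples = ["sample1", "sample2"]
r1 = [f"{s}_qc_R1.fq.gz" for s in samples]
r2 = [f"{s}_qc_R2.fq.gz" for s in samples]
print(shlex.join(megahit_cmd(r1, r2)))
print(shlex.join(metaspades_cmd("pooled_R1.fq.gz", "pooled_R2.fq.gz")))
```

Gzipped FASTQ files can be pooled by plain concatenation (`cat sample*_qc_R1.fq.gz > pooled_R1.fq.gz`), because concatenated gzip members form a valid gzip stream. Keep the R1 and R2 globs in the same sample order so that read pairs stay matched.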

Protocol 2: Contig Binning with MaxBin 2.0

This protocol recovers draft genomes (MAGs) from assembled contigs using sequence composition and sample-specific abundance profiles.

Materials:

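- Input: Assembled contigs (`final_contigs.fasta`).

- Input: Read abundance files in `.abund` format for each sample, generated by aligning reads back to contigs (e.g., using Bowtie2 and `coverm`).

- Software: MaxBin 2.0 (v2.2.7), Bowtie2, `coverm`.

Method:

- Generate Abundance Profiles:

  - Map each sample's reads to the contigs with Bowtie2 and sort the alignments into a BAM file.

  - Compute mean per-contig coverage with `coverm contig --bam-files sample1.sorted.bam -m mean`.

  - Remove the header line, so that each `.abund` file holds two tab-separated columns: contig name and coverage.

- Execute MaxBin Binning: `run_MaxBin.pl -contig final_contigs.fasta -abund_list abundance_filelist.txt -out maxbin_output -thread 16`, where `abundance_filelist.txt` lists the path to one `.abund` file per line.

- Output: Binned contigs in FASTA format (e.g., `maxbin_output.001.fasta`). Each file represents one draft MAG.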
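The coverage tables must be reshaped into MaxBin's input format. The sketch below converts a single-sample `coverm contig -m mean` table (one header row, then contig and mean coverage) into a headerless `.abund` file and writes the list file passed to `-abund_list`. The file names and the exact header text are illustrative assumptions.

```python
import csv
import tempfile
from pathlib import Path


def coverm_to_abund(coverm_tsv, abund_path):
    """Convert single-sample `coverm contig -m mean` output to MaxBin's headerless .abund."""
    with open(coverm_tsv, newline="") as src, open(abund_path, "w") as dst:
        reader = csv.reader(src, delimiter="\t")
        next(reader)  # skip the header row ("Contig", "<sample> Mean")
        for row in reader:
            if row:  # ignore blank lines
                dst.write(f"{row[0]}\t{float(row[1]):.4f}\n")


def write_abund_list(abund_paths, list_path):
    """Write one .abund path per line, the layout read by run_MaxBin.pl -abund_list."""
    Path(list_path).write_text("".join(f"{p}\n" for p in abund_paths))


with tempfile.TemporaryDirectory() as tmp:
    tsv = Path(tmp, "sample1.coverm.tsv")
    tsv.write_text("Contig\tsample1.sorted Mean\nk141_1\t12.5\nk141_2\t3\n")
    abund = Path(tmp, "sample1.abund")
    coverm_to_abund(tsv, abund)
    write_abund_list([abund], Path(tmp, "abundance_filelist.txt"))
    example_abund = abund.read_text()
    example_list = Path(tmp, "abundance_filelist.txt").read_text()

print(example_abund)
```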

Protocol 3: Integrated Snakemake Rule Definition

This protocol integrates the above steps into a reproducible Snakemake rule for the thesis workflow.

Materials: Snakemake (v7+), Conda environment with required tools.

Method:

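- Define a rule in the `Snakefile` that takes the contigs and the per-sample `.abund` files as input and runs `run_MaxBin.pl` in its `shell` directive. Declare the output with `directory()`, because the number of bins is not known before the rule runs.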
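A full rule is not reproduced here. As a hedged sketch, the helper below assembles the command that such a rule's `shell` directive could run. `binning/` is a hypothetical output directory that the rule would declare as `directory("binning/")`. The shell command should create that directory (for example, with `mkdir -p binning`) before calling MaxBin.

```python
import shlex


def maxbin_cmd(contigs, abund_list, out_prefix, threads=16, min_contig_length=1000):
    """Arguments for run_MaxBin.pl as a binning rule's shell directive might call it."""
    return [
        "run_MaxBin.pl",
        "-contig", contigs,
        "-abund_list", abund_list,
        "-out", out_prefix,  # bins are written as <out_prefix>.001.fasta, .002.fasta, ...
        "-thread", str(threads),
        "-min_contig_length", str(min_contig_length),  # 1000 bp is MaxBin's default
    ]


cmd = maxbin_cmd("final_contigs.fasta", "abundance_filelist.txt", "binning/maxbin_output")
print(shlex.join(cmd))
```

Keeping the command in a function makes the thread count and length cut-off easy to expose through the workflow's config file rather than hard-coding them in the rule.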

Visualizations

Title: Metagenomic Assembly and Binning Workflow for Enzyme Discovery

The Scientist's Toolkit

Table 2: Essential Research Reagents and Materials for Metagenomic Assembly & Binning

| Item | Function/Application | Notes for Workflow Integration |
|---|---|---|
| High-Quality DNA Extraction Kit (e.g., DNeasy PowerSoil Pro) | Inhibitor-free DNA isolation from environmental samples. | Critical for long, accurate reads; input for library prep. |
| Illumina-Compatible Library Prep Kit | Prepares sequencing libraries from metagenomic DNA. | Ensure insert size is optimal for assembler (e.g., 300-800bp). |
| Bowtie2 | Fast, sensitive read alignment for generating coverage profiles. | Used in Snakemake rule to map reads back to contigs for binning. |
| CheckM | Assesses completeness and contamination of recovered MAGs. | Essential QC step after binning to filter low-quality MAGs. |
| Conda Environment (metagenomics.yaml) | Manages precise software versions for reproducibility. | Includes MEGAHIT, metaSPAdes, MaxBin2, Bowtie2, coverm. |
| High-Performance Computing Cluster | Provides necessary CPU cores and RAM for assembly steps. | MEGAHIT is memory-efficient; metaSPAdes requires significant RAM. |

Application Notes

Within a Snakemake workflow for targeted enzyme discovery, accurate gene prediction and ORF calling are critical first steps in converting assembled metagenomic or genomic sequences into actionable protein candidates. This step transforms contiguous DNA sequences (contigs) into a standardized protein catalog, which serves as the input for downstream homology searches, domain analysis, and functional screening.

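Prodigal is a fast prokaryotic gene-finding tool widely used for bacterial and archaeal genomes and metagenomes. It is favored for its speed and accuracy. It is unsupervised: it learns its gene model directly from the input genome (or, in `-p meta` mode, applies precomputed models), so it does not need a separately trained model.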

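MetaGeneMark uses hidden Markov models whose parameters are derived heuristically from the GC content of each input sequence, so it does not need a training set drawn from the sample itself. This makes it particularly robust for the diverse, fragmented, and unknown microbial communities often encountered in environmental samples.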

The choice between tools depends on the sample origin. For well-characterized, single-organism genomes, Prodigal is typically sufficient. For complex metagenomes, MetaGeneMark or a consensus approach may yield a more complete gene set. Integrating both tools and comparing results can increase confidence in predictions, especially for novel enzymes where gene boundaries are ambiguous.

Protocols

Protocol 1: ORF Prediction with Prodigal in a Snakemake Context

Objective: Predict protein-coding genes from assembled contigs.

Input: {sample}.contigs.fasta

Output: {sample}_prodigal.faa (protein sequences), {sample}_prodigal.gff (gene coordinates).

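Snakemake Rule Definition: the rule runs `prodigal -i {input} -a {output.faa} -o {output.gff} -f gff -p meta`. Here `output.faa` and `output.gff` are example names for the rule's named outputs, corresponding to the protein and coordinate files listed above.

Conda Environment (`envs/prodigal.yaml`): pins Prodigal from the Bioconda channel so that every run uses the same version.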
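The Prodigal invocation for Protocol 1 can be sketched as a small command builder. The extra `-d` output (nucleotide gene sequences) and the `genes/` directory are assumptions beyond the outputs listed above. `meta=False` switches to Prodigal's default single-genome mode for isolate assemblies.

```python
import shlex


def prodigal_cmd(sample, contigs, outdir="genes", meta=True):
    """Prodigal call producing the Protocol 1 outputs plus nucleotide gene sequences."""
    cmd = [
        "prodigal", "-i", contigs,
        "-a", f"{outdir}/{sample}_prodigal.faa",  # protein translations
        "-d", f"{outdir}/{sample}_prodigal.fna",  # nucleotide gene sequences
        "-o", f"{outdir}/{sample}_prodigal.gff",  # gene coordinates
        "-f", "gff",
        "-q",                                     # suppress progress messages on stderr
    ]
    if meta:
        cmd += ["-p", "meta"]  # precomputed models for metagenomic contigs
    return cmd


print(shlex.join(prodigal_cmd("soil01", "assembly/soil01.contigs.fasta")))
```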

Protocol 2: ORF Prediction with MetaGeneMark in a Snakemake Context

Objective: Predict genes using models optimized for metagenomes.

Input: {sample}.contigs.fasta

Output: {sample}_mgm.faa, {sample}_mgm.gff

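Download Model File: First, download the appropriate model file (`MetaGeneMark_v1.mod`) from http://topaz.gatech.edu/GeneMark/license_download.cgi (requires registration) and place it in a `resources/` directory. The GeneMark license key from the same download must also be installed as `~/.gm_key`.

Snakemake Rule Definition: the rule calls MetaGeneMark's `gmhmmp` binary with the model file (`-m resources/MetaGeneMark_v1.mod`), GFF output (`-f G`), and `{input}` as the contig file.

Conda Environment (`envs/mgm.yaml`): defines the rule's software environment. Because MetaGeneMark is licensed software, the `gmhmmp` binary and its key may need to be installed outside Conda.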
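As a hedged sketch, the MetaGeneMark step can be expressed as a command builder with a pre-flight check for the license key. The `-A`/`-D` flags for writing protein and nucleotide predictions to separate files are assumed from MetaGeneMark's usage text. Verify them against `gmhmmp` run without arguments for your release.

```python
import shlex
from pathlib import Path


def mgm_cmd(sample, contigs, model="resources/MetaGeneMark_v1.mod", outdir="genes"):
    """gmhmmp call for Protocol 2; output flags assumed from MetaGeneMark's usage text."""
    return [
        "gmhmmp", "-m", model,
        "-f", "G",                              # GFF output
        "-o", f"{outdir}/{sample}_mgm.gff",
        "-A", f"{outdir}/{sample}_mgm.faa",     # protein predictions (assumed flag)
        "-D", f"{outdir}/{sample}_mgm.fna",     # nucleotide predictions (assumed flag)
        contigs,
    ]


def license_key_present(home=None):
    """GeneMark tools look for their license key at ~/.gm_key."""
    return (Path(home) if home else Path.home()).joinpath(".gm_key").is_file()


print(shlex.join(mgm_cmd("soil01", "assembly/soil01.contigs.fasta")))
```

Calling `license_key_present()` at Snakefile parse time turns a missing key into an immediate, readable error rather than a failed job deep in the run.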

Protocol 3: Consensus Prediction Workflow

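Objective: Merge predictions from both tools to generate a high-confidence non-redundant protein set.

Strategy: Pool the proteins from both tools and cluster them with CD-HIT at 100% identity. CD-HIT processes sequences from longest to shortest, so the representative of each cluster is its longest member.

- Snakemake Rule for Clustering: the rule concatenates `{sample}_prodigal.faa` and `{sample}_mgm.faa` into one file and runs `cd-hit -i <combined.faa> -o <consensus.faa> -c 1.0 -n 5` (file names illustrative).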
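To make the longest-representative logic concrete, here is a naive pure-Python stand-in for CD-HIT at `-c 1.0`, suitable only for small illustrative sets. It pools both predictions, sorts them longest-first, and drops any protein that is identical to, or contained within, an already kept sequence. Tool prefixes (`prodigal|`, `mgm|`) are added to avoid ID collisions. Prodigal's trailing `*` stop symbol is stripped before comparison. All sequences are made up.

```python
def parse_fasta(text):
    """Minimal FASTA parser: {first word of header: sequence}."""
    records, name, chunks = {}, None, []
    for line in text.splitlines():
        if line.startswith(">"):
            if name is not None:
                records[name] = "".join(chunks)
            name, chunks = line[1:].split()[0], []
        elif line.strip():
            chunks.append(line.strip())
    if name is not None:
        records[name] = "".join(chunks)
    return records


def merge_predictions(prodigal, mgm):
    """Greedy longest-first merge: drop any protein identical to, or contained in, a kept one.

    A naive O(n^2) stand-in for CD-HIT at -c 1.0, for illustration on small sets only.
    """
    pool = [(f"prodigal|{k}", v.rstrip("*")) for k, v in prodigal.items()]
    pool += [(f"mgm|{k}", v.rstrip("*")) for k, v in mgm.items()]
    pool.sort(key=lambda rec: len(rec[1]), reverse=True)  # stable: ties keep input order
    kept = []
    for seq_id, seq in pool:
        if not any(seq in rep for _, rep in kept):
            kept.append((seq_id, seq))
    return kept


prodigal = parse_fasta(">c1_1 # 2 # 28 # 1\nMKTAYIAK*\n>c1_2\nMSSHH*\n")
mgm = parse_fasta(">gene_1\nMKTAYI\nAKQR\n>gene_2\nMSSHH\n")
print([seq_id for seq_id, _ in merge_predictions(prodigal, mgm)])
```

Here MetaGeneMark's longer `gene_1` absorbs Prodigal's `c1_1`, and the identical `MSSHH` calls collapse to a single entry. For real catalogs, use CD-HIT itself, which scales far better than this quadratic loop.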

Table 1: Comparison of Gene Prediction Tools for Enzyme Discovery

| Feature | Prodigal | MetaGeneMark |
|---|---|---|
| Primary Use Case | Isolated prokaryotic genomes | Metagenomic/metatranscriptomic assemblies |
| Underlying Model | Dynamic programming (Markov models) | Hidden Markov Model (HMM) |
| Speed | Very Fast | Moderate |
| Metagenome Mode | Yes (-p meta) | Native |
| Typical Outputs | FAA (proteins), GFF (coordinates), NT (nucleotide genes) | FAA, GFF |
| Key Advantage | Speed, low false positive rate | Accuracy on short, novel fragments |
| Recommendation in Workflow | Default for isolate data | Use for complex environmental samples |

Visualizations

Title: Consensus Gene Prediction & ORF Calling Workflow

Title: Snakemake Rule Integration for Gene Prediction

The Scientist's Toolkit

Table 2: Key Research Reagent Solutions for Gene Prediction & ORF Calling

| Item | Function in Experiment | Example/Notes |
|---|---|---|
| High-Quality Assembled Contigs | The substrate for gene prediction. Accuracy depends heavily on assembly quality. | Output from SPAdes, MEGAHIT, Flye. |
| Prodigal Software (v2.6.3+) | Performs fast, ab initio prokaryotic gene prediction. | Run with -p meta for metagenomes. |
| MetaGeneMark Software (v3.38+) | Gene prediction using HMMs trained on metagenomic sequences. | Requires license file for model download. |
| CD-HIT Suite (v4.8.1+) | Clusters redundant protein sequences to create a non-redundant catalog. | Essential for consensus workflows. |
| Conda/Bioconda | Reproducible environment management for installing bioinformatics tools. | environments.yaml files define tool versions. |
| Snakemake (v7.0+) | Workflow management system to orchestrate, parallelize, and track gene prediction steps. | Ensures reproducibility and scalability. |
| High-Performance Computing (HPC) Cluster or Cloud Instance | Provides computational resources for processing large metagenomic datasets. | Required for large-scale enzyme discovery projects. |

Application Notes

Within the thesis framework "A Scalable Snakemake Workflow for Targeted Enzyme Discovery in Metagenomic Datasets," Rule 4 represents the critical functional annotation layer. It transitions from gene calls (Rule 3) to biologically meaningful predictions. This rule executes two complementary homology search strategies to infer protein function, each with distinct strengths in sensitivity and specificity for enzyme discovery.

DIAMOND (BLAST-like Search): Used for high-speed, sensitive pairwise alignment against large, comprehensive reference databases (e.g., UniRef90, NCBI-nr). Its hits supply broad functional labels inherited from the best-matching reference proteins. These are mainly protein names, which can be mapped to EC numbers or Pfam domains. DIAMOND reliably detects close to moderately divergent homologs through pairwise sequence similarity. It is the first pass for general annotation.

HMMER (Profile HMM Search): Used for high-specificity searches against custom-built Hidden Markov Model (HMM) profiles. These profiles are constructed from multiple sequence alignments of known enzyme families of interest (e.g., polyketide synthases, nitrilases). This step is crucial for targeted discovery, as it can detect remote homologs that share a common structural fold but may have low pairwise sequence identity, which DIAMOND might miss.

Integrating both methods in a single Snakemake rule ensures a balanced annotation approach: broad sensitivity (DIAMOND) coupled with targeted, family-specific precision (HMMER). The output is a consolidated annotation table, essential for downstream rules that perform filtering, prioritization, and phylogenetic analysis of candidate enzymes.

Table 1: Comparative Analysis of Homology Search Tools in Rule 4

| Feature | DIAMOND (blastp/blastx) | HMMER (hmmscan) | Purpose in Workflow |
|---|---|---|---|
| Search Type | Pairwise sequence alignment | Profile Hidden Markov Model alignment | Broad vs. targeted homology |
| Primary Database | UniRef90, NCBI-nr (large, flat) | Custom HMM profiles (focused, curated) | General annotation vs. family-specific discovery |
| Speed | Very fast (reported up to ~20,000× faster than BLAST) | Moderate to slow | Enables high-throughput screening |
| Sensitivity | High for close to moderately divergent homologs | Very high for remote homologs | Catches divergent enzyme variants |
| Key Output | Best-hit taxonomic and functional ID | Bit scores, E-values, domain architecture | Candidate scoring and ranking |

Experimental Protocols

Protocol 1: DIAMOND-based Functional Annotation

Objective: To rapidly annotate predicted protein sequences with putative functions from a comprehensive reference database.

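- Input: FASTA file of predicted amino acid sequences (`proteins.faa`) from Rule 3 (Gene Prediction).

- Database Preparation:

  - Download the latest UniRef90 database: `wget ftp://ftp.uniprot.org/pub/databases/uniprot/uniref/uniref90/uniref90.fasta.gz`

  - Decompress: `gunzip uniref90.fasta.gz`

  - Format for DIAMOND: `diamond makedb --in uniref90.fasta -d uniref90`

- Execution: Run DIAMOND in `blastp` mode for protein input, or in `blastx` mode if starting from nucleotide contigs. For example: `diamond blastp -q proteins.faa -d uniref90 -o diamond_results.tsv --evalue 1e-5 --max-target-seqs 5 --outfmt 6 qseqid sseqid pident length evalue bitscore stitle`. Parameters:

  - `--evalue 1e-5` sets the significance threshold.

  - `--max-target-seqs 5` reports up to five hits per query.

  - `stitle` appends the full subject title to each hit.

- Output Parsing: The tab-separated results (`diamond_results.tsv`) are parsed within the Snakemake rule to extract best-hit annotations from the `stitle` field. UniRef90 titles carry the cluster's protein name and taxonomy but not EC numbers. EC numbers must be obtained separately, for example by mapping the representative UniProt accession through UniProt.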
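A minimal parsing sketch follows. It assumes the seven `--outfmt 6` fields shown in the Execution step, in that order. For each query it keeps the hit with the highest bitscore and pulls the protein name out of the UniRef title, which typically reads `UniRef90_<acc> <name> n=<k> Tax=<taxon> TaxID=<id> RepID=<id>`. The example rows are made up.

```python
import io
import re

FIELDS = ["qseqid", "sseqid", "pident", "length", "evalue", "bitscore", "stitle"]
# UniRef titles look like "UniRef90_<acc> <protein name> n=<k> Tax=<taxon> TaxID=<id> RepID=<id>".
UNIREF_NAME = re.compile(r"^\S+\s+(.*?)\s+n=\d+\s+Tax=")


def best_hits(handle):
    """Keep the highest-bitscore hit per query from DIAMOND --outfmt 6 output with FIELDS."""
    best = {}
    for line in handle:
        if not line.strip():
            continue
        hit = dict(zip(FIELDS, line.rstrip("\n").split("\t")))
        hit["bitscore"] = float(hit["bitscore"])
        current = best.get(hit["qseqid"])
        if current is None or hit["bitscore"] > current["bitscore"]:
            match = UNIREF_NAME.match(hit["stitle"])
            hit["protein_name"] = match.group(1) if match else hit["stitle"]
            best[hit["qseqid"]] = hit
    return best


example = io.StringIO(
    "q1\tUniRef90_EX1\t45.2\t300\t1e-50\t210.5\tUniRef90_EX1 Carboxylesterase n=3 Tax=Bacillus TaxID=0 RepID=EX1\n"
    "q1\tUniRef90_EX2\t40.0\t290\t1e-40\t180.0\tUniRef90_EX2 Lipase n=1 Tax=Pseudomonas TaxID=0 RepID=EX2\n"
    "q2\tUniRef90_EX3\t61.0\t410\t1e-90\t330.0\tUniRef90_EX3 Alpha/beta hydrolase n=12 Tax=Thermobifida TaxID=0 RepID=EX3\n"
)
annotations = best_hits(example)
print({query: hit["protein_name"] for query, hit in annotations.items()})
```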

Protocol 2: Custom HMM Profile Construction & Search

Objective: To identify sequences belonging to a specific enzyme family of interest using sensitive profile HMMs.

- Input: Seed multiple sequence alignment (MSA) of a known enzyme family (e.g., from Pfam or manually curated literature data).

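- HMM Profile Building:

  - Align seed sequences using MUSCLE or MAFFT: `mafft --auto seed_sequences.fa > family_alignment.afa`. MAFFT writes the alignment in aligned FASTA format.

  - Optionally convert to Stockholm format with HMMER's bundled Easel tool: `esl-reformat stockholm family_alignment.afa > family_alignment.sto`. `hmmbuild` also reads aligned FASTA directly.

  - Build the HMM profile: `hmmbuild family_profile.hmm family_alignment.sto`. In HMMER3, `hmmbuild` calibrates the E-value statistics itself, so no separate calibration step is needed.

  - Index the profile for `hmmscan`: `hmmpress family_profile.hmm`.

- HMMER Search: Execute `hmmscan` against the predicted proteome, e.g. `hmmscan --domtblout hmmer_results.domtblout -E 1e-5 family_profile.hmm proteins.faa`.

  - A profile built with `hmmbuild` carries no curated gathering (GA) thresholds unless the input Stockholm alignment annotates them, so `--cut_ga` cannot be used with a custom MAFFT-derived profile. That option applies to models that define GA cutoffs, such as Pfam HMMs. For a custom profile, set explicit E-value thresholds as shown.

  - When only a few profiles are searched against many proteins, `hmmsearch` reports equivalent hits and is usually faster.

- Output Interpretation: The domain table output (`hmmer_results.domtblout`) lists sequences that significantly match the profile. Sequences with an independent E-value < 0.01 are considered strong candidates for the target enzyme family.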
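The E-value filter in the Output Interpretation step can be applied with a short parser. This sketch relies on the whitespace-delimited `--domtblout` layout: 22 fixed fields followed by a free-text description. In that layout the independent E-value is the 13th field and the envelope coordinates are the 20th and 21st. For `hmmscan` the profile is the target (field 1) and the protein is the query (field 4); `hmmsearch` swaps these roles. The example rows are made up.

```python
def parse_domtblout(lines, max_i_evalue=0.01):
    """Return hmmscan domain hits whose independent E-value is below max_i_evalue."""
    hits = []
    for line in lines:
        if line.startswith("#") or not line.strip():
            continue
        cols = line.split(maxsplit=22)  # the 23rd field (description) may contain spaces
        i_evalue = float(cols[12])
        if i_evalue < max_i_evalue:
            hits.append({
                "profile": cols[0],       # target name in hmmscan output
                "sequence": cols[3],      # query name in hmmscan output
                "i_evalue": i_evalue,
                "domain_score": float(cols[13]),
                "env_from": int(cols[19]),
                "env_to": int(cols[20]),
            })
    return hits


example = [
    "# target name  accession  tlen  query name ...",
    "family_profile - 420 contig1_5 - 512 3.2e-80 270.1 0.5 1 1 1.1e-82 4.0e-80 265.3 0.4 2 418 10 430 8 432 0.97 -",
    "family_profile - 420 contig7_2 - 233 0.3 12.0 0.1 1 1 0.2 0.5 10.2 0.1 30 90 40 101 35 110 0.80 -",
]
print(parse_domtblout(example))
```

The envelope coordinates (`env_from`, `env_to`) are useful downstream for excising the matched domain before modeling or phylogenetic analysis.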

Visualizations

Diagram 1: Rule 4 Workflow in Snakemake Pipeline

Diagram 2: Homology Search Logic & Integration

The Scientist's Toolkit

Table 2: Key Research Reagent Solutions for Functional Annotation

| Item | Function in Protocol | Example/Supplier |
|---|---|---|
| UniRef90 Database | Comprehensive, clustered non-redundant protein sequence database used as the reference for DIAMOND searches. Provides standardized functional annotations. | UniProt Consortium |
| Custom HMM Profile | A statistical model representing the consensus and variation of a specific enzyme family, enabling sensitive detection of remote homologs. | Built via hmmbuild from curated alignments. |
| Pfam Database | Repository of protein family HMMs. Source of seed alignments for building or validating custom profiles for common enzyme families. | EMBL-EBI |
| MUSCLE/MAFFT Software | Tools for generating the multiple sequence alignments (MSAs) required as input for building robust and accurate HMM profiles. | EMBL-EBI, multiple sources |
| Snakemake Workflow Manager | Orchestrates the execution of DIAMOND and HMMER rules, manages dependencies, and ensures reproducible annotation across compute environments. | Open Source |
| High-Performance Compute (HPC) Cluster | Essential for parallel execution of computationally intensive homology searches against large metagenomic datasets in a feasible time. | Institutional or cloud-based (AWS, GCP). |

Within the context of a targeted enzyme discovery Snakemake workflow, Rule 5 represents the critical bioinformatic and analytical juncture where putative enzyme candidates are refined from a broad list into a prioritized subset for experimental validation. This rule integrates multi-parametric data—including sequence homology, predicted physicochemical properties, structural models, and docking scores—to filter out poor candidates, rank the remainder based on customizable criteria, and generate comprehensive, reproducible reports for research teams.

Core Components and Quantitative Metrics

The filtering and ranking process relies on defined thresholds across several computational domains. The following table summarizes typical quantitative metrics and their associated thresholds used in contemporary enzyme discovery pipelines.

Table 1: Standard Filtering Metrics and Thresholds for Enzyme Candidates

| Metric Category | Specific Metric | Typical Threshold | Rationale |
|---|---|---|---|
| Sequence & Homology | Percentage Identity to Known Template | 20% - 95% (context-dependent) | Balances novelty with modeling reliability. |
| | E-value (BLAST/HMMER) | < 1e-5 | Ensures significant homology. |
| | Query Coverage | > 70% | Ensures the alignment spans the protein of interest. |
| Physicochemical | Predicted Solubility (e.g., SOLpro probability) | ≥ 0.5 (higher is better) | Favors candidates likely to express solubly. |
| | Instability Index | < 40 (stable) | Prioritizes proteins predicted to be stable in vitro. |
| | pI (Isoelectric Point) | Project-specific (e.g., 5.0-9.0) | Matches downstream purification (e.g., IMAC). |
| Structural | Model Confidence (pLDDT from AlphaFold2) | > 70 (per-residue in active site) | Ensures reliable active site geometry. |
| | Ramachandran Outliers (%) | < 2% | Validates backbone torsion plausibility. |
| | Clash Score | < 10 | Indicates minimal steric conflicts. |
| Functional | Docking Score (Binding Affinity) | Project-specific (more negative ΔG is better) | Ranks predicted substrate binding strength. |
| | Active Site Residue Conservation | > 80% in catalytic residues | Maintains catalytic integrity. |
| Operational | Sequence Length | Within 2 SD of family mean | Filters fragments or abnormal fusions. |
| | Presence of Signal Peptide | Yes/No (as required) | Filters for secretion if needed. |
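The thresholds above translate naturally into a filter-then-rank step. The minimal sketch below applies a subset of Table 1 (pI, clash score, conservation, length and signal-peptide checks are omitted) and then orders survivors by docking score. Solubility is read as the SOLpro probability of Protocol 3.1, where candidates below 0.5 fail. Candidate names and values are hypothetical. A weighted multi-parametric score could replace the single docking-score sort.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    name: str
    evalue: float
    query_coverage: float         # percent
    template_identity: float      # percent identity to the closest known template
    solubility: float             # SOLpro probability of solubility (0-1)
    instability_index: float      # ProtParam
    active_site_plddt: float      # lowest pLDDT among annotated active-site residues
    ramachandran_outliers: float  # percent
    docking_score: float          # kcal/mol; more negative = stronger predicted binding


def passes(c, identity_range=(20.0, 95.0)):
    """Apply a subset of the Table 1 thresholds."""
    low, high = identity_range
    return (
        c.evalue < 1e-5
        and c.query_coverage > 70
        and low <= c.template_identity <= high
        and c.solubility >= 0.5
        and c.instability_index < 40
        and c.active_site_plddt > 70
        and c.ramachandran_outliers < 2
    )


def filter_and_rank(candidates):
    """Drop failing candidates, then sort survivors by docking score (best first)."""
    return sorted((c for c in candidates if passes(c)), key=lambda c: c.docking_score)


pool = [
    Candidate("enz_A", 1e-40, 92, 41, 0.71, 31.2, 88, 0.8, -7.9),
    Candidate("enz_B", 1e-60, 95, 55, 0.64, 45.0, 91, 0.5, -9.4),  # unstable: index >= 40
    Candidate("enz_C", 1e-25, 81, 33, 0.58, 28.7, 76, 1.2, -8.6),
]
print([c.name for c in filter_and_rank(pool)])
```

Filtering before ranking keeps a strongly docking but unstable candidate (here `enz_B`) from reaching the expression queue.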

Experimental Protocols for Cited Validation Steps

Protocol 3.1: In Silico Solubility and Stability Prediction

- Objective: To computationally filter candidates prone to insolubility or instability.

- Materials: Protein sequence in FASTA format, computational tools (e.g., SOLpro, Aggrescan, ProtParam).

- Method:

- Submit candidate sequence to SOLpro (http://scratch.proteomics.ics.uci.edu/) for solubility prediction. Record the probability of solubility (0-1).

- Submit the same sequence to the ProtParam tool on the ExPASy server. Calculate the instability index; proteins with an index below 40 are considered stable.

- If a structural model exists, submit it to Aggrescan3D, which operates on 3D structures, to identify aggregation-prone surface patches.

- Analysis: Apply thresholds from Table 1. Candidates with a SOLpro solubility probability below 0.5 or an instability index of 40 or higher are filtered out.

Protocol 3.2: Protein Structure Modeling and Quality Assessment using ColabFold

- Objective: To generate a reliable 3D model for active site analysis and docking.

- Materials: Candidate protein sequence(s) in FASTA format and the ColabFold (AlphaFold2 implementation) notebook. ColabFold builds the multiple sequence alignment (MSA) itself via its MMseqs2 server.

- Method:

- Access the ColabFold notebook via GitHub.

- Input the candidate protein sequence(s). Use default settings for MSA generation (MMseqs2) and structure modeling.

- Execute the notebook. The output includes a predicted model file (.pdb) and a per-residue confidence metric (pLDDT).

- Validate the model using SAVES v6.0 (https://saves.mbi.ucla.edu/). Upload the .pdb file and run PROCHECK (Ramachandran plot) and ERRAT.

- Analysis: Retain models where the pLDDT score for the annotated active site residues is >70 and Ramachandran outliers are <2%. Models with poor quality scores are rejected.
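AlphaFold2 and ColabFold write per-residue pLDDT into the B-factor column of their PDB output, so the active-site check can be run locally. The sketch below reads fixed-width PDB columns for CA atoms only and returns both the mean and the minimum pLDDT over the chosen residues. Chain `A` and the residue numbers in the example are assumptions.

```python
def active_site_plddt(pdb_text, residues, chain="A"):
    """Return (mean, minimum) pLDDT over the CA atoms of the given residue numbers."""
    values = {}
    for line in pdb_text.splitlines():
        # Fixed PDB columns: atom name 13-16, chain 22, residue number 23-26, B-factor 61-66.
        if line.startswith("ATOM") and line[12:16].strip() == "CA" and line[21] == chain:
            resi = int(line[22:26])
            if resi in residues:
                values[resi] = float(line[60:66])
    missing = set(residues) - set(values)
    if missing:
        raise ValueError(f"residues not found in chain {chain}: {sorted(missing)}")
    return sum(values.values()) / len(values), min(values.values())


def ca_line(serial, resn, resi, plddt, chain="A"):
    """Format a minimal CA ATOM record (zero coordinates) for the example below."""
    return (f"ATOM  {serial:5d}  CA  {resn:3} {chain}{resi:4d}    "
            f"{0.0:8.3f}{0.0:8.3f}{0.0:8.3f}{1.0:6.2f}{plddt:6.2f}")


model = "\n".join([
    ca_line(1, "SER", 105, 91.5),
    ca_line(2, "HIS", 143, 88.0),
    ca_line(3, "ASP", 197, 62.5),
    ca_line(4, "GLY", 198, 40.0),
])
mean_plddt, min_plddt = active_site_plddt(model, {105, 143, 197})
print(round(mean_plddt, 2), min_plddt)
```

In this example the mean exceeds 70, but one catalytic residue sits at 62.5. The strict per-residue reading of Table 1 would therefore reject the model, so record which criterion the workflow applies.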

Protocol 3.3: Molecular Docking for Substrate Affinity Ranking

- Objective: To rank filtered candidates based on predicted binding affinity to the target substrate.

- Materials: Protein model (.pdb), substrate molecular file (.mol2 or .sdf), docking software (e.g., AutoDock Vina, GNINA).

- Method:

- Prepare the protein: remove water, add polar hydrogens, assign Kollman charges (using tools like MGLTools for AutoDock).

- Prepare the ligand: define root and torsion trees, optimize 3D geometry.

- Define the docking search space (grid box) centered on the predicted active site.

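- Execute docking with AutoDock Vina, e.g. `vina --receptor protein.pdbqt --ligand ligand.pdbqt --center_x X --center_y Y --center_z Z --size_x SX --size_y SY --size_z SZ --out results.pdbqt`. Here X, Y and Z are the grid-box center coordinates and SX, SY and SZ are the box dimensions in Å.

- Extract the binding affinity (ΔG in kcal/mol) for the top pose. Vina records it on the `REMARK VINA RESULT` line of each model in the output file, with the best pose listed first.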

- Analysis: Sort candidates by docking score (more negative ΔG indicates stronger binding). This score is a primary metric for final ranking.
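The extraction and sorting steps can be combined into a short script. It reads the first `REMARK VINA RESULT` line of each output PDBQT (the best pose) and ranks candidates by that affinity, most negative first. The candidate names and PDBQT snippets are made up and abbreviated to the relevant lines.

```python
def top_vina_affinity(pdbqt_text):
    """Affinity (kcal/mol) of the first, best-scoring pose in a Vina output PDBQT."""
    for line in pdbqt_text.splitlines():
        if line.startswith("REMARK VINA RESULT:"):
            # Fields: REMARK VINA RESULT: <affinity> <rmsd l.b.> <rmsd u.b.>
            return float(line.split()[3])
    raise ValueError("no 'REMARK VINA RESULT' line found")


def rank_by_affinity(results):
    """Sort {candidate: pdbqt_text} by top-pose affinity, most negative first."""
    scores = {name: top_vina_affinity(text) for name, text in results.items()}
    return sorted(scores.items(), key=lambda item: item[1])


outputs = {
    "enz_A": "MODEL 1\nREMARK VINA RESULT:      -7.9      0.000      0.000\nENDMDL\n",
    "enz_C": ("MODEL 1\nREMARK VINA RESULT:      -8.6      0.000      0.000\nENDMDL\n"
              "MODEL 2\nREMARK VINA RESULT:      -8.1      1.523      2.874\nENDMDL\n"),
}
print(rank_by_affinity(outputs))
```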

Visualizations

Diagram 1: Candidate Filtering & Ranking Workflow

Diagram 2: Multi-Parametric Scoring System for Ranking

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Reagents & Tools for Experimental Validation of Ranked Candidates

| Item | Supplier Examples | Function in Validation Pipeline |
|---|---|---|
| pET Expression Vectors | Novagen (Merck), Addgene | T7 promoter-based plasmids for high-level recombinant protein expression in E. coli. |
| E. coli Expression Strains (BL21(DE3)) | New England Biolabs, Invitrogen | Chemically competent cells with T7 RNA polymerase gene for induced expression of pET constructs. |
| Ni-NTA Agarose Resin | Qiagen, Cytiva | Immobilized metal-affinity chromatography (IMAC) resin for purifying His-tagged recombinant enzymes. |
| Protease Inhibitor Cocktail (EDTA-free) | Roche, Thermo Scientific | Prevents proteolytic degradation of target enzymes during cell lysis and purification. |
| Size-Exclusion Chromatography (SEC) Column (HiLoad 16/600 Superdex 200 pg) | Cytiva | For final polishing step to obtain monodisperse, pure enzyme sample for kinetic assays. |
| Spectrophotometric Enzyme Assay Kit (e.g., NAD(P)H-coupled) | Sigma-Aldrich, Cayman Chemical | Enables quantitative measurement of enzyme activity and initial kinetic parameters (Km, Vmax). |
| Thermal Shift Dye (e.g., SYPRO Orange) | Invitrogen | Used in Thermofluor assays to measure protein thermal stability (Tm) under different conditions. |
| Crystallization Screening Kits (JCSG+, MORPHEUS) | Molecular Dimensions | Sparse matrix screens for identifying initial conditions for protein crystallization. |

Within the thesis framework "A Scalable Snakemake Workflow for High-Throughput Targeted Enzyme Discovery," the management of computational parameters is critical for reproducibility and adaptability. This Application Note details the implementation of centralized configuration files (YAML/JSON) to decouple experimental parameters from workflow logic, enabling rapid retargeting of the discovery pipeline to new enzyme families or organisms without code modification.

Core Configuration Architecture

The Snakemake workflow uses a hierarchical configuration system. The central config.yaml file defines all adjustable parameters, which are ingested into the Snakefile via the configfile: directive.

Table 1: Primary Configuration File Sections and Parameters

| Section | Key Parameter | Data Type | Default Value | Function in Targeted Discovery |
|---|---|---|---|---|
| input | reference_proteome | string (file path) | null | FASTA file of the target organism's proteome. |
| | known_enzyme_seeds | list (file paths) | [] | Curated seed sequences for the enzyme family of interest. |
| search | hmmer_evalue | float | 1e-5 | E-value cutoff for HMMER homology searches. |
| | blastp_identity | integer | 30 | Minimum percent identity for BLASTp validation. |
| clustering | cdhit_identity | float | 0.9 | Sequence identity threshold for CD-HIT redundancy reduction. |
| output | results_dir | string | "results/" | Central directory for all output files. |
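Because every rule reads its parameters from the loaded `config` dictionary, a quick check at the top of the Snakefile catches bad values before any job starts. The sketch below mirrors the Table 1 defaults as a Python dict and reports problems rather than failing on the first one. The 0.4-1.0 range reflects CD-HIT's accepted identity range for proteins. Snakemake also offers `snakemake.utils.validate(config, schema)` for JSON Schema-based checks.

```python
DEFAULTS = {
    "input": {"reference_proteome": None, "known_enzyme_seeds": []},
    "search": {"hmmer_evalue": 1e-5, "blastp_identity": 30},
    "clustering": {"cdhit_identity": 0.9},
    "output": {"results_dir": "results/"},
}


def validate_config(config):
    """Return a list of problems found in a config dict shaped like Table 1 (empty if valid)."""
    problems = []
    if not config["input"]["reference_proteome"]:
        problems.append("input.reference_proteome must point to a FASTA file")
    if not isinstance(config["input"]["known_enzyme_seeds"], list):
        problems.append("input.known_enzyme_seeds must be a list of file paths")
    if not config["search"]["hmmer_evalue"] > 0:
        problems.append("search.hmmer_evalue must be positive")
    if not 0 <= config["search"]["blastp_identity"] <= 100:
        problems.append("search.blastp_identity must be a percentage (0-100)")
    if not 0.4 <= config["clustering"]["cdhit_identity"] <= 1.0:
        problems.append("clustering.cdhit_identity must lie in CD-HIT's protein range 0.4-1.0")
    return problems


print(validate_config(DEFAULTS))
```

Running this on the untouched defaults reports only the unset proteome path. This is the intended behavior: the defaults are a template, not a runnable experiment.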

Protocol: Adapting the Workflow to a New Enzyme Target

Objective: Reconfigure the existing enzyme discovery pipeline to identify novel Polyketide Synthase (PKS) domains in a newly sequenced Streptomyces genome.

Materials & Software:

- Snakemake workflow (v7.0+)

- Central

config.yamlfile - Target genome proteome (FASTA)

- Seed sequences for PKS Ketosynthase (KS) domain (PF00109)

Procedure:

- Preparation of Input Files:

a. Download the proteome of the Streptomyces sp. strain (e.g., from NCBI RefSeq) and save it as data/streptomyces_proteome.faa.

b. Obtain seed sequences: either curate a multiple sequence alignment of known PKS KS domains and build a profile HMM from it, or download the Pfam HMM (PF00109). Save the profile as resources/pks_ks.hmm.

- Configuration File Update:

a. Open the central config.yaml file in a text editor.

b. Modify the input section so that reference_proteome points to data/streptomyces_proteome.faa and known_enzyme_seeds lists resources/pks_ks.hmm.

c. Adjust the search parameters for the target family (PKS KS domains are well conserved).

d. Update the output directory (results_dir) to reflect the experiment, e.g., results/streptomyces_pks_ks/.

- Workflow Execution:

a. Execute the Snakemake workflow from the command line. The workflow automatically reads the updated configuration.

b. The pipeline runs the HMMER search with the new seeds against the new proteome using the specified parameters, followed by the downstream clustering and annotation steps defined in the unchanged Snakefile.

- Validation:

a. Manually inspect the top hits in the final results/streptomyces_pks_ks/candidate_hits.csv.

b. Run a conserved domain analysis (e.g., with NCBI CDD) on several candidates to confirm the presence of the PKS KS domain.
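The search and ranking stages of this protocol can be sketched in plain Python, assuming the HMMER step writes hmmsearch --tblout output. That format has one whitespace-delimited line per target, with the full-sequence E-value and bit score in columns 5 and 6. PKS_CONFIG reuses the paths named in the protocol with Table 1's default E-value. The candidate_hits.csv column layout, the helper names, and the two tblout lines are hypothetical; the tblout lines are synthetic, not real search output.

```python
"""Minimal sketch: rank hmmsearch --tblout hits for the PKS KS run and write a candidate table."""
import csv
import io

# Values for this run as described in the protocol; the E-value is simply Table 1's default.
PKS_CONFIG = {
    "input": {
        "reference_proteome": "data/streptomyces_proteome.faa",
        "known_enzyme_seeds": ["resources/pks_ks.hmm"],
    },
    "search": {"hmmer_evalue": 1e-5},
    "output": {"results_dir": "results/streptomyces_pks_ks/"},
}


def parse_tblout(lines, max_evalue):
    """Keep targets whose full-sequence E-value passes the cutoff, best bit score first."""
    hits = []
    for line in lines:
        if not line.strip() or line.startswith("#"):
            continue  # skip blank lines and the commented header/footer
        fields = line.split(None, 18)  # 18 fixed columns + free-text description
        evalue, score = float(fields[4]), float(fields[5])
        if evalue <= max_evalue:
            hits.append({"target": fields[0], "query": fields[2], "evalue": evalue, "bitscore": score})
    hits.sort(key=lambda hit: (-hit["bitscore"], hit["evalue"]))
    return hits


def write_candidates(hits, handle):
    """Write ranked hits as CSV (hypothetical column layout)."""
    writer = csv.DictWriter(handle, fieldnames=["rank", "target", "query", "evalue", "bitscore"])
    writer.writeheader()
    for rank, hit in enumerate(hits, start=1):
        writer.writerow({"rank": rank, **hit})


# Two synthetic tblout lines (invented, not real search output).
example_tblout = [
    "# target name  accession  query name  accession  E-value  score ...",
    "seq_A - ketoacyl-synt PF00109 2.1e-80 270.5 0.1 3e-80 270.0 0.1 1.0 1 0 0 1 1 1 1 hypothetical protein",
    "seq_B - ketoacyl-synt PF00109 4.0e-03 12.1 0.0 6e-03 11.5 0.0 1.3 1 0 0 1 1 1 0 -",
]
hits = parse_tblout(example_tblout, PKS_CONFIG["search"]["hmmer_evalue"])
buffer = io.StringIO()
write_candidates(hits, buffer)
print(buffer.getvalue())
```

Ranking by bit score rather than E-value keeps the order independent of the proteome size, because HMMER's E-values scale with the size of the searched database.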

Diagram: Configuration-Driven Workflow Logic (Snakemake Configuration Flow)

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Computational Reagents for Targeted Discovery

| Item | Function in Configuration-Driven Workflow | Example/Format |
|---|---|---|
| YAML Configuration File | Central repository for all modifiable parameters (paths, cutoffs, thresholds). Enables adaptation without altering core code. | config.yaml |
| Reference Proteome | The set of all protein sequences from the target organism. The primary search space for enzyme discovery. | FASTA file (.faa, .fasta) |
| Seed Sequence Profile HMM | A statistical model of the enzyme family of interest, built from a curated multiple sequence alignment. Used for sensitive homology search. | HMMER3 file (.hmm) |
| Conda Environment File | Defines the exact software and versions (HMMER, BLAST+, CD-HIT) to ensure computational reproducibility. | environment.yaml |
| Sample Sheet | A CSV file listing biological sample IDs and metadata. Can be referenced in the config file for batch processing. | CSV file |
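The Sample Sheet row describes batch processing driven by a CSV file. A minimal sketch of that pattern in plain Python follows: read the sheet, reject duplicate IDs, and expand a per-sample output pattern over every ID. This is the job Snakemake's expand() performs, and by default it takes the product of all wildcard values. The sample_id column name, the habitat column, and the results/{sample}/candidate_hits.csv pattern are hypothetical.

```python
"""Minimal sketch: sample sheet -> per-sample targets, mimicking the product behaviour of expand()."""
import csv
import io
from itertools import product


def read_sample_sheet(handle, id_column="sample_id"):
    """Return {sample_id: row}, refusing duplicate IDs that would collide in output paths."""
    rows = list(csv.DictReader(handle))
    ids = [row[id_column].strip() for row in rows]
    duplicates = sorted({sample for sample in ids if ids.count(sample) > 1})
    if duplicates:
        raise ValueError(f"duplicate sample IDs in sample sheet: {duplicates}")
    return dict(zip(ids, rows))


def expand_targets(pattern, **wildcards):
    """Fill `pattern` with every combination of the given wildcard values."""
    names = list(wildcards)
    return [pattern.format(**dict(zip(names, combo))) for combo in product(*wildcards.values())]


sheet = io.StringIO("sample_id,habitat\nS1,soil\nS2,compost\n")
samples = read_sample_sheet(sheet)
print(expand_targets("results/{sample}/candidate_hits.csv", sample=sorted(samples)))
# ['results/S1/candidate_hits.csv', 'results/S2/candidate_hits.csv']
```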

Application Notes

Effective deployment of the Snakemake workflow for targeted enzyme discovery is critical for scaling computational research from initial validation to high-throughput screening. The transition from local execution to cloud environments enables access to vast, elastic compute resources necessary for processing large genomic and metagenomic datasets.

Local Cluster Deployment: Utilizes on-premise High-Performance Computing (HPC) or institutional clusters (e.g., using SLURM, SGE). This is ideal for controlled, medium-scale analyses where data governance is strict. Performance is bounded by local infrastructure.

Cloud Deployment (AWS, GCP): Provides scalable, on-demand resources. AWS Batch and Google Cloud Life Sciences are native services for orchestrating workflow jobs (Google has since deprecated Cloud Life Sciences in favor of Google Cloud Batch, so confirm service availability before building on it). Costs vary with instance selection, storage, and data egress. Cloud deployment is well suited to reproducing large-scale analyses and to accessing specialized machine types (e.g., memory-optimized instances for assembly).

Quantitative Comparison of Deployment Environments:

| Feature / Metric | Local Laptop | Local HPC Cluster | AWS Cloud | Google Cloud Platform |
|---|---|---|---|---|
| Typical Setup Time | Minutes (local install) | 1-5 days (account/project) | 1-2 hours (config, IAM) | 1-2 hours (config, IAM) |
| Max Scalability Limit | 1 machine | 100s of nodes (fixed) | 1000s of nodes (elastic) | 1000s of nodes (elastic) |
| Cost Model | Fixed hardware cost | Institutional allocation | Pay-per-use ($/vCPU-hr) | Pay-per-use ($/vCPU-hr) |
| Approx. Cost per 1000 vCPU-hr | N/A | N/A (allocated) | ~$80 - $240 (varies by instance) | ~$70 - $220 (varies by instance) |
| Data Egress Cost | N/A | N/A | ~$0.09/GB | ~$0.12/GB |
| Optimal Use Case | Rule development, debugging | Routine scheduled analyses | Burst, large-scale, reproducible runs | Burst, large-scale, integrated services (BigQuery) |
| Snakemake Integration | --cores | --cluster (SLURM, etc.) | --kubernetes or AWS Batch plugin | --kubernetes or GCP Life Sciences plugin |
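The cost rows above can be turned into a quick budget range before committing to a cloud run. The sketch below multiplies vCPU-hours by the per-1000-vCPU-hour range and adds egress. The 5,000 vCPU-hour and 100 GB workload is hypothetical, the rates are only the approximate figures from the table, and storage, request, and managed-service fees are ignored.

```python
"""Back-of-envelope compute + egress cost, using per-1000-vCPU-hour rates like those in the table."""


def estimate_cost(vcpu_hours, usd_per_1000_vcpu_hr, egress_gb, usd_per_gb_egress):
    """Return estimated USD; ignores storage, request, and managed-service fees."""
    compute = vcpu_hours / 1000 * usd_per_1000_vcpu_hr
    egress = egress_gb * usd_per_gb_egress
    return compute + egress


# Hypothetical workload: 5,000 vCPU-hours of search/annotation jobs and 100 GB of results downloaded.
vcpu_hours, egress_gb = 5000, 100
aws_low = estimate_cost(vcpu_hours, 80, egress_gb, 0.09)
aws_high = estimate_cost(vcpu_hours, 240, egress_gb, 0.09)
gcp_low = estimate_cost(vcpu_hours, 70, egress_gb, 0.12)
gcp_high = estimate_cost(vcpu_hours, 220, egress_gb, 0.12)
print(f"AWS: ${aws_low:,.0f} to ${aws_high:,.0f}")  # AWS: $409 to $1,209
print(f"GCP: ${gcp_low:,.0f} to ${gcp_high:,.0f}")  # GCP: $362 to $1,112
```

At this scale compute dominates: the spread between instance types is far larger than the egress charge.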

Experimental Protocols

Protocol 1: Deploying Snakemake on a Local SLURM Cluster

Objective: Execute the enzyme discovery workflow on an institutional HPC cluster.

- Environment Setup: On the cluster login node, install Miniconda, then create a conda environment from the environment.yml file exported from the development setup.

- Profile Configuration: Create a Snakemake profile directory for SLURM and configure a config.yaml file within it.

- Data Staging: Transfer input genomic files to the cluster's shared filesystem (e.g., Lustre, NFS).

- Workflow Submission: Execute the workflow using the profile: snakemake --profile /path/to/slurm_profile --use-conda.

- Monitoring: Monitor job status via squeue and Snakemake's output. Final results will be in the designated output directory.
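A profile's config.yaml maps keys to Snakemake's long command-line options, so it can be generated or checked programmatically. The sketch below writes a minimal Snakemake 7-style SLURM profile and previews how the --cluster template is filled for one job. The sbatch flags, the jobs and latency-wait values, and the example job are hypothetical. The template assumes every rule declares resources.mem_mb. Snakemake 8 replaced --cluster with executor plugins such as snakemake-executor-plugin-slurm, so treat the key names as version-specific.

```python
"""Minimal sketch: write a Snakemake 7-style SLURM profile and preview its sbatch template."""
from pathlib import Path
from types import SimpleNamespace

# Hypothetical profile values. Keys are long-form Snakemake 7 options (--cluster, --jobs, ...).
PROFILE = {
    "cluster": "sbatch --cpus-per-task={threads} --mem={resources.mem_mb} --job-name=smk-{rule}",
    "jobs": 100,
    "latency-wait": 60,
    "use-conda": True,
}


def render_profile(settings):
    """Serialise flat key/value settings as simple YAML lines."""
    lines = []
    for key, value in settings.items():
        if isinstance(value, bool):
            value = "true" if value else "false"
        elif isinstance(value, str):
            escaped = value.replace("\\", "\\\\").replace('"', '\\"')
            value = f'"{escaped}"'
        lines.append(f"{key}: {value}")
    return "\n".join(lines) + "\n"


def write_profile(profile_dir, settings):
    """Create <profile_dir>/config.yaml, the file Snakemake reads for --profile <profile_dir>."""
    path = Path(profile_dir) / "config.yaml"
    path.parent.mkdir(parents=True, exist_ok=True)
    path.write_text(render_profile(settings))
    return path


# Snakemake fills the placeholders per job; str.format with attribute access mimics that
# for a hypothetical assembly job (rules must declare resources.mem_mb for this template).
job = {"threads": 16, "resources": SimpleNamespace(mem_mb=64000), "rule": "assemble"}
print(PROFILE["cluster"].format(**job))
# sbatch --cpus-per-task=16 --mem=64000 --job-name=smk-assemble
```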

Protocol 2: Deploying Snakemake on AWS using AWS Batch

Objective: Scale enzyme discovery workflow elastically using AWS.

- Prerequisites: AWS CLI configured and an S3 bucket for input/output data.

- Infrastructure Setup: Use the snakemake-executor-plugin-aws-batch Terraform template to create a VPC, an S3-accessible EFS filesystem, a Batch compute environment, a job queue, and a job definition.

- Data Transfer: Upload all input files (seqs/*.fastq, databases/*.hmm) from the local system to the S3 bucket, below the same prefix the workflow uses for remote storage: aws s3 sync input/ s3://<bucket-id>/snakemake/input/.

- Configuration: Create a Snakemake config file (config/config_aws.yaml).

- Execution: Run the workflow: snakemake --executor aws-batch --default-storage-provider s3 --default-storage-prefix s3://<bucket-id>/snakemake --use-conda -c 1. The -c 1 flag limits the local driver to one thread. These options require Snakemake 8 or later with the AWS Batch executor plugin and the S3 storage plugin installed (Snakemake 8 replaced the older --default-remote-provider and --default-remote-prefix options with storage plugins).

- Monitoring & Download: Monitor jobs in the AWS Batch console. Upon completion, download the results: aws s3 sync s3://<bucket-id>/snakemake/output/ ./results/.
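With a default storage prefix such as s3://<bucket-id>/snakemake, each workflow-relative path is resolved below that prefix. Inputs therefore have to be uploaded there, and outputs appear there, which is why the download step reads from .../snakemake/output/. The sketch below is a conceptual illustration of that joining rule, not Snakemake's storage-plugin code. The helper names and the file list are hypothetical.

```python
"""Conceptual sketch: how a default storage prefix turns workflow-relative paths into object URIs."""
from pathlib import PurePosixPath


def to_remote(local_path, prefix):
    """Join a workflow-relative path onto the storage prefix."""
    path = PurePosixPath(local_path)
    if path.is_absolute() or ".." in path.parts:
        raise ValueError(f"{local_path!r} must be relative and stay inside the workflow directory")
    return prefix.rstrip("/") + "/" + path.as_posix()


def upload_plan(local_files, prefix):
    """Map each local input to the URI the workflow will look for."""
    return {name: to_remote(name, prefix) for name in local_files}


prefix = "s3://<bucket-id>/snakemake"  # same prefix as in the Execution step
for local, remote in upload_plan(["input/seqs/S1.fastq", "input/databases/pks_ks.hmm"], prefix).items():
    print(f"{local} -> {remote}")
```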

Protocol 3: Deploying Snakemake on GCP using Google Cloud Life Sciences

Objective: Execute workflow on GCP, leveraging integration with other Google services.

- Prerequisites: gcloud CLI installed and initialized, and a Google Cloud Storage (GCS) bucket created.

- Service Enablement: Enable the Cloud Life Sciences, Compute Engine, and Cloud Storage APIs.

- Data Transfer: Upload input data to GCS below the workflow's remote prefix: gsutil -m cp -r input/*.fastq gs://<bucket-id>/snakemake/input/.

- Configuration: Create a Snakemake profile for GCP Life Sciences and record its key settings in the profile's config.yaml.

- Execution: Run from a local machine or Cloud Shell: snakemake --profile ./gcp_profile --default-remote-prefix <bucket-id>/snakemake. With Snakemake 7's Google Cloud Storage remote provider, the prefix is the bucket path without the gs:// scheme.

- Post-processing: Results are written to the GCS bucket. Use gsutil to download them, or load them into BigQuery for downstream analysis.

Visualization

Diagram 1: Snakemake Deployment Pathway for Enzyme Discovery

Diagram 2: AWS Batch Execution Architecture for Snakemake

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Deployment Context |
|---|---|
| Snakemake Workflow Management System | Core tool for defining, executing, and scaling the reproducible bioinformatics workflow for enzyme discovery. |
| Conda / Bioconda / Mamba | Provides isolated, reproducible software environments for each workflow rule, ensuring consistent tool versions across all deployments. |
| Docker / Singularity Containers | Containerization solutions to package entire analysis environments, guaranteeing portability between local, cluster, and cloud execution. |
| AWS CLI / gcloud CLI | Command-line tools for configuring, managing, and transferring data to and from AWS and GCP cloud resources, respectively. |
| Terraform / CloudFormation | Infrastructure-as-Code (IaC) tools to programmatically provision and manage the cloud infrastructure (VPC, compute, storage) needed for workflow execution. |
| S3 / Google Cloud Storage (GCS) | Scalable, durable object storage services used as the primary remote data layer for input, output, and intermediate files in cloud deployments. |
| SLURM / SGE Scheduler | Job scheduling and resource management software used to efficiently execute workflow jobs on local HPC clusters. |
| Git / GitHub / GitLab | Version control systems essential for tracking changes to the Snakefile, configuration profiles, and analysis scripts, enabling collaboration and reproducibility. |

Streamlining Your Search: Debugging, Scaling, and Cost-Effective Optimization

Common Snakemake Errors and Debugging Strategies in Metagenomic Contexts

This document serves as an application note within a broader thesis on developing a scalable Snakemake workflow for targeted enzyme discovery from complex metagenomic datasets. It outlines prevalent errors and systematic debugging approaches to ensure robust, reproducible bioinformatics analyses.

Common Snakemake Errors: Identification and Resolution

The table below summarizes frequent errors, their typical causes in metagenomic analyses, and immediate resolution strategies.

| Error Category | Specific Error Message / Symptom | Common Cause in Metagenomics | Immediate Debugging Action |
|---|---|---|---|
| Rule Dependency | MissingInputException | Incorrect wildcard pattern matching for highly variable sample names (e.g., sample_001_R1.fq vs. sample001_R1.fastq). | Run a dry-run (snakemake -n -p) to compare expected vs. actual filenames. Use wildcard_constraints in rules. |
| Cyclic Dependency | CyclicGraphException: Cyclic dependency | Circular logic in rule definitions, common when post-assembly analysis (e.g., binning) is incorrectly fed back into assembly. | Generate a rulegraph (snakemake --rulegraph). Inspect cycles and restructure the workflow so its dependencies form an acyclic graph. |
| Cluster/Cloud Execution | Job failed with exit code 1 outside of rule | Insufficient memory (mem) or runtime (time) defined for resource-intensive steps like metaSPAdes assembly or read mapping. | Check cluster logs. Implement resource scaling using profiles (e.g., --profile slurm) and define resources: in rules. |
| Configuration Errors | KeyError in config dict | Referencing an undefined sample key or path in the config.yaml file, especially with large sample batches. | Validate the config file with a YAML linter. Use config.get("key", default) for optional parameters in scripts. |
| Permissions & Paths | FileNotFoundError or Permission denied | Relative vs. absolute path issues when using a working directory (--directory) or containerized execution (--use-singularity). | Use absolute paths in config.yaml. Prepend workflow.basedir to relative paths in rules. Check file permissions. |
| Software Environment | ModuleNotFoundError in a Python script | Inconsistent Conda environments across rules or missing specific dependency versions for tools like DIAMOND or Prokka. | Use conda: directives per rule. Run snakemake --use-conda --conda-cleanup-pkgs. Isolate tool environments. |
| Ambiguous Rules | AmbiguousRuleException | Multiple rules can produce the same output file pattern (e.g., .txt), so Snakemake cannot decide which rule should create the requested target. | Make output filenames more specific (e.g., gene_count.txt vs. sample_stats.txt), or set rule priority with a ruleorder directive. |
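Snakemake matches requested files against output patterns by turning each {wildcard} into a regular expression. That expression is .+ by default, or the pattern given in wildcard_constraints. The sketch below approximates the idea with Python's re module to show why loosely named files either fail to match (the MissingInputException row) or match with the wrong split. pattern_to_regex and the constraint patterns are hypothetical. The function handles each wildcard name only once per pattern and is not Snakemake's own matcher.

```python
"""Minimal sketch: approximate how Snakemake turns {wildcards} into a regular expression."""
import re

WILDCARD = re.compile(r"\{(\w+)\}")


def pattern_to_regex(pattern, constraints=None):
    """Compile an output pattern; unconstrained wildcards default to the greedy regex '.+'."""
    constraints = constraints or {}
    regex, last = "", 0
    for match in WILDCARD.finditer(pattern):
        name = match.group(1)
        regex += re.escape(pattern[last:match.start()])  # literal text between wildcards
        regex += f"(?P<{name}>{constraints.get(name, '.+')})"
        last = match.end()
    regex += re.escape(pattern[last:])
    return re.compile(regex)


pattern = "reads/{sample}_{read}.fastq"
loose = pattern_to_regex(pattern)
strict = pattern_to_regex(pattern, {"sample": r"[A-Za-z0-9]+(?:_\d+)?", "read": r"R[12]"})

for name in ["reads/sample001_R1.fastq", "reads/sample_001_R1.fastq",
             "reads/sample_001_R1_001.fastq", "reads/sample_001_R1.fq"]:
    results = []
    for label, regex in (("loose", loose), ("strict", strict)):
        m = regex.fullmatch(name)
        results.append(f"{label}={m.groupdict() if m else 'no match'}")
    print(name, *results)
```

With the greedy default, sample_001_R1_001.fastq silently splits into sample="sample_001_R1" and read="001". The constrained version refuses the match instead, which surfaces the naming problem early.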

Debugging Protocol: A Stepwise Methodology

Protocol 1: Systematic Snakemake Workflow Debugging

Objective: To diagnose and resolve failures in a metagenomic Snakemake workflow, from quality control through to gene annotation and abundance profiling.

Materials:

- A functional Snakemake installation (v7.0+).

- Workflow Snakefile and configuration file.

- Sample metagenomic read files (e.g., FASTQ format).

- Access to a high-performance computing (HPC) cluster or local server with sufficient resources.

Procedure:

- Dry-run and DAG Generation:

  - Execute snakemake -n -p. This performs a dry-run (-n) while printing shell commands (-p), verifying the workflow logic without executing any jobs.

  - Generate a directed acyclic graph (DAG) visualization: snakemake --dag | dot -Tsvg > workflow_dag.svg. Visually inspect it for missing edges or unexpected nodes.

- Rulegraph Analysis for Structure:

  - Generate a rulegraph to understand high-level rule dependencies: snakemake --rulegraph | dot -Tsvg > workflow_rulegraph.svg. This abstract view helps identify cyclic dependencies.

- Targeted Execution with Debug Flags:

  - If a specific rule fails, dry-run Snakemake on that rule's output file and print why each job would run: snakemake <target_file> -n -r. The -r flag prints the reason for executing each job.

  - Use --debug-dag for detailed information on how dependencies are resolved.
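The rulegraph step looks for cycles visually. The same check can be scripted for a hand-written rule dependency map with Python's standard-library graphlib (Python 3.9+). TopologicalSorter yields an execution order for an acyclic graph; otherwise it raises CycleError, whose args[1] lists the nodes in the cycle. The rule names and dependency dictionaries below are hypothetical. Snakemake performs its own cycle detection when it builds the DAG, so this is only a way to reason about a proposed rule layout before writing it.

```python
"""Minimal sketch: detect a cyclic rule dependency with the standard-library graphlib module."""
from graphlib import CycleError, TopologicalSorter


def check_rule_graph(deps):
    """deps maps each rule to the rules whose outputs it consumes.

    Returns (execution_order, None) for an acyclic graph, or (None, cycle) otherwise.
    """
    try:
        return list(TopologicalSorter(deps).static_order()), None
    except CycleError as err:
        return None, err.args[1]  # list of rules forming the cycle; first == last


linear = {"qc": set(), "assembly": {"qc"}, "binning": {"assembly"}, "annotation": {"binning"}}
# Binning output fed back into assembly, as in the cyclic-dependency row of the error table.
cyclic = {"qc": set(), "assembly": {"qc", "binning"}, "binning": {"assembly"}}

print(check_rule_graph(linear))  # (['qc', 'assembly', 'binning', 'annotation'], None)
order, cycle = check_rule_graph(cyclic)
print("cycle:", " -> ".join(cycle))
```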