Beyond Additivity: How to Model Epistasis in Protein Sequence-Function Relationships for Better Drug Design


Abstract

This article provides a comprehensive guide for researchers and drug development professionals on addressing the critical challenge of epistasis—non-additive interactions between mutations—in protein sequence-function modeling. We explore the fundamental biological basis of epistasis and its impact on protein engineering. The guide details advanced methodological approaches, including machine learning and deep mutational scanning, for capturing these complex interactions. We address common troubleshooting issues and optimization strategies for model performance. Finally, we present frameworks for validating epistatic models and comparing their predictive power. The synthesis offers a roadmap for building more accurate models to accelerate therapeutic protein and drug design.

What is Epistasis? The Foundational Challenge in Protein Sequence Modeling

Troubleshooting Guide & FAQ for Epistasis Research

This technical support center provides solutions for common experimental challenges in epistasis research, framed within the broader thesis of improving protein sequence-function models. The goal is to enable accurate quantification and interpretation of non-additive genetic interactions in protein biochemistry.

FAQ: Conceptual & Computational Issues

Q1: In our deep mutational scanning (DMS) of a kinase, the measured fitness of a double mutant deviates significantly from the predicted sum of single mutant effects. How do we determine if this is true epistasis or an experimental artifact? A: First, verify the reproducibility of the single and double mutant measurements across biological replicates. High variance often indicates noise. Second, check the normalization of your selection assay read counts. Artifacts often arise from improper normalization of sequencing depth or PCR amplification bias. Use a stringent, multi-step normalization pipeline (e.g., median-of-ratios followed by local regression). True biological epistasis should be consistent across replicate experiments and show a pattern across related genotypes.

Q2: When constructing a protein sequence-function model, how should we handle non-additive terms to avoid overfitting? A: Implement regularized regression (e.g., LASSO or ridge regression) when including pairwise or higher-order interaction terms in your model. Start with an additive model (sum of single mutant effects), then iteratively add interaction terms for residue pairs with strong covariance in evolutionary data or spatial proximity in the protein structure. Use cross-validation on held-out mutant combinations to determine the optimal regularization penalty.

Q3: Our statistical model identifies many significant pairwise epistatic interactions. What are the first steps to biochemically validate a putative interaction? A: Prioritize interactions between residues that are physically close in the tertiary structure or part of a known functional motif. The primary validation step is to perform a targeted functional assay (e.g., enzyme kinetics, binding affinity via SPR/ITC) on the purified single and double mutant proteins. Confirm that the deviation from additivity observed in the high-throughput screen is recapitulated in the low-throughput, precise assay.

FAQ: Experimental & Technical Issues

Q4: During a DMS experiment using yeast surface display, we observe a severe dropout of specific single mutant variants in the library post-transformation, before selection. What could cause this? A: This is likely due to mutant-induced toxicity or folding defects that impair cellular growth or surface expression.

- Troubleshooting Steps:

- Check Library Diversity: Sequence the plasmid library before transformation to confirm the mutants are present.

- Reduce Expression: Use a weaker promoter or lower induction levels to mitigate toxicity from misfolded proteins.

- Use a Different Platform: Consider switching to a cell-free display system (e.g., ribosome display) or a more robust chaperone-rich expression host for problematic variants.

- Amplify Post-Selection: Only amplify the library for sequencing after the functional selection step to avoid skewing.

Q5: In our FRET-based assay to measure conformational changes, the signal-to-noise ratio is too low to reliably detect differences between epistatic mutants. How can we improve it? A:

- Optimize Dye Pair: Ensure you are using a FRET pair with high quantum yield and a Förster radius (R0) close to the expected inter-dye distance, where FRET efficiency is most sensitive to distance changes. Consider switching to newer, brighter dyes (e.g., Cy3B/Alexa Fluor 647).

- Purify Protein: Ensure protein labeling efficiency is >90% for both dyes. Use HPLC purification post-labeling to remove free dye.

- Control Environment: Perform assays with an oxygen-scavenging system (e.g., PCA/PCD) and a triplet-state quencher (e.g., Trolox) to reduce photobleaching and blinking.

Q6: We are using ITC to measure binding affinity (ΔΔG) for single and double mutants. The heats of binding are too small for accurate integration. What should we do? A: This indicates a weak binding event or insufficient protein concentration.

- Solutions:

- Increase the concentration of the protein in the cell, if solubility allows.

- Use longer spacing between injections so the signal returns to baseline before the next injection.

- Ensure degassing is thorough to eliminate baseline noise from bubble formation.

- As an alternative, consider using a more sensitive technique like Surface Plasmon Resonance (SPR) or Microscale Thermophoresis (MST) for low-affinity interactions.

Key Experimental Protocols

Protocol 1: Deep Mutational Scanning for Epistasis Mapping (Yeast Display)

- Objective: Quantify fitness effects of single and double mutants in a protein domain.

- Steps:

- Library Construction: Use NNK codon saturation mutagenesis at two target positions via overlap extension PCR. Clone into a yeast display vector (e.g., pYD1).

- Transformation: Electroporate the library into S. cerevisiae EBY100. Ensure library size is >100x theoretical diversity.

- Induction & Selection: Induce with galactose. Label cells with a fluorescently-labeled ligand. Use FACS to sort populations into 3-5 bins based on binding signal.

- Sequencing & Analysis: Isolate plasmid DNA from each bin and the pre-sort library. Perform NGS. Calculate enrichment ratios, log₂(f_post-sort / f_pre-sort), for each variant, where f is the variant's frequency in the corresponding population. Fitness is the weighted average of the variant's log ratios across bins. Epistasis (ε) is calculated as: ε = Fitness(AB) - (Fitness(A) + Fitness(B) - Fitness(WT)).
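The enrichment and epistasis arithmetic in the final step can be sketched as follows. This is a minimal illustration rather than the pipeline used in the protocol: the function names and the pseudocount are assumptions, and the example fitness values are the hypothetical Protein X values from Table 1.

```python
import math

def log2_enrichment(pre_count, post_count, pre_total, post_total, pseudocount=0.5):
    """log2 of (post-sort frequency / pre-sort frequency) for one variant.

    The pseudocount is an assumption of this sketch (not part of the protocol);
    it avoids log(0) for variants that drop out of one population.
    """
    f_pre = (pre_count + pseudocount) / pre_total
    f_post = (post_count + pseudocount) / post_total
    return math.log2(f_post / f_pre)

def additive_epistasis(fit_ab, fit_a, fit_b, fit_wt=0.0):
    """epsilon = Fitness(AB) - (Fitness(A) + Fitness(B) - Fitness(WT))."""
    return fit_ab - (fit_a + fit_b - fit_wt)

# A variant whose frequency doubles after sorting (pseudocount disabled for clarity).
doubling = log2_enrichment(100, 200, 10_000, 10_000, pseudocount=0.0)

# Hypothetical Protein X fitness values from Table 1.
eps = additive_epistasis(fit_ab=-1.50, fit_a=-0.85, fit_b=-0.92, fit_wt=0.0)
print(f"log2 enrichment = {doubling:.2f}, epsilon = {eps:+.2f}")
```

In a real analysis the per-bin log ratios would first be combined into a single fitness value per variant before ε is computed.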

Protocol 2: Isothermal Titration Calorimetry (ITC) for Energetic Epistasis Validation

- Objective: Measure the binding free energy (ΔG) of wild-type, single mutant (A, B), and double mutant (AB) proteins to a ligand.

- Steps:

- Sample Prep: Dialyze purified proteins and ligand into identical degassed buffer (e.g., 20 mM HEPES, 150 mM NaCl, pH 7.4).

- Experiment Setup: Load the ligand (at 10-20x the protein concentration) into the syringe. Load protein into the sample cell. Set temperature to 25°C.

- Titration: Perform 19 injections of 2 µL each with 150s spacing. Use a reference power of 5-10 µcal/s.

- Data Analysis: Integrate heat peaks. Fit data to a one-site binding model to obtain ΔH, ΔG, and Kd. Calculate ΔΔG for each mutant relative to WT. Energetic epistasis is present when ΔΔG_AB ≠ ΔΔG_A + ΔΔG_B by more than the measurement error.

Data Presentation

Table 1: Example DMS Fitness and Epistasis Calculations for Hypothetical Protein X

| Variant | Residue 1 | Residue 2 | Fitness (log₂ Enrichment) | Fitness vs. WT (ΔFitness) | Epistasis (ε) |

|---|---|---|---|---|---|

| WT | Leu | Asp | 0.00 | 0.00 | - |

| Mut A | Phe | Asp | -0.85 | -0.85 | - |

| Mut B | Leu | Ala | -0.92 | -0.92 | - |

| Mut AB | Phe | Ala | -1.50 | -1.50 | +0.27 |

Calculation: ε = -1.50 - (-0.85 + (-0.92) - 0.00) = +0.27

Table 2: ITC-Derived Energetics for Validated Epistatic Interaction

| Protein | Kd (nM) | ΔG (kcal/mol) | ΔΔG (kcal/mol) | ΔH (kcal/mol) | -TΔS (kcal/mol) |

|---|---|---|---|---|---|

| WT | 10.0 ± 1.5 | -10.22 | 0.00 | -12.5 | 2.28 |

| Mut A (Phe) | 45.0 ± 5.2 | -9.25 | +0.97 | -10.1 | 0.85 |

| Mut B (Ala) | 52.0 ± 6.1 | -9.16 | +1.06 | -14.2 | 5.04 |

| Mut AB (Phe/Ala) | 15.0 ± 2.0 | -10.02 | +0.20 | -11.8 | 1.78 |

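Conclusion: The additive expectation for the double mutant is ΔΔG_A + ΔΔG_B = +2.03 kcal/mol, but the measured ΔΔG_AB is only +0.20 kcal/mol, a coupling energy of -1.83 kcal/mol. The double mutant binds substantially more tightly than predicted from additive effects, indicating strong energetic epistasis in which each mutation partially compensates for the other.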

Mandatory Visualizations

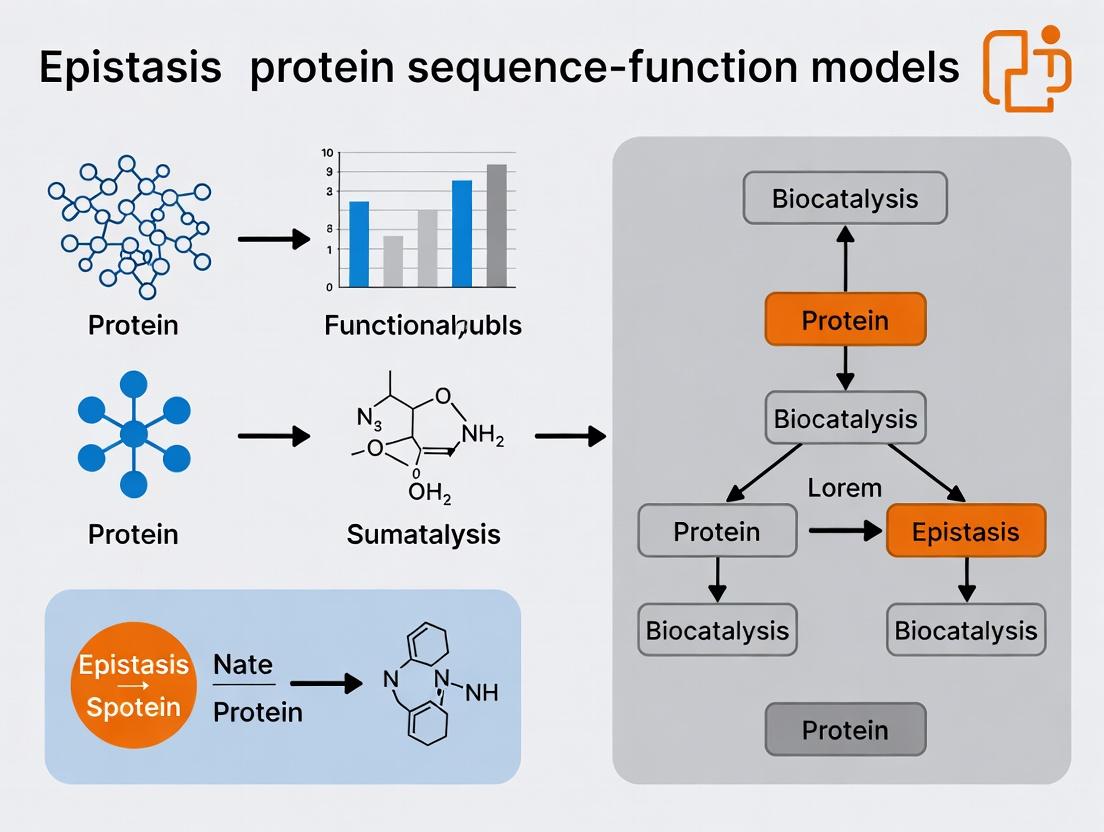

Title: High-Throughput Epistasis Mapping Workflow

Title: Classification of Epistatic Interactions in Proteins

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Epistasis Research |

|---|---|

| NNK Degenerate Oligonucleotides | For saturation mutagenesis library construction; NNK covers all 20 amino acids with only 32 codons. |

| Yeast Display Vector (e.g., pYD1) | Enables eukaryotic expression, folding, and surface display of protein libraries for FACS-based selection. |

| Anti-c-Myc FITC Antibody | Primary detection antibody for confirming surface expression of yeast-displayed proteins (C-terminal tag). |

| Streptavidin-PE / APC | Fluorescent conjugate for detecting biotinylated ligand binding during FACS selection. |

| Next-Generation Sequencing Kit (Illumina) | For high-throughput sequencing of mutant libraries pre- and post-selection to calculate enrichment. |

| HisTrap HP Column | For efficient purification of His-tagged mutant proteins for downstream biochemical assays (ITC, SPR). |

| Alexa Fluor 555/Cy3B NHS Ester | Bright, photostable fluorescent dyes for labeling proteins for FRET-based conformational studies. |

| ITC or SPR Instrument Buffer Kit | Pre-formulated, degassed buffer kits to ensure optimal baseline stability for sensitive binding measurements. |

| ΔΔG Calculation Software (e.g., PyRoS, epistasis) | Python packages specifically designed for statistical modeling and analysis of epistatic interactions. |

Troubleshooting & FAQ Center

Q1: Our epistatic interaction map from Deep Mutational Scanning (DMS) shows unexpectedly high noise. What are the primary sources of this noise and how can we mitigate them?

A: Noise in DMS-derived epistasis maps typically stems from three sources:

- Library Depth & Coverage: Inadequate sequencing depth per variant leads to poor fitness estimates.

- Bottleneck Effects: Population bottlenecks during cell passaging or selection cause stochastic variant loss.

- Selection Stringency: An overly strong or weak selection pressure compresses dynamic range.

Protocol: Optimized DMS for Epistasis Analysis

- Library Design: Use saturation mutagenesis (all possible single and double mutants) for a target region. Ensure the final sequencing read count covers the theoretical library size at least 1000-fold.

- Transformation/Transduction: Perform multiple (>3) independent transformations/transductions to create biological replicate libraries. Pool them to minimize bottleneck effects.

- Selection/FACS: Titrate selection pressure in pilot studies. Aim for a modal fitness shift that allows both enriched and depleted variants to be quantified accurately.

- DNA Prep & Sequencing: Use PCR-free library prep methods where possible to avoid amplification bias. Sequence on a platform providing sufficient depth (aim for >500x average coverage per variant post-selection).

- Fitness Calculation: Use a robust pipeline (e.g., Enrich2, dms_tools2) that accounts for read count variance and applies regularized shrinkage estimators to fitness scores.

Q2: When constructing a sequence-function model (e.g., from DMS data), how do we distinguish true epistasis from experimental error or global non-additive effects like protein stability thresholds?

A: This requires a multi-step validation framework:

- Error Modeling: Fit a global measurement error model from synonymous/silent mutant replicates. Variants with epistatic terms that fall within the 95% confidence interval of this error can be flagged as unreliable.

- Stability Filtering: Predict ΔΔG for all single mutants using Rosetta ddg_monomer or FoldX. Plot mutant fitness against predicted ΔΔG. Variants with fitness <10% of wild-type that also have a strongly destabilizing predicted ΔΔG (> +2 kcal/mol in the convention of both tools, where positive values are destabilizing) are likely stability-driven; their interactions may be non-specific.

- Double-Mutant Cycle Analysis: For candidate epistatic pairs (A, B, AB), calculate the coupling energy Ω = Fitness(AB) - Fitness(A) - Fitness(B) + Fitness(WT). True epistasis requires |Ω| to be significantly greater than the combined experimental error of the measurements that enter the cycle.
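One way to make "significantly greater than the experimental error" concrete is to propagate the standard errors of the four genotypes into Ω. The sketch below assumes independent measurement errors (so variances add) and uses a simple z-score cutoff; the function name, the standard errors, and the 1.96 threshold are illustrative assumptions, not values from the framework above.

```python
import math

def coupling_with_error(wt, a, b, ab):
    """Each argument is a (mean, standard_error) tuple for one genotype.

    Returns (omega, se_omega, z). Assumes independent errors, so the
    variances of the four terms in the cycle add.
    """
    omega = ab[0] - a[0] - b[0] + wt[0]
    se_omega = math.sqrt(wt[1] ** 2 + a[1] ** 2 + b[1] ** 2 + ab[1] ** 2)
    z = omega / se_omega if se_omega > 0 else math.nan
    return omega, se_omega, z

# Hypothetical fitness means with a standard error of 0.1 on every genotype.
omega, se_omega, z = coupling_with_error(
    wt=(0.00, 0.1), a=(-0.85, 0.1), b=(-0.92, 0.1), ab=(-1.50, 0.1)
)
is_reliable = abs(z) > 1.96  # roughly a two-sided 95% criterion
print(f"omega = {omega:+.2f} +/- {se_omega:.2f}, z = {z:.2f}, reliable = {is_reliable}")
```

With these numbers the apparent interaction of +0.27 is smaller than twice its propagated error, so it would be flagged as unreliable rather than reported as epistasis.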

Protocol: Double-Mutant Cycle Validation

- Clone Construction: Precisely construct the single (A, B) and double (AB) mutant clones via site-directed mutagenesis. Include the wild-type (WT) control.

- Quantitative Assay: Perform a controlled, multi-replicate (n≥6) functional assay (e.g., enzyme kinetics, binding affinity via SPR/ITC, cellular reporter activity).

- Calculate Ω: Use direct functional output (e.g., kcat/Km, KD, EC50) for the calculation, not normalized fitness scores.

- Statistical Test: Perform an ANOVA comparing the four genotypes (WT, A, B, AB). A significant interaction term (p < 0.01) confirms non-additivity. Report Ω with 95% confidence intervals from replicate experiments.

Q3: We've identified strong epistatic interactions in our target protein. How can we leverage this for drug design, particularly in avoiding resistance mutations?

A: Epistatic constraints can reveal "evolutionarily vulnerable paths." Use the following workflow:

- Resistance Mutation Prediction: In silico, simulate all possible single-point mutations at the drug-binding site and score them for reduced drug affinity (using molecular docking with ΔΔG binding calculations).

- Epistatic Filter: Cross-reference these predicted resistance mutations with your epistasis map. Identify which require permissive "background" mutations (epistatic partners) to be tolerated without catastrophic loss of function.

- Target Vulnerable Nodes: Design drugs that engage residues involved in strongly negative epistatic interactions. A resistance mutation at such a residue would then require a specific, rare compensatory mutation elsewhere, drastically lowering its evolutionary probability.

Protocol: Identifying Evolutionarily Constrained Drug Targets

- Generate Epistasis Network: Create a graph where nodes are residues and edges are weighted by the magnitude of epistasis (Ω) between mutations at those residues.

- Map Binding Site: Annotate nodes/residues that make direct contact with your lead compound (from co-crystal structure or docking model).

- Cluster Analysis: Perform community detection on the epistasis network. Identify clusters (highly interconnected residue groups) that contain both binding-site and distal residues.

- Compound Optimization: Prioritize lead compound derivatives that make additional hydrogen bonds or van der Waals contacts with residues in these clusters, especially those showing strong negative epistasis with their partners.
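The network steps above can be sketched with the standard library alone. This example is a simplification under stated assumptions: connected components of a thresholded graph stand in for a real community detection algorithm, and the residue numbers, Ω values, and threshold are hypothetical.

```python
from collections import defaultdict

def epistasis_clusters(edges, threshold):
    """Group residues linked by |omega| >= threshold.

    Connected components of the thresholded graph are a crude stand-in
    for community detection (an assumption of this sketch).
    """
    graph = defaultdict(set)
    for res_i, res_j, omega in edges:
        if abs(omega) >= threshold:
            graph[res_i].add(res_j)
            graph[res_j].add(res_i)
    seen, clusters = set(), []
    for start in sorted(graph):
        if start in seen:
            continue
        stack, component = [start], set()
        while stack:  # depth-first traversal of one component
            node = stack.pop()
            if node in component:
                continue
            component.add(node)
            stack.extend(graph[node] - component)
        seen |= component
        clusters.append(component)
    return clusters

def mixed_clusters(clusters, binding_site):
    """Keep clusters containing both binding-site and distal residues."""
    return [c for c in clusters if c & binding_site and c - binding_site]

# Hypothetical (residue_i, residue_j, omega) edges and binding-site annotation.
edges = [(12, 45, -1.2), (45, 88, 0.9), (101, 150, 0.1), (120, 160, -0.8)]
binding_site = {12, 45}
clusters = epistasis_clusters(edges, threshold=0.5)
targets = mixed_clusters(clusters, binding_site)
print(targets)
```

For real data, a dedicated graph library with modularity-based community detection would likely give finer clusters than this threshold-and-connect approach.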

Data Summary Tables

Table 1: Common Noise Sources in DMS Epistasis Studies

| Source | Typical Impact on Epistasis (Ω) Error | Mitigation Strategy |

|---|---|---|

| Low Sequencing Coverage | High variance (>±1.0 Ω) | Achieve >500x coverage per variant |

| Population Bottleneck | False positive/negative interactions | Use multiple independent library replicates |

| Compressed Selection | Underestimated Ω magnitude | Titrate selection to achieve 10-90% variant survival |

| PCR Amplification Bias | Systematic skew in fitness estimates | Use PCR-free NGS library prep |

Table 2: Analysis of Epistatic Interactions in Beta-Lactamase TEM-1 (Recent Study)

| Mutant Pair (Residues) | Measured Ω (Fitness) | Predicted Additive Fitness | Classification | Implication for Drug Design |

|---|---|---|---|---|

| M182T + G238S | +0.85 | +0.45 | Positive (Suppressive) | Common resistance pathway; target G238S interactions. |

| R164S + H205R | -1.20 | -0.40 | Negative (Synergistic) | Double mutant non-viable; co-targeting may prevent escape. |

| E104K + G238S | +0.10 | +0.70 | Sign Epistasis | E104K permissive mutation enables G238S resistance. |

Experimental Workflow Visualization

Diagram Title: Epistasis Mapping & Application Workflow

Diagram Title: Double Mutant Cycle (DMC) Principle

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Epistasis Research |

|---|---|

| Commercial Saturation Mutagenesis Libraries (e.g., Twist Bioscience oligo pools) | Provides high-fidelity, comprehensive variant libraries for DMS with precise control over mutation frequency. |

| Error-Robust NGS Library Prep Kits (e.g., PCR-free kits from NEB) | Minimizes amplification bias during sequencing library construction, crucial for accurate variant frequency counts. |

| Stable Cell Lines for Continuous Selection (e.g., Flp-In T-REx 293) | Enables consistent, inducible expression of variant libraries for long-duration or titration-based selection assays. |

| Cell Sorting & Automation (e.g., SONY SH800S sorter w/ 96-well plate sorting) | Allows high-throughput, quantitative phenotypic separation based on fluorescence reporters linked to protein function. |

| Microscale Thermophoresis (MST) Assay Kits | Facilitates rapid, in-solution measurement of binding affinity (KD) for purified WT and mutant proteins, key for DMC validation. |

| Structure Prediction & ΔΔG Software (e.g., Rosetta, FoldX license) | Computes predicted stability changes (ΔΔG) to filter global stability effects from specific epistatic interactions. |

Technical Support Center: Troubleshooting Protein Epistasis Experiments

This support center is designed to assist researchers investigating epistasis in protein engineering, evolution, and drug design. The guidance is framed within the thesis that accurate sequence-function models require the explicit integration of structural and energetic data to disentangle non-additive mutational interactions.

FAQs & Troubleshooting Guides

Q1: In deep mutational scanning (DMS), we observe strong pairwise epistasis between two distal sites. What are the primary structural mechanisms to investigate? A: Distal epistasis often arises from allosteric communication or through energetic coupling mediated by the protein fold. Follow this protocol:

- Obtain Structures: Use PDB files (wild-type and any single mutant models, if available). Perform homology modeling if necessary.

- Analyze Connectivity: Map the two sites onto the structure. Determine if they are connected via:

- A direct hydrogen-bond network or van der Waals packing (less likely for distal sites).

- A shared, rigid structural element (e.g., an alpha-helix).

- A dynamic allosteric pathway (see Diagram 1).

- Measure Dynamics: Use Molecular Dynamics (MD) simulations (≥100 ns per replicate) to calculate correlated motion (MI, LMI, or DCI analysis) between the sites. Increased correlation in the double mutant versus single mutants suggests allosteric epistasis.

- Probe Energetics: If possible, use double mutant cycle analysis with experimental stability (ΔΔG) data (see Table 1).
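As an illustration of the correlated-motion step, the sketch below computes a normalized dynamic cross-correlation between two sites from their per-frame coordinates. It is a toy example, not a replacement for an MD analysis suite: the coordinates are invented, the trajectories are assumed to be already aligned to a common reference, and the simple cross-correlation is used here in place of the MI, LMI, or DCI measures named above.

```python
import math

def cross_correlation(traj_i, traj_j):
    """Normalized dynamic cross-correlation between two atoms.

    traj_i, traj_j: lists of (x, y, z) positions, one per frame.
    C_ij = <dr_i . dr_j> / sqrt(<dr_i^2> <dr_j^2>), where dr is the
    displacement from the atom's mean position.
    """
    n = len(traj_i)
    mean_i = [sum(frame[k] for frame in traj_i) / n for k in range(3)]
    mean_j = [sum(frame[k] for frame in traj_j) / n for k in range(3)]
    dot = sq_i = sq_j = 0.0
    for pos_i, pos_j in zip(traj_i, traj_j):
        d_i = [pos_i[k] - mean_i[k] for k in range(3)]
        d_j = [pos_j[k] - mean_j[k] for k in range(3)]
        dot += sum(x * y for x, y in zip(d_i, d_j))
        sq_i += sum(x * x for x in d_i)
        sq_j += sum(y * y for y in d_j)
    return dot / math.sqrt(sq_i * sq_j)

# Invented three-frame trajectories for two sites.
site_a = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (2.0, 0.0, 0.0)]
site_b_same = [(5.0, 1.0, 0.0), (6.0, 1.0, 0.0), (7.0, 1.0, 0.0)]
site_b_opposite = [(7.0, 1.0, 0.0), (6.0, 1.0, 0.0), (5.0, 1.0, 0.0)]

c_same = cross_correlation(site_a, site_b_same)
c_opposite = cross_correlation(site_a, site_b_opposite)
print(c_same, c_opposite)
```

Comparing this value for the same pair of sites across WT, single-mutant, and double-mutant trajectories is what the dynamics step asks for; the sign and size of the change, not the absolute value, carries the information.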

Q2: Our computational model (e.g., EVmutation, SCA) predicts strong epistasis, but our experimental assay shows additive effects. What could be the source of this discrepancy? A: This is common and points to context-dependency.

- Troubleshooting Steps:

- Assay Condition Check: Ensure your experimental conditions (pH, temperature, buffer, ligand concentration) match the in vivo or target physiological context. Allostery is highly condition-sensitive.

- Functional Readout: Verify your assay measures the function relevant to the model's training data. A model trained on evolutionary covariation may predict fitness epistasis, not specific enzyme activity epistasis.

- Protein Construct: Check if your purified construct includes all necessary domains for allosteric regulation. Truncations can abolish epistasis.

- Data Quality: Re-examine the confidence intervals on your experimental measurements. Weak epistasis can be masked by high measurement noise.

Q3: How can we experimentally distinguish between "direct" (through-bond) and "indirect" (through-space/allosteric) epistasis for two mutations? A: Implement a Double Mutant Cycle (DMC) analysis combined with structural probes.

- Protocol - DMC:

- Clone, express, and purify four protein variants: WT, Mutant A, Mutant B, Double Mutant AB.

- Measure the functional property (e.g., ligand binding affinity Kd, catalytic rate kcat, or stability ΔG_folding) for all four.

- Calculate the coupling energy: ΔΔG_int = ΔG_AB - ΔG_A - ΔG_B + ΔG_WT, which is equivalent to ΔΔG_AB - (ΔΔG_A + ΔΔG_B) with each ΔΔG taken relative to WT. A |ΔΔG_int| > ~1 kcal/mol indicates significant epistasis.

- Protocol - Structural Distinction:

- Direct Interaction: Solve high-resolution structures (X-ray crystallography to <2.5Å or cryo-EM) of the single and double mutants. Look for direct atom-atom contacts <4Å that are present only in the double mutant.

- Allosteric/Indirect: Use Hydrogen-Deuterium Exchange Mass Spectrometry (HDX-MS). Compare deuteration patterns. If the double mutant shows protection/deuteration changes in regions distant from both mutations, it indicates a propagated conformational change.

Q4: When performing MD simulations to study epistasis, what are the key energetic and dynamic parameters to extract and compare? A: Beyond root-mean-square deviation (RMSD), focus on these calculated metrics across variants (WT, single mutants, double mutant):

| Parameter | How to Calculate (Typical MD Suite) | Interpretation for Epistasis |

|---|---|---|

| Dynamic Cross-Correlation (DCC) | gmx covar & gmx anaeig in GROMACS; Cα atom motions. | Identifies networks of correlated/anti-correlated motion. Changes in correlation between sites indicate allosteric epistasis. |

| Binding Free Energy (MM/PBSA or GBSA) | g_mmpbsa or AMBER's MMPBSA.py. Compute for ligand binding or protein-protein interaction. | Compare ΔG_bind across variants. Non-additivity confirms functional epistasis. |

| Per-Residue Energy Decomposition | Part of MM/PBSA or alanine scanning. | Pinpoints which residues' energy contributions change non-additively in the double mutant. |

| Distance & Dihedral Timeseries | gmx distance, gmx angle in GROMACS. Monitor specific distances/angles linking mutation sites. | Reveals if the double mutant samples a distinct conformational substate. |

| Principal Component Analysis (PCA) | gmx covar, gmx anaeig on Cα atoms. Project trajectories onto dominant eigenvectors. | Visualizes if the double mutant explores a distinct region of conformational space. |

Experimental Protocols

Protocol 1: Double Mutant Cycle Analysis for Binding Epistasis

- Objective: Quantify the coupling energy between two mutations on ligand binding affinity.

- Materials: See "Research Reagent Solutions" table.

- Steps:

- Variant Generation: Generate expression constructs for WT, Mut A, Mut B, and Double Mut AB via site-directed mutagenesis. Confirm by sequencing.

- Protein Purification: Express variants in E. coli (or relevant system) and purify using affinity (Ni-NTA for His-tag) followed by size-exclusion chromatography (SEC) in identical buffer (e.g., 20 mM Tris, 150 mM NaCl, pH 8.0).

- Binding Assay (ITC Recommended):

- Load the calorimeter cell with protein solution (e.g., 50 μM).

- Fill the syringe with ligand solution (e.g., 500 μM).

- Perform injections at constant temperature (e.g., 25°C).

- Fit the integrated heat data to a one-site binding model to obtain Kd, ΔH, and stoichiometry (n).

- Data Analysis:

- Convert Kd to ΔG_bind = RT ln(Kd), with Kd expressed in molar units.

- Calculate ΔΔG_bind for each mutant relative to WT: ΔΔG_A = ΔG_A - ΔG_WT, ΔΔG_B = ΔG_B - ΔG_WT, and ΔΔG_AB = ΔG_AB - ΔG_WT.

- Calculate the interaction coupling energy: ΔΔG_int = ΔG_AB - ΔG_WT - (ΔΔG_A + ΔΔG_B).
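The data-analysis steps above translate directly into a few lines of code. The sketch below is illustrative only: the function names are assumptions, the Kd values are hypothetical, and the temperature defaults to 25°C (298.15 K) to match the binding assay.

```python
import math

R_KCAL = 1.987204e-3  # gas constant in kcal/(mol*K)

def delta_g_bind(kd_molar, temp_k=298.15):
    """Binding free energy from Kd in molar units: dG = RT ln(Kd)."""
    return R_KCAL * temp_k * math.log(kd_molar)

def coupling_energy(kd_wt, kd_a, kd_b, kd_ab, temp_k=298.15):
    """ddG_int = dG_AB - dG_WT - (ddG_A + ddG_B), all in kcal/mol."""
    dg_wt, dg_a, dg_b, dg_ab = (
        delta_g_bind(kd, temp_k) for kd in (kd_wt, kd_a, kd_b, kd_ab)
    )
    ddg_a = dg_a - dg_wt
    ddg_b = dg_b - dg_wt
    return dg_ab - dg_wt - (ddg_a + ddg_b)

# Hypothetical Kd values (molar). In the first cycle Kd_AB = Kd_A * Kd_B / Kd_WT,
# which is the purely additive case; in the second the double mutant is rescued.
additive = coupling_energy(10e-9, 40e-9, 50e-9, 200e-9)
rescued = coupling_energy(10e-9, 40e-9, 50e-9, 20e-9)
print(f"additive cycle: {additive:+.2f} kcal/mol, rescued cycle: {rescued:+.2f} kcal/mol")
```

Because ΔG depends on the logarithm of Kd, additivity in free energy corresponds to multiplicativity in Kd, which is why the first cycle returns a coupling energy of zero.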

Protocol 2: HDX-MS to Probe Allosteric Conformational Changes

- Objective: Identify regions of altered dynamics or structure due to epistatic mutations.

- Steps:

- Sample Preparation: Dilute purified protein variants (WT and epistatic double mutant) to 10 μM in deuterated buffer (PBS in D2O, pD 7.4). Incubate for varying times (e.g., 10s, 1min, 10min, 1hr) at 4°C.

- Quenching & Digestion: Quench by lowering pH (adding cold quench buffer to pH 2.5) and temperature (0°C). Pass over an immobilized pepsin column for online digestion.

- LC-MS/MS Analysis: Desalt peptides on a trap column and separate via reversed-phase UPLC (sub-zero temperature). Analyze with a high-resolution mass spectrometer.

- Data Processing: Use software (e.g., HDExaminer, DynamX) to identify peptides and calculate deuterium uptake for each time point.

- Epistasis Analysis: Compare uptake kinetics peptide-by-peptide. A significant difference (>0.5 Da, p < 0.05) in the double mutant, especially at regions distant from the mutation sites, indicates an allosteric conformational origin of epistasis.

Visualizations

Diagram 1: Allosteric Pathway Underlying Distal Epistasis

Diagram 2: Workflow for Dissecting Epistatic Mechanisms

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Epistasis Research | Example/Notes |

|---|---|---|

| Site-Directed Mutagenesis Kit | Rapid generation of single and double mutant constructs for DMC. | Q5 Hot Start High-Fidelity (NEB), KLD Enzyme Mix. |

| Stable Isotope-labeled Media | For producing uniformly 15N/13C-labeled proteins for NMR or SILAC-MS. | Celtone base powder, Silantes ISOGRO. |

| Size-Exclusion Chromatography (SEC) Column | Critical for purifying monodisperse, correctly folded protein for consistent assays. | Superdex 75/200 Increase, BioSEC-5 (for HPLC). |

| Isothermal Titration Calorimeter (ITC) | Gold standard for measuring binding thermodynamics (Kd, ΔH, ΔS) for DMC. | MicroCal PEAQ-ITC (Malvern). |

| HDX-MS Quench & Digestion System | Automated, reproducible setup for HDX sample preparation. | LEAP Technologies HDX PAL with immobilized pepsin. |

| Molecular Dynamics Software | Simulate protein dynamics to compute energetic couplings and pathways. | GROMACS (free), AMBER, CHARMM. |

| Epistasis Analysis Software | Statistical models to identify and interpret epistasis from DMS data. | epistasis (Python package), ggplot2 in R for DMC plots. |

Technical Support Center: Troubleshooting Guides & FAQs

Common Experimental Issues & Solutions

FAQ 1: How do I determine if epistasis in my deep mutational scanning data is synergistic or antagonistic?

- Issue: User cannot classify interaction types from double mutant versus single mutant fitness measurements.

- Solution: Calculate the expected multiplicative fitness (w1 * w2) for the double mutant if mutations were independent. Compare to the observed double mutant fitness (w12).

- Synergistic (Positive): Observed w12 > Expected.

- Antagonistic (Negative): Observed w12 < Expected.

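- Use the equation: ε = log(w12) - [log(w1) + log(w2)], where ε > 0 indicates synergism and ε < 0 indicates antagonism under the sign-based convention used in this section. Other conventions name the classes by whether mutations reinforce or dampen each other's effects, so always report the sign of ε alongside the label.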

- Protocol: 1) Normalize all fitness values (w) to wild-type (w_WT = 1). 2) For mutations A and B, record the single-mutant fitness values w1 and w2. 3) Calculate expected = w1 * w2. 4) Compare expected to the measured double-mutant fitness w12.

FAQ 2: My protein function model fails to predict double mutant phenotypes accurately. How can I incorporate epistasis?

- Issue: Additive or independent models poorly predict experimental results for combinatorial variants.

- Solution: Implement a regression framework that includes an interaction term. Use a linear model: Function = β0 + β1(mut1) + β2(mut2) + β3(mut1 * mut2). The sign and magnitude of β3 quantify the epistatic interaction.

- Protocol: 1) Code single mutant effects as dummy variables (0 for WT, 1 for mutant). 2) For double mutants, include an additional column that is the product of the two single-mutant columns. 3) Fit the linear model to your training data (e.g., fluorescence, activity). 4) Validate the fitted model, including β3, by predicting a held-out test set of double mutants.
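For a single pair of sites, the regression above has a closed-form solution, sketched here without any statistics library. When all four genotypes are measured the model is saturated, so ordinary least squares reproduces the genotype means and the coefficients reduce to differences of those means. The function names and replicate values are hypothetical.

```python
from statistics import mean

def fit_two_site_model(replicates):
    """Least-squares coefficients for Y = b0 + b1*X1 + b2*X2 + b3*(X1*X2).

    replicates maps (x1, x2) dummy codes to a list of measurements.
    The model is saturated for a 2x2 design, so the fit equals the
    genotype means and b3 is the interaction (epistasis) term.
    """
    m = {genotype: mean(values) for genotype, values in replicates.items()}
    b0 = m[(0, 0)]
    b1 = m[(1, 0)] - b0
    b2 = m[(0, 1)] - b0
    b3 = m[(1, 1)] - m[(1, 0)] - m[(0, 1)] + b0
    return b0, b1, b2, b3

def predict(coeffs, x1, x2):
    """Evaluate the fitted model for a genotype given its dummy codes."""
    b0, b1, b2, b3 = coeffs
    return b0 + b1 * x1 + b2 * x2 + b3 * x1 * x2

# Hypothetical normalized activity measurements, three replicates per genotype.
data = {
    (0, 0): [1.00, 1.02, 0.98],  # WT
    (1, 0): [0.60, 0.62, 0.58],  # mutant 1
    (0, 1): [0.50, 0.49, 0.51],  # mutant 2
    (1, 1): [0.40, 0.41, 0.39],  # double mutant
}
coeffs = fit_two_site_model(data)
print("b0, b1, b2, b3 =", [round(c, 3) for c in coeffs])
```

With many sites and many pairs the same design-matrix idea applies, but the model is no longer saturated and a regression library with regularization becomes the practical choice.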

FAQ 3: How can I statistically validate that an observed interaction is not due to experimental noise?

- Issue: Uncertainty in single mutant measurements propagates, making epistasis calls unreliable.

- Solution: Perform bootstrapping on replicate data to generate confidence intervals for the epistasis coefficient (ε).

- Protocol: 1) For each genotype (WT, A, B, AB), you have 'n' replicate measurements. 2) Randomly resample (with replacement) from each genotype's replicates to create a bootstrap dataset. 3) Calculate ε for this dataset. 4) Repeat 1000+ times. 5) The 95% confidence interval from the bootstrap distribution shows if ε is significantly different from zero.
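The bootstrap protocol can be sketched as below. This is a minimal percentile bootstrap under stated assumptions: the replicate values are hypothetical log-scale fitness scores, so ε is computed as a difference of means, and the function names, seed, and number of resamples are illustrative choices.

```python
import random
from statistics import mean

def epsilon(wt, a, b, ab):
    """Epistasis on log-scale fitness: mean(AB) - (mean(A) + mean(B) - mean(WT))."""
    return mean(ab) - (mean(a) + mean(b) - mean(wt))

def bootstrap_epsilon_ci(wt, a, b, ab, n_boot=2000, alpha=0.05, seed=0):
    """Percentile confidence interval for epsilon from replicate measurements."""
    rng = random.Random(seed)  # fixed seed so the sketch is reproducible
    draws = []
    for _ in range(n_boot):
        # Resample each genotype's replicates independently, with replacement.
        resampled = [rng.choices(reps, k=len(reps)) for reps in (wt, a, b, ab)]
        draws.append(epsilon(*resampled))
    draws.sort()
    lower = draws[int((alpha / 2) * n_boot)]
    upper = draws[int((1 - alpha / 2) * n_boot) - 1]
    return lower, upper

# Hypothetical log-scale fitness replicates (n = 4 per genotype).
wt = [0.02, -0.01, 0.00, -0.01]
mut_a = [-0.85, -0.80, -0.90, -0.86]
mut_b = [-0.92, -0.95, -0.90, -0.91]
mut_ab = [-1.50, -1.45, -1.55, -1.48]

lower, upper = bootstrap_epsilon_ci(wt, mut_a, mut_b, mut_ab)
print(f"epsilon 95% CI: [{lower:+.3f}, {upper:+.3f}]")
```

If the interval excludes zero, the interaction is unlikely to be explained by replicate noise alone. With only a handful of replicates per genotype the bootstrap distribution is coarse, so the interval should be read as approximate.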

Table 1: Common Metrics for Quantifying Epistasis

| Metric | Formula | Interpretation | Best For |

|---|---|---|---|

| Multiplicative Deviation (ε_log) | log(w_AB) - [log(w_A) + log(w_B)] | ε_log > 0: Synergistic. ε_log < 0: Antagonistic. | Continuous fitness/activity data. Log scale handles magnitude well. |

| Additive Deviation (ε_add) | w_AB - (w_A + w_B - 1) | ε_add > 0: Synergistic. ε_add < 0: Antagonistic. | Data on an additive scale with wild-type normalized to 1 (for energy-like data with wild-type at 0, such as ΔΔG of binding, drop the -1). |

| Interaction Coefficient (β3) | From linear model: Y = β0 + β1X1 + β2X2 + β3(X1*X2) | β3 > 0: Synergistic. β3 < 0: Antagonistic. | High-throughput variant screens, integrating epistasis into predictive models. |
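The choice between the first two metrics in Table 1 is not cosmetic: the same measurements can give opposite signs on the two scales. The short sketch below demonstrates this with hypothetical fitness values normalized to wild-type = 1; the function names are assumptions.

```python
import math

def eps_log(w_a, w_b, w_ab):
    """Multiplicative deviation: log(w_AB) - [log(w_A) + log(w_B)]."""
    return math.log(w_ab) - (math.log(w_a) + math.log(w_b))

def eps_add(w_a, w_b, w_ab):
    """Additive deviation with wild-type normalized to 1: w_AB - (w_A + w_B - 1)."""
    return w_ab - (w_a + w_b - 1.0)

# Hypothetical fitness values: two deleterious singles and their double mutant.
w_a, w_b, w_ab = 0.5, 0.5, 0.2

log_scale = eps_log(w_a, w_b, w_ab)  # multiplicative expectation is 0.25
add_scale = eps_add(w_a, w_b, w_ab)  # additive expectation is 0.0
print(f"eps_log = {log_scale:+.3f}, eps_add = {add_scale:+.3f}")
```

Here the double mutant is less fit than the multiplicative expectation but fitter than the additive one, so the sign of ε flips with the scale. The null model should therefore be chosen from the biology of the assay and stated explicitly when results are reported.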

Table 2: Troubleshooting Common Data Artifacts

| Symptom | Possible Cause | Diagnostic Check | Corrective Action |

|---|---|---|---|

| Systematic antagonism at high fitness | Fitness ceiling or assay saturation. | Plot observed vs. expected fitness. Look for flattening at high values. | Use a more sensitive assay or transform data (e.g., log). |

| Massive synergy only in deleterious mutants | Compensatory masking of severe defects. | Check if single mutants wA and wB are both very low (<0.2). | Analysis may be valid; biological context is key. Report with caveat. |

| High variance in ε for low-fitness mutants | Poor signal-to-noise in growth/activity assays. | Plot variance of ε against mean single mutant fitness. | Filter out variants with fitness below assay noise floor. Increase replicates. |

Experimental Protocols

Protocol 1: Deep Mutational Scanning for Epistasis Mapping

- Objective: Quantify fitness effects of single and double mutants in a pooled assay.

- Methodology:

- Library Construction: Use site-saturation mutagenesis at two target positions, followed by DNA shuffling or combinatorial oligo synthesis to generate the single and double mutant library.

- Selection: Clone library into an appropriate expression system (e.g., phage display, yeast surface display, bacterial growth competition). Apply a functional selection (binding, catalysis, survival).

- Sequencing: Perform deep sequencing (Illumina) on the pre-selection (input) and post-selection (output) populations.

- Analysis: Calculate enrichment ratios (output/input) for each variant. Normalize to wild-type to get fitness 'w'. Compute epistasis coefficients (ε) as per Table 1.

Protocol 2: High-Throughput Protein Complementation Assay for Interactions

- Objective: Measure epistasis in a protein function (e.g., enzyme activity) via a compartmentalized assay.

- Methodology:

- Cloning: Generate all single and double mutant constructs in an expression vector.

- Assay Plate Setup: Express variants individually in a 384-well plate with a fluorescent or luminescent reporter of protein function.

- Measurement: Use a plate reader to quantify function (e.g., fluorescence intensity at kinetic endpoint) for each variant with sufficient replicates (n>=4).

- Normalization: Normalize raw data to wild-type (set to 1) and negative control (set to 0). Calculate mean and standard deviation per variant.

- Modeling: Fit the data to the linear model with interaction term (β3) using standard statistical software (R, Python).
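The modeling step can be sketched as follows. This is a minimal example, assuming NumPy and made-up normalized activities; the variable names (`measurements`, `b3`, `se_b3`) are illustrative and not part of the protocol. It fits Function = β0 + β1·xA + β2·xB + β3·xA·xB by ordinary least squares, where xA and xB are 0/1 indicators for the two mutations.

```python
import numpy as np

# Hypothetical normalized activities (wild type = 1, negative control = 0),
# four replicates per genotype. Values are illustrative only.
measurements = {
    (0, 0): [1.00, 0.98, 1.03, 0.99],  # wild type
    (1, 0): [0.70, 0.72, 0.68, 0.71],  # single mutant A
    (0, 1): [0.80, 0.79, 0.82, 0.81],  # single mutant B
    (1, 1): [0.30, 0.33, 0.29, 0.31],  # double mutant AB
}

rows, values = [], []
for (x_a, x_b), replicates in measurements.items():
    for value in replicates:
        # Columns: intercept, mutation A, mutation B, A x B interaction
        rows.append([1.0, x_a, x_b, x_a * x_b])
        values.append(value)

X = np.array(rows, dtype=float)
y = np.array(values, dtype=float)

# Ordinary least squares fit of the four coefficients
beta, *_ = np.linalg.lstsq(X, y, rcond=None)
b0, b1, b2, b3 = beta

# Classical standard error of the interaction term from the residual variance
residuals = y - X @ beta
dof = len(y) - X.shape[1]
sigma2 = residuals @ residuals / dof
cov = sigma2 * np.linalg.inv(X.T @ X)
se_b3 = float(np.sqrt(cov[3, 3]))

print(f"beta3 (epistasis) = {b3:.3f} +/- {se_b3:.3f}")
```

With these illustrative numbers β3 is about -0.20, meaning the double mutant is less active than the sum of the single-mutant effects would predict.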

Visualizations

Epistasis Mapping Experimental Workflow

Positive vs. Negative Epistasis Logic

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Epistasis Research |

|---|---|

| Combinatorial Mutagenesis Oligo Pools | Synthesizes all single and double mutant gene variants in a single tube for library construction. |

| Phage or Yeast Display System | Provides a physical link between protein variant (genotype) and function for pooled selection. |

| Next-Gen Sequencing Kit (Illumina) | Quantifies variant abundance pre- and post-selection to calculate fitness effects. |

| Fluorescent/Luminescent Reporter Assay | Enables high-throughput, quantitative measurement of protein function in a plate-based format. |

| Statistical Software (R/Python with glm) | Fits linear models with interaction terms to quantify epistasis coefficients (β3). |

| Bootstrapping/Randomization Script | Assesses the statistical significance of calculated epistasis values against experimental noise. |

Technical Support Center: Troubleshooting Non-Linear Modeling & Epistasis Analysis

Frequently Asked Questions (FAQs)

Q1: My deep mutational scanning (DMS) data shows high variance for double mutants, making epistasis estimates unstable. How can I improve data quality? A: This is often due to insufficient sequencing depth or bottlenecks in the experimental workflow. Ensure a minimum of 500x per variant coverage post-filtering. Implement technical replicates using unique molecular identifiers (UMIs) to distinguish PCR duplicates from biological variance. Normalize fitness scores using a robust z-score method against synonymous neutral variants included in your library.
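One way to read "robust z-score against synonymous neutral variants" is sketched below: center on the median of the synonymous scores and scale by their median absolute deviation (MAD). The function name, the 1.4826 scaling constant (which makes the MAD comparable to a standard deviation for normally distributed data), and the example scores are assumptions for illustration.

```python
import numpy as np

def robust_z_scores(scores, is_synonymous):
    """Scale fitness scores by the median and MAD of synonymous variants."""
    scores = np.asarray(scores, dtype=float)
    neutral = scores[np.asarray(is_synonymous, dtype=bool)]
    center = np.median(neutral)
    mad = np.median(np.abs(neutral - center))
    scale = 1.4826 * mad  # MAD rescaled to match a normal standard deviation
    if scale == 0:
        raise ValueError("Synonymous variants show no spread; cannot scale.")
    return (scores - center) / scale

# Hypothetical log-enrichment scores: five synonymous controls, two missense variants
scores = [0.02, -0.01, 0.00, 0.03, -0.02, -1.50, 0.60]
is_syn = [True, True, True, True, True, False, False]

z = robust_z_scores(scores, is_syn)
print(np.round(z, 2))
```

Because the median and MAD ignore extreme values, a handful of mis-annotated "neutral" variants will not distort the scale the way a mean and standard deviation would.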

Q2: When fitting a non-linear model (e.g., Gaussian Process), the model overfits the single mutant data but fails to predict double mutant phenotypes. What steps should I take?

A: This indicates that the model is not capturing interactions between mutations; a model trained only on single mutants has no direct information about them. First, verify your dataset includes a sufficient sampling of higher-order mutants (ideally all possible double mutants for a subset of sites). Switch from a standard radial basis function (RBF) kernel alone to a combination kernel (e.g., DotProduct + RBF), so that the linear term absorbs additive effects and the RBF term is left to model non-additive deviations. Implement cross-validation at the mutation order level (train on singles, test on doubles) to measure how well the model extrapolates.

Q3: How do I statistically distinguish genuine epistasis from measurement noise in my fitness landscape? A: Apply a global epistasis model as a null. Fit a smooth, non-linear function (sigmoid or simple neural network) that maps additive fitness predictions to observed fitness. Significant deviations of specific variants from this global curve indicate idiosyncratic (specific) epistasis. Use bootstrapping to generate confidence intervals for the global fit.
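A simplified sketch of the global epistasis null is shown below. It assumes fitness has been scaled to lie strictly between 0 and 1, so a sigmoid link can be fitted by a straight line on the logit scale; real pipelines usually fit the non-linearity directly and bootstrap the fit. The simulated data, the planted outlier at index 15, and the 3x robust-SD cutoff are all assumptions for illustration.

```python
import numpy as np

def logit(p):
    return np.log(p / (1.0 - p))

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

# Simulated data: additive predictions (phi) mapped through a sigmoid,
# with small noise and one planted variant carrying specific epistasis.
rng = np.random.default_rng(0)
phi = np.linspace(-2.0, 2.0, 21)
obs_logit = 2.0 * phi - 1.0 + rng.normal(0.0, 0.02, size=phi.size)
obs_logit[15] += 1.5  # planted idiosyncratic deviation
observed = sigmoid(obs_logit)

# Global fit: observed = sigmoid(slope * phi + intercept), linear after the logit
slope, intercept = np.polyfit(phi, logit(observed), deg=1)

# Deviations from the global curve, judged against a robust spread estimate
residuals = logit(observed) - (slope * phi + intercept)
centered = residuals - np.median(residuals)
robust_sd = 1.4826 * np.median(np.abs(centered))
flagged = np.abs(centered) > 3.0 * robust_sd

print("Variants flagged as specific epistasis:", np.flatnonzero(flagged))
```

Variants that sit on the global curve are explained by the shared non-linearity; only the flagged ones need a variant-specific explanation.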

Q4: My machine learning model for protein function prediction performs well on training data but generalizes poorly to new protein families. How can I improve transferability? A: This is a feature representation problem. Move from one-hot encoding of sequences to embeddings from protein language models (e.g., ESM-2). Use multi-task learning, training the model on diverse functional assays simultaneously to encourage the learning of general biophysical principles. Incorporate evolutionary covariance data from multiple sequence alignments as a regularizer.

Troubleshooting Guides

Issue: Low Predictive Power for Synergistic/Suppressive Epistasis Symptoms: Model accurately predicts additive and mildly antagonistic effects but systematically underestimates strong positive or negative synergy. Diagnosis: The chosen model architecture (e.g., simple additive model with pairwise interaction terms) has an inherent mathematical limitation in capturing high-order, non-linear interactions. Solution:

- Protocol: Experimental Validation of High-Order Interactions

- Design: Select 20-30 variant pairs predicted to have the largest discrepancy between observed fitness and model prediction.

- Cloning: Use combinatorial Golden Gate assembly or inverse PCR to construct these specific double/triple mutants.

- Assay: Measure function with a high-precision, low-noise assay (e.g., fluorescence-activated cell sorting for binding, direct enzyme kinetics).

- Analysis: Re-fit model using a neural network with at least one hidden layer (non-linear activation). Compare the root mean square error (RMSE) on this new validation set before and after re-fitting.

- Protocol: Incorporating Structural Data as a Model Prior

- Input: Generate distance matrices for all mutated residue pairs from a protein structure (PDB file or AlphaFold2 prediction).

- Integration: Use the inverse squared distance (1/d^2) as a prior weight for the corresponding interaction term in a Bayesian ridge regression model. This constrains physically proximal residues to have potentially stronger interactions.

- Validation: Compare the Bayesian model's performance on held-out double mutants against a model without the structural prior.
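One possible reading of the structural prior is a ridge regression in which each interaction coefficient has its own penalty, proportional to d^2 (that is, λ divided by the prior weight 1/d^2). This is the MAP estimate under independent Gaussian priors and is a sketch, not a full Bayesian ridge with learned hyperparameters. The function name, the distances, `lam`, and `main_penalty` are assumptions.

```python
import itertools
import numpy as np

def distance_weighted_ridge(X_main, X_inter, y, distances, lam=0.01, main_penalty=1e-3):
    """Ridge fit where interaction k is penalized by lam * d_k**2 (= lam / (1/d_k**2))."""
    X = np.hstack([X_main, X_inter])
    inter_penalty = lam * np.asarray(distances, dtype=float) ** 2
    # Intercept and main effects share one small, uniform penalty
    penalties = np.concatenate([np.full(X_main.shape[1], main_penalty), inter_penalty])
    A = X.T @ X + np.diag(penalties)
    beta = np.linalg.solve(A, X.T @ y)
    return beta, penalties

# All 8 genotypes over three sites, encoded 0 (wild type) / 1 (mutant)
genotypes = np.array(list(itertools.product([0, 1], repeat=3)), dtype=float)
pairs = [(0, 1), (0, 2), (1, 2)]
distances = [4.0, 12.0, 25.0]  # hypothetical residue-residue distances in angstroms

X_main = np.column_stack([np.ones(len(genotypes)), genotypes])
X_inter = np.column_stack([genotypes[:, i] * genotypes[:, j] for i, j in pairs])

# Simulated phenotype with one true interaction between the proximal pair (0, 1)
g0, g1, g2 = genotypes.T
y = 1.0 - 0.3 * g0 - 0.2 * g1 - 0.1 * g2 + 0.4 * g0 * g1

beta, penalties = distance_weighted_ridge(X_main, X_inter, y, distances)
beta_inter = beta[X_main.shape[1]:]
for pair, d, b in zip(pairs, distances, beta_inter):
    print(f"pair {pair}, d = {d:5.1f} A, interaction = {b:+.3f}")
```

Distant pairs need strong evidence in the data to receive a large coefficient, while proximal pairs are shrunk only mildly.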

Issue: Inconsistent Epistasis Measurements Across Different Assays Symptoms: A variant pair shows strong positive epistasis in a growth-based selection assay but appears additive in a direct enzymatic activity assay. Diagnosis: The assays report on different, condition-dependent facets of protein function (e.g., stability vs. catalytic efficiency). The observed "epistasis" is not a fixed property but is context-dependent. Solution:

- Protocol: Multi-Assay Profiling for Context-Specific Epistasis

- Workflow: For a curated set of 50 single mutants and 50 double mutants, perform three parallel assays:

- Assay A: Cell growth/selection (reports on in vivo fitness).

- Assay B: In vitro purified protein activity (reports on intrinsic function).

- Assay C: Thermal shift or protease sensitivity (reports on stability).

- Analysis: Calculate epistasis coefficients (ε) for each variant pair in each assay. Use Pearson correlation to compare epistasis maps between assays.

- Modeling: Train a multi-output model that predicts all three assay outcomes simultaneously, sharing a common latent representation of the sequence.


Table 1: Comparison of Model Performance on Predicting Double Mutant Fitness

| Model Type | Training Data (Singles) | Test Data (Doubles) RMSE | Epistatic Variance Captured (%) | Computational Cost (CPU-hr) |

|---|---|---|---|---|

| Linear Additive | 1,000 variants | 1.45 ± 0.12 | ~30% | 0.1 |

| Regularized Pairwise | 1,000 variants | 0.89 ± 0.08 | ~65% | 2.5 |

| Gaussian Process (RBF) | 1,000 variants | 0.71 ± 0.10 | ~78% | 18.0 |

| Neural Network (2 hidden) | 1,000 variants | 0.58 ± 0.07 | ~92% | 45.0 (GPU) |

Table 2: Sources of Variance in Epistasis Measurement (Simulation Data)

| Variance Source | Contribution to ε Error (%) | Mitigation Strategy | Resulting Error Reduction |

|---|---|---|---|

| Sequencing Depth | 40% | Increase depth to >500x & use UMIs | 35% |

| Assay Noise (Biological) | 35% | Use normalized fold change (log scale) & robust stats | 25% |

| Inadequate Global Fit | 20% | Apply global epistasis smoothing prior to analysis | 15% |

| Library Bottleneck | 5% | Maintain library complexity >100x variant count | 5% |

Experimental Protocols

Protocol: High-Throughput Epistasis Mapping via DMS Objective: Quantify genetic interactions between all single mutants at two specified residues (A and B). Materials: See "The Scientist's Toolkit" below. Method:

- Library Design: Synthesize an oligonucleotide library encoding all 400 possible amino acid combinations at the two target positions (20x20), embedded within the wild-type background. Include barcodes and flanking homology arms.

- Library Cloning: Use yeast homologous recombination or Gibson assembly to clone the pooled oligo library into the expression vector of interest. Transform into a highly competent E. coli strain. Plate on large-format agar plates to maintain diversity. Harvest >1e7 colonies.

- Pre-Selection Sequencing (T0): PCR-amplify the variant region from the plasmid pool using primers that add Illumina adapters and a sample index. Perform 2x150bp sequencing on an Illumina MiSeq to obtain the pre-selection variant counts.

- Functional Selection: Transform the plasmid library into the relevant screening host (e.g., yeast for stability, bacteria for activity). Apply the selective pressure (e.g., antibiotic gradient, fluorescence sorting, auxotrophy complementation) for a predetermined number of generations.

- Post-Selection Sequencing (T1): Isolate plasmid DNA from the post-selection population. Repeat the amplification and sequencing used for the T0 sample.

- Data Analysis: Count barcodes at T0 and T1. Calculate enrichment scores (log2(T1/T0)). Normalize scores using the median of synonymous neutral variants. Calculate epistasis (ε) for double mutant ij as: ε = Fij - Fi - Fj + Fwt, where F is the normalized fitness.
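The data analysis step can be sketched in plain Python as follows. The counts are invented, and two details are assumptions added for the example: counts are converted to frequencies by dividing by sequencing depth, and a pseudocount of 0.5 guards against zero counts.

```python
import math
from statistics import median

PSEUDOCOUNT = 0.5  # assumption: avoids log(0) for variants that drop out

# Hypothetical barcode counts per variant: (T0, T1)
counts = {
    "wt":   (1000, 2000),
    "syn1": (1000, 2000),
    "syn2": (500, 1000),
    "syn3": (800, 1600),
    "A":    (1000, 1000),
    "B":    (1000, 500),
    "AB":   (1000, 1000),
}
synonymous = ["syn1", "syn2", "syn3"]

total_t0 = sum(c0 for c0, _ in counts.values())
total_t1 = sum(c1 for _, c1 in counts.values())

def log2_enrichment(c0, c1):
    """log2 of the post/pre-selection frequency ratio."""
    f0 = (c0 + PSEUDOCOUNT) / total_t0
    f1 = (c1 + PSEUDOCOUNT) / total_t1
    return math.log2(f1 / f0)

raw = {variant: log2_enrichment(c0, c1) for variant, (c0, c1) in counts.items()}

# Normalize so that the median synonymous variant has fitness 0
neutral = median(raw[v] for v in synonymous)
F = {variant: score - neutral for variant, score in raw.items()}

def epistasis(F, i, j, ij, wt="wt"):
    """epsilon = F_ij - F_i - F_j + F_wt on the log2 scale."""
    return F[ij] - F[i] - F[j] + F[wt]

eps = epistasis(F, "A", "B", "AB")
print(f"epsilon(A, B) = {eps:.3f}")
```

Here ε is close to 2 on the log2 scale, meaning the double mutant is roughly four-fold more enriched than the two single mutants would predict. Both the depth correction and the synonymous normalization cancel out of ε, but they matter for the individual fitness scores.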

Visualizations

Diagram Title: DMS Workflow for Epistasis Mapping

Diagram Title: Additive vs Non-Linear Model Schematic

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Epistasis Research | Example/Note |

|---|---|---|

| Combinatorial Oligo Library Pool | Encodes all desired single and higher-order mutants for high-throughput testing. | Custom synthesized as 20-40k oligo pool; include unique barcodes for each variant. |

| Global Epistasis R Package (gpglob) | Software to fit and visualize global epistasis models from DMS data. | Uses Gaussian processes to separate global non-linearity from specific genetic interactions. |

| Protein Language Model Embeddings (ESM-2) | Pre-trained deep learning model that converts amino acid sequences into contextual numerical vectors. | Provides a rich, evolution-informed feature set for training predictive models (esm.pytorch). |

| FRET-based Biosensor Assay Kits | Enables high-precision, real-time measurement of conformational changes or activity in live cells. | Critical for quantifying functional epistasis in a physiologically relevant context. |

| Next-Generation Sequencing Kit (Illumina) | For accurate, deep sequencing of variant libraries pre- and post-selection. | MiSeq Reagent Kit v3 (600-cycle) provides sufficient read length for barcode and variant identification. |

| Golden Gate Assembly Mix | Enables efficient, one-pot combinatorial assembly of multiple DNA fragments for variant construction. | Allows rapid cloning of specific higher-order mutant combinations for validation. |

Advanced Methods for Capturing Epistasis: From DMS to Deep Learning

This technical support center provides troubleshooting and guidance for researchers designing Deep Mutational Scanning (DMS) experiments specifically aimed at detecting and quantifying epistatic interactions within proteins. This content supports a broader thesis on improving protein sequence-function models by systematically accounting for non-additive genetic interactions (epistasis), a critical challenge in protein engineering and therapeutic development.

Frequently Asked Questions & Troubleshooting

Q1: Our DMS variant library shows extremely biased representation after transformation and selection. What are the likely causes and solutions? A: Biased representation often stems from bottlenecks during library construction or cellular transformation.

- Troubleshooting Steps:

- Quantify Library Diversity: Sequence an aliquot of the plasmid library before transformation. Aim for >10x coverage of all possible variants. If diversity is low at this stage, optimize oligo pool synthesis or assembly PCR conditions.

- Increase Transformation Scale: Use electrocompetent cells and perform large-scale transformations (>10^9 colonies). Pool all colonies thoroughly.

- Minimize Selection Bottlenecks: If using a survival-based screen, ensure selective pressure is applied gradually or at a low level initially to avoid massive cell death.

- Protocol - High-Efficiency Library Transformation:

- Perform 10 separate 50 μL electroporations using high-efficiency NEB 10-beta E. coli.

- Recover each in 1 mL SOC for 1 hour at 37°C.

- Pool all recoveries, take a 1:10,000 dilution to titer, and culture the remainder in 500 mL LB + antibiotic overnight.

- Harvest plasmid DNA via maxiprep. This pooled DNA is your library for the next step.

Q2: We observe high experimental noise, making it difficult to distinguish true epistatic signals from measurement error. How can we improve signal-to-noise? A: Noise reduction requires both biological and technical replication, alongside robust normalization.

- Recommended Protocol - Replication & Sequencing Depth:

- Perform at least three independent biological replicates (separate library transformations and selections).

- Sequence each replicate to a depth of at least 500 reads per variant per replicate post-selection. Pre-selection depth should be higher.

- Use barcoding to distinguish replicates during sequencing.

- Data Processing Step: Normalize variant counts within each replicate using the following steps, implemented in tools like dms_tools2 or Enrich2:

- Correct for sequencing errors (e.g., via a naive Bayesian classifier).

- Normalize counts by total read count per sample.

- Compute functional scores (e.g., log2(enrichment ratio)) relative to the pre-selection library.

Q3: What is the best computational method to identify statistically significant epistasis from our DMS fitness data? A: Epistasis is typically identified by comparing observed double-mutant fitness to an expected model based on single mutants. The choice of model is crucial.

- Comparative Table of Epistasis Models:

| Model Name | Formula (Expected Fitness) | Best Use Case | Key Consideration |

|---|---|---|---|

| Additive (log scale) | log(Wab) = log(Wa) + log(Wb) - log(Wwt) | Initial scan, multiplicative phenotypes | Assumes independent effects; equivalent to the multiplicative model on the linear scale. |

| Multiplicative | Wab = Wa * Wb / Wwt | Standard for growth/survival fitness. | Most common null model. |

| Minimum (GEM) | log(Wab) = min(log(Wa), log(Wb)) | Assessing functional dominance. | Conservative estimate. |

- Protocol - Epistasis Calculation:

- Calculate robust fitness scores (W) for all single and double mutants from normalized counts.

- For each double mutant AB, compute the epistasis (ε) as:

ε = log(W_ab_observed) - log(W_ab_expected), where the expected value comes from your chosen model (e.g., multiplicative).

- Use an error propagation model (bootstrapping or analytic) to assign a standard error to each ε. Variants with |ε| > 4*SE are strong epistasis candidates.
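The calculation above can be sketched with analytic error propagation, using the multiplicative null and assuming independent measurement errors across genotypes. The replicate values and function names are hypothetical.

```python
import math
from statistics import mean, stdev

def mean_and_se(values):
    """Mean and standard error of replicate measurements."""
    return mean(values), stdev(values) / math.sqrt(len(values))

def epistasis_with_se(log_w):
    """epsilon = log(W_ab) - [log(W_a) + log(W_b) - log(W_wt)] and its standard error."""
    stats = {genotype: mean_and_se(values) for genotype, values in log_w.items()}
    expected = stats["a"][0] + stats["b"][0] - stats["wt"][0]
    eps = stats["ab"][0] - expected
    # Independent errors add in quadrature across the four genotypes
    se = math.sqrt(sum(se_g ** 2 for _, se_g in stats.values()))
    return eps, se

# Hypothetical natural-log fitness values from three biological replicates
log_w = {
    "wt": [0.00, 0.02, -0.02],
    "a":  [-0.50, -0.48, -0.52],
    "b":  [-0.30, -0.31, -0.29],
    "ab": [-0.20, -0.22, -0.18],
}

eps, se = epistasis_with_se(log_w)
is_candidate = abs(eps) > 4 * se
print(f"epsilon = {eps:.3f}, SE = {se:.3f}, strong candidate: {is_candidate}")
```

With only three replicates the standard errors are themselves noisy, so bootstrapping over reads or barcodes is a reasonable alternative when replicate numbers are small.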

Q4: How do we design a DMS library to effectively probe higher-order epistasis (interactions beyond pairs)? A: Tiling-based or combinatorial subset libraries are more effective than fully random libraries for higher-order studies.

- Design Strategy:

- Define a Region: Focus on a protein domain (e.g., 50 residues).

- Tile Saturation Mutagenesis: Design oligonucleotides to mutagenize every position in the region to all 20 amino acids, but in small, overlapping tiles (e.g., 5-7 residues per tile).

- Combinatorial Assembly: Use Golden Gate assembly to randomly combine mutated tiles. This generates a library with full saturation within tiles but random combinations between tiles, enabling the detection of interactions between distant sites while keeping library size manageable.

- Troubleshooting: If assembly efficiency is low, optimize the length and GC content of the overlapping sequences between tiles.

Experimental Workflow Diagram

DMS for Epistasis Detection Workflow

Epistasis Models Relationship Diagram

Comparing Epistasis Null Models

The Scientist's Toolkit: Research Reagent Solutions

| Item / Reagent | Function in DMS for Epistasis | Key Consideration |

|---|---|---|

| Oligonucleotide Pool (Array-Synthesized) | Source of all designed codon variants for the library. | Ensure high synthesis quality and minimal dropout; use error-correction PCR. |

| Golden Gate Assembly Mix | Efficient, seamless assembly of multiple mutated DNA tiles into a plasmid backbone. | Critical for combinatorial tiling libraries; optimize enzyme (e.g., BsaI) and fragment ratios. |

| Electrocompetent Cells (e.g., NEB 10-beta) | High-efficiency transformation of the large, complex plasmid library. | Use >10^9 CFU/μg efficiency cells; scale transformations to maintain diversity. |

| Next-Gen Sequencing Kit (Illumina 2x150bp) | Quantifying variant abundance pre- and post-selection. | Must generate sufficient reads for >500x coverage per variant post-selection. |

| Selection Plasmid Backbone | Vector enabling the functional screen (e.g., phage display, yeast display, survival). | Choice dictates selection modality; must be compatible with library size and expression host. |

| Normalization & Analysis Software (dms_tools2, Enrich2) | Processing raw counts into normalized fitness scores and calculating epistasis. | Must implement a robust statistical model (e.g., error propagation) for reliable ε values. |

Troubleshooting Guides & FAQs

Q1: Why is my model with interaction terms showing high variance inflation factors (VIFs), and how do I address it? A: High VIFs (typically >10) indicate multicollinearity between main effects and their interaction terms. This is expected because an interaction term is a product of its constituent variables. To address this:

- Center your predictors before creating interaction terms. This reduces correlation between the main effect and interaction term variables.

- Use Regularization (Ridge Regression) to penalize large coefficients and stabilize estimates.

- Consider Principal Component Analysis (PCA) on predictors before creating interactions to work with orthogonal components.
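The effect of centering on VIFs can be checked directly. The sketch below computes VIF_j = 1 / (1 - R_j^2) with NumPy by regressing each predictor on the others; the two continuous predictors are simulated and stand in for descriptors such as hydrophobicity scores.

```python
import numpy as np

def vif(X):
    """Variance inflation factor of each column, regressed on all other columns."""
    X = np.asarray(X, dtype=float)
    factors = []
    for j in range(X.shape[1]):
        target = X[:, j]
        others = np.column_stack([np.ones(len(X)), np.delete(X, j, axis=1)])
        coef, *_ = np.linalg.lstsq(others, target, rcond=None)
        resid = target - others @ coef
        r_squared = 1.0 - resid.var() / target.var()
        factors.append(1.0 / (1.0 - r_squared))
    return np.array(factors)

# Simulated predictors with non-zero means
rng = np.random.default_rng(42)
x1 = rng.uniform(2.0, 4.0, size=200)
x2 = rng.uniform(5.0, 7.0, size=200)

# Interaction built from raw predictors
raw = np.column_stack([x1, x2, x1 * x2])

# Interaction built from centered predictors
x1c, x2c = x1 - x1.mean(), x2 - x2.mean()
centered = np.column_stack([x1c, x2c, x1c * x2c])

vif_raw = vif(raw)
vif_centered = vif(centered)
print("VIF (raw):     ", np.round(vif_raw, 1))
print("VIF (centered):", np.round(vif_centered, 2))
```

Centering leaves the interaction coefficient and the model's predictions unchanged; it only removes the artificial correlation between the product term and its constituents.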

Q2: My linear regression model with interactions has a good R² on training data but performs poorly on new data. What's wrong? A: This is a classic sign of overfitting, often due to including too many interaction terms relative to your sample size.

- Solution: Implement forward/backward selection or LASSO regression to select only the most significant interactions.

- Rule of Thumb: You need 10-15 observations per parameter (including each coefficient you estimate) for reliable results.

Q3: How do I correctly interpret the coefficients of a model with interaction terms?

A: The coefficient for a main effect (e.g., β₁ for variable X₁) represents its effect on the outcome when the interacting variable (e.g., X₂) is zero. If variables are centered, it represents the average main effect. The interaction coefficient (β₁₂) represents how much the effect of X₁ changes for a one-unit increase in X₂ (and vice versa). Always interpret using the combined effect: Effect of X₁ = β₁ + β₁₂ * X₂.

Q4: My experiment measures epistasis in protein function. When should I use a linear model with interactions versus a more complex machine learning model? A: Use linear regression with interactions when:

- The number of mutations/variants is relatively small.

- Interpretability and hypothesis testing (p-values for specific interactions) are primary goals.

- You have a strong prior for specific pairwise interactions.

Switch to Random Forest, Gradient Boosting, or Neural Networks when:

- You are screening for higher-order interactions (beyond pairwise).

- The number of features (amino acid positions) is very large.

- Predictive power is the sole objective, and interpretability is secondary.

Q5: How can I test if an interaction effect is statistically significant if my standard linear regression assumptions are violated? A: If residuals are non-normal or heteroscedastic:

- Use robust standard errors (Huber-White/sandwich estimators) to calculate reliable p-values.

- Perform a non-parametric permutation test: Randomly shuffle the labels of your interacting variable many times, re-fit the model, and compare your observed interaction coefficient to the null distribution.

- Consider a generalized linear model (GLM) with an appropriate family (e.g., Gamma for positive continuous data).
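For the first option, a minimal NumPy sketch of the Huber-White (HC0) sandwich estimator is given below. The simulated data, in which noise is larger when mutation 1 is present, and the function name are assumptions for illustration.

```python
import numpy as np

def ols_with_robust_se(X, y):
    """OLS coefficients with HC0 (Huber-White) standard errors."""
    xtx_inv = np.linalg.inv(X.T @ X)
    beta = xtx_inv @ X.T @ y
    resid = y - X @ beta
    # "Meat" of the sandwich: X' diag(resid^2) X
    meat = X.T @ (X * resid[:, None] ** 2)
    cov_hc0 = xtx_inv @ meat @ xtx_inv
    return beta, np.sqrt(np.diag(cov_hc0))

# Simulated heteroscedastic data
rng = np.random.default_rng(1)
n = 200
x1 = rng.integers(0, 2, size=n).astype(float)
x2 = rng.integers(0, 2, size=n).astype(float)
noise_sd = 0.05 + 0.30 * x1
y = 1.0 - 0.3 * x1 - 0.2 * x2 + 0.25 * x1 * x2 + rng.normal(0.0, noise_sd)

X = np.column_stack([np.ones(n), x1, x2, x1 * x2])
beta, robust_se = ols_with_robust_se(X, y)
z_interaction = beta[3] / robust_se[3]
print(f"interaction = {beta[3]:.3f}, robust SE = {robust_se[3]:.3f}, z = {z_interaction:.2f}")
```

In practice, statsmodels exposes this family of estimators through the `cov_type` argument of `fit` (for example `cov_type="HC3"`), which also applies small-sample corrections that HC0 lacks.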

Data Presentation

Table 1: Comparison of Model Performance on Simulated Epistatic Protein Data

| Model Type | # of Features | Included Interaction Terms | Training R² | Test Set R² (5-fold CV) | Mean VIF |

|---|---|---|---|---|---|

| Linear (Main Effects Only) | 10 | None | 0.65 | 0.63 | 1.8 |

| Linear (All Pairwise Interactions) | 10 | All (45 terms) | 0.92 | 0.41 | 25.7 |

| Linear (Regularized, L1/L2) | 10 + 45 | Selected via LASSO | 0.78 | 0.75 | 4.2 |

| Random Forest | 10 | Implicit | 0.99* | 0.82 | N/A |

| *Indicates severe overfitting without proper tuning. |

Table 2: Required Sample Size for Reliable Interaction Detection (Power = 0.8, α = 0.05)

| Effect Size (f²) | Minimum Sample Size (Main Effects) | Minimum Sample Size (Interaction Effect) |

|---|---|---|

| Small (0.02) | 395 | 1,583 |

| Medium (0.15) | 55 | 221 |

| Large (0.35) | 24 | 97 |

Experimental Protocols

Protocol 1: Detecting Epistasis via Linear Regression with Centered Predictors

- Data Preparation: Encode protein variants. For k mutations, create k binary (0 for wild-type, 1 for mutant) or continuous (e.g., hydrophobicity score) predictor variables.

- Centering: Center each predictor variable by subtracting its mean from every observation. This yields a mean of zero.

- Interaction Creation: Multiply centered predictors to create interaction terms (e.g., X1_centered * X2_centered for pairwise epistasis).

- Model Fitting: Fit the linear model: Function = β₀ + ΣβᵢXᵢ_centered + Σβᵢⱼ(Xᵢ_centered * Xⱼ_centered).

- Significance Testing: Perform an F-test comparing the full model (with interactions) to a nested model with only main effects. Evaluate individual interaction term coefficients using t-tests with Bonferroni or FDR correction for multiple comparisons.

- Validation: Use k-fold cross-validation (k=5 or 10) and report test set R². Calculate VIFs to diagnose residual multicollinearity.

Protocol 2: Permutation Test for Interaction Significance Under Non-Normality

- Fit Original Model: Fit your linear model with interaction terms to the original data (D_orig). Record the t-statistic or coefficient (β_orig) for the interaction of interest.

- Initialize Null Distribution: Create an empty array S to store null statistics.

- Permutation Loop (Repeat N=5000 times):

a. Randomly shuffle the values of one of the constituent variables of the interaction term across all samples, breaking its relationship with the outcome and the other variable.

b. Re-fit the model to this permuted dataset.

c. Record the interaction coefficient (β_perm) into S.

- Calculate p-value: Compute the two-sided p-value as: p = (count(abs(β_perm) >= abs(β_orig)) + 1) / (N + 1).

- Decision: Reject the null hypothesis (no interaction) if p < α (e.g., 0.05).
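Protocol 2 can be sketched with NumPy as follows. The simulated data (binary mutation indicators with heavy-tailed noise) and the function names are assumptions; the example uses 1,000 permutations to keep the runtime short, whereas the protocol specifies N=5000.

```python
import numpy as np

def interaction_coef(x1, x2, y):
    """Least-squares coefficient of the x1*x2 term in a model with main effects."""
    X = np.column_stack([np.ones_like(x1), x1, x2, x1 * x2])
    beta, *_ = np.linalg.lstsq(X, y, rcond=None)
    return beta[3]

def permutation_p_value(x1, x2, y, n_perm=5000, seed=0):
    """Two-sided permutation p-value for the interaction coefficient."""
    rng = np.random.default_rng(seed)
    beta_orig = interaction_coef(x1, x2, y)
    null = np.empty(n_perm)
    for i in range(n_perm):
        # Shuffle one constituent variable, then re-fit the model
        null[i] = interaction_coef(x1, rng.permutation(x2), y)
    count = int(np.sum(np.abs(null) >= abs(beta_orig)))
    return beta_orig, (count + 1) / (n_perm + 1)

# Simulated data with a true interaction and non-normal (Student t) noise
data_rng = np.random.default_rng(1)
n = 400
x1 = data_rng.integers(0, 2, size=n).astype(float)
x2 = data_rng.integers(0, 2, size=n).astype(float)
noise = 0.1 * data_rng.standard_t(df=3, size=n)
y = 1.0 - 0.3 * x1 - 0.2 * x2 + 0.8 * x1 * x2 + noise

beta_orig, p_value = permutation_p_value(x1, x2, y, n_perm=1000)
print(f"interaction = {beta_orig:.3f}, permutation p = {p_value:.4f}")
```

The +1 in the numerator and denominator keeps the p-value strictly above zero, so the smallest reportable value is 1 / (N + 1).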

Mandatory Visualization

Linear Regression with Interactions Workflow for Epistasis

Modeling Epistasis as a Linear Interaction Term

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Experiment |

|---|---|

| Deep Mutational Scanning (DMS) Library | A comprehensive pool of protein variant sequences, enabling high-throughput measurement of function for thousands of mutants in a single experiment. |

| Next-Generation Sequencing (NGS) Reagents | For quantifying variant abundance pre- and post-selection in DMS, linking genotype to functional fitness. |

| Fluorescent Reporters or Affinity Tags | Used to engineer a selectable or quantifiable phenotype (function) for the protein of interest in high-throughput assays. |

| Statistical Software (R/Python with packages) | R: lm(), car (for VIF), glmnet (for regularization). Python: statsmodels, scikit-learn (for LinearRegression, Ridge, Lasso). Essential for model fitting and diagnostics. |

| Benchmark Dataset (e.g., GB1, TEM-1 β-lactamase) | Well-characterized protein systems with known epistatic effects, used for validating new modeling approaches. |

| Multi-Well Plate Assays & Automation | Enables parallelized, high-precision measurement of protein function (e.g., enzyme activity, binding) for many variants. |

Technical Support Center: Troubleshooting & FAQs

This support center addresses common issues encountered when using Random Forest (RF) and Gradient Boosting Machines (GBM) for modeling epistasis in protein sequence-function relationships.

FAQ 1: My model is overfitting to the training data, especially on my limited mutational scan dataset. How can I improve generalization?

Answer: Overfitting is common with complex, non-linear models on limited biological data.

- For Random Forests: Reduce max_depth (e.g., from the default of None to 5-10) and increase min_samples_leaf (e.g., to 5-10). This restricts tree complexity. Lower the number of features considered per split (max_features, e.g., to "sqrt").

- For Gradient Boosting: Use strong regularization. Decrease learning_rate (stronger shrinkage) while proportionally increasing n_estimators. Add stochastic boosting via subsample (values below 1.0) and limit max_depth to 3-5. Explicit L1/L2 penalties are available in XGBoost and LightGBM (reg_alpha, reg_lambda). Cross-validate these parameters.

- General: Employ nested cross-validation to obtain an unbiased performance estimate, and perform feature selection based on domain knowledge (e.g., physico-chemical properties) before modeling.

FAQ 2: The feature importance plots from my Random Forest are dominated by single mutant features, but my hypothesis is about epistatic interactions. How can I detect interacting features?

Answer: Standard Gini/Mean Decrease in Impurity importance often misses interactions.

- Use Alternative Importance Metrics: Calculate permutation importance on the out-of-bag samples or on a hold-out set. This can be more reliable.

- Extract Interaction Statistics: Inspect the fitted trees in scikit-learn (each estimator exposes its structure through the tree_ attribute) to compute the total decrease in impurity for internal nodes, which can hint at interactions. Libraries like sklearn-gbmi or PDPbox allow calculation of H-statistics or 2D Partial Dependence Plots to quantify feature interaction strengths in both RF and GBM.

- Methodology: Train your model. For a suspected pair of positions (e.g., sites 12 and 45), compute the 2D Partial Dependence. The H-statistic is the proportion of the variance of the joint partial dependence that is explained by the interaction. Values near 0 indicate no interaction; values clearly above 0 indicate that the model represents epistasis between the two positions.
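To make the H-statistic concrete, the sketch below computes Friedman's H² for a feature pair from any `predict` function, using only NumPy. It is a brute-force illustration: it calls `predict` once per sample for each partial dependence, so it is intended for small datasets. The two toy models and all function names are hypothetical.

```python
import itertools
import numpy as np

def centered_partial_dependence(predict, X, cols):
    """Partial dependence on `cols`, evaluated at each sample's own values, mean-centered."""
    n = X.shape[0]
    pd_values = np.empty(n)
    for i in range(n):
        X_mod = X.copy()
        X_mod[:, cols] = X[i, cols]  # fix the chosen features, average over the rest
        pd_values[i] = predict(X_mod).mean()
    return pd_values - pd_values.mean()

def h_statistic(predict, X, j, k):
    """Friedman's H^2: share of the joint partial-dependence variance due to interaction."""
    pd_j = centered_partial_dependence(predict, X, [j])
    pd_k = centered_partial_dependence(predict, X, [k])
    pd_jk = centered_partial_dependence(predict, X, [j, k])
    return float(np.sum((pd_jk - pd_j - pd_k) ** 2) / np.sum(pd_jk ** 2))

# Full factorial design over three binary "mutation" features
X = np.array(list(itertools.product([0, 1], repeat=3)), dtype=float)

def additive_model(X):
    return X[:, 0] + 2.0 * X[:, 1]

def epistatic_model(X):
    return X[:, 0] + X[:, 1] + 2.0 * X[:, 0] * X[:, 1]

h_additive = h_statistic(additive_model, X, 0, 1)
h_epistatic = h_statistic(epistatic_model, X, 0, 1)
print(f"H^2 additive = {h_additive:.3f}, H^2 epistatic = {h_epistatic:.3f}")
```

For a fitted scikit-learn model, `model.predict` can be passed in place of the toy functions. Because a finite sample rarely gives exactly zero, compare the value against a null (for example, H² after permuting one feature) before calling an interaction real.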

Diagram: Workflow for Detecting Epistatic Interactions

FAQ 3: Training Gradient Boosting is very slow on my dataset of 10,000 protein variants with 500 features each. How can I speed it up?

Answer: Optimize using algorithmic and computational tricks.

- Algorithm Settings: Use histogram-based tree growth (e.g., max_bins in scikit-learn's HistGradientBoosting, or LightGBM/XGBoost). This dramatically speeds up finding splits.

- Early Stopping: Implement early stopping with a large n_estimators and a validation set (validation_fraction). Training stops when the validation score does not improve.

- Use Efficient Libraries: For large-scale data, employ XGBoost or LightGBM with their GPU support enabled. Reduce dimensionality via PCA on physicochemical features before training.

- Protocol for Early Stopping:

- Split data into train (70%), validation (15%), test (15%).

- Set n_estimators=5000, learning_rate=0.01.

- Set early_stopping_rounds=50. Fit model to train set, evaluate loss on validation set at each iteration.

- Model stops after 50 consecutive rounds of no improvement on validation loss.

- Evaluate final model on the held-out test set.
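The stopping rule in this protocol is independent of any particular library. The sketch below implements the patience logic in plain Python on a synthetic validation-loss curve. The function name and the loss curve are hypothetical; XGBoost, LightGBM, and scikit-learn provide their own built-in equivalents.

```python
def early_stopping_round(validation_losses, patience=50, min_delta=0.0):
    """Return (best_round, stop_round) for a sequence of validation losses.

    Training stops once `patience` consecutive rounds pass without the loss
    improving on the best value by more than `min_delta`.
    """
    best_loss = float("inf")
    best_round = -1
    for round_idx, loss in enumerate(validation_losses):
        if loss < best_loss - min_delta:
            best_loss = loss
            best_round = round_idx
        elif round_idx - best_round >= patience:
            return best_round, round_idx
    # Budget exhausted without triggering the stopping rule
    return best_round, len(validation_losses) - 1

# Synthetic validation curve: improves until round 120, then overfits
losses = [0.5 + (r - 120) ** 2 / 1000 for r in range(5000)]

best_round, stop_round = early_stopping_round(losses, patience=50)
print(f"best round = {best_round}, training stopped at round {stop_round}")
```

Here training halts at round 170 instead of running all 5,000 rounds, and the model from round 120 is the one to evaluate on the test set.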

FAQ 4: How do I choose between Random Forest and Gradient Boosting for my protein fitness prediction problem?

Answer: The choice depends on data size, noise level, and computational goals.

| Criterion | Random Forest | Gradient Boosting |

|---|---|---|

| Typical Performance | Very good, can be slightly less accurate than well-tuned GBM. | Often achieves higher accuracy, especially on structured problems. |

| Overfitting Resistance | High (bagging + randomness). Robust to noise. | Medium-High (requires careful tuning of depth, learning rate). |

| Training Speed | Faster (trees built in parallel). | Slower (trees built sequentially). |

| Hyperparameter Tuning | Less sensitive, easier to tune. | Very sensitive, requires careful grid/random search. |

| Interpretability | Good (feature importance, partial dependence). | Good (feature importance, partial dependence). |

| Best For (in epistasis context) | Initial exploration, noisy data, stable baseline. | Squeezing out maximum predictive performance, assuming sufficient data and tuning resources. |

FAQ 5: My partial dependence plots for interaction are noisy and hard to interpret. How can I get clearer signals?

Answer: Noisiness often stems from feature correlation or insufficient data coverage.

- Methodology for Clearer PDPs:

- Isolate Key Features: First, identify the top-20 important features from your model.

- Filter by Correlation: For interaction plots, select feature pairs with low to moderate correlation (e.g., |Pearson r| < 0.7) to ensure independent effects. Use a correlation matrix heatmap.

- Use ICE Plots: Generate Individual Conditional Expectation (ICE) plots alongside PDPs. This shows the prediction path for each individual sample, helping distinguish global trends from individual variations and identify subgroups.

- Model Agnostic: Use Accumulated Local Effects (ALE) plots instead of PDPs. ALE plots are faster to compute and are not biased by correlated features, providing a clearer average effect of a feature.

Diagram: Path to Clearer Model Interpretation

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Protein ML Research |

|---|---|

| scikit-learn | Core Python library providing robust implementations of Random Forests (RandomForestRegressor) and Gradient Boosting (GradientBoostingRegressor, HistGradientBoostingRegressor). Essential for prototyping. |

| XGBoost / LightGBM | Optimized, high-performance GBM libraries offering GPU support, advanced regularization, and efficient handling of large datasets, crucial for large-scale mutational screens. |

| SHAP / PDPbox | Interpretation libraries. SHAP (SHapley Additive exPlanations) provides consistent feature importance scores. PDPbox generates Partial Dependence and ICE plots to visualize feature effects and interactions. |

| ESM-2 / ProtBERT | Pre-trained protein language models. Used to generate informative, continuous vector representations (embeddings) of protein sequences as input features for RF/GBM, capturing evolutionary constraints. |

| DMS Data Processing Pipeline (e.g., dms_tools2) | Specialized tools for quality control, normalization, and statistical preprocessing of deep mutational scanning data before feeding into ML models. |

| Hyperparameter Optimization Suite (Optuna, Ray Tune) | Frameworks for automated, efficient search of hyperparameter spaces (e.g., depth, learning rate, estimators), vital for maximizing GBM performance. |

| Stability Analysis Scripts | Custom code to perform bootstrap or jackknife resampling to assess the stability of identified "important" features and interactions against data perturbations. |

Technical Support Center

Troubleshooting Guides & FAQs

Q1: When training a GNN for protein structure-function prediction, my model suffers from severe overfitting, even with small datasets. What are the primary regularization strategies? A1: Overfitting in GNNs for protein graphs is common. Implement these strategies:

- Topological Augmentation: Use stochastic edge dropout or random node feature masking during training. For protein contact graphs, you can randomly drop a small percentage (e.g., 5-10%) of non-covalent edges.

- Depth Regularization: Limit GNN layers to 3-5 to avoid oversmoothing. Combine with skip connections (residual/gated) and intermediate layer normalization.

- Graph Pooling: Use top-k pooling or self-attention pooling to reduce graph complexity before dense layers.

- Contextualized Dropout: Implement dropout not just on node features but also on the attention weights in GAT-based architectures.
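The stochastic edge dropout from the first bullet can be sketched without any deep learning framework. This is a minimal illustration under assumptions: edges are stored as `(i, j, kind)` tuples, the labels `"covalent"` and `"contact"` are hypothetical, and the function would be called anew on each training step so that each epoch sees a different graph.

```python
import random

def drop_noncovalent_edges(edges, drop_rate=0.1, seed=None):
    """Randomly remove a fraction of non-covalent edges; covalent edges are always kept."""
    rng = random.Random(seed)
    kept = []
    for i, j, kind in edges:
        if kind != "covalent" and rng.random() < drop_rate:
            continue  # drop this non-covalent contact for the current training step
        kept.append((i, j, kind))
    return kept

# Toy 10-residue graph: backbone edges plus all contacts at sequence separation >= 3.
backbone = [(i, i + 1, "covalent") for i in range(9)]
contacts = [(i, j, "contact") for i in range(10) for j in range(i + 3, 10)]
graph = backbone + contacts
augmented = drop_noncovalent_edges(graph, drop_rate=0.1, seed=0)
print(len(graph), len(augmented))
```

Keeping the backbone intact preserves chain connectivity, so the augmentation perturbs only the tertiary contacts the model might otherwise memorize.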

Q2: My Transformer model for protein sequences fails to capture long-range epistatic interactions, behaving like a simple additive model. How can I improve its awareness of long-range dependencies? A2: This indicates a failure in the attention mechanism to model higher-order interactions.

- Sparse or Patterned Attention: Replace full self-attention with linear attention, or use attention patterns like BigBird's sparse blocks, which are more efficient and can be tuned to span longer sequence distances relevant to allostery.

- Hierarchical Modeling: First, use a local windowed attention layer to capture nearby residues. Then, apply a secondary "global" attention layer, either at sparser positions along the sequence or on a summarized (pooled) version of the sequence, to capture long-range context.

- Explicit Pairwise Feature Incorporation: Augment the Transformer with a pairwise feature matrix (e.g., from a covariance model or inferred contacts) that is added to the attention logits, directly biasing the model to consider specific long-range residue pairs.
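The last option, adding a pairwise feature matrix to the attention logits, can be illustrated with a single-head attention in NumPy. This is a sketch under assumptions: the bias matrix here is hand-set for one hypothetical coupled pair (positions 0 and 7), whereas in practice it would come from a covariance model or predicted contacts, and `scale_bias` is an illustrative parameter.

```python
import numpy as np

def biased_attention(Q, K, V, pair_bias, scale_bias=1.0):
    """Scaled dot-product attention with an additive pairwise bias on the logits."""
    d = Q.shape[-1]
    logits = Q @ K.T / np.sqrt(d) + scale_bias * pair_bias
    logits = logits - logits.max(axis=-1, keepdims=True)  # numerical stability
    weights = np.exp(logits)
    weights = weights / weights.sum(axis=-1, keepdims=True)
    return weights @ V, weights

rng = np.random.default_rng(0)
L, d = 8, 4
Q, K, V = (rng.normal(size=(L, d)) for _ in range(3))

pair_bias = np.zeros((L, L))
pair_bias[0, 7] = pair_bias[7, 0] = 5.0  # hypothetical long-range coupled pair

_, w_plain = biased_attention(Q, K, V, np.zeros((L, L)))
_, w_biased = biased_attention(Q, K, V, pair_bias)
print(w_plain[0, 7], w_biased[0, 7])
```

Because the bias enters before the softmax, it shifts attention toward the flagged pair without preventing the model from attending elsewhere.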

Q3: During inference, my trained model shows high performance on wild-type protein sequences but poor generalization to unseen mutants, especially combinatorial variants. What is the likely cause? A3: This is a classic sign of dataset bias and failure to model epistasis.

- Cause: The training data likely lacks sufficient combinatorial diversity, teaching the model only additive, single-mutation effects.

- Solution:

- Data Strategy: Employ techniques like Directed Evolution dataset augmentation or use synthetic data from statistical models (like Potts) that encode epistasis.

- Architecture Choice: Use an architecture explicitly built for pairwise or higher-order interactions. A Graph Transformer is highly recommended: represent the protein as a graph (nodes=residues, edges=contacts/distances) and apply a Transformer on the graph, allowing messages to pass only through defined edges, which physically constrains and focuses learning on potential epistatic pairs.

Q4: I encounter "CUDA out of memory" errors when processing large protein graphs or long sequences with a Transformer. What are the most effective steps to reduce memory footprint? A4:

- Gradient Accumulation: Reduce batch size to 1 or 2 and accumulate gradients over multiple steps before performing the optimizer step.

- Mixed Precision Training: Use Automatic Mixed Precision (AMP) with PyTorch or TensorFlow to train in float16 precision, which substantially reduces memory usage (roughly halving the memory needed for activations).

- Checkpointing: Use activation checkpointing (gradient checkpointing) for both GNN and Transformer layers. This recomputes activations during the backward pass instead of storing them.

- Truncation/Chunking: For sequences, consider splitting long sequences into overlapping chunks with a sliding window, ensuring context is maintained at the boundaries.
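The sliding-window chunking in the last bullet can be sketched as follows. The window and overlap sizes are illustrative assumptions, and `sliding_chunks` is a hypothetical helper; start offsets are returned so that per-residue outputs can be mapped back to positions in the full sequence.

```python
def sliding_chunks(seq, window=512, overlap=64):
    """Split seq into overlapping windows; returns (start_index, chunk) pairs."""
    if not 0 <= overlap < window:
        raise ValueError("overlap must satisfy 0 <= overlap < window")
    step = window - overlap
    chunks, start = [], 0
    while True:
        end = min(start + window, len(seq))
        chunks.append((start, seq[start:end]))
        if end == len(seq):
            break
        start += step
    return chunks

seq = "ACDEFGHIKL" * 100  # toy 1000-residue sequence
chunks = sliding_chunks(seq)
print([(start, len(chunk)) for start, chunk in chunks])
```

Residues in an overlap region receive two sets of predictions; averaging them, or keeping the one from the window where the residue sits farther from the edge, is one way to maintain context at the boundaries.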

Q5: How do I effectively combine a GNN (for structure) and a Transformer (for sequence) into a single model for a joint embedding? A5: A common and effective design is a dual-stream architecture with cross-attention:

- Stream 1 (Sequence): A standard protein language model (e.g., ESM-2) processes the amino acid sequence.

- Stream 2 (Structure): A GNN processes the 3D structure graph (residues as nodes, spatial contacts as edges).

- Fusion Point: Use a cross-attention mechanism where the sequence representations serve as queries and the structure representations as keys/values (or vice-versa). This allows each residue's sequence context to "attend to" its structural context and neighboring residues.

- Training: Use multi-task learning, combining a primary fitness prediction loss with auxiliary losses (e.g., contact prediction, masked residue prediction).
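The fusion point can be illustrated with a single-head cross-attention in NumPy. This is a minimal sketch under assumptions: random matrices stand in for ESM-2 and GNN outputs, the projection weights `Wq`, `Wk`, `Wv` would be learned in a real model, and the residual connection is a design choice added here, not something the description above prescribes.

```python
import numpy as np

def softmax(x, axis=-1):
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def cross_attention_fuse(seq_repr, struct_repr, Wq, Wk, Wv):
    """Sequence representations query the structure representations (keys/values)."""
    Q = seq_repr @ Wq       # (L, d_k)
    K = struct_repr @ Wk    # (L, d_k)
    V = struct_repr @ Wv    # (L, d_seq)
    attn = softmax(Q @ K.T / np.sqrt(Q.shape[-1]))
    fused = seq_repr + attn @ V  # residual keeps the original sequence signal
    return fused, attn

rng = np.random.default_rng(0)
L, d_seq, d_struct, d_k = 6, 8, 5, 4
seq_repr = rng.normal(size=(L, d_seq))        # stand-in for language-model embeddings
struct_repr = rng.normal(size=(L, d_struct))  # stand-in for GNN node embeddings
Wq = rng.normal(size=(d_seq, d_k))
Wk = rng.normal(size=(d_struct, d_k))
Wv = rng.normal(size=(d_struct, d_seq))

fused, attn = cross_attention_fuse(seq_repr, struct_repr, Wq, Wk, Wv)
print(fused.shape, attn.shape)
```

Swapping the roles of the two streams (structure as queries, sequence as keys/values) only requires exchanging the first two arguments and resizing the projections accordingly.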

Experimental Protocol: Validating Epistasis Capture in a GNN-Transformer Hybrid

Objective: To experimentally test whether a proposed model captures pairwise and higher-order epistatic effects in protein fitness landscapes.

Materials: DMS (Deep Mutational Scanning) dataset for a target protein containing fitness measurements for single and double mutants.

Method:

- Data Partition: Split data into training (all single mutants + 70% of double mutants) and a held-out test set (the remaining 30% of double mutants). Every single mutation that occurs in a test-set double mutant must also be present as a single mutant in the training set.

- Baseline Model Training: Train a simple additive model (e.g., linear regression on one-hot encoded mutations) and a standard Transformer on the training set.

- Proposed Model Training: Train the GNN-Transformer hybrid model on the same training set.

- Inference & Analysis:

- Predict fitness for all double mutants in the test set.

- Calculate the Mean Squared Error (MSE) for each model on the double-mutant test set.

- Compute the epistatic contribution for each double mutant as:

ε_ij = y_ij - (y_i + y_j), where y is measured fitness, assumed here to be scored relative to wild type (so that the wild-type fitness is zero).

- Correlate the model's predicted epistatic contribution (using the same formula with model predictions) against the measured epistatic contribution using Pearson's r.
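The final two analysis steps can be sketched in plain Python. The mutation names and fitness values below are made-up toy numbers for illustration only, the scores are assumed to be on a scale where wild type is zero, and `epistasis` and `pearson_r` are hypothetical helper names.

```python
from math import sqrt

def epistasis(double_scores, single_scores):
    """epsilon_ij = y_ij - (y_i + y_j) for every double mutant (i, j)."""
    return {
        (a, b): y_ab - (single_scores[a] + single_scores[b])
        for (a, b), y_ab in double_scores.items()
    }

def pearson_r(xs, ys):
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sxx = sum((x - mx) ** 2 for x in xs)
    syy = sum((y - my) ** 2 for y in ys)
    return sxy / sqrt(sxx * syy)

# Toy values, not real measurements.
measured_single = {"A10G": -0.5, "L45F": -0.2, "K88R": 0.1}
measured_double = {("A10G", "L45F"): -1.2, ("A10G", "K88R"): -0.3, ("L45F", "K88R"): 0.4}
predicted_single = {"A10G": -0.4, "L45F": -0.3, "K88R": 0.0}
predicted_double = {("A10G", "L45F"): -1.0, ("A10G", "K88R"): -0.3, ("L45F", "K88R"): 0.1}

eps_measured = epistasis(measured_double, measured_single)
eps_predicted = epistasis(predicted_double, predicted_single)
pairs = sorted(eps_measured)
r = pearson_r([eps_measured[p] for p in pairs], [eps_predicted[p] for p in pairs])
print(eps_measured, r)
```

A purely additive model predicts ε = 0 for every pair, so its correlation with measured epistasis is expected to be near zero regardless of how well it fits single mutants.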

Table 1: Model Performance on Epistasis Prediction

| Model | Test MSE (Double Mutants) | Pearson's r (Epistasis Correlation) |

|---|---|---|

| Simple Additive Model | 1.42 ± 0.08 | 0.05 ± 0.03 |

| Standard Transformer | 0.95 ± 0.05 | 0.41 ± 0.04 |

| GNN-Transformer Hybrid | 0.61 ± 0.04 | 0.78 ± 0.02 |

Diagrams

Diagram 1: GNN-Transformer Hybrid Model Architecture

Diagram 2: Epistasis Validation Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for Epistasis-Aware Protein DL Research

| Item | Function in Research |

|---|---|

| Protein DMS Datasets (e.g., from ProteinGym, FireProtDB) | Gold-standard experimental data containing fitness scores for thousands of variants, required for training and benchmarking models on real epistatic landscapes. |

| Structure Prediction Tool (AlphaFold2, ESMFold) | Generates accurate 3D protein structures from sequence when experimental structures are unavailable, enabling structure-based GNN inputs for any variant. |

| Pretrained Protein LM (ESM-2, ProtT5) | Provides powerful, generalizable sequence embeddings that capture evolutionary and biochemical constraints, serving as a foundational input for Transformer streams. |

| Graph Neural Network Library (PyTorch Geometric, DGL) | Specialized frameworks that provide efficient, scalable implementations of GNN layers (GCN, GAT, GIN) essential for building structure-based models. |

| Differentiable Programming Framework (JAX/Flax, PyTorch Lightning) | Frameworks that enable rapid, flexible prototyping of hybrid model architectures and ensure differentiability for end-to-end gradient-based learning. |

| Epistasis Metrics Suite (Custom Python scripts) | Code to calculate key metrics like ε (epistasis), fraction of variance due to epistasis (Vepi/Vtotal), and higher-order interaction scores for model validation. |

Technical Support & Troubleshooting Center

FAQs & Troubleshooting Guides

Q1: After extracting the interaction network from my deep learning model, the graph is too dense and uninterpretable. What are the primary filtering strategies? A1: Use a combination of statistical and magnitude-based thresholds.

- Interaction Strength: Filter edges by the absolute value of the calculated interaction weight. Retain only the top 5-10% strongest interactions.

- Statistical Significance: Apply permutation testing (see Protocol P-102). Edges with a p-value > 0.01 should be pruned.

- Minimum Occurrence: Filter interactions that appear in less than 5% of model explanation samples (e.g., from SHAP or integrated gradients).
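The three filters can be combined in one pass. The sketch below assumes each edge already has an interaction weight, a permutation p-value, and an occurrence fraction stored in dictionaries keyed by residue pair; `filter_edges` and its parameter names are hypothetical, and the toy inputs are made up.

```python
def filter_edges(weights, pvalues, occurrence, top_frac=0.10, alpha=0.01, min_occ=0.05):
    """Keep edges that are strong, statistically significant, and recurrent."""
    ranked = sorted(weights, key=lambda e: abs(weights[e]), reverse=True)
    n_keep = max(1, round(len(ranked) * top_frac))
    strongest = ranked[:n_keep]
    return {
        e: weights[e]
        for e in strongest
        if pvalues[e] <= alpha and occurrence[e] >= min_occ
    }

# Toy network of 20 edges with increasing weights.
weights = {(i, i + 1): 0.1 * (i + 1) for i in range(20)}
pvalues = {e: 0.001 for e in weights}
pvalues[(18, 19)] = 0.2            # strong but not significant
occurrence = {e: 0.5 for e in weights}

kept = filter_edges(weights, pvalues, occurrence)
print(kept)
```

Applying the magnitude filter first keeps the subsequent checks cheap, but the order does not change which edges survive.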

Q2: My visualized epistatic network shows high interconnectivity but lacks any clear community structure or hubs. Does this imply a lack of strong epistasis in my system? A2: Not necessarily. This is a common issue with global, all-vs-all interaction maps.

- Troubleshooting Steps:

- Re-evaluate Filtering: The thresholds in Q1 may be too permissive. Increase stringency.

- Contextualize the Network: Map your extracted interactions onto a known protein structure or functional domains. Use this to create a constrained prior network and re-extract interactions only between residues in spatial proximity.

- Change Visualization Layout: Force-directed layouts (Fruchterman-Reingold) can obscure hubs. Try a circular layout with nodes ordered by sequence position to reveal linear trends.

Q3: When I compare epistatic networks extracted from two different black-box models (e.g., CNN vs. Transformer) trained on the same dataset, they show low similarity. Which one should I trust? A3: This discrepancy highlights the model-dependency of post-hoc interpretation.

- Diagnostic Protocol:

- Benchmark Against Ground Truth: Use synthetic data with known, designed epistatic couplings to evaluate which model's interpretation tool recovers them more accurately.

- Functional Validation: Select 3-5 top-ranking interactions unique to each model and test them via site-saturation combinatorial mutagenesis (see Protocol P-201). The network with higher experimental validation rate is more trustworthy.

- Consensus Approach: Create an ensemble network containing only interactions identified by both interpretation methods. This consensus is more robust but may miss model-specific insights.
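Network similarity and the consensus network can both be computed with set operations. This sketch assumes each network is an undirected edge list of residue-index pairs; the edge lists are invented for illustration, and the Jaccard index is one reasonable similarity measure among several.

```python
def canonical(edges):
    """Treat edges as undirected: (45, 12) and (12, 45) are the same edge."""
    return {tuple(sorted(e)) for e in edges}

def jaccard(a, b):
    a, b = canonical(a), canonical(b)
    union = a | b
    return len(a & b) / len(union) if union else 1.0

def consensus(a, b):
    return canonical(a) & canonical(b)

# Hypothetical edges extracted from two models trained on the same data.
cnn_edges = [(12, 45), (45, 88), (3, 150), (20, 21)]
transformer_edges = [(45, 12), (88, 45), (7, 99)]

print(jaccard(cnn_edges, transformer_edges))
print(sorted(consensus(cnn_edges, transformer_edges)))
```

Edges outside the consensus set are natural candidates for the functional validation step, since they are where the two models disagree.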

Q4: The computational cost for calculating all pairwise interactions via methods like Integrated Hessians is prohibitive for my protein library (N > 1000 variants). What are the efficient alternatives? A4: Move from exhaustive pairwise to targeted or sampling-based methods.

- Primary Solution: Use Monte Carlo sampling of interaction pairs based on gradient norms. Sample ~20% of possible pairs, focusing on residues with high feature importance scores.

- Alternative Workflow: Implement a two-stage approach: First, identify key residues via per-position attribution (e.g., SHAP). Second, compute interactions only between these top-K residues (K ~ 50).
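The two-stage workflow reduces the number of pairs dramatically. The sketch below assumes per-position importance scores are already available (the values here are arbitrary placeholders, not SHAP output) and that `candidate_pairs` is a hypothetical helper; the expensive interaction method would then be run only on the returned pairs.

```python
from itertools import combinations

def candidate_pairs(importance, k=50):
    """Stage 1: pick the top-k positions. Stage 2: enumerate pairs among them only."""
    top = sorted(importance, key=importance.get, reverse=True)[:k]
    return list(combinations(sorted(top), 2))

# Placeholder importance scores for a 300-residue protein.
importance = {pos: (pos * 37) % 101 for pos in range(300)}

pairs = candidate_pairs(importance, k=50)
all_pairs = 300 * 299 // 2
print(len(pairs), all_pairs)
```

With K = 50, the interaction computation covers 1,225 pairs instead of 44,850 for a 300-residue protein, at the cost of missing interactions between residues that have weak individual effects.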

Q5: How can I validate that my visualized network represents true biological epistasis and not just artifacts of the model or interpretation tool? A5: Direct experimental validation is essential. Follow this staged validation pyramid:

- In silico Control: Run interpretation on a model trained on randomized labels. After applying the same filtering thresholds, the network from your real model should retain significantly more edges than this null network.

- Deep Mutational Scanning (DMS): Correlate predicted interaction strengths with experimental double-mutant coupling effects from a high-throughput DMS study.

- Targeted Combinatorial Mutagenesis: Design and assay specific double/triple mutants suggested by key network hubs (Protocol P-201).

Experimental Protocols

Protocol P-102: Permutation Testing for Significant Epistatic Edges

Objective: To assign statistical significance (p-values) to extracted pairwise interaction weights, distinguishing true signal from noise.

Materials: Trained black-box model, held-out validation dataset, interpretation tool (e.g., Captum library).

Procedure:

- Compute the observed interaction matrix, I_obs, for all residue pairs (i,j) of interest on the validation set.

- For permutation round p = 1 to P (P = 1000):

a. Randomly shuffle the labels (fitness scores) of the validation set.

b. Retrain or fine-tune the model on the shuffled data for one epoch (to disrupt learned relationships).

c. Compute the interaction matrix I_perm_p from this perturbed model.

- For each edge (i,j), calculate the p-value:

p_ij = (1 + # of permutations where |I_perm_p[i,j]| >= |I_obs[i,j]|) / (1 + P)

- Apply False Discovery Rate (FDR) correction (Benjamini-Hochberg) across all tested edges. Edges with FDR-adjusted p-value < 0.05 are considered significant.
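The p-value formula and the Benjamini-Hochberg step of Protocol P-102 can be sketched with NumPy. Note the assumptions: interaction matrices are flattened to one value per edge, and random numbers stand in for the permuted matrices that would really come from the retrained models; `permutation_pvalues` and `benjamini_hochberg` are hypothetical helper names.

```python
import numpy as np

def permutation_pvalues(I_obs, I_perm):
    """I_obs: (n_edges,), I_perm: (P, n_edges). Implements (1 + #exceed) / (1 + P)."""
    P = I_perm.shape[0]
    exceed = (np.abs(I_perm) >= np.abs(I_obs)).sum(axis=0)
    return (1 + exceed) / (1 + P)

def benjamini_hochberg(p):
    """Return FDR-adjusted p-values in the original edge order."""
    p = np.asarray(p, dtype=float)
    m = p.size
    order = np.argsort(p)
    ranked = p[order] * m / np.arange(1, m + 1)
    # Enforce monotonicity, working down from the largest p-value.
    adjusted = np.minimum.accumulate(ranked[::-1])[::-1]
    out = np.empty(m)
    out[order] = np.minimum(adjusted, 1.0)
    return out

rng = np.random.default_rng(0)
I_perm = rng.normal(size=(1000, 5))            # stand-in null interaction weights
I_obs = np.array([8.0, 0.1, 7.0, 0.2, 0.05])   # two strong edges, three weak ones

p_values = permutation_pvalues(I_obs, I_perm)
q_values = benjamini_hochberg(p_values)
significant = q_values < 0.05
print(p_values, q_values, significant)
```

With P = 1000 the smallest attainable p-value is 1/1001, so the number of permutations bounds how many edges can survive FDR correction when many edges are tested.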

Protocol P-201: Combinatorial Mutagenesis for Epistatic Validation

Objective: Experimentally test predicted epistatic interactions.

Materials: Template DNA, KLD enzyme mix, primers for site-directed mutagenesis, expression system, functional assay.

Procedure:

- Design: Select 3-5 high-weight edges from the visualized network. For each edge (e.g., residues A100 and B205), design constructs: single mutants A100X, B205Y, and the double mutant A100X/B205Y.

- Library Construction: Use sequential or parallel site-directed mutagenesis (e.g., NEB Q5 Site-Directed Mutagenesis Kit) to generate all variants.

- Expression & Purification: Express variants in a suitable host (E. coli, HEK293). Purify using a standardized protocol (e.g., His-tag affinity chromatography).

- Functional Assay: Measure activity (e.g., enzymatic rate, binding affinity) for all variants in triplicate.

- Analysis: Calculate the epistasis coefficient (ε):

ε = Fitness(double_mutant) - Fitness(single_mutant_A) - Fitness(single_mutant_B) + Fitness(wild_type)

A significant non-zero ε validates the computational prediction.
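The analysis step can be sketched for triplicate measurements as follows. The activity values are invented for illustration, the fitness scale is assumed to be one on which effects add (for example log-transformed activity), and the standard error uses simple propagation across four independent groups; the ratio ε/SE is only a rough indicator, and a proper statistical test should be chosen for the actual assay.

```python
from math import sqrt
from statistics import mean, stdev

def epistasis_with_error(wt, mut_a, mut_b, double):
    """epsilon = F(double) - F(A) - F(B) + F(wt), with a propagated standard error."""
    eps = mean(double) - mean(mut_a) - mean(mut_b) + mean(wt)
    se = sqrt(sum(stdev(g) ** 2 / len(g) for g in (wt, mut_a, mut_b, double)))
    return eps, se

# Made-up triplicate fitness values, not real data.
wt = [1.00, 1.02, 0.98]
a100x = [0.60, 0.62, 0.58]
b205y = [0.70, 0.69, 0.71]
double = [0.55, 0.57, 0.53]

eps, se = epistasis_with_error(wt, a100x, b205y, double)
print(round(eps, 3), round(se, 3), round(eps / se, 1))
```

In this toy case the double mutant is fitter than the additive expectation, so ε is positive; the same calculation with a negative result would indicate negative epistasis.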

Table 1: Comparison of Interpretation Tool Performance on Synthetic Epistasis Dataset