Navigating the Fitness Landscape: A Data-Driven Guide to ML Model Selection for Drug Discovery

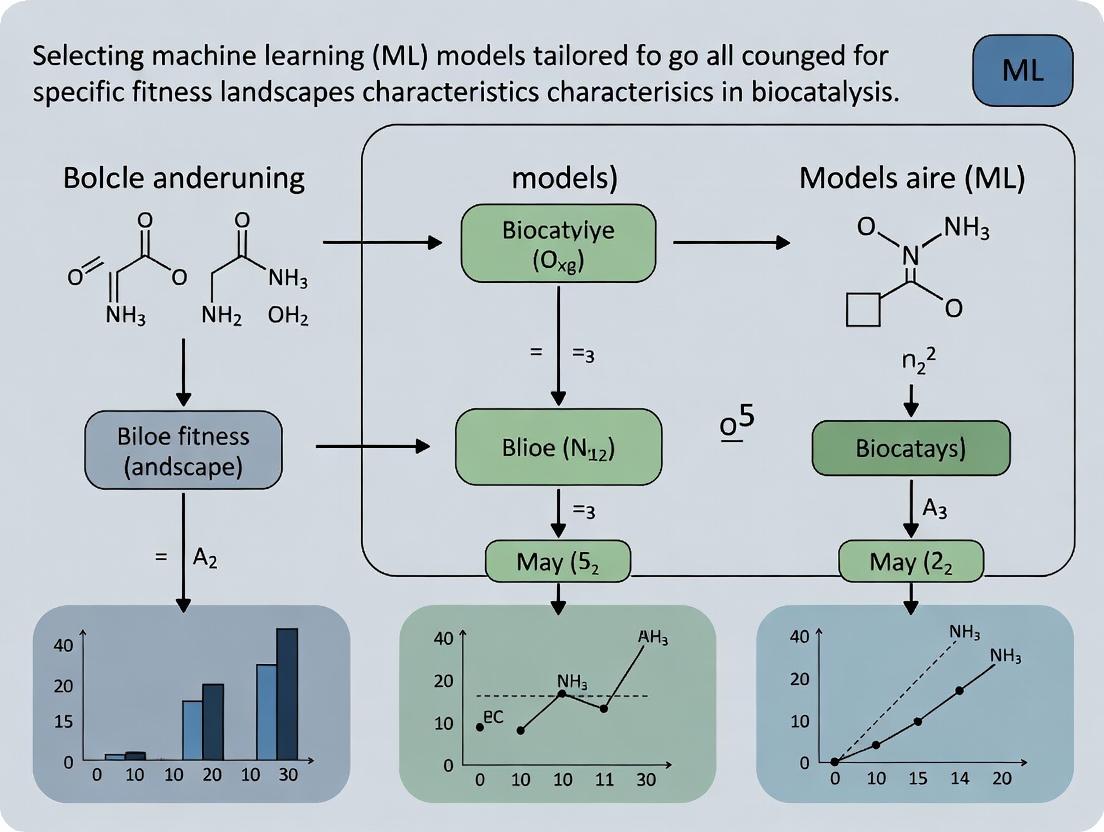

This article provides a comprehensive framework for researchers and drug development professionals to select optimal machine learning models based on the specific characteristics of biological fitness landscapes.


Abstract

This article provides a comprehensive framework for researchers and drug development professionals to select optimal machine learning models based on the specific characteristics of biological fitness landscapes. We explore foundational concepts of fitness landscapes in biomedicine, detail methodological approaches for mapping landscape features to model architectures, address common pitfalls and optimization strategies, and establish validation protocols for comparative analysis. The guide synthesizes current best practices to enhance efficiency and success rates in computational drug discovery and protein engineering.

Decoding Fitness Landscapes: Core Concepts and ML Readiness for Biomedical Data

Defining Fitness Landscapes in Drug Discovery and Protein Engineering

Technical Support Center

Frequently Asked Questions (FAQs) & Troubleshooting

Q1: When designing an ML model for exploring a drug target fitness landscape, my model fails to predict the activity of unseen structural variants. What could be wrong? A: This is often a problem of inadequate experimental sampling for model training. Fitness landscapes are high-dimensional and rugged; sparse data leads to poor generalization.

- Troubleshooting Steps:

- Analyze Training Data Distribution: Ensure your training set covers a diverse range of sequences or chemical structures, not just clustered around a single peak. Use PCA or t-SNE to visualize coverage.

- Check for Overfitting: If model performance is high on training data but poor on validation, simplify the model architecture or increase regularization.

- Iterative Design: Implement an active learning loop. Use the model's uncertainty estimates (e.g., from Gaussian processes or ensemble variance) to select the most informative variants for the next round of experimental testing, thereby improving landscape mapping.
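The selection step of such an active learning loop can be sketched as follows. This is a minimal illustration, not a prescribed implementation: it assumes a Gaussian process with a Hamming-distance kernel, and the names `hamming_kernel`, `gp_posterior`, `select_most_uncertain`, the `gamma` and `noise` values, and the toy sequences are all hypothetical.

```python
import numpy as np

def hamming_kernel(seqs_a, seqs_b, gamma=0.5):
    """k(x, y) = exp(-gamma * Hamming distance) for equal-length sequences."""
    a = np.array([list(s) for s in seqs_a])
    b = np.array([list(s) for s in seqs_b])
    dist = (a[:, None, :] != b[None, :, :]).sum(axis=2)
    return np.exp(-gamma * dist)

def gp_posterior(train_seqs, y, pool_seqs, noise=0.05, gamma=0.5):
    """Posterior mean and variance of a GP regression at each pool sequence."""
    K = hamming_kernel(train_seqs, train_seqs, gamma) + noise * np.eye(len(train_seqs))
    K_star = hamming_kernel(pool_seqs, train_seqs, gamma)
    y_mean = y.mean()
    mean = K_star @ np.linalg.solve(K, y - y_mean) + y_mean
    # The prior variance k(x, x) equals 1 for this kernel.
    var = 1.0 - np.einsum("ij,ji->i", K_star, np.linalg.solve(K, K_star.T))
    return mean, var

def select_most_uncertain(train_seqs, y, pool_seqs, batch=2):
    """Return the `batch` pool sequences with the largest posterior variance."""
    _, var = gp_posterior(train_seqs, y, pool_seqs)
    order = np.argsort(var)[::-1]
    return [pool_seqs[i] for i in order[:batch]], var

# Toy data (hypothetical): measured variants clustered around one sequence.
train_seqs = ["AAAA", "AAAC", "AACA", "ACAA", "CAAA"]
train_fitness = np.array([1.0, 1.2, 0.9, 1.1, 0.7])
pool_seqs = ["AAAD", "DDDD", "AACC"]
chosen, variance = select_most_uncertain(train_seqs, train_fitness, pool_seqs)
print(chosen)
```

Pure uncertainty sampling favors variants far from the training cluster; in practice an acquisition function that also weighs predicted fitness is often used instead.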

Q2: During directed evolution for protein engineering, my fitness gains plateau despite multiple rounds of mutagenesis. How can ML help escape this local optimum? A: Plateaus indicate being trapped in a local peak on the fitness landscape. ML models can predict "bridging" mutations that are neutral or slightly deleterious but enable access to higher fitness regions.

- Troubleshooting Protocol:

- Landscape Roughness Analysis: Fit a simple model (e.g., Epistatic Network) to your variant data to infer sign epistasis (where a mutation's effect changes sign, from beneficial to deleterious or vice versa, depending on the genetic background).

- In Silico Saturation Mutagenesis: Use a trained ML model (e.g., a variational autoencoder or transformer) to predict fitness for all single and double mutants around your current best sequence.

- Identify Paths: Search the in-silico landscape for paths involving a temporary fitness dip followed by a significant gain. Propose these "valley-crossing" sequences for experimental testing.
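The path search in the last two steps can be sketched as below. This is a hedged illustration: `predict` stands for whatever trained model is available, `toy_predict` is an invented scorer used only to make the example run, and the search is restricted to double mutants whose single-mutant intermediates are both no better than the starting sequence.

```python
from itertools import combinations

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"

def mutate(seq, pos, residue):
    """Return `seq` with `residue` substituted at `pos`."""
    return seq[:pos] + residue + seq[pos + 1:]

def valley_crossings(seq, predict, min_gain=0.0, alphabet=AMINO_ACIDS):
    """Double mutants that beat `seq` although neither single intermediate does."""
    base = predict(seq)
    hits = []
    for p, q in combinations(range(len(seq)), 2):
        for a in alphabet:
            if a == seq[p]:
                continue
            for b in alphabet:
                if b == seq[q]:
                    continue
                f_p = predict(mutate(seq, p, a))          # first intermediate
                f_q = predict(mutate(seq, q, b))          # second intermediate
                double = mutate(mutate(seq, p, a), q, b)
                f_pq = predict(double)
                if f_pq - base > min_gain and max(f_p, f_q) <= base:
                    hits.append((double, f_pq - base, f_p - base, f_q - base))
    return sorted(hits, key=lambda h: -h[1])

def toy_predict(seq, wild_type="ACD"):
    """Hypothetical scorer: each mutation costs 0.2, but K1 + E3 together add 1.0."""
    penalty = 0.2 * sum(x != y for x, y in zip(seq, wild_type))
    bonus = 1.0 if (seq[0] == "K" and seq[2] == "E") else 0.0
    return 1.0 - penalty + bonus

hits = valley_crossings("ACD", toy_predict)
for double, gain, dip_first, dip_second in hits:
    print(double, round(gain, 2), round(dip_first, 2), round(dip_second, 2))
```

Exhaustive enumeration of double mutants grows quadratically with sequence length, so for longer proteins the candidate positions would normally be restricted first.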

Q3: My predictive model for compound efficacy performs well in vitro but does not correlate with in vivo outcomes. Which landscape characteristics am I missing? A: The in vitro assay landscape is a poor proxy for the more complex in vivo fitness landscape, which includes ADMET (Absorption, Distribution, Metabolism, Excretion, Toxicity) properties.

- Solution Guide:

- Multi-Objective Optimization: Frame the problem as navigating a Pareto front where efficacy, solubility, metabolic stability, and minimal toxicity are competing objectives. Use ML models such as Random Forest or Gaussian processes to predict each property, and a search strategy such as multi-objective Bayesian optimization to propose candidates along the front.

- Integrated Data: Train models on high-throughput in vivo data (e.g., phenotypic screening, PK/PD data) when available. Use transfer learning to fine-tune your in vitro model with smaller sets of in vivo data.

- Key Recommendation: Always include key ADMET predictive endpoints as dimensions in your compound fitness landscape model from the outset.
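As a small illustration of the Pareto-front framing, the sketch below filters a set of compounds down to the non-dominated ones. The property values are made up, and every column is assumed to be oriented so that larger is better (toxicity is negated).

```python
import numpy as np

def pareto_front(scores):
    """Boolean mask of non-dominated rows; all columns are 'larger is better'."""
    scores = np.asarray(scores, dtype=float)
    keep = np.ones(len(scores), dtype=bool)
    for i, row in enumerate(scores):
        # Another compound dominates `row` if it is at least as good on every
        # objective and strictly better on at least one.
        dominates = np.all(scores >= row, axis=1) & np.any(scores > row, axis=1)
        keep[i] = not dominates.any()
    return keep

# Columns: efficacy, solubility, metabolic stability, -toxicity (hypothetical values).
compounds = ["A", "B", "C", "D"]
predicted = [
    [0.9, 0.2, 0.5, -0.3],
    [0.7, 0.6, 0.6, -0.1],
    [0.6, 0.5, 0.5, -0.2],   # worse than B on every objective
    [0.4, 0.9, 0.4, -0.4],
]
mask = pareto_front(predicted)
print([c for c, keep in zip(compounds, mask) if keep])
```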

Key Experimental Protocols

Protocol 1: Generating a Preliminary Fitness Landscape via Deep Mutational Scanning (DMS)

- Objective: Empirically map the local fitness landscape of a protein or drug target region.

- Methodology:

- Library Construction: Create a comprehensive mutant library covering all single amino acid variants (or nucleotide variants) of the target region using oligonucleotide-directed mutagenesis.

- Functional Selection: Subject the library to a functional screen or selection (e.g., binding to a labeled ligand, enzymatic activity, cell survival under drug pressure).

- Sequencing & Quantification: Use high-throughput sequencing (NGS) to count the frequency of each variant before and after selection.

- Fitness Calculation: Compute enrichment scores. Fitness F = log₂(Count_post / Count_pre), where Count_post and Count_pre are a variant's read counts after and before selection. Normalize to wild-type.

- Data Structuring: Format data for ML input:

[Variant_Sequence], [Fitness_Score], [Additional_Features].
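The fitness calculation and data structuring steps might look like the following sketch. The pseudocount is an assumption added here to avoid taking the logarithm of zero for variants that drop out after selection; the variant names and counts are invented.

```python
import math

def dms_fitness(pre_counts, post_counts, wild_type, pseudocount=0.5):
    """log2 enrichment of each variant, expressed relative to the wild type."""
    def log_ratio(variant):
        post = post_counts.get(variant, 0) + pseudocount
        pre = pre_counts[variant] + pseudocount
        return math.log2(post / pre)

    wt_ratio = log_ratio(wild_type)
    # Subtracting the wild-type ratio also cancels differences in sequencing depth.
    return {variant: log_ratio(variant) - wt_ratio for variant in pre_counts}

pre = {"WT": 1000, "A2V": 500, "G5D": 800}
post = {"WT": 2000, "A2V": 500, "G5D": 3200}
fitness = dms_fitness(pre, post, wild_type="WT")

# One row per variant: [Variant_Sequence], [Fitness_Score]
rows = [(variant, round(score, 3)) for variant, score in fitness.items()]
print(rows)
```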

Protocol 2: Benchmarking ML Model Performance on Landscapes of Known Ruggedness

- Objective: Select the best ML model for a given landscape's epistatic complexity.

- Methodology:

- Dataset Curation: Use published DMS datasets with varying levels of experimentally quantified epistasis (e.g., GB1, PABP, TEM-1 β-lactamase).

- Model Training: Split data (80/10/10 train/validation/test). Train diverse models: Linear Regression (baseline), Random Forest, Gaussian Process (GP), and a deep neural network (DNN).

- Performance Metric: Evaluate using Mean Squared Error (MSE) or Pearson correlation on the held-out test set. Critically, assess performance on double mutants not seen during training to test epistasis prediction.

- Analysis: Correlate model performance with landscape metrics like the fraction of significant epistatic interactions.

Table 1: Performance of ML Models on Benchmark Protein Fitness Landscapes

| Model Type | TEM-1 β-lactamase (Highly Epistatic) | GB1 (Moderate Epistasis) | PABP (Additive-Dominant) |

|---|---|---|---|

| Linear Regression | 0.15 | 0.45 | 0.82 |

| Random Forest | 0.55 | 0.78 | 0.85 |

| Gaussian Process | 0.68 | 0.81 | 0.83 |

| Deep Neural Network | 0.62 | 0.83 | 0.86 |

Values represent Pearson correlation (r) between predicted and experimental fitness on held-out double mutant variants. Data synthesized from recent benchmark studies (2020-2023).

Table 2: Key Metrics for Characterizing Fitness Landscapes

| Metric | Definition | Measurement Method | Implication for ML Model Choice |

|---|---|---|---|

| Ruggedness | Number and severity of local peaks/valleys. | Autocorrelation of fitness with sequence distance. | High ruggedness requires models with strong epistasis capture (e.g., GP, GNN). |

| Epistasis Prevalence | Fraction of mutation pairs with non-additive effects. | Variance decomposition from DMS data. | High prevalence favors non-linear models over additive ones. |

| Smoothness | Gradualness of fitness changes across sequence space. | Average gradient between neighboring variants. | Smooth landscapes can be modeled with simpler models (e.g., Ridge Regression). |

| Neutrality | Size and connectivity of regions with similar, sub-optimal fitness. | Neutral network analysis from DMS. | Important for evolutionary navigation; models should predict neutral bridges. |

Visualizations

Title: Deep Mutational Scanning Experimental Workflow

Title: ML Model Selection Guide for Fitness Landscapes

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Fitness Landscape Research |

|---|---|

| NGS Library Prep Kit (e.g., Illumina Nextera) | Prepares mutant library DNA for high-throughput sequencing to quantify variant frequencies pre- and post-selection. |

| Phusion or Q5 High-Fidelity DNA Polymerase | Ensures accurate amplification of mutant libraries with minimal PCR-induced errors. |

| Cell-free Transcription/Translation System (e.g., PURExpress) | Enables rapid, high-throughput in vitro expression and functional screening of protein variant libraries. |

| Magnetic Beads with Immobilized Ligand/Target | Used for efficient affinity-based selection of binding-competent variants from large libraries. |

| Fluorescence-Activated Cell Sorter (FACS) | Enables phenotypic screening and sorting of cell-based libraries based on fluorescent reporters linked to fitness. |

| Bayesian Optimization Software (e.g., BoTorch, Sherpa) | ML framework for intelligently selecting the next variants to test in an adaptive, iterative design cycle. |

| Epistasis Analysis Package (e.g., epistasis in Python) | Quantifies non-additive genetic interactions from DMS data to characterize landscape ruggedness. |

Technical Support & Troubleshooting Center

Welcome to the technical support center for research on Machine Learning (ML) model selection in fitness landscape analysis. This guide addresses common experimental and computational issues.

Troubleshooting Guides & FAQs

Q1: My ML model (e.g., Random Forest) fails to predict fitness from sequence data on a rugged landscape. Performance is near random. What should I check? A1: This typically indicates a feature representation mismatch.

- Primary Check: Epistasis Encoding. Ruggedness is driven by high-order epistasis. Ensure your feature vector captures interactions, not just single mutations. Augment one-hot encoding with explicit epistatic interaction terms (e.g., polynomial features up to order k, or a graph-based adjacency matrix).

- Protocol: Use the following protocol to test for insufficient feature complexity:

- Generate a synthetic rugged landscape using the NK model (N=20, K=5-10).

- Train two models: Model A (one-hot encoded residues), Model B (includes all pairwise interaction terms).

- Compare R² scores on a held-out test set. A significant jump in Model B's score confirms the issue.

- Solution: Implement an embedding layer or switch to a model inherently suited for interactions, like a Graph Neural Network (GNN).
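The feature-complexity test above can be tried on a scaled-down example. The sketch below is an assumption-laden miniature: it uses N=10 and K=1 (not N=20, K=5-10) so that every genotype can be enumerated, a binary alphabet in which a single 0/1 column per site plays the role of one-hot encoding, and ordinary least squares in place of a tuned model. With K=1 the pairwise model can represent the landscape exactly; larger K would also need higher-order terms.

```python
import itertools
import numpy as np

def nk_landscape(n, k, rng):
    """Fitness of every binary genotype under an NK model with cyclic neighbours."""
    tables = rng.random((n, 2 ** (k + 1)))        # one lookup table per site
    genotypes = np.array(list(itertools.product([0, 1], repeat=n)))
    fitness = np.zeros(len(genotypes))
    for i in range(n):
        idx = np.zeros(len(genotypes), dtype=int)
        for j in range(k + 1):                    # site i and its k right-hand neighbours
            idx = idx * 2 + genotypes[:, (i + j) % n]
        fitness += tables[i, idx]
    return genotypes.astype(float), fitness / n

def with_pairwise(X):
    """Append all pairwise products of columns to the single-site features."""
    pairs = [X[:, i] * X[:, j] for i, j in itertools.combinations(range(X.shape[1]), 2)]
    return np.column_stack([X] + pairs)

def r2_holdout(X, y, train, test):
    """Fit least squares on `train` and return R^2 on `test`."""
    A_train = np.column_stack([np.ones(len(train)), X[train]])
    A_test = np.column_stack([np.ones(len(test)), X[test]])
    w, *_ = np.linalg.lstsq(A_train, y[train], rcond=None)
    resid = y[test] - A_test @ w
    return 1.0 - resid @ resid / np.sum((y[test] - y[test].mean()) ** 2)

rng = np.random.default_rng(0)
genotypes, fitness = nk_landscape(n=10, k=1, rng=rng)
order = rng.permutation(len(fitness))
train, test = order[:700], order[700:]
r2_additive = r2_holdout(genotypes, fitness, train, test)                  # Model A
r2_pairwise = r2_holdout(with_pairwise(genotypes), fitness, train, test)   # Model B
print(round(r2_additive, 3), round(r2_pairwise, 3))
```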

Q2: When analyzing landscape smoothness via autocorrelation, the correlation length is inconsistently estimated across different random walk samples. A2: Inconsistency points to insufficient sampling or walk length.

- Primary Check: Random Walk Parameters. The standard protocol requires walk lengths significantly longer than the expected correlation length.

- Protocol (Standardized Autocorrelation Measurement):

- Perform m independent adaptive random walks (to sample neutral and beneficial mutations) of length L each. L should be > 10x the suspected correlation length.

- For each walk, compute the fitness autocorrelation function ρ(d) for distance d = 1,2,..., L/2.

- Fit ρ(d) = exp(-d/λ) to estimate correlation length λ for each walk.

- Report the median and IQR of the m λ values. High IQR indicates need for longer L or more walks m.

- Solution: Increase walk length L to 10,000 steps and perform at least m=50 walks. Use bootstrapping to calculate confidence intervals.
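A minimal sketch of the autocorrelation protocol follows. Several choices here are assumptions rather than part of the protocol: the walks are synthetic first-order autoregressive series with a known correlation length of 5 (standing in for real fitness trajectories), the fit is a least-squares line through the origin on ln ρ(d), and only lags before ρ(d) first falls below a `floor` of 0.1 are used, instead of all lags up to L/2, to keep noisy tail estimates out of the fit.

```python
import numpy as np

def autocorrelation(series, max_lag):
    """Sample autocorrelation rho(d) for d = 1..max_lag."""
    x = np.asarray(series, dtype=float)
    x = x - x.mean()
    var = x @ x / len(x)
    return np.array([(x[:-d] @ x[d:]) / (len(x) - d) / var
                     for d in range(1, max_lag + 1)])

def correlation_length(series, max_lag=50, floor=0.1):
    """Estimate lambda from rho(d) = exp(-d / lambda)."""
    rho = autocorrelation(series, max_lag)
    below = np.nonzero(rho < floor)[0]
    stop = int(below[0]) if below.size else max_lag
    if stop == 0:
        return 0.0                       # effectively uncorrelated
    lags = np.arange(1, stop + 1)
    slope = (lags @ np.log(rho[:stop])) / (lags @ lags)
    return -1.0 / slope

def synthetic_walk(length, lam, rng):
    """AR(1) series whose autocorrelation is exp(-d / lam); a stand-in for a real walk."""
    phi = np.exp(-1.0 / lam)
    noise = rng.normal(size=length) * np.sqrt(1.0 - phi ** 2)
    f = np.empty(length)
    f[0] = rng.normal()
    for t in range(1, length):
        f[t] = phi * f[t - 1] + noise[t]
    return f

rng = np.random.default_rng(0)
walks = [synthetic_walk(10_000, lam=5.0, rng=rng) for _ in range(20)]
lams = np.array([correlation_length(w) for w in walks])
median_lam = float(np.median(lams))
q75, q25 = np.percentile(lams, [75, 25])
iqr_lam = float(q75 - q25)
print(round(median_lam, 2), round(iqr_lam, 2))
```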

Q3: My neutrality metric (e.g., proportion of neutral neighbors) shows high variance between landscapes expected to be similarly neutral. A3: Variance often stems from undefined mutational step size or fitness threshold (ε).

- Primary Check: Neutrality Threshold Definition. Neutrality is sensitive to the fitness difference threshold (ε) defining "neutral."

- Protocol (Robust Neutrality Profile):

- Define a biologically or experimentally relevant baseline fitness standard deviation (σ).

- Calculate the Neutral Neighborhood Ratio (NNR) across a range of ε values (e.g., ε = 0.01σ, 0.05σ, 0.1σ, 0.5σ).

- Plot NNR vs. ε (a neutrality profile). Compare landscapes across a standardized ε range rather than a single value.

- Solution: Report neutrality as a curve or area-under-curve metric. Use ε = 0.05σ as a common benchmark for strict neutrality in publications.
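The neutrality profile can be computed along these lines. The landscape here is a toy: binary genotypes of length 6 with random fitness rounded to one decimal so that some neighbours are exactly neutral, and `sigma=0.5` is an arbitrary stand-in for an experimentally determined standard deviation.

```python
import itertools
import random

def neutral_neighborhood_ratio(landscape, eps):
    """Mean fraction of single-mutant neighbours with |delta F| < eps."""
    ratios = []
    for genotype, f in landscape.items():
        neighbours = (genotype[:i] + (1 - genotype[i],) + genotype[i + 1:]
                      for i in range(len(genotype)))
        deltas = [abs(landscape[n] - f) for n in neighbours if n in landscape]
        if deltas:
            ratios.append(sum(d < eps for d in deltas) / len(deltas))
    return sum(ratios) / len(ratios)

def neutrality_profile(landscape, sigma, multipliers=(0.01, 0.05, 0.1, 0.5)):
    """NNR evaluated at eps = multiplier * sigma for each multiplier."""
    return {m: neutral_neighborhood_ratio(landscape, m * sigma) for m in multipliers}

rng = random.Random(0)
toy = {g: round(rng.gauss(1.0, 0.2), 1) for g in itertools.product((0, 1), repeat=6)}
profile = neutrality_profile(toy, sigma=0.5)
for multiplier, nnr in profile.items():
    print(f"eps = {multiplier} sigma -> NNR = {nnr:.3f}")
```

Because the threshold only widens as ε grows, the profile is non-decreasing, which makes curves from different landscapes directly comparable over the same ε range.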

Q4: Epistasis calculation (e.g., using Weighted Interaction Coefficients) becomes computationally intractable for sequences longer than 15 residues. A4: Exhaustive computation of all interaction terms scales poorly.

- Primary Check: Sampling Strategy. Move from exhaustive enumeration to sparse sampling.

- Protocol (Sparse Epistasis Detection via ML):

- Use a random sample of genotype-fitness pairs (e.g., 50,000 data points).

- Train a regularized linear model (Lasso or Elastic Net) with all possible interaction terms up to a desired order.

- The regularization will force coefficients for negligible interactions to zero.

- Extract the non-zero coefficients as the significant epistatic interactions.

- Solution: Adopt this ML-based screening. For deeper analysis, focus computational resources only on the significant interaction subnetworks identified.
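The sparse screening idea can be illustrated with a small self-contained Lasso. This is a sketch under stated assumptions: the solver is a plain proximal-gradient (ISTA) loop written in NumPy rather than a library implementation, genotypes are binary and coded as -1/+1, only pairwise terms are included, and the planted interaction between sites 2 and 5 is synthetic.

```python
import itertools
import numpy as np

def interaction_design(genotypes):
    """Main effects followed by all pairwise products, with -1/+1 coding."""
    s = 2.0 * genotypes - 1.0
    pairs = list(itertools.combinations(range(s.shape[1]), 2))
    X = np.column_stack([s] + [s[:, i] * s[:, j] for i, j in pairs])
    return X, pairs

def lasso_ista(X, y, alpha=0.05, n_iter=500):
    """Minimise (1/2n)||y - Xw||^2 + alpha * ||w||_1 by proximal gradient descent."""
    n, p = X.shape
    step = n / np.linalg.norm(X, 2) ** 2          # 1 / Lipschitz constant
    w = np.zeros(p)
    for _ in range(n_iter):
        z = w - step * (X.T @ (X @ w - y) / n)
        w = np.sign(z) * np.maximum(np.abs(z) - step * alpha, 0.0)  # soft threshold
    return w

rng = np.random.default_rng(1)
n_sites = 10
genotypes = rng.integers(0, 2, size=(400, n_sites))
X, pairs = interaction_design(genotypes)
s = 2.0 * genotypes - 1.0
# Synthetic fitness: two additive effects plus one planted pairwise interaction.
y = 1.0 * s[:, 0] + 0.5 * s[:, 1] + 0.8 * s[:, 2] * s[:, 5] + 0.05 * rng.normal(size=400)
w = lasso_ista(X, y - y.mean())

detected = {pairs[k]: float(w[n_sites + k])
            for k in range(len(pairs)) if w[n_sites + k] != 0.0}
print(detected)
```

Lasso shrinks the surviving coefficients toward zero, so refitting an unpenalized model on the selected terms is a common follow-up when effect sizes matter.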

Table 1: Recommended ML Models for Landscape Topographic Features

| Landscape Feature | Optimal ML Model Class | Key Hyperparameter Tuning Focus | Expected R² Range (Synthetic) | Computational Cost |

|---|---|---|---|---|

| Rugged (High Epistasis) | Graph Neural Network, Transformer | Attention heads, hidden layers | 0.6 - 0.85 | Very High |

| Smooth | Gaussian Process, Ridge Regression | Kernel length-scale, regularization α | 0.85 - 0.99 | Medium |

| Neutral | Convolutional Neural Network, Random Forest | Filter size, tree depth | 0.4 - 0.7 (on fitness) | Medium-High |

| Moderate Epistasis | Gradient Boosting (XGBoost), Bayesian Neural Net | Learning rate, number of estimators | 0.7 - 0.9 | Medium |

Table 2: Standard Experimental Protocol Parameters

| Assay | Recommended Sample Size | Replicates | Positive Control | Key Metric Calculation |

|---|---|---|---|---|

| Deep Mutational Scanning | Library coverage > 100x | 3 biological | Wild-type sequence | Fitness = log₂(Post-selection freq / Pre-selection freq) |

| Autocorrelation (λ) | Walks (m) ≥ 50 | Not applicable | Random landscape (λ ≈ 0) | λ = -1 / slope of ln(ρ(d)) vs. d |

| Neutrality (NNR) | Neighbors sampled ≥ 1000 per genotype | 3 technical | Housekeeping gene variant | NNR = (Neutral mutants) / (Total mutants) |

| Epistasis (εᵢⱼ) | All double mutants | 3 biological | Additive expectation | εᵢⱼ = Fᵢⱼ - Fᵢ - Fⱼ + Fwt |

Experimental Protocols

Protocol 1: Mapping a Fitness Landscape via DMS and ML Model Fitting

- Library Construction: Use site-saturation mutagenesis or oligonucleotide pool synthesis to create variant library.

- Selection/Assay: Subject library to functional assay (growth, binding, activity). Collect pre- and post-selection samples.

- Sequencing & Fitness Calculation: Perform deep sequencing. Calculate enrichment and fitness scores per variant using `dms_tools2` or `Enrich2` pipelines.

- Feature Engineering: Encode sequences using one-hot, physicochemical, or learned embeddings.

- Model Training & Selection: Split data 80/20. Train candidate ML models (see Table 1). Select model with best cross-validated mean absolute error (MAE).

- Landscape Inference: Use the trained model to predict fitness for all possible variants or to generate topographic metrics.

Protocol 2: Quantifying Ruggedness and Neutrality from Empirical Data

- Data Preparation: Start with a list of genotypes and measured fitness values (F).

- Neutral Network Analysis:

- Define neutral threshold ε (e.g., 5% of the standard deviation of wild-type fitness, ε = 0.05σ).

- For each genotype, compute the proportion of single-mutant neighbors where |ΔF| < ε. This is its local NNR.

- The global NNR is the average local NNR across all sampled genotypes.

- Autocorrelation & Ruggedness:

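- Perform an adaptive random walk on the empirical data: from a start point, propose a random single-mutant neighbor at each step and accept the move only if F_neighbor > F_current - δ, where δ is a small tolerance that lets the walk cross neutral or slightly deleterious steps.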

- Record the fitness trajectory of the walk.

- Compute the autocorrelation function ρ(d) of the fitness trajectory.

- Fit an exponential decay to estimate correlation length λ. Short λ indicates high ruggedness.

Diagrams

Title: ML Workflow for Rugged Landscape Analysis

Title: Calculation of Pairwise Epistasis Coefficient

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Fitness Landscape Research |

|---|---|

| Oligo Pool Library (Array-Synthesized) | Provides a defined, comprehensive variant library for DMS, enabling genotype-fitness mapping. |

| Next-Generation Sequencing (NGS) Kit | Essential for deep sequencing pre- and post-selection samples to calculate variant frequencies and fitness. |

| DMS Analysis Software (e.g., Enrich2) | Specialized pipeline for robust statistical estimation of fitness scores from NGS count data. |

| ML Framework (e.g., PyTorch, TensorFlow) | Enables building, training, and validating complex models (GNNs, Transformers) for landscape prediction. |

| Landscape Simulation Tool (e.g., NK Model) | Generates synthetic landscapes with tunable ruggedness/neutrality for method benchmarking and validation. |

| High-Performance Computing (HPC) Cluster | Provides necessary computational power for large-scale epistasis calculations and ML model training. |

Troubleshooting Guides & FAQs

Q1: My sequence-function map data from a deep mutational scanning (DMS) experiment shows poor correlation between biological replicates. What could be the cause? A: Poor inter-replicate correlation often stems from insufficient sequencing depth or bottlenecking during library transformation. Ensure your average per-variant sequencing depth is >200x across all replicates. For transformation, use electrocompetent cells and multiple, large-scale transformations to maintain library diversity. Normalize read counts per variant using DESeq2's median-of-ratios method before calculating functional scores.

Q2: In a CRISPR-based high-throughput screen, I'm observing high false-positive rates in hit calling. How can I mitigate this? A: High false positives frequently result from poor sgRNA efficiency or off-target effects. Implement the following: 1) Use the latest, optimized sgRNA design rules (e.g., Doench et al., 2016 rules). 2) Employ a minimum of 4-6 sgRNAs per gene. 3) Use a negative control sgRNA set targeting safe-harbor or non-essential genomic regions. 4) Analyze data with robust statistical pipelines like MAGeCK or BAGEL2, which model guide-level variance and control false discovery rates (FDR).

Q3: When integrating multi-omics data (e.g., transcriptomics and proteomics), the signals are discordant. Is this expected, and how should I proceed for ML feature engineering? A: Yes, moderate discordance is common due to post-transcriptional regulation. For ML model selection targeting fitness landscape prediction, handle this by: 1) Creating separate feature sets for each omics layer. 2) Engineering integrated features only for genes/proteins where the correlation between layers exceeds a validated threshold (e.g., Pearson r > 0.5). 3) Use dimensionality reduction (PCA) on each layer separately before concatenation for model input.

Q4: My fitness scores from a growth-based screen show a ceiling effect (compression at the high-fitness end). How does this impact ML model training? A: Ceiling effects distort the true fitness landscape, causing ML models (especially regression-based) to underpredict high-fitness variants. Preprocess data by applying a Winsorization transformation (cap extreme high values at the 95th percentile) or use rank-based normalization. For model selection, consider robust rank-based models like Random Forest or Gradient Boosting over linear regression.
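The two preprocessing options mentioned in Q4 can be sketched as follows. The scores are invented, ties are given their average rank, and ranks are scaled by n + 1 so that the output stays strictly inside (0, 1); both are choices made for this example.

```python
import numpy as np

def winsorize_upper(values, upper_pct=95.0):
    """Cap values above the given percentile at that percentile."""
    values = np.asarray(values, dtype=float)
    cap = np.percentile(values, upper_pct)
    return np.minimum(values, cap)

def rank_normalize(values):
    """Map values to (0, 1) by rank; tied values share their average rank."""
    values = np.asarray(values, dtype=float)
    order = np.argsort(values, kind="stable")
    ranks = np.empty(len(values))
    ranks[order] = np.arange(1, len(values) + 1)
    for v in np.unique(values):
        tied = values == v
        ranks[tied] = ranks[tied].mean()
    return ranks / (len(values) + 1)

# Hypothetical growth scores with a compressed top end and one outlier.
scores = [0.1, 0.5, 0.9, 1.0, 1.0, 1.0, 3.0]
capped = winsorize_upper(scores)
ranked = rank_normalize(scores)
print(capped)
print(ranked)
```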

Q5: How do I handle missing data in a sparse sequence-function map when training a predictive model? A: Do not use simple imputation (e.g., mean filling), as it creates artificial signals. Instead: 1) For supervised ML, use models that handle sparse data natively, like kernel-based methods or graph neural networks. 2) Employ a semi-supervised learning framework, using the observed data to impute missing values via a dedicated variational autoencoder (VAE) pre-training step, then train the primary model on the completed dataset.

Key Experimental Protocols

Protocol 1: Generating a Sequence-Function Map via Deep Mutational Scanning

- Library Design: Use site-saturation mutagenesis (e.g., NNK codon) to cover all single-amino-acid variants of your protein of interest.

- Cloning & Transformation: Clone the variant library into an appropriate expression vector. Perform large-scale electroporation into the host strain (>10^9 transformants) to ensure >200x coverage.

- Selection/Sorting: Subject the population to the functional assay (e.g., antibiotic challenge, FACS based on binding). Collect pre-selection and post-selection samples.

- Sequencing: Prepare amplicon libraries for Illumina sequencing of the variant region from all samples.

- Analysis: Count variants. Calculate enrichment scores (e.g., log2(post/pre count)). Fit a global binding model (e.g., `log2(enrichment) ~ variant_effect`) using `enrich2` to compute final fitness scores.

Protocol 2: A CRISPR-Cas9 Knockout Screen for Essential Genes

- sgRNA Library: Clone a pooled, genome-wide sgRNA library (e.g., Brunello or TKOv3) into a lentiviral vector.

- Lentivirus Production: Produce virus in HEK293T cells. Titer to achieve an MOI of ~0.3 to ensure most cells receive one guide.

- Infection & Selection: Infect target cells (at >500x coverage of the sgRNA library). Select with puromycin for 48-72 hours. This is the T0 timepoint.

- Passaging: Passage cells for ~14 population doublings.

- Genomic DNA Extraction & Sequencing: Harvest cells at T0 and Tfinal. Extract gDNA. Amplify sgRNA regions via PCR and sequence.

- Hit Calling: Align reads, count sgRNAs. Use the BAGEL2 algorithm to compare sgRNA depletion between T0 and Tfinal, identifying essential genes via Bayes Factor output.

Table 1: Comparison of Common Data Source Characteristics for ML Fitness Modeling

| Data Source | Typical Scale (Variants) | Noise Level | Throughput | Primary Cost Driver | Best for ML Model Type |

|---|---|---|---|---|---|

| DMS / Sequence-Function Map | 10^3 - 10^5 | Low-Medium | Medium | Sequencing & Library Synthesis | Kernel Ridge Regression, CNNs |

| CRISPR Screen | 10^4 - 10^5 (guides) | Medium-High | Very High | Lentiviral Library & Sequencing | Linear Models (RRA), Random Forest |

| Bulk RNA-Seq | 10^4 (genes) | Low | High | Sequencing | PCA → Logistic Regression |

| Proteomics (Mass Spec) | 10^3 - 10^4 (proteins) | Medium | Medium | Instrument Time | Gradient Boosting, SVR |

Table 2: Recommended ML Models by Fitness Landscape Characteristic

| Landscape Characteristic | Data Source Combo | Recommended ML Model | Justification |

|---|---|---|---|

| Smooth, Additive | DMS alone | Linear Regression, Ridge Regression | Captures simple additive effects efficiently. |

| Rugged, Epistatic | DMS + Structural Omics | Random Forest, Graph Neural Network | Models complex, non-linear interactions between mutations. |

| High-Dimensional, Sparse | Multi-omics Integration | Autoencoder -> XGBoost | Reduces noise and dimensionality for robust prediction. |

| Temporal Dynamics | Longitudinal Screens | LSTM, GRU (Recurrent NN) | Captures time-dependent fitness changes. |

Visualizations

Title: Deep Mutational Scanning Experimental Workflow

Title: ML Model Selection Based on Landscape Traits

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function & Application in Fitness Landscapes Research |

|---|---|

| Commercially Pooled sgRNA Libraries (e.g., Brunello, TKOv3) | Pre-designed, cloned lentiviral libraries for CRISPR knockout screens, ensuring full genomic coverage and optimized on-target efficiency for identifying fitness-conferring genes. |

| NNK Oligo Pools | Synthetic DNA containing degenerate NNK codons for comprehensive single-site saturation mutagenesis, essential for constructing detailed sequence-function maps. |

| Barcoded Lentiviral Vectors (e.g., pLX-sgRNA) | Enable stable genomic integration of genetic perturbations and unique molecular barcodes for tracking clone abundance in longitudinal high-throughput screens. |

| High-Efficiency Electrocompetent Cells (e.g., NEB 10-beta Electrocompetent E. coli) | Critical for transforming large, diverse plasmid libraries without bottlenecking, maintaining representation in sequence-function map experiments. |

| Next-Gen Sequencing Kits (e.g., Illumina MiSeq Reagent Kit v3) | For deep sequencing of pre- and post-selection variant or guide populations, enabling accurate fitness score calculation. |

| Cell Viability/Survival Assay Kits (e.g., CellTiter-Glo) | Provide luminescent readouts of cellular ATP levels, used as a proxy for fitness in cell-based high-throughput chemical or genetic screens. |

| Analysis Software Suites (e.g., Enrich2, MAGeCK, BAGEL2) | Specialized computational pipelines for processing raw sequencing counts, calculating enrichment, and performing statistical testing to derive fitness scores from screen data. |

Technical Support Center: Troubleshooting Model Selection for Fitness Landscapes

FAQ & Troubleshooting Guides

Q1: Our random forest model consistently fails to capture the sharp, narrow peaks in our high-throughput screening fitness landscape. What is the likely cause and solution? A1: This is a classic sign of model mismatch. Random forests are excellent for smooth, gradual landscapes but can oversmooth multi-modal or "needle-in-a-haystack" landscapes. We recommend switching to a model class better suited for local extremum capture.

- Primary Diagnosis: Model oversmoothing due to ensemble averaging.

- Recommended Protocol:

- Calculate the ruggedness index (correlation length) of your landscape. A low value (<0.2) indicates high ruggedness.

- Validate with a Gaussian Process (GP) model with a Matern kernel (ν=3/2 or 5/2). This kernel is better at modeling sharp variations.

- Compare the predictive log-likelihood on a held-out validation set. The GP model should show significant improvement.

- Key Metrics Table:

| Metric | Random Forest (Failed) | GP Matern ν=5/2 (Recommended) | Ideal Range |
|---|---|---|---|
| Mean Absolute Error (MAE) | 0.42 ± 0.07 | 0.18 ± 0.03 | Minimize |
| Predictive Log-Likelihood | -1.24 | 0.67 | Maximize |
| Ruggedness Index (λ) | 0.15 | 0.15 | Contextual |

Q2: When using a neural network for a continuous property landscape, predictions are unstable and vary greatly with random seed initialization. How do we stabilize training? A2: Instability suggests a highly non-convex loss surface that is sensitive to initial parameters. This is typical of the high-dimensional, sparse datasets found in cheminformatics.

- Primary Diagnosis: Non-convex optimization instability.

- Recommended Protocol:

- Implement Batch Normalization layers to reduce internal covariate shift.

- Use the AdamW optimizer (weight decay=0.01) instead of standard SGD or Adam.

- Employ a learning rate schedule (e.g., cosine annealing).

- Perform 10 random seed runs. Select the model with median validation loss, not the best. Report performance as mean ± std.

- Stabilization Results Table:

| Training Component | Old Setup | New Stabilized Setup | Impact |
|---|---|---|---|
| Optimizer | Adam (LR=1e-3) | AdamW (LR=1e-3, WD=0.01) | Prevents weight explosion |
| Normalization | None | Batch Norm Layers | Reduces internal shift |
| LR Schedule | Constant | Cosine Annealing | Smoother convergence |
| Final Score (R²) | 0.72 ± 0.15 | 0.80 ± 0.04 | Higher mean, lower variance |

Q3: For a combinatorial sequence space (e.g., peptide libraries), how do we choose between a convolutional neural network (CNN) and a transformer model? A3: The choice depends on the interaction complexity within the sequence. CNNs capture local motif efficacy, while transformers model long-range, non-local interactions.

- Primary Diagnosis: Incorrect assumption of interaction locality.

- Experimental Decision Protocol:

- Perform an interaction distance analysis. Compute mutual information between residue positions from your assay data.

- If high mutual information is limited to residues <5 apart, a CNN is sufficient and more data-efficient.

- If high mutual information spans >10 residues, a transformer with attention is likely necessary.

- For intermediate cases, benchmark both using a 5-fold cross-validation with a fixed compute budget.

- Model Comparison Table (Peptide Binding Affinity):

| Model Type | Avg. Test RMSE | Training Time (hrs) | Data Requirement | Best For Landscape Type |
|---|---|---|---|---|
| 1D CNN | 0.38 | 1.5 | ~10k samples | Local motif dominance |
| Transformer (4-layer) | 0.41 | 4.2 | ~50k samples | Long-range interactions |
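The interaction distance analysis in the decision protocol can be sketched as below. The assumptions are worth stating: mutual information is computed over a set of sequences that pass the assay (the toy peptides here are invented), the 0.5-bit cut-off for "high" mutual information is arbitrary, and `suggest_architecture` simply encodes the <5 and >10 residue rules from the protocol.

```python
from collections import Counter
from itertools import combinations
from math import log2

def mutual_information(seqs, i, j):
    """Mutual information (bits) between residue identities at positions i and j."""
    n = len(seqs)
    p_i = Counter(s[i] for s in seqs)
    p_j = Counter(s[j] for s in seqs)
    p_ij = Counter((s[i], s[j]) for s in seqs)
    return sum((c / n) * log2(c * n / (p_i[a] * p_j[b])) for (a, b), c in p_ij.items())

def interaction_span(seqs, threshold):
    """Largest separation |i - j| among position pairs with MI above `threshold`."""
    length = len(seqs[0])
    spans = [j - i for i, j in combinations(range(length), 2)
             if mutual_information(seqs, i, j) > threshold]
    return max(spans, default=0)

def suggest_architecture(span):
    """Map the interaction span to the rule of thumb from the protocol."""
    if span < 5:
        return "CNN"
    if span > 10:
        return "transformer"
    return "benchmark both"

# Toy 12-residue peptides in which the first and last residues covary.
seqs = [first + "C" + mid + "GGGGGGGG" + first for first in "AK" for mid in "DE"]
span = interaction_span(seqs, threshold=0.5)
print(span, suggest_architecture(span))
```

With real data, mutual information estimated from small samples is biased upward, so the threshold would normally be calibrated against shuffled sequences.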

Model Selection Decision Workflow

Decision Workflow for Model Selection

Experimental Protocol: Quantifying Landscape Ruggedness for Model Diagnosis

Objective: Calculate the correlation length (ruggedness index, λ) of a fitness landscape to inform model selection.

Materials:

- Dataset of genotype/property pairs (e.g., chemical structures & IC50 values).

- A defined distance metric between genotypes (e.g., Tanimoto distance, defined as 1 minus Tanimoto similarity, for fingerprints; edit distance for sequences).

Procedure:

- Pairwise Calculation: For N random sample pairs (N > 1000), compute `d_ij`, the distance between genotypes i and j, and `f_ij`, the absolute difference in fitness/property value between i and j.

- Binning: Bin pairwise distances into 10-20 equally spaced intervals.

- Averaging: For each bin `k`, compute the average distance `avg(d_k)` and average fitness difference `avg(f_k)`.

- Fitting: Fit an exponential decay function: `avg(f) = A * exp(-d / λ) + C`.

- Extract λ: The fitted parameter `λ` is the correlation length. Low λ (<0.3) indicates a rugged landscape; high λ (>0.6) indicates a smooth landscape.
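The procedure above can be sketched in NumPy as follows. This is one possible implementation, not the only one: instead of a general nonlinear optimizer it scans a grid of candidate λ values and solves for A and C by linear least squares at each one. The pairwise data are synthetic, generated with λ = 0.25 on distances normalized to [0, 1]; since the average difference typically rises with distance on a correlated landscape, A comes out negative here.

```python
import numpy as np

def binned_profile(dist, fdiff, n_bins=15):
    """Average distance and average fitness difference in equally spaced bins."""
    edges = np.linspace(dist.min(), dist.max(), n_bins + 1)
    which = np.clip(np.digitize(dist, edges) - 1, 0, n_bins - 1)
    filled = [k for k in range(n_bins) if np.any(which == k)]
    d_k = np.array([dist[which == k].mean() for k in filled])
    f_k = np.array([fdiff[which == k].mean() for k in filled])
    return d_k, f_k

def fit_correlation_length(d_k, f_k, lam_grid):
    """Fit avg(f) = A * exp(-d / lam) + C by scanning lam and solving for A, C."""
    best = None
    for lam in lam_grid:
        basis = np.column_stack([np.exp(-d_k / lam), np.ones_like(d_k)])
        coef, *_ = np.linalg.lstsq(basis, f_k, rcond=None)
        sse = float(np.sum((basis @ coef - f_k) ** 2))
        if best is None or sse < best[0]:
            best = (sse, float(lam), float(coef[0]), float(coef[1]))
    _, lam, A, C = best
    return lam, A, C

# Synthetic pairs (hypothetical): distances in [0, 1], true lambda = 0.25.
rng = np.random.default_rng(0)
dist = rng.uniform(0.0, 1.0, size=5000)
fdiff = 1.0 - 0.8 * np.exp(-dist / 0.25) + rng.normal(0.0, 0.05, size=5000)

d_k, f_k = binned_profile(dist, fdiff)
lam, A, C = fit_correlation_length(d_k, f_k, np.linspace(0.02, 2.0, 199))
print(round(lam, 2), round(A, 2), round(C, 2))
```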

The Scientist's Toolkit: Key Research Reagent Solutions

| Item / Reagent | Function in Model Selection Research | Example Vendor/Catalog |

|---|---|---|

| Directed Evolution Library Kits | Provides empirical, high-dimensional fitness landscape data for benchmarking model predictions. | Twist Bioscience, Custom Gene Libraries |

| High-Throughput Screening Assays | Generates the quantitative fitness/property data that defines the landscape. | Eurofins, DiscoverX |

| Graphical Processing Unit (GPU) Cluster | Accelerates training of complex models (e.g., DNNs, GPs on large data) for iterative experimentation. | AWS EC2 (P3 instances), NVIDIA DGX |

| Automated Molecular Featurization Software | Converts raw genotypes (SMILES, sequences) into feature vectors for model input. | RDKit, DeepChem, Biopython |

| Bayesian Optimization Suite | Enables active learning loops on top of selected models to guide landscape exploration. | BoTorch, Ax Platform |

| Benchmark Dataset Repositories | Provides standardized landscapes (e.g., protein stability, drug solubility) for controlled comparison. | MoleculeNet, ProteinNet |

Model Selection & Validation Protocol

Troubleshooting Guides & FAQs

Q1: What does a strong "fitness distance correlation" (FDC) value indicate, and how should I adjust my model selection? A: A strong FDC (magnitude close to 1) suggests a simple, "easy" landscape in which fitness improves steadily as solutions approach the global optimum. The sign depends on the convention: the correlation is close to +1 when the score is a cost to be minimized, and close to -1 when fitness is maximized and distance is measured to the optimum. For such landscapes, greedy local search algorithms often perform well. If your analysis yields a strong FDC of the appropriate sign, consider simpler, more exploitative models like Gradient Boosting or simple hill-climbing algorithms to efficiently converge.

Q2: My landscape analysis reveals low auto-correlation (high "ruggedness"). What are the implications for optimization? A: Low auto-correlation indicates a rugged landscape with many local optima, making gradient information less reliable. This is common in complex molecular design spaces. You should shift towards exploration-heavy or population-based models. Consider Genetic Algorithms, Particle Swarm Optimization, or incorporating techniques like simulated annealing to escape local traps.

Q3: When calculating the "information content" (IC) metric, my Hamming walk produces a flat distribution. What does this mean? A: A flat distribution of \( P(\phi) \) suggests a highly uncorrelated, random-like landscape (high "neutrality" or "ruggedness"). There is little predictable structure from small moves. This signals that your search algorithm must be robust to noise. Bayesian optimization with appropriate kernels (e.g., Matérn) or ensemble methods that average over uncertainty may be more suitable than deterministic local searches.

Q4: How do I interpret a high "dispersion" metric value in the context of molecular property prediction? A: A high dispersion metric indicates that high-fitness solutions are widely scattered throughout the search space rather than clustered. This is challenging for iterative search. Response surface methodologies or surrogate models that build a global map (e.g., Gaussian Processes, Random Forests) are critical. Your search strategy should prioritize broad exploration before exploitation.

Q5: The "basin of attraction" analysis shows many small, shallow basins. How does this affect my algorithm's configuration? A: Many small basins suggest a "funneled" but complex landscape. Multi-start strategies are essential. Configure your local search algorithm (e.g., L-BFGS) with multiple, diverse initializations. Metaheuristics like Memetic Algorithms, which combine global search with local refinement, are particularly well-suited for this landscape characteristic.

Experimental Protocols & Data Presentation

Protocol 1: Calculating Fitness Distance Correlation (FDC)

- Sample Collection: Randomly sample N points (e.g., N=1000) from the search space (e.g., a defined chemical space using SMILES representations).

- Fitness Evaluation: Compute the fitness (e.g., binding affinity score, QED) for each sampled point.

- Distance Calculation: For each sampled point i, compute the minimal distance ( d_i ) to the known global optimum or the best-found solution. Use a relevant distance metric (e.g., Tanimoto distance for molecular fingerprints, Hamming distance for sequences).

- Correlation Analysis: Calculate the Pearson correlation coefficient between the fitness values ( f_i ) and the distances ( d_i ) across the N samples.

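- Interpretation: FDC ∈ [-1, 1]. Values near +1 indicate an "easy" single-funnel landscape; values near 0 or negative suggest a deceptive or multi-modal landscape. The sign depends on how the correlation is oriented: the values here treat +1 as the case where fitness improves steadily as the distance to the optimum shrinks. If the Pearson coefficient is computed directly between a maximized fitness (e.g., binding affinity score, QED) and the distance, that same easy landscape yields a value near -1, so negate the coefficient before applying these thresholds.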
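The steps of Protocol 1 can be sketched in a few lines of standard-library Python. This is a minimal illustration, not a reference implementation: the binary-string search space, the all-ones optimum, and the two toy fitness functions are assumptions chosen so that the result is easy to check by hand. It also makes the sign convention visible: a cost-like fitness gives a coefficient near +1, while a maximized score on the same landscape gives a coefficient near -1.

```python
import math
import random


def hamming(a, b):
    """Number of positions at which two equal-length sequences differ."""
    return sum(x != y for x, y in zip(a, b))


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length samples."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / math.sqrt(var_x * var_y)


def fitness_distance_correlation(samples, fitness_fn, reference):
    """Correlate fitness with Hamming distance to a reference optimum."""
    fitness = [fitness_fn(s) for s in samples]
    distance = [hamming(s, reference) for s in samples]
    return pearson(fitness, distance)


# Hypothetical toy landscape: binary strings of length 20, optimum = all ones.
rng = random.Random(0)
optimum = [1] * 20
samples = [[rng.randint(0, 1) for _ in range(20)] for _ in range(200)]

# Cost-like fitness (number of zeros, lower is better) equals the distance itself.
fdc_cost = fitness_distance_correlation(samples, lambda s: s.count(0), optimum)
# Score-like fitness (number of ones, higher is better) falls as distance grows.
fdc_score = fitness_distance_correlation(samples, lambda s: s.count(1), optimum)
print(round(fdc_cost, 3), round(fdc_score, 3))
```

For molecules, `hamming` would be swapped for a fingerprint distance such as Tanimoto distance, as the protocol suggests.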

Protocol 2: Estimating Ruggedness via Auto-correlation Function

- Generate Random Walk: Starting from a random point in the search space, perform a random walk of length L (e.g., L=1000 steps), where each step is a small, random perturbation (e.g., one molecular mutation) that is accepted regardless of its effect on fitness.

- Record Fitness Series: Record the fitness value at each step, creating a series ( \{f_1, f_2, \ldots, f_L\} ).

- Calculate Auto-correlation: Compute the auto-correlation function ( \rho(k) ) for a range of lag values ( k ): [ \rho(k) = \frac{\sum_{t=1}^{L-k}(f_t - \bar{f})(f_{t+k} - \bar{f})}{\sum_{t=1}^{L}(f_t - \bar{f})^2} ]

- Fit Correlation Length: Plot ( \rho(k) ) vs. ( k ). Fit an exponential decay ( \rho(k) \approx e^{-k/\lambda} ). The estimated correlation length ( \lambda ) quantifies ruggedness: small ( \lambda ) indicates high ruggedness.
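Protocol 2 can be sketched as follows. The auto-correlation function follows the formula above; the correlation length is obtained from a least-squares fit of ln ρ(k) = -k/λ through the origin, which is one simple way to fit the exponential decay and is an assumption of this sketch. The two fitness series are synthetic stand-ins (an AR(1) process and white noise) rather than walks over a real chemical space.

```python
import math
import random


def autocorrelation(series, k):
    """rho(k): lagged covariance divided by the total sum of squares."""
    L = len(series)
    mean = sum(series) / L
    num = sum((series[t] - mean) * (series[t + k] - mean) for t in range(L - k))
    den = sum((f - mean) ** 2 for f in series)
    return num / den


def correlation_length(series, max_lag=20):
    """Fit ln rho(k) = -k / lambda over the leading lags with rho(k) > 0."""
    lags, log_rho = [], []
    for k in range(1, max_lag + 1):
        rho = autocorrelation(series, k)
        if rho <= 0:  # the log is undefined; stop at the first non-positive value
            break
        lags.append(k)
        log_rho.append(math.log(rho))
    if not lags:
        return 0.0  # no positive correlation even at lag 1
    slope = sum(k * v for k, v in zip(lags, log_rho)) / sum(k * k for k in lags)
    return -1.0 / slope if slope < 0 else math.inf


def ar1_series(phi, length, seed):
    """Synthetic fitness series: f_t = phi * f_{t-1} + Gaussian noise."""
    rng = random.Random(seed)
    value, out = 0.0, []
    for _ in range(length):
        value = phi * value + rng.gauss(0.0, 1.0)
        out.append(value)
    return out


smooth = ar1_series(0.9, 5000, seed=1)  # strongly correlated steps
rugged = ar1_series(0.0, 5000, seed=1)  # uncorrelated steps (white noise)
lam_smooth = correlation_length(smooth)
lam_rugged = correlation_length(rugged)
```

The smooth series yields a much larger λ than the rugged one, which matches the protocol's reading that a small λ indicates high ruggedness.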

Table 1: Benchmark Landscape Metrics for Model Selection Guidance

| Landscape Metric | Value Range | Landscape Characteristic Implied | Recommended Algorithm Family |

|---|---|---|---|

| Fitness Distance Corr. (FDC) | 0.7 to 1.0 | Simple, Strong Gradient | Gradient-Based, Greedy Search |

| Fitness Distance Corr. (FDC) | 0.0 to 0.3 | Neutral/Deceptive | Population-Based (GA, PSO), Bayesian Optimization |

| Correlation Length (λ) | λ > 10 (High) | Smooth, Predictable | Local Search, Quasi-Newton Methods |

| Correlation Length (λ) | λ < 3 (Low) | Rugged, Unpredictable | Multimodal Optimizers (Niching), Monte Carlo |

| Information Content (IC) | IC < 2.0 | Smooth or Neutral | Exploitation-Focused Algorithms |

| Information Content (IC) | IC > 4.0 | Rugged/Chaotic | Exploration-Focused Algorithms |

| Dispersion Metric (Δ) | Δ < 0.1 | Clustered Optima | Local Search with Multi-Start |

| Dispersion Metric (Δ) | Δ > 0.3 | Dispersed Optima | Global Surrogate Modeling, Space-Filling Designs |

Visualization: Key Analytical Workflows

Title: Workflow for Fitness Distance Correlation (FDC) Calculation

Title: Protocol for Ruggedness Analysis via Auto-correlation

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Tools for Fitness Landscape Analysis

| Tool / Reagent | Category | Primary Function in Analysis |

|---|---|---|

| RDKit | Cheminformatics Library | Generates molecular fingerprints, calculates descriptors, and performs molecular operations for chemical space walks. |

| deap | Evolutionary Algorithms Framework | Provides ready-to-use modules for implementing Genetic Algorithms to traverse and sample complex landscapes. |

| scikit-learn | Machine Learning Library | Used to build surrogate models (e.g., Random Forest) of the fitness function and calculate correlation metrics. |

| Gaussian Process (GPyTorch, scikit-learn) | Surrogate Modeling | Models the landscape as a probabilistic distribution to estimate uncertainty and guide Bayesian Optimization. |

| PyTorch / TensorFlow | Deep Learning Framework | Enables the construction of neural networks as flexible surrogate models for high-dimensional landscapes. |

| Platypus | Multi-objective Optimization | Facilitates landscape analysis for problems with multiple, competing objectives (Pareto front characterization). |

| NetworkX | Graph Analysis | Used to visualize and compute properties of networks constructed from landscape samples (e.g., local optima networks). |

Mapping Terrain to Technique: A Practical ML Selection Framework for Specific Landscape Features

Technical Support Center

Troubleshooting Guides & FAQs

Q1: My regression model (Linear, Ridge, Lasso) is underfitting a complex, multi-modal fitness landscape in our compound activity prediction. What should I do? A: Underfitting in regression for complex landscapes suggests the model cannot capture non-linear relationships or multiple peaks. Steps:

- Feature Engineering: Create interaction terms or polynomial features (e.g., using PolynomialFeatures from scikit-learn) to explicitly provide non-linear dimensions.

- Algorithm Switch: Move to a non-linear archetype. A Gradient Boosting Machine (GBM) like XGBoost is a robust next step, as it can model complex interactions without extensive feature engineering.

- Diagnostic Check: Calculate learning curves to confirm if adding more data helps (unlikely for a truly complex landscape) or if the error is inherently high due to model bias.

Q2: My Random Forest model for ADMET property prediction shows high variance and overfits on small datasets. How can I improve generalizability? A: Overfitting in tree-based ensembles is common with limited data.

- Hyperparameter Tuning:

- Increase min_samples_leaf and min_samples_split.

- Reduce max_depth.

- Reduce the number of features considered for each split (max_features), which decorrelates the trees and lowers ensemble variance.

- Data Strategy: Employ Bayesian Optimization for efficient hyperparameter tuning with minimal trials, as random/Grid Search is costly on small data.

- Regularization: Consider switching to a Bayesian Linear Model (e.g., with ARD prior) if feature count is manageable, as it provides inherent uncertainty quantification and regularization.

Q3: Training a deep learning model for protein-ligand interaction fails to converge, with loss oscillating wildly. What are the first checks? A: This indicates an unstable optimization process on a potentially rugged fitness landscape.

- Learning Rate (LR): This is the prime suspect. Implement a learning rate schedule (e.g., ReduceLROnPlateau) or use adaptive optimizers like Adam, but start with a very low LR (e.g., 1e-5).

- Gradient Clipping: Clip gradients to a maximum norm (e.g., 1.0) to prevent explosion, common in RNNs/Graph NNs for molecular data.

- Input Normalization: Standardize all input features (mean=0, std=1). For graph-based models, ensure node/edge features are normalized.

- Simplify Architecture: Reduce hidden layers/units to confirm a simpler model can learn, then gradually increase complexity.

Q4: Bayesian Optimization (BO) for my assay protocol optimization is stuck in a local minimum and not exploring. How do I fix this? A: This is an exploitation vs. exploration imbalance in the acquisition function.

- Acquisition Function: Switch from Expected Improvement (EI) to Upper Confidence Bound (UCB) with a tunable kappa parameter. Increase kappa to force more exploration of uncertain regions.

- Initial Design: Ensure your initial set of random points is large enough (e.g., 10-20 points) to coarsely map the landscape.

- Kernel Choice: The Matern kernel (e.g., nu=2.5) is often preferable to the squared exponential (RBF) kernel for less smooth, more rugged landscapes common in experimental spaces.

- Parallel Evaluations: Use a q-EI or q-UCB strategy to propose a batch of points at each iteration, which can naturally improve exploration.
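To make the kernel recommendation concrete, the sketch below evaluates the standard closed forms of the RBF kernel and the Matern kernel with nu=2.5 as a function of the distance r between two inputs. The unit length scale and the probe distances are illustrative assumptions; in practice these functions come from the GP library in use.

```python
import math


def rbf_kernel(r, length_scale=1.0):
    """Squared exponential kernel: exp(-r^2 / (2 * l^2))."""
    return math.exp(-0.5 * (r / length_scale) ** 2)


def matern52_kernel(r, length_scale=1.0):
    """Matern kernel with nu = 2.5: (1 + s + s^2 / 3) * exp(-s), s = sqrt(5) * r / l."""
    s = math.sqrt(5.0) * r / length_scale
    return (1.0 + s + s * s / 3.0) * math.exp(-s)


# Compare the correlation each kernel assigns at short and long range.
for r in (0.0, 0.5, 1.0, 3.0):
    print(f"r={r:.1f}  RBF={rbf_kernel(r):.4f}  Matern5/2={matern52_kernel(r):.4f}")
```

At short range the Matern kernel assigns lower correlation than the RBF kernel, so the fitted surface is allowed to change more abruptly between nearby points, while at long range it decays more slowly.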

Experimental Protocols Cited

Protocol P1: Benchmarking Algorithm Archetypes on a Synthetic Fitness Landscape

- Objective: To evaluate the sample efficiency and convergence of different algorithm archetypes on a known, multi-modal landscape.

- Methodology:

- Landscape Generation: Use the benchmarks Python library (or similar) to generate a 2D synthetic landscape with known global and local optima (e.g., the Ackley or Rastrigin function, negated when the task is framed as maximization, since both are defined with a global minimum).

- Algorithm Setup: Initialize the following with standard scikit-learn or gpflow configurations:

- Random Search (Baseline)

- Random Forest (RF) Regressor

- Deep Neural Net (DNN): 3 layers, 50 neurons each, ReLU.

- Bayesian Optimization (BO): Gaussian Process (GP) with Matern kernel, EI acquisition.

- Training Loop: For a fixed budget of 100 sequential evaluations, each algorithm suggests the next point to sample based on its internal model of the landscape.

- Metrics: Track the best value found and regret (difference from true global max) vs. number of function evaluations.
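The baseline arm of Protocol P1 can be sketched without any external library. The example below defines the Rastrigin function directly, negates it so that the task is a maximization with a known global maximum of 0, and tracks regret over a budget of 100 evaluations. The search bounds of ±5.12 and the seed are assumptions of this sketch; the model-based arms would replace the uniform sampling step with a proposal from their surrogate.

```python
import math
import random


def rastrigin(x):
    """Rastrigin function; global minimum of 0 at the origin."""
    return 10 * len(x) + sum(xi * xi - 10 * math.cos(2 * math.pi * xi) for xi in x)


def fitness(x):
    """Negated Rastrigin: maximization problem with a global maximum of 0."""
    return -rastrigin(x)


def random_search(budget=100, dim=2, bound=5.12, seed=0):
    """Return the regret (global max minus best value found) after each evaluation."""
    rng = random.Random(seed)
    global_max = 0.0
    best = -math.inf
    regret_curve = []
    for _ in range(budget):
        x = [rng.uniform(-bound, bound) for _ in range(dim)]
        best = max(best, fitness(x))
        regret_curve.append(global_max - best)
    return regret_curve


curve = random_search()
print(f"regret after 10 evaluations: {curve[9]:.3f}, after 100: {curve[-1]:.3f}")
```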

Protocol P2: Active Learning for Compound Potency Prediction using BO

- Objective: To optimally select compounds for expensive experimental validation from a large virtual library.

- Methodology:

- Initial Data: Start with a small, diverse seed set of 50 compounds with measured IC50 values.

- Surrogate Model: Train a Graph Neural Network (GNN) or Kernel Ridge Regression on molecular fingerprints (ECFP4) to predict pIC50.

- Acquisition Loop:

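a. Use the surrogate model to predict mean and uncertainty for all compounds in the unlabeled pool (~10k compounds). A GNN or Kernel Ridge Regression does not provide uncertainty natively, so obtain it from an ensemble or a comparable approximation.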

b. Define a composite acquisition function: α = μ + β * σ, where μ is predicted pIC50, σ is predictive uncertainty, and β is a tunable exploration weight.

c. Select the top 5 compounds with highest α for experimental testing.

d. Add new experimental results to the training set and retrain/update the surrogate model.

- Validation: After 10 cycles (50 new experiments), compare the total number of high-potency (e.g., pIC50 > 8) hits found vs. random selection.
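Steps b and c of the acquisition loop amount to scoring every pool compound with α = μ + β·σ and taking the top five. A minimal sketch follows; the compound identifiers and the predicted values in `pool` are made up for illustration, and in a real loop μ and σ would come from the surrogate model.

```python
def select_batch(pool, beta=1.0, batch_size=5):
    """pool: list of (compound_id, mu, sigma). Return the ids with the highest alpha."""
    scored = [(mu + beta * sigma, cid) for cid, mu, sigma in pool]
    scored.sort(reverse=True)  # highest alpha first
    return [cid for _, cid in scored[:batch_size]]


# Hypothetical predictions: (id, predicted pIC50, predictive uncertainty).
pool = [
    ("c1", 7.5, 0.10),
    ("c2", 6.0, 1.50),
    ("c3", 7.0, 0.20),
    ("c4", 5.0, 0.30),
    ("c5", 6.5, 1.00),
    ("c6", 7.2, 0.05),
]

exploit = select_batch(pool, beta=0.0, batch_size=2)  # pure exploitation
explore = select_batch(pool, beta=2.0, batch_size=2)  # uncertainty-weighted
print(exploit, explore)
```

With β = 0 the batch contains the compounds with the highest predicted potency; raising β shifts the batch toward compounds the model is unsure about.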

Data Presentation

Table 1: Algorithm Archetype Suitability for Fitness Landscape Characteristics

| Landscape Characteristic | Recommended Archetype(s) | Rationale | Key Hyperparameter to Tune |

|---|---|---|---|

| Smooth, Convex, Low-Dim | Linear/Ridge Regression | Computationally efficient, interpretable. | Regularization strength (alpha). |

| Non-linear, Additive Interactions | Gradient Boosted Trees (XGBoost, LightGBM) | Captures complex patterns, robust to outliers. | Learning rate, max_depth, number of estimators. |

| Hierarchical, High-Dim (Images/Graphs) | Deep Learning (CNN, GNN) | Learns hierarchical feature representations automatically. | Network depth, learning rate, dropout rate. |

| Rugged, Multi-modal, Expensive to Evaluate | Bayesian Optimization (GP) | Balances exploration/exploitation, sample-efficient. | Kernel type, acquisition function (e.g., kappa in UCB). |

| Noisy, Small Sample Size | Bayesian Models (e.g., Bayesian Ridge) | Provides uncertainty estimates, naturally regularizes. | Prior distributions. |

Table 2: Sample Efficiency Benchmark (Protocol P1 - Simulated Data)

| Algorithm Archetype | Evaluations to Reach 90% of Optimum | Best Final Regret (Lower is Better) |

|---|---|---|

| Random Search | 68 | 0.42 |

| Random Forest | 41 | 0.18 |

| Deep Neural Net | 52 | 0.31 |

| Bayesian Optimization (GP-UCB) | 27 | 0.05 |

The Scientist's Toolkit: Research Reagent Solutions

| Item/Category | Function in ML for Fitness Landscapes | Example Tool/Library |

|---|---|---|

| Synthetic Landscape Generators | Provide controlled, scalable testbeds for algorithm benchmarking. | benchmarks (PyPI), Platypus (for multi-objective). |

| Gaussian Process Framework | Core engine for Bayesian Optimization, modeling uncertainty. | GPyTorch, scikit-learn GaussianProcessRegressor, GPflow. |

| Gradient-Based Optimizer | For training neural networks and tuning continuous hyperparameters. | Adam, AdamW (in PyTorch/TensorFlow). |

| Tree-Structured Parzen Estimator (TPE) | An alternative to GP for high-dimensional, discrete hyperparameter tuning. | Hyperopt (original implementation), Optuna (default sampler). |

| Acquisition Function Library | Implements strategies for selecting the next experiment. | BoTorch (provides state-of-the-art acquisition functions). |

| Molecular Featurizer | Converts chemical structures into ML-readable descriptors for QSAR landscapes. | RDKit (for ECFP, descriptors), DeepChem (for learned features). |

| Visualization Dashboard | Tracks optimization progress, landscape approximations, and model performance. | TensorBoard, Weights & Biases (W&B), custom matplotlib/plotly. |

Technical Support Center

Troubleshooting Guides & FAQs

Q1: During exploration of a novel protein design landscape, our Bayesian optimization (BO) loop appears to get trapped in a local optimum, yielding repetitive suggestions. What is the likely cause and how can we adjust our model? A1: This is a classic symptom of model mismatch for a multi-modal landscape. The standard Gaussian Process (GP) with a standard kernel (e.g., RBF) assumes a relatively smooth function. For rugged, multi-modal spaces, this prior is incorrect.

- Solution: Switch to a composite kernel that better captures local non-stationarity and periodicity. Implement a Sparse Spectrum GP or use a Deep Kernel Learning (DKL) model where a neural network learns a feature embedding suited to the landscape's complexity. Increase the acquisition function's exploration parameter (kappa or xi) to force evaluation of more uncertain regions.

- Protocol Adjustment: Run a preliminary random sampling phase (n=50-100 points) and analyze the empirical variogram. If it shows high short-range variance, confirm the need for a more flexible kernel.

Q2: Our genetic algorithm (GA) for molecular optimization converges too quickly, and population diversity collapses before we explore the chemical space adequately. How do we mitigate this for a highly epistatic landscape? A2: Premature convergence often indicates that the selection pressure is too high for the landscape's deceptiveness. Epistasis means the fitness effect of a mutation depends on which other mutations are present: single-point mutations can each lower fitness while specific combinations are highly beneficial, a pattern that GAs struggle to discover.

- Solution: Implement Niching or Fitness Sharing to maintain sub-populations around different peaks. Use deterministic crowding or a modified crossover scheme like Headless Chicken Crossover to introduce exploratory noise. Consider a MAP-Elites framework to explicitly archive diverse, high-performing solutions.

- Protocol Adjustment: Track population genotype/phenotype diversity metrics (e.g., average Hamming distance, structural diversity). Tune the mutation rate adaptively based on these metrics, increasing it when diversity drops below a threshold.
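The diversity tracking and adaptive mutation rate described above can be sketched as follows. The update rule (multiply the rate by a fixed factor when diversity falls below the threshold, otherwise relax it back toward a base rate) and all numeric defaults are assumptions of this sketch, not values prescribed by the protocol.

```python
from itertools import combinations


def mean_hamming_diversity(population):
    """Average pairwise Hamming distance; needs at least two equal-length genotypes."""
    pairs = list(combinations(population, 2))
    total = sum(sum(a != b for a, b in zip(p, q)) for p, q in pairs)
    return total / len(pairs)


def adapt_mutation_rate(rate, diversity, threshold,
                        base_rate=0.01, factor=1.5, max_rate=0.5):
    """Raise the rate when diversity collapses, otherwise decay toward base_rate."""
    if diversity < threshold:
        return min(max_rate, rate * factor)
    return max(base_rate, rate / factor)


# Hypothetical population that has almost converged.
population = ["AAAA", "AAAA", "AAAT"]
diversity = mean_hamming_diversity(population)
new_rate = adapt_mutation_rate(0.01, diversity, threshold=1.0)
print(round(diversity, 3), new_rate)
```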

Q3: When benchmarking model performance on a known rugged benchmark (e.g., NK model with high K), our surrogate model's prediction error is low on training data but high on validation data. What does this indicate? A3: This suggests overfitting to the noisy or complex training data, meaning the model has captured the specific noise rather than the general landscape structure. This is common with highly flexible models on small datasets in epistatic landscapes.

- Solution: Increase the regularization strength in your model. For GP, increase the likelihood noise parameter or use a Matérn 3/2 kernel instead of RBF for less smooth extrapolation. For tree-based models (e.g., Random Forest), reduce tree depth and increase the minimum samples per leaf. Consider using ensemble surrogates to average out overfitting of individual models.

- Protocol Adjustment: Perform k-fold cross-validation on your training data to select kernel/hyperparameters that generalize best within the training set before the final validation.

Q4: We are using a neural network as a surrogate for a high-throughput screening (HTS) simulator. The predictions for unseen molecular scaffolds are highly inaccurate. How can we improve cross-scaffold generalization? A4: This is a domain shift problem. The network has learned features specific to the training scaffolds but not the underlying epistatic rules governing the target property (e.g., binding affinity).

- Solution: Incorporate domain-invariant representation learning. Use a disentangled representation where scaffold-specific and property-specific features are separated. Augment training with functional graph contrasts rather than relying solely on structural fingerprints. Pre-train on related, larger biochemical datasets.

- Protocol Adjustment: Use a held-out set of distinct molecular scaffolds (not just random splits from the same scaffolds) for validation to truly test generalization.
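A scaffold-based hold-out can be sketched as a grouped split: all compounds sharing a scaffold go to the same side. The sketch assumes each compound already carries a scaffold key (for example a Bemis-Murcko scaffold computed with RDKit); the identifiers and scaffold names below are placeholders.

```python
import random
from collections import defaultdict


def scaffold_split(records, test_fraction=0.2, seed=0):
    """records: list of (compound_id, scaffold_key). Whole scaffold groups stay together."""
    groups = defaultdict(list)
    for cid, scaffold in records:
        groups[scaffold].append(cid)
    keys = sorted(groups)
    random.Random(seed).shuffle(keys)
    target = test_fraction * len(records)
    train, test = [], []
    for key in keys:
        # Fill the test set with whole groups until the target size is reached.
        if len(test) < target:
            test.extend(groups[key])
        else:
            train.extend(groups[key])
    return train, test


# Hypothetical compounds with precomputed scaffold keys.
records = [
    ("m1", "benzene"), ("m2", "benzene"), ("m3", "pyridine"),
    ("m4", "indole"), ("m5", "indole"), ("m6", "quinoline"),
]
train_ids, test_ids = scaffold_split(records)
```

Because groups are assigned whole, the test set can overshoot the requested fraction when a large scaffold family is drawn first; that trade-off is usually acceptable for a generalization check.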

Experimental Protocol: Benchmarking Model Ruggedness Fitness

Objective: To quantitatively evaluate the performance of different surrogate models (GP-RBF, GP-Matérn, Random Forest, DKL) on landscapes with varying degrees of multi-modality and epistasis.

Materials:

- Computing cluster with GPU acceleration (for DKL).

- Benchmark generator software (e.g., Platypus for MOO, custom NK landscape generator).

Methodology:

- Landscape Generation: Generate three 50-dimensional synthetic fitness landscapes:

- LS1 (Smooth): Quadratic function with moderate noise.

- LS2 (Multi-Modal): Mixture of 10 Gaussian peaks with varying widths and heights.

- LS3 (Epistatic): NK landscape with N=50, K=15 (high epistasis).

- Initial Sampling: For each landscape, perform 100 iterations of Latin Hypercube Sampling (LHS) to create an initial training dataset D_train.

- Model Training: Train each candidate surrogate model on D_train. Use 5-fold cross-validation for hyperparameter tuning (e.g., kernel length-scales, neural network architecture).

- Active Learning Loop: Run a Bayesian optimization loop for 50 iterations using the Upper Confidence Bound (UCB) acquisition function.

- The model suggests the next point x_next.

- Query the ground truth benchmark function at x_next.

- Augment D_train and retrain the model.

- Validation: Evaluate on a static hold-out set of 1000 points (D_test) sampled via LHS. Record metrics after each batch of 10 BO iterations.
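The "custom NK landscape generator" used for LS3 can be sketched as below. In an NK landscape each of the N loci contributes a random value that depends on its own state and the states of K other loci; fitness is the mean contribution. Choosing the K interacting loci at random and filling the contribution tables lazily (so that K=15 does not require 2^16 entries per locus up front) are design choices of this sketch.

```python
import random


def make_nk_landscape(n, k, seed=0):
    """Return a fitness function over binary genotypes of length n with epistasis k."""
    rng = random.Random(seed)
    # Locus i interacts with itself and k other loci chosen at random.
    neighbors = [[i] + rng.sample([j for j in range(n) if j != i], k)
                 for i in range(n)]
    tables = [dict() for _ in range(n)]  # contribution tables, filled on demand

    def fitness(genotype):
        total = 0.0
        for i in range(n):
            key = tuple(genotype[j] for j in neighbors[i])
            if key not in tables[i]:
                tables[i][key] = rng.random()  # draw the contribution once, then reuse
            total += tables[i][key]
        return total / n

    return fitness


# N=50, K=15 matches LS3; the genotype below is an arbitrary example.
ls3 = make_nk_landscape(n=50, k=15, seed=0)
genotype = [0, 1] * 25
value = ls3(genotype)
```

With K=0 the landscape is purely additive and smooth; raising K toward N-1 makes neighboring genotypes increasingly uncorrelated.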

Quantitative Metrics Table:

Table 1: Model Performance on Diverse Landscapes (Final Validation RMSE)

| Model | LS1 (Smooth) | LS2 (Multi-Modal) | LS3 (Epistatic) | Avg. Rank |

|---|---|---|---|---|

| GP (RBF Kernel) | 0.12 ± 0.03 | 4.56 ± 0.87 | 5.21 ± 0.92 | 2.3 |

| GP (Matérn 3/2) | 0.15 ± 0.04 | 3.89 ± 0.45 | 4.75 ± 0.88 | 2.7 |

| Random Forest | 0.23 ± 0.05 | 3.01 ± 0.31 | 4.12 ± 0.67 | 2.0 |

| Deep Kernel Learn. | 0.14 ± 0.03 | 3.22 ± 0.41 | 3.88 ± 0.55 | 1.7 |

Table 2: Optimization Efficiency (Function Value at Iteration 50)

| Model | LS1 (Smooth) | LS2 (Multi-Modal) | LS3 (Epistatic) |

|---|---|---|---|

| Global Optimum | 100.0 | 95.7 | 92.4 |

| GP (RBF Kernel) | 99.8 | 80.1 | 70.3 |

| GP (Matérn 3/2) | 99.5 | 85.6 | 75.8 |

| Random Forest | 98.9 | 90.2 | 82.4 |

| Deep Kernel Learn. | 99.9 | 89.5 | 85.1 |

Visualization: Model Selection Workflow for Rugged Landscapes

Title: Model Selection Workflow for Rugged Landscapes

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Computational Reagents for Rugged Landscape Research

| Item | Function & Rationale |

|---|---|

| NK Landscape Generator | A computational tool to generate tunably rugged benchmark landscapes. The N and K parameters control dimensionality and epistatic interactions, providing a gold standard for testing model performance on deceptiveness. |

| BoTorch / Ax Framework | A Python library for Bayesian optimization and adaptive experimentation. Provides state-of-the-art GP models, acquisition functions, and multi-fidelity utilities essential for constructing robust optimization loops on complex landscapes. |

| RDKit / DeepChem | Cheminformatics and deep learning toolkits for molecular representation. Critical for converting molecular structures into feature vectors or graphs that capture the chemical epistasis relevant to drug discovery landscapes. |

| Platypus / pymoo | Libraries for multi-objective optimization (MOO). Many real-world landscapes have multiple competing objectives (e.g., potency vs. solubility). These tools help navigate trade-offs and identify Pareto fronts. |

| High-Performance Computing (HPC) Cluster | Epistatic landscape exploration requires massive parallelization for simulation, model training, and hyperparameter sweeps. GPU acceleration is particularly crucial for training deep learning surrogates. |

| Docker/Singularity Containers | Containerization ensures the reproducibility of complex software stacks and dependencies across different computing environments, a critical factor for long-term, collaborative research projects. |

Technical Support Center

Troubleshooting Guides & FAQs

Q1: During my exploration of a novel protein target's fitness landscape using a surrogate model, I am observing a persistent convergence to suboptimal regions, missing the global optimum. What could be the issue and how can I resolve it?

A1: This is a classic symptom of model bias or over-exploitation. Your simpler surrogate model (e.g., a Gaussian Process or a shallow neural network) may have learned an inaccurate, overly smooth representation of the true, rugged landscape.

- Troubleshooting Steps:

- Verify Exploration Parameter: Check the acquisition function's balancing parameter (e.g., kappa in Upper Confidence Bound, xi in Expected Improvement). Excessively low values greedily exploit the model's predictions.

- Diagnose Model Fit: Plot the surrogate model's predictions against the observed data in a held-out validation set. A smooth model failing to capture local variations indicates underfitting.

- Solution Protocol: Implement an adaptive strategy. Start with high exploration (kappa ~ 3-5) to coarsely map the basin, then gradually reduce it. Consider periodically re-initializing the model with a diverse subset of data points to reset its bias.
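The adaptive strategy of starting with high exploration and gradually reducing it can be sketched as a simple schedule for kappa. The linear decay, the starting value of 4.0 (inside the 3-5 range mentioned above) and the end value of 0.5 are assumptions; other decay shapes would work equally well.

```python
def kappa_schedule(iteration, n_iterations, kappa_start=4.0, kappa_end=0.5):
    """Linearly anneal kappa from kappa_start (first iteration) to kappa_end (last)."""
    frac = iteration / max(1, n_iterations - 1)
    return kappa_start + frac * (kappa_end - kappa_start)


def ucb(mu, sigma, kappa):
    """Upper Confidence Bound score for a candidate."""
    return mu + kappa * sigma


# Two hypothetical candidates: a confident good one and an uncertain mediocre one.
confident = {"mu": 1.0, "sigma": 0.1}
uncertain = {"mu": 0.5, "sigma": 0.5}

for it in (0, 19):
    kappa = kappa_schedule(it, n_iterations=20)
    pick = max((confident, uncertain), key=lambda c: ucb(c["mu"], c["sigma"], kappa))
    label = "uncertain" if pick is uncertain else "confident"
    print(f"iteration {it}: kappa={kappa:.2f}, picks the {label} candidate")
```

Early in the run the uncertain candidate wins and the landscape is mapped coarsely; by the end the schedule favors the candidate with the higher predicted mean.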

Q2: My experimental validation of candidate molecules (e.g., from a generative model's latent space) shows a significant performance drop compared to the surrogate model's prediction. How should I adjust my pipeline?

A2: This indicates a simulation-to-reality gap or out-of-distribution error. The surrogate was optimized toward regions where its predictions are not representative of the true experimental fitness function.

- Troubleshooting Steps:

- Quantify Discrepancy: Calculate the Mean Absolute Error (MAE) between the last batch of predicted vs. actual bioactivity scores. An MAE > 20% of the score range is critical.

- Analyze Feature Space: Perform a PCA/t-SNE on the molecular descriptors of the poorly performing candidates versus the training data. Check for clustering outside the training manifold.

- Solution Protocol: Integrate a dynamic model trust mechanism. Weight new experimental data higher in model retraining. Implement a "novelty penalty" in the acquisition function to de-prioritize points too far from the known data distribution, constraining exploration to more reliable regions.

Q3: When benchmarking different simple models (Linear, RF, GP) for landscape exploration, how do I objectively select the best one for my specific protein-ligand interaction project?

A3: Model selection must be based on quantifiable metrics aligned with landscape characteristics inferred from preliminary data.

- Troubleshooting Protocol:

- Run a Short Pilot Experiment: Collect a diverse, space-filling set of 50-100 initial data points (e.g., binding affinities for a diverse compound library).

- Characterize the Landscape: Calculate roughness metrics from this data (see Table 2).

- Benchmark Models: Use k-fold cross-validation on the pilot data. Train each candidate model and evaluate not just on RMSE, but on ranking correlation (Spearman's ρ) and top-10% prediction accuracy, which are crucial for optimization.

- Select & Deploy: Choose the model with the best composite score for your primary metric (e.g., top-10% accuracy) and proceed to full-scale Bayesian Optimization.
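The two ranking metrics named in the benchmarking step can be computed without external libraries. Spearman's ρ is the Pearson correlation of the ranks. "Top-10% accuracy" is not defined precisely in the text, so this sketch assumes one plausible definition: the share of the truly best 10% of points that the model also places in its own top 10%.

```python
import math


def ranks(values):
    """1-based ranks; tied values share their average rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    result = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        for m in range(i, j + 1):
            result[order[m]] = (i + j) / 2 + 1
        i = j + 1
    return result


def spearman_rho(y_true, y_pred):
    """Pearson correlation computed on the ranks of the two samples."""
    rx, ry = ranks(y_true), ranks(y_pred)
    n = len(rx)
    mx, my = sum(rx) / n, sum(ry) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    sx = math.sqrt(sum((a - mx) ** 2 for a in rx))
    sy = math.sqrt(sum((b - my) ** 2 for b in ry))
    return cov / (sx * sy)


def top_fraction_accuracy(y_true, y_pred, fraction=0.1):
    """Overlap between the true and the predicted top-`fraction` sets."""
    n_top = max(1, int(round(fraction * len(y_true))))
    idx = range(len(y_true))
    true_top = set(sorted(idx, key=lambda i: y_true[i], reverse=True)[:n_top])
    pred_top = set(sorted(idx, key=lambda i: y_pred[i], reverse=True)[:n_top])
    return len(true_top & pred_top) / n_top


# Hypothetical measured values and two sets of model predictions.
y_true = [float(v) for v in range(20)]
y_monotone = [v ** 2 for v in y_true]  # wrong scale, perfect ranking
y_reversed = [-v for v in y_true]      # perfectly wrong ranking
```

A model such as `y_monotone` would score poorly on RMSE yet perfectly on both ranking metrics, which is why these metrics matter when the goal is optimization rather than calibration.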

Data Presentation

Table 1: Surrogate Model Benchmarking on Rugged vs. Smooth Synthetic Landscapes

| Model Type | Avg. RMSE (Rugged) | Avg. RMSE (Smooth) | Spearman's ρ (Rugged) | Top-10% Accuracy (Smooth) | Inference Speed (ms/point) |

|---|---|---|---|---|---|

| Linear Regression | 0.48 ± 0.05 | 0.12 ± 0.02 | 0.55 ± 0.08 | 0.65 ± 0.06 | < 1 |

| Random Forest | 0.22 ± 0.03 | 0.15 ± 0.03 | 0.82 ± 0.05 | 0.78 ± 0.05 | ~5 |

| Gaussian Process (RBF) | 0.25 ± 0.04 | 0.14 ± 0.02 | 0.79 ± 0.06 | 0.85 ± 0.04 | ~50 |

| Shallow Neural Net | 0.24 ± 0.04 | 0.13 ± 0.02 | 0.80 ± 0.05 | 0.83 ± 0.05 | ~10 |

Table 2: Key Landscape Characteristics & Recommended Surrogate Model Class

| Landscape Characteristic | Metric (from Pilot Data) | Recommended Model Class | Rationale |

|---|---|---|---|

| High Ruggedness (Many local optima) | High Avg. Gradient Norm (> 1.5) | Random Forest / Gradient Boosting | Better at capturing discontinuous, complex interactions. |

| Smooth, Concave Basins | Low Avg. Gradient Norm (< 0.5) | Gaussian Process (Matern Kernel) | Excellent interpolation and uncertainty quantification in smooth spaces. |

| High-Dimensional (>100 features) | -- | Sparse Linear Models / DNNs | Built-in regularization prevents overfit in sparse data regimes. |

| Mixed Variable Types | -- | Tree-Based Models (RF, XGBoost) | Naturally handles categorical and numerical features without encoding. |

Experimental Protocols

Protocol 1: Pilot Experiment for Initial Landscape Characterization

Objective: To gather preliminary data for analyzing fitness landscape roughness and selecting an appropriate surrogate model.

- Library Design: Use a Maximum Diversity selection algorithm on your chemical space (e.g., ECFP4 fingerprint space) to choose 80-100 initial compounds.

- Experimental Assay: Conduct a standardized binding affinity assay (e.g., SPR to determine Kd) for each selected compound. Perform all assays in triplicate.

- Data Processing: Normalize activity scores (e.g., pIC50). Calculate the pairwise Euclidean distance in descriptor space and the absolute difference in activity for all points.

- Roughness Calculation: Compute the average gradient approximation: (ΔActivity / ΔDistance) for all point pairs within a specified distance radius. A higher average indicates a rougher landscape.
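The roughness calculation in the last step can be sketched as an average of |ΔActivity| / ΔDistance over all point pairs that lie within a chosen radius. The one-dimensional descriptors and activity values below are toy data chosen so the result can be verified by hand; real descriptors would be fingerprint or physicochemical vectors.

```python
import math
from itertools import combinations


def average_gradient(descriptors, activities, radius):
    """Mean |delta activity| / distance over pairs closer than `radius`."""
    ratios = []
    for i, j in combinations(range(len(descriptors)), 2):
        d = math.dist(descriptors[i], descriptors[j])
        if 0 < d <= radius:  # skip duplicates and distant pairs
            ratios.append(abs(activities[i] - activities[j]) / d)
    return sum(ratios) / len(ratios) if ratios else float("nan")


# Toy data: four points on a line, one unit apart.
points = [(0.0,), (1.0,), (2.0,), (3.0,)]
smooth_activity = [0.0, 2.0, 4.0, 6.0]  # steady slope of 2 per unit distance
rough_activity = [0.0, 3.0, 0.0, 3.0]   # activity flips between neighbors

g_smooth = average_gradient(points, smooth_activity, radius=1.5)
g_rough = average_gradient(points, rough_activity, radius=1.5)
print(g_smooth, g_rough)
```

The result depends on the radius and on how descriptors are scaled, so thresholds should be compared only across datasets processed the same way.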

Protocol 2: Iterative Bayesian Optimization Loop with Model Trust Calibration

Objective: To efficiently explore the fitness landscape and converge to global optima using a calibrated surrogate model.

- Initialization: Train the selected surrogate model on the pilot data (from Protocol 1).

- Acquisition & Selection: Use the Expected Improvement (EI) acquisition function. Multiply EI by a trust factor T = exp(-β * novelty), where novelty is the distance to the nearest training data point and β is a tunable parameter (start with β=1).

- Batch Selection: Propose the top 5-10 candidate points maximizing the trust-adjusted EI.

- Experimental Validation: Assay the proposed candidates (as in Protocol 1, Step 2).

- Model Update & Iteration: Append new data to the training set. Retrain the surrogate model every 3-5 iterations. Loop back to Step 2 for 15-20 iterations.
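The trust-adjusted acquisition in this protocol can be sketched as the closed-form Expected Improvement for a Gaussian prediction (maximization form) multiplied by T = exp(-β·novelty). The candidate values are hypothetical, and the novelty is assumed to have been computed beforehand as the distance to the nearest training point.

```python
import math


def expected_improvement(mu, sigma, best, xi=0.0):
    """EI for maximization under a Gaussian predictive distribution."""
    improvement = mu - best - xi
    if sigma <= 0:
        return max(0.0, improvement)
    z = improvement / sigma
    pdf = math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)
    cdf = 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))
    return improvement * cdf + sigma * pdf


def trust_adjusted_ei(mu, sigma, best, novelty, beta=1.0):
    """EI scaled by the trust factor T = exp(-beta * novelty)."""
    return expected_improvement(mu, sigma, best) * math.exp(-beta * novelty)


# Hypothetical candidate predicted exactly at the current best, with unit uncertainty.
best_so_far = 7.0
ei_plain = expected_improvement(mu=7.0, sigma=1.0, best=best_so_far)
ei_near = trust_adjusted_ei(7.0, 1.0, best_so_far, novelty=0.1)
ei_far = trust_adjusted_ei(7.0, 1.0, best_so_far, novelty=2.0)
print(round(ei_plain, 4), round(ei_near, 4), round(ei_far, 4))
```

Two candidates with identical predictions are ranked differently once novelty is taken into account: the one far from the training data is de-prioritized.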

Visualization

Diagram 1: Smooth Landscape Exploration Workflow

Diagram 2: Model Selection Logic Based on Landscape Metrics

The Scientist's Toolkit

Table 3: Key Research Reagent Solutions for Fitness Landscape Exploration

| Item / Reagent | Function in Research | Example Product / Specification |

|---|---|---|

| Diverse Compound Library | Provides the initial set of points for pilot experiment to characterize the fitness landscape. | ChemDiv MAXDiverse Library (~10,000 compounds) or Enamine REAL Space subset. |

| High-Throughput Screening Assay Kit | Enables rapid experimental fitness evaluation (e.g., binding affinity, inhibition) for candidate molecules. | Cisbio KinaSelect kinase assay kit or Thermo Fisher Z'-LYTE biochemical assay. |

| Molecular Descriptor Software | Generates numerical feature vectors (e.g., ECFP4 fingerprints, physicochemical descriptors) for compounds. | RDKit (Open Source) or MOE from Chemical Computing Group. |

| Bayesian Optimization Framework | Implements the surrogate model and acquisition function logic for iterative proposal of experiments. | BoTorch (PyTorch-based) or Scikit-Optimize (Scikit-learn compatible). |

| Cheminformatics Database | Stores and manages experimental data, descriptors, and model predictions for the project lifecycle. | PostgreSQL with RDKit cartridge or commercial platforms like CDD Vault. |

Accounting for Neutral Networks and Sparse Data with Robust ML Approaches

Technical Support Center

Troubleshooting Guides & FAQs

Q1: During training on sparse high-throughput screening data, my model's validation loss plateaus at a high value, while training loss continues to decrease. What is the likely cause and solution?

A: This is a classic sign of overfitting due to the "curse of dimensionality" in sparse feature spaces. The model memorizes noise in the limited training samples rather than learning generalizable patterns from the neutral network of related molecular structures.

- Protocol for Diagnosis & Mitigation:

- Diagnostic Step: Implement a feature importance analysis (e.g., using permutation importance from scikit-learn or SHAP values). Plot the top 20 features.

- Experimental Mitigation Protocol:

a. Apply Manifold Learning: Use UMAP to reduce dimensions to 50-100 before training (t-SNE is practical mainly for 2-3 output dimensions and is better reserved for visualization). Use a held-out validation set to choose the number of components.

b. Employ a Robust Model: Switch to a model with inherent regularization for sparse data, such as a Lasso-regularized linear model (LassoCV) or a Gradient Boosting Machine with max_depth limited to 3-5.

c. Validate: Use 5-fold nested cross-validation to tune hyperparameters on the inner loop and produce an unbiased performance estimate on the outer loop.

Q2: My analysis of fitness landscape "roughness" yields inconsistent results when I subsample the dataset. How can I stabilize these metrics?

A: Inconsistency arises from sampling bias in sparse data, failing to capture the continuous pathways within neutral networks. The calculated roughness is highly sensitive to missing intermediate points in the fitness landscape.

- Protocol for Stable Roughness Estimation:

- Data Augmentation: Generate synthetic data points within probable neutral networks using a variational autoencoder (VAE) trained on your sparse molecular data.

- Metric Calculation: Use the augmented dataset to calculate an ensemble of landscape metrics.

- Robust Aggregation: Repeat the subsampling-augmentation-calculation process 100 times (bootstrapping). Report the median and 95% confidence interval of the roughness metric (e.g., correlation length).
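The aggregation step can be sketched as a plain bootstrap that reports the median and a percentile-based 95% interval. The sketch leaves out the VAE augmentation and treats the landscape metric as a user-supplied function `metric_fn`; both `metric_fn` and the toy data are placeholders. Each element of `data` is assumed to be an independent record (for example a descriptor-activity pair).

```python
import random
import statistics


def bootstrap_metric(data, metric_fn, n_boot=100, seed=0):
    """Return (median, (lower, upper)) of metric_fn over bootstrap resamples."""
    rng = random.Random(seed)
    estimates = []
    for _ in range(n_boot):
        resample = [rng.choice(data) for _ in data]  # sample with replacement
        estimates.append(metric_fn(resample))
    estimates.sort()
    lower = estimates[int(0.025 * (n_boot - 1))]
    upper = estimates[int(0.975 * (n_boot - 1))]
    return statistics.median(estimates), (lower, upper)


def mean_metric(values):
    """Placeholder metric; a real run would compute, e.g., the correlation length."""
    return sum(values) / len(values)


toy_data = [float(v) for v in range(100)]
median, (ci_low, ci_high) = bootstrap_metric(toy_data, mean_metric)
print(f"median={median:.2f}, 95% interval=({ci_low:.2f}, {ci_high:.2f})")
```

A wide interval relative to the median signals that the dataset is still too sparse for the metric to guide model selection.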

Q3: How do I choose between a graph neural network (GNN) and a traditional fingerprint-based MLP for classifying activity in a sparse dataset with hypothesized neutral networks?

A: The choice hinges on whether the neutral network connectivity is better captured by structural similarity (fingerprints) or by explicit relational topology (graphs).

- Decision Protocol & Comparative Experiment:

- Hypothesis Formulation: Define "neutral step" as a single molecular modification that does not alter activity.

- Model Training: Train two models:

- Model A (MLP): Use ECFP4 fingerprints (2048 bits) as input.

- Model B (GNN): Use a Message Passing Neural Network (MPNN) with atom and bond features.

- Critical Test: For a new active compound, use each model to predict the activity of a set of "one-step" molecular neighbors (synthesized via in silico reaction rules).

- Analysis: The model that more accurately predicts which neighbors remain active (i.e., are part of the neutral network) is better suited for your landscape. See Table 1 for a typical quantitative outcome.

Table 1: Comparative Performance of Models on Sparse Bioactivity Data (IC50 ≤ 10µM)

| Model Type | Avg. ROC-AUC (5-fold CV) | Avg. Precision @ 0.1 | Robustness Score* | Training Time (min) |

|---|---|---|---|---|

| Random Forest | 0.72 ± 0.05 | 0.15 ± 0.03 | 65 | 12 |

| Lasso Regression | 0.68 ± 0.04 | 0.18 ± 0.02 | 82 | <1 |

| Gradient Boosting (XGBoost) | 0.76 ± 0.03 | 0.22 ± 0.04 | 78 | 8 |

| Graph Neural Network | 0.74 ± 0.06 | 0.20 ± 0.05 | 71 | 145 |

Protocol: Nested CV, PubChem BioAssay data (AID 485343), 5,000 compounds, ~1.5% actives. *Robustness Score (0-100): Stability of metric across 50 bootstrap subsamples at 50% density.

Table 2: Impact of Data Augmentation on Landscape Metric Stability

| Augmentation Method | Mean Fitness Correlation Length (λ) | Std. Dev. of λ (across subsamples) | Neutral Network Size Estimate |

|---|---|---|---|

| None (Raw Sparse Data) | 0.15 | 0.08 | 12 ± 8 |

| SMOTE | 0.18 | 0.06 | 25 ± 10 |

| VAE (Latent Space Interpolation) | 0.22 | 0.03 | 42 ± 6 |

Protocol: Metric calculated on a smoothed fitness landscape derived from molecular descriptor space and simulated activity. 1,000 initial points, sparsity 95%.

Experimental Protocols

Protocol 1: Mapping Neutral Networks with Robust Distance Metrics

Objective: To identify clusters of compounds (neutral networks) with similar activity despite structural variations.

- Representation: Encode all molecules using a learned representation from a ChemBERTa model fine-tuned on a related chemical corpus.

- Distance Matrix: Compute the pairwise cosine similarity matrix S between all molecular embeddings.

- Robust Filtering: Apply a locally smoothed similarity: S'_ij = mean( S_ik ) for all k where S_jk > percentile(S, 75).

- Clustering: Perform spectral clustering on the filtered matrix S' to identify neutral network communities.

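- Validation: Check that more than 80% of the compounds in each cluster share the same activity label, and use Fisher's exact test to confirm that cluster membership is associated with activity.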
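Steps 2 to 5 of Protocol 1 can be sketched as below. The embeddings and activity labels are random placeholders rather than ChemBERTa outputs, and two choices are assumptions not stated in the protocol: the smoothed matrix is symmetrized, and negative similarities are clipped to zero so that the matrix is a valid affinity for spectral clustering.

```python
import numpy as np
from scipy.stats import fisher_exact
from sklearn.cluster import SpectralClustering

rng = np.random.default_rng(0)

# Placeholder embeddings (assumption): two groups of 30 molecules each.
centres = rng.normal(size=(2, 16))
emb = np.vstack([
    centres[0] + 0.3 * rng.normal(size=(30, 16)),
    centres[1] + 0.3 * rng.normal(size=(30, 16)),
])
# Placeholder activity labels: group 1 mostly active, group 2 mostly inactive.
activity = np.concatenate([rng.random(30) < 0.9, rng.random(30) < 0.1])

# Pairwise cosine similarity matrix S.
unit = emb / np.linalg.norm(emb, axis=1, keepdims=True)
S = unit @ unit.T

# Robust filtering: S'_ij = mean(S_ik) over k with S_jk > percentile(S, 75).
mask = (S > np.percentile(S, 75)).astype(float)   # mask[j, k]
counts = np.maximum(mask.sum(axis=1), 1.0)        # neighbours per j
S_smooth = (S @ mask.T) / counts[np.newaxis, :]

# Assumption: symmetrize and clip to obtain a non-negative affinity matrix.
A = np.clip((S_smooth + S_smooth.T) / 2.0, 0.0, None)

labels = SpectralClustering(
    n_clusters=2, affinity="precomputed", random_state=0
).fit_predict(A)

# Validation: per-cluster label consistency and Fisher's exact test
# on the 2x2 table (cluster x active/inactive).
table = [
    [int(np.sum((labels == c) & activity)),
     int(np.sum((labels == c) & ~activity))]
    for c in (0, 1)
]
_, p_value = fisher_exact(table)
for c, (n_act, n_inact) in enumerate(table):
    consistency = max(n_act, n_inact) / (n_act + n_inact)
    print(f"cluster {c}: consistency={consistency:.2f}")
print(f"Fisher exact p-value: {p_value:.2e}")
```

With more than two clusters, the same check can be run one cluster at a time (cluster versus all others), which keeps each test a 2x2 table.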

Protocol 2: Benchmarking Model Robustness to Sparse Data

Objective: To quantitatively compare model resilience to increasing data sparsity.

- Data Preparation: Start with a curated, dense dataset (D). Create sparsity levels: {90%, 95%, 98%, 99%} by randomly removing active-inactive pairs.

- Model Training: Train each candidate model (see Table 1) at each sparsity level using 5 different random seeds.

- Performance Tracking: For each model and seed, record ROC-AUC and Precision-Recall AUC on a held-out validation set.

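- Robustness Calculation: Fit a linear regression: Metric = α + β * (Sparsity). The robustness score is -β * 100. Because β is negative when performance falls as sparsity rises, a steeper decline yields a larger score; scores closer to zero indicate less performance degradation with increasing sparsity.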
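The regression step can be sketched with NumPy. The helper name `robustness_score` and the metric values are hypothetical, chosen only to show the arithmetic; the sign convention follows the formula in the text.

```python
import numpy as np

def robustness_score(sparsity, metric):
    """Fit metric = alpha + beta * sparsity and return (alpha, beta, -beta * 100)."""
    # np.polyfit returns coefficients from highest degree down: slope first.
    beta, alpha = np.polyfit(sparsity, metric, deg=1)
    return alpha, beta, -beta * 100.0

# Sparsity levels from the protocol, expressed as fractions.
sparsity = np.array([0.90, 0.95, 0.98, 0.99])

# Illustrative metric values only (not measured results): a model whose
# ROC-AUC falls linearly as sparsity rises.
mean_auc = 0.90 - 0.5 * sparsity

alpha, beta, score = robustness_score(sparsity, mean_auc)
# beta is negative here because the metric drops as sparsity increases.
print(f"alpha={alpha:.3f}, beta={beta:.3f}, score={score:.1f}")
```

In practice `mean_auc` would be the average over the five random seeds at each sparsity level, and the fit would be repeated once per model and per metric.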

Visualizations

Title: Robust ML Workflow for Sparse Data & Neutral Networks

Title: Core Strategies for Robust ML with Sparse Data

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Context |

|---|---|

| UMAP | Dimensionality reduction technique that often preserves global structure better than t-SNE, which is useful for visualizing neutral networks in molecular latent spaces. |

| SHAP (SHapley Additive exPlanations) | Game theory-based method to explain model predictions and identify molecular features driving activity, essential for interpreting models on sparse data. |

| Chemical Checker | Resource providing unified molecular bioactivity signatures; used as a source for complementary data to mitigate sparsity via transfer learning. |

| RDKit | Open-source cheminformatics toolkit used for generating molecular fingerprints, performing in silico reactions (to explore neutral networks), and descriptor calculation. |

| DeepChem Library | Provides robust implementations of Graph Neural Networks (GNNs) and data loaders specifically designed for sparse chemical and biological datasets. |

| PubChem BioAssay | Primary source for public domain high-throughput screening data, often used as a benchmark sparse dataset for method development. |

| scikit-learn | Core library for implementing robust, regularized linear models (Lasso, ElasticNet) and reliable cross-validation workflows. |

| XGBoost/LightGBM | Gradient boosting frameworks offering built-in regularization and efficient handling of missing data, providing strong baselines for sparse data prediction. |

Technical Support Center: Troubleshooting ML-Guided Enzyme Engineering

FAQ 1: Why does my ML model show high validation accuracy but fail to predict improved enzyme variants in wet-lab experiments?

A: This is a classic sign of overfitting to the training dataset's noise or failure to generalize to the true fitness landscape. Key causes include:

- Data Mismatch: Training data from one expression host (e.g., E. coli) may not translate to another (e.g., P. pastoris).

- Feature Representation Issue: The chosen featurization (e.g., one-hot encoding, ESM embeddings) may not capture the physicochemical determinants of fitness for your specific enzyme property (e.g., thermostability vs. substrate scope).

- Landscape Ruggedness: The model may interpolate well but fail to navigate the complex, multi-peak fitness landscape during directed evolution campaigns.

Troubleshooting Guide:

- Implement Leave-One-Cluster-Out (LOCO) Cross-Validation: Instead of random splits, cluster variants by sequence similarity and hold out entire clusters. This tests extrapolation capability.

- Conduct Ablation Studies: Systematically remove feature sets to identify which are contributing to overfitting.

- Validate with Sparse Wet-Lab Data: Prioritize testing model predictions that are high-confidence but low-neighborhood-density in training data to probe generalization.
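Leave-One-Cluster-Out validation can be sketched with scikit-learn's `LeaveOneGroupOut`. In this hedged example the features are random placeholders and the clusters come from k-means on the feature vectors; clustering real variants by sequence similarity (as the guide recommends) would replace that step.

```python
import numpy as np
from sklearn.cluster import KMeans
from sklearn.ensemble import RandomForestRegressor
from sklearn.model_selection import KFold, LeaveOneGroupOut, cross_val_score

rng = np.random.default_rng(0)

# Placeholder variant features and fitness values (assumption).
X = rng.normal(size=(200, 20))
y = 2.0 * X[:, 0] + np.sin(X[:, 1]) + rng.normal(scale=0.3, size=200)

# Stand-in for sequence-similarity clustering: k-means on the features.
clusters = KMeans(n_clusters=5, n_init=10, random_state=0).fit_predict(X)

model = RandomForestRegressor(n_estimators=100, random_state=0)

# LOCO: each fold holds out one entire cluster, probing extrapolation.
loco_scores = cross_val_score(
    model, X, y, groups=clusters, cv=LeaveOneGroupOut(), scoring="r2"
)

# Random split for comparison: probes interpolation only.
random_scores = cross_val_score(
    model, X, y, cv=KFold(n_splits=5, shuffle=True, random_state=0),
    scoring="r2",
)

print("LOCO   R^2 per held-out cluster:", np.round(loco_scores, 3))
print("Random R^2 per fold:            ", np.round(random_scores, 3))
```

A large gap between the random-split and LOCO scores suggests the model interpolates within known sequence neighborhoods but does not extrapolate to new ones.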

FAQ 2: How do I choose between a Gaussian Process (GP) model and a Random Forest (RF) for my initial dataset of 200 characterized variants?

A: The choice hinges on the suspected nature of your fitness landscape and data characteristics.

Data Presentation: Model Selection Guide for Medium-Sized Datasets (~200-500 samples)

| Model Type | Best For Landscape Characteristic | Key Advantage for Enzyme Engineering | Key Limitation | Recommended When... |

|---|---|---|---|---|

| Gaussian Process (GP) | Smooth, correlated, continuous. | Provides uncertainty estimates (prediction variance). Enables Bayesian optimization. | Scalability suffers beyond ~10k points. Kernel choice is critical. | You have a continuous fitness metric (e.g., activity, Tm) and plan active learning loops. |

| Random Forest (RF) | Rugged, discrete, or with complex interactions. | Handles diverse feature types well. Robust to outliers. Lower computational cost. | Lacks native uncertainty quantification for regression. | Your features are heterogeneous (e.g., structural, phylogenetic) or fitness scores are binary/ordinal (e.g., successful/unsuccessful catalysis). |

| Gradient Boosting Machines (GBM) | Landscapes with sharp, non-linear thresholds. | Often higher predictive accuracy than RF. Handles missing data. | More prone to overfitting; requires careful tuning. | You have prior evidence of strong, non-linear epistatic interactions. |

Experimental Protocol: Initial Model Benchmarking

- Data Preparation: Encode your 200 variant sequences using three distinct methods: (a) One-hot encoding of mutations, (b) Evolutionary Scale Modeling (ESM-2) embeddings, (c) Physicochemical property vectors (e.g., from AAindex).

- Split Data: 70% training, 15% validation, 15% held-out test. Use LOCO splits if possible.

- Train Models: Train a GP (with Matern kernel) and an RF using the same training/validation sets for each featurization.

- Evaluate: Compare models on the test set using Mean Absolute Error (MAE) and Spearman's rank correlation (ρ). The model with the higher Spearman's ρ is better at ranking variants, which is crucial for library design.
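The benchmarking protocol can be sketched for a single featurization as follows. The feature matrix and fitness values are synthetic placeholders, a random 70/15/15 split is used instead of LOCO for brevity, and adding a `WhiteKernel` noise term to the Matern kernel is an assumption rather than part of the protocol.

```python
import numpy as np
from scipy.stats import spearmanr
from sklearn.ensemble import RandomForestRegressor
from sklearn.gaussian_process import GaussianProcessRegressor
from sklearn.gaussian_process.kernels import Matern, WhiteKernel
from sklearn.metrics import mean_absolute_error
from sklearn.model_selection import train_test_split

rng = np.random.default_rng(0)

# Placeholder for 200 encoded variants and their fitness (assumption).
X = rng.normal(size=(200, 10))
y = np.sin(X[:, 0]) + 0.5 * X[:, 1] + rng.normal(scale=0.1, size=200)

# 70% train, 15% validation, 15% held-out test.
X_train, X_tmp, y_train, y_tmp = train_test_split(
    X, y, test_size=0.30, random_state=0
)
X_val, X_test, y_val, y_test = train_test_split(
    X_tmp, y_tmp, test_size=0.50, random_state=0
)
# The validation split is reserved for hyperparameter tuning (not shown).

models = {
    "GP (Matern)": GaussianProcessRegressor(
        kernel=Matern(nu=2.5) + WhiteKernel(),
        normalize_y=True,
        random_state=0,
    ),
    "RF": RandomForestRegressor(n_estimators=200, random_state=0),
}

results = {}
for name, model in models.items():
    model.fit(X_train, y_train)
    pred = model.predict(X_test)
    rho, _ = spearmanr(y_test, pred)
    results[name] = {"MAE": mean_absolute_error(y_test, pred), "Spearman": rho}

for name, scores in results.items():
    print(f"{name}: MAE={scores['MAE']:.3f}, Spearman={scores['Spearman']:.3f}")
```

Repeating the loop once per featurization (one-hot, ESM-2 embeddings, physicochemical vectors) gives the full comparison grid described in the protocol.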

Diagram: Model Selection Decision Workflow

The Scientist's Toolkit: Key Research Reagent Solutions

| Item | Function in ML-Guided Enzyme Engineering |

|---|---|

| NEBridge Assembly Master Mix | Enables rapid, seamless cloning of designed variant libraries from oligonucleotide pools. |

| Twist Bioscience Oligo Pools | Provides high-fidelity, multiplexed gene synthesis for generating large, sequence-verified variant libraries. |

| Cytiva HiTrap Immobilized Metal Affinity Chromatography (IMAC) Columns | Fast purification of His-tagged enzyme variants for high-throughput activity screening. |

| Promega Nano-Glo Luciferase Assay System (Adapted) | Ultra-sensitive, homogeneous assay adaptable for coupling to enzyme activity, enabling high-throughput kinetic measurements. |

| Microfluidics Droplet Generators (e.g., Bio-Rad QX200) | Allows ultra-high-throughput screening via compartmentalization of single variants with substrates/reporters. |

| Crystallization Screens (e.g., Hampton Research) | For structural validation of top-predicted variants to confirm mechanistic hypotheses from ML models. |

FAQ 3: What experimental protocol should I use to generate training data optimal for ML models?

A: Avoid random mutagenesis libraries for initial data generation. Use a designed library strategy.

Experimental Protocol: Generating Informative Training Data with Saturation Mutagenesis

- Target Selection: Choose 8-10 residues hypothesized to be functionally important (e.g., active site, lid regions, hinge points).

- Library Design: For each position, synthesize all 20 amino acid variants individually (Single-Site Saturation Mutagenesis).

- Multiplex Assembly: Use a Golden Gate or Gibson Assembly strategy to combine a subset of these single mutations into defined double and triple mutant combinations.

- High-Throughput Screening: Assay all variants in a quantitative, continuous assay (e.g., fluorescence, HPLC yield) to obtain robust fitness values. Normalize signals to expression level (e.g., via His-tag ELISA).

- Data Curation: Assemble a clean dataset with features (variant sequence) and labels (normalized fitness value). Include negative controls and replicates to estimate experimental noise.
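The library design steps can be sketched in plain Python. The wild-type sequence, the target positions, and the rule for choosing which single mutations to combine are all hypothetical. Note that the enumeration yields 19 substitutions per position, since the wild-type residue is the 20th amino acid.

```python
from itertools import combinations

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"

def single_site_library(wild_type, positions):
    """All non-wild-type substitutions at the given 1-based positions."""
    singles = []
    for pos in positions:
        wt = wild_type[pos - 1]
        for aa in AMINO_ACIDS:
            if aa != wt:
                singles.append((pos, wt, aa))
    return singles

def combine(singles, order):
    """Combine single mutations at distinct positions into order-N variants."""
    variants = []
    for combo in combinations(singles, order):
        if len({pos for pos, _, _ in combo}) == order:
            variants.append(combo)
    return variants

def apply_mutations(wild_type, mutations):
    """Return the variant sequence after applying (pos, wt, new) mutations."""
    seq = list(wild_type)
    for pos, wt, aa in mutations:
        if seq[pos - 1] != wt:
            raise ValueError(f"expected {wt} at position {pos}")
        seq[pos - 1] = aa
    return "".join(seq)

# Hypothetical wild-type fragment and target positions.
wild_type = "MKTAYIAKQR"
positions = [2, 5, 8]

singles = single_site_library(wild_type, positions)

# Hypothetical subset rule: only combine substitutions to A, V, or L.
subset = [m for m in singles if m[2] in "AVL"]
doubles = combine(subset, 2)
triples = combine(subset, 3)

print(len(singles), "single,", len(doubles), "double,", len(triples), "triple")
print(apply_mutations(wild_type, doubles[0]))
```

Keeping each variant as a tuple of `(position, wild-type, new)` mutations makes it straightforward to derive one-hot features later and to trace every label back to a defined genotype.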

Diagram: Data Generation to Model Deployment Workflow

Overcoming Rough Terrain: Troubleshooting Model Failure and Performance Optimization

Technical Support Center: Troubleshooting Guides & FAQs

FAQ 1: How do I diagnose if my model is overfitting on a complex fitness landscape?

Answer: Monitor the divergence between training and validation performance metrics. A key indicator is a low training error but a high and increasing validation error as training progresses. For quantitative assessment, use the following table summarizing key metrics:

| Metric | Expected Trend for Overfitting | Diagnostic Threshold (Typical) |

|---|---|---|

| Training Loss | Decreases monotonically | N/A |

| Validation Loss | Decreases then increases | Minimum point + 10% |

| Training AUC / R² | High (>0.95) | Context-dependent |

| Validation AUC / R² | Significantly lower than training | Delta > 0.15 |

| Norm of Weight Parameters | Tends to increase sharply | Rapid rise post early-stopping point |

Experimental Protocol for Diagnosis:

- Data Splitting: Use a structured split (e.g., 70/15/15 for Train/Validation/Test) ensuring representative distribution of landscape complexity regions.

- Training with Validation: Implement a training loop that evaluates the model on the validation set at the end of each epoch.

- Early Stopping Patience: Set a patience parameter (e.g., 10-20 epochs). Record the epoch where validation loss is minimized.

- Post-Stop Analysis: Continue training for an additional 20 epochs while logging all metrics. Plot training vs. validation curves. A sustained divergence between the two curves indicates overfitting to complex, high-frequency features.
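The early-stopping bookkeeping in this protocol can be sketched without any training framework. The validation-loss curve below is synthetic, and the function names are hypothetical; the 10% rise threshold mirrors the "Minimum point + 10%" entry in the table above.

```python
def early_stopping_epoch(val_losses, patience=10):
    """Return (best_epoch, stop_epoch) for a list of per-epoch validation losses."""
    best_loss = float("inf")
    best_epoch = -1
    for epoch, loss in enumerate(val_losses):
        if loss < best_loss:
            best_loss, best_epoch = loss, epoch
        elif epoch - best_epoch >= patience:
            # No improvement for `patience` epochs: stop here.
            return best_epoch, epoch
    return best_epoch, len(val_losses) - 1

def first_epoch_above_minimum(val_losses, best_epoch, rise=0.10):
    """First epoch after the minimum where loss exceeds the minimum by `rise`."""
    threshold = val_losses[best_epoch] * (1.0 + rise)
    for epoch in range(best_epoch + 1, len(val_losses)):
        if val_losses[epoch] > threshold:
            return epoch
    return None

# Synthetic validation curve (assumption): decreases until epoch 20, then rises.
val_losses = [(epoch - 20) ** 2 / 400 + 0.5 for epoch in range(60)]

best_epoch, stop_epoch = early_stopping_epoch(val_losses, patience=10)
flag_epoch = first_epoch_above_minimum(val_losses, best_epoch, rise=0.10)

print(f"best epoch: {best_epoch}, early stop at: {stop_epoch}")
print(f"validation loss exceeds minimum + 10% at epoch: {flag_epoch}")
```

In a real run, `val_losses` would be appended to once per epoch inside the training loop, and the model checkpoint from `best_epoch` would be the one carried forward to the test set.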