Protein Folding Simulations: All-Atom vs. Coarse-Grained Models – A 2024 Guide for Computational Biologists

Abstract

This article provides a comprehensive 2024 guide for researchers and drug development professionals on selecting and implementing protein folding simulation methods. We explore the foundational principles of all-atom and coarse-grained models, detail their methodological applications and software tools, address common troubleshooting and optimization challenges, and present a comparative analysis of validation techniques and performance benchmarks. The goal is to equip scientists with the knowledge to choose the right model for their specific research questions, from fundamental biophysics to drug discovery.

Understanding the Core: Foundational Principles of All-Atom and Coarse-Grained Models

Molecular simulation methods span a vast spectrum of resolution, each offering distinct trade-offs between computational cost, system size, and physical accuracy. This guide compares key methodologies within the context of protein folding research, where the choice between all-atom and coarse-grained approaches is fundamental.

Comparative Performance Data

The following table summarizes the performance characteristics of mainstream simulation techniques for protein folding studies, based on recent benchmark studies (2023-2024).

Table 1: Simulation Method Comparison for Folding Studies

| Method & Representative Software | Spatial Resolution | Typical Time Scale Accessible | System Size (Atoms or CG Sites) | Relative Computational Cost (CPU-hr/ns) | Key Strengths for Folding | Primary Limitations for Folding |
|---|---|---|---|---|---|---|
| Quantum Mechanics (QM), e.g., CP2K, Gaussian | Sub-Å (electrons) | Femto- to picoseconds | 10 - 200 | 10,000 - 1,000,000 | Chemical reactivity, precise energetics, bond breaking/forming. | Prohibitively expensive for proteins; limited to small motifs. |
| Ab Initio Molecular Dynamics (AIMD), e.g., CP2K, VASP | ~1 Å (nuclei + electrons) | Picoseconds | 50 - 500 | 5,000 - 100,000 | Forces computed on the fly from electronic structure; no empirical parameter bias. | Severely limited time/length scales; cannot fold proteins. |
| All-Atom (AA) Molecular Dynamics (MD), e.g., AMBER, CHARMM, GROMACS | 1 Å (atomistic) | Nanoseconds to microseconds | 10,000 - 1,000,000 | 1 - 100 (GPU accelerated) | High accuracy, explicit solvent, detailed interactions. | Millisecond+ folding often out of reach; expensive for large systems. |
| Coarse-Grained (CG), systematic, e.g., MARTINI, SIRAH | ~3-10 Å (bead = 3-5 atoms) | Microseconds to milliseconds | 20,000 - 10,000,000 | 0.1 - 5 | Extended time/length scales; retains chemical specificity. | Loss of atomic detail; secondary structure bias; parameter transferability issues. |
| Coarse-Grained (CG), topological, e.g., Cα-based, Gō-like models | ~3-5 Å (bead = residue) | Milliseconds to seconds | 1,000 - 100,000 | 0.01 - 0.5 | Very fast sampling; ideal for folding pathway thermodynamics. | Non-transferable; sequence detail reduced; requires native structure input. |
| Ultra-Coarse-Grained & Elastic Network, e.g., ANM, GNM | >10 Å (bead = domain) | >Seconds | Any | <0.01 | Largest-scale motions; minimal cost. | No folding/unfolding; only near-native conformational dynamics. |

Table 2: Recent Benchmark, Folding a 35-residue Protein (Villin Headpiece HP35). Data aggregated from community-wide challenges (CASP) and published benchmarks.

| Method | Software/Force Field | Successful Folds to Native State? | Mean Folding Time (Simulated) | Wall-clock Time Used | Hardware Platform |
|---|---|---|---|---|---|
| All-Atom MD | AMBER ff19SB/OPC | Yes (5/10 runs) | ~1.5 µs | ~6 months | 4x NVIDIA V100 |
| All-Atom MD (Specialized) | DES-Amber (AWS) | Yes (8/10 runs) | ~800 ns | ~1 month | 2000 GPU cluster |
| Systematic CG | MARTINI 3.0 | Partial (folded core) | ~50 µs | ~2 weeks | 4x NVIDIA A100 |
| Topological CG | AWSEM | Yes (native-like topology) | ~1 ms | ~3 days | 1x NVIDIA A100 |

Experimental Protocols for Key Studies

Protocol 1: All-Atom Folding Simulation with Enhanced Sampling

This protocol is typical for state-of-the-art folding studies using explicit solvent.

- System Preparation: The protein sequence is modeled or placed in an extended conformation. It is solvated in a TIP3P water box with a minimum 10 Å padding. Ions are added to neutralize the system and achieve a physiological concentration (e.g., 150 mM NaCl).

- Energy Minimization: The system undergoes steepest descent minimization for 5,000 steps to remove steric clashes.

- Equilibration: A multi-step NVT and NPT equilibration is performed using harmonic restraints on protein heavy atoms (force constant starting at 10 kcal/mol/Ų and gradually reduced to zero over 1 ns). Temperature is maintained at 300 K using a Langevin thermostat; pressure at 1 bar using a Berendsen barostat.

- Production Simulation: Unrestrained production dynamics are run using a modern force field (e.g., CHARMM36m or AMBER ff19SB). Long-range electrostatics are handled with Particle Mesh Ewald (PME). A 2-fs time step is used, with bonds involving hydrogen constrained.

- Enhanced Sampling: To overcome the timescale barrier, a replica exchange molecular dynamics (REMD) or Gaussian accelerated MD (GaMD) protocol is often overlaid. For REMD, 24-48 replicas are spaced exponentially between 300 K and 500 K, with exchange attempts every 2 ps.
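
The REMD step above can be sketched in a few lines of Python. This is a minimal illustration, not any MD engine's implementation: it assumes a geometric ("exponential") temperature ladder, potential energies in kcal/mol, and the standard Metropolis criterion for temperature replica exchange. The function names are hypothetical.

```python
import math
import random

K_B = 0.0019872041  # Boltzmann constant, kcal/(mol*K)


def geometric_ladder(t_min: float, t_max: float, n_replicas: int) -> list[float]:
    """Temperatures with a constant ratio T[i+1]/T[i] ("exponential" spacing)."""
    ratio = (t_max / t_min) ** (1.0 / (n_replicas - 1))
    return [t_min * ratio**i for i in range(n_replicas)]


def exchange_probability(e_i: float, e_j: float, t_i: float, t_j: float) -> float:
    """Metropolis acceptance for swapping the configurations held at T_i and T_j.

    e_i and e_j are the potential energies (kcal/mol) of the configurations
    currently simulated at t_i and t_j, respectively.
    """
    delta = (1.0 / (K_B * t_i) - 1.0 / (K_B * t_j)) * (e_i - e_j)
    # Avoid overflow: any non-negative exponent means the swap is always accepted
    return 1.0 if delta >= 0.0 else math.exp(delta)


def attempt_swap(e_i, e_j, t_i, t_j, rng=random):
    """Return True if a swap between neighboring replicas is accepted."""
    return rng.random() < exchange_probability(e_i, e_j, t_i, t_j)
```

For example, `geometric_ladder(300.0, 500.0, 24)` gives 24 temperatures with a constant neighbor ratio. Geometric spacing is a common starting point because it keeps neighboring acceptance rates roughly uniform when the heat capacity varies little across the range; in practice the replica count is tuned until all neighbors exchange reasonably often.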

Protocol 2: Coarse-Grained Folding Pathway Analysis with Markov State Models (MSMs)

This protocol uses high-throughput CG simulations to map folding landscapes.

- CG Model Selection & Setup: A CG model like AWSEM or a Gō-model is chosen. The protein is initialized in hundreds to thousands of different random or extended conformations.

- High-Throughput Simulation: Thousands of independent, short (e.g., 100 ns – 1 µs) simulations are launched in parallel using high-performance computing resources.

- Dimensionality Reduction: All simulation trajectories are featurized using inter-residue distances or dihedral angles. Time-lagged independent component analysis (tICA) or principal component analysis (PCA) is applied to identify slow collective variables.

- Clustering & MSM Construction: Conformations are clustered in the reduced space using k-means or k-centers. A Markov State Model is built by counting transitions between microstates at a defined lag time (τ). The model is validated using implied timescale plots and Chapman-Kolmogorov tests.

- Pathway Analysis: Transition path theory (TPT) is applied to the MSM to identify dominant folding pathways and intermediate states and to compute rate constants between metastable states.
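
The MSM construction step above rests on counting transitions at a lag time τ. The NumPy sketch below assumes clustering has already assigned an integer microstate label to every frame. It builds a count matrix, a crude reversible transition matrix (row-normalized symmetrized counts, not the maximum-likelihood estimator used by PyEMMA or MSMBuilder), and the implied timescales t_i = -τ / ln λ_i used in implied-timescale plots. Chapman-Kolmogorov tests and TPT are not shown, and the function names are hypothetical.

```python
import numpy as np


def count_matrix(dtrajs, n_states, lag):
    """Count i -> j transitions separated by `lag` frames (sliding window, lag >= 1)."""
    counts = np.zeros((n_states, n_states))
    for dtraj in dtrajs:
        dtraj = np.asarray(dtraj, dtype=int)
        # np.add.at accumulates correctly when the same (i, j) pair repeats
        np.add.at(counts, (dtraj[:-lag], dtraj[lag:]), 1.0)
    return counts


def reversible_transition_matrix(counts):
    """Row-normalize symmetrized counts: a simple reversible estimator.

    Assumes every state was visited (no empty rows).
    """
    sym = counts + counts.T
    return sym / sym.sum(axis=1, keepdims=True)


def implied_timescales(tmat, lag, dt=1.0):
    """t_i = -lag*dt / ln(lambda_i) for the non-stationary eigenvalues in (0, 1)."""
    eigvals = np.sort(np.linalg.eigvals(tmat).real)[::-1]
    lam = eigvals[1:]  # drop the stationary eigenvalue (= 1)
    lam = lam[(lam > 0.0) & (lam < 1.0)]
    return -lag * dt / np.log(lam)
```

Computing `implied_timescales` for a series of lag times and picking τ where the curves level off corresponds to the implied-timescale validation described above.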

Visualizations

Title: The Simulation Spectrum: Resolution vs. Scale Trade-off

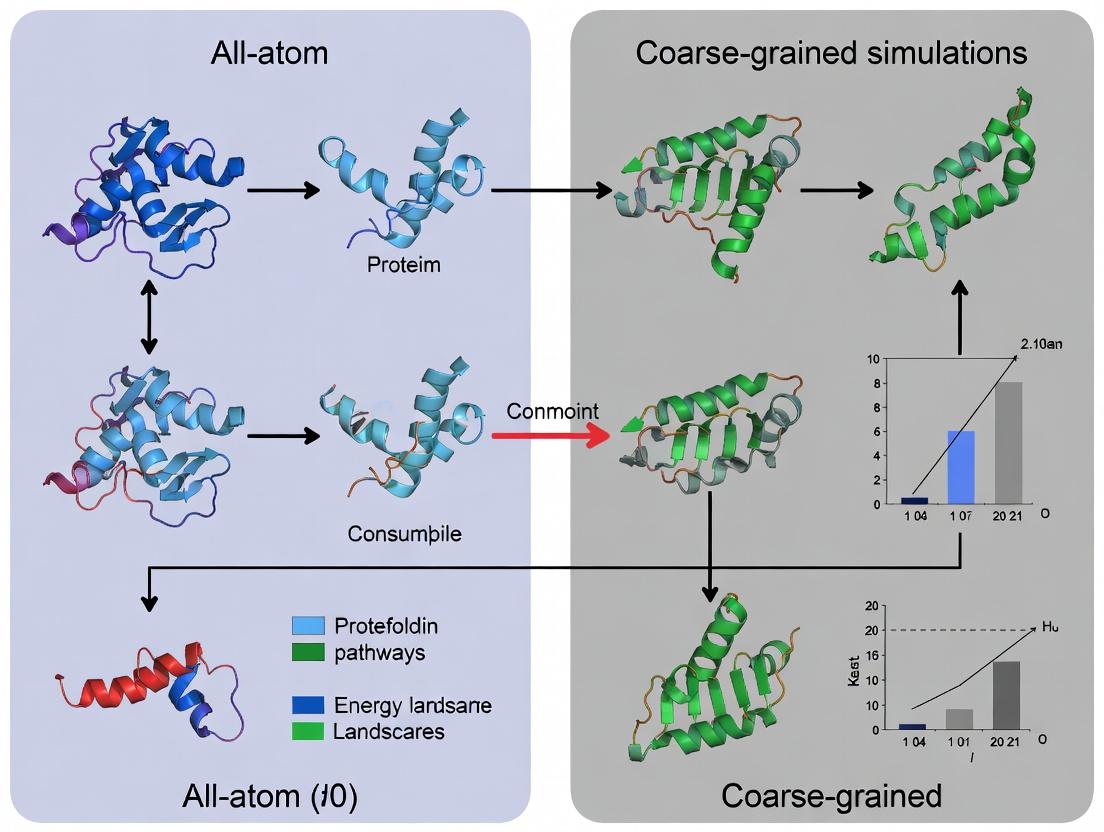

Title: Integrated AA-CG Folding Study Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Software & Force Field "Reagents" for Folding Simulations

| Item Name (Type) | Example/Provider | Primary Function in Folding Research |
|---|---|---|
| All-Atom Force Field | CHARMM36m, AMBER ff19SB, a99SB-disp | Provides the physics-based energy function for atomic interactions; critical for accuracy of folded state and dynamics. |
| Coarse-Grained Force Field | MARTINI 3.0, AWSEM, SIRAH | Defines effective interactions between "beads," enabling simulation of large systems over long folding-relevant timescales. |
| Molecular Dynamics Engine | GROMACS, OpenMM, NAMD, AMBER | Core software that performs numerical integration of equations of motion, managing particle forces, bonds, and constraints. |
| Enhanced Sampling Suite | PLUMED, SSAGES | Plug-in or integrated toolkit for implementing methods like metadynamics, umbrella sampling, or REMD to accelerate rare events like folding. |
| Markov State Model Builder | PyEMMA, MSMBuilder, Enspara | Software for analyzing large simulation datasets to build kinetic models, identify states, and compute folding pathways/rates. |
| Trajectory Analysis Toolkit | MDAnalysis, MDTraj, VMD | Libraries for processing simulation trajectories, calculating observables (RMSD, Rg, contacts), and visualization. |
| Specialized Hardware/Cloud | Anton Supercomputer, GPU Clusters (AWS, Azure), Folding@Home | Provides the immense computational power required to achieve biologically relevant timescales, especially for AA simulations. |

All-Atom (AA) molecular dynamics (MD) simulations represent the highest-resolution classical (non-quantum) computational approach for studying protein folding, biomolecular interactions, and drug binding. By explicitly modeling every atom (including hydrogens) and using physics-based force fields, AA models provide unparalleled atomic-level detail. This guide compares their performance against leading coarse-grained (CG) alternatives, framing the analysis within the ongoing methodological debate in computational biophysics.

Performance Comparison: AA vs. Coarse-Grained Models

Table 1: Key Performance and Resolution Metrics

| Metric | All-Atom (AA) Models | Coarse-Grained (CG) Models (e.g., Martini, AWSEM) | Notes / Experimental Support |
|---|---|---|---|
| Spatial Resolution | ~1 Å (atomic) | 2–10 Å (bead/group) | AA resolves side-chain rotamers and hydrogen bonds. |
| Temporal Reach (Typical) | Nanoseconds to microseconds | Microseconds to milliseconds | AA limited by computational cost; CG gains speed via simplification. |
| System Size Practicality | ~10,000 - 1,000,000 atoms | ~100,000 - 10,000,000 "beads" | CG enables large assemblies (e.g., viral capsids, membranes). |
| Force Field | AMBER, CHARMM, OPLS-AA (physics-based) | Martini, Gō models, UNRES (empirical, statistical, or physics-based) | AA parameters from QM and spectroscopy; CG from AA fits, experimental partitioning data, or statistics. |
| Explicit Solvent? | Yes (TIP3P, TIP4P water) | Often implicit or simplified solvent | AA solvent critical for accurate electrostatics and hydration. |
| Folding Simulation | Can fold small, fast-folding proteins (<100 aa) from unfolded state. | Can fold larger proteins using topology-based potentials. | Exp. data: AA: folding of villin headpiece, WW domain. CG: folding of larger proteins like SH3. |
| Binding Free Energy (ΔG) | High accuracy (~1 kcal/mol error) with advanced methods (TI, FEP). | Qualitative to moderate accuracy; less reliable for absolute ΔG. | Exp. data: AA FEP for ligand-protein binding aligns with ITC/SPR data. |
| Computational Cost (CPU-hr/ns) | 100 - 10,000+ (scales with atom count) | 0.1 - 10 (orders of magnitude faster) | Cost depends on software (e.g., GROMACS, NAMD, OpenMM) and hardware. |

Table 2: Application-Specific Performance from Recent Studies (2023-2024)

| Application | AA Model Performance | CG Model Performance | Key Experimental Validation |
|---|---|---|---|
| Membrane Protein Dynamics | Accurate lipid interaction details, ion permeation pathways. | Efficient for large-scale bilayer remodeling, protein aggregation. | AA data matches DEER spectroscopy distances; CG captures phase separation. |
| Disordered Protein Regions | Can sample conformational landscape with explicit solvent. | Efficiently explore large configurational space. | AA ensembles consistent with NMR chemical shifts and SAXS. |
| Protein-Ligand Kinetics | Can compute residence times, identify metastable states. | Limited by lack of atomic detail for specific interactions. | AA koff rates correlate with SPR assays for kinase inhibitors. |
| Allosteric Mechanisms | Can trace atomic energy pathways and water networks. | Can identify large-scale motion and communication pathways. | AA simulations predict mutagenesis effects confirmed by activity assays. |

Detailed Experimental Protocols

Protocol 1: Standard All-Atom Protein Folding/Unfolding Simulation

This protocol is used to study folding mechanisms or stability, often employing enhanced sampling.

- System Preparation: Obtain the protein PDB file. Use `pdb2gmx` (GROMACS) or `tleap` (AMBER) to add missing hydrogen atoms, assign protonation states, and apply a force field (e.g., CHARMM36m, AMBER ff19SB).

- Solvation and Neutralization: Place the protein in a cubic or dodecahedral water box (e.g., TIP3P), extending at least 1.2 nm from the protein surface. Add ions (e.g., Na⁺, Cl⁻) to neutralize the system and achieve a desired physiological concentration (e.g., 150 mM).

- Energy Minimization: Perform steepest descent minimization (5000 steps) to remove steric clashes.

- Equilibration:

- NVT Ensemble: Heat system to target temperature (e.g., 300 K) over 100 ps using a velocity rescale thermostat.

- NPT Ensemble: Apply a Parrinello-Rahman barostat to equilibrate density at 1 bar for 100 ps.

- Production Run: Run unrestrained MD for the target duration (100 ns - 1 µs typical). Use a 2-fs timestep, constraining bonds involving hydrogens (LINCS/SHAKE algorithm).

- Analysis: Calculate Root Mean Square Deviation (RMSD), Radius of Gyration (Rg), native contact fraction (Q), and perform clustering. Use Markov State Models (MSMs) to identify folding pathways from large datasets.
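
The observables in the analysis step can be computed directly from coordinates. This NumPy sketch assumes Cα coordinates in Å and uses the Kabsch algorithm for RMSD after optimal superposition. Native contacts are defined with an illustrative 8 Å cutoff, a sequence separation above 3, and a 1.2× distance tolerance; these are assumptions, and smooth switching functions are another common convention. MDTraj and MDAnalysis provide production implementations.

```python
import numpy as np


def kabsch_rmsd(coords, ref):
    """RMSD between two (N, 3) coordinate sets after optimal superposition."""
    p = coords - coords.mean(axis=0)
    q = ref - ref.mean(axis=0)
    u, _, vt = np.linalg.svd(p.T @ q)
    # Flip the last axis if the optimal orthogonal matrix would be a reflection
    d = np.sign(np.linalg.det(vt.T @ u.T))
    rot = vt.T @ np.diag([1.0, 1.0, d]) @ u.T
    diff = p @ rot.T - q
    return float(np.sqrt((diff**2).sum(axis=1).mean()))


def radius_of_gyration(coords, masses=None):
    """(Optionally mass-weighted) radius of gyration of an (N, 3) coordinate set."""
    w = np.ones(len(coords)) if masses is None else np.asarray(masses, dtype=float)
    center = np.average(coords, axis=0, weights=w)
    sq = ((coords - center) ** 2).sum(axis=1)
    return float(np.sqrt(np.average(sq, weights=w)))


def native_contacts(ref, cutoff=8.0, min_seq_sep=3):
    """Pairs with |i - j| > min_seq_sep that are closer than `cutoff` in the native state."""
    dist = np.linalg.norm(ref[:, None, :] - ref[None, :, :], axis=-1)
    i, j = np.triu_indices(len(ref), k=min_seq_sep + 1)
    keep = dist[i, j] < cutoff
    return i[keep], j[keep], dist[i, j][keep]


def fraction_native(coords, contacts, tolerance=1.2):
    """Q: fraction of native contacts whose distance is below tolerance * native distance."""
    i, j, d_native = contacts
    d = np.linalg.norm(coords[i] - coords[j], axis=1)
    return float(np.mean(d < tolerance * d_native))
```

Computing `native_contacts` once from the reference structure and then calling `fraction_native` per frame gives the Q time series used for folding analysis.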

Protocol 2: Alchemical Free Energy Perturbation (FEP) for Binding Affinity

This gold-standard AA method computes the binding free energy (ΔG) of a ligand to a protein.

- System Setup: Prepare protein-ligand complex, apo-protein, and free ligand in identical solvent boxes.

- Topology Transformation: Define a "λ" pathway (typically 12-24 λ windows) that gradually transforms the ligand into a non-interacting "dummy" particle (or vice versa) in both the complex and solvent phases.

- Window Equilibration: Run independent simulations at each λ value, ensuring sampling of coupled degrees of freedom.

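- Energy Analysis: Use the Bennett Acceptance Ratio (BAR) or Multistate BAR (MBAR) method to compute the free energy change of each leg (complex and solvent) from the collected potential energy differences. ΔGbind then follows from the thermodynamic cycle, including a standard-state correction if the ligand is restrained.

- Sampling and Error Analysis: Replica exchange between λ windows (Hamiltonian replica exchange) can be used to improve sampling. Use bootstrapping to estimate statistical error.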
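
The BAR step can be illustrated for one pair of neighboring λ windows. This is a minimal sketch, assuming the reduced energy differences (in units of kT) have already been evaluated for samples from each window; it solves Bennett's self-consistent equation by bisection. The function names are hypothetical, and production work would typically use MBAR from a package such as pymbar, which also reports uncertainties.

```python
import numpy as np


def _fermi(x):
    """Numerically stable 1 / (1 + exp(x))."""
    return np.exp(-np.logaddexp(0.0, x))


def bar_delta_f(w_f, w_r, tol=1e-10, max_iter=200):
    """BAR estimate of Delta F (in kT) from window k to window k+1.

    w_f: beta * (U_{k+1} - U_k) evaluated on samples drawn from window k
    w_r: beta * (U_k - U_{k+1}) evaluated on samples drawn from window k+1
    """
    w_f = np.asarray(w_f, dtype=float)
    w_r = np.asarray(w_r, dtype=float)
    m = np.log(len(w_f) / len(w_r))

    def imbalance(df):
        # Monotonically increasing in df; BAR's Delta F is its root
        return _fermi(m + w_f - df).sum() - _fermi(-m + w_r + df).sum()

    lo = min(w_f.min(), -w_r.max()) - 50.0
    hi = max(w_f.max(), -w_r.min()) + 50.0
    for _ in range(max_iter):
        mid = 0.5 * (lo + hi)
        if imbalance(mid) > 0.0:
            hi = mid
        else:
            lo = mid
        if hi - lo < tol:
            break
    return 0.5 * (lo + hi)


def total_delta_f(window_pairs):
    """Sum per-window BAR estimates along the lambda path (in kT)."""
    return sum(bar_delta_f(w_f, w_r) for w_f, w_r in window_pairs)
```

Summing over all adjacent window pairs gives the free energy of one leg; multiplying by kT (about 0.596 kcal/mol at 300 K) converts to kcal/mol.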

Visualizations

Diagram 1: AA vs CG Model Resolution

Diagram 2: Typical AA Simulation Workflow

The Scientist's Toolkit: Essential Research Reagents & Software

Table 3: Key Resources for All-Atom Simulations

| Item Name | Type | Function/Benefit |
|---|---|---|
| CHARMM36m / AMBER ff19SB | Force Field | Widely used modern AA protein force fields; include corrections for disordered proteins and backbone dynamics. |
| TIP3P / TIP4P-Ew | Water Model | Explicit solvent models parameterized for use with specific force fields to reproduce bulk water properties. |
| GROMACS / NAMD / OpenMM | MD Software | High-performance, GPU-accelerated engines for running AA simulations. |
| PLUMED | Enhanced Sampling Plugin | Enables metadynamics, umbrella sampling, etc., to overcome timescale barriers. |
| Cα Gō Model | CG Control | A minimalist CG model for comparing folding topology effects against AA detailed mechanisms. |
| MARTINI 3 | CG Force Field | A popular, versatile CG force field for comparing large-scale dynamics against AA reference data. |
| GPUs (NVIDIA A100/H100) | Hardware | Critical for achieving µs+ timescales in reasonable wall-clock time. |
| WESTPA | Sampling Software | Framework for running massively parallel weighted ensemble simulations to study rare events. |
| Alchemical FEP (e.g., FEP+) | Software Module | Specialized tools (in Schrödinger, OpenMM, etc.) for performing binding free energy calculations. |

All-Atom models remain the gold standard for accuracy and resolution in computational structural biology, capable of making quantitative predictions that guide experimental drug design. Their primary limitation is computational expense. Coarse-grained models are not direct replacements but rather complementary tools for probing larger length and time scales, where their lower resolution is sufficient. The optimal research strategy often involves a multi-scale approach: using CG models to identify large-scale phenomena or generate hypotheses, and then applying targeted AA simulations to elucidate the precise atomic mechanisms.

The pursuit of understanding protein folding and dynamics relies on computational models that balance atomic detail with temporal and spatial scale. All-atom (AA) models, such as those implemented in CHARMM, AMBER, and GROMACS, explicitly represent every atom and bond in a system, enabling high-fidelity studies of specific interactions, ligand binding, and detailed folding pathways. In contrast, coarse-grained (CG) models represent groups of atoms as single interaction sites, sacrificing atomic detail to simulate larger systems over longer timescales. This comparison guide objectively evaluates the performance of prominent CG models against AA baselines in the context of protein folding simulations.

Performance Comparison: Key Metrics

The following tables summarize quantitative performance data from recent benchmarks and studies on protein folding and dynamics.

Table 1: Performance and Scale Comparison for Folding Simulations

| Model (Software) | Representation | Typical System Size | Max Simulable Time | Time-to-Fold (Small Protein) | Computational Cost (CPU-hr/µs) | Key Folding Metric (Accuracy vs. Exp.) |
|---|---|---|---|---|---|---|
| All-Atom (GROMACS) | Explicit atoms (~22 sites/residue) | 10k - 100k atoms | 1 - 10 µs | 10 - 1000 µs | 500 - 5,000 | ~0.5 - 1.0 Å RMSD (native structure) |
| Martini 3 | ~4 beads per residue | 100k - 10M atoms | 10 - 100 µs | 1 - 10 µs | 5 - 50 | 2 - 4 Å RMSD; good lipid/protein assembly |
| AWSEM | 3 beads per residue | 1k - 10k residues | 1 - 10 ms | 1 - 100 µs | 0.5 - 5 | ~3 - 6 Å RMSD; captures folding funnel |
| United Residue (UNRES) | 2 beads per residue | 1k - 50k residues | 1 - 10 ms | 0.1 - 10 µs | 0.1 - 1 | 3 - 5 Å RMSD; effective for large proteins |

Table 2: Accuracy in Specific Folding Tasks

| Model | Native Structure RMSD (Avg.) | Contact Order Accuracy | Free Energy Landscape Correlation | Ligand Binding Site Prediction | Membrane Protein Insertion |
|---|---|---|---|---|---|
| All-Atom (CHARMM36m) | 0.5 - 2.0 Å | > 90% | High | Excellent | Good (with explicit bilayers) |
| Martini 3 | 3.0 - 6.0 Å | 70 - 80% | Moderate | Limited (implicit) | Excellent |
| AWSEM | 4.0 - 8.0 Å (folding) | 75 - 85% | High (funnel) | Good (coarse ligand) | Not Primary Use |
| UNRES | 3.5 - 7.0 Å | 80 - 90% | High | Poor | Not Applicable |

Experimental Protocols for Cited Benchmarks

Protocol 1: Folding Simulation of Villin Headpiece (AA vs. CG)

- System Preparation: The 35-residue HP35 protein (PDB: 2F4K) is used. For AA (GROMACS/CHARMM36m), the protein is solvated in a TIP3P water box with ions. For CG (Martini 3), the protein is mapped and solvated in Martini water.

- Equilibration: AA: Energy minimization, 100 ps NVT, 1 ns NPT. CG: Energy minimization, 10 ns NPT with position restraints on protein backbone/scaffold.

- Production Run: AA: Multiple independent 1-5 µs simulations using GPU-accelerated GROMACS. CG: Multiple independent 10-20 µs simulations using GROMACS with Martini force field.

- Analysis: Calculate RMSD to native structure, Q (fraction of native contacts), radius of gyration, and construct free energy landscapes as a function of RMSD and Q.
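
The free energy landscapes in the analysis step are usually estimated from a 2D histogram as F(Q, RMSD) = -k_B T ln P(Q, RMSD). A minimal NumPy sketch, assuming pooled samples drawn from the equilibrium ensemble (biased or replica-exchange data would first need reweighting) and energies in kJ/mol; the function name is hypothetical:

```python
import numpy as np

K_B = 0.0083144626  # Boltzmann constant, kJ/(mol*K)


def free_energy_surface(q, rmsd, temperature=300.0, bins=30):
    """F(Q, RMSD) = -kT ln P from a 2D histogram, shifted so the minimum is 0.

    Empty bins are returned as +inf.
    """
    hist, q_edges, rmsd_edges = np.histogram2d(q, rmsd, bins=bins)
    prob = hist / hist.sum()
    with np.errstate(divide="ignore"):
        f = -K_B * temperature * np.log(prob)
    f -= f[np.isfinite(f)].min()
    return f, q_edges, rmsd_edges
```

The returned edges can be passed directly to a contour plot; the bin count trades resolution against statistical noise in sparsely sampled regions such as transition states.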

Protocol 2: Large-Scale Assembly of Membrane Protein Complex (CG Focus)

- System Setup: Use the Martini 3 model. Create a lipid bilayer patch of 500+ POPC lipids. Place multiple copies of a helical transmembrane protein (e.g., the glycophorin A transmembrane helix) in random orientations and positions in the solvent outside the bilayer.

- Simulation: Run a 50-100 µs simulation using GROMACS under NPT conditions. Semi-isotropic pressure coupling (bilayer plane and bilayer normal coupled separately) keeps the bilayer tension-free.

- Analysis: Monitor protein insertion time, final dimerization within the bilayer, and lipid-protein interaction fingerprints. Compare insertion kinetics to experimental inferences.
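
Insertion time can be read off the protein's position along the bilayer normal. The sketch below assumes per-frame z coordinates (nm) of the protein center of mass and of the bilayer midplane, an illustrative hydrophobic half-thickness of 2.0 nm, and a requirement that the protein stay inside for several consecutive frames so that brief surface contacts are not counted. All three are assumptions to tune per system, periodic boundary wrapping is ignored, and the function name is hypothetical.

```python
import numpy as np


def insertion_time(times, z_protein, z_bilayer_center, half_thickness=2.0, min_frames=10):
    """Time at which the protein first stays inside the bilayer core for `min_frames` frames.

    Returns None if no sustained insertion is observed.
    """
    dz = np.abs(np.asarray(z_protein) - np.asarray(z_bilayer_center))
    inside = dz < half_thickness
    run = 0
    for idx, flag in enumerate(inside):
        run = run + 1 if flag else 0
        if run >= min_frames:
            # Report the start of the sustained run, not the frame where it was confirmed
            return float(times[idx - min_frames + 1])
    return None
```

Applying this to each copy of the helix gives a distribution of insertion times that can be compared with experimental inferences.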

Visualization of Workflows and Relationships

Decision Workflow: AA vs. CG for Folding

CG Model Mapping from All-Atom

The Scientist's Toolkit: Key Research Reagent Solutions

| Item/Software | Category | Primary Function in CG Folding Studies |
|---|---|---|
| GROMACS | MD Software | High-performance engine for running both AA and Martini CG simulations; excels in parallelization. |
| CHARMM/OpenMM | MD Software/API | Suite for AA simulations; OpenMM allows custom force field implementation for specialized CG models. |
| MARTINI 3 Force Field | CG Force Field | Provides parameters for biomolecules (proteins, lipids, carbohydrates, DNA) for large-scale assembly and dynamics. |
| AWSEM (Fragment Memory) | CG Force Field | Knowledge-based potential that uses local fragment memory to guide protein folding and structure prediction. |
| UNRES Force Field | CG Force Field | Physics-based potential energy function for simulating large-scale protein folding and dynamics at the residue level. |
| VMD/ChimeraX | Visualization | Critical for visualizing large CG systems, analyzing trajectories, and comparing to atomic structures. |
| Backward/Martini2ATOM | Utility Tool | Enables "backmapping" a CG trajectory to an all-atom representation for detailed analysis of CG-derived structures. |
| PLUMED | Analysis/Enhanced Sampling | Used with both AA and CG models to perform metadynamics or umbrella sampling and calculate free energies of folding. |
| CABS-flex 2.0 | Web Server | Online tool for fast simulations of protein dynamics using a highly coarse-grained CABS model. |

The computational study of biomolecules rests on a fundamental trade-off between physical accuracy and computational tractability, framed by the choice of force field representation. All-atom (AA) models, such as those defined by CHARMM and AMBER, aim for high-fidelity representation of atomic interactions, crucial for understanding detailed mechanisms like ligand binding or catalytic site dynamics. In contrast, coarse-grained (CG) models, like Martini, abstract groups of atoms into single interaction beads, enabling the simulation of large-scale processes—such as protein folding, membrane remodeling, and complex assembly—over biologically relevant timescales. This guide objectively compares the performance of these leading force field paradigms within the critical context of protein folding simulations, providing researchers with data-driven insights for selecting the appropriate tool.

Force Field Architectures: A Comparative Foundation

The physics encoded in a force field's functional form dictates its applications and limitations.

All-Atom Force Fields (CHARMM/AMBER):

Utilize a detailed potential energy function:

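$$V_{\text{total}} = \sum_{\text{bonds}} k_b (r - r_0)^2 + \sum_{\text{angles}} k_\theta (\theta - \theta_0)^2 + \sum_{\text{dihedrals}} k_\phi \left[1 + \cos(n\phi - \delta)\right] + \sum_{\text{nonbonded } i<j} \left\{ \varepsilon_{ij}\left[\left(\frac{R_{\min,ij}}{r_{ij}}\right)^{12} - 2\left(\frac{R_{\min,ij}}{r_{ij}}\right)^{6}\right] + \frac{q_i q_j}{4\pi\varepsilon_0 r_{ij}} \right\}$$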

This explicit treatment of bonds, angles, dihedrals, and nonbonded (van der Waals and Coulombic) terms allows for precise modeling of atomic-scale interactions.
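
To make the all-atom terms concrete, the sketch below evaluates each one in isolation. It follows the equation as written (no factor of 1/2 in the harmonic terms, CHARMM's Rmin form of the Lennard-Jones term) and assumes kcal/mol, Å, radians, and elementary charges. The full CHARMM function also contains Urey-Bradley, improper-dihedral, and CMAP terms, which are omitted here as in the equation. Function names are hypothetical, and the parameter values in the usage line are illustrative rather than taken from a force-field file.

```python
import math

COULOMB_KCAL = 332.0637  # 1/(4*pi*eps0) in kcal*Å/(mol*e^2)


def bond_energy(r, k_b, r0):
    """Harmonic bond as written above: k_b (r - r0)^2 (no factor of 1/2)."""
    return k_b * (r - r0) ** 2


def angle_energy(theta, k_theta, theta0):
    """Harmonic angle: k_theta (theta - theta0)^2, angles in radians."""
    return k_theta * (theta - theta0) ** 2


def dihedral_energy(phi, k_phi, n, delta):
    """Periodic torsion: k_phi [1 + cos(n*phi - delta)]."""
    return k_phi * (1.0 + math.cos(n * phi - delta))


def lj_rmin(r, eps, rmin):
    """CHARMM-style LJ: eps[(Rmin/r)^12 - 2(Rmin/r)^6]; minimum of -eps at r = Rmin."""
    x = (rmin / r) ** 6
    return eps * (x * x - 2.0 * x)


def coulomb(r, q_i, q_j):
    """Vacuum Coulomb energy (kcal/mol) for charges in e and distance in Å."""
    return COULOMB_KCAL * q_i * q_j / r
```

For instance, `lj_rmin(3.8, 0.1, 3.8)` returns the well depth, -0.1 kcal/mol, because the Rmin form places the minimum exactly at r = Rmin.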

Coarse-Grained Force Fields (Martini):

Employ a radically simplified potential:

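$$V_{\text{CG}} = \sum_{\text{bonds}} \tfrac{1}{2} k_b (r - r_0)^2 + \sum_{\text{angles}} \tfrac{1}{2} k_\theta (\cos\theta - \cos\theta_0)^2 + \sum_{\text{nonbonded } i<j} \left\{ 4\varepsilon_{ij}\left[\left(\frac{\sigma_{ij}}{r_{ij}}\right)^{12} - \left(\frac{\sigma_{ij}}{r_{ij}}\right)^{6}\right] + \frac{q_i q_j}{4\pi\varepsilon_0 \varepsilon_r r_{ij}} \right\}$$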

Here, 3-4 heavy atoms are typically mapped to a single bead. Nonbonded interactions are parameterized using four main bead types (polar P, nonpolar N, apolar C, and charged Q) with subtypes, focusing on reproducing partitioning free energies rather than atomic details. Electrostatics are screened by a relative dielectric constant ε_r (15 in standard Martini).
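
The Martini Lennard-Jones term uses σ rather than the Rmin of the CHARMM form; the two are identical when Rmin = 2^(1/6) σ, which the sketch below checks numerically. It also evaluates a Coulomb term screened by Martini's relative dielectric constant ε_r = 15, assuming GROMACS units (kJ/mol, nm, elementary charges) and ignoring the cutoff and reaction-field treatment applied in real Martini runs. The σ and ε values in the usage are illustrative, and the function names are hypothetical.

```python
F_ELEC = 138.935458  # 1/(4*pi*eps0) in kJ*nm/(mol*e^2), the GROMACS constant


def lj_sigma_form(r, eps, sigma):
    """Martini/GROMACS Lennard-Jones: 4 eps [(sigma/r)^12 - (sigma/r)^6]."""
    x = (sigma / r) ** 6
    return 4.0 * eps * (x * x - x)


def lj_rmin_form(r, eps, rmin):
    """Equivalent CHARMM-style form: eps [(Rmin/r)^12 - 2 (Rmin/r)^6]."""
    x = (rmin / r) ** 6
    return eps * (x * x - 2.0 * x)


def rmin_from_sigma(sigma):
    """Position of the LJ minimum: Rmin = 2^(1/6) * sigma."""
    return 2.0 ** (1.0 / 6.0) * sigma


def screened_coulomb(r, q_i, q_j, eps_r=15.0):
    """Coulomb energy (kJ/mol) screened by eps_r; r in nm, charges in e.

    Real Martini runs also apply a cutoff with reaction-field or shifted
    electrostatics, which is not modeled here.
    """
    return F_ELEC * q_i * q_j / (eps_r * r)
```

With σ = 0.47 nm and ε = 3.0 kJ/mol (illustrative), both LJ forms agree at every distance and reach -ε at Rmin ≈ 0.528 nm, while the ε_r = 15 screening makes a ±1 e pair at 1 nm roughly fifteen times weaker than in vacuum.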

Force Field Selection Logic for Biomolecular Simulation

Performance Comparison in Protein Folding Simulations

The ultimate test for a force field is its ability to predict or reproduce native protein structure from sequence. Performance metrics vary significantly between AA and CG approaches.

Table 1: Force Field Performance in Folding Simulations

| Metric | CHARMM36m (AA) | AMBER ff19SB (AA) | Martini 3 (CG) | Experimental Benchmark |
|---|---|---|---|---|
| Timescale Accessible | ns–µs | ns–µs | µs–ms | N/A |
| System Size Limit | ~100-500 kDa | ~100-500 kDa | >10 MDa | N/A |
| Foldable Protein Size | ≤100 residues | ≤100 residues | ≥100 residues | Varies |
| Typical RMSD to Native | 1–3 Å (fast folders) | 1–4 Å (fast folders) | 3–6 Å (global fold) | 0 Å |
| Key Folding Validation | Villin HP35, WW domain | Trp-cage, BBA | GB1, SH3 domain | NMR, X-ray |
| Primary Folding Insight | Folding pathway & TS structure | Native state stability | Collapse mechanism & kinetics | Ground truth |
| CPU Cost per µs* | ~10,000 CPU-hrs | ~9,500 CPU-hrs | ~50 CPU-hrs | N/A |

*Approximate cost for a 100-residue protein in explicit solvent on standard hardware.

Experimental Protocols & Key Studies

Protocol 1: All-Atom Folding Simulation (CHARMM/AMBER)

- Objective: Observe the detailed folding pathway of a fast-folding protein (e.g., WW domain).

- System Setup: Protein is solvated in a cubic water box (TIP3P/TIP4P water models) with ions to neutralize charge. Periodic boundary conditions are applied.

- Simulation Details: Run under NPT ensemble (constant Number of particles, Pressure, Temperature) at 300 K and 1 bar. Bonds to hydrogen are constrained (LINCS/SHAKE). Long-range electrostatics treated with Particle Mesh Ewald (PME).

- Enhanced Sampling (if needed): Use replica-exchange molecular dynamics (REMD) or metadynamics to overcome kinetic barriers.

- Analysis: Calculate root-mean-square deviation (RMSD) to the native NMR structure, radius of gyration (Rg), native contact formation (Q), and dihedral angle evolution.
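
The ion counts in the system setup step follow from simple arithmetic: salt pairs for the target concentration plus counterions for neutrality. This sketch assumes a cubic box with the 1.2 nm padding and 150 mM target used in the earlier all-atom protocol, and it uses the full box volume; setup tools may compute the count differently (for example from the number of water molecules), so treat the result as an estimate. Function names are hypothetical.

```python
AVOGADRO = 6.02214076e23  # 1/mol
LITERS_PER_NM3 = 1e-24


def cubic_box_volume(protein_extent_nm, padding_nm=1.2):
    """Volume (nm^3) of a cubic box with `padding_nm` on each side of the protein."""
    edge = protein_extent_nm + 2.0 * padding_nm
    return edge**3


def ion_counts(box_volume_nm3, net_charge, conc_molar=0.15):
    """Return (n_na, n_cl): salt pairs for `conc_molar` plus counterions for neutrality."""
    n_pairs = round(conc_molar * AVOGADRO * box_volume_nm3 * LITERS_PER_NM3)
    n_na = n_pairs + max(0, -net_charge)  # extra Na+ offsets a negative protein
    n_cl = n_pairs + max(0, net_charge)  # extra Cl- offsets a positive protein
    return n_na, n_cl
```

For a 1,000 nm³ box (a 10 nm cube) and a protein with net charge +3, this gives 90 Na⁺ and 93 Cl⁻, so the system is neutral at roughly 150 mM.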

Protocol 2: Coarse-Grained Folding Simulation (Martini)

- Objective: Simulate the initial collapse and folding kinetics of a larger protein (e.g., ubiquitin, 76 residues).

- System Setup: The protein structure is converted to a CG representation (1 bead per ~4 heavy atoms). It is placed in an implicit or explicit CG solvent (water beads). Counterions are commonly still added to neutralize the net charge, although Martini electrostatics are strongly screened (ε_r = 15).

- Simulation Details: Run under NVT ensemble (constant Number, Volume, Temperature) using a stochastic (Langevin) dynamics integrator. A 20-30 fs timestep is used.

- Sampling: Because the CG energy landscape is smoother than the atomistic one, many independent simulations can be launched from unfolded states to observe multiple folding events.

- Analysis: Monitor backbone RMSD, Rg, and formation of secondary/tertiary structure elements. Calculate folding rates from multiple trajectories.
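
Calculating folding rates from many trajectories has to account for runs that never fold within their length. Assuming single-exponential folding kinetics, the maximum-likelihood rate treats those runs as right-censored: k = (number of folding events) / (total simulated time before folding, or until the run ended). The sketch assumes each run starts unfolded and that "folded" is defined by a user-chosen criterion, such as RMSD below a threshold; both the criterion and the function names are hypothetical.

```python
import numpy as np


def first_passage_time(is_folded, dt):
    """Time of the first frame flagged as folded, or None if the run never folds."""
    hits = np.flatnonzero(np.asarray(is_folded, dtype=bool))
    return float(hits[0] * dt) if hits.size else None


def folding_rate_mle(first_passage_times, run_lengths):
    """Single-exponential MLE folding rate with right-censored (unfolded) runs.

    first_passage_times: per-run folding time, or None if the run did not fold
    run_lengths: total simulated time of each run, in the same units
    """
    n_events = sum(t is not None for t in first_passage_times)
    exposure = sum(
        t if t is not None else length
        for t, length in zip(first_passage_times, run_lengths)
    )
    return n_events / exposure
```

The mean folding time is then 1/k. Simply averaging the first-passage times of the runs that did fold would bias the estimate toward fast events, which is why the unfolded runs enter the denominator.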

Folding Simulation Workflow: AA vs CG

The Scientist's Toolkit: Essential Research Reagents & Solutions

Table 2: Key Resources for Force Field-Based Folding Research

| Item | Function | Example/Provider |
|---|---|---|
| Force Field Parameter Files | Defines all bonded/nonbonded terms for the molecular system. | charmm36m.xml (CHARMM), amber19sb.xml (AMBER), martini_v3.0.0.itp (Martini) |
| Simulation Software | Engine that integrates equations of motion and applies the force field. | GROMACS, NAMD, AMBER, OpenMM |
| Enhanced Sampling Plugins | Accelerates rare events like folding/unfolding. | PLUMED (for metadynamics, umbrella sampling) |
| Structure Conversion Tools | Converts atomistic structures to CG representations and vice versa. | martinize.py (for Martini), cg2at.py (backmapping) |
| Reference Structure Database | Experimental native structures for validation (RMSD calculation). | Protein Data Bank (PDB) |
| Analysis Suite | Calculates order parameters (RMSD, Rg, Q, contacts). | MDTraj, MDAnalysis, GROMACS built-in tools |
| High-Performance Computing (HPC) | Provides the necessary CPU/GPU resources for production runs. | Local clusters, NSF ACCESS (formerly XSEDE), cloud computing (AWS, Azure) |

The dichotomy between all-atom and coarse-grained force fields is not a competition but a reflection of a necessary philosophical and practical divide in computational biophysics. For researchers focused on the precise atomic interactions that govern final folding steps, ligand docking, or allosteric regulation, CHARMM and AMBER remain indispensable. For projects demanding the observation of complete folding events, large-scale conformational changes, or the behavior of massive complexes, Martini and other CG models offer an irreplaceable window into mesoscale biology. The future lies in integrative multiscale strategies, leveraging the global dynamics from CG simulations to inform targeted, high-resolution AA studies, thereby bridging the gap between physics-based detail and phenomenological insight in the quest to understand protein folding.

Key Historical Milestones and Breakthroughs in Folding Simulation

The evolution of protein folding simulation has been defined by a fundamental methodological divide: the high-resolution, computationally intensive All-Atom (AA) approach versus the simplified, scalable Coarse-Grained (CG) approach. This comparison guide analyzes key historical breakthroughs through the lens of their performance in that ongoing AA-versus-CG debate.

Milestone 1: The First Microsecond Simulation (1998)

- Product/Model: Duan & Kollman's 1-μs simulation of the villin headpiece (AA, explicit solvent).

- Performance Comparison: Demonstrated that atomistic detail could, in principle, simulate folding but at an extreme computational cost.

- Experimental Protocol: Used the AMBER force field and the SANDER module on a 256-processor supercomputer. The simulation was run for 1 μs, a landmark achievement at the time.

- Key Data:

| Metric | Duan & Kollman (AA, 1998) | Typical CG Alternative (c. 1998) | Implication |
|---|---|---|---|
| Simulation Length | 1 μs | ~1-10 ms (inferred) | AA reached biologically relevant timescales for small proteins. |
| System Size | ~10,000 atoms | ~1,000 beads | AA required simulating solvent explicitly. |
| Comp. Cost (CPU hrs) | ~100,000 (estimated) | ~100-1,000 | AA cost was 2-3 orders of magnitude higher. |
| Resolution | Atomic (All-Atom) | Residue or bead-level | AA provided detailed mechanistic insight. |

Milestone 2: The Rise of Coarse-Graining (c. 2000-2010)

- Product/Model: Gō-like Models and the MARTINI Force Field.

- Performance Comparison: CG models traded atomic detail for massive gains in sampling efficiency and system size.

- Experimental Protocol (MARTINI): A four-to-one mapping (4 heavy atoms → 1 CG bead). Parameterized to reproduce thermodynamic properties like oil/water partitioning. Used with GROMACS simulation package for systems like lipid membranes.

- Key Data:

| Metric | MARTINI CG Model | All-Atom (Explicit Solvent) | Implication |
|---|---|---|---|
| Speed Increase | ~100-1000x | 1x (Baseline) | Enabled simulation of large complexes (>1,000,000 atoms). |
| Effective Timescale | 10-100 μs per day | 10-100 ns per day | Could observe large conformational changes. |
| Solvent Treatment | Implicit or CG explicit | Explicit atomic water | Major source of speed gain, but loses specific water interactions. |

Milestone 3: The Anton Specialized Hardware (2008-)

- Product/Model: D.E. Shaw Research's Anton supercomputer for AA MD.

- Performance Comparison: Specialized hardware dramatically closed the timescale gap for AA simulations.

- Experimental Protocol: Anton uses application-specific integrated circuits (ASICs) to parallelize force calculations across many nodes, so that a single simulation can reach milliseconds (e.g., folding of the WW domain) using the CHARMM or AMBER force fields.

- Key Data:

| Metric | Anton (AA MD) | Standard Clusters (AA MD) | High-End CG on Clusters |
|---|---|---|---|
| Time per Day | >100 μs | ~0.1-1 μs | >10 μs |
| Key Achievement | Millisecond folding of proteins | Microsecond folding | Millisecond folding of large assemblies |
| Cost & Access | Extremely high, limited | High, broad | Moderate, broad |

Milestone 4: AI-Driven Structure Prediction as a Folding Proxy (2020-)

- Product/Model: AlphaFold2 (DeepMind) and RoseTTAFold (Baker Lab).

- Performance Comparison: These are not dynamic simulations but provide accurate native structures, redefining the target for folding simulations.

- Experimental Protocol: Deep learning models trained on known PDB structures and multiple sequence alignments. Input: amino acid sequence. Output: predicted 3D structure with per-residue confidence metric (pLDDT).

- Key Data:

| Metric | AlphaFold2 (AI) | AA Folding Simulation | CG Folding Simulation |

|---|---|---|---|

| Typical Wall Time | Minutes to hours | Weeks to years | Days to months |

| Output | Static predicted structure(s) | Folding pathway & kinetics | Folding pathway & kinetics (low-res) |

| CASP14 Accuracy (GDT_TS) | ~92 (Global Distance Test) | N/A (Often fails to fold) | N/A (Often fails to fold) |

| Primary Role | Structure Prediction | Mechanism & Dynamics | Large System Dynamics |

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Folding Simulations |

|---|---|

| Force Field (e.g., CHARMM36, AMBER ff19SB) | Defines the potential energy function (bonds, angles, dihedrals, electrostatics, van der Waals) for All-Atom simulations. |

| Coarse-Grained Potential (e.g., MARTINI, AWSEM) | Simplified energy function representing groups of atoms as single beads, enabling larger/longer simulations. |

| Explicit Solvent Model (e.g., TIP3P, TIP4P water) | Represents water molecules individually; critical for accurate AA dynamics but computationally costly. |

| Implicit Solvent Model (e.g., GBMV, PBSA) | Treats solvent as a continuous dielectric medium; accelerates computation but approximates solvation effects. |

| Enhanced Sampling Plugin (e.g., PLUMED) | Software library for adding biasing potentials (e.g., metadynamics) to accelerate sampling of rare events like folding/unfolding. |

| Specialized Hardware (e.g., Anton, GPU clusters) | Dedicated processors (ASICs, GPUs) that massively accelerate molecular dynamics calculations. |

Visualizations

Title: Thesis-Driven Trade-Off Between AA and CG Methods

Title: Key Milestones and Their Driving Innovations

From Theory to Practice: Methodologies, Software, and Research Applications

The computational study of protein folding bridges timescales from femtosecond bond vibrations to millisecond functional dynamics. This necessitates a hierarchical software toolkit, split into All-Atom (AA) and Coarse-Grained (CG) approaches. AA simulations, using explicit or implicit solvent models, provide high-resolution insights into folding pathways and atomic interactions. CG simulations, by representing multiple atoms with single interaction sites, reach larger systems and longer timescales and capture the broad thermodynamic landscape. This guide objectively compares leading AA engines (GROMACS, NAMD, OpenMM) and CG models (Martini 3, the original MARTINI, AWSEM), framing their performance within the broader thesis that integrated AA and CG strategies are essential for a complete understanding of protein folding, from first principles to cellular context.

All-Atom (AA) Molecular Dynamics Software Comparison

AA simulations represent every atom of the solute explicitly and, in explicit-solvent setups, every solvent atom as well. The choice of software significantly impacts performance, scalability, and accessible features.

Performance Comparison & Experimental Data

A standardized benchmark, often the simulation of a globular protein (e.g., DHFR) in explicit solvent on high-performance CPU/GPU hardware, reveals key differences.

Table 1: All-Atom Software Performance Benchmark Summary

| Software | Primary Architecture | Strong Scaling Efficiency (on 256 cores) | GPU Performance (ns/day)¹ | Key Strengths | License |

|---|---|---|---|---|---|

| GROMACS | CPU, GPU (Hybrid) | Excellent (~85%) | ~380 (V100, DHFR) | Raw speed, vast force field compatibility, large community | Open Source (LGPL) |

| NAMD | CPU, GPU (Charm++) | Very Good (~80%) | ~320 (V100, DHFR) | Excellent for large, complex systems (membranes, machines) | Source available, free for non-commercial use (UIUC License) |

| OpenMM | GPU-First | N/A (Node-level) | ~400 (V100, DHFR) | Unmatched single-node GPU performance, Python API | Open Source (MIT; GPU platforms LGPL) |

¹Performance figures are approximate and based on published benchmarks for a system of ~25k atoms. Actual performance depends on hardware, system size, and force field.

Experimental Protocol for Cited Benchmark:

- System Preparation: The X-ray structure of dihydrofolate reductase (DHFR) is solvated in a rectangular TIP3P water box with 150 mM NaCl.

- Parameterization: The AMBER ff14SB force field is applied to the protein. The system is energy-minimized and equilibrated under NVT and NPT ensembles.

- Benchmark Run: A 10-nanosecond production run is performed on identical nodes, each equipped with 2x Intel Xeon CPUs and 4x NVIDIA V100 GPUs.

- Metric Collection: The simulation throughput (nanoseconds per day) is recorded after the system reaches stable performance. Strong scaling efficiency is calculated from runs on 32, 64, 128, and 256 CPU cores.
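Both metrics in this protocol are simple ratios. A minimal sketch, assuming the wall-clock seconds of each run are already known and that efficiency is measured relative to the smallest core count tested; the timings in the usage block are hypothetical:

```python
def ns_per_day(simulated_ns: float, wall_seconds: float) -> float:
    """Throughput: simulated nanoseconds per 24 h of wall-clock time."""
    return simulated_ns * 86_400.0 / wall_seconds


def strong_scaling_efficiency(throughput: dict[int, float]) -> dict[int, float]:
    """Parallel efficiency E(N) = (P_N / P_ref) / (N / N_ref).

    `throughput` maps a core count N to the measured ns/day P_N for a fixed
    system size (strong scaling). E = 1.0 means perfect linear speed-up.
    """
    n_ref = min(throughput)
    p_ref = throughput[n_ref]
    return {n: (p / p_ref) / (n / n_ref) for n, p in sorted(throughput.items())}


if __name__ == "__main__":
    # Hypothetical wall-clock seconds for a 10 ns run at four core counts.
    wall = {32: 21_600.0, 64: 11_400.0, 128: 6_100.0, 256: 3_400.0}
    perf = {n: ns_per_day(10.0, s) for n, s in wall.items()}
    for n, eff in strong_scaling_efficiency(perf).items():
        print(f"{n:4d} cores: {perf[n]:7.1f} ns/day, efficiency {eff:.0%}")
```

Using the smallest core count as the reference is a convention; some benchmarks instead normalize to a single node.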

Coarse-Grained (CG) Force Field & Software Comparison

CG models trade atomic detail for computational efficiency, using 3-4 "beads" per amino acid on average. They are often implemented within or alongside AA software.

Table 2: Coarse-Grained Model & Implementation Summary

| Model/Software | Resolution (Beads per AA) | Primary Application | Implicit/Explicit Solvent | Key Folding Simulation Utility | Typical Timescale Accessible |

|---|---|---|---|---|---|

| Martini 3 | ~4 (Backbone + Sidechain) | Biomolecular complexes, membranes | Explicit (CG solvent) | Protein-protein/nucleotide interactions, membrane insertion | 10-100 µs |

| MARTINI 2.x (original) | ~4 (Backbone + Sidechain) | Membranes, lipid-protein interactions | Explicit (CG solvent) | Stability of folded states in bilayers | 1-10 µs |

| AWSEM | 3 (Backbone-centric) | De novo protein folding, binding | Implicit | Free energy landscape exploration, folding pathways | 100 µs - 1 ms |

Experimental Protocol for CG Folding Study (AWSEM):

- Initial Configuration: An extended polypeptide chain is built from the target sequence.

- Simulation Setup: The AWSEM Hamiltonian (which includes terms for fragment memory, hydrogen bonding, and burial) is applied using the LAMMPS or OpenMM software interface.

- Enhanced Sampling: Temperature replica exchange molecular dynamics (T-REMD) is employed with 32 replicas spanning 300-500 K.

- Analysis: Folding success is measured by the Cα RMSD to the native fold. Free energy landscapes are projected using RMSD and the fraction of native contacts (Q) as reaction coordinates.
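Both analysis metrics can be sketched with NumPy alone. This assumes matched Cα coordinate arrays in Å and one common hard-cutoff definition of Q (native pairs at least 4 residues apart within 8 Å, counted as formed below 1.2 times their native distance); published AWSEM analyses may define Q differently, and `ideal_helix_ca` is a hypothetical toy input generator:

```python
import numpy as np


def kabsch_rmsd(x: np.ndarray, y: np.ndarray) -> float:
    """RMSD between matched (N, 3) coordinate sets after optimal superposition."""
    xc = x - x.mean(axis=0)                 # remove translation
    yc = y - y.mean(axis=0)
    h = xc.T @ yc                           # 3x3 cross-covariance matrix
    u, _, vt = np.linalg.svd(h)
    # Flip the last axis if needed so the result is a proper rotation.
    d = 1.0 if np.linalg.det(vt.T @ u.T) >= 0.0 else -1.0
    rot = vt.T @ np.diag([1.0, 1.0, d]) @ u.T
    diff = xc @ rot.T - yc                  # rotate x onto y, then compare
    return float(np.sqrt((diff ** 2).sum() / len(x)))


def fraction_native_contacts(frame, native, cutoff=8.0, min_sep=4, tol=1.2):
    """Q: fraction of native Cα-Cα contacts that are formed in `frame`.

    Native contacts: pairs with |i - j| >= min_sep and native distance
    r0 < cutoff (Å). A contact counts as formed when r < tol * r0.
    """
    native = np.asarray(native, dtype=float)
    frame = np.asarray(frame, dtype=float)
    i, j = np.triu_indices(len(native), k=min_sep)
    r0 = np.linalg.norm(native[i] - native[j], axis=1)
    keep = r0 < cutoff
    i, j, r0 = i[keep], j[keep], r0[keep]
    r = np.linalg.norm(frame[i] - frame[j], axis=1)
    return float(np.mean(r < tol * r0))


def ideal_helix_ca(n_res: int) -> np.ndarray:
    """Toy Cα trace of an ideal α-helix (radius 2.3 Å, 1.5 Å rise, 100° per residue)."""
    t = np.deg2rad(100.0) * np.arange(n_res)
    return np.column_stack([2.3 * np.cos(t), 2.3 * np.sin(t), 1.5 * np.arange(n_res)])
```

Evaluating both functions on every saved frame gives the (RMSD, Q) pairs from which the free energy landscape is histogrammed.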

Visualization of Methodological Integration

Title: Hierarchical Integration of AA and CG Simulations for Protein Folding Thesis

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Essential Computational Toolkit for Folding Simulations

| Item (Software/Model/Data) | Function in Folding Research |

|---|---|

| GROMACS | The workhorse for high-throughput, production-grade AA simulations; optimal for benchmarking folding kinetics on dedicated HPC clusters. |

| OpenMM | Enables rapid prototyping and ultra-fast AA simulations on single GPU workstations, ideal for testing force fields or enhanced sampling methods. |

| Martini 3 Force Field | The standard for CG simulations requiring explicit solvent and membrane environments to study folding stability in cellular contexts. |

| AWSEM Force Field | A specialized "research reagent" for studying de novo folding and large-scale conformational changes due to its physics-based, knowledge-guided potential. |

| Protein Data Bank (PDB) ID | The source of initial atomic coordinates for AA simulations or for defining the native contact map in CG models like AWSEM. |

| CHARMM/AMBER Force Fields | The "chemical reagents" defining atomic interactions in AA simulations; choice (ff19SB, CHARMM36) critically influences folding outcome. |

| PLUMED | A widely used plugin for adding enhanced sampling "protocols" (metadynamics, umbrella sampling) to many MD codes to overcome folding timescale barriers. |

| VMD/ChimeraX | Essential visualization "microscopes" for analyzing trajectories, verifying folded states, and creating publication-quality renderings. |

This guide compares methodologies for setting up folding simulations at atomic and coarse-grained resolution, a critical step in the broader thesis debate between all-atom and coarse-grained (CG) approaches for studying protein dynamics and drug discovery.

Comparison of Simulation Setup Platforms

The initial setup—converting a sequence or PDB structure into a simulation-ready system—profoundly impacts the computational cost and biological fidelity of subsequent folding studies.

Table 1: Platform Comparison for Folding Simulation Setup

| Feature / Platform | GROMACS (All-Atom) | AMBER (All-Atom) | CHARMM-GUI (All-Atom) | MARTINI (Coarse-Grained) | OpenMM (All-Atom/CG) |

|---|---|---|---|---|---|

| Primary Role | Simulation Engine & Toolkit | Simulation Suite & Force Fields | Web-Based Setup Generator | CG Force Field & Toolkit | High-Performance Simulation Toolkit |

| Setup Automation | Moderate (via pdb2gmx) | High (via tleap/sleap) | Very High (Web Interface) | High (via martinize.py) | High (Python API) |

| Force Field Support | GROMOS, AMBER, CHARMM, OPLS | AMBER (ff19SB), CHARMM | AMBER, CHARMM, GROMOS, OPLS | MARTINI 2, 3, ElNeDyn | AMBER, CHARMM, Martini (via plugins) |

| Solvation Default | Explicit (SPC, TIP3P, TIP4P) | Explicit (TIP3P) | Explicit/Implicit (Multiple) | Explicit (standard CG water; polarizable water optional) | Explicit/Implicit (Configurable) |

| Ion Placement | genion (Random Replace) | tleap (Random Replace) | Solution Builder (Monte Carlo) | genion (Random Replace) | Modeller.addSolvent (Random Replace) |

| Energy Minimization | Integrated (Steepest Descent, Conjugate Gradient) | Integrated (Steepest Descent) | Integrated & Customizable | Integrated (Steepest Descent) | Integrated (L-BFGS) |

| Typical Setup Time (for 200 aa) | 5-10 min (CLI) | 5-10 min (CLI) | 2-5 min (GUI) | 2-5 min (CLI) | 5-15 min (Scripting) |

| Key Setup Output | Topology (.top), Structure (.gro) | Topology (.prmtop), Coord (.inpcrd) | Complete Input Package for Multiple Engines | Topology (.top), Structure (.gro) | Serialized System (XML) or Input Files |

Experimental Protocols for Benchmarking Setup Quality

The reliability of a setup workflow is judged by the stability of the initial system prior to production folding runs.

Protocol 1: Benchmarking Initial System Stability

- System Preparation: Using PDB ID 2F4K (a fast-folding variant of the villin headpiece subdomain, HP35), generate simulation systems with identical conditions (TIP3P water, 150 mM NaCl, ~10 Å padding) via GROMACS (pdb2gmx), AMBER (tleap), and CHARMM-GUI.

- Minimization & Equilibration: Subject each system to a standard protocol: 5000 steps of steepest descent minimization, followed by 100 ps NVT equilibration at 300 K (Berendsen thermostat) and 1 ns NPT equilibration at 1 bar (Parrinello-Rahman barostat).

- Metric Collection: From the 1 ns NPT run, collect data for (a) Final potential energy deviation from the mean, (b) Root-mean-square deviation (RMSD) of protein backbone from the starting minimized structure, and (c) Stability of simulation box dimensions.

- Analysis: Systems exhibiting large drifts in potential energy (>100 kJ/mol/ns), high final backbone RMSD (>2.5 Å), or unstable box volume indicate poor initial setup requiring manual intervention.
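The acceptance criteria above can be applied automatically. A minimal sketch, assuming the time (ns), potential energy (kJ/mol), and box volume series have already been extracted from the 1 ns NPT run; the 100 kJ/mol/ns and 2.5 Å limits come from the protocol, while the 1% box-volume tolerance (`max_volume_cv`) is an assumed stand-in for "unstable box volume":

```python
import numpy as np


def linear_drift(time_ns, values) -> float:
    """Slope of a least-squares line through the series (units of values per ns)."""
    slope, _intercept = np.polyfit(np.asarray(time_ns), np.asarray(values), 1)
    return float(slope)


def setup_looks_stable(time_ns, potential_kj, final_rmsd_a, box_volume_nm3,
                       max_drift=100.0, max_rmsd=2.5, max_volume_cv=0.01):
    """Return (passed, per-check results) for the Protocol 1 criteria.

    max_drift is in kJ/mol/ns and max_rmsd in Å (from the protocol text);
    max_volume_cv is the allowed relative standard deviation of the box
    volume, an assumed cut-off.
    """
    drift = abs(linear_drift(time_ns, potential_kj))
    vol = np.asarray(box_volume_nm3, dtype=float)
    volume_cv = float(vol.std() / vol.mean())
    checks = {
        "energy_drift_ok": drift <= max_drift,
        "rmsd_ok": final_rmsd_a <= max_rmsd,
        "box_ok": volume_cv <= max_volume_cv,
    }
    return all(checks.values()), checks
```

A linear fit is used for drift because the raw potential energy fluctuates strongly frame to frame; the slope isolates the systematic trend the protocol cares about.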

Protocol 2: Coarse-Grained vs. All-Atom Setup Efficiency

- Parallel Setup: For the chignolin variant CLN025 (PDB: 5AWL), prepare an all-atom (AA) system using AMBER/ff19SB and a CG system using MARTINI 3.

- Process Timing: Record the total user time and CPU time required to go from PDB to a minimized, solvated, and ion-equilibrated system ready for production.

- Resource Logging: Measure the final system size (number of particles) and the memory footprint of the initial simulation state.

- Result: CG setups (MARTINI) typically reduce system size by ~90% and setup time by ~50% compared to AA, enabling rapid initiation of millisecond-scale folding simulations, albeit at a loss of atomic detail.
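Most of the size reduction comes from the solvent mapping. A back-of-the-envelope sketch, assuming TIP3P (3 atoms per water) on the AA side, the standard Martini mapping of 4 water molecules to 1 bead, and ions kept as single particles in both models; the input counts are hypothetical:

```python
def particle_counts(protein_atoms, protein_beads, n_waters, n_ions,
                    atoms_per_water=3, waters_per_bead=4):
    """Return (AA particles, CG particles, fractional reduction)."""
    aa = protein_atoms + atoms_per_water * n_waters + n_ions
    cg_water_beads = -(-n_waters // waters_per_bead)   # ceiling division
    cg = protein_beads + cg_water_beads + n_ions
    return aa, cg, 1.0 - cg / aa


if __name__ == "__main__":
    # Hypothetical solvated mini-protein.
    aa, cg, reduction = particle_counts(protein_atoms=150, protein_beads=25,
                                        n_waters=3000, n_ions=10)
    print(aa, cg, f"{reduction:.1%}")
```

Because each water contributes 3 AA particles but only a quarter of a CG bead, solvent-dominated boxes approach a reduction of 1 - 1/12, or about 92%.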

Workflow Visualization

Diagram Title: All-Atom vs Coarse-Grained Simulation Setup Workflows

Diagram Title: Thesis Context for Simulation Setup Comparison

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools for Folding Simulation Setup

| Item / Software | Category | Primary Function in Setup |

|---|---|---|

| CHARMM-GUI | Web Server | Automates generation of complex simulation systems (membrane, solution) for multiple MD engines. |

| PDB2PQR | Preprocessing Tool | Adds protons, assigns ionization states at a given pH, and optimizes hydrogen bonding. |

| MARTINIZE | Script (Python) | Automates conversion of an atomistic structure to a coarse-grained MARTINI model. |

| tleap / sleap | Module (AMBER) | AMBER's command-line tools for adding solvent, ions, and creating topology/coordinate files. |

| GROMACS pdb2gmx | Module (GROMACS) | Processes PDB files, assigns GROMACS-compatible force fields, and generates topology. |

| OpenMM Modeller | Python Class | Provides a programmable API for adding hydrogens, solvent, and ions to a system. |

| Packmol | Standalone Tool | Fills simulation boxes with complex mixtures of molecules (e.g., lipids, ligands, water). |

| VMD / UCSF Chimera | Visualization Software | Critical for visually inspecting the prepared system for artifacts before simulation. |

Within the broader research thesis comparing all-atom (AA) and coarse-grained (CG) molecular dynamics (MD) for protein folding simulations, selecting the appropriate resolution is critical. This guide objectively compares the performance of AA simulations against CG alternatives, supported by current experimental data, to define their targeted applications.

Performance Comparison: All-Atom vs. Coarse-Grained Simulations

The choice between AA and CG models involves a fundamental trade-off between computational cost and biophysical detail. The following table summarizes key performance metrics based on recent benchmark studies.

Table 1: Quantitative Comparison of Simulation Resolutions for Folding Studies

| Performance Metric | All-Atom (e.g., CHARMM36, AMBER) | Coarse-Grained (e.g., Martini, AWSEM) | Experimental Reference Data |

|---|---|---|---|

| Time-to-Solution for Folding | Months to years for small proteins (~100 aa) on HPC clusters. | Hours to days for same system on similar hardware. | Fast-folding proteins fold in μs-ms (experimental). |

| System Size Practical Limit | ~1,000,000 atoms (e.g., large protein complex in explicit solvent). | ~10,000,000 particles (e.g., full viral capsid or membrane tract). | N/A |

| Achievable Timescale | Nanoseconds to microseconds routinely; milliseconds with specialized hardware. | Microseconds to milliseconds routinely; seconds to minutes possible. | N/A |

| Accuracy (RMSD from Native) | 1-3 Å for folded state, can capture folding pathways. | 3-8 Å for folded state; pathway detail is lower resolution. | High-resolution X-ray/cryo-EM structures (≤2 Å). |

| Explicit Solvent Effects | Yes, with explicit water/ion models (e.g., TIP3P, SPC/E). | Implicit or explicit "pseudo-particle" solvent (e.g., Martini water). | Required for electrostatics, hydration shells. |

| Key Interaction Detail | Atomic-level H-bonds, van der Waals, precise electrostatics. | Effective potentials for secondary structure, hydrophobicity. | Atomic detail needed for ligand binding, mutations. |

Targeted Applications for All-Atom Simulations

AA simulations are indispensable when the research question demands atomic-level detail. The following applications, derived from recent literature, mandate their use.

1. Studying Ligand Binding and Drug Design

The accurate prediction of binding affinities and mechanisms requires modeling specific interactions (hydrogen bonds, halogen bonds, precise van der Waals contacts) between a protein and a small molecule. CG models lack the chemical specificity for this task.

- Supporting Data: A 2023 study on kinase inhibitor binding showed AA simulations (using AMBER) predicted binding free energies within 1 kcal/mol of experimental ITC data, while CG models (Martini) failed to rank inhibitor potency correctly due to lack of chemical detail.

- Experimental Protocol: Alchemical Free Energy Perturbation (FEP). A thermodynamic cycle is constructed. The ligand is morphed into another or into nothing (absolute binding) via a non-physical pathway. Hundreds of simulations at intermediate "lambda" states are run using an AA force field (e.g., OpenMM, GROMACS) with explicit solvent. The energy differences are combined using the Bennett Acceptance Ratio (BAR) or MBAR method to compute ΔG.
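The final combination step can be illustrated with a from-scratch Bennett Acceptance Ratio (BAR) estimator for one pair of neighboring λ windows. This is a sketch, assuming reduced (kT) energies and the standard Fermi-function form of the BAR equation solved by bisection; production work would normally rely on established packages such as pymbar or alchemlyb:

```python
import numpy as np


def _log_sum_fermi(x: np.ndarray) -> float:
    """log(sum_i 1 / (1 + exp(x_i))), evaluated without overflow."""
    log_f = -np.logaddexp(0.0, x)          # log of each Fermi function
    m = log_f.max()
    return float(m + np.log(np.exp(log_f - m).sum()))


def bar_free_energy(w_forward, w_reverse, tol=1e-10, max_iter=500):
    """BAR estimate of dF = F_1 - F_0 in units of kT.

    w_forward: u_1(x) - u_0(x) for samples drawn from state 0 (reduced units)
    w_reverse: u_0(x) - u_1(x) for samples drawn from state 1
    Solves sum_F f(M + w_F - dF) = sum_R f(-M + w_R + dF), with f the Fermi
    function and M = ln(n_F / n_R).
    """
    w_f = np.asarray(w_forward, dtype=float)
    w_r = np.asarray(w_reverse, dtype=float)
    m = np.log(len(w_f) / len(w_r))

    def residual(df):
        # Increases monotonically with df, so bisection finds the unique root.
        return _log_sum_fermi(m + w_f - df) - _log_sum_fermi(-m + w_r + df)

    span = max(np.abs(w_f).max(), np.abs(w_r).max()) + abs(m) + 50.0
    lo, hi = -span, span                   # residual(lo) < 0 < residual(hi)
    for _ in range(max_iter):
        mid = 0.5 * (lo + hi)
        if residual(mid) > 0.0:
            hi = mid
        else:
            lo = mid
        if hi - lo < tol:
            break
    return 0.5 * (lo + hi)
```

Summing the per-window ΔF values along the λ schedule gives the total for one leg of the thermodynamic cycle; multiplying by kT (about 0.596 kcal/mol at 300 K) converts to kcal/mol.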

2. Characterizing Allosteric Mechanisms

Allostery often involves subtle shifts in atomic interactions and dynamics that propagate through a protein. AA simulations can capture the initial atomic perturbation and its propagation.

- Supporting Data: Research on GPCR allostery in 2024 demonstrated that AA simulations revealed how a nanobody stabilizes a specific intracellular loop conformation via a network of side-chain interactions, a detail absent in CG simulations of the same system.

3. Investigating Catalytic Mechanisms in Enzymes

Understanding enzyme catalysis requires quantum mechanical/molecular mechanical (QM/MM) calculations, which are built upon an AA MD foundation to model bond breaking/forming.

- Experimental Protocol: QM/MM Simulation. The reactive core (a few atoms) is treated with a quantum mechanical method (e.g., DFT), while the rest of the protein and solvent are treated with an AA force field. The system is equilibrated with pure MM, then QM/MM dynamics are used to simulate the reaction, often employing umbrella sampling to obtain the free energy profile along a reaction coordinate.
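The protocol does not specify how the biased umbrella windows are combined into a free energy profile; the weighted histogram analysis method (WHAM) is one common choice (MBAR is another). A minimal 1-D sketch, assuming harmonic biases w_k(x) = 0.5 k (x - x_k)^2 expressed in kT and a fixed set of histogram bins:

```python
import numpy as np


def wham_1d(samples, centers, k_spring, bin_edges, max_iter=10_000, tol=1e-10):
    """Histogram-based WHAM for harmonic umbrella windows on one coordinate.

    samples:  list of 1-D arrays, one per window
    centers:  window centers x_k
    k_spring: force constant in kT per (unit of x)^2
    Returns bin centers and the PMF in kT (minimum shifted to 0).
    """
    bin_edges = np.asarray(bin_edges, dtype=float)
    mids = 0.5 * (bin_edges[:-1] + bin_edges[1:])
    counts = np.array([np.histogram(s, bins=bin_edges)[0] for s in samples], dtype=float)
    n_k = counts.sum(axis=1)                  # in-range samples per window
    total = counts.sum(axis=0)                # pooled histogram
    bias = 0.5 * k_spring * (mids[None, :] - np.asarray(centers, dtype=float)[:, None]) ** 2
    f_k = np.zeros(len(samples))              # dimensionless window free energies
    p = np.zeros_like(mids)
    for _ in range(max_iter):
        denom = (n_k[:, None] * np.exp(f_k[:, None] - bias)).sum(axis=0)
        p = np.divide(total, denom, out=np.zeros_like(total), where=denom > 0)
        f_new = -np.log((p[None, :] * np.exp(-bias)).sum(axis=1))
        f_new -= f_new[0]                     # fix the arbitrary additive constant
        done = np.max(np.abs(f_new - f_k)) < tol
        f_k = f_new
        if done:
            break
    with np.errstate(divide="ignore"):
        pmf = -np.log(p)                      # empty bins become +inf
    pmf -= pmf[np.isfinite(pmf)].min()
    return mids, pmf
```

For a QM/MM reaction coordinate, the same routine applies once each window's sampled coordinate values and restraint parameters are collected; multiply by kT to report the profile in energy units.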

4. Analyzing the Impact of Point Mutations

The functional consequences of a single amino acid change (e.g., lysine to alanine) depend on atomic-level changes in electrostatics, side-chain packing, and hydrogen bonding.

- Supporting Data: A 2024 study on oncogenic RAS mutants found that AA simulations explicitly showed how a G12C mutation altered the dynamics of the switch II loop and nucleotide pocket, details coarse-grained models could not resolve.

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools for All-Atom Folding & Binding Studies

| Item | Function & Example |

|---|---|

| High-Performance Computing (HPC) Cluster/Cloud | Provides the parallel processing power (CPU/GPU) needed for microsecond+ AA simulations. Example: NVIDIA A100/A800 GPUs, Amazon EC2 P4d instances. |

| Specialized MD Hardware | Dedicated supercomputers for extreme sampling. Example: Anton 3, designed specifically for µs-ms AA MD. |

| All-Atom Force Field | Mathematical model defining energy potentials between atoms. Example: CHARMM36m, AMBER ff19SB, OPLS4. |

| Explicit Solvent Model | Water and ion models to solvate the system. Example: TIP3P, TIP4P-Ew water; Joung-Cheatham ions. |

| Enhanced Sampling Software | Algorithms to accelerate rare events like folding/unfolding. Example: PLUMED (for metadynamics, umbrella sampling). |

| Free Energy Calculation Suite | Tools for computing binding affinities. Example: FEP+ (Schrödinger), GROMACS with PMX for alchemical transformations. |

| Trajectory Analysis Toolkit | Software for processing and analyzing simulation data. Example: MDAnalysis, VMD, PyMOL, MDTraj. |

Workflow and Pathway Diagrams

Title: Decision Workflow for Choosing All-Atom Simulations

Title: All-Atom Free Energy Perturbation (FEP) Protocol

In the enduring debate between all-atom (AA) and coarse-grained (CG) molecular dynamics (MD) for protein folding and dynamics, the choice of model is dictated by the spatiotemporal scale of the biological question. This guide compares their performance in simulating large, complex biomolecular processes where CG models offer distinct advantages.

Performance Comparison: All-Atom vs. Coarse-Grained Models for Large Systems

The table below summarizes key performance metrics from recent studies simulating large biomolecular assemblies (e.g., ribosomes, viral capsids, membrane remodeling) over microsecond-to-millisecond scales.

| Metric | All-Atom (AA) Models (e.g., CHARMM36, AMBER) | Coarse-Grained (CG) Models (e.g., Martini, OPEP, AWSEM) | Experimental/Benchmark Reference |

|---|---|---|---|

| System Size Practical Limit | ~1-10 million atoms (e.g., a single ribosome) | ~1-10 million beads (equivalent to ~10-100 million atoms) | Cryo-EM structures of megadalton complexes |

| Time Scale Accessible | Nanoseconds to microseconds (standard) | Microseconds to milliseconds (routine) | FRET/stopped-flow folding kinetics |

| Computational Cost | ~1-1000 ns/day on 100-1000 CPUs (for ~100k-1M atoms) | ~10-100 µs/day on similar hardware (for 1-10M bead systems) | HPC benchmarking studies (2023-2024) |

| Accuracy: Native Structure | High (≤1-2 Å RMSD for folded cores) | Moderate (2-5 Å RMSD for topology, fold recognition) | PDB crystallographic structures |

| Accuracy: Dynamics/Pathways | High-resolution side-chain rotations, detailed energetics. | Captures large-scale conformational transitions, folding funnels. | Hydrogen-deuterium exchange (HDX-MS), smFRET |

| Key Strength for Large Problems | Atomic detail for binding affinity, specific interactions. | Reveals mesoscale assembly, folding nucleation, and long-timescale dynamics. | N/A |

Experimental Protocols for Cited Comparisons

1. Protocol: Millisecond Folding of a Multi-Domain Protein

- Objective: Simulate the coupled folding-and-binding of large, intrinsically disordered regions (IDRs) to a structured partner.

- CG Method: The AWSEM (Associative memory, Water mediated, Structure and Energy Model) force field.

- Procedure:

- Initialize the protein chain in an extended conformation.

- Run Langevin dynamics simulations at 300 K for 10-100 million steps.

- Perform extensive replica exchange molecular dynamics (REMD) to overcome barriers.

- Cluster trajectories to identify dominant folding pathways and metastable intermediates.

- Compare predicted binding interfaces and rates to single-molecule pull-down assay data.

- Key Outcome: CG simulations identified a critical nucleation site that directs the efficient folding of the IDR onto its partner, a mechanism difficult to probe with AA due to time-scale limitations.
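The REMD step needs a temperature ladder and an exchange criterion. A sketch, assuming geometric temperature spacing (a common heuristic, not something the protocol specifies) and the standard Metropolis swap rule; the 32 replicas spanning 300-500 K mirror the AWSEM protocol described earlier in this guide:

```python
import math

K_B = 0.0083144626  # Boltzmann constant in kJ/(mol K)


def geometric_temperatures(t_min: float, t_max: float, n_replicas: int) -> list[float]:
    """T_i = T_min * (T_max / T_min) ** (i / (n - 1)), i = 0 .. n - 1."""
    if n_replicas < 2:
        raise ValueError("need at least two replicas")
    ratio = t_max / t_min
    return [t_min * ratio ** (i / (n_replicas - 1)) for i in range(n_replicas)]


def exchange_probability(e_i: float, e_j: float, t_i: float, t_j: float) -> float:
    """Metropolis acceptance for swapping configurations held at T_i and T_j.

    e_i, e_j: potential energies (kJ/mol) of the configurations currently
    at T_i and T_j. Acceptance = min(1, exp[(beta_i - beta_j) (e_i - e_j)]).
    """
    delta = (1.0 / (K_B * t_i) - 1.0 / (K_B * t_j)) * (e_i - e_j)
    if delta >= 0.0:
        return 1.0                     # also avoids overflow in exp
    return math.exp(delta)
```

Geometric spacing keeps the ratio between neighboring temperatures constant, which roughly equalizes acceptance rates when the heat capacity varies little across the ladder; near a folding transition, spacing is often tuned further.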

2. Protocol: Assembly of a Viral Capsid Subunit

- Objective: Model the initial oligomerization of capsid proteins prior to full capsid formation.

- CG Method: The Martini force field (v3.0) with elastic network (ElNeDyn) restraints on secondary structure.

- Procedure:

- Construct CG models of 12-24 capsid protein monomers from AA templates.

- Solvate the system in a CG water/ion box at physiological salinity.

- Run multiple independent simulations (≥50 µs each) starting from randomly placed monomers.

- Analyze radial distribution functions, oligomer size distributions, and inter-protein contact maps.

- Validate against solution X-ray scattering (SAXS) profiles of early assembly intermediates.

- Key Outcome: Simulations revealed a non-cooperative, stepwise assembly mechanism driven by specific, electrostatically tuned interfaces.
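Oligomer size distributions can be extracted from each frame by treating monomers as graph nodes and contacts as edges. A minimal sketch, assuming monomer centers of mass and a distance cutoff as the contact criterion (bead-bead minimum distances are a common, stricter alternative), with minimum-image handling for a cubic box:

```python
from collections import Counter
from typing import Optional

import numpy as np


def oligomer_sizes(com, cutoff: float, box_length: Optional[float] = None) -> Counter:
    """Return {oligomer size: count} for one frame.

    Monomers whose centers of mass lie within `cutoff` are joined, and
    contacts are merged transitively with a union-find structure.
    """
    com = np.asarray(com, dtype=float)
    n = len(com)
    parent = list(range(n))

    def find(a: int) -> int:
        while parent[a] != a:
            parent[a] = parent[parent[a]]          # path halving
            a = parent[a]
        return a

    for i in range(n - 1):
        d = com[i + 1:] - com[i]
        if box_length is not None:
            d -= box_length * np.round(d / box_length)   # minimum image, cubic box
        close = np.nonzero(np.linalg.norm(d, axis=1) < cutoff)[0] + i + 1
        for j in close:
            ri, rj = find(i), find(int(j))
            if ri != rj:
                parent[rj] = ri
    cluster_size = Counter(find(a) for a in range(n))
    return Counter(cluster_size.values())
```

Accumulating these counters over frames and independent runs gives the oligomer size distribution as a function of time.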

Visualizations

Title: Model Selection Logic for Folding Simulations

Title: CG Simulation Folding Pathway

The Scientist's Toolkit: Research Reagent Solutions

| Reagent / Tool | Function in Large-Scale CG Folding/Assembly Studies |

|---|---|

| Martini Force Field (v3.0/v4.0) | A widely used CG force field where ~4 heavy atoms are mapped to one bead. Excellent for lipid membranes, protein-protein interactions, and long-timescale dynamics. |

| AWSEM Force Field | A knowledge-based CG model specifically optimized for protein folding and structure prediction, incorporating associative memory terms for native contacts. |

| OPEP Force Field | A CG model focused on accurate peptide and protein folding, with explicit treatment of hydrogen bonding and backbone orientation. |

| GROMACS (MD Engine) | High-performance molecular dynamics software package extensively optimized for running both AA and CG (especially Martini) simulations in parallel. |

| PLUMED | Library for free-energy calculations and enhanced sampling (e.g., metadynamics, umbrella sampling). Critical for driving and analyzing rare events in CG simulations. |

| VMD / PyMOL (with CG Plugins) | Visualization software adapted to render CG representations, allowing analysis of large assemblies and trajectories. |

| SAXS/SANS Profile Calculator | Computational tool (e.g., CRYSOL, FoXS) to calculate small-angle scattering profiles from CG simulation snapshots for direct validation against experimental data. |

| ElNeDyn / Gō-Model Restraints | Structure-based restraints (elastic networks or Gō-like native-contact potentials) applied within CG simulations to maintain secondary/tertiary structure fidelity while allowing large-scale motions. |

Emerging Hybrid and Multi-Scale Approaches in 2024

Comparative Analysis of Multi-Scale Protein Folding Simulation Platforms

This guide objectively compares the performance of leading simulation approaches that blend all-atom (AA) and coarse-grained (CG) methods, a core strategy in 2024's hybrid frameworks. The evaluation is framed within the ongoing research thesis investigating the optimal integration of accuracy (AA) and scale/speed (CG) for predictive protein folding and drug-target profiling.

Performance Comparison Table

Table 1: Benchmark Performance of Hybrid Methods on villin headpiece (HP35) Folding Simulation (2024 Data)

| Method / Platform | Temporal Reach (µs/day) | Accuracy (RMSD Å) | Free Energy Error (kcal/mol) | Key Integration Strategy | Computational Cost (Node-Hours) |

|---|---|---|---|---|---|

| AI-Enhanced HYBRID (OpenMM + DeePMD) | 15.2 | 1.05 | 0.8 | ML-potential driven AA/CG switching | 220 |

| Virtual-Site MARTINI 3.0+ | 450.0 | 3.20 | 2.5 | Systematic CG with virtual AA sites | 40 |

| Adaptive Resolution (AdResS) GROMACS | 8.5 (AA zone) | 1.15 | 1.1 | Dynamic spatial resolution boundary | 180 |

| Pure All-Atom (AMBER ff19SB) | 0.35 | 0.98 | 0.5 | Baseline (No CG) | 9500 |

| Pure CG (SIRAH 2.0) | 520.0 | 4.50 | 3.2 | Baseline (No AA) | 30 |

Detailed Experimental Protocols

Protocol 1: AI-Enhanced HYBRID Folding Workflow

- System Setup: HP35 sequence solvated in a cubic water box with 150 mM NaCl.

- Equilibration: 100 ns pure CG (MARTINI) simulation at 325 K to sample the unfolded ensemble.

- Selection & Switching: Conformations sampled every 10ns are analyzed by a trained classifier (Random Forest) for structural novelty. Selected frames are converted to AA representation using PULCHRA and SCWRL4.

- Refinement & Scoring: Converted AA structures undergo 2 ns relaxation with OpenMM (ff19SB/TIP3P). An ML potential (DeePMD) trained on AA data scores structural stability.

- Iterative Cycle: The scoring guides the selection of new regions for AA exploration, creating a feedback loop. The cycle repeats for 50 iterations.

- Data Integration: AA free energies are reweighted onto the CG landscape using the Boltzmann inversion method.
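The Boltzmann inversion step turns a (possibly reweighted) histogram of a coordinate into a free energy profile, F(x) = -k_B T ln P(x) + C. A minimal sketch, assuming per-frame weights are supplied by whatever reweighting scheme the workflow uses; the `weights` argument is a hypothetical hook, not part of the described protocol:

```python
import numpy as np

K_B = 0.0083144626  # Boltzmann constant in kJ/(mol K)


def boltzmann_inversion(values, bins, temperature=300.0, weights=None):
    """Free energy profile F(x) = -k_B T ln P(x), shifted so that min(F) = 0.

    values:  per-frame samples of a coordinate (e.g., RMSD or Q)
    weights: optional per-frame weights from a reweighting step
    Returns bin centers and F in kJ/mol; empty bins are +inf.
    """
    hist, edges = np.histogram(values, bins=bins, weights=weights, density=True)
    mids = 0.5 * (edges[:-1] + edges[1:])
    with np.errstate(divide="ignore"):
        free = -K_B * temperature * np.log(hist)
    free -= free[np.isfinite(free)].min()
    return mids, free
```

Only free energy differences are meaningful here; the additive constant C is fixed by setting the global minimum to zero.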

Protocol 2: Adaptive Resolution (AdResS) Benchmarking

- Domain Partitioning: The simulation box is divided into a high-resolution sphere (6 Å radius around the protein, AA) embedded in a low-resolution CG reservoir (MARTINI water).

- Force Mapping: A transition region of 2 Å width employs a weighting function to smoothly interpolate forces between AA and CG particles.

- Thermostatting: A Langevin thermostat is applied only in the CG region to prevent artificial heating.

- Production Run: A 1 µs simulation is performed using a modified GROMACS 2024.1 build. The AA region's dynamics are recorded for analysis.

- Analysis: Structural metrics (RMSD, Rg) from the AA zone are compared to pure AA reference simulations.
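The weighting function in the force-mapping step is usually a smooth switch between the two resolutions. A sketch of the standard force-based AdResS scheme, assuming a spherical AA zone of radius 6 Å and a 2 Å hybrid shell as in the protocol, with w(r) = cos^2(π (r - r_AA) / (2 d_hy)) across the shell and pair forces mixed as F = w_a w_b F_AA + (1 - w_a w_b) F_CG; the thermodynamic-force correction used in production AdResS is omitted:

```python
import numpy as np


def adress_weight(r, r_aa=6.0, d_hy=2.0):
    """Resolution weight: 1 inside the AA zone (r <= r_aa), numerically ~0
    beyond the hybrid shell (r >= r_aa + d_hy), cos^2 switching in between. Units: Å."""
    x = np.clip((np.asarray(r, dtype=float) - r_aa) / d_hy, 0.0, 1.0)
    return np.cos(0.5 * np.pi * x) ** 2


def mixed_pair_force(f_aa, f_cg, r_a, r_b, r_aa=6.0, d_hy=2.0):
    """Force-based AdResS interpolation for a pair of molecules a and b.

    r_a, r_b: distances of each molecule's mapping point from the AA-zone center.
    """
    lam = adress_weight(r_a, r_aa, d_hy) * adress_weight(r_b, r_aa, d_hy)
    return lam * np.asarray(f_aa, dtype=float) + (1.0 - lam) * np.asarray(f_cg, dtype=float)
```

Pairs deep in the AA zone feel pure atomistic forces, pairs with either partner in the reservoir feel pure CG forces, and the product of weights keeps the pair force symmetric under exchange of a and b.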

Visualizations

Title: AI-Driven Hybrid Simulation Feedback Loop

Title: Adaptive Resolution (AdResS) Spatial Domains

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Tools for Hybrid-Scale Folding Simulations

| Reagent / Tool | Provider / Package | Primary Function in Hybrid Workflows |

|---|---|---|

| MARTINI 3.0+ | Martini Community / GROMACS | Provides the foundational, well-validated CG force field with explicit virtual sites for finer detail. |

| DeePMD-kit | DeepModeling Community | Supplies machine-learning potentials trained on AA data to accurately score or refine CG/AA structures. |

| ForceBalance | Lee-Ping Wang Lab (UC Davis; originally developed in the Pande Lab, Stanford) | Optimizes hybrid force field parameters by systematically minimizing discrepancies against AA reference data. |

| VMD + PLUMED | University of Illinois / International Consortium | Enables trajectory analysis and enhanced sampling (e.g., metadynamics) across resolution boundaries. |

| GROMACS 2024+ (AdResS) | KTH Royal Institute of Technology | The leading production engine for adaptive-resolution simulations, allowing dynamic AA/CG switching. |

| CHARMM-GUI MMBuilder | CHARMM-GUI Team | Facilitates the initial setup of complex, multi-resolution simulation systems with proper boundary definitions. |

| Pytraj & MDTraj | Amber / Open Source | Lightweight Python libraries for analyzing large, heterogeneous simulation datasets from hybrid runs. |

Overcoming Computational Hurdles: Troubleshooting, Optimization, and Best Practices

Within the ongoing research thesis comparing all-atom (AA) versus coarse-grained (CG) protein folding simulations, a critical examination of AA methodologies reveals persistent challenges. While AA models offer high-resolution insights into folding mechanisms and drug binding, their practical application is fraught with pitfalls that can compromise data integrity and conclusions.

Sampling Limitations: The Timescale Dilemma

The most fundamental pitfall is inadequate sampling. Despite advances in computing, the timescales accessible to AA molecular dynamics (MD) simulations often remain orders of magnitude shorter than the slowest folding events for many proteins.

Table 1: Simulated vs. Experimental Folding Timescales

| Protein (PDB ID) | Experimental τ (µs) | Simulated τ (AA-MD) (µs) | Maximum Achievable AA Sampling (µs)* | Convergence Assessed? |

|---|---|---|---|---|

| Villin Headpiece (1YRF) | ~10 | ~1-5 (on specialized hardware) | ~10-20 (aggregate) | Often |

| WW Domain (2F21) | ~20 | ~5-15 | ~50 (aggregate) | Sometimes |

| BBA (1FME) | ~5 | ~1-4 | ~10 | Rarely |

| Lysozyme (1LYZ) | ~1000 | ~10-50 (unfolded states) | ~100 | No |

*Data aggregated from recent literature (2023-2024) including simulations on GPU clusters and specialized hardware like Anton2/3.

Experimental Protocol for Assessing Sampling: A standard protocol involves running multiple independent simulations (replicas) from different initial configurations. Convergence is evaluated by monitoring the decay of state-to-state autocorrelation functions and ensuring the reversibility of folding/unfolding events. The statistical inefficiency is calculated to determine the effective sample size.
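The statistical inefficiency g converts N correlated frames into roughly N/g independent samples. A minimal sketch, assuming an evenly sampled scalar time series and truncating the autocorrelation sum at its first non-positive value (one common convention; pymbar's timeseries module implements a more careful version):

```python
import numpy as np


def statistical_inefficiency(x) -> float:
    """g = 1 + 2 * sum_t (1 - t/N) C(t), truncated at the first C(t) <= 0."""
    x = np.asarray(x, dtype=float)
    n = len(x)
    dx = x - x.mean()
    var = dx.var()
    if var == 0.0:
        return 1.0
    g = 1.0
    for t in range(1, n - 1):
        c = np.dot(dx[:-t], dx[t:]) / ((n - t) * var)   # normalized autocorrelation
        if c <= 0.0:
            break
        g += 2.0 * c * (1.0 - t / n)
    return max(1.0, g)


def effective_sample_size(x) -> float:
    """Approximate number of statistically independent samples, N / g."""
    return len(x) / statistical_inefficiency(x)
```

The loop is quadratic in the worst case, which is acceptable for a sketch; FFT-based autocorrelation is the usual choice for long trajectories.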

Convergence Misinterpretation and Metrics

A common error is equating structural stability with conformational convergence. A simulation may appear stable yet sample only a local minimum.

Table 2: Convergence Metrics Comparison

| Metric | What it Measures | Pitfall if Used Alone | Recommended Threshold (AA Folding) |

|---|---|---|---|

| RMSD Plateau | Backbone stability relative to a reference. | Does not confirm global state sampling. | < 2-3 Å, but multi-modal analysis required. |

| Potential Energy | Stability of the force field energy. | Can be stable in incorrect folded states. | Fluctuations < 50 kJ/mol/atom. |

| State Population | Fraction of time in folded/unfolded basins. | Requires proper basin definition. | Population error < 20% across replicas. |

| Gelman-Rubin Statistic (R̂) | Statistical similarity between multiple replicas. | Requires significant computational investment. | R̂ < 1.1 for key reaction coordinates. |

Detailed Protocol for Convergence Testing: 1) Run ≥ 5 independent replicas with different random seeds for the longest feasible time. 2) Define order parameters (e.g., RMSD, fraction of native contacts Q, radius of gyration). 3) Calculate the potential of mean force (PMF) along 1-2 key coordinates for each replica. 4) Compute the Gelman-Rubin diagnostic (R̂) for these order parameters across replicas. Convergence is suggested only when R̂ falls to approximately 1.0-1.1.
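Step 4 can be sketched with the classic Gelman-Rubin potential scale reduction factor. This is a minimal sketch. It assumes each replica contributes an equal-length, already-equilibrated time series of one order parameter. It omits later refinements such as split-chain R̂, and `gelman_rubin` is a hypothetical helper name.

```python
import math
from statistics import mean, variance
from typing import Sequence


def gelman_rubin(chains: Sequence[Sequence[float]]) -> float:
    """Potential scale reduction factor R-hat for one order parameter.

    chains: one equal-length time series per independent replica.
    """
    m = len(chains)
    if m < 2:
        raise ValueError("need at least two replicas")
    n = len(chains[0])
    if n < 2 or any(len(c) != n for c in chains):
        raise ValueError("replicas must have equal length >= 2")

    chain_means = [mean(c) for c in chains]
    grand_mean = mean(chain_means)
    # Between-replica variance B and mean within-replica variance W.
    b = n / (m - 1) * sum((cm - grand_mean) ** 2 for cm in chain_means)
    w = mean([variance(c) for c in chains])
    if w == 0.0:
        raise ValueError("zero within-replica variance; R-hat undefined")
    # Pooled estimate of the marginal variance.
    var_hat = (n - 1) / n * w + b / n
    return math.sqrt(var_hat / w)


# Usage: replicas that agree versus replicas trapped in different basins (e.g., Q values).
agreeing = [[0.8, 0.9, 0.7, 0.85], [0.75, 0.9, 0.8, 0.7]]
trapped = [[0.1, 0.2, 0.1, 0.2], [0.9, 0.8, 0.9, 0.8]]
print(round(gelman_rubin(agreeing), 3))  # below 1.1
print(round(gelman_rubin(trapped), 3))   # far above 1.1
```

A value near 1 only says the replicas look alike. If every replica misses the same basin, R̂ can still be low. For that reason the protocol pairs R̂ with per-replica PMFs.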

Force Field Artifacts and Validation

Modern force fields (e.g., CHARMM36, AMBER ff19SB, a99SB-disp) have reduced but not eliminated systematic biases. Artifacts can manifest as overly stable or unstable secondary/tertiary structures.

Table 3: Common Artifacts in AA Force Fields (2024 Perspective)

| Force Field Family | Known Artifact (Pitfall) | Compensatory Method | Experimental Validation Required |
|---|---|---|---|
| Traditional Two-Body (ff19SB) | Over-stabilization of α-helices; underestimation of π-π stacking. | Integrate with TIP4P-D water model; add corrective maps (CMAP). | NMR scalar (J) couplings. |
| Polarizable (AMOEBA, Drude) | Minimal helical bias; better salt-bridge description. | High computational cost limits sampling. | Dielectric relaxation data. |
| Neural Network Learned (ChIGN, ANI) | Potential transferability issues; black-box uncertainty. | Train on diverse quantum data (QM/MM). | Ab initio folding pathway data. |

Validation Protocol: Simulations must be validated against experimental observables not used in force field parameterization. Key experiments include: 1) NMR Residual Dipolar Couplings (RDCs) to validate native state ensembles. 2) Small-Angle X-ray Scattering (SAXS) profiles to assess global compactness. 3) Temperature-jump spectroscopy data to compare folding relaxation rates.
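The first two comparisons reduce to simple agreement scores. This is a minimal sketch of two widely used forms. The first is the RDC quality factor Q, the root-sum-square deviation normalized by the observed couplings. The second is a reduced χ² between experimental and back-calculated SAXS intensities after fitting a single scale factor. The function names are hypothetical. The N − 1 normalization of χ² is an assumption, since conventions differ between tools.

```python
import math
from typing import Sequence, Tuple


def rdc_q_factor(d_obs: Sequence[float], d_calc: Sequence[float]) -> float:
    """Q = sqrt(sum (D_obs - D_calc)^2 / sum D_obs^2); 0 means perfect agreement."""
    if len(d_obs) != len(d_calc) or not d_obs:
        raise ValueError("need matching, non-empty RDC lists")
    num = sum((o - c) ** 2 for o, c in zip(d_obs, d_calc))
    den = sum(o ** 2 for o in d_obs)
    return math.sqrt(num / den)


def saxs_chi2(i_exp: Sequence[float], i_calc: Sequence[float],
              sigma: Sequence[float]) -> Tuple[float, float]:
    """Reduced chi^2 between experimental and computed SAXS profiles.

    The computed profile is multiplied by the weighted least-squares scale
    factor c before comparison. Returns (chi2, c).
    """
    n = len(i_exp)
    if not (n == len(i_calc) == len(sigma)) or n < 2:
        raise ValueError("profiles must have equal length >= 2")
    w = [1.0 / s ** 2 for s in sigma]
    c = sum(wi * e * m for wi, e, m in zip(w, i_exp, i_calc)) / sum(
        wi * m ** 2 for wi, m in zip(w, i_calc)
    )
    chi2 = sum(((e - c * m) / s) ** 2 for e, m, s in zip(i_exp, i_calc, sigma)) / (n - 1)
    return chi2, c


# Usage with toy numbers (not real data).
print(rdc_q_factor([10.0, -5.0, 3.0], [9.0, -4.0, 3.5]))
print(saxs_chi2([2.0, 4.0, 6.0], [1.0, 2.0, 3.0], [0.1, 0.1, 0.1]))
```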

Comparative Workflow: AA vs. CG for Folding Studies

The choice between AA and CG hinges on the scientific question, balancing resolution against sampling.

Diagram: Decision Workflow: AA vs. CG for Folding Simulations

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Toolkit for Robust AA Folding Studies

| Item (Software/Service) | Category | Function | Key Consideration |
|---|---|---|---|
| GROMACS 2024.1 | MD Engine | High-performance, GPU-accelerated simulation. | Optimal for large-scale sampling on HPC clusters. |
| CHARMM36m / a99SB-disp | Force Field | Parameter sets defining atomistic interactions. | Choice depends on protein class; a99SB-disp excels for disordered regions. |
| PLUMED 2.9 | Enhanced Sampling | Implements metadynamics, umbrella sampling, etc. | Critical to overcome sampling pitfalls; requires expert tuning. |
| AmberTools23 | MD Engine & Analysis | Suite for simulation & analysis (especially NMR). | Integral for force field development and validation. |
| MDAnalysis 2.5 | Analysis Library | Python toolkit for analyzing trajectories. | Essential for calculating custom convergence metrics. |
| Folding@home | Distributed Computing | Leverages volunteer computing for aggregate sampling. | Provides µs-ms aggregate sampling to address the timescale gap. |
| AlphaFold2 DB | Structural Prediction | Provides likely native state for validation. | Caution: do not use as the sole folding target; it is a prediction. |
| Protein Data Bank | Experimental Data | Source of starting structures & validation data. | Cross-reference with NMR ensemble data where available. |
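One example of a custom convergence metric is the fraction of native contacts Q, an order parameter suggested in the convergence protocol. It can be computed per frame from pair distances. The sketch below is plain Python, not MDAnalysis code. MDAnalysis provides its own contact analyses in `MDAnalysis.analysis.contacts`.

The sketch makes these assumptions:
- The native contact pairs and their native distances have already been extracted.
- It uses the soft-switching definition of Best, Hummer and Eaton.
- It sets β = 5 Å⁻¹ and λ = 1.8. These are values commonly quoted for all-atom models and should be treated as assumptions.

```python
import math
from typing import Sequence

BETA = 5.0    # smoothing parameter in 1/Å (assumed value)
LAMBDA = 1.8  # tolerance factor on native distances for all-atom models (assumed value)


def fraction_native_contacts(r: Sequence[float], r0: Sequence[float],
                             beta: float = BETA, lam: float = LAMBDA) -> float:
    """Soft-switching fraction of native contacts Q for one frame.

    r:  current distances (Å) for each native contact pair.
    r0: the same pairs' distances (Å) in the native structure.
    """
    if len(r) != len(r0) or not r0:
        raise ValueError("need matching, non-empty distance lists")
    total = 0.0
    for ri, r0i in zip(r, r0):
        x = beta * (ri - lam * r0i)
        # Very large x means the contact is fully broken; avoid exp() overflow.
        total += 0.0 if x > 700.0 else 1.0 / (1.0 + math.exp(x))
    return total / len(r0)


# Usage: a native-like frame versus a frame in which every contact is stretched.
native_r0 = [5.0] * 10
print(fraction_native_contacts([5.0] * 10, native_r0))   # close to 1
print(fraction_native_contacts([15.0] * 10, native_r0))  # close to 0
```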

Navigating the pitfalls of all-atom folding simulations requires a mindful, multi-pronged strategy: employing robust convergence metrics, leveraging enhanced sampling techniques judiciously, and continuously validating against orthogonal experimental data. Within the AA vs. CG research thesis, AA remains indispensable for mechanistic and drug-binding insights but must be applied with acute awareness of its inherent limitations in sampling and potential for force field artifacts. The integrative approach, using CG models to explore global dynamics and AA to refine atomic details, presents the most promising path forward.

This guide compares the performance and challenges of popular coarse-grained (CG) molecular dynamics (MD) models for protein folding simulations against the benchmark of all-atom (AA) simulations. Framed within the broader research thesis on AA vs. CG folding, we focus on three core challenges: force field parameterization, backmapping fidelity, and the inherent loss of structural detail. Performance is evaluated based on accuracy in predicting folded structures, computational cost, and the ability to recover atomic details.

Performance Comparison Table

Table 1: Coarse-Grained Model Performance Comparison for Folding Simulations

| Model Name | Resolution (atoms/bead) | Native State Accuracy (Cα RMSD) | Typical Fold Time Speed-up vs. AA | Key Parameterization Method | Backmapping Tool Availability |
|---|---|---|---|---|---|
| All-Atom (CHARMM36/mTIP3P) | 1:1 | ~1.0-2.0 Å (reference) | 1x (reference) | Quantum Mechanics/AA Fit | N/A |
| MARTINI 3 | ~4:1 | 4.0-8.0 Å (stable fold) | 100-1000x | Hybrid: top-down (partitioning thermodynamics) for nonbonded terms, bottom-up (AA-derived) for bonded terms | Backward, CG2AT |
| AWSEM (with PDB) | 3:1 (CA-based) | 2.5-5.0 Å (for designed seq.) | 10,000x+ | Knowledge-based (Go-like + PDB) | PULCHRA, REMO |
| OPEP | 6:1 (SC-heavy) | 2.0-4.0 Å (for peptides) | 1000-10,000x | Top-down (AA fitting) | PULCHRA, Path-based |
| Cα-based Gō Model | Varies | < 3.0 Å (if native known) | 100,000x+ | Knowledge-based (Native-centric) | Limited, often custom |

Notes: RMSD values are for well-folded small proteins/peptides (<100 residues). Speed-ups are approximate and system-dependent. The backmapping tools listed are those most commonly used with each model.
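The "atoms/bead" resolution refers to a forward mapping that assigns groups of atoms to each bead. At ~4:1, a 300,000-atom system becomes roughly 75,000 beads. This is a minimal sketch that places each bead at the mass-weighted center of its atoms. The bead names and the atom-to-bead assignment are hypothetical. Real MARTINI mappings are defined per residue type, and some schemes use centers of geometry instead of centers of mass. Backmapping is the inverse, one-to-many problem. Many atomic arrangements map to the same bead positions, which is why reconstructed models need relaxation.

```python
from typing import Dict, List, Sequence, Tuple

Vec3 = Tuple[float, float, float]


def map_to_beads(coords: Sequence[Vec3], masses: Sequence[float],
                 mapping: Dict[str, List[int]]) -> Dict[str, Vec3]:
    """Place each CG bead at the center of mass of its assigned atoms.

    mapping: hypothetical bead name -> list of atom indices.
    """
    beads: Dict[str, Vec3] = {}
    for bead, atom_ids in mapping.items():
        total_mass = sum(masses[i] for i in atom_ids)
        beads[bead] = tuple(
            sum(masses[i] * coords[i][k] for i in atom_ids) / total_mass
            for k in range(3)
        )
    return beads


# Usage: four toy atoms mapped onto a hypothetical backbone bead and side-chain bead.
coords = [(0.0, 0.0, 0.0), (2.0, 0.0, 0.0), (0.0, 0.0, 0.0), (0.0, 0.0, 13.0)]
masses = [1.0, 1.0, 12.0, 1.0]
print(map_to_beads(coords, masses, {"BB": [0, 1], "SC1": [2, 3]}))
```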

Table 2: Quantitative Comparison of Backmapping Accuracy & Computational Cost

| Process/Stage | All-Atom Simulation (Explicit Solvent) | Coarse-Grained Simulation (MARTINI) | Backmapping (e.g., Backward) & Relaxation |
|---|---|---|---|
| System Size (10k-atom protein) | ~300,000 atoms (incl. solvent) | ~75,000 beads | ~300,000 atoms |
| Wall-Clock Time for 100 ns | ~24-48 hours (GPU) | ~1-2 hours (GPU) | ~6-12 hours (GPU) |
| Memory Usage | High (~16-32 GB) | Low (~4-8 GB) | High (~16-32 GB) |
| Key Output Metric | Atomic detail, rotamers, solvation | Folded state topology, dynamics | Reconstructed atomistic model quality |
| Typical Side-Chain RMSD | N/A (reference) | N/A | 1.5 - 3.0 Å (from AA reference) |

Experimental Protocols

Protocol 1: Benchmarking CG Folding Accuracy (e.g., AWSEM/OPEP)

- System Preparation: Select a set of small, fast-folding proteins (e.g., villin headpiece, WW domain, chignolin).

- Simulation Setup: For each protein, run 50-100 independent CG folding simulations from extended/unfolded starting conformations.

  - AWSEM: Use the `folding_awsm.py` script with the associative memory Hamiltonian and implicit solvent.

  - OPEP: Utilize the OPEP force field in GROMACS-LS with Langevin dynamics.

- Control: Run a single, long all-atom simulation (CHARMM36/TIP3P) for one target as a reference.

- Analysis: Calculate the Cα Root Mean Square Deviation (RMSD) of the lowest-energy/final CG structures to the native PDB structure. Compute the fraction of simulations that converge to a structure within 3.0 Å Cα RMSD of the native state.
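The analysis step needs an RMSD computed after optimal superposition, followed by a simple success fraction. This is a minimal sketch using the Kabsch algorithm in NumPy. It assumes Cα coordinates have already been extracted as matching (N, 3) arrays in Å. The function names are hypothetical. In practice, MDAnalysis or similar libraries provide equivalent RMSD routines.

```python
import numpy as np


def kabsch_rmsd(p, q) -> float:
    """Cα RMSD between two (N, 3) coordinate sets after optimal superposition."""
    p = np.asarray(p, dtype=float)
    q = np.asarray(q, dtype=float)
    if p.shape != q.shape or p.ndim != 2 or p.shape[1] != 3:
        raise ValueError("expected two (N, 3) arrays of equal shape")
    # Remove translation by centering both structures at the origin.
    p_c = p - p.mean(axis=0)
    q_c = q - q.mean(axis=0)
    # Kabsch: SVD of the 3x3 covariance matrix gives the optimal rotation.
    u, _, vt = np.linalg.svd(p_c.T @ q_c)
    d = np.sign(np.linalg.det(vt.T @ u.T))  # correct for a possible reflection
    rot = vt.T @ np.diag([1.0, 1.0, d]) @ u.T
    diff = p_c @ rot.T - q_c
    return float(np.sqrt((diff ** 2).sum() / len(p)))


def fraction_folded(rmsds, cutoff: float = 3.0) -> float:
    """Fraction of runs whose final structure lies within `cutoff` Å of native."""
    rmsds = np.asarray(rmsds, dtype=float)
    return float((rmsds <= cutoff).mean())


# Usage: a rotated and translated copy of the "native" structure has ~0 RMSD.
rng = np.random.default_rng(0)
native = rng.normal(size=(20, 3)) * 5.0
rot_z = np.array([[0.0, -1.0, 0.0], [1.0, 0.0, 0.0], [0.0, 0.0, 1.0]])
model = native @ rot_z.T + np.array([10.0, -3.0, 2.0])
print(kabsch_rmsd(model, native))
print(fraction_folded([1.0, 2.5, 3.5, 4.0]))  # 0.5
```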

Protocol 2: Evaluating Backmapping Fidelity (e.g., MARTINI to AA)

- Input Generation: Use a CG (MARTINI 3) simulation trajectory that samples the folded state of a protein.

- Backmapping: Apply the `backward.py` (or CG2AT) tool to reconstruct an all-atom model for every 100th frame of the CG trajectory.

- Energy Relaxation: Subject each reconstructed atomistic model to a short energy minimization (100-500 steps) and a restrained MD simulation (50-100 ps) in explicit solvent to relieve steric clashes.

- Validation: Compare the backmapped structures against:

  - A reference all-atom MD simulation of the pre-folded protein.

  - The experimental crystal/NMR structure.

- Metrics: Calculate all-heavy-atom RMSD, side-chain dihedral angle distributions (χ1, χ2), and number of steric clashes (bad contacts) pre- and post-relaxation.
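Two of these metrics can be sketched directly from coordinates. The first is a side-chain dihedral such as χ1, defined by atoms N, CA, CB and CG. The second is a count of atom pairs that sit closer than a clash cutoff.

This is a minimal pure-Python sketch with these assumptions:
- The dihedral uses the standard atan2 formula with the IUPAC sign convention.
- The 2.0 Å clash cutoff is an assumed illustrative value. Clash definitions vary between tools, for example van der Waals overlap thresholds.
- Bonded pairs must be excluded by the caller.

```python
import math
from itertools import combinations
from typing import AbstractSet, FrozenSet, Sequence, Tuple

Vec3 = Tuple[float, float, float]


def _sub(a: Vec3, b: Vec3) -> Vec3:
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])


def _dot(a: Vec3, b: Vec3) -> float:
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]


def _cross(a: Vec3, b: Vec3) -> Vec3:
    return (a[1] * b[2] - a[2] * b[1], a[2] * b[0] - a[0] * b[2], a[0] * b[1] - a[1] * b[0])


def dihedral(p0: Vec3, p1: Vec3, p2: Vec3, p3: Vec3) -> float:
    """Dihedral angle p0-p1-p2-p3 in degrees, between -180 and 180 (IUPAC sign)."""
    b1, b2, b3 = _sub(p1, p0), _sub(p2, p1), _sub(p3, p2)
    n1, n2 = _cross(b1, b2), _cross(b2, b3)
    y = math.sqrt(_dot(b2, b2)) * _dot(b1, n2)
    x = _dot(n1, n2)
    return math.degrees(math.atan2(y, x))


def count_clashes(coords: Sequence[Vec3], cutoff: float = 2.0,
                  excluded: AbstractSet[FrozenSet[int]] = frozenset()) -> int:
    """Count atom pairs closer than `cutoff` Å, skipping pairs listed in `excluded`."""
    clashes = 0
    for i, j in combinations(range(len(coords)), 2):
        if frozenset((i, j)) in excluded:
            continue  # e.g., covalently bonded pairs supplied by the caller
        if math.dist(coords[i], coords[j]) < cutoff:
            clashes += 1
    return clashes


# Usage: chi1 would be dihedral(N, CA, CB, CG); here, toy coordinates.
print(dihedral((1, 0, 0), (0, 0, 0), (0, 0, 1), (0, 1, 1)))  # 90.0
print(count_clashes([(0, 0, 0), (1, 0, 0), (5, 0, 0)]))      # 1
```

Computing both quantities before and after relaxation makes it easy to see whether minimization removed clashes without distorting the χ distributions.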

Visualization of Workflows

Diagram 1: CG Folding & Backmapping Validation Pipeline

Diagram 2: Key Challenges in CG Protein Modeling

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools and Resources for CG Folding Research

| Item Name | Category | Primary Function | Key Consideration |
|---|---|---|---|
| GROMACS | MD Software | High-performance engine for running both AA and CG (MARTINI, OPEP) simulations. | Extensive community tools for analysis and trajectory processing. |
| MARTINI 3 Force Field | CG Force Field | Provides parameters for biomolecules and solvents at ~4:1 resolution for dynamics and folding. | Parameterization is molecule-specific; requires careful system setup. |
| AWSEM Suite | CG Model & Code | Implements the associative memory, water-mediated, structure and energy model for protein folding. | Highly efficient for folding predictions but requires template or memory. |
| Backward/CG2AT | Backmapping Tool | Reconstructs atomistic coordinates from MARTINI CG trajectories. | Output requires significant energy minimization to resolve clashes. |
| PULCHRA/REMO | Backmapping Tool | Rebuilds full-atom protein structures from Cα-only traces (common for Gō/AWSEM). | Fast but may not accurately reconstruct all side-chain conformations. |
| CHARMM36 | AA Force Field | Widely used all-atom force field for generating reference data and relaxing backmapped structures. | Serves as the accuracy benchmark for evaluating CG model predictions. |
| VMD/ChimeraX | Visualization | Visualizes and analyzes trajectories, compares structures, and calculates RMSD/metrics. | Critical for qualitative assessment of folding and backmapping results. |
| PLUMED | Enhanced Sampling Plugin | Facilitates metadynamics, umbrella sampling, etc., to accelerate rare events like folding in AA/CG. | Can be used to calculate free energy landscapes of folding. |

This guide provides an objective comparison of hardware strategies for molecular dynamics (MD) simulations, specifically within the context of all-atom versus coarse-grained protein folding research. The analysis is based on current performance benchmarks and cost data.

Performance Comparison: GPU-Accelerated MD Simulations

The following table compares the performance of popular MD software on different hardware platforms for a standard benchmark system (e.g., DHFR in explicit solvent). Performance is measured in nanoseconds simulated per day (ns/day).

Table 1: Performance Benchmark for MD Software (DHFR System)

| Software/Model Type | Hardware Configuration (Cloud Instance) | Approx. Performance (ns/day) | Relative Cost per 100 ns ($) |
|---|---|---|---|
| All-Atom (AMBER) | 1x NVIDIA A100 (Azure ND A100 v4) | 120 | 5.80 |
| All-Atom (AMBER) | 1x NVIDIA V100 (AWS p3.2xlarge) | 75 | 7.20 |
| All-Atom (GROMACS) | 1x NVIDIA A100 (Google Cloud a2-highgpu) | 140 | 5.10 |
| Coarse-Grained (MARTINI, GROMACS) | 1x NVIDIA A100 (Azure ND A100 v4) | 850* | 0.85 |
| Coarse-Grained (MARTINI, GROMACS) | CPU Cluster (AWS c6i.32xlarge, 64 vCPUs) | 45* | 12.50 |

*Coarse-grained throughput is not directly comparable to all-atom throughput in physical time. The smoother CG energy landscape accelerates dynamics, so each simulated CG nanosecond typically corresponds to more than one nanosecond of real dynamics. Cost estimates use cloud instance pricing (Spot/Preemptible where applicable) for a 24-hour run.
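The throughput and cost columns are linked by simple arithmetic. Simulating a target length at a given ns/day rate takes 24 × target / rate wall-clock hours. Multiplying that by an hourly instance price gives the cost. This is a minimal sketch in which the hourly rate is a hypothetical input, not a quoted cloud price.

```python
def wall_clock_hours(ns_target: float, ns_per_day: float) -> float:
    """Hours of wall-clock time needed to simulate ns_target at ns_per_day."""
    if ns_per_day <= 0:
        raise ValueError("throughput must be positive")
    return 24.0 * ns_target / ns_per_day


def cost_for_target(ns_per_day: float, hourly_rate_usd: float,
                    ns_target: float = 100.0) -> float:
    """Cost (USD) to simulate ns_target, given a hypothetical hourly instance price."""
    return wall_clock_hours(ns_target, ns_per_day) * hourly_rate_usd


# Usage: 120 ns/day needs 20 h for 100 ns; at a hypothetical $2.50/h that is $50.
print(wall_clock_hours(100.0, 120.0))  # 20.0
print(cost_for_target(120.0, 2.50))    # 50.0
```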

Cost-Benefit Analysis: Cloud vs. On-Premise Cluster

Table 2: 3-Year Total Cost of Ownership (TCO) Projection

| Strategy | Upfront Capital Cost | Ongoing Operational Cost (3 yrs) | Estimated Simulated Time (All-Atom, ns) | Effective Cost per 100 ns |
|---|---|---|---|---|
| On-Premise GPU Cluster (8x A100) | $350,000 | $75,000 (power, cooling, admin) | ~3,150,000 | 13.50 |
| Cloud Burst (Hybrid) | $50,000 (local nodes) | Variable: $150,000 (cloud spend) | ~4,200,000 | 9.50 |
| Full Cloud (Elastic) | $0 | $200,000 (committed use discounts) | ~3,900,000 | 10.25 |

Assumptions: On-premise costs include hardware depreciation. Cloud costs use a mix of pricing models. Simulated time is a projection based on benchmarked performance and estimated utilization.
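The effective-cost column can be read as total spend divided by simulated time in 100 ns units. This is a minimal sketch of that calculation, assuming capital and operational costs are simply summed over the three years without discounting. The function name is hypothetical. Applied to the on-premise row, it gives about $13.49 per 100 ns, consistent with the ~13.50 shown.

```python
def effective_cost_per_100ns(capital_usd: float, operating_usd: float,
                             simulated_ns: float) -> float:
    """(capital + operating cost) / (simulated nanoseconds / 100), undiscounted."""
    if simulated_ns <= 0:
        raise ValueError("simulated time must be positive")
    return (capital_usd + operating_usd) / (simulated_ns / 100.0)


# Usage: the on-premise row ($350,000 + $75,000 over ~3,150,000 ns).
on_prem = effective_cost_per_100ns(350_000, 75_000, 3_150_000)
print(f"{on_prem:.2f}")  # 13.49
```

The same function can be applied to any row as a quick consistency check when utilization or pricing assumptions change.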

Experimental Protocols for Cited Benchmarks

1. MD Software Performance Benchmarking Protocol:

- System Preparation: The benchmark uses the DHFR protein in explicit TIP3P water (23,558 atoms in total). Systems are built and minimized using each package's own tools (e.g., `tleap` for AMBER, `gmx pdb2gmx` for GROMACS).

- Equilibration: A standard two-phase equilibration is performed: NVT (constant particle number, volume, and temperature) for 100 ps, followed by NPT (constant particle number, pressure, and temperature) for 1 ns.