Tackling the Gene Ontology Long-Tail Problem: AI Solutions and Best Practices for Precision Annotations

This article addresses the persistent challenge of the Gene Ontology (GO) long-tail problem, where a vast majority of genes lack comprehensive, high-quality annotations.

Abstract

This article addresses the persistent challenge of the Gene Ontology (GO) long-tail problem, where a vast majority of genes lack comprehensive, high-quality annotations. Targeting researchers, scientists, and drug development professionals, it explores the biological and computational roots of this annotation gap. The piece then details cutting-edge methodological solutions, including machine learning, community-driven biocuration, and text-mining advancements. It provides practical troubleshooting guidance for using sparse annotations and evaluates the performance of various predictive tools. Finally, the article synthesizes key strategies for improving genomic discovery and therapeutic target validation through more complete functional profiling.

What is the GO Long-Tail Problem? Uncovering the Causes and Impact on Genomic Research

Technical Support Center

Troubleshooting Guides & FAQs

Q1: What does the "GO long-tail" mean and why is it a problem for my functional analysis? A: The Gene Ontology (GO) long-tail refers to the large majority of genes/proteins that have sparse or no experimental annotation, dominated instead by electronic inferences (IEA). This creates bias, where well-studied genes (e.g., human, cancer-related) are over-represented in analyses, while "tail" genes are functionally opaque, compromising pathway analysis and target discovery.

Q2: My enrichment analysis for a novel gene list shows no significant GO terms. Is my experiment flawed? A: Not necessarily. This is a classic symptom of the long-tail problem. Your gene list may be enriched for poorly annotated genes. Before concluding biological insignificance, try:

- Check Annotation Status: Use the table below to quantify the annotation bias in your dataset.

- Use Broader Evidence Codes: Temporarily include annotations inferred from electronic annotation (IEA) to see if any patterns emerge, then manually curate.

- Orthology-Based Transfer: If working in a non-model organism, consider using tools like Ensembl Compara to transfer experimental annotations from orthologs in well-annotated species, with careful manual review.

Q3: How can I assess the annotation bias in my own dataset before starting analysis? A: Follow this protocol to quantify the "long-tail" in your gene set.

Protocol 1: Quantifying Annotation Bias in a Gene Set

- Input: Your gene list (e.g., differentially expressed genes).

- Resource: Query the UniProt-GOA database or use the biomaRt R package.

- Action: For each gene, retrieve:

- Count of all GO annotations.

- Count of annotations with experimental evidence codes (EXP, IDA, IPI, IMP, IGI, IEP).

- Count of annotations with computational evidence codes (mainly IEA).

- Analysis: Calculate the percentage of genes with zero experimental annotations. Categorize genes as "Well-Annotated" (≥5 experimental annotations), "Moderately Annotated" (1-4 experimental annotations), or "Long-Tail" (0 experimental annotations).

- Output: A summary table (see example below) and a histogram of experimental annotation counts.

Table 1: Example Annotation Audit for a Hypothetical Gene Set (n=500)

| Annotation Category | Number of Genes | Percentage | Primary Evidence Type |
|---|---|---|---|
| Well-Annotated | 85 | 17% | Experimental (EXP, IDA, etc.) |
| Moderately Annotated | 145 | 29% | Mixed (Experimental & Computational) |
| Long-Tail (Poorly Annotated) | 270 | 54% | Computational (IEA) only or None |
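The audit in Protocol 1 can be sketched in a few lines of Python. This is a minimal illustration, not a reference implementation: it assumes the annotations have already been retrieved as `(gene, go_id, evidence_code)` tuples, and it treats genes with 1 to 4 experimental annotations as "Moderately Annotated" to match the three rows of Table 1. The function names, gene names, and GO IDs are hypothetical placeholders.

```python
from collections import Counter

# Experimental evidence codes listed in Protocol 1.
EXPERIMENTAL_CODES = {"EXP", "IDA", "IPI", "IMP", "IGI", "IEP"}


def audit_annotations(annotations, gene_list, well_annotated_min=5):
    """Classify each gene by its number of experimental GO annotations.

    annotations: iterable of (gene, go_id, evidence_code) tuples.
    gene_list:   genes to audit; genes absent from `annotations` count as Long-Tail.
    """
    exp_counts = Counter()
    for gene, _go_id, code in annotations:
        if code in EXPERIMENTAL_CODES:
            exp_counts[gene] += 1

    categories = {}
    for gene in gene_list:
        n_exp = exp_counts[gene]  # Counter returns 0 for unseen genes
        if n_exp >= well_annotated_min:
            categories[gene] = "Well-Annotated"
        elif n_exp == 0:
            categories[gene] = "Long-Tail"
        else:
            # Assumption: 1..(min-1) experimental annotations = "Moderately Annotated"
            categories[gene] = "Moderately Annotated"
    return categories


def summarize(categories):
    """Return {category: (number of genes, percentage of the gene list)}."""
    total = len(categories)
    counts = Counter(categories.values())
    return {cat: (n, 100.0 * n / total) for cat, n in counts.items()}


# Hypothetical toy input: placeholder gene names and GO IDs.
toy_annotations = (
    [("GENE_A", f"GO:000000{i}", "IDA") for i in range(1, 6)]
    + [("GENE_B", "GO:0000010", "IMP"),
       ("GENE_B", "GO:0000011", "IEA"),
       ("GENE_C", "GO:0000012", "IEA")]
)
toy_genes = ["GENE_A", "GENE_B", "GENE_C", "GENE_D"]

toy_categories = audit_annotations(toy_annotations, toy_genes)
toy_summary = summarize(toy_categories)
for category, (n, pct) in toy_summary.items():
    print(f"{category}: {n} genes ({pct:.0f}%)")
```

Counting only experimental codes means a gene with dozens of IEA annotations still lands in the long tail, which is the bias the protocol is meant to expose.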

Q4: What are the best experimental strategies to annotate a "long-tail" gene of unknown function? A: Focus on high-throughput, systematic approaches.

Protocol 2: A Pipeline for Initial Functional Characterization

- CRISPR Knockout/knockdown: Generate loss-of-function model. Perform a broad phenotypic screen (e.g., cell viability, morphology, high-content imaging).

- Affinity Purification Mass Spectrometry (AP-MS): Identify physical interaction partners. Co-purified proteins with known functions provide strong functional clues.

- Transcriptomic/Proteomic Profiling: Compare knockout vs. wild-type to see which pathways are dysregulated.

- Subcellular Localization: Tag protein with GFP and image to determine compartment (e.g., nucleus, mitochondria).

- Data Integration: Use the results from steps 1-4 to construct a functional hypothesis, which can then be tested with targeted experiments.

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Reagents for Functional Annotation Experiments

| Reagent / Tool | Function in Annotation Pipeline | Example Product/Catalog |
|---|---|---|
| CRISPR-Cas9 Knockout Kit | Creates stable loss-of-function cell lines for phenotypic screening. | Synthego CRISPR Kit, Horizon Discovery ENGINE cell lines. |
| Tandem Affinity Purification (TAP) Tag Vectors | For high-confidence protein complex purification prior to MS. | Thermo Fisher Pierce Anti-DYKDDDDK Affinity Resin. |
| Proteome-Wide GFP-Nanobody | Isolates GFP-tagged protein and its interactors for AP-MS. | ChromoTek GFP-Trap Agarose. |
| Live-Cell Imaging Dyes | Marks organelles (nucleus, ER, mitochondria) for co-localization studies. | Thermo Fisher MitoTracker, Cell Navigator staining kits. |
| Phospho-Specific Antibody Arrays | Quickly profiles signaling pathway activation in knockout cells. | RayBio C-Series Phosphorylation Antibody Array. |

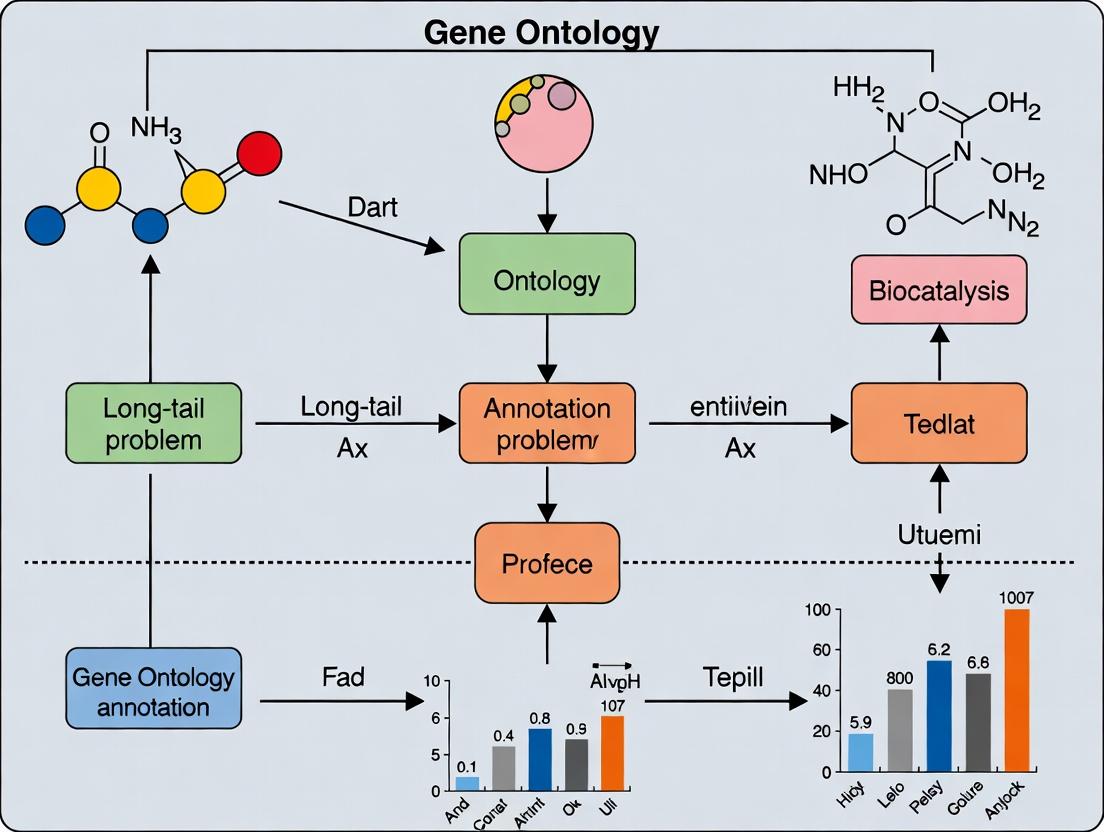

Visualizing the Annotation Gap and Workflow

Diagram 1: GO Annotation Distribution & Long-Tail

Diagram 2: Pipeline for Annotating Long-Tail Genes

Troubleshooting Guide & FAQ

Q1: Our lab's research focuses on a poorly-annotated human gene associated with a rare disease. We performed a standard sequence homology search using BLAST against model organism databases (e.g., mouse, yeast) but found no high-confidence functional predictions. What could be the issue, and how can we proceed?

A1: You are encountering the core "long-tail" problem in Gene Ontology (GO) annotation. High-confidence annotations are overwhelmingly derived from experimental data in a few model organisms (e.g., S. cerevisiae, D. melanogaster, C. elegans, M. musculus). Rare or human-specific genes often have no direct orthologs in these organisms, leading to an "annotation vacuum." Homology-based inference fails here.

Troubleshooting Steps:

- Expand Your Search: Use more sensitive profile-based homology detection tools (e.g., HMMER, PSI-BLAST) instead of standard BLAST. Search against broader metazoan or eukaryotic databases.

- Look for Distant Relationships: Analyze protein domains using Pfam or InterPro. Functional inference can sometimes be made at the domain level, even if full-length orthologs are absent.

- Utilize Co-expression & Interaction Networks: Use resources like STRING or GeneMANIA to see if your gene co-expresses or is predicted to interact with well-annotated genes, suggesting involvement in a shared biological process.

- Proceed to De Novo Experimentation: This is often necessary. Consider a targeted experimental protocol (see below).

Q2: We expressed a tagged version of our rare protein of interest in a human cell line for a localization study. The fluorescence signal is weak and diffuse, making conclusive determination of subcellular localization impossible. What are the potential causes and fixes?

A2: This is common with unstable, poorly expressed, or mislocalized proteins.

Troubleshooting Steps:

- Verify Construct & Tag Position: The tag (e.g., GFP, mCherry) may interfere with folding or localization signals. Try tagging the protein at the opposite terminus.

- Check Expression Levels: Use Western blot to confirm protein expression and size. Weak signal may require a stronger promoter or optimization of transfection conditions.

- Consider Protein Stability: The protein may be rapidly degraded. Treat cells with a proteasome inhibitor (e.g., MG-132) for a few hours prior to imaging to see if signal accumulates.

- Use Positive Controls: Co-transfect with a marker for a specific organelle (e.g., DsRed-Mito for mitochondria) to ensure your imaging setup is correct.

- Alternative Approach: Consider using an immunofluorescence protocol with a validated antibody and fixed cells, which can sometimes preserve and reveal localization better than live-cell tags.

Q3: When submitting novel experimental GO annotations for our rare gene to a public database (e.g., UniProt, Model Organism Database), our annotations are rejected or require extensive manual curation. Why does this happen?

A3: Database curators adhere to strict evidence standards to maintain annotation quality. Common pitfalls include:

- Insufficient Experimental Evidence: Conclusions based solely on overexpression artifacts or poorly controlled assays.

- Incorrect Evidence Code Usage: Using "Inferred from Mutant Phenotype" (IMP) without a clean, specific mutant, or "Inferred from Sequence Orthology" (ISO) without proper orthology established.

- Lack of Specificity: Annotating to broad, high-level GO terms (e.g., "biological process") instead of the most specific term supported by the data.

Solution:

- Follow the GO Evidence Code Guidelines (GO Consortium) meticulously.

- Design Robust Experiments: Include proper controls (knockdown/knockout, rescue experiments, relevant negative controls).

- Cite Data Precisely: In your submission, explicitly link the figure/result in your publication to the specific GO term and evidence code.

- Engage Early: Consider contacting the relevant database curation group (e.g., PomBase, WormBase) for pre-submission guidance, especially for novel gene families.

Key Experimental Protocols for Annotating Rare Genes

Protocol 1: CRISPR-Cas9 Knockout with Phenotypic Screening for Functional Annotation

- Objective: To establish a gene's necessity for a specific biological process and assign GO terms like "involved in" a particular pathway.

- Methodology:

- Design and transfect sgRNAs targeting your gene of interest in a relevant cell line.

- Generate clonal knockout (KO) lines via single-cell dilution and validate by sequencing and Western blot.

- Subject KO and isogenic wild-type control cells to a targeted phenotypic assay (e.g., cell proliferation, apoptosis assay, specific pathway reporter, microscopy for morphological defects).

- Perform a rescue experiment by re-expressing a wild-type cDNA version of the gene in the KO line. Recovery of the wild-type phenotype confirms specificity.

- The observed phenotype, combined with rescue data, supports direct experimental annotation (Evidence Code: IMP).

Protocol 2: Affinity Purification Mass Spectrometry (AP-MS) for Protein Complex Identification

- Objective: To identify physical interaction partners and infer molecular function or involvement in a larger complex.

- Methodology:

- Create a stable cell line expressing your protein of interest with a compatible tag (e.g., GFP, FLAG) and a control line expressing the tag alone.

- Lyse cells under native conditions. Perform affinity purification using tag-specific antibodies/beads.

- Elute and digest bound proteins. Analyze by high-sensitivity LC-MS/MS.

- Compare protein lists from the bait sample vs. the control tag-alone sample. Identify high-confidence specific interactors using statistical tools (SAINT, CompPASS).

- Identified, statistically validated interactors can support annotation (e.g., "protein binding" or co-annotation to a complex's function, Evidence Code: IPI).

Table 1: Distribution of Experimental GO Annotation Evidence Codes Across Organisms (Representative Data)

| Organism | Total Annotations | Inferred from Experiment (EXP, IDA, IPI, etc.) | Inferred from Phylogeny/Sequence (ISO, ISS, IEA) | Unknown/ND |
|---|---|---|---|---|
| Saccharomyces cerevisiae (Yeast) | ~121,000 | ~70% | ~25% | ~5% |
| Mus musculus (Mouse) | ~98,000 | ~65% | ~30% | ~5% |
| Homo sapiens (Human) | ~318,000 | ~35% | ~60% | ~5% |
| Example Rare Human Gene | < 10 | 0% (if unstudied) | ~100% (if any) | 0% |

Table 2: Comparison of Tools for Detecting Distant Homologs

| Tool | Method | Use Case | Sensitivity | Speed |
|---|---|---|---|---|
| BLAST (blastp) | Local sequence alignment | Finding close orthologs in model organisms | Low-Moderate | Fast |
| PSI-BLAST | Position-Specific Iterated search | Detecting more distant homologs by building a profile | Moderate-High | Moderate |
| HMMER (phmmer/jackhmmer) | Hidden Markov Models | Detecting very distant homologs using statistical models | High | Slow |

Visualizations

Diagram 1: GO Annotation Bias and the Long-Tail Problem

Diagram 2: Experimental Workflow for Rare Gene Annotation

The Scientist's Toolkit: Research Reagent Solutions

| Reagent/Material | Function in Rare Gene Annotation | Key Considerations |
|---|---|---|
| CRISPR-Cas9 sgRNA Libraries/Kits | For generating knockout cell lines to study gene function and phenotype. | Choose high-specificity, validated designs. Include multiple sgRNAs per gene. |
| Tightly Inducible Expression Systems (e.g., Tet-On) | For controlled overexpression or rescue experiments without artifacts from constitutive expression. | Minimizes toxicity and off-target effects of expressing unknown proteins. |
| Tandem Affinity Purification (TAP) Tags | For high-specificity protein complex isolation in AP-MS experiments. | Tags like Strep-II/FLAG reduce background binding vs. single tags. |
| Validated Antibodies for Rare Proteins | For Western blot, immunofluorescence, and immunoprecipitation validation. | Often custom-made. Requires rigorous validation with KO controls. |
| Pathway-Specific Reporter Assays (Luciferase, GFP) | To test if the rare gene modulates a specific signaling pathway (e.g., Wnt, NF-κB). | Provides direct functional readout linkable to GO biological process terms. |
| Isogenic Paired Cell Lines (WT/KO/Rescue) | The gold standard control for any functional experiment. | Essential for attributing phenotypes directly to the gene of interest. |

Technical Support Center: Troubleshooting Long-Tail Annotations in Functional Genomics

FAQs on Data Sparsity & Experimental Challenges

Q1: Our high-throughput screen for a novel kinase target yielded inconsistent phenotypic results across replicates. What could be the cause? A: Inconsistent phenotypic data, especially for poorly annotated genes (long-tail genes), often stems from sparse or conflicting baseline annotations in public databases (e.g., GO, UniProt). This leads to poorly optimized experimental conditions. Common issues include:

- Off-target effects: The reagent's specificity may be unvalidated for your target due to a lack of prior functional data.

- Context-specificity: The gene's function may be condition-dependent, which is not captured in existing sparse annotations.

Q2: Why does my CRISPR knockout of a long-tail gene show no observable phenotype in a standard viability assay, despite literature suggesting it's essential? A: This is a classic "annotation ripple effect." The literature suggestion may be inferred from orthology or low-throughput studies not replicable in your system. The gene may have a subtle or compensatory phenotype not captured by your broad assay. You need to design a more specific phenotypic screen based on its predicted molecular function (e.g., a metabolic rescue assay if predicted to be an enzyme).

Q3: How can I validate a predicted protein-protein interaction for a protein with no prior experimental data? A: A multi-pronged validation strategy is required due to the lack of corroborating evidence.

- Orthogonal Methods: Combine co-immunoprecipitation with a complementary technique like Bioluminescence Resonance Energy Transfer (BRET).

- Mutational Analysis: Introduce point mutations in the predicted binding domain and test for loss of interaction.

- Control Saturation: Use multiple negative controls (unrelated proteins, empty vector) to establish a stringent baseline.

Troubleshooting Guides

Issue: High False Positive Rate in Virtual Screening of a Long-Tail Target

Root Cause: The computational model was trained on a dataset dominated by well-annotated protein families, creating bias. The structural or sequence features of your long-tail target are underrepresented.

Steps to Resolve:

- Data Audit: Check the training data composition for your docking or QSAR model. Quantify the number of known binders/actives for your target's protein family.

- Enrich Training Data: Use transfer learning. Fine-tune your model on a smaller, curated dataset of compounds tested against phylogenetically related targets.

- Adjust Thresholds: Apply more stringent scoring thresholds and prioritize compounds whose binding poses are consistent with any known critical residues (even if from distant homologs).

- Experimental Triage: Plan a tiered experimental validation starting with a primary binding assay (e.g., SPR) before proceeding to functional cellular assays.

Issue: Inconclusive Functional Enrichment Analysis from Transcriptomics Data Involving Long-Tail Genes

Root Cause: Standard Gene Ontology (GO) enrichment tools rely on existing annotations. Long-tail genes, often returned as top differential hits, are annotated with generic, non-informative terms (e.g., "biological process," "molecular function") or not annotated at all, diluting significant findings.

Steps to Resolve:

- Pre-filter Annotations: Before analysis, remove generic, non-informative GO terms (e.g., those annotated to >50% of the genome).

- Use Complementary Databases: Integrate predictions from sources like DeepGO, PANTHER, or GeneMANIA to assign hypothetical functions to unannotated genes.

- Perform Network Analysis: Use protein-protein interaction networks (even predicted ones) to see if your unannotated differentially expressed genes cluster with well-annotated genes in a specific pathway. This "guilt-by-association" can provide context.

- Report with Transparency: Clearly distinguish between statistically enriched terms based on curated knowledge versus those informed by computational predictions in your results.
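The pre-filtering step above can be sketched as follows. This is an illustrative sketch, assuming annotations are held as a simple gene-to-terms dictionary; the function name `drop_generic_terms` and the toy gene names are hypothetical, and the 50% cutoff is the example threshold quoted in the step.

```python
from collections import defaultdict


def drop_generic_terms(gene_to_terms, max_fraction=0.5):
    """Remove GO terms annotated to more than `max_fraction` of all genes.

    gene_to_terms: dict mapping gene -> iterable of GO term IDs.
    Returns (filtered mapping, set of terms that were removed).
    """
    n_genes = len(gene_to_terms)

    # Invert the mapping to count how many genes carry each term.
    term_to_genes = defaultdict(set)
    for gene, terms in gene_to_terms.items():
        for term in terms:
            term_to_genes[term].add(gene)

    generic = {
        term for term, genes in term_to_genes.items()
        if len(genes) / n_genes > max_fraction
    }
    filtered = {gene: set(terms) - generic for gene, terms in gene_to_terms.items()}
    return filtered, generic


# Toy example with placeholder gene names.
toy_gene_terms = {
    "gene1": {"GO:0008150", "GO:0006915"},
    "gene2": {"GO:0008150", "GO:0006915"},
    "gene3": {"GO:0008150", "GO:0016301"},
    "gene4": {"GO:0008150"},
}
toy_filtered, toy_generic = drop_generic_terms(toy_gene_terms)
print("Removed as generic:", sorted(toy_generic))
print("Genes left without informative terms:",
      sorted(g for g, t in toy_filtered.items() if not t))
```

Genes that end up with an empty term set after filtering are the ones to route to the complementary prediction and network steps, since they contribute nothing to a standard enrichment test.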

Quantitative Data on Annotation Sparsity

Table 1: Gene Ontology (GO) Annotation Coverage for Human Genes (Source: GO Consortium, 2024)

| Annotation Level | Number of Human Genes | Percentage of Total (~20,000) |
|---|---|---|
| With Experimental GO Evidence | ~11,000 | 55% |
| With Any GO Annotation (incl. computational) | ~19,500 | 97.5% |
| Annotated to >10 Specific GO Terms | ~7,000 | 35% |
| Annotated to <3 Specific GO Terms ("Long-Tail") | ~4,500 | 22.5% |
| No Biological Process Annotation | ~1,000 | 5% |

Table 2: Impact of Sparse Data on Drug Discovery Metrics

| Research Phase | Typical Attrition Rate (Annotated Targets) | Estimated Attrition Rate (Long-Tail Targets) | Key Sparse Data Contributor |
|---|---|---|---|
| Target Validation | 40-50% | 60-75%+ | Lack of disease association evidence; unknown signaling context. |
| Lead Optimization | 30-40% | 50-65%+ | Lack of structural data for SAR; unknown off-target pharmacology. |
| Preclinical Efficacy | 30-40% | 50-70%+ | Unpredictable in vivo phenotype due to unknown pathway redundancy. |

Experimental Protocols

Protocol 1: Orthogonal Validation of Protein Function for a Long-Tail Gene

Objective: To establish a confident functional annotation for a human gene currently annotated only as "protein binding" (GO:0005515).

Materials: See "The Scientist's Toolkit" below.

Methodology:

- Knockdown/Knockout: Generate stable knockdown (shRNA) or knockout (CRISPR-Cas9) cell lines for the gene of interest (GOI). Include a non-targeting control (NTC) cell line.

- Transcriptomic Profiling: Perform RNA sequencing on GOI and NTC cell lines (triplicate biological replicates). Identify differentially expressed genes (DEGs; adj. p-value < 0.05, |log2FC| > 1).

- Pathway Guilt-by-Association: Input the top 100 upregulated DEGs into a network analysis tool (e.g., STRING). Set a high confidence score (>0.7). Identify enriched functional clusters among interacting partners.

- Hypothesis-Driven Rescue: Based on the cluster (e.g., "mitochondrial electron transport"), treat GOI knockout cells with a pathway-specific metabolite (e.g., succinate). Measure rescue via a relevant assay (e.g., ATP production, Seahorse assay).

- Direct Biochemical Assay: If cluster suggests enzymatic activity (e.g., "kinase activity"), express and purify the recombinant GOI protein. Test activity against a broad panel of potential substrates (e.g., a human kinome substrate library).
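The DEG selection in the transcriptomic profiling step, and the hand-off of the top upregulated genes to network analysis, can be sketched as below. This is a minimal sketch that assumes the differential expression results have already been exported as records with `gene`, `padj`, and `log2fc` fields; those field names, the function names, and the toy values are hypothetical. `None` stands in for a missing adjusted p-value.

```python
def select_degs(results, padj_max=0.05, lfc_min=1.0):
    """Keep records with adjusted p-value < padj_max and |log2FC| > lfc_min."""
    return [
        r for r in results
        if r["padj"] is not None
        and r["padj"] < padj_max
        and abs(r["log2fc"]) > lfc_min
    ]


def top_upregulated(degs, n=100):
    """Return up to n gene names with positive log2FC, largest change first."""
    up = [r for r in degs if r["log2fc"] > 0]
    up.sort(key=lambda r: r["log2fc"], reverse=True)
    return [r["gene"] for r in up[:n]]


# Toy differential expression table (placeholder gene names and values).
toy_results = [
    {"gene": "G1", "padj": 0.001, "log2fc": 2.5},
    {"gene": "G2", "padj": 0.04, "log2fc": -1.8},
    {"gene": "G3", "padj": 0.2, "log2fc": 3.0},   # fails the p-value cutoff
    {"gene": "G4", "padj": 0.01, "log2fc": 0.5},  # fails the fold-change cutoff
    {"gene": "G5", "padj": None, "log2fc": 4.0},  # no adjusted p-value available
    {"gene": "G6", "padj": 0.03, "log2fc": 1.2},
]

toy_degs = select_degs(toy_results)
toy_network_input = top_upregulated(toy_degs, n=100)
print("DEGs:", [r["gene"] for r in toy_degs])
print("Genes to submit for network analysis:", toy_network_input)
```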

Protocol 2: Tiered Virtual Screening for a Target with No Solved Structures

Objective: To identify putative small-molecule binders for a target with no experimental 3D structure.

Methodology:

- Comparative Modeling: Use AlphaFold2 to generate a high-confidence predicted structure. Identify the putative ligand-binding pocket using tools like DeepSite.

- Ligand-Based Screening (if applicable): Compile any known bioactive ligands for the target or its closest homologs (from ChEMBL). Use these for a similarity search (e.g., fingerprint Tanimoto) in large libraries (e.g., ZINC20).

- Structure-Based Screening: Dock the top hits from Step 2 and a diversity subset of the library (~1 million compounds) into the predicted binding pocket using Glide SP or Vina.

- Consensus Scoring & Filtering: Rank compounds by consensus across multiple scoring functions. Apply strict ADMET filters early to remove compounds with poor pharmacokinetic profiles.

- Experimental Triage: Procure the top 50-100 ranked compounds. Test in a primary binding assay (e.g., Differential Scanning Fluorimetry - DSF) at a single high concentration. Progress only confirmed binders to a dose-response binding assay (e.g., SPR) and subsequent cellular assays.
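The ligand-based similarity search in this protocol rests on the Tanimoto coefficient between fingerprints. The sketch below shows the calculation in plain Python, representing each fingerprint as a set of on-bit indices. In practice a cheminformatics toolkit would generate the fingerprints; the 0.7 cutoff, the compound IDs, and the bit sets here are hypothetical values chosen for illustration.

```python
def tanimoto(fp_a, fp_b):
    """Tanimoto coefficient: shared on-bits / total distinct on-bits."""
    if not fp_a and not fp_b:
        return 0.0
    shared = len(fp_a & fp_b)
    return shared / (len(fp_a) + len(fp_b) - shared)


def similarity_search(query_fps, library, threshold=0.7):
    """Return (compound_id, best similarity) for library compounds whose
    maximum similarity to any query ligand is >= threshold, best first.

    query_fps: non-empty list of fingerprints (sets of on-bit indices).
    library:   dict mapping compound_id -> fingerprint.
    """
    hits = []
    for compound_id, fp in library.items():
        best = max(tanimoto(fp, query) for query in query_fps)
        if best >= threshold:
            hits.append((compound_id, best))
    return sorted(hits, key=lambda hit: hit[1], reverse=True)


# Toy fingerprints (bit indices are arbitrary placeholders).
toy_known_ligands = [{1, 2, 3, 4}]
toy_library = {
    "cmpd_1": {1, 2, 3, 4},      # identical to the known ligand
    "cmpd_2": {1, 2, 3, 5},      # 3 shared bits out of 5 distinct
    "cmpd_3": {1, 2, 3, 4, 5},   # 4 shared bits out of 5 distinct
}
toy_hits = similarity_search(toy_known_ligands, toy_library)
print(toy_hits)
```

Taking the maximum over all known ligands, rather than the mean, keeps a library compound that closely resembles any single reference ligand, which suits targets with very few known binders.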

Pathway & Workflow Diagrams

Diagram 1: The Ripple Effect of Sparse Data in Research

Diagram 2: Functional Annotation Protocol for Long-Tail Genes

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Reagents for Investigating Long-Tail Genes

| Item | Function | Example (Supplier) | Key Consideration for Long-Tail Genes |
|---|---|---|---|
| Validated CRISPR-Cas9 sgRNA | Enables specific gene knockout. | Synthego, Horizon Discovery | Specificity is critical. Use multiple sgRNAs per gene and deep-sequencing validation to rule out off-target effects in the absence of known phenotypic controls. |
| Polyclonal Antibody (with KO-validated lot) | Detects protein expression/localization. | Atlas Antibodies, Invitrogen | Always request and use knockout-validated lots. For novel proteins, epitope tagging (e.g., FLAG, HA) may be more reliable. |
| ORF Expression Clone (Tagged) | For exogenous expression and protein purification. | DNASU Plasmid Repository | Gateway or Flexi clones allow easy transfer to various vectors for different assays (mammalian, bacterial, insect cell). |
| Structure-Prediction Ready Sequence | Input for 3D modeling. | UniProt FASTA | Use the canonical isoform sequence. Always run multiple prediction tools (AlphaFold2, RoseTTAFold) and compare. |
| Predicted Protein-Protein Interaction Set | Hypothesizes functional context. | STRING database, GeneMANIA | Treat as a prioritization tool, not ground truth. Focus on interactions with higher confidence scores and experimental evidence in other species. |
| Broad-Spectrum Compound Library | For phenotypic screening of uncharacterized targets. | Selleckchem Bioactive Library, Prestwick Chemical Library | Use libraries with well-annotated mechanisms to enable "reverse pharmacology" if a hit is found. |

Technical Support Center

FAQs & Troubleshooting

Q1: I am studying a long-tail gene (low-annotation). My hypothesis generation relies on GO annotations, but my gene of interest has none in UniProt. How can I proceed? A: This is a core manifestation of the annotation gap. The primary solution is to use computational predictions as a starting point. Follow this protocol:

- Gather Predictions: Query the InterPro database with your protein sequence to obtain predicted domains and features.

- Map to GO: Use the InterPro2GO mapping file (available from the GO Consortium) to translate InterPro entries to predicted GO terms.

- Leverage Phylogeny: Use tools like PANTHER or OrthoFinder to identify orthologs in well-annotated model organisms.

- Transfer Annotations: Apply the "phylogenetic annotation" principle: cautiously transfer annotations from the ortholog, noting the evidence as "Inferred from Sequence Orthology (ISO)" in your records.
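The "Map to GO" step can be sketched as a small parser. This sketch assumes the mapping file uses one line per mapping in the layout `InterPro:IPRnnnnnn description > GO:term name ; GO:nnnnnnn`, with comment lines starting with `!`; verify that against the file you download. The function names are hypothetical, and the sample lines are illustrative stand-ins for the real file.

```python
from collections import defaultdict


def parse_interpro2go(lines):
    """Build {InterPro accession: set of GO IDs} from mapping-file lines.

    Assumed line layout: 'InterPro:IPR000719 description > GO:name ; GO:0004672'
    """
    mapping = defaultdict(set)
    for line in lines:
        line = line.strip()
        if not line or line.startswith("!"):
            continue  # skip blank lines and header comments
        left, sep, right = line.partition(" > ")
        if not sep:
            continue  # not a mapping line in the assumed layout
        accession = left.split()[0].split(":", 1)[1]
        go_id = right.rsplit(";", 1)[-1].strip()
        mapping[accession].add(go_id)
    return mapping


def predict_go_terms(interpro_hits, mapping):
    """Union of GO IDs mapped from the InterPro entries found on a protein."""
    predicted = set()
    for accession in interpro_hits:
        predicted |= mapping.get(accession, set())
    return sorted(predicted)


# Illustrative lines in the assumed layout (not copied from the real file).
sample_lines = [
    "!example header comment",
    "InterPro:IPR000719 Protein kinase domain > GO:protein kinase activity ; GO:0004672",
    "InterPro:IPR000719 Protein kinase domain > GO:ATP binding ; GO:0005524",
    "InterPro:IPR011009 Protein kinase-like domain superfamily > GO:ATP binding ; GO:0005524",
]
toy_mapping = parse_interpro2go(sample_lines)
toy_predicted = predict_go_terms(["IPR000719", "IPR999999"], toy_mapping)
print(toy_predicted)
```

Terms obtained this way are electronic inferences and should be recorded as such until supported by orthology or experiment.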

Q2: I found conflicting GO annotations (e.g., different cellular components) for my protein between GOA and another resource. How do I resolve this? A: Conflict resolution requires examining the underlying evidence.

- Check Evidence Codes: In the GOA file, prioritize annotations with experimental evidence codes (EXP, IDA, IPI, IMP, IGI, IEP) over computational ones (IEA, ISS, ISO).

- Trace to Source: Use the DB:Reference column of the GOA (GAF) file to identify the original publication or database record, and the Assigned_By column to identify the group that made the annotation. Review the primary source.

- Context is Key: Annotations may be correct for specific isoforms or under specific conditions. Verify the protein sequence and organism used in the cited study matches your research context.
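The evidence-code check above can be sketched as a simple ranking. This is a minimal sketch, assuming each conflicting annotation is a record with `go_id`, `evidence`, and `reference` fields (hypothetical names). Placing codes that are neither in the experimental list nor in the computational list into a middle tier is an assumption of this sketch, not a rule from the GO Consortium.

```python
EXPERIMENTAL = {"EXP", "IDA", "IPI", "IMP", "IGI", "IEP"}
COMPUTATIONAL = {"IEA", "ISS", "ISO"}


def evidence_tier(code):
    """Lower tier = stronger evidence for the purpose of conflict review."""
    if code in EXPERIMENTAL:
        return 0
    if code in COMPUTATIONAL:
        return 2
    return 1  # assumption: any other code sits between the two groups


def rank_conflicting(annotations):
    """Order annotations so those with experimental evidence are reviewed first."""
    return sorted(annotations, key=lambda a: (evidence_tier(a["evidence"]), a["go_id"]))


# Toy conflict: two cellular-component annotations for the same protein.
# The reference values are placeholders, not real identifiers.
toy_conflict = [
    {"go_id": "GO:0005634", "evidence": "IEA", "reference": "placeholder_ref_1"},
    {"go_id": "GO:0005739", "evidence": "IDA", "reference": "placeholder_ref_2"},
]
toy_ranked = rank_conflicting(toy_conflict)
for ann in toy_ranked:
    print(ann["go_id"], ann["evidence"], ann["reference"])
```

The ranking only decides which source to read first; the isoform and condition checks in the last step still need a human reading of the cited study.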

Q3: What is the statistical significance of the "annotation gap," and how do I quantify it for my specific research domain (e.g., a non-model organism family)? A: The gap can be quantified as the difference between total known proteins and those with experimentally validated annotations. You can perform a field-specific analysis:

- Data Extraction: Download the current UniProt proteome for your organism of interest and the corresponding GOA file.

- Filter and Count:

- Total proteins in proteome (A).

- Proteins with any GO annotation (B).

- Proteins with at least one GO annotation supported by an experimental evidence code (EXP, IDA, IPI, IMP, IGI, IEP) (C).

- Calculate Metrics:

- Overall Annotation Coverage (%) = (B / A) * 100

- Experimental Annotation Depth (%) = (C / A) * 100

- Annotation Gap (%) = 100 - Experimental Annotation Depth
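The three metrics translate directly into code. The sketch below is illustrative; the function name and the input counts are hypothetical, and the sanity check that C ≤ B ≤ A is an addition that follows from the definitions of A, B, and C.

```python
def annotation_gap_metrics(total_proteins, with_any_go, with_experimental_go):
    """Compute coverage, experimental depth, and annotation gap (all in %).

    total_proteins:       A, proteins in the proteome
    with_any_go:          B, proteins with any GO annotation
    with_experimental_go: C, proteins with at least one experimental annotation
    """
    if total_proteins <= 0:
        raise ValueError("total_proteins must be positive")
    if not 0 <= with_experimental_go <= with_any_go <= total_proteins:
        raise ValueError("expected 0 <= C <= B <= A")

    coverage = 100.0 * with_any_go / total_proteins
    depth = 100.0 * with_experimental_go / total_proteins
    return {
        "coverage_pct": coverage,
        "experimental_depth_pct": depth,
        "gap_pct": 100.0 - depth,
    }


# Hypothetical counts for illustration only.
toy_metrics = annotation_gap_metrics(
    total_proteins=10_000, with_any_go=8_500, with_experimental_go=500
)
print(toy_metrics)
```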

Quantifying the Gap: Current Statistics

Table 1: Annotation Statistics for Key Model Organisms (Selected)

| Organism | UniProt Proteome Size (approx.) | Proteins with Any GO Annotation (%) | Proteins with Experimental GO Annotation (%) | Primary Annotation Gap (%) |
|---|---|---|---|---|
| Homo sapiens (Human) | ~20,800 | >99% | ~48% | ~52% |
| Mus musculus (Mouse) | ~21,700 | >99% | ~44% | ~56% |
| Drosophila melanogaster (Fruit fly) | ~13,800 | >99% | ~31% | ~69% |
| Saccharomyces cerevisiae (Yeast) | ~6,000 | >99% | ~76% | ~24% |
| Arabidopsis thaliana (Plant) | ~27,400 | >99% | ~28% | ~72% |

Table 2: The Long-Tail Problem in a Non-Model Organism Group (Example: Filamentous Fungi)

| Organism Group | Avg. Proteome Size | Avg. Proteins with Any GO (%) | Avg. Proteins with Experimental GO (%) | Estimated Gap (%) |
|---|---|---|---|---|
| Filamentous Fungi (10 genomes) | ~11,000 | ~85% | <5% | >95% |

Experimental Protocol: Establishing Baseline Annotation for a Long-Tail Gene

Objective: To generate initial, high-confidence GO annotations for an uncharacterized human protein using phylogenetic profiling and domain analysis.

Materials & Reagents:

- Input: Protein sequence of interest (FASTA format).

- Software: BLASTP, OrthoFinder, InterProScan, PANTHER Classification System.

- Data Files: Latest UniProt reference proteomes, InterPro2GO mapping file.

Methodology:

- Ortholog Identification: Run OrthoFinder on a curated set of reference proteomes (e.g., from Ensembl) that includes the proteome containing your protein, then extract the orthogroup and high-confidence orthologs for your sequence.

- Domain Architecture Analysis: Submit your sequence to InterProScan. Consolidate all predicted domains, families, and sites.

- GO Term Prediction: a. Convert InterPro results to GO terms using the InterPro2GO map. b. Submit your protein ID to the PANTHER database to retrieve GO annotations inferred via phylogenetic trees (GAF files).

- Evidence Consolidation: Combine results from steps 3a and 3b. Remove duplicates. Annotations are assigned the evidence code "Inferred from Sequence Orthology (ISO)" or "Inferred from Electronic Annotation (IEA)" as appropriate.

- Manual Curation: For critical terms, perform a literature review on the top orthologs to assess the validity of the transferred function.
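The evidence consolidation step can be sketched as a merge keyed on GO ID. This is an illustrative sketch: predictions are assumed to be `(go_id, evidence_code, source)` tuples, the function names are hypothetical, and the evidence labels in the toy data simply follow the protocol's wording rather than any tool's actual output.

```python
def consolidate(*prediction_sets):
    """Merge predictions from several methods, de-duplicating by GO ID.

    Each prediction is a (go_id, evidence_code, source) tuple.
    Returns {go_id: {"evidence": set of codes, "sources": set of methods}}.
    """
    merged = {}
    for predictions in prediction_sets:
        for go_id, evidence, source in predictions:
            entry = merged.setdefault(go_id, {"evidence": set(), "sources": set()})
            entry["evidence"].add(evidence)
            entry["sources"].add(source)
    return merged


def single_source_terms(merged):
    """GO IDs supported by only one method: candidates for manual curation."""
    return sorted(go_id for go_id, e in merged.items() if len(e["sources"]) == 1)


# Toy predictions; evidence labels are placeholders following the protocol text.
toy_interpro_preds = [
    ("GO:0004672", "IEA", "InterPro2GO"),
    ("GO:0005524", "IEA", "InterPro2GO"),
]
toy_panther_preds = [
    ("GO:0004672", "ISO", "PANTHER"),
    ("GO:0006468", "ISO", "PANTHER"),
]
toy_merged = consolidate(toy_interpro_preds, toy_panther_preds)
toy_review = single_source_terms(toy_merged)
print("Consolidated terms:", sorted(toy_merged))
print("Supported by one method only:", toy_review)
```

Keeping the set of sources per term, instead of discarding duplicates outright, preserves the information needed to prioritize the manual curation step.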

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Key Resources for Addressing the Annotation Gap

| Item | Function & Relevance |
|---|---|
| GO Annotation File (GAF) | Core dataset linking proteins to GO terms with evidence. Essential for gap quantification and analysis. |
| InterPro2GO Mapping File | Bridges protein domain prediction (InterPro) to functional terms (GO), enabling computational annotation. |
| PANTHER Classification System | Provides phylogenetic trees and HMMs for precise ortholog identification and functional inheritance. |
| UniProtKB/Swiss-Prot | Manually reviewed, high-annotation database. The "gold standard" for training prediction algorithms. |
| Expression Plasmids (e.g., GFP-tagged) | For experimental validation of cellular component predictions for uncharacterized proteins. |
| CRISPR-Cas9 Knockout Cell Lines | Essential for conducting loss-of-function experiments to validate biological process annotations. |

Visualization

Diagram 1: Workflow for Bridging the Annotation Gap

Diagram 2: Evidence Code Hierarchy for GO Annotation

This technical support center addresses common experimental and computational bottlenecks faced by researchers in Gene Ontology (GO) annotation, with a specific focus on overcoming the long-tail problem—the vast number of genes with sparse or no experimental annotation. The guides below are designed to help scientists troubleshoot issues and streamline their workflows to contribute high-quality, evidence-based annotations.

Troubleshooting Guides & FAQs

Section 1: Experimental Validation Bottlenecks

Q1: My high-throughput screening (e.g., CRISPR knockout) for a long-tail gene shows inconsistent phenotype results across replicates. What are the key checkpoints?

- A: Inconsistent phenotypes often stem from off-target effects or insufficient validation. Follow this protocol:

- Design Validation: Re-verify sgRNA design using current tools like CHOPCHOP or CRISPick to minimize off-target risk.

- Control Confirmation: Ensure positive and negative control cell lines/colonies show expected phenotypes in the same experimental run.

- Efficiency Check: Quantify knockout efficiency via western blot (if antibody exists) or next-gen sequencing of the target site.

- Secondary Validation: Employ a second, independent sgRNA or siRNA targeting the same gene. Concordant results strongly support on-target effects.

Q2: I am attempting to annotate a protein's cellular component via fluorescence tagging, but I observe diffuse, non-specific localization. How can I resolve this?

- A: Diffuse signal can indicate overexpression artifacts or tag interference.

- Expression Level: Use the lowest possible promoter strength or an inducible system to avoid protein mislocalization due to overexpression.

- Tag Placement: Test both N-terminal and C-terminal tags, as one may interfere less with localization signals.

- Fixation & Imaging: For live-cell imaging, confirm cell health. For fixed cells, try different fixation protocols (e.g., paraformaldehyde vs. methanol).

- Orthogonal Validation: Correlate with immunofluorescence using a validated antibody against the endogenous protein.

Section 2: Curation & Computational Resource Challenges

Q3: My computational pipeline for inferring GO terms via sequence homology (e.g., from InterProScan) produces an overwhelming number of low-confidence annotations. How can I filter them effectively?

- A: Prioritize annotations based on evidence strength. Implement a filtering cascade:

- Evidence Code Priority: Favor experimental evidence codes (EXP, IDA) transferred from close orthologs over purely electronic annotations (IEA).

- Sequence Identity & Coverage: Set thresholds (e.g., >60% identity, >80% query coverage) for the ortholog.

- Phylogenetic Context: Use tools like PANTHER to assess if the ortholog is within a relevant phylogenetic range for function conservation.

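- Consensus Filter: Require that a predicted term is supported by multiple independent signature databases (e.g., Pfam, SMART, and PANTHER). Because InterPro integrates these member databases, count member-database hits separately rather than treating the InterPro entry as an extra vote.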
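
The filtering cascade above can be sketched in Python. This is a minimal illustration under stated assumptions: the `Candidate` record and its field names are hypothetical, the thresholds are the example values from the list, experimental evidence is treated as a hard requirement rather than a preference, and the phylogenetic-context step is left out because it depends on an external resource such as PANTHER.

```python
from dataclasses import dataclass

# Experimental GO evidence codes accepted by this sketch.
EXPERIMENTAL_CODES = {"EXP", "IDA", "IPI", "IMP", "IGI", "IEP"}


@dataclass(frozen=True)
class Candidate:
    """Hypothetical record for one homology-transferred GO term."""
    go_id: str
    evidence_code: str   # evidence code of the ortholog's source annotation
    identity: float      # percent identity to the ortholog
    coverage: float      # percent query coverage
    methods: frozenset   # names of the methods that proposed the term


def passes_cascade(c, min_identity=60.0, min_coverage=80.0, min_methods=2):
    """Apply the evidence, similarity, and consensus filters in order."""
    if c.evidence_code not in EXPERIMENTAL_CODES:
        return False
    if c.identity <= min_identity or c.coverage <= min_coverage:
        return False
    return len(c.methods) >= min_methods


# Illustrative inputs only; these are not real annotations.
candidates = [
    Candidate("GO:0004672", "IDA", 72.0, 91.0, frozenset({"Pfam", "SMART"})),
    Candidate("GO:0005524", "IEA", 88.0, 95.0, frozenset({"Pfam", "SMART"})),
    Candidate("GO:0016301", "IMP", 45.0, 90.0, frozenset({"Pfam", "SMART"})),
    Candidate("GO:0006468", "EXP", 80.0, 85.0, frozenset({"Pfam"})),
]
kept = [c.go_id for c in candidates if passes_cascade(c)]
```

Ordering the checks from cheapest to most restrictive keeps the logic easy to audit; a softer variant could score candidates instead of rejecting them outright.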

Q4: I want to contribute manual annotations to GO, but the curation process seems complex. What is the essential starting toolkit?

- A: The core toolkit for manual curation includes:

- Curation Platform: Use Noctua, the GO Consortium's web-based tool for building GO-CAM models, or a compatible editor like Protégé.

- Evidence Capture: Always use the appropriate Evidence Code (e.g., IMP for mutant phenotype, IPI for physical interaction).

- Reference Manager: Have access to PubMed or a similar database to cite the specific experimental figure/table supporting your assertion.

- Ontology Browser: Use AmiGO 2 or OntoBee to accurately find and select the most specific GO term.

Key Experimental Protocol: Functional Validation for Long-Tail Gene Annotation

Objective: To establish a basic molecular function annotation for an uncharacterized human protein suspected to be a kinase based on domain analysis.

Methodology: In Vitro Kinase Assay

- Cloning: Clone the full-length ORF of the target gene into a mammalian expression vector with an N-terminal FLAG tag.

- Transfection & Lysis: Transfect HEK293T cells. After 48 hours, lyse cells in RIPA buffer supplemented with protease and phosphatase inhibitors.

- Immunoprecipitation: Incubate lysate with anti-FLAG M2 affinity gel to purify the target protein. Elute with 3xFLAG peptide.

- Kinase Reaction:

- Combine purified protein (2 µg) with 1 µg of a generic substrate (e.g., Myelin Basic Protein) in 30 µL kinase buffer (25 mM Tris pH 7.5, 10 mM MgCl₂, 1 mM DTT, 100 µM ATP).

- Include a positive control (known active kinase) and a negative control (kinase-dead mutant or empty vector eluate).

- Incubate at 30°C for 30 minutes.

- Detection: Resolve proteins by SDS-PAGE. Perform western blotting with an anti-phospho-substrate antibody to detect kinase activity. Re-probe for total substrate and FLAG to confirm loading.

Research Reagent Solutions

| Reagent/Material | Function in Context of GO Annotation Experiments |

|---|---|

| CRISPR/Cas9 Knockout Kit | Enables generation of loss-of-function mutants for phenotype-based (IMP) GO term annotation. |

| Tandem Affinity Purification (TAP) Tags | Facilitates protein complex purification for identifying physical interactions (IPI evidence). |

| Homology-Directed Repair (HDR) Donor Template | Used for precise endogenous protein tagging (e.g., GFP) for subcellular localization (IDA evidence). |

| Phospho-Specific Antibodies | Critical reagents for detecting post-translational modifications in kinase/phosphatase assays. |

| Validated siRNA or shRNA Libraries | For transient knockdown studies to complement CRISPR knockout phenotypes. |

| Proximity-Dependent Labeling Reagents (e.g., BioID2) | Identifies proximal protein interactions in living cells, useful for cellular component annotation. |

Table 1: Common GO Evidence Codes for Experimental Annotation

| Evidence Code | Full Name | Typical Experimental Source | Confidence Level |

|---|---|---|---|

| EXP | Inferred from Experiment | Published experimental result for which no more specific experimental code applies | High |

| IDA | Inferred from Direct Assay | Direct assay of the gene product (e.g., in vitro kinase assay, localization by microscopy) | High |

| IPI | Inferred from Physical Interaction | Yeast two-hybrid, Co-IP, FRET | High/Medium |

| IMP | Inferred from Mutant Phenotype | CRISPR knockout, RNAi phenotype | High |

| IEP | Inferred from Expression Pattern | RT-PCR, RNA-seq expression correlation | Medium |

Table 2: Current Annotation Statistics (Representative Data)

| Organism | Total Annotated Genes | Genes with Experimental (Non-IEA) Evidence | % Long-Tail (≤3 annotations) | Primary Data Source |

|---|---|---|---|---|

| Homo sapiens | ~19,000 | ~11,000 | ~40% | GO Consortium, 2023 |

| Mus musculus | ~22,000 | ~13,000 | ~30% | GO Consortium, 2023 |

| Drosophila melanogaster | ~8,000 | ~7,000 | ~20% | FlyBase, 2023 |

| Saccharomyces cerevisiae | ~6,000 | ~5,500 | <10% | SGD, 2023 |

Visualizations

Diagram 1: GO Annotation Pipeline for Long-Tail Genes

Diagram 2: Key Signaling Pathway Validation Workflow

Strategies and Tools for Annotation: From Machine Learning to Community Curation

Technical Support Center: Troubleshooting Guides & FAQs

Frequently Asked Questions (FAQs)

Q1: My DeepGO model predictions have low confidence scores for most proteins. What could be the cause? A: This is a common issue when predicting functions for proteins from the "long tail"—those with limited homology to well-annotated proteins. First, check the similarity of your input protein sequence to sequences in the training data (e.g., via BLAST). Low similarity is expected for long-tail problems. Consider using DeepGO-SE, which integrates pre-trained language model embeddings and is specifically designed to better generalize to such remote homology cases. Ensure your input sequence is in the correct FASTA format and is a full-length sequence, as fragmented inputs can degrade performance.

Q2: How do I interpret the output of TALE's knowledge graph reasoning? A: TALE outputs a set of candidate GO terms with confidence scores, along with explanatory paths from the knowledge graph. A common issue is an overwhelming number of candidate terms. Use the confidence threshold filter (default 0.5) to focus on high-probability predictions. If explanatory paths are missing for a high-scoring term, this may indicate the prediction is primarily based on sequence patterns rather than known ontological relationships, which can occur for novel functions. Review the "evidence chain" visualization provided in the output.

Q3: DeepGO-SE fails to generate embeddings for my protein sequences. What should I do?

A: This typically occurs due to sequence format or length. Ensure sequences contain only the 20 standard amino acid one-letter codes (ACDEFGHIKLMNPQRSTVWY); B, J, O, U, X, and Z denote ambiguous or non-standard residues and may be rejected. Remove headers, numbers, or special characters. While the model handles variable lengths, extremely long sequences (>2000 aa) may cause memory issues; consider splitting large multi-domain proteins into functional domains before analysis. Check that you have installed the correct version of the transformers library (as specified in the documentation) to run the protein language model.
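
A small pre-flight check along these lines can catch format problems before a model is invoked. This is a sketch, not part of DeepGO-SE; the function name `clean_sequence` is hypothetical.

```python
STANDARD_AA = set("ACDEFGHIKLMNPQRSTVWY")


def clean_sequence(raw):
    """Strip FASTA headers, digits, and whitespace; report non-standard residues.

    Returns (sequence, invalid), where invalid is a sorted list of letters
    that are not among the 20 standard amino acid codes.
    """
    lines = [ln.strip() for ln in raw.splitlines()]
    body = [ln for ln in lines if ln and not ln.startswith(">")]
    seq = "".join(ch for ch in "".join(body).upper() if ch.isalpha())
    invalid = sorted(set(seq) - STANDARD_AA)
    return seq, invalid


sequence, invalid = clean_sequence(">example\nMKT1 AYI\nAKQRX\n")
```

Returning the offending letters rather than raising lets the caller decide whether to mask, replace, or drop the sequence.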

Q4: How can I improve the precision of my predictions for a specific organism? A: The base models are trained on broad datasets. For targeted organism analysis, fine-tuning is recommended. Use a high-confidence set of GO annotations from your organism of interest (e.g., from UniProt). Retrain the model on this specialized dataset, or use a transfer learning approach by initializing weights from the pre-trained DeepGO model and performing a few additional training epochs. Be cautious of overfitting if your organism-specific dataset is small.

Troubleshooting Guide: Common Experimental Issues

Issue: Discrepancy between computational predictions and wet-lab experimental results.

- Step 1: Verify the experimental assay truly measures the predicted molecular function or biological process. GO terms are specific; a "kinase activity" prediction requires a kinase assay, not just a phosphorylation readout.

- Step 2: Check for protein context. Predictions are for the isolated protein sequence, but your experiment may be in a cellular context lacking necessary co-factors or in the wrong subcellular compartment. Consult the cellular component predictions.

- Step 3: Re-run the prediction using an ensemble approach (e.g., run DeepGO, DeepGO-SE, and TALE). Use the consensus predictions and examine the confidence scores. Low consensus often indicates a challenging long-tail prediction requiring further orthogonal validation.

Issue: Knowledge graph (TALE) produces seemingly illogical or circular reasoning paths.

- Step 1: This may stem from sparse or noisy data in the knowledge graph for certain protein families. Activate the "prune low-weight edges" option in the TALE configuration to filter out weak connections.

- Step 2: Inspect the underlying evidence codes for the edges in the problematic path. The system may be relying on electronically inferred annotations (IEA) which can propagate errors. Configure the system to prioritize edges supported by experimental evidence codes (e.g., EXP, IDA).

- Step 3: Manually review the ontological relationships (is_a, part_of, has_function) in the path. The logic is rule-based; an apparent error might reveal an unexpected but valid biological relationship.

Table 1: Benchmark Performance of DeepGO, DeepGO-SE, and TALE on CAFA3 Challenge Data

| Model | F-max (BP) | F-max (MF) | F-max (CC) | S-min (BP) | S-min (MF) | S-min (CC) | Key Strength |

|---|---|---|---|---|---|---|---|

| DeepGO | 0.36 | 0.54 | 0.57 | 9.50 | 17.21 | 13.99 | Combines CNN & KG for interpretability |

| DeepGO-SE | 0.41 | 0.59 | 0.61 | 8.21 | 15.43 | 11.85 | Superior on proteins with low homology |

| TALE | 0.38 | 0.56 | 0.59 | 8.95 | 16.12 | 12.64 | Explains predictions via KG paths |

BP: Biological Process, MF: Molecular Function, CC: Cellular Component. F-max: maximum F1-score. S-min: minimum semantic distance (lower is better).
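
For readers who want to reproduce the F-max column, the following is a minimal sketch of the protein-centric definition used in the CAFA challenges: precision is averaged over proteins with at least one prediction at the threshold, recall over all benchmark proteins, and the best F1 across thresholds is reported. It assumes every protein has a non-empty truth set and that predicted and true terms have already been propagated to their ancestors; the input layout is hypothetical.

```python
def f_max(predictions, truth, thresholds=None):
    """Protein-centric F-max.

    predictions: {protein: {go_id: score}}
    truth:       {protein: set of go_id}, each set non-empty
    """
    if thresholds is None:
        thresholds = [i / 100 for i in range(1, 101)]
    best = 0.0
    for t in thresholds:
        precisions, recalls = [], []
        for protein, true_terms in truth.items():
            scored = predictions.get(protein, {})
            predicted = {g for g, s in scored.items() if s >= t}
            hits = len(predicted & true_terms)
            if predicted:  # precision only counts proteins with predictions
                precisions.append(hits / len(predicted))
            recalls.append(hits / len(true_terms))
        if not precisions:
            continue
        p = sum(precisions) / len(precisions)
        r = sum(recalls) / len(recalls)
        if p + r > 0:
            best = max(best, 2 * p * r / (p + r))
    return best


# Toy data with placeholder term names.
toy_truth = {"P1": {"A", "B"}, "P2": {"C"}}
toy_predictions = {
    "P1": {"A": 0.9, "B": 0.4, "D": 0.3},
    "P2": {"C": 0.8, "E": 0.85},
}
toy_fmax = f_max(toy_predictions, toy_truth)
```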

Table 2: Long-Tail Problem Performance (Proteins with <30% Sequence Identity to Training Set)

| Model | Recall@Top10 (BP) | Recall@Top10 (MF) | Percentage of "No Prediction" Cases |

|---|---|---|---|

| DeepGO | 0.22 | 0.31 | 18% |

| DeepGO-SE | 0.29 | 0.38 | 12% |

| TALE | 0.25 | 0.34 | 15% |

Recall@Top10 measures if the true annotation is in the model's top 10 predictions.

Experimental Protocols

Protocol 1: Running a Standard Prediction Pipeline with DeepGO/DeepGO-SE

Objective: To obtain Gene Ontology annotations for a novel protein sequence.

- Input Preparation: Format your protein sequence(s) in a standard FASTA file. Ensure no line breaks within the sequence.

- Model Selection: Choose between DeepGO (faster, good for proteins with some homology) and DeepGO-SE (slower, uses embeddings, better for long-tail sequences).

- Running the Prediction:

- For DeepGO: Use the command `python predict.py --model deepgo --input input.fasta --output predictions.json`.

- For DeepGO-SE: Use `python predict.py --model deepgose --input input.fasta --embeddings esm2 --output predictions.json`.

- Output Analysis: The JSON file contains predicted GO terms, confidence scores, and for DeepGO, attention maps highlighting important sequence regions. Filter predictions using a confidence threshold (e.g., 0.3).

Protocol 2: Validating Predictions Using TALE's Knowledge Graph Reasoning

Objective: To generate and evaluate explanatory paths for high-confidence predictions.

- Generate Base Predictions: Use DeepGO-SE to generate an initial set of high-confidence (>0.7) GO term predictions for your protein.

- Build Knowledge Graph Query: For each high-confidence term, TALE constructs a subgraph query linking the protein's sequence features (via homology to known proteins) to the target GO term through intermediate ontology terms and proteins.

- Path Reasoning & Scoring: Execute the query. TALE uses a modified PageRank algorithm over the heterogeneous graph (proteins, sequences, GO terms) to find and score all possible evidence paths.

- Evidence Integration: The scores from all paths leading to a specific GO term are aggregated to produce a final confidence score and a set of interpretable evidence chains for researcher review.

Pathway & Workflow Visualizations

Title: DeepGO-SE and TALE Integrated Workflow

Title: TALE Knowledge Graph Reasoning Path

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in ML/AI-Driven GO Annotation |

|---|---|

| High-Quality Training Data (e.g., Swiss-Prot) | Curated, experimentally validated GO annotations are essential for supervised model training and reducing error propagation. |

| Pre-trained Protein Language Model (e.g., ESM-2) | Provides contextual sequence embeddings that capture evolutionary and structural constraints, crucial for DeepGO-SE's performance on novel sequences. |

| GO Graph Structure (OBO Format) | The formal ontology defining term relationships (is_a, part_of) is required for model constraint (DeepGO) and knowledge graph reasoning (TALE). |

| Heterogeneous Knowledge Graph (e.g., integrated with STRING, UniProt) | Combines protein-protein interactions, homology, and annotations into a unified graph for TALE's multi-hop reasoning and evidence generation. |

| Benchmark Dataset (e.g., CAFA challenges) | Standardized, time-stamped evaluation sets are necessary for fair model comparison and quantifying progress on the long-tail problem. |

| Compute Infrastructure (GPU clusters) | Essential for training large models (transformers, graph neural networks) and generating predictions at scale for proteomes. |

The Power of Protein Language Models (e.g., ESM, ProtBERT) for Zero-Shot Function Prediction

Technical Support Center

Troubleshooting Guides & FAQs

Q1: I am using the ESM-2 embeddings for zero-shot prediction on a novel protein family. The model consistently returns a very low confidence score (e.g., < 0.05) for all Gene Ontology (GO) terms. What could be the issue?

- A: This is a common issue when the model encounters protein sequences with poor representation in its training distribution, a classic long-tail problem. First, check the sequence length. ESM models have a maximum context window (e.g., 1024 for ESM-2). Truncate or chunk longer sequences. Second, analyze the amino acid composition. An overabundance of low-complexity regions or unknown residues ("X") can degrade embedding quality. Use tools like `seg` or `trx` to mask low-complexity regions before generating embeddings. Finally, this low confidence may accurately reflect the model's uncertainty on a truly novel fold. Consider this a candidate for experimental prioritization in your long-tail annotation pipeline.
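
The chunking option mentioned in this answer can be sketched as follows. The window of 1024 comes from the text and the stride of 256 is an illustrative choice; depending on how the tokenizer counts special tokens, a slightly smaller window may be needed. The function name is hypothetical.

```python
def chunk_sequence(seq, window=1024, stride=256):
    """Split a long sequence into overlapping windows.

    Sequences that already fit are returned unchanged as a single chunk.
    The final chunk may be shorter than `window`.
    """
    if len(seq) <= window:
        return [seq]
    chunks = []
    start = 0
    while True:
        chunks.append(seq[start:start + window])
        if start + window >= len(seq):
            break
        start += stride
    return chunks


long_seq = "ACDEFGHIKL" * 150  # 1500 residues of placeholder sequence
chunks = chunk_sequence(long_seq)
```

Per-chunk embeddings then need to be pooled (for example, averaged) into one per-protein vector; the right pooling strategy is a design decision this sketch does not settle.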

Q2: When fine-tuning ProtBERT on a small, curated dataset of a specific GO branch (e.g., "ion transmembrane transport"), the model fails to generalize and overfits severely. How can I mitigate this?

- A: Overfitting is acute in the long-tail where labeled data is scarce. Implement the following protocol:

- Use LoRA (Low-Rank Adaptation): Do not fine-tune all parameters. Inject trainable rank decomposition matrices into the attention layers, drastically reducing trainable parameters.

- Aggressive Data Augmentation: Use backwards translation (protein->DNA->mutate->protein) to generate synthetic variants while preserving function.

- Sharpness-Aware Minimization (SAM): Use an optimizer that seeks parameters in flat, rather than sharp, loss minima, which improves generalization.

- Early Stopping with a Strict Criterion: Monitor performance on a held-out validation set from the same long-tail family and stop when loss plateaus for 5 epochs.
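
The early-stopping criterion in the last step is framework-independent and can be sketched in plain Python. The class below is a hypothetical helper, not part of any training library; the patience of 5 epochs mirrors the text.

```python
class EarlyStopper:
    """Signal a stop once validation loss has not improved for `patience` epochs."""

    def __init__(self, patience=5, min_delta=0.0):
        self.patience = patience
        self.min_delta = min_delta
        self.best = float("inf")
        self.stale_epochs = 0

    def should_stop(self, val_loss):
        """Record one epoch's validation loss and report whether to stop."""
        if val_loss < self.best - self.min_delta:
            self.best = val_loss
            self.stale_epochs = 0
        else:
            self.stale_epochs += 1
        return self.stale_epochs >= self.patience


# Illustrative loss curve: improvement for two epochs, then a plateau.
stopper = EarlyStopper(patience=5)
losses = [1.0, 0.9, 0.9, 0.9, 0.9, 0.9, 0.9, 0.9]
stopped_at = next(i for i, loss in enumerate(losses) if stopper.should_stop(loss))
```

In a real run, `should_stop` would be called once per epoch with the loss on the held-out long-tail validation set, and the checkpoint at `best` would be restored.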

Q3: My zero-shot pipeline assigns plausible GO terms to a protein, but the predictions lack precision (too broad) and I cannot validate them with known domain/motif databases. What steps should I take?

- A: This indicates a need for post-prediction refinement, crucial for long-tail annotations.

- Implement Prediction Calibration: Use temperature scaling or isotonic regression on a set of known protein predictions to calibrate the confidence scores.

- Employ Semantic Similarity Filtering: Use the hierarchical structure of GO. If a specific child term (e.g., "serine-type endopeptidase activity") is predicted with low confidence, but its parent term ("hydrolase activity") is high, prioritize the parent. Reject predictions that are isolated leaves in the GO graph with no supported parent or sibling terms.

- Cross-check with Ab Initio Tools: Run the sequence through tools like `InterProScan` or `HMMER` against the `Pfam` database. While they may fail on the long-tail, any weak hit can serve as crucial corroborating evidence to boost prediction credibility.
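
As an illustration of the calibration step, the sketch below fits a single temperature for per-term sigmoid outputs by grid search over the negative log-likelihood. It assumes you have raw logits and binary labels for a set of proteins with known annotations; the grid bounds and function names are hypothetical choices, and a gradient-based fit would work equally well.

```python
import math


def _sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def nll(logits, labels, temperature):
    """Mean binary negative log-likelihood after dividing logits by the temperature."""
    total = 0.0
    for z, y in zip(logits, labels):
        p = min(max(_sigmoid(z / temperature), 1e-12), 1 - 1e-12)
        total -= y * math.log(p) + (1 - y) * math.log(1 - p)
    return total / len(logits)


def fit_temperature(logits, labels, grid=None):
    """Pick the temperature on the grid that minimizes the NLL."""
    if grid is None:
        grid = [0.25 + 0.05 * i for i in range(96)]  # 0.25 to 5.0
    return min(grid, key=lambda t: nll(logits, labels, t))


# Overconfident toy model: every logit has magnitude 4, but only 75% are right.
toy_logits = [4, 4, 4, 4, -4, -4, -4, -4]
toy_labels = [1, 1, 1, 0, 0, 0, 0, 1]
temperature = fit_temperature(toy_logits, toy_labels)
calibrated = _sigmoid(4 / temperature)
```

A fitted temperature above 1 softens overconfident scores, which makes downstream thresholds more meaningful on long-tail proteins.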

Q4: How do I handle the computational cost of generating embeddings for a whole proteome (e.g., 10,000+ sequences) using large PLMs like ESM-3?

- A: Optimize your workflow:

- Use Pre-computed Embeddings: Check if your proteins of interest are in repositories like the ESMet database.

- Batch Processing & Hardware: Ensure you are using a GPU with sufficient VRAM. The batch size can significantly impact speed; find the optimal size for your hardware.

- Model Selection: For large-scale screening, consider using a smaller but performant model like `ESM-2 650M` instead of the 15B parameter version for a marginal accuracy trade-off.

- Pipeline Optimization: Separate the embedding generation (GPU-intensive) from the classifier inference (less intensive). Store embeddings in a vector database (e.g., FAISS) for fast retrieval and multiple downstream prediction tasks.

Q5: The GO term hierarchy is complex. How can I structure a zero-shot prediction task to respect the "true path rule"?

- A: You must frame the prediction as a multi-label, hierarchical classification problem. Do not treat GO terms independently.

- Method: Use a structured loss function like the Hierarchical Multi-Label Cross-Entropy (HMCE) loss during any fine-tuning or adapter training.

- In Zero-Shot Setting: Post-process your flat predictions by propagating confidence scores up the GO graph. If a child term is predicted, ensure all its ancestor terms (not only its direct parents) are also added to the prediction set with at least the same confidence. Use the `go-basic.obo` file and a library like `goatools` to manage the ontology structure.
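
The propagation step can be sketched without any ontology library by representing the graph as a plain child-to-parents mapping. The toy hierarchy below is hand-written and simplified for illustration; in practice the mapping would be loaded from `go-basic.obo`.

```python
def propagate_scores(scores, parents):
    """Enforce the true path rule on flat predictions.

    scores:  {term: confidence}
    parents: {term: set of direct parent terms}
    Every ancestor ends up with at least the highest score of its descendants.
    """
    propagated = dict(scores)
    stack = list(scores.items())
    while stack:
        term, score = stack.pop()
        for parent in parents.get(term, ()):
            if propagated.get(parent, 0.0) < score:
                propagated[parent] = score
                stack.append((parent, score))
    return propagated


# Simplified toy hierarchy (not the full set of real GO edges).
toy_parents = {
    "serine-type endopeptidase activity": {"peptidase activity"},
    "peptidase activity": {"hydrolase activity"},
    "hydrolase activity": {"catalytic activity"},
    "catalytic activity": {"molecular_function"},
}
flat = {"serine-type endopeptidase activity": 0.7, "hydrolase activity": 0.9}
consistent = propagate_scores(flat, toy_parents)
```

Taking the maximum over descendants is one common convention; it guarantees that thresholding the output at any cutoff yields a term set that is closed under ancestry.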

Key Quantitative Performance Data

Table 1: Benchmark Performance of PLMs on Zero-Shot GO Prediction (CAFA3 Challenge Metrics)

| Model | F-max (Molecular Function) | F-max (Biological Process) | S-min (Cellular Component) | Publication/Code Source |

|---|---|---|---|---|

| ESM-1b (Fine-tuned) | 0.54 | 0.41 | 9.50 | Rao et al., 2019 |

| ProtBERT (Zero-Shot) | 0.48 | 0.36 | 10.25 | Brandes et al., 2022 |

| ESM-2 (15B, Zero-Shot) | 0.59 | 0.45 | 8.90 | Lin et al., 2023 |

| State-of-the-Art (Non-PLM) | 0.61 | 0.47 | 7.20 | Zhou et al., 2019 |

Table 2: Impact on Long-Tail Annotation (Simulated Study)

| Protein Set (by # of known homologs) | % Annotated by BLAST | % Annotated by ESM-2 Zero-Shot | % Validated by Subsequent Experiment |

|---|---|---|---|

| >50 homologs (Head) | 95% | 92% | 88% |

| 10-50 homologs (Mid-Tail) | 65% | 78% | 75% |

| <10 homologs (Long-Tail) | <20% | 52% | 49% |

Experimental Protocol: Zero-Shot GO Prediction with ESM-2

Objective: To predict Gene Ontology terms for a novel protein sequence without sequence homology or task-specific fine-tuning.

Materials: See "The Scientist's Toolkit" below.

Methodology:

- Sequence Preprocessing:

- Input: Raw amino acid sequence in FASTA format.

- Mask low-complexity regions using `seg` or `trx`.

- Replace any non-standard amino acids (U, O, etc.) or ambiguous "X" residues. If `X` is abundant, consider excluding the protein from analysis.

- Truncate sequences longer than the model's context length (e.g., 1024 for ESM-2) to the first N residues, or chunk into overlapping segments (stride 256).

Embedding Generation:

- Load the pre-trained `esm2_t33_650M_UR50D` model and tokenizer.

- Tokenize the preprocessed sequence.

- Pass tokens through the model and extract the per-residue representations from the final layer (layer 33 for this model). For a per-protein embedding, take the mean across all residue embeddings, excluding the special `<cls>` and `<eos>` positions.

Zero-Shot Inference:

- Use a lightweight classifier (e.g., logistic regression), trained beforehand on embeddings of proteins with known GO annotations, that maps the protein embedding to GO term probabilities.

- Alternatively, compute the cosine similarity between the target protein embedding and a database of pre-embedded proteins with known GO terms. Assign terms from the k-nearest neighbors (k-NN), weighted by similarity.

Post-Processing & Validation:

- Apply a confidence threshold (e.g., 0.3) to filter out low-probability predictions.

- Propagate predictions up the GO hierarchy using the `go-basic.obo` file to ensure compliance with the "true path rule."

- Validate predictions against any available experimental data or weak signals from ab initio motif scans (e.g., from `InterProScan`).
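
The k-NN alternative described under Zero-Shot Inference can be sketched in pure Python. This is an illustration under stated assumptions: embeddings are plain lists of floats, the reference set is a small in-memory list, and each neighbor's vote is its cosine similarity normalized by the total similarity of the k neighbors. At proteome scale a similarity-search library would replace the linear scan.

```python
import math
from collections import defaultdict


def cosine(a, b):
    """Cosine similarity; returns 0.0 if either vector has zero norm."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def knn_go_terms(query, reference, k=3):
    """Transfer GO terms from the k most similar annotated proteins.

    reference: list of (embedding, set_of_go_terms)
    Returns {go_term: similarity-weighted score in [0, 1]}.
    """
    scored = [(cosine(query, emb), terms) for emb, terms in reference]
    neighbours = sorted(scored, key=lambda pair: pair[0], reverse=True)[:k]
    total = sum(max(sim, 0.0) for sim, _ in neighbours)
    scores = defaultdict(float)
    if total == 0:
        return {}
    for sim, terms in neighbours:
        for term in terms:
            scores[term] += max(sim, 0.0) / total
    return dict(scores)


# Two-dimensional toy embeddings with placeholder term names.
toy_reference = [([1.0, 0.0], {"A"}), ([0.0, 1.0], {"B"}), ([1.0, 1.0], {"A", "C"})]
toy_scores = knn_go_terms([1.0, 0.0], toy_reference, k=2)
```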

Visualizations

Zero-Shot Prediction Pipeline

GO Hierarchy & Prediction Propagation

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Protocol |

|---|---|

| ESM-2/ProtBERT Pre-trained Models | Foundational PLMs that convert protein sequences into numerical embeddings capturing structural and functional semantics. |

| GO-basic.obo File | The ontology structure file defining the hierarchical relationships between GO terms, essential for post-processing predictions. |

| InterProScan Suite | Tool to run scans against protein signature databases (Pfam, PROSITE). Provides weak, ab initio evidence to corroborate PLM predictions on long-tail proteins. |

| HMMER Software | For building and scanning custom profile Hidden Markov Models from any few known homologs of a long-tail family, to complement PLM insights. |

| FAISS Library (Facebook AI Similarity Search) | Enables efficient similarity search and clustering of massive protein embedding databases for k-NN based zero-shot prediction. |

| LoRA (Low-Rank Adaptation) Implementation | Allows parameter-efficient fine-tuning of large PLMs on small, long-tail-specific datasets without catastrophic overfitting. |

| CATH/Pfam Database | Used for controlled benchmarking and to define the "long-tail" (proteins with no hits or weak hits in these databases). |

Troubleshooting Guides & FAQs

PhyloProfile FAQ & Troubleshooting

Q1: My PhyloProfile plot shows no data for a gene of interest, even though I know orthologs exist. What are the common causes? A: This is typically a data input issue. Verify 1) The sequence IDs in your Core Gene list exactly match those in the Ortholog file. 2) The taxonomic names in your Ortholog file match those in the Taxonomy file. 3) Your input files are tab-delimited and have the correct column headers (geneID, ncbiID, orthologID). Check for hidden whitespace or special characters.

Q2: How do I interpret the "Paralog Ratio" value in PhyloProfile, and what is a critical threshold? A: The Paralog Ratio is the number of in-paralogs (within-species paralogs) divided by the number of species with orthologs. It indicates gene family expansion.

- Ratio = 0: Single-copy ortholog across species.

- Ratio ~ 0-1: Moderate, lineage-specific expansion.

- Ratio > 1: Significant expansion, common in gene families like olfactory receptors. A high ratio (>2) may suggest challenges in inferring a single orthologous relationship for functional annotation transfer.
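
The ratio described above is simple to compute from a per-species list of hits. The sketch below assumes that the in-paralogs in a species are the copies beyond the first; this counting convention is an assumption made for illustration, chosen so that single-copy orthologs give a ratio of 0, and the function name is hypothetical.

```python
def paralog_ratio(hits_by_species):
    """In-paralog count divided by the number of species with at least one hit.

    hits_by_species: {species: list of gene IDs found in that species}
    Returns None when no species has a hit.
    """
    with_hits = {s: set(genes) for s, genes in hits_by_species.items() if genes}
    if not with_hits:
        return None
    in_paralogs = sum(len(genes) - 1 for genes in with_hits.values())
    return in_paralogs / len(with_hits)


single_copy = paralog_ratio({"human": ["h1"], "mouse": ["m1"]})
expanded = paralog_ratio({"human": ["h1"], "mouse": ["m1", "m2"], "fly": []})
```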

Q3: PhyloProfile run fails with "OutOfMemoryError" for large datasets. How can I optimize performance?

A: Use the binWidth and binHeight parameters in the plotting function to reduce resolution. Pre-filter your input data to the taxonomic range of interest. For extremely large-scale analyses (e.g., >1000 genes across >500 species), consider running the core phylogenomic pipeline (e.g., orthologr) on a high-performance computing cluster and use PhyloProfile for visualization of subsets.

Ensembl Compara FAQ & Troubleshooting

Q4: The Ensembl Compara Gene Tree for my gene shows unexpected branching or species placement. What does this indicate? A: This often highlights the "long-tail" problem. Unusual topology can result from: 1) Sequence divergence: poor alignment in highly divergent "long-tail" species. 2) Incomplete lineage sorting: a real biological signal. 3) Annotation error in the source genomes of non-model organisms. Always check the alignment coverage and percent identity in the tree node pop-up. Consider using the "Proteinic" view of the tree, which is less sensitive to codon position.

Q5: What is the practical difference between "Orthologs (Compara)" and "Orthologs (Best Reciprocal Hit)" in Ensembl, and which should I use for GO annotation? A: See the table below for a structured comparison.

| Feature | Orthologs (Compara) | Orthologs (Best Reciprocal Hit) |

|---|---|---|

| Method | Phylogenetic tree-based (precision-focused). | Pairwise sequence comparison (speed-focused). |

| Handles Paralogy | Yes, identifies stable orthologs via tree reconciliation. | No, can mis-identify recent paralogs as orthologs. |

| Computational Cost | High. | Low. |

| Recommendation for GO | Preferred for novel annotation, especially for "long-tail" species with higher divergence. | Useful for initial, high-confidence filtering in well-conserved families. |

Q6: How can I programmatically retrieve high-confidence orthologs from Ensembl Compara for a large gene list?

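A: Use the Ensembl REST API with the `homology/` endpoint. For batch processing, script the requests so that each gene ID is queried in turn, the JSON response is filtered by orthology type and percent identity, and the API's rate limits are respected.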
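
A minimal Python sketch of such a batch script is shown below. Several details are assumptions to verify against the current Ensembl REST documentation: the URL layout `homology/id/:species/:id`, the `type=orthologues` parameter, and the response fields (`data`, `homologies`, `type`, `target`, `perc_id`). Using one-to-one orthologs above an identity cutoff as a proxy for "high confidence" is likewise an illustrative choice.

```python
import json
import time
import urllib.request

SERVER = "https://rest.ensembl.org"


def homology_url(species, gene_id):
    """Build the request URL (endpoint layout is an assumption; check the docs)."""
    return f"{SERVER}/homology/id/{species}/{gene_id}?type=orthologues"


def fetch_homologies(species, gene_id, timeout=30):
    """Fetch the homology record for one gene as parsed JSON."""
    request = urllib.request.Request(
        homology_url(species, gene_id),
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return json.load(response)


def high_confidence_orthologs(payload, min_identity=70.0):
    """Keep one-to-one orthologs whose target identity meets the cutoff."""
    results = []
    for entry in payload.get("data", []):
        for homology in entry.get("homologies", []):
            target = homology.get("target", {})
            if (homology.get("type") == "ortholog_one2one"
                    and target.get("perc_id", 0.0) >= min_identity):
                results.append((entry.get("id"), target.get("id"),
                                target.get("species"), target.get("perc_id")))
    return results


def batch_orthologs(species, gene_ids, pause=0.1):
    """Query genes one by one, pausing between requests to respect rate limits."""
    collected = []
    for gene_id in gene_ids:
        collected.extend(high_confidence_orthologs(fetch_homologies(species, gene_id)))
        time.sleep(pause)
    return collected
```

Calling `batch_orthologs("human", ids)` with a list of Ensembl gene IDs would return one tuple per retained ortholog; network errors and retries are deliberately left to the caller.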

Experimental Protocols

Protocol 1: Generating a Custom PhyloProfile Input from OrthoFinder Results

Purpose: To create the necessary input files for PhyloProfile visualization from a standard OrthoFinder output, enabling custom phylogenomic profiling.

Materials:

- OrthoFinder results directory (specifically `Orthogroups/Orthogroups.tsv` and `Orthogroups/Orthogroups_UnassignedGenes.tsv`)

- NCBI taxonomy IDs for your species.

- R with `tidyverse` and `phylotools` packages installed.

Methodology:

- Process Orthogroups File: Load `Orthogroups.tsv`. Filter for your gene(s) of interest and transpose the table so columns are: `orthoID`, `species`, `geneID`.

- Create Core Gene File: Generate a two-column file: `geneID` and `ncbiID`. The `ncbiID` should be the taxonomy ID of the query species.

- Create Ortholog File: From the transposed table, create a file with columns: `geneID`, `ncbiID`, `orthologID`. The `ncbiID` here is for the ortholog's species.

- Create Taxonomy File: Create a file mapping all `ncbiID`s used to their full taxonomic names (e.g., from the `species_tree.txt`).

- Import into PhyloProfile: Use the "Input Data" pane in the PhyloProfile app to upload these three files and generate the profile plot.
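
The first methodology step (reshaping `Orthogroups.tsv` into one row per gene) is the part most prone to errors, so a sketch may help. The protocol itself lists R; the example below uses Python purely for illustration and assumes the usual OrthoFinder layout: a header row with one column per species and cells holding comma-separated gene IDs. The function name is hypothetical.

```python
import csv
import io


def orthogroups_to_long(tsv_text, genes_of_interest=None):
    """Reshape Orthogroups.tsv content into (orthoID, species, geneID) rows.

    If genes_of_interest is given, only orthogroups containing at least
    one of those genes are kept.
    """
    reader = csv.reader(io.StringIO(tsv_text), delimiter="\t")
    species_names = next(reader)[1:]
    rows = []
    for record in reader:
        if not record:
            continue
        ortho_id, cells = record[0], record[1:]
        genes = [[g.strip() for g in cell.split(",") if g.strip()] for cell in cells]
        if genes_of_interest is not None:
            if not any(g in genes_of_interest for per_species in genes for g in per_species):
                continue
        for species, gene_list in zip(species_names, genes):
            rows.extend((ortho_id, species, gene) for gene in gene_list)
    return rows


# Placeholder identifiers; real files come from the OrthoFinder output directory.
toy_tsv = (
    "Orthogroup\thuman\tmouse\n"
    "OG0000001\tgeneH1, geneH2\tgeneM1\n"
    "OG0000002\tgeneH3\t\n"
)
long_rows = orthogroups_to_long(toy_tsv, genes_of_interest={"geneH1"})
```

Mapping each species name to its NCBI taxonomy ID then yields the `ncbiID` column required by the ortholog file.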

Protocol 2: Validating Candidate GO Terms via Ensembl Compara Phylogenetic Context

Purpose: To assess the reliability of a candidate GO term for a gene in a "long-tail" species by examining the consistency of its orthologs' annotations.

Materials:

- Ensembl Genome Browser access (via web or Biomart).

- Gene ID for your "long-tail" species gene.

- R or Python environment for basic data analysis.

Methodology:

- Retrieve Compara Orthologs: On the Ensembl gene page for your query gene, navigate to "Comparative Genomics" > "Gene Tree" or "Orthologs". Select the "Compara" ortholog set.

- Extract Annotation Data: Download the list of orthologs and their existing GO annotations (if any) using the "Export data" function or via the Biomart tool, selecting attributes: `Ensembl Gene ID`, `GO Term Accession`, `GO Term Name`.

- Perform Consistency Check: For the candidate GO term (e.g., "GO:0005634 - nucleus"), calculate the annotation frequency among high-confidence orthologs (e.g., those with >70% protein identity). See logic below.

- Decision Threshold: If the candidate term is present in >80% of annotated high-confidence orthologs from a clade that includes well-studied model organisms, it can be considered reliable for transfer. If frequency is low (<30%), the term is likely not conserved.
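
The consistency check and decision threshold can be sketched as a single function. The cutoffs (>70% identity, >80% to accept, <30% to reject) are the example values from the protocol; the input layout, the "inconclusive" middle band, and the function name are assumptions added for illustration. The clade requirement in the decision step is not modeled here.

```python
def transfer_decision(candidate, orthologs, min_identity=70.0, accept=0.8, reject=0.3):
    """Decide whether a candidate GO term is consistently annotated among orthologs.

    orthologs: list of (percent_identity, set_of_go_ids)
    Returns (frequency, verdict); frequency is None if no ortholog qualifies.
    """
    annotated = [terms for identity, terms in orthologs
                 if identity > min_identity and terms]
    if not annotated:
        return None, "insufficient data"
    frequency = sum(candidate in terms for terms in annotated) / len(annotated)
    if frequency > accept:
        verdict = "reliable for transfer"
    elif frequency < reject:
        verdict = "likely not conserved"
    else:
        verdict = "inconclusive"
    return frequency, verdict


# Illustrative ortholog set; identities and annotations are made up.
toy_orthologs = [
    (85.0, {"GO:0005634"}),
    (90.0, {"GO:0005634", "GO:0005737"}),
    (75.0, {"GO:0005634"}),
    (72.0, {"GO:0005634"}),
    (95.0, {"GO:0005737"}),
    (60.0, {"GO:0005737"}),  # below the identity cutoff, ignored
    (80.0, set()),           # unannotated, ignored
]
frequency, verdict = transfer_decision("GO:0005634", toy_orthologs)
```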

Title: GO Annotation Validation via Orthology Consistency Check

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Orthology-Based Annotation |

|---|---|

| OrthoFinder | Software for genome-scale orthogroup inference from protein sequences. Produces groups of orthologous genes, which form the basis for custom PhyloProfile analysis. |

| DIAMOND | Ultra-fast protein sequence aligner. Used as a pre-filtering step (e.g., in PhyloProfile's data generation pipeline) to identify potential homologs before precise orthology assignment. |

| BUSCO | Benchmarking tool that uses sets of universal single-copy orthologs to assess genome completeness and annotation quality. Critical for evaluating input data for "long-tail" species. |

| PANTHER Classification System | Resource of protein families, subfamilies, and HMMs. Provides pre-calculated phylogenetic trees and functional annotations, useful for validating or supplementing Ensembl Compara trees. |

| Bioconductor biomaRt | R package that provides direct programmatic access to Ensembl (including Compara) and other BioMart databases. Essential for automating large-scale ortholog and annotation retrieval. |

| FastTree | Tool for approximate maximum-likelihood phylogenetic trees from alignments. Used internally by many pipelines (including older Ensembl Compara) for rapid tree building on large datasets. |

| Custom PhyloProfile Input Files (coreGene, ortholog, taxonomy.txt) | The structured data files required to run the PhyloProfile Shiny app on any set of genes and species, enabling flexibility beyond pre-computed databases. |

Technical Support Center

Troubleshooting Guides & FAQs

Q1: My pipeline is failing to process PDFs from older journal archives. The text extraction returns garbled characters or empty strings. How can I resolve this? A1: This is a common long-tail issue due to non-standard PDF encodings and scanned images in older literature. Implement a pre-processing module with fallback strategies.

- Step 1: Check the PDF's internal structure with tools like `pdfinfo` or `pymupdf`.

- Step 2: For born-digital PDFs with encoding issues, try multiple text extractors (e.g., `pdfplumber`, `pdftotext`) in sequence.

- Step 3: For scanned PDFs, use an OCR engine like Tesseract with a custom biomedical dictionary. For GO annotation, prioritize OCR on Materials/Methods and Results sections to conserve compute resources.

- Step 4: Log the PDF source and extraction method used to identify systematic issues with specific publishers or time periods.
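
The fallback strategy in Steps 2 to 4 can be sketched as a small driver that tries extractors in order and logs what happened. The extractors are passed in as plain callables so that no particular PDF library is assumed; the quality heuristic in `looks_like_text` and the 200-character minimum are hypothetical choices.

```python
def looks_like_text(text, min_ratio=0.85):
    """Heuristic: most characters should be letters, digits, spaces, or punctuation."""
    if not text:
        return False
    ok = sum(ch.isalnum() or ch.isspace() or ch in ".,;:()-%/'\"" for ch in text)
    return ok / len(text) >= min_ratio


def extract_with_fallback(pdf_path, extractors, min_chars=200):
    """Try each (name, callable) extractor in order until one yields usable text.

    Returns (text, extractor_name, log); log records every attempt so that
    systematic failures can be traced to a publisher or time period.
    """
    log = []
    for name, extractor in extractors:
        try:
            text = extractor(pdf_path) or ""
        except Exception as exc:  # any extractor failure triggers the next fallback
            log.append((name, f"error: {exc}"))
            continue
        if len(text.strip()) >= min_chars and looks_like_text(text):
            log.append((name, "ok"))
            return text, name, log
        log.append((name, "rejected"))
    return "", None, log
```

In a real pipeline the list would hold thin wrappers around the text extractors and the OCR engine named above, ordered from cheapest to most expensive.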

Q2: The Named Entity Recognizer (NER) is performing poorly on newly discovered gene or protein names, leading to missed evidence for novel GO annotations. What can I do? A2: This directly addresses the vocabulary drift problem in long-tail GO research. Retrain your NER model with an active learning loop.

- Protocol: Active Learning for NER Expansion

- Collection: Extract all non-annotated tokens from recent (last 2 years) full-text articles in your corpus.

- Sampling: Rank these tokens by frequency and by occurrence in sentences containing known GO annotation verbs (e.g., "inhibits," "promotes," "localizes to").

- Annotation: Manually annotate a sample (e.g., 500 instances) of the high-rank tokens as `GENE`, `PROTEIN`, or `OTHER`.

- Retraining: Fine-tune your base NER model (e.g., a pre-trained BioBERT) with the new annotated data. Validate on a held-out set of recent literature.

- Integration: Deploy the updated model and schedule quarterly review cycles.
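
The Collection and Sampling steps of this protocol can be sketched as a ranking function. Everything beyond the protocol text is an assumption for illustration: the regular-expression tokenizer, the rule that a candidate must contain an uppercase letter or digit after its first character (to skip ordinary words), and ranking by cue-verb co-occurrence first and frequency second.

```python
import re
from collections import Counter

CUE_VERBS = ("inhibits", "promotes", "localizes to")
TOKEN = re.compile(r"[A-Za-z][A-Za-z0-9-]+")


def is_candidate(token, known_entities):
    """Unknown tokens that look like gene or protein symbols (hypothetical heuristic)."""
    if token in known_entities:
        return False
    return any(ch.isupper() or ch.isdigit() for ch in token[1:])


def rank_candidate_tokens(sentences, known_entities, top_n=500):
    """Rank unannotated tokens by cue-verb co-occurrence, then by frequency."""
    frequency, cue_hits = Counter(), Counter()
    for sentence in sentences:
        has_cue = any(verb in sentence.lower() for verb in CUE_VERBS)
        for token in set(TOKEN.findall(sentence)):
            if is_candidate(token, known_entities):
                frequency[token] += 1
                cue_hits[token] += has_cue
    ranked = sorted(frequency, key=lambda t: (-cue_hits[t], -frequency[t], t))
    return ranked[:top_n]


# Toy corpus with placeholder symbols.
toy_sentences = [
    "ZNF999 localizes to the nucleus.",
    "ZNF999 promotes growth.",
    "The sample contained ABC1 protein.",
    "TP53 inhibits growth.",
]
to_annotate = rank_candidate_tokens(toy_sentences, known_entities={"TP53"})
```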

Q3: My relation extraction model has high precision but low recall for "located_in" Cellular Component relations, especially for rare organelles. How can I improve coverage? A3: Focus on expanding the pattern dictionary and leveraging syntactic parsing.

- Step 1: Manually curate seed sentences from high-quality, recently annotated papers for long-tail Cellular Components (e.g., "ripoptosome," "glycosome").

- Step 2: Use dependency parsing on these sentences to identify common syntactic patterns (e.g., `(protein) localized to the (organelle)`).

- Step 3: Convert these patterns into generalized rules using part-of-speech tags and dependency relations.

- Step 4: Incorporate these rules as additional features into your machine learning-based relation extractor, or run them as a separate high-recall module whose output is ensembled with your primary model.
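As a rough illustration of Step 4, the sketch below runs a separate rule module and unions its output with the primary model's predictions. To stay self-contained it uses regular expressions as a simplified stand-in for rules derived from dependency parses; the patterns, `rule_based_relations`, and `ensemble` are assumptions made for the example, not part of any particular toolkit.

```python
import re
from typing import Iterable, List, Set, Tuple

Relation = Tuple[str, str]  # (protein mention, cellular component)

# Surface patterns standing in for generalized dependency rules.
LOCALIZATION_PATTERNS = [
    re.compile(r"(?P<protein>[A-Z][A-Za-z0-9-]+) (?:is |was )?"
               r"(?:localized|localizes|targeted) to the (?P<cc>[a-z]+)"),
    re.compile(r"(?P<protein>[A-Z][A-Za-z0-9-]+) (?:is |was )?"
               r"(?:found|detected|present) in the (?P<cc>[a-z]+)"),
]


def rule_based_relations(sentence: str, components: Set[str]) -> List[Relation]:
    """Return (protein, component) pairs matched by any pattern."""
    found: List[Relation] = []
    for pattern in LOCALIZATION_PATTERNS:
        for match in pattern.finditer(sentence):
            # Only keep matches whose component is in the curated seed list.
            if match.group("cc") in components:
                found.append((match.group("protein"), match.group("cc")))
    return found


def ensemble(primary: Iterable[Relation], rules: Iterable[Relation]) -> Set[Relation]:
    """Union the two outputs: trades some precision for higher recall."""
    return set(primary) | set(rules)
```

A plain union is the simplest ensembling choice. If precision drops too far, the rule output could instead be routed to curators for review before acceptance.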

Q4: The pipeline's throughput has degraded significantly as the corpus size scaled to millions of articles. What are the key architectural optimizations? A4: Implement a distributed, modular pipeline.

- Solution: Transition from a monolithic script to a workflow manager (e.g., Apache Airflow, Nextflow). Key stages (PDF fetching, text extraction, NER, relation extraction, database upload) should be separate, scalable containers. Use a high-throughput message queue (e.g., RabbitMQ, Apache Kafka) to manage the document flow. Database writes should be batched.
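The batching advice can be illustrated with a small sketch. It uses Python's built-in `sqlite3` purely as a stand-in for whatever database the pipeline writes to, and the `evidence` table, its columns, and the batch size are hypothetical.

```python
import sqlite3
from itertools import islice
from typing import Iterable, Iterator, List, Tuple

Row = Tuple[str, str, str]  # (pmid, gene, go_id): assumed record layout


def batched(rows: Iterable, size: int) -> Iterator[List]:
    """Yield successive lists of at most `size` items."""
    iterator = iter(rows)
    while True:
        chunk = list(islice(iterator, size))
        if not chunk:
            return
        yield chunk


def write_in_batches(conn: sqlite3.Connection, rows: Iterable[Row],
                     size: int = 1000) -> int:
    """Insert rows using one transaction per batch instead of one per row."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS evidence (pmid TEXT, gene TEXT, go_id TEXT)"
    )
    written = 0
    for chunk in batched(rows, size):
        with conn:  # commits on success, rolls back if the batch fails
            conn.executemany("INSERT INTO evidence VALUES (?, ?, ?)", chunk)
        written += len(chunk)
    return written
```

Because `rows` can be any iterable, the same function works when records are consumed lazily from a queue rather than held in memory.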

Q5: How can I assess the precision/recall of my pipeline specifically for long-tail GO terms (those with fewer than 10 manual annotations)? A5: Create a targeted benchmark set.

- Protocol: Benchmarking for Long-Tail Terms

- Term Selection: From the GO ontology, select a random sample of terms with fewer than 10 annotations in major databases.

- Gold Standard Curation: For each term, have domain experts manually find and extract relevant evidence sentences from a held-out corpus (e.g., PMC Open Access Subset).

- Pipeline Run: Process the same corpus with your pipeline.

- Evaluation: Calculate precision, recall, and F1-score at the document and sentence level for the targeted terms. Compare to performance on high-frequency terms.
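The Evaluation step can be sketched as a set comparison between pipeline output and the gold standard. The sketch assumes each piece of evidence is represented as a `(go_id, sentence_id)` pair and that the set of long-tail term IDs is supplied by the Term Selection step; the function names are illustrative.

```python
from typing import Dict, Set, Tuple

Evidence = Tuple[str, str]  # (go_id, sentence_id): assumed representation


def prf(predicted: Set[Evidence], gold: Set[Evidence]) -> Dict[str, float]:
    """Precision, recall, and F1 over exact-match evidence pairs."""
    true_positives = len(predicted & gold)
    precision = true_positives / len(predicted) if predicted else 0.0
    recall = true_positives / len(gold) if gold else 0.0
    total = precision + recall
    f1 = 2 * precision * recall / total if total else 0.0
    return {"precision": precision, "recall": recall, "f1": f1}


def prf_by_group(predicted: Set[Evidence], gold: Set[Evidence],
                 long_tail_terms: Set[str]) -> Dict[str, Dict[str, float]]:
    """Score long-tail terms separately from all other terms."""
    def split(items: Set[Evidence], in_tail: bool) -> Set[Evidence]:
        return {e for e in items if (e[0] in long_tail_terms) == in_tail}

    return {
        "long_tail": prf(split(predicted, True), split(gold, True)),
        "other": prf(split(predicted, False), split(gold, False)),
    }
```

Document-level scores follow from the same functions by replacing `sentence_id` with a document identifier.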

Research Reagent Solutions Table

| Item/Reagent | Function in Text-Mining Pipeline |
|---|---|
| BioBERT / PubMedBERT | Pre-trained language models providing deep contextualized word embeddings specifically for biomedical text, crucial for accurate NER and relation extraction. |
| UMLS Metathesaurus / | Comprehensive biomedical vocabularies used for dictionary-based entity linking and disambiguation, helping to map text strings to standard GO identifiers. |
| SpaCy / Stanza | Industrial-strength NLP libraries providing robust tokenization, part-of-speech tagging, and dependency parsing, forming the syntactic foundation of relation extraction. |
| Apache Tika / pdfplumber | PDF text extraction tools. Tika handles a wide variety of formats, while pdfplumber offers fine-grained control over PDF layout analysis, useful for complex tables. |
| Redis / Elasticsearch | In-memory data store (Redis) for caching frequent queries and document indices; search engine (Elasticsearch) for efficient retrieval of pre-processed text snippets. |
| Docker / Kubernetes | Containerization and orchestration platforms enabling the deployment of reproducible, scalable pipeline components across cloud or high-performance computing clusters. |
| GO Ontology (OBO Format) | The structured, controlled vocabulary itself, used to validate extracted terms and traverse hierarchical relationships (e.g., part_of, is_a) during evidence consolidation. |

Performance Metrics on Long-Tail vs. High-Frequency GO Terms

Table: Pipeline Performance Comparison (Simulated Data Based on Common Findings)

| GO Term Frequency Category | Sample Size (Terms) | Average Precision | Average Recall | F1-Score | Key Limiting Factor |
|---|---|---|---|---|---|
| High-Frequency (>100 annotations) | 50 | 0.89 | 0.82 | 0.85 | Relation extraction ambiguity in dense text. |
| Mid-Frequency (10-100 annotations) | 50 | 0.81 | 0.71 | 0.76 | Lower training data for NER on synonymous names. |
| Long-Tail (<10 annotations) | 50 | 0.72 | 0.35 | 0.47 | Sparse evidence in literature & vocabulary gap. |

Experimental Protocols

Protocol 1: End-to-End Pipeline Validation for a Specific GO Term

Objective: To validate the entire text-mining pipeline's ability to recapitulate known and discover novel annotations for a selected GO term.

- Term Selection: Choose one Biological Process (e.g., "mitotic cytokinesis"), one Molecular Function (e.g., "ubiquitin ligase activity"), and one Cellular Component (e.g., "ribonucleoprotein granule").

- Gold Standard Retrieval: Download all manually curated annotations for these terms from the GO Consortium database. Retrieve the corresponding PMIDs and evidence sentences.

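- Corpus Definition: Create a test corpus that contains the gold-standard articles (needed to measure recall) plus 10,000 recent biomedical articles from PubMed Central that are not in the gold standard set (to surface candidate novel annotations).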

- Pipeline Execution: Run the full pipeline (fetch, extract, NER, relation extraction) on the test corpus.

- Evaluation: Compare pipeline predictions against the gold standard for precision/recall. Manually assess any novel predictions made by the pipeline for potential new annotations.

Protocol 2: Ablation Study for NER Components

Objective: To quantify the contribution of different NER strategies (dictionary, machine learning, hybrid) to overall pipeline performance, especially for long-tail entities.

- Module Isolation: Configure the pipeline to run with three NER settings: A) Dictionary-based (UMLS/GO) only, B) ML-based (BioBERT) only, C) Hybrid (ML + dictionary fallback).

- Dataset: Use a benchmark dataset with annotated entities (e.g., the CRAFT corpus) augmented with 100 manually annotated sentences containing mentions of rare gene families or complexes.

- Run & Measure: Execute the relation extraction step using the entity outputs from each NER setting. Measure end-to-end relation F1-score, breaking down results by entity frequency class.
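The three NER settings in this protocol can be wired up so the rest of the pipeline is unaware of which one is active. The sketch below assumes a tagger is any function that maps a token list to a list of labels (or `None` for untagged tokens); the ML tagger is left as a placeholder for a real model such as a fine-tuned BioBERT, and all function names are hypothetical.

```python
from typing import Callable, Dict, List, Optional

# A tagger maps tokens to one label (or None) per token.
Tagger = Callable[[List[str]], List[Optional[str]]]


def dictionary_tagger(lexicon: Dict[str, str]) -> Tagger:
    """Setting A: exact-match lookup in a term-to-label lexicon."""
    def tag(tokens: List[str]) -> List[Optional[str]]:
        return [lexicon.get(token) for token in tokens]
    return tag


def hybrid_tagger(ml: Tagger, dictionary: Tagger) -> Tagger:
    """Setting C: keep the ML label, fall back to the dictionary if None."""
    def tag(tokens: List[str]) -> List[Optional[str]]:
        ml_tags = ml(tokens)
        dict_tags = dictionary(tokens)
        return [m if m is not None else d for m, d in zip(ml_tags, dict_tags)]
    return tag


def run_ablation(tokens: List[str],
                 settings: Dict[str, Tagger]) -> Dict[str, List[Optional[str]]]:
    """Tag the same input under every setting for side-by-side comparison."""
    return {name: tagger(tokens) for name, tagger in settings.items()}
```

Each setting's entity output would then be fed to the unchanged relation extraction step, so that differences in end-to-end F1 can be attributed to the NER component alone.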

Visualizations

Pipeline Architecture for GO Evidence Extraction

Human-in-the-Loop Curation Workflow

Targeted Evidence Extraction for a Long-Tail GO Term

Technical Support Center: Troubleshooting Guides & FAQs

FAQs & Troubleshooting

Q1: I cannot see my colleague's edits in Apollo in real-time. What should I check?

A: This is typically a WebSocket connection issue. First, verify all users are on the same Apollo server instance. Check your browser console (F12) for WebSocket errors. Ensure your institutional firewall is not blocking port 80/443 for the Apollo domain. For local installations, confirm the websocket configuration in the apollo-config.groovy file is correctly set.

Q2: My Noctua form fails to save annotations, showing "Validation Error." How do I resolve this? A: This error often relates to missing required fields or incompatible evidence. Follow this protocol:

- Open the form and systematically check each field.

- Ensure the "Gene Product" field is populated with a valid identifier (e.g., UniProt ID).

- Confirm the "Evidence" field uses a valid ECO (Evidence & Conclusion Ontology) code.

- Verify that the "Reference" field contains a PubMed ID (e.g., PMID:12345678) or a GO Reference (e.g., GO_REF:0000001).

- If the error persists, use the "Preview" button to see the underlying GPAD/GPI data format and identify the malformed line.
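When annotations are prepared in bulk before being entered, the field checks above can be run as a pre-flight step. The sketch below is an assumption-laden illustration: the field names, the `UniProtKB:` prefix, and the regular expressions are simplified guesses at identifier shapes, and Noctua's own validation remains the authority.

```python
import re
from typing import Dict, List

# Assumed identifier shapes, deliberately loose; adjust to your data.
PATTERNS = {
    "gene_product": re.compile(r"^UniProtKB:[A-Z0-9]{6,10}$"),
    "evidence": re.compile(r"^ECO:\d{7}$"),
    "reference": re.compile(r"^(PMID:\d+|GO_REF:\d{7})$"),
}


def check_annotation(fields: Dict[str, str]) -> List[str]:
    """Return a list of human-readable problems; empty means all checks pass."""
    problems: List[str] = []
    for name, pattern in PATTERNS.items():
        value = fields.get(name, "").strip()
        if not value:
            problems.append(f"{name}: missing")
        elif not pattern.match(value):
            problems.append(f"{name}: malformed value {value!r}")
    return problems
```

A check like this only catches formatting problems. It cannot tell whether an evidence code is appropriate for the experiment described in the reference.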

Q3: How do I handle a conflict when two curators assign different GO terms to the same gene product in Canto? A: Canto is designed for community review. Follow this workflow:

- The conflicting annotations are flagged in the session's "Curator Comments" section.

- Initiate the "Discuss" function to tag the other curator.

- Use the linked publication evidence within Canto to evaluate each assertion.

- If consensus is reached, one curator edits the annotation. If not, a senior curator or GO editor can be alerted via the comment system to make a final decision.

Q4: My automated annotation pipeline results are not importing into Apollo. What are common pitfalls? A: The import process is strict about file format. Use this verification protocol:

- Format: Ensure your file is in GFF3 format.

- Sequence ID Consistency: The seqid in column 1 of your GFF3 file must exactly match the chromosome/contig identifier in the Apollo reference genome.

- Attribute Column: The ninth column must contain a unique, stable ID for each feature. Parent-child relationships (e.g., mRNA to CDS) must use consistent ID and Parent tags.

- Validation: Run your GFF3 file through a validator (e.g., [https://github.com/NAL-i5K/GFF3toolkit]) before import.
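Before reaching for a full validator, the three structural checks above can be approximated in a few lines. This is a minimal sketch rather than a replacement for a dedicated tool: `check_gff3` is a hypothetical helper, the set of reference sequence IDs is assumed to come from your Apollo genome, and duplicate IDs are flagged for review even though multi-line features such as CDS can legitimately share one.

```python
from typing import Dict, Iterable, List, Set, Tuple


def parse_attributes(column9: str) -> Dict[str, str]:
    """Split 'ID=x;Parent=y' into a dict of tag -> value."""
    attrs: Dict[str, str] = {}
    for part in column9.strip().split(";"):
        if "=" in part:
            key, value = part.split("=", 1)
            attrs[key.strip()] = value.strip()
    return attrs


def check_gff3(lines: Iterable[str], reference_seqids: Set[str]) -> List[str]:
    """Report seqid mismatches, duplicate IDs, and dangling Parent tags."""
    problems: List[str] = []
    seen_ids: Set[str] = set()
    parents: List[Tuple[int, str]] = []
    for number, raw in enumerate(lines, start=1):
        line = raw.rstrip("\n")
        if not line or line.startswith("#"):
            continue  # skip blank lines, directives, and comments
        cols = line.split("\t")
        if len(cols) != 9:
            problems.append(f"line {number}: expected 9 tab-separated "
                            f"columns, found {len(cols)}")
            continue
        if cols[0] not in reference_seqids:
            problems.append(f"line {number}: seqid {cols[0]!r} not in reference")
        attrs = parse_attributes(cols[8])
        feature_id = attrs.get("ID")
        if feature_id:
            if feature_id in seen_ids:
                problems.append(f"line {number}: duplicate ID {feature_id!r}")
            seen_ids.add(feature_id)
        for parent in attrs.get("Parent", "").split(","):
            if parent:
                parents.append((number, parent))
    # Resolve parents after the full pass so file order does not matter.
    for number, parent in parents:
        if parent not in seen_ids:
            problems.append(f"line {number}: Parent {parent!r} has no matching ID")
    return problems
```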

Experimental Protocols for Annotation Curation

Protocol 1: Creating a New Gene Model in Apollo from RNA-Seq Evidence

Objective: Manually create or modify a gene model using aligned RNA-Seq reads as evidence.

Materials: See "Research Reagent Solutions" table.

Methodology:

- Load the reference genome and RNA-Seq BAM track in Apollo.

- Navigate to the genomic region of interest.

- Right-click on the reference sequence track and select "Create New Annotation."

- In the "User-created Annotations" panel, select "Create Gene."

- Visually inspect the RNA-Seq read splice junctions. Click to add exons along the alignment.

- Connect exons by clicking on their ends to create introns, ensuring phase consistency.

- Define the start and stop codon regions to complete the CDS.

- Use the "Validate" button to check for common errors (e.g., in-frame stop codons).

- Save the annotation to the server, adding assigned terms and evidence codes.
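To make the kind of error caught in the validation step concrete, the sketch below checks a spliced CDS sequence for in-frame stop codons and a few related problems. It is an independent illustration, not Apollo's validation logic, and it assumes the standard genetic code with an ATG start; organisms with alternative codes or start codons would need different sets.

```python
from typing import List

STOP_CODONS = {"TAA", "TAG", "TGA"}  # standard genetic code (assumption)


def cds_problems(cds: str) -> List[str]:
    """Return problems found in a spliced coding sequence (5' to 3')."""
    seq = cds.upper()
    problems: List[str] = []
    if len(seq) % 3 != 0:
        problems.append("length is not a multiple of 3")
    # Only complete codons are examined; trailing bases are ignored.
    codons = [seq[i:i + 3] for i in range(0, len(seq) - len(seq) % 3, 3)]
    if not codons or codons[0] != "ATG":
        problems.append("does not start with ATG")
    if not codons or codons[-1] not in STOP_CODONS:
        problems.append("does not end with a stop codon")
    # Any stop codon before the final position truncates the protein.
    for index, codon in enumerate(codons[:-1]):
        if codon in STOP_CODONS:
            problems.append(f"in-frame stop codon {codon} at codon {index + 1}")
    return problems
```

An in-frame stop reported here usually points to a misplaced exon boundary, which is why inspecting the splice junctions against the RNA-Seq reads comes first in the protocol.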

Protocol 2: Annotating Cellular Component in Noctua Using a Microscopy Paper

Objective: Create a GO annotation for subcellular localization using results from a fluorescence microscopy figure.

Methodology:

- In Noctua, start a new model or open an existing one for your gene of interest.

- Add an entity (the gene product) to the workspace.

- From the toolbar, add a "Cellular Component" term (e.g., "GO:0005739 mitochondrion").

- Connect the gene product to the term using an "is located in" edge.

- Click on the edge to open the evidence panel.

- Set the Evidence code to ECO:0000314 (direct assay evidence used in manual assertion).

- Add the reference (PubMed ID from the paper).

- In the "With/From" field, optionally add identifiers for colocalized proteins if relevant.

- Annotate the figure panel (e.g., "Figure 2C") in the comment field.

- Save the model.

Table 1: Platform Comparison for Addressing Long-Tail Gene Annotation

| Feature | Apollo | Noctua (GO-CAM) | Canto | Impact on Long-Tail Problem |
|---|---|---|---|---|
| Primary Function | Genome annotation editor | Ontological pathway/model curation | Community literature curation | Diversifies curation beyond model organisms |
| Annotation Output | Genomic features (GFF3) | GO-CAM models (RDF/triples) | GO term associations (GAF/GPAD) | Enables annotation of non-standard gene functions |
| Collaboration Mode | Real-time, synchronous | Asynchronous, model-level | Session-based, paper-focused | Leverages distributed expert knowledge |
| Learning Curve | Moderate (biological focus) | Steep (ontology logic focus) | Low (form-based focus) | Lowers barrier for domain-specialist curators |
| Typical User | Genomicist, genome annotator | Ontologist, systems biologist | Research scientist, field expert | Engages researchers closest to the rare data |

Table 2: Common Error Codes and Resolutions

| Platform | Error Code/Message | Likely Cause | Resolution Step |
|---|---|---|---|
| Apollo | Error: undefined is not an object | Browser cache conflict | Clear browser cache & hard reload (Ctrl+Shift+R). |
| Noctua | Invalid ECO code | Typographical error in evidence code | Use the ECO lookup widget; ensure code is ECO:0000XXX. |
| Canto | Session is locked | Another curator is actively editing. | Wait 5 minutes; the lock auto-releases. Contact the session owner. |
| All | Authentication Failure | Expired login token or SSO issue | Log out completely, close browser, log in again. |

Research Reagent Solutions

| Item | Function in Biocuration Context | Example/Supplier |
|---|---|---|
| Reference Genome (FASTA) | The coordinate system for all genomic annotations. Must be stable and versioned. | Ensembl, RefSeq, or organism-specific database. |
| Evidence Tracks (BAM/BED) | Aligned experimental data (RNA-Seq, ChIP-Seq) visualized in Apollo to support gene models. | Generated by user's NGS pipeline or public SRA datasets. |
| Ontology Files (OBO/OWL) | The controlled vocabulary (GO, ECO) defining terms and relationships for Noctua/Canto. | http://current.geneontology.org/ontology/ |
| Stable Identifiers | Unique IDs for genes (UniProt, NCBI Gene), essential for linking annotations across platforms. | UniProt Knowledgebase, NCBI Gene. |
| Curation Literature | Peer-reviewed research articles providing the experimental evidence for annotations. | PubMed (https://pubmed.ncbi.nlm.nih.gov/) |

Diagrams

Diagram 1: Integrated Biocuration Workflow

Diagram 2: Noctua GO-CAM Assertion Logic

Technical Support & Troubleshooting Center

FAQs & Common Issues

Q1: My sequence similarity search (BLAST/PSI-BLAST) against Swiss-Prot returns no significant hits (E-value > 0.001). How do I proceed with annotation?